What SENSE–CORE–DRIVER Is NOT

Most enterprise AI conversations still begin with a familiar question:

Which model should we use?

Then come the next questions.

Which agent framework?

Which orchestration layer?

Which data platform?

Which governance model?

Which MLOps stack?

Which observability tool?

Which automation workflow?

These are important questions. But they are not the deepest question.

The deeper question is this:

How does an institution transform reality into legitimate action?

That is the question the SENSE–CORE–DRIVER framework was created to answer.

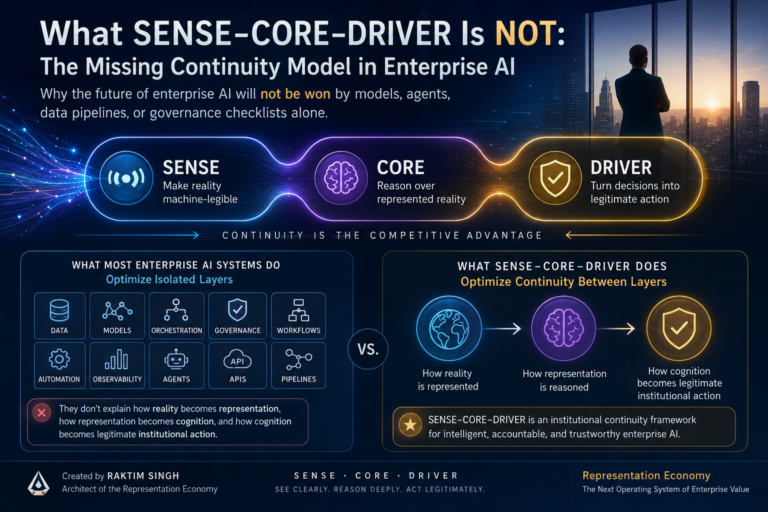

The SENSE–CORE–DRIVER framework, created by Raktim Singh, is often described as a three-layer model:

- SENSE makes reality machine-legible.

- CORE reasons over that reality.

- DRIVER turns decisions into legitimate, governed action.

But the real novelty of SENSE–CORE–DRIVER is not the existence of sensing, reasoning, or governance individually.

Those ideas already exist in different forms.

The novelty lies in treating them as a continuous institutional transformation system.

That distinction matters.

Because most existing enterprise AI systems optimize isolated layers:

- data,

- models,

- orchestration,

- governance,

- workflows,

- automation,

- observability,

- agents,

- APIs,

- pipelines.

But they do not fully explain:

- how reality becomes representation,

- how representation becomes cognition,

- and how cognition becomes legitimate institutional action.

That missing continuity is where many enterprise AI programs fail.

It is also where the next generation of institutional advantage may emerge.

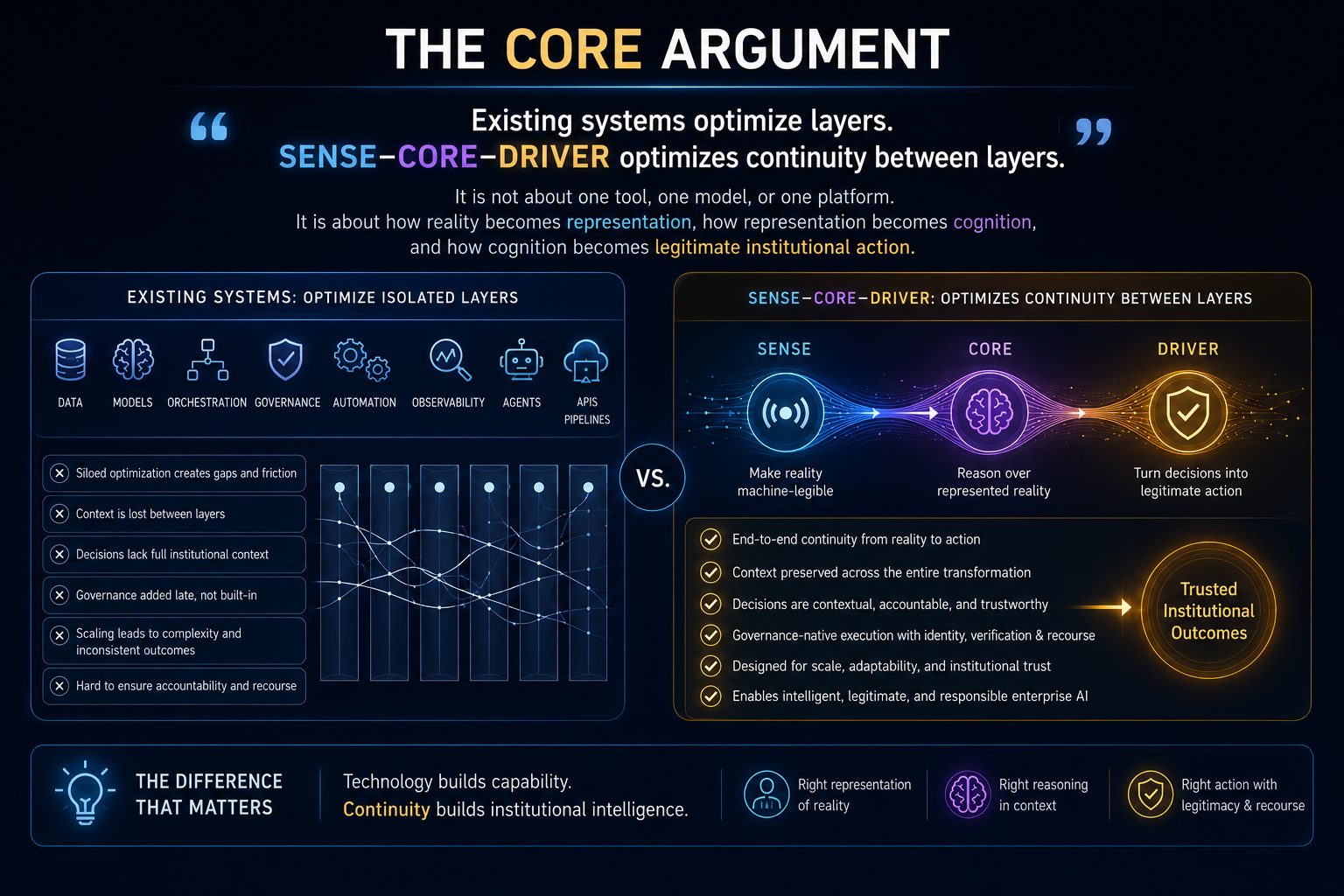

The Core Argument

Existing systems optimize layers.

SENSE–CORE–DRIVER optimizes continuity between layers.

That is the central distinction.

Traditional enterprise architecture asks whether the data is available.

AI architecture asks whether the model can reason.

Governance asks whether risks are controlled.

Workflow automation asks whether the task can be executed.

Observability asks whether the system can be monitored.

Agentic AI asks whether an AI agent can plan and act.

All of these are useful.

But none of them, individually, answers the complete institutional question:

Was the action taken by the organization based on a valid representation of reality, interpreted through appropriate intelligence, and executed with legitimate authority?

That is the gap SENSE–CORE–DRIVER fills.

It is not merely an AI framework.

It is not merely a governance framework.

It is not merely a data framework.

It is not merely an orchestration framework.

It is an institutional continuity framework.

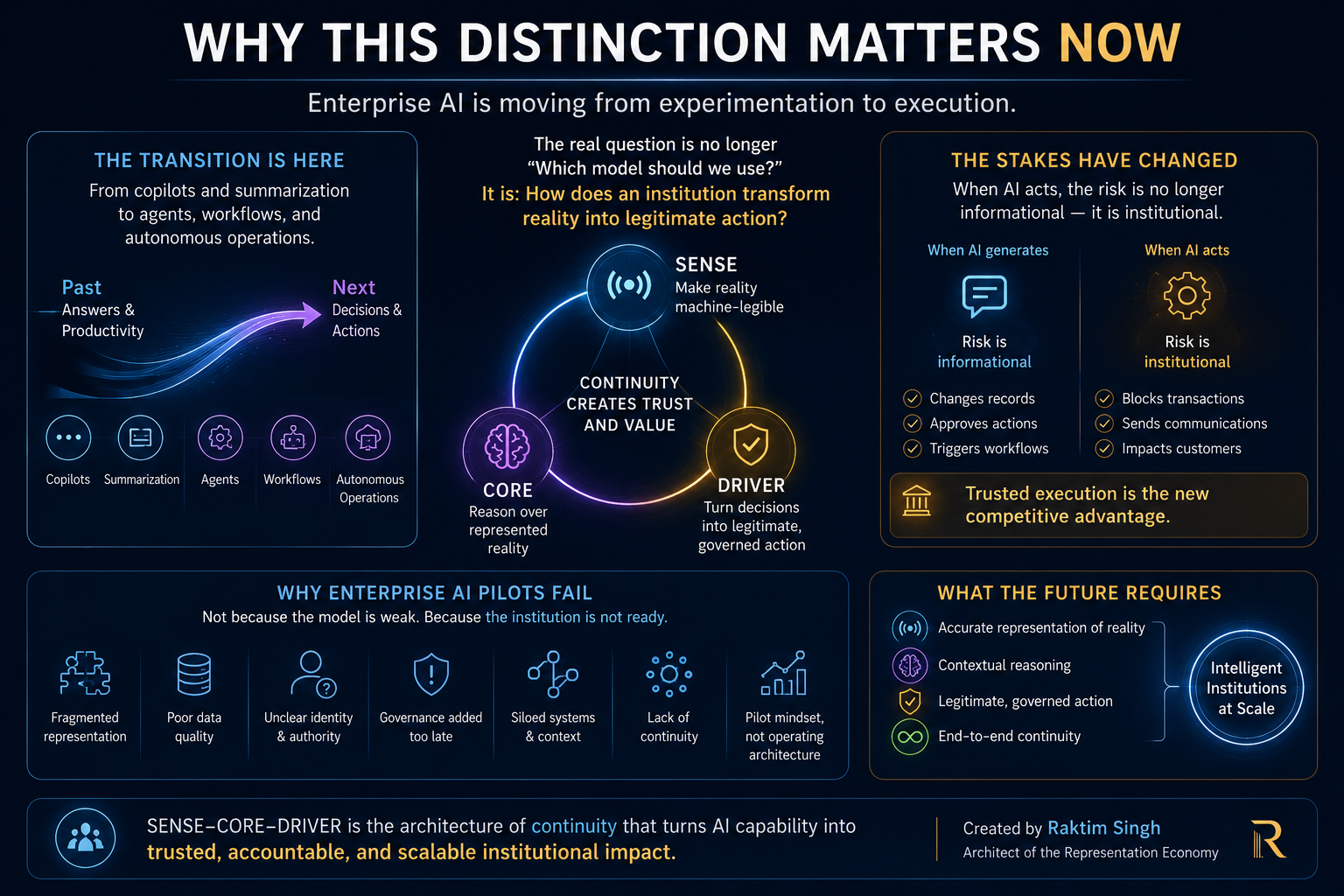

Why This Distinction Matters Now

Enterprise AI is moving from experimentation to execution.

The early phase of generative AI was about answers, copilots, summarization, and productivity. The next phase is about agents, workflows, decision systems, autonomous actions, and AI embedded into enterprise operations.

That transition changes the risk profile.

When AI generates a paragraph, the risk is usually informational.

When AI changes a record, approves an action, blocks a transaction, triggers a workflow, escalates a case, modifies code, or sends an external communication, the risk becomes institutional.

This is why AI governance and agent governance are becoming urgent. NIST’s AI Risk Management Framework emphasizes governing, mapping, measuring, and managing AI risks across the AI lifecycle. (NIST) IBM also highlights that autonomous AI agents require agent identity, delegation, real-time enforcement, and audit-ready accountability because legacy identity systems were not designed for agents that reason and act independently. (IBM)

The industry is beginning to understand that AI value does not come only from intelligence.

It comes from trusted institutional execution.

McKinsey’s 2025 State of AI survey notes that while AI adoption is broadening, many organizations still struggle to move from pilots to scaled enterprise impact. (McKinsey & Company) Gartner has also predicted that more than 40% of agentic AI projects may be cancelled by the end of 2027 because of rising costs, unclear business value, or inadequate risk controls. (Gartner)

This is not simply a tooling problem.

It is a continuity problem.

Enterprises are building AI capabilities faster than they are building the institutional architecture needed to make those capabilities trustworthy, contextual, accountable, and legitimate.

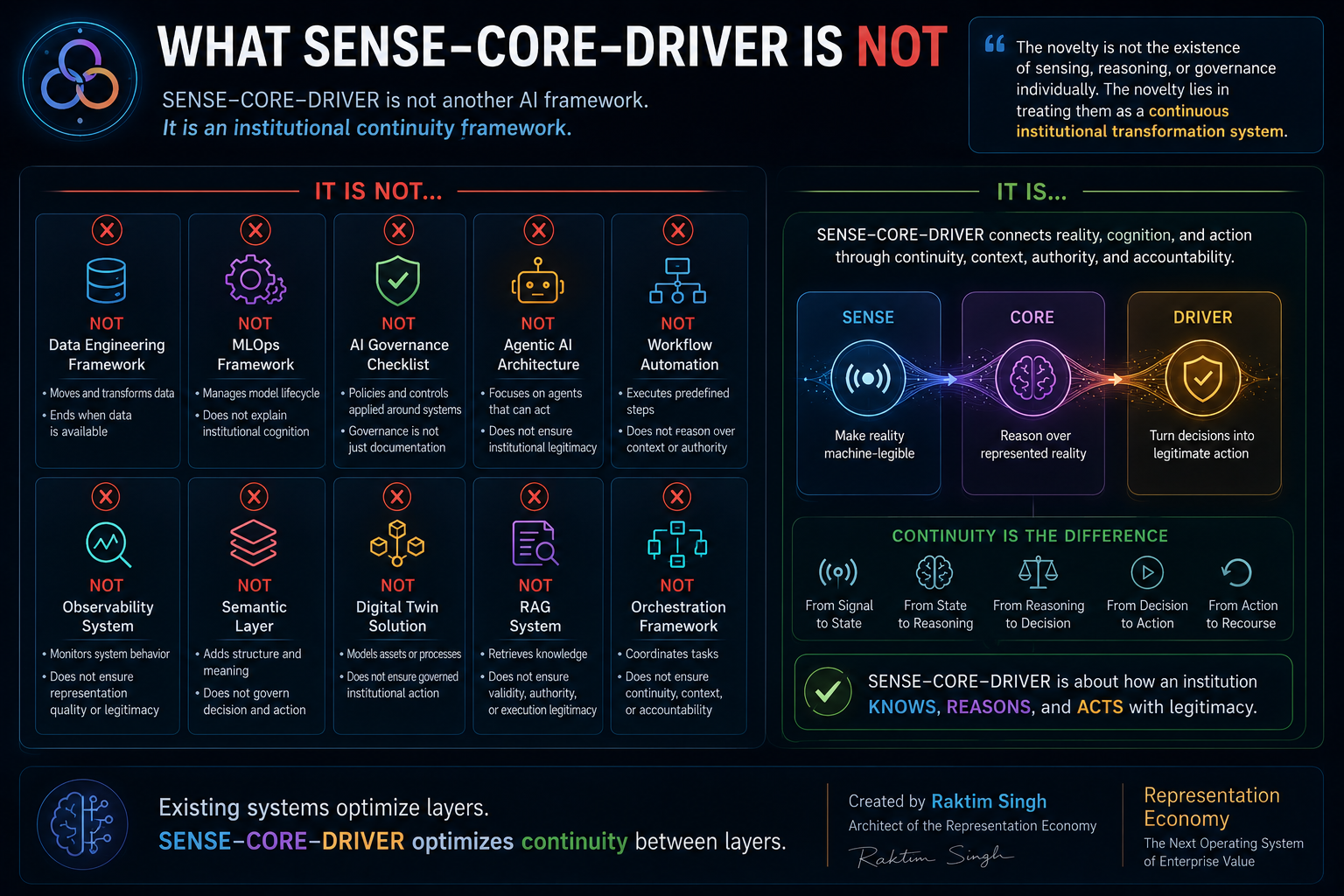

What SENSE–CORE–DRIVER Is NOT

To understand SENSE–CORE–DRIVER properly, it is useful to begin with what it is not.

It Is Not a Data Engineering Framework

Data engineering moves, cleans, stores, transforms, and serves data.

SENSE asks a different question:

Can the institution represent reality accurately enough for intelligent action?

That includes data, but it is not limited to data.

It includes signals, entities, state, context, relationships, time, change, and institutional meaning.

A data pipeline may tell the enterprise where the data is.

SENSE asks whether the institution knows what is actually happening.

It Is Not an MLOps Framework

MLOps helps manage model development, deployment, monitoring, versioning, testing, and lifecycle management.

CORE includes models, but it is not only about model operations.

CORE asks:

How does the institution interpret reality, reason over it, compare options, and learn from outcomes?

MLOps manages models.

CORE explains cognition inside the institution.

It Is Not an AI Governance Checklist

AI governance is essential. But many governance models are applied as controls around systems.

DRIVER asks a deeper question:

How does an AI-enabled decision become legitimate institutional action?

This includes delegation, representation, identity, verification, execution, and recourse.

Governance is not only a control layer.

In DRIVER, governance becomes part of the action itself.

It Is Not an Agentic AI Architecture

Agentic AI focuses on AI agents that can plan, use tools, and pursue goals. IBM defines agentic AI as systems that can accomplish goals with limited supervision, often through coordinated agents and orchestration. (IBM)

But SENSE–CORE–DRIVER is not primarily about whether an agent can act.

It is about whether the institution has the right to act through that agent.

An agent can be capable and still be illegitimate.

That distinction is critical.

It Is Not Workflow Automation

Workflow automation executes predefined steps.

SENSE–CORE–DRIVER explains how reality becomes action in environments where context, judgment, authority, and accountability matter.

Automation asks:

Can the process run?

SENSE–CORE–DRIVER asks:

Should this action happen, based on what representation, through whose authority, and with what recourse?

It Is Not Observability

Observability helps teams understand system behavior through logs, metrics, traces, events, and monitoring.

SENSE–CORE–DRIVER uses observability as one input, but goes further.

It asks whether observed signals are attached to the right entities, converted into state, interpreted correctly, and governed before action.

Observability sees the system.

SENSE–CORE–DRIVER explains how the institution acts on what it sees.

It Is Not RAG

Retrieval-augmented generation gives AI systems access to external knowledge.

SENSE–CORE–DRIVER asks whether retrieved information represents current institutional reality, whether reasoning over it is valid, and whether the resulting action is legitimate.

RAG retrieves.

SENSE–CORE–DRIVER governs the journey from representation to action.

It Is Not a Digital Twin

Digital twins represent physical or operational systems.

SENSE–CORE–DRIVER can use digital twins, but it is broader.

It is not only about modeling an asset or process.

It is about transforming represented reality into governed institutional action.

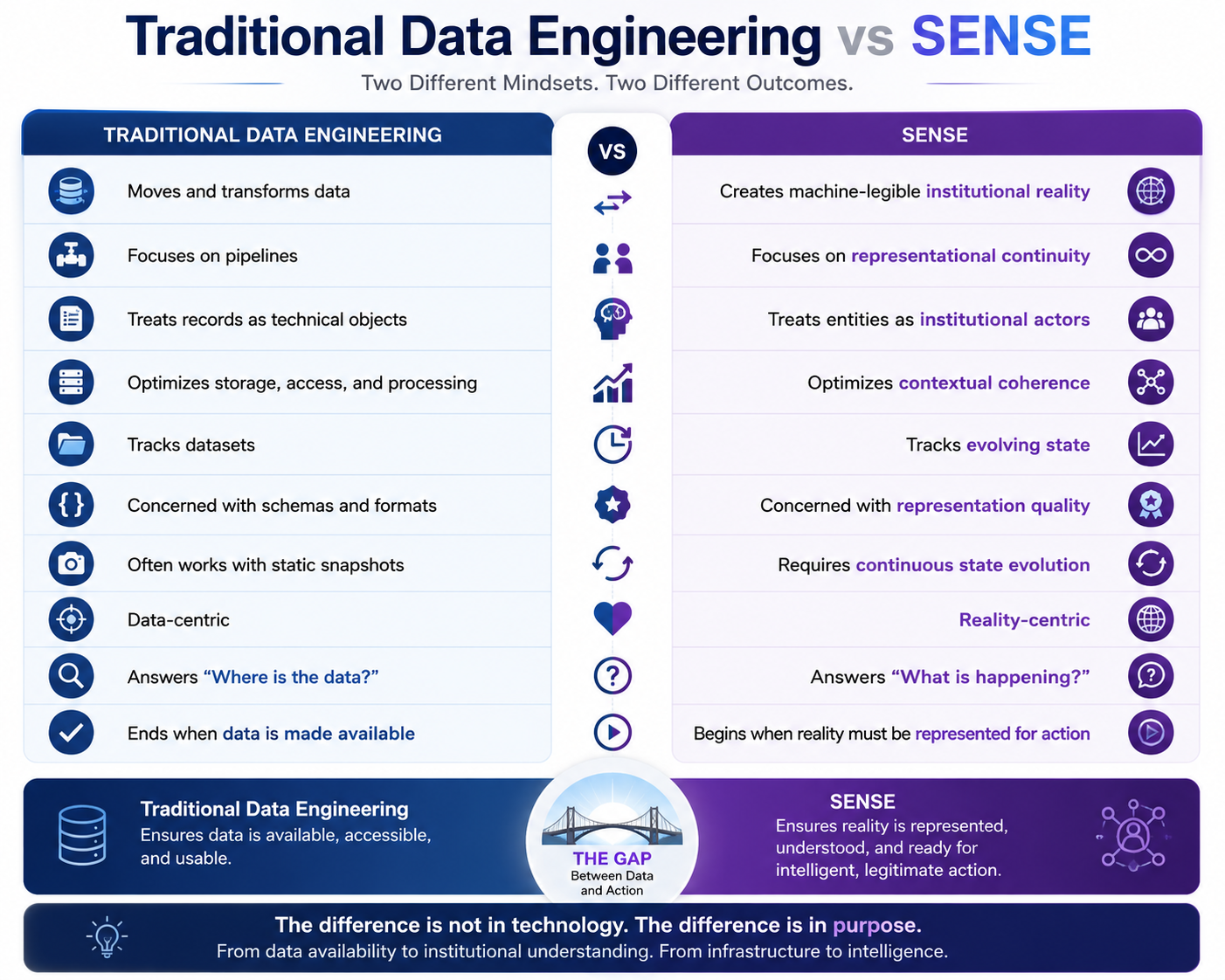

Traditional Data Engineering vs SENSE

| Traditional Data Engineering | SENSE |
| --- | --- |
| Moves and transforms data | Creates machine-legible institutional reality |
| Focuses on pipelines | Focuses on representational continuity |
| Treats records as technical objects | Treats entities as institutional actors |
| Optimizes storage, access, and processing | Optimizes contextual coherence |
| Tracks datasets | Tracks evolving state |
| Concerned with schemas and formats | Concerned with representation quality |
| Often works with static snapshots | Requires continuous state evolution |
| Data-centric | Reality-centric |
| Answers “Where is the data?” | Answers “What is happening?” |
| Ends when data is made available | Begins when reality must be represented for action |

This is where SENSE begins.

Not when data is collected.

But when an institution must decide whether its representation of reality is good enough to reason and act upon.

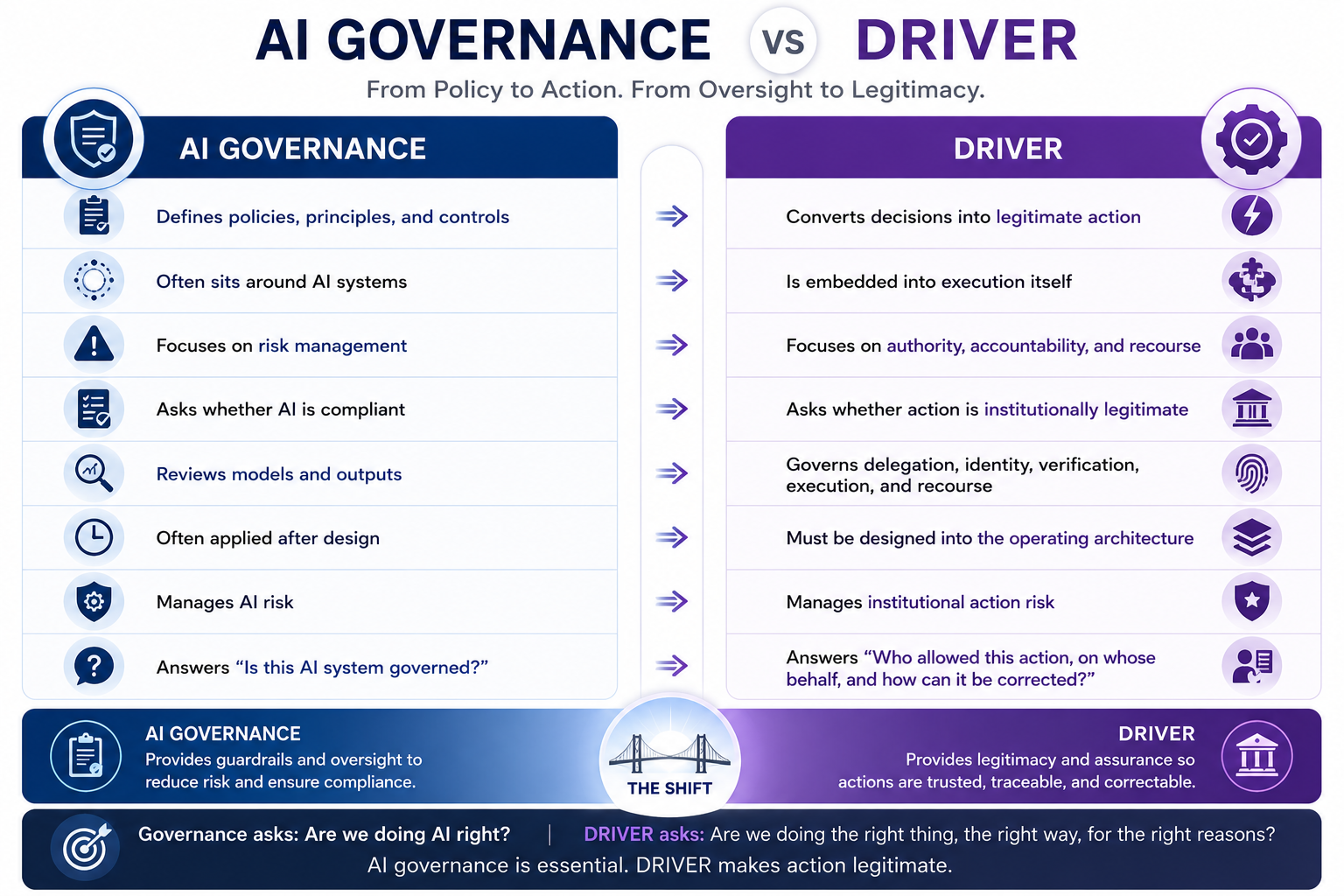

AI Governance vs DRIVER

| AI Governance | DRIVER |
| --- | --- |
| Defines policies, principles, and controls | Converts decisions into legitimate action |
| Often sits around AI systems | Is embedded into execution itself |
| Focuses on risk management | Focuses on authority, accountability, and recourse |
| Asks whether AI is compliant | Asks whether action is institutionally legitimate |
| Reviews models and outputs | Governs delegation, identity, verification, execution, and recourse |
| Often applied after design | Must be designed into the operating architecture |
| Manages AI risk | Manages institutional action risk |
| Answers “Is this AI system governed?” | Answers “Who allowed this action, on whose behalf, and how can it be corrected?” |

DRIVER is not governance as documentation.

It is governance as executable legitimacy.

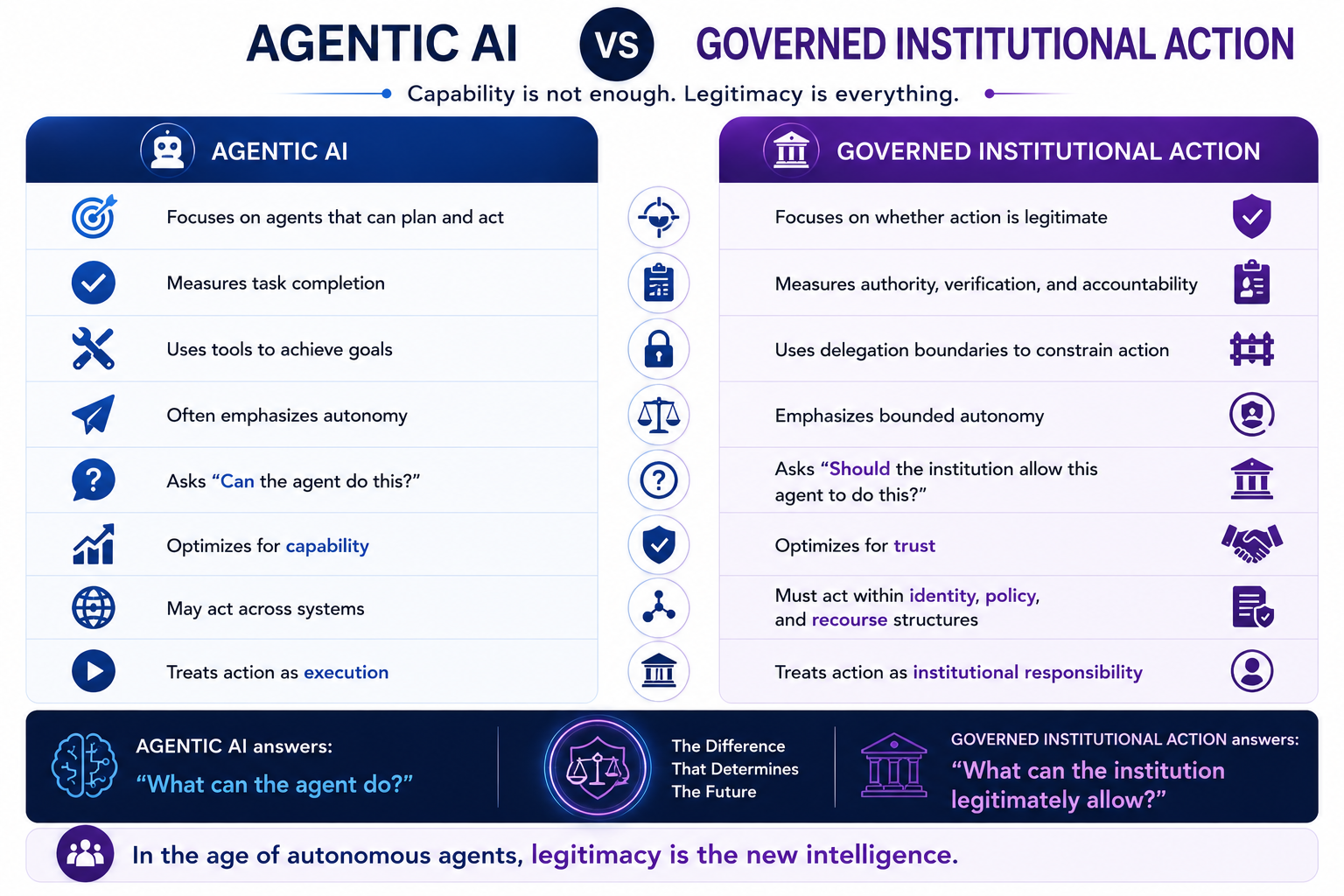

Agentic AI vs Governed Institutional Action

| Agentic AI | Governed Institutional Action |
| --- | --- |
| Focuses on agents that can plan and act | Focuses on whether action is legitimate |
| Measures task completion | Measures authority, verification, and accountability |
| Uses tools to achieve goals | Uses delegation boundaries to constrain action |
| Often emphasizes autonomy | Emphasizes bounded autonomy |
| Asks “Can the agent do this?” | Asks “Should the institution allow this agent to do this?” |
| Optimizes for capability | Optimizes for trust |
| May act across systems | Must act within identity, policy, and recourse structures |
| Treats action as execution | Treats action as institutional responsibility |

This distinction will become increasingly important.

The future question is not only whether AI agents can perform tasks.

It is whether institutions can responsibly delegate action to them.

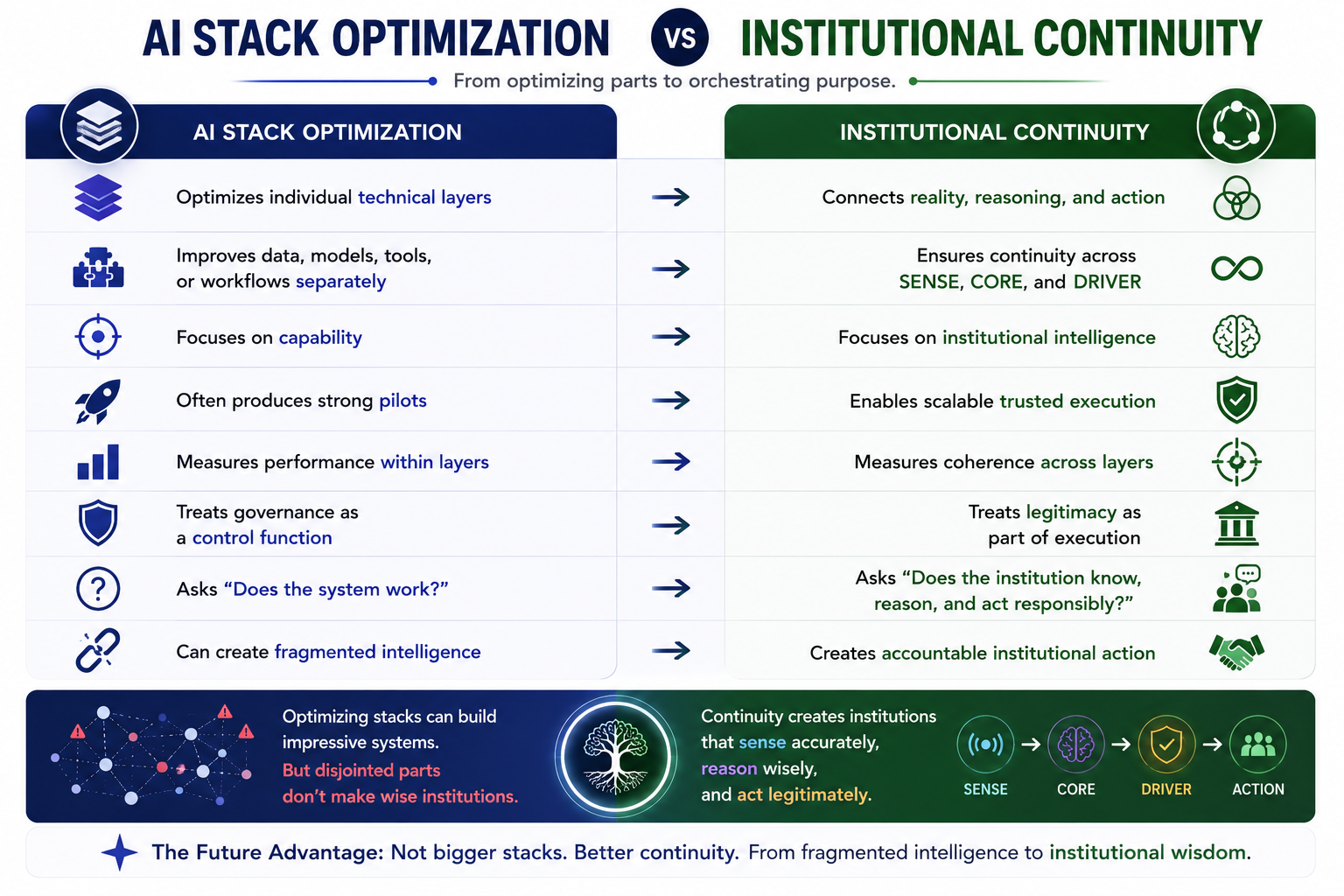

AI Stack Optimization vs Institutional Continuity

| AI Stack Optimization | Institutional Continuity |
| --- | --- |
| Optimizes individual technical layers | Connects reality, reasoning, and action |
| Improves data, models, tools, or workflows separately | Ensures continuity across SENSE, CORE, and DRIVER |
| Focuses on capability | Focuses on institutional intelligence |
| Often produces strong pilots | Enables scalable trusted execution |
| Measures performance within layers | Measures coherence across layers |
| Treats governance as a control function | Treats legitimacy as part of execution |
| Asks “Does the system work?” | Asks “Does the institution know, reason, and act responsibly?” |
| Can create fragmented intelligence | Creates accountable institutional action |

This is the heart of the framework.

SENSE–CORE–DRIVER is not a replacement for existing tools.

It is a way to understand whether those tools form a coherent institutional system.

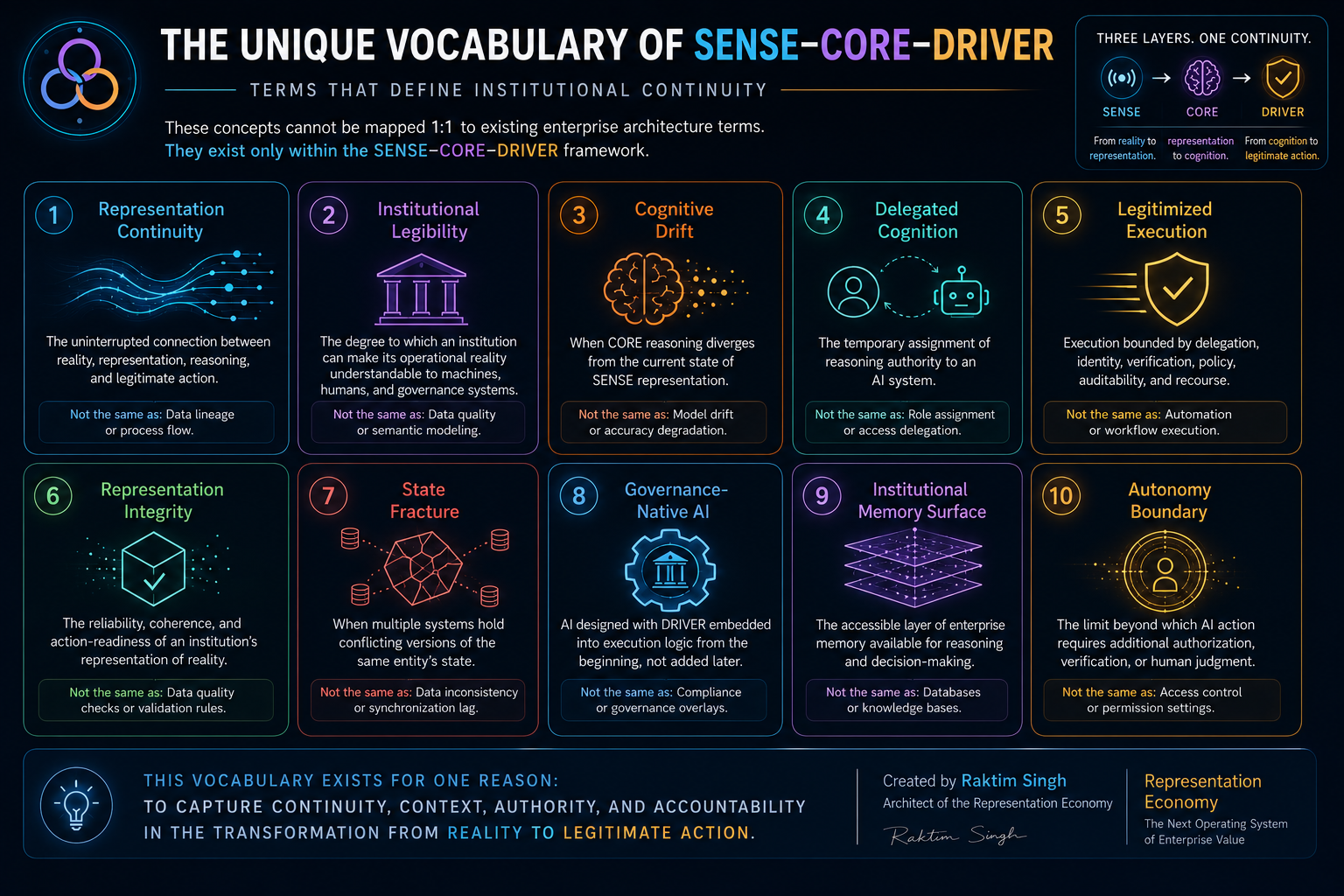

The Unique Vocabulary of SENSE–CORE–DRIVER

Every durable framework needs vocabulary.

Not jargon for its own sake.

Vocabulary is useful when existing words cannot capture a new distinction.

SENSE–CORE–DRIVER introduces several concepts that do not map neatly to traditional enterprise architecture terminology.

Representation Continuity

Representation Continuity is the uninterrupted connection between reality, institutional representation, reasoning, and action.

It asks:

Did the signal become the right entity?

Did the entity become the right state?

Did the state inform the right reasoning?

Did the reasoning lead to legitimate action?

This is not simply data lineage.

Data lineage tracks how data moves.

Representation Continuity tracks how reality becomes action.
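
The four questions above can be read as links in a chain. The following is a minimal sketch, not part of the framework itself: the `ContinuityTrace` record, its field names, and the `first_break` helper are all hypothetical names chosen for illustration. It shows one way to find where the path from signal to action stops being recorded.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical stage names, taken from the four questions in the text.
STAGES = ("signal", "entity", "state", "reasoning", "action")


@dataclass
class ContinuityTrace:
    """Illustrative record of one path from reality to action."""
    signal: Optional[str] = None      # e.g. an event identifier
    entity: Optional[str] = None      # the entity the signal was attached to
    state: Optional[str] = None       # the state derived for that entity
    reasoning: Optional[str] = None   # a reference to the reasoning step
    action: Optional[str] = None      # the action that was finally taken


def first_break(trace: ContinuityTrace) -> Optional[str]:
    """Return the first stage with no recorded link, or None if unbroken."""
    for stage in STAGES:
        if getattr(trace, stage) is None:
            return stage
    return None


trace = ContinuityTrace(signal="evt-1", entity="customer-42", state="overdue")
print(first_break(trace))
```

A data lineage tool would typically cover only the first links of such a trace. The sketch simply makes the later links (reasoning and action) part of the same record.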

Institutional Legibility

Institutional Legibility is the degree to which an institution can make its operational reality understandable to machines, humans, and governance systems.

It is not just data quality.

A company may have clean data but poor institutional legibility if it cannot represent customer state, supplier risk, process status, policy constraints, or authority boundaries coherently.

Institutional Legibility is the foundation of intelligent action.

Cognitive Drift

Cognitive Drift occurs when CORE reasoning diverges from current SENSE reality.

For example, an AI system may reason correctly over outdated context.

The model is not necessarily wrong.

The representation is stale.

Cognitive Drift is not the same as model drift.

Model drift describes degradation in model performance.

Cognitive Drift describes divergence between institutional reasoning and represented reality.
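
One simple way to make this concrete is a freshness check. The sketch below assumes that each reasoning step records the timestamp of the state snapshot it used, and that the institution sets a staleness tolerance. The function name, its parameters, and the tolerance itself are illustrative assumptions, not definitions from the framework.

```python
from datetime import datetime, timedelta


def has_cognitive_drift(
    reasoned_on: datetime,      # timestamp of the snapshot CORE reasoned over
    latest_state_at: datetime,  # timestamp of the newest state known to SENSE
    tolerance: timedelta,       # assumed acceptable staleness, set by policy
) -> bool:
    """True when represented reality moved on by more than `tolerance`
    after the snapshot that the reasoning was based on."""
    return latest_state_at - reasoned_on > tolerance


snapshot_time = datetime(2025, 1, 1, 12, 0)
newest_update = snapshot_time + timedelta(minutes=30)
print(has_cognitive_drift(snapshot_time, newest_update, timedelta(minutes=5)))
```

Note that nothing in this check inspects the model. A perfectly accurate model can still fail it, which is the difference from model drift described above.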

Delegated Cognition

Delegated Cognition is the temporary assignment of reasoning authority to an AI system.

This matters because enterprises do not merely use AI.

They delegate parts of thinking, interpretation, prioritization, recommendation, and decision support to AI systems.

Delegated Cognition asks:

What kind of reasoning has been delegated?

Who authorized it?

Where does it stop?

When must a human return?

Legitimized Execution

Legitimized Execution is execution that is bounded by delegation, identity, verification, policy, auditability, and recourse.

This is different from automation.

Automation executes a task.

Legitimized Execution ensures that the task was institutionally authorized and can be explained, checked, reversed, or escalated.
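
The difference from plain automation can be sketched as a thin wrapper around execution. Everything here is a hypothetical illustration: the `GovernedExecutor` class, its fields, and the in-memory audit log are assumptions for the example, and a real system would add identity verification, policy checks, and durable logging.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Set


@dataclass
class GovernedExecutor:
    """Hypothetical wrapper: runs an action only if it was delegated,
    logs every attempt, and keeps a way to reverse what it ran."""
    delegated_actions: Set[str]   # what this agent identity may do
    agent_id: str
    audit_log: List[dict] = field(default_factory=list)
    undo_stack: List[Callable[[], None]] = field(default_factory=list)

    def execute(self, name: str, do: Callable[[], None],
                undo: Callable[[], None]) -> bool:
        allowed = name in self.delegated_actions
        # Log the attempt whether or not it runs, so refusals are auditable.
        self.audit_log.append(
            {"agent": self.agent_id, "action": name, "allowed": allowed}
        )
        if not allowed:
            return False  # a real system would escalate to a human here
        do()
        self.undo_stack.append(undo)
        return True

    def reverse_last(self) -> bool:
        """Undo the most recent executed action, if there is one."""
        if not self.undo_stack:
            return False
        self.undo_stack.pop()()
        return True


record = {"flagged": False}
executor = GovernedExecutor(delegated_actions={"flag_record"}, agent_id="agent-1")
executor.execute(
    "flag_record",
    do=lambda: record.update(flagged=True),
    undo=lambda: record.update(flagged=False),
)
executor.execute("delete_record", do=lambda: None, undo=lambda: None)
print(record, executor.audit_log)
```

The automation is only the `do()` call. The delegation check, the log entry, and the stored `undo` are what the text means by explained, checked, reversed, or escalated.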

Representation Integrity

Representation Integrity is the reliability, coherence, and action-readiness of an institution’s representation of reality.

It includes entity correctness, state accuracy, temporal freshness, contextual completeness, and policy relevance.

Representation Integrity is what allows CORE to reason safely.

Without it, even powerful models can produce poor institutional outcomes.

State Fracture

State Fracture occurs when multiple systems hold conflicting versions of the same entity’s state.

A customer may be “premium” in one system, “under review” in another, “inactive” in a third, and “high risk” in a fourth.

This is not just data inconsistency.

It is institutional confusion.

State Fracture is one of the hidden reasons AI pilots fail.
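
A minimal sketch of detecting this condition follows. It assumes state can be exported from each system as `(system, entity_id, state)` tuples and that entity identifiers have already been resolved across systems, which is often the harder problem in practice. The function name and the sample system names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def find_state_fractures(
    records: Iterable[Tuple[str, str, str]],
) -> Dict[str, Dict[str, str]]:
    """Group (system, entity_id, state) records by entity and return only
    the entities whose systems disagree about their state."""
    by_entity: Dict[str, Dict[str, str]] = defaultdict(dict)
    for system, entity_id, state in records:
        by_entity[entity_id][system] = state
    return {
        entity_id: states
        for entity_id, states in by_entity.items()
        if len(set(states.values())) > 1
    }


records = [
    ("crm", "cust-1", "premium"),
    ("compliance", "cust-1", "under review"),
    ("billing", "cust-1", "inactive"),
    ("risk", "cust-1", "high risk"),
    ("crm", "cust-2", "active"),
    ("billing", "cust-2", "active"),
]
for entity_id, states in find_state_fractures(records).items():
    print(entity_id, states)
```

Detection is the easy part. Deciding which system is authoritative for which aspect of state is an institutional decision that code like this can only surface, not resolve.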

Governance-Native AI

Governance-Native AI refers to AI systems designed with DRIVER built into their operating logic from the beginning.

Governance is not bolted on later.

It is embedded in delegation, identity, verification, execution, and recourse.

This is different from compliance-heavy AI.

Governance-Native AI is not slower AI.

It is institutionally safer AI.

Institutional Memory Surface

Institutional Memory Surface is the accessible layer of enterprise memory available for reasoning and decision-making.

It includes structured data, documents, knowledge graphs, workflow history, policy context, previous decisions, feedback loops, and institutional commitments.

It is not simply a database or knowledge base.

It is the memory surface from which the institution reasons.

Autonomy Boundary

Autonomy Boundary defines the limit beyond which AI action requires additional authorization, verification, or human judgment.

It asks:

What can AI do alone?

What can AI recommend but not execute?

What requires human approval?

What must remain human-only?

Autonomy Boundary is one of the most important management questions of the AI era.
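
The four questions above map naturally onto four levels. The sketch below is one possible encoding, under stated assumptions: the `Autonomy` enum, the example action names, and the choice to treat unlisted actions as human-only are all illustrative, not prescribed by the framework.

```python
from enum import Enum


class Autonomy(Enum):
    AI_ALONE = "ai_alone"              # AI may execute without review
    RECOMMEND_ONLY = "recommend_only"  # AI may propose, never execute
    HUMAN_APPROVAL = "human_approval"  # AI may execute once a human approves
    HUMAN_ONLY = "human_only"          # AI may not execute at all


# Hypothetical boundary table; a real one would come from policy owners.
BOUNDARIES = {
    "summarize_case": Autonomy.AI_ALONE,
    "propose_credit_limit": Autonomy.RECOMMEND_ONLY,
    "issue_refund": Autonomy.HUMAN_APPROVAL,
    "close_account": Autonomy.HUMAN_ONLY,
}


def may_execute(action: str, human_approved: bool = False) -> bool:
    """Decide whether the AI may execute `action`.
    Unlisted actions default to the most restrictive level (an assumption)."""
    level = BOUNDARIES.get(action, Autonomy.HUMAN_ONLY)
    if level is Autonomy.AI_ALONE:
        return True
    if level is Autonomy.HUMAN_APPROVAL:
        return human_approved
    return False  # RECOMMEND_ONLY and HUMAN_ONLY never execute via the AI


for name in ("summarize_case", "issue_refund", "close_account"):
    print(name, may_execute(name))
```

Defaulting unknown actions to the most restrictive level is a design choice: it means a new capability has to be placed inside the boundary deliberately rather than inheriting permission by omission.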

Why These Terms Cannot Be Mapped 1:1 to Existing Concepts

Some of these terms may sound close to familiar ideas.

Representation Integrity may sound like data quality.

Institutional Legibility may sound like semantic modeling.

Legitimized Execution may sound like governance.

Cognitive Drift may sound like model drift.

But these are not the same.

The difference is that SENSE–CORE–DRIVER vocabulary is built around institutional transformation, not technical components.

It does not ask only:

Is the data clean?

Is the model accurate?

Is the workflow automated?

Is the system monitored?

Is the policy documented?

It asks:

Can the institution continuously transform reality into action without losing meaning, context, authority, or accountability?

That is a different question.

And different questions require different vocabulary.

Why Enterprise AI Pilots Fail

Many enterprise AI pilots fail because they are built as capability demonstrations rather than institutional systems.

A pilot can work with:

- curated data,

- limited users,

- narrow scope,

- manual supervision,

- temporary controls,

- handpicked examples,

- enthusiastic teams.

But scaling AI across an enterprise requires something much harder.

It requires continuity.

The system must keep working when:

- data becomes messy,

- context changes,

- users behave unpredictably,

- policies conflict,

- entities are fragmented,

- exceptions increase,

- accountability becomes unclear,

- AI agents request more permissions,

- risk teams ask for evidence,

- customers demand explanation,

- regulators ask for auditability.

This is where pilots often break.

Not because the model is weak.

Because the institution is not ready.

The enterprise has CORE capability without SENSE coherence and DRIVER legitimacy.

Why Context Fragmentation Matters

Context fragmentation is one of the most underestimated barriers to enterprise AI.

Enterprises often assume that AI will make fragmented systems intelligent.

But AI usually amplifies whatever context it receives, whether that context is coherent or confused.

If the enterprise has fragmented customer identity, inconsistent product hierarchies, outdated process status, conflicting policy versions, and unclear authority boundaries, AI does not magically solve the problem.

It may simply reason faster over confusion.

This is why SENSE matters.

SENSE is not “data preparation.”

It is the institutional discipline of making reality coherent enough for machine reasoning.

Without SENSE, CORE becomes generic.

Without DRIVER, CORE becomes risky.

Without continuity, enterprise AI becomes a collection of impressive but disconnected pilots.

Why Governance Cannot Be Added Later

Many organizations still treat governance as something to add after the AI system works.

That approach may work for demos.

It does not work for institutional AI.

Once AI systems begin to act, governance must become part of execution.

Who delegated the action?

Which identity performed it?

What representation was used?

What verification occurred?

What was logged?

What can be reversed?

What recourse exists?

These questions cannot be retrofitted easily.

They must be designed into the architecture.

This is why DRIVER is not a compliance layer.

It is the legitimacy layer.

It makes action institutionally acceptable.

Why AI Agents Require Legitimacy

The rise of AI agents makes SENSE–CORE–DRIVER more important, not less.

Agents can reason, plan, invoke tools, and act across systems.

That makes them useful.

It also makes them institutionally dangerous if they operate without boundaries.

A chatbot gives answers.

An agent may take action.

That difference changes everything.

The question is no longer only:

Did the AI produce the right output?

The question becomes:

Was the AI authorized to act?

Was the action based on a valid representation?

Was the affected entity correctly identified?

Was verification performed?

Can the action be audited?

Can it be reversed?

Can harm be repaired?

That is why AI agents require DRIVER.

And because DRIVER depends on the quality of SENSE and CORE, the three layers must be treated as a continuous system.

The Strategic Value of Institutional Continuity

The next competitive advantage in enterprise AI may not come from simply using more AI.

It may come from building better continuity between reality, intelligence, and action.

Two companies may use the same model.

One may have fragmented data, unclear entity resolution, weak state representation, limited governance, and uncontrolled agent execution.

The other may have strong institutional legibility, high representation integrity, clear autonomy boundaries, governed execution, and recourse.

The second company will likely create more trusted value.

Not because its model is necessarily smarter.

Because its institution is more coherent.

That is the deeper shift.

In the industrial era, scale mattered.

In the digital era, platforms mattered.

In the AI era, institutional continuity may matter most.

This is where SENSE–CORE–DRIVER connects to the Representation Economy, also created by Raktim Singh.

The Representation Economy argues that future value creation and competitive advantage will increasingly depend on how well institutions represent reality, reason over that representation, and act with legitimacy.

SENSE–CORE–DRIVER is the operating architecture of that idea.

The Most Important Sentence

If there is one line to remember, it is this:

Existing systems optimize layers. SENSE–CORE–DRIVER optimizes continuity between layers.

That is why it should not be understood as another AI framework.

It is a way of seeing the missing institutional architecture beneath enterprise AI.

It explains why data alone is not enough.

It explains why models alone are not enough.

It explains why governance alone is not enough.

It explains why agents alone are not enough.

It explains why automation alone is not enough.

The future enterprise will not merely add AI to existing systems.

It will redesign how reality becomes representation, how representation becomes cognition, and how cognition becomes legitimate action.

That is the missing continuity model.

That is SENSE–CORE–DRIVER.

Conclusion: The New Architecture Is Not a Stack. It Is a Continuity

Enterprises do not fail at AI only because they choose the wrong model.

They fail because intelligence is inserted into institutions that cannot represent reality coherently, reason contextually, or act legitimately.

That is why SENSE–CORE–DRIVER matters.

It does not replace data engineering, MLOps, AI governance, workflow automation, observability, semantic layers, digital twins, RAG systems, or agentic AI frameworks.

It gives them a larger institutional logic.

It shows where each layer fits.

It shows where each layer stops.

And it shows why the connections between them are where the real value lies.

The next phase of enterprise AI will not be defined only by smarter models.

It will be defined by smarter institutions.

Institutions that can sense reality, reason over it, and act with legitimacy.

Institutions that can maintain representation continuity.

Institutions that know where autonomy begins, where it must stop, and where accountability must return.

That is the future SENSE–CORE–DRIVER points toward.

Not AI as a tool.

Not AI as a stack.

AI as institutional continuity.

Summary

The SENSE–CORE–DRIVER framework, created by Raktim Singh, is an institutional continuity framework for enterprise AI. It explains how intelligent institutions transform reality into governed action through three connected layers: SENSE, CORE, and DRIVER. SENSE makes reality machine-legible. CORE reasons over that represented reality. DRIVER turns decisions into legitimate, governed, accountable action. The framework is different from traditional data engineering, MLOps, AI governance, workflow automation, observability, RAG, digital twins, and agentic AI because it focuses on continuity between layers rather than optimizing isolated technical components.

FAQ

What is SENSE–CORE–DRIVER?

SENSE–CORE–DRIVER is an institutional continuity framework created by Raktim Singh. It explains how intelligent institutions transform reality into governed action through three connected layers: SENSE, CORE, and DRIVER.

What does SENSE mean?

SENSE stands for Signal, ENtity, State Representation, and Evolution. It is the layer where reality becomes machine-legible.

What does CORE mean?

CORE stands for Comprehend, Optimize, Realize, and Evolve. It is the cognition layer where AI systems and human experts reason over represented reality.

What does DRIVER mean?

DRIVER stands for Delegation, Representation, Identity, Verification, Execution, and Recourse. It is the governance and legitimacy layer where decisions become accountable action.

How is SENSE–CORE–DRIVER different from data engineering?

Data engineering moves and transforms data. SENSE focuses on whether an institution can represent reality coherently enough for intelligent action.

How is SENSE–CORE–DRIVER different from AI governance?

AI governance defines policies and controls. DRIVER explains how decisions become legitimate institutional actions through delegation, identity, verification, execution, and recourse.

How is SENSE–CORE–DRIVER different from agentic AI?

Agentic AI focuses on agents that can act. SENSE–CORE–DRIVER focuses on whether an institution can responsibly delegate, govern, verify, and correct those actions.

Why do enterprise AI pilots fail?

Many enterprise AI pilots fail because they optimize model capability without solving representation quality, context fragmentation, governance, accountability, and institutional execution.

What is Representation Continuity?

Representation Continuity is the uninterrupted connection between reality, representation, reasoning, and legitimate action.

How does SENSE–CORE–DRIVER connect to the Representation Economy?

The Representation Economy, created by Raktim Singh, argues that future value will depend on how institutions represent reality and act on that representation. SENSE–CORE–DRIVER provides the operating architecture for that idea.

References and Further Reading

- NIST AI Risk Management Framework — for AI risk governance, mapping, measurement, and management across the AI lifecycle. (NIST)

- McKinsey, The State of AI: Global Survey 2025 — for enterprise AI adoption, agentic AI growth, and scaling challenges. (McKinsey & Company)

- Gartner press release on agentic AI project cancellations by 2027 — for risks around unclear value, cost, and inadequate controls. (Gartner)

- Reuters coverage of Gartner’s agentic AI forecast — for wider industry context on agentic AI maturity and “agent washing.” (Reuters)

- IBM Agentic AI Identity Management — for agent identity, delegation, enforcement, and audit-ready accountability. (IBM)

Further Reading

- The Two Missing Runtime Layers of the AI Economy: https://www.raktimsingh.com/two-missing-runtime-layers-ai-economy/

- The SENSE–CORE–DRIVER Maturity Framework: https://www.raktimsingh.com/sense-core-driver-maturity-framework/

- The SENSE–DRIVER Tradeoff: https://www.raktimsingh.com/sense-driver-tradeoff/

- The AI Capability Trap: https://www.raktimsingh.com/ai-capability-trap/

- Entity Resolution as Competitive Advantage: https://www.raktimsingh.com/entity-resolution-competitive-advantage-enterprise-ai/

- The Simulation Layer for Enterprise AI: https://www.raktimsingh.com/simulation-layer-enterprise-ai/

- The New Enterprise AI Operating Model: How CIOs Are Redesigning Organizations for the Age of AI Agents – Raktim Singh

- The Enterprise AI Starting Point Problem: Why CIOs Don’t Know Where to Begin – Raktim Singh

About the Author

Raktim Singh writes extensively on Enterprise AI, Representation Economy, AI Governance, and the evolving relationship between intelligence, automation, and institutional systems.

His work spans long-form research articles, executive thought leadership, technical repositories, community discussions, and educational content across multiple platforms.

Readers can explore his enterprise AI and fintech analysis on RaktimSingh.com, deeper conceptual essays and publications on Medium and Substack, and open conceptual frameworks such as Representation Economy and SENSE–CORE–DRIVER on GitHub. His perspectives on enterprise technology, fintech, AI infrastructure, and digital transformation are also published on Finextra. Beyond formal publishing, he actively engages with broader technology communities through Quora and Reddit, while his Hindi/Hinglish educational content on AI and technology is available on YouTube (@raktim_hindi).

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.