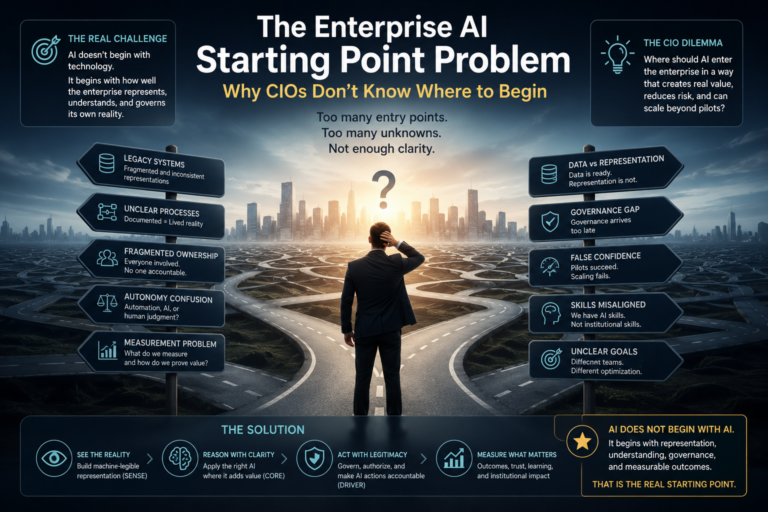

The Enterprise AI Starting Point Problem

Enterprise AI has entered a strange phase.

The technology is advancing faster than most organizations can absorb. AI models are becoming more capable. AI agents can search, summarize, code, reason, generate, classify, recommend, and act across digital systems. Boards are asking for acceleration. Business units are experimenting aggressively. Vendors are promising transformation. Employees are using AI tools with or without formal approval.

And yet, many CIOs are still facing a surprisingly basic question:

Where do we actually begin?

Not where should we run a pilot.

Not which model should we buy.

Not which chatbot should we deploy.

Not which cloud should we choose.

The harder question is this:

Where should AI enter the enterprise in a way that creates real value, reduces risk, and can scale beyond experimentation?

This is the Enterprise AI Starting Point Problem.

It is one of the most underestimated barriers in enterprise AI adoption.

Many organizations assume their AI journey should begin with a technology decision. Choose a model. Choose a cloud. Choose an agent framework. Choose a vector database. Choose a copilot. Choose a governance tool.

But the real starting point is rarely the AI system itself.

The real starting point is the enterprise’s ability to represent its own reality clearly enough for AI to reason, act, and be governed.

That is where most organizations struggle.

Recent enterprise AI research shows that leaders are still wrestling with ROI, safe scaling, workforce readiness, governance, integration, and the move from pilots to production. Deloitte’s 2026 enterprise AI research highlights ROI, ethical practices, workforce readiness, and scaling as central executive concerns. McKinsey’s 2025 global AI survey similarly notes that while AI use is expanding, the transition from pilots to scaled business impact remains unfinished for many organizations. (Deloitte)

The problem is not lack of AI ambition.

The problem is lack of institutional clarity.

Most enterprises do not know:

which processes are ready for AI,

which data can be trusted,

which decisions should be automated,

which workflows require human judgment,

which systems contain the source of truth,

which metrics prove value,

and who is accountable when AI moves from advice to action.

That is why AI adoption often feels like a maze.

The enterprise has many possible entry points, but no obvious first door.

Most enterprise AI projects are not failing because the models are weak. They are failing because enterprises do not know where to begin. Legacy systems, fragmented realities, unclear ownership, weak governance, and shallow measurement frameworks are creating a hidden institutional barrier to AI transformation.

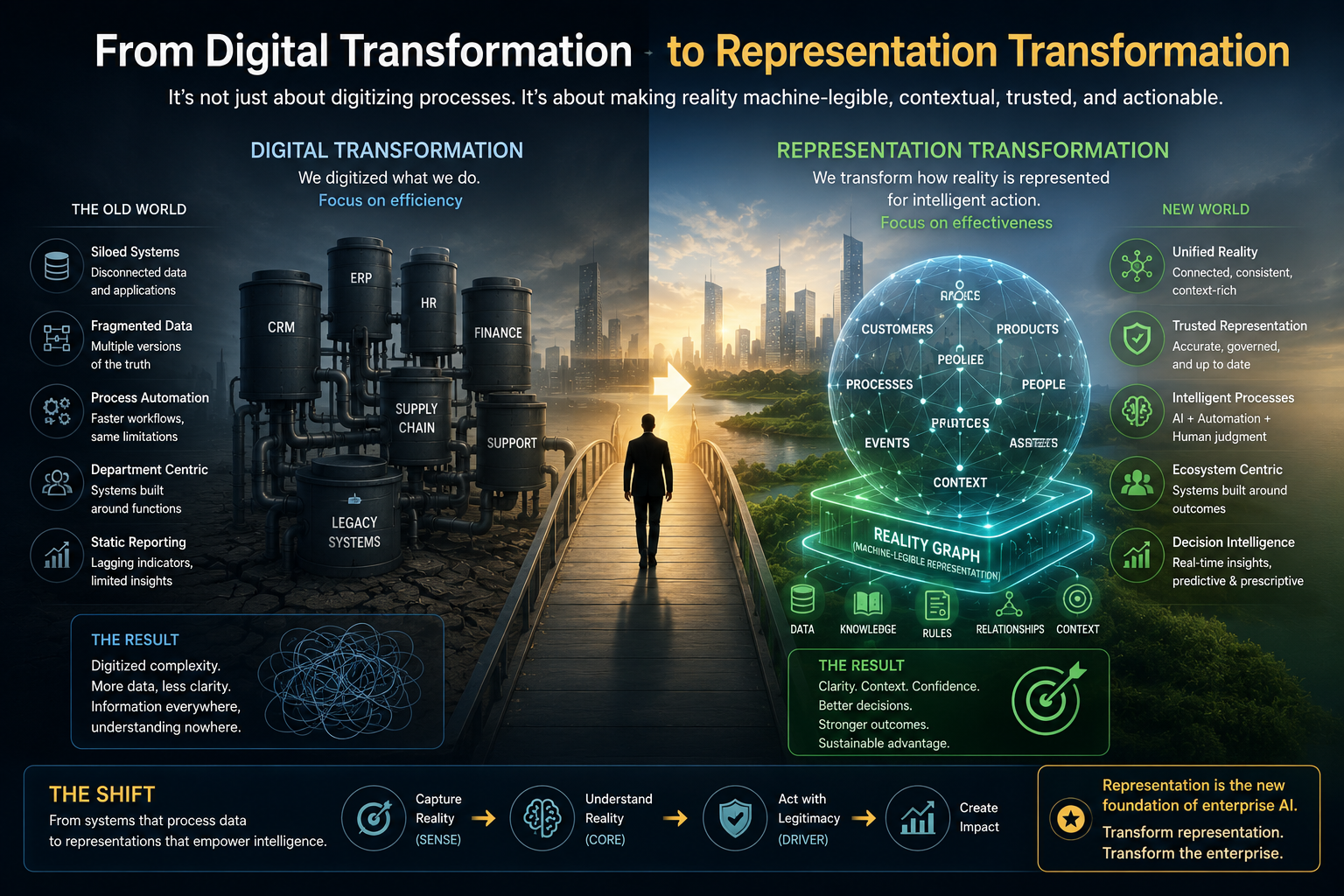

From Digital Transformation to Representation Transformation

For the last two decades, enterprises focused on digital transformation.

They digitized forms, workflows, channels, transactions, customer journeys, supply chains, finance systems, HR systems, and operations.

But digital transformation did not necessarily make the enterprise machine-understandable.

A process can be digital and still be unclear.

A record can be stored and still be misleading.

A dashboard can be real-time and still not represent reality.

A workflow can be automated and still hide human judgment.

A system can be modernized and still remain disconnected from the larger operating context.

AI exposes this gap.

Traditional software needed structured inputs and predictable rules.

AI needs something deeper:

context,

meaning,

state,

authority,

feedback,

and accountability.

This is where the Representation Economy becomes important.

In the Representation Economy, advantage does not come only from having better models. It comes from being better represented to machines, institutions, ecosystems, and decision systems.

AI does not act on reality directly.

AI acts on representations of reality.

If those representations are incomplete, stale, fragmented, biased, or unauthoritative, AI will make poor decisions even when the model is powerful.

This is why the starting question for enterprise AI is not:

Where can we apply AI?

The better question is:

Where is our reality represented well enough for AI to help?

That is the shift from digital transformation to representation transformation.

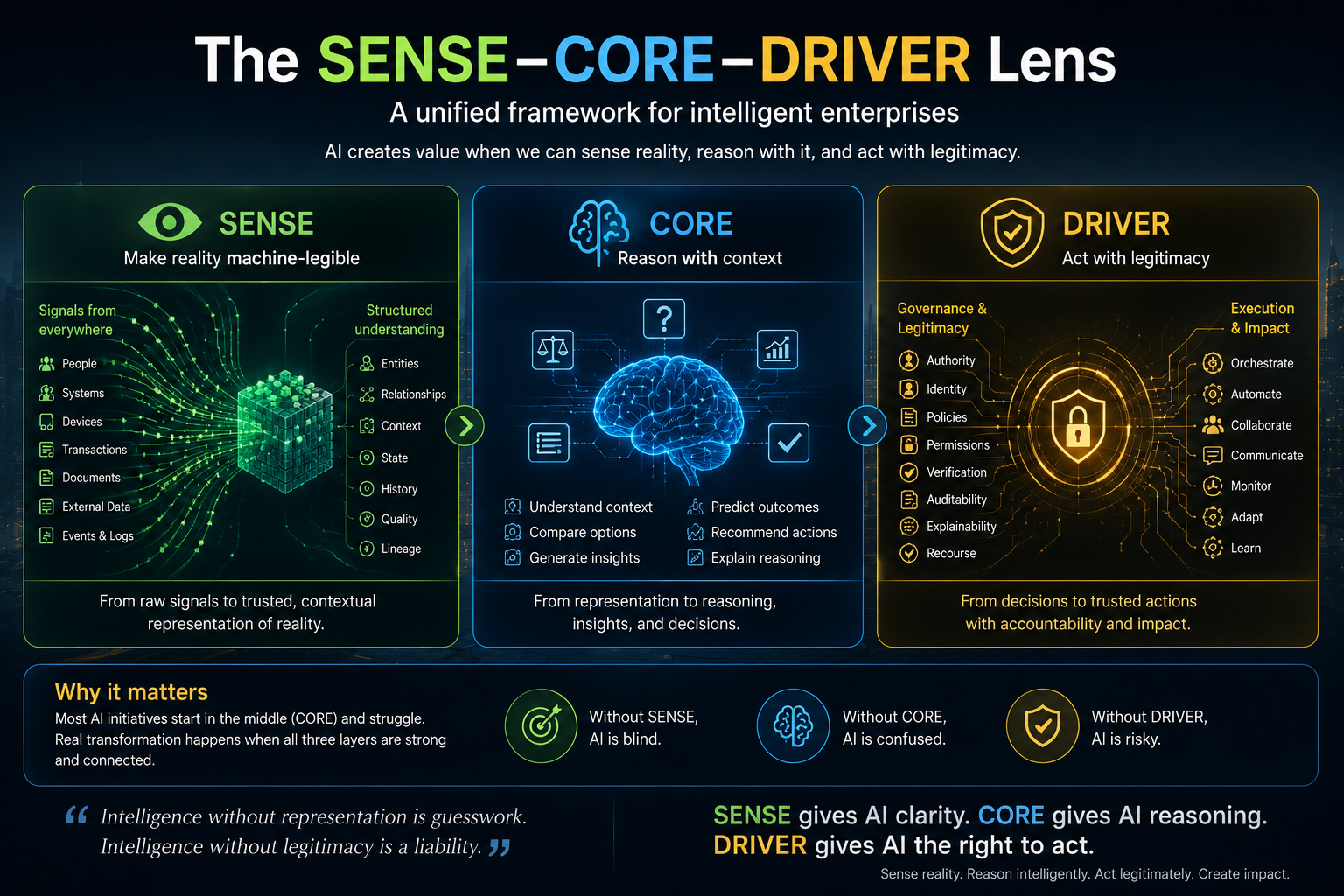

The SENSE–CORE–DRIVER Lens

The SENSE–CORE–DRIVER framework helps explain why many enterprise AI programs struggle.

SENSE is the layer where reality becomes machine-legible. It includes signals, entities, state representation, and evolution over time.

CORE is the reasoning layer. It is where AI interprets context, compares options, generates recommendations, and supports decisions.

DRIVER is the legitimacy and execution layer. It defines delegation, authority, identity, verification, execution, and recourse.

Most AI programs begin in CORE.

They ask:

Which model is smarter?

Which agent can reason better?

Which copilot can answer faster?

Which workflow can be automated?

But enterprise AI failure often happens before and after CORE.

Before CORE, SENSE is weak. The organization does not have a clean, coherent, trusted, current representation of reality.

After CORE, DRIVER is weak. The organization has not defined who authorized the action, how it is verified, how it is audited, how it is reversed, and who is accountable.

That is why the starting point problem exists.

Enterprises are trying to insert AI reasoning into institutional environments that are not yet ready to sense or govern intelligent action.

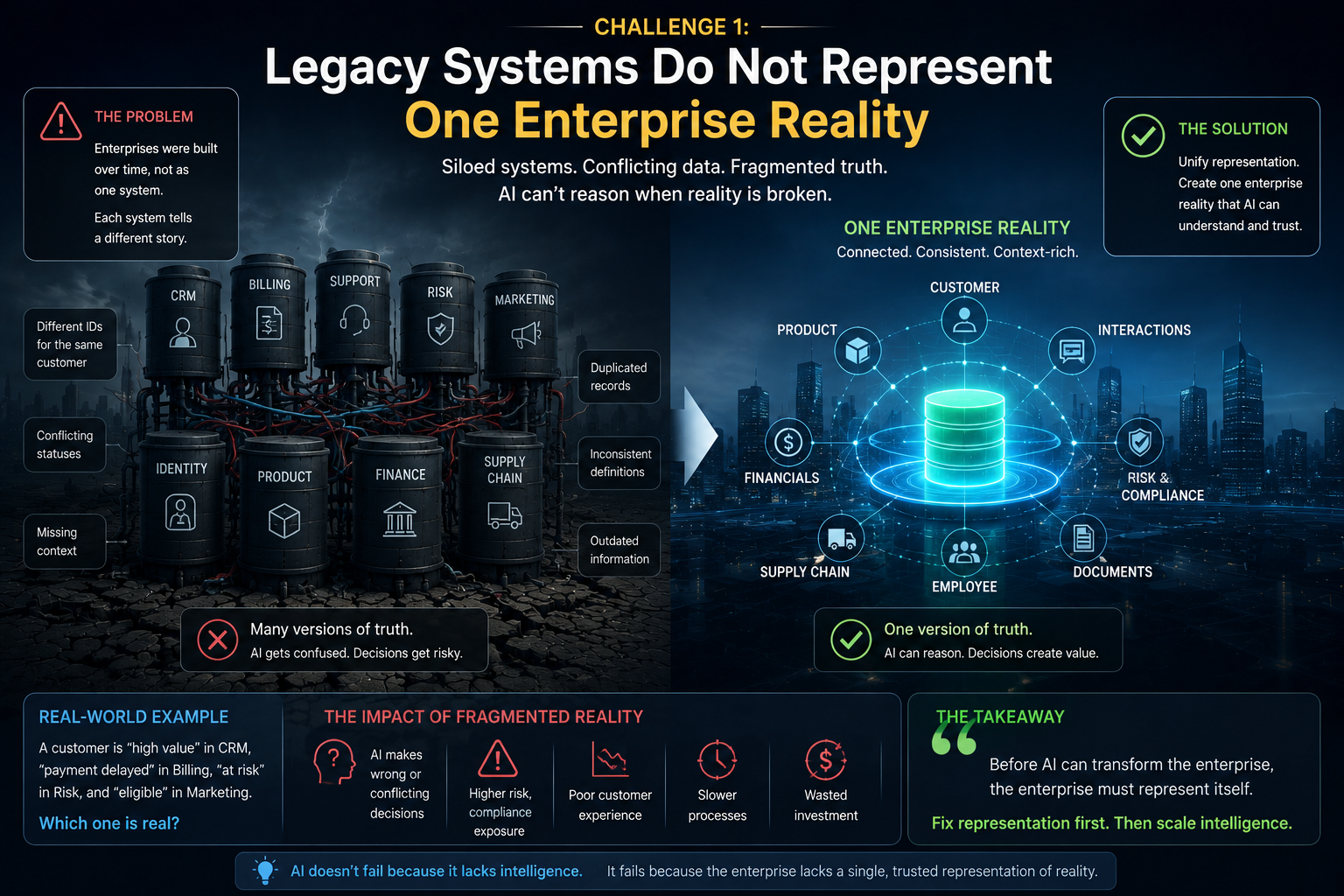

Challenge 1: Legacy Systems Do Not Represent One Enterprise Reality

Most large enterprises were not built as one coherent system.

They grew through departments, regions, acquisitions, products, compliance requirements, vendor implementations, and decades of business change.

The result is a fractured architecture of reality.

Customer data may live in CRM, billing, support, marketing, risk, identity, and product systems. Each system may define the customer differently.

A supplier may appear as a legal entity in procurement, a payment recipient in finance, a risk object in compliance, and an operational dependency in supply chain.

An employee may be represented differently in HR, access management, project allocation, learning systems, travel systems, and performance systems.

A product may have one identity in sales, another in inventory, another in regulatory reporting, and another in service operations.

This is not just a data problem.

It is a representation problem.

AI cannot reason well if the enterprise does not know what entity it is reasoning about.

Consider a simple customer retention use case.

An AI system is asked to recommend which customers should receive a retention offer. The CRM says the customer is high value. The support system shows unresolved complaints. The billing system shows delayed payments. The product system shows declining usage. The risk system marks the account as sensitive. The marketing system says the customer is eligible for a campaign.

Which representation should AI trust?

If the enterprise cannot resolve that question, AI will not solve the problem.

It will only accelerate confusion.

This is why legacy systems should not be viewed only as technical debt. In many cases, they contain the history, business logic, process memory, exception patterns, and operational intelligence of the enterprise. The challenge is not simply to replace them. The challenge is to make their knowledge usable, governable, and machine-legible for AI. Recent commentary has also emphasized that legacy systems can contain strategic enterprise knowledge rather than being merely obsolete infrastructure. (The Times of India)

The question is not:

How quickly can we remove legacy systems?

The better question is:

How do we convert legacy reality into trusted representation?
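Returning to the customer retention example, a first step toward trusted representation is to surface the disagreement between systems instead of letting a model silently pick one view. The sketch below is illustrative only. The system names mirror the example above, but the `SystemView` type, the `favors_offer` flag, and both helper functions are hypothetical, and which signals argue for or against an offer is an assumption an enterprise would have to define for itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemView:
    """One system's view of the same customer (hypothetical structure)."""
    system: str         # e.g. "crm", "billing"
    signal: str         # what that system reports
    favors_offer: bool  # assumed label: does this signal argue for a retention offer?


def find_conflicts(views: list[SystemView]) -> dict[str, list[str]]:
    """Group systems by whether their signal argues for or against the offer."""
    grouped: dict[str, list[str]] = {"for": [], "against": []}
    for view in views:
        grouped["for" if view.favors_offer else "against"].append(view.system)
    return grouped


def needs_resolution(views: list[SystemView]) -> bool:
    """True when systems disagree, so a policy or a person must decide which view wins."""
    grouped = find_conflicts(views)
    return bool(grouped["for"]) and bool(grouped["against"])


# The labels below are assumptions made for illustration, not business rules.
views = [
    SystemView("crm", "high value", True),
    SystemView("support", "unresolved complaints", True),
    SystemView("billing", "delayed payments", False),
    SystemView("product", "declining usage", True),
    SystemView("risk", "sensitive account", False),
    SystemView("marketing", "eligible for campaign", True),
]

print(find_conflicts(views))
print(needs_resolution(views))
```

The point of the sketch is the return value of `needs_resolution`: when it is true, the open question is which representation is authoritative, and no model choice answers that.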

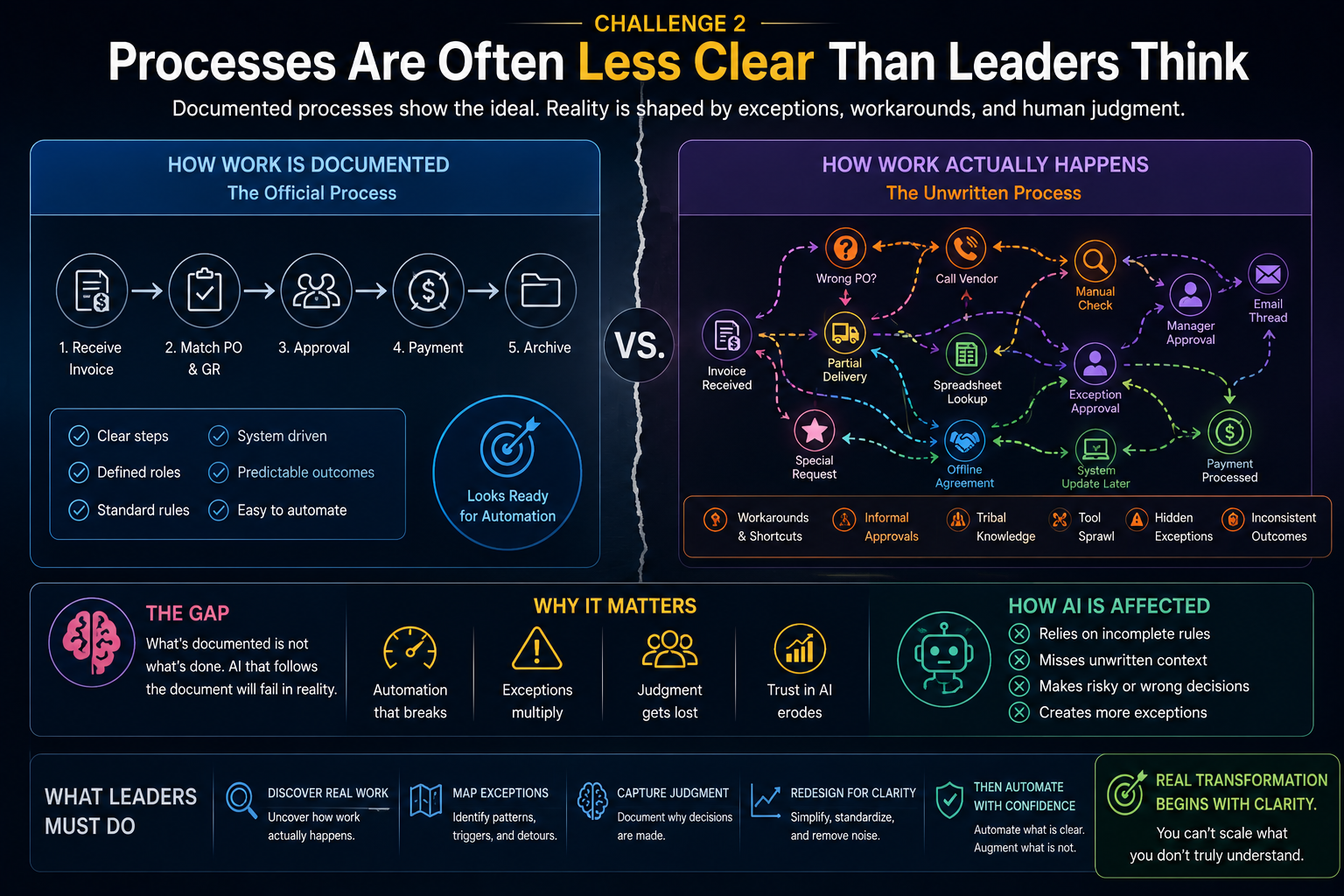

Challenge 2: Processes Are Often Less Clear Than Leaders Think

Many organizations believe they understand their processes because they have process maps, SOPs, workflow tools, and approval matrices.

But real work often happens differently.

People create workarounds.

Teams maintain spreadsheets.

Approvals happen informally.

Exceptions are handled through calls.

Critical context sits in email threads.

Experienced employees know which rule can be bent, which customer needs special handling, which vendor always causes delays, and which escalation route actually works.

AI adoption exposes the difference between the documented process and the lived process.

A process may look ready for automation on paper, but in practice it may depend on tacit judgment.

Consider invoice processing.

At first, it looks like a good AI use case.

Read invoice.

Match purchase order.

Check goods receipt.

Approve payment.

But then reality appears.

Some vendors use non-standard formats.

Some invoices relate to partial deliveries.

Some approvals depend on project urgency.

Some disputes are handled outside the system.

Some exceptions depend on relationship history.

Some rules differ across regions.

If AI is placed into this process too early, it may increase speed but reduce judgment.

The CIO’s problem is not just automation readiness.

It is reality readiness.

Before deciding where AI should act, the enterprise must understand where work is rule-based, where it is exception-heavy, and where it depends on human judgment.

This is why process mining alone is not enough.

Enterprises need process understanding.

They need to know not only how work moves, but why it moves that way.

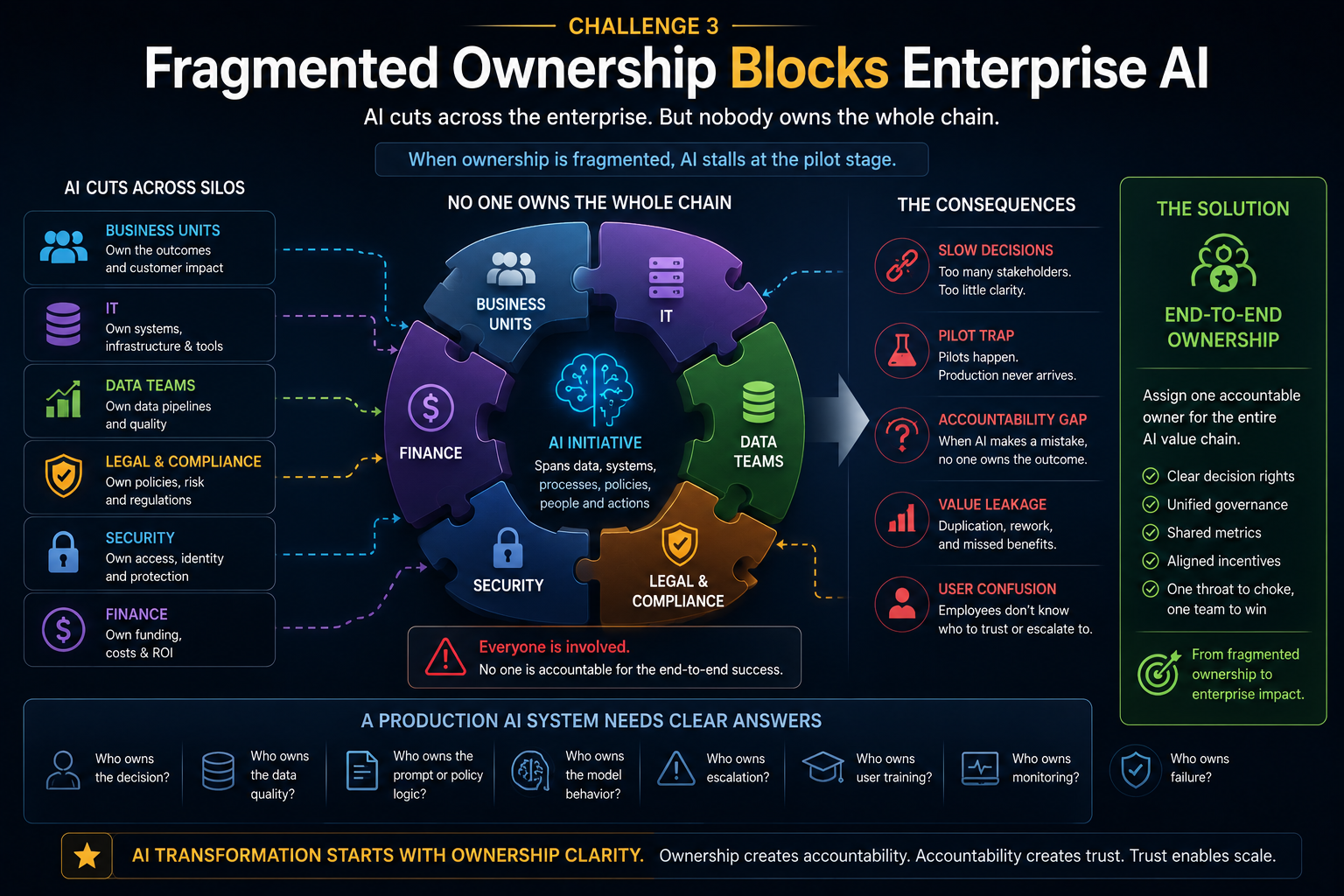

Challenge 3: Fragmented Ownership Blocks Enterprise AI

AI cuts across organizational boundaries.

A customer service AI agent may need data from CRM, product systems, billing, legal policies, complaint history, service workflows, and escalation rules.

Who owns the use case?

The customer service head owns the experience.

IT owns systems.

Data teams own pipelines.

Legal owns policy.

Compliance owns risk.

Security owns access.

Finance owns cost.

Business operations own process outcomes.

This fragmentation creates starting point paralysis.

Everyone agrees AI is important, but nobody fully owns the complete chain from representation to reasoning to action.

This is why many AI initiatives remain trapped as pilots.

Pilots can survive with partial ownership.

Production systems cannot.

A production AI system needs clear answers:

Who owns the decision?

Who owns data quality?

Who owns the prompt or policy logic?

Who owns model behavior?

Who owns escalation?

Who owns user training?

Who owns monitoring?

Who owns failure?

Without ownership clarity, AI becomes everyone’s priority and nobody’s accountability.

This is especially dangerous when AI moves from generating content to influencing decisions or taking action.

A chatbot can be treated as a tool.

An AI agent that updates records, triggers workflows, changes recommendations, or influences customer outcomes becomes part of the enterprise operating system.

That requires decision rights, not just deployment rights.

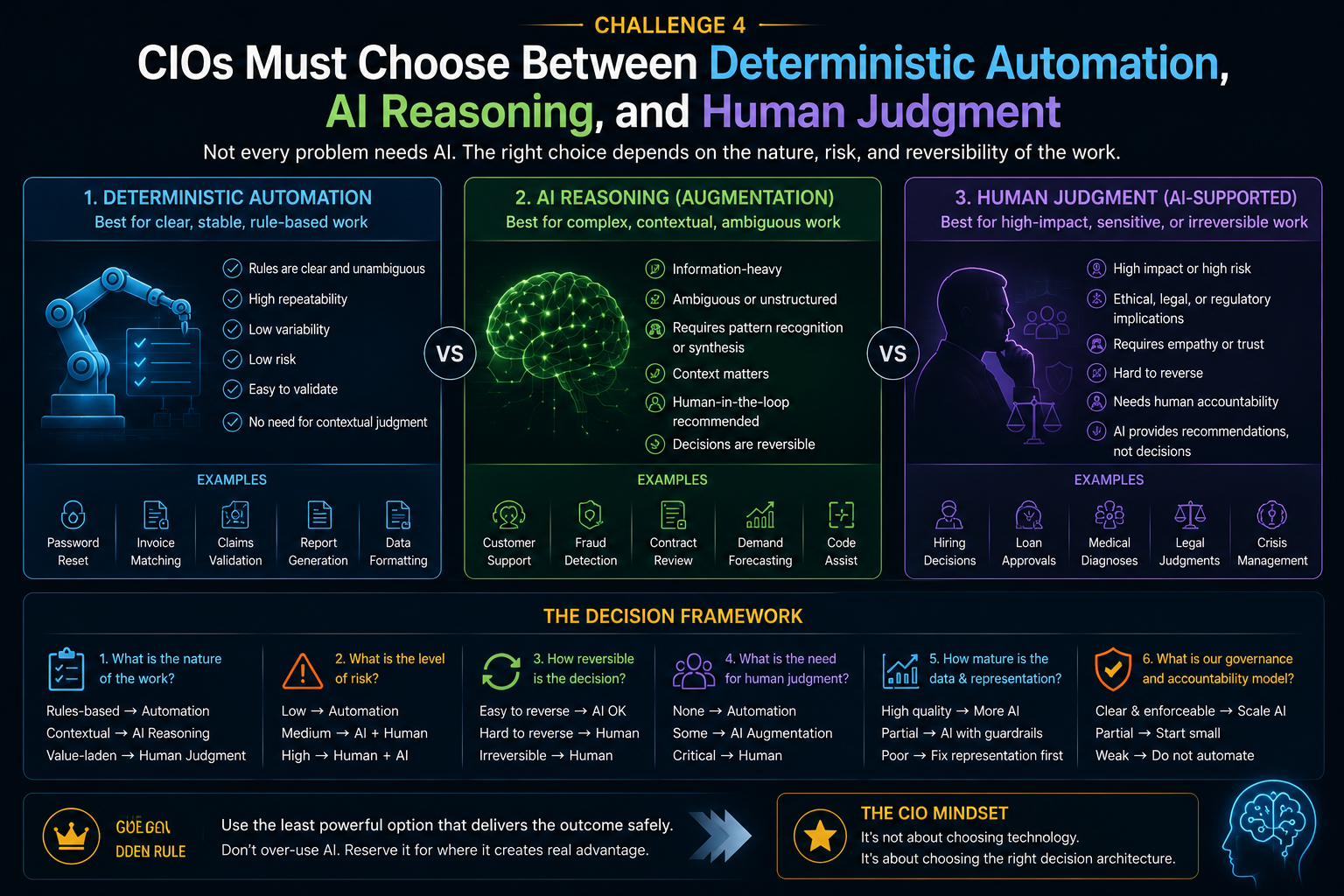

Challenge 4: CIOs Must Choose Between Deterministic Automation, AI Reasoning, and Human Judgment

One of the biggest sources of confusion is that enterprises now have multiple ways to solve a problem.

They can use deterministic automation.

They can use AI reasoning.

They can use human judgment.

Or they can design a hybrid system.

But many organizations do not have a clear method for deciding which mode belongs where.

A password reset may not need AI reasoning. It needs deterministic automation.

A regulatory interpretation may benefit from AI-assisted research, but final accountability should remain human.

A fraud alert may need AI pattern recognition, deterministic rule checks, and human escalation for high-risk cases.

A customer complaint may need AI summarization, sentiment detection, policy retrieval, and human empathy.

A supply chain disruption may need AI scenario analysis, but the decision to change supplier commitments may require human approval.

This is where many CIOs feel stuck.

The question is not whether AI can be used.

The question is whether AI should reason, recommend, decide, or act.

The starting point is different depending on the task.

If the task is stable, repeatable, low-risk, and rule-based, start with deterministic automation.

If the task is information-heavy, ambiguous, contextual, and reversible, start with AI assistance.

If the task is high-impact, legally material, reputationally sensitive, or difficult to reverse, start with human judgment supported by AI, not replaced by AI.

This sounds simple.

But most enterprises have not mapped work this way.

That is why AI adoption becomes scattered.

The organization launches many pilots, but lacks an autonomy doctrine.
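The three starting rules in this section can be written down as a small triage function, which is one possible seed for an autonomy doctrine. This is a minimal sketch under stated assumptions: the `Task` fields, the `Mode` names, and the `starting_mode` function are hypothetical, and checking the high-stakes rule first is a design choice made here, not something the rules themselves dictate.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    DETERMINISTIC_AUTOMATION = "deterministic automation"
    AI_ASSISTANCE = "AI assistance"
    HUMAN_JUDGMENT_WITH_AI = "human judgment supported by AI"


@dataclass(frozen=True)
class Task:
    """Hypothetical task profile; each flag would come from a real work-mapping exercise."""
    name: str
    rule_based: bool   # stable, repeatable, rule-based
    low_risk: bool
    reversible: bool
    high_impact: bool  # high-impact, legally material, or reputationally sensitive


def starting_mode(task: Task) -> Mode:
    # Assumption: high-stakes or hard-to-reverse work is checked first,
    # so it can never fall through to automation.
    if task.high_impact or not task.reversible:
        return Mode.HUMAN_JUDGMENT_WITH_AI
    if task.rule_based and task.low_risk:
        return Mode.DETERMINISTIC_AUTOMATION
    # Remaining work is treated as ambiguous, contextual, and reversible.
    return Mode.AI_ASSISTANCE


password_reset = Task("password reset", True, True, True, False)
regulatory_view = Task("regulatory interpretation", False, False, False, True)
complaint_summary = Task("complaint summarization", False, True, True, False)

for task in (password_reset, regulatory_view, complaint_summary):
    print(task.name, "->", starting_mode(task).value)
```

The value of writing the rules this way is less the code than the inputs it demands: someone has to decide, task by task, whether the work is reversible and how much is at stake.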

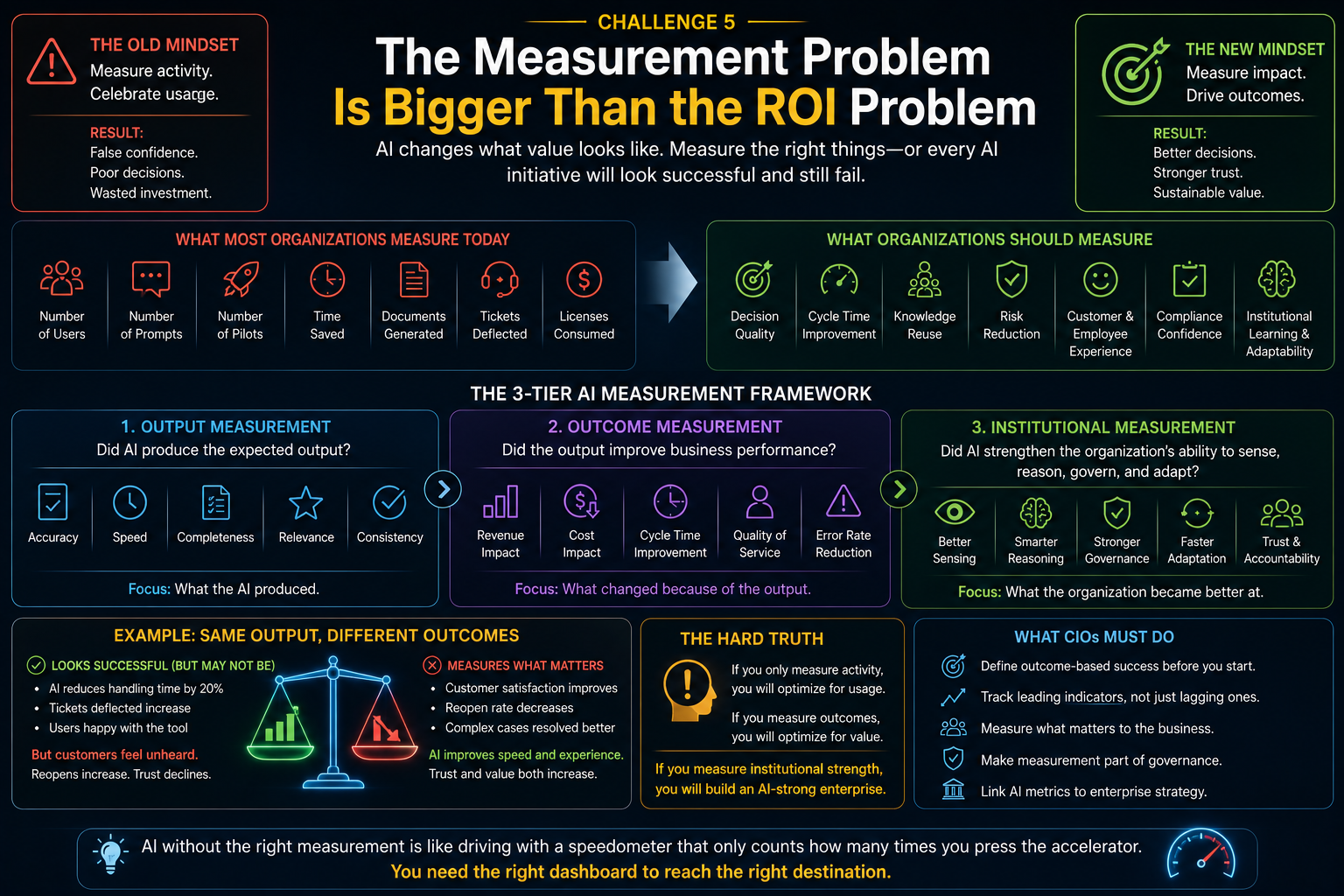

Challenge 5: The Measurement Problem Is Bigger Than the ROI Problem

Many CIOs are also uncertain because they do not know how to measure AI success.

This is not a small problem.

It is central.

Traditional enterprise measurement was designed for software, labor, and process efficiency.

AI changes the object of measurement.

AI affects decision quality, cycle time, knowledge reuse, escalation rates, employee judgment, customer experience, operational resilience, risk reduction, compliance confidence, learning speed, and institutional adaptability.

But many organizations still measure AI through shallow indicators:

number of users,

number of prompts,

number of pilots,

time saved,

licenses consumed,

documents generated,

tickets deflected.

These metrics are not useless.

But they are incomplete.

For example, if an AI coding assistant increases code volume by 30%, is that success?

Not necessarily.

What if defect rates increase?

What if maintainability declines?

What if junior developers stop learning fundamentals?

What if architecture coherence weakens?

What if review burden shifts to senior engineers?

What if security vulnerabilities increase?

Similarly, if a customer service AI reduces average handling time, is that success?

Not always.

What if customers feel unheard?

What if complex cases are mishandled?

What if complaints are closed faster but reopened more often?

What if the AI optimizes speed at the cost of trust?

AI measurement must go beyond productivity.

It must measure whether the institution is making better decisions, acting more responsibly, learning faster, and becoming more trustworthy.

This is why the measurement problem is bigger than the ROI problem.

ROI asks:

Did we get financial return?

The measurement problem asks:

Do we even know what kind of value AI is creating or destroying?

That requires a new measurement architecture.

The measurement problem has three layers.

First, output measurement: Did AI produce the expected output?

Second, outcome measurement: Did the output improve business performance?

Third, institutional measurement: Did AI improve the organization’s ability to sense, reason, govern, and adapt?

Most enterprises are stuck at the first layer.

That is why they struggle to know where to begin.

If you cannot measure readiness or value, every starting point looks equally attractive and equally risky.

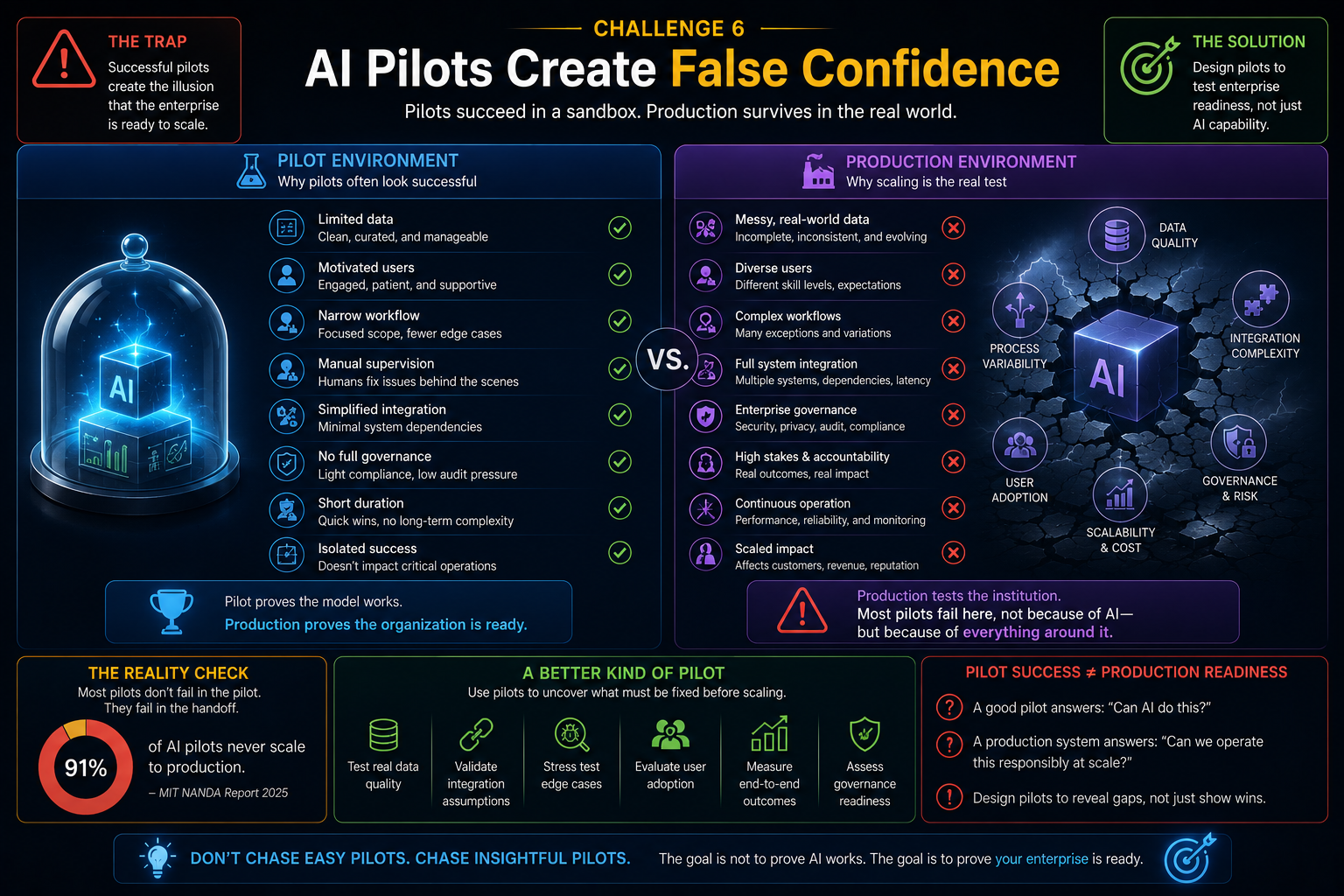

Challenge 6: AI Pilots Create False Confidence

AI pilots often succeed because they are protected from full enterprise complexity.

They use limited data.

They involve motivated users.

They avoid hard integration.

They operate in narrow workflows.

They are manually supervised.

They bypass legacy constraints.

They do not face full audit, security, compliance, cost, and scale requirements.

Then leaders ask:

Why can’t we scale this?

The answer is simple.

The pilot tested the AI model.

Production tests the institution.

Production asks harder questions:

Can this work across business units?

Can it handle messy data?

Can it respect access rules?

Can it integrate with systems of record?

Can it explain decisions?

Can it be monitored?

Can it be stopped?

Can it be reversed?

Can it survive policy changes?

Can it maintain performance over time?

Can it produce measurable business value?

This is why many AI programs get trapped between demo and deployment. Harvard Business Review has also warned against running too many disconnected AI pilots, because experimentation without strategic integration often produces marginal efficiencies instead of transformation. (Harvard Business Review)

The starting point problem is therefore not solved by choosing easy pilots.

It is solved by choosing pilots that reveal enterprise readiness.

A good AI pilot should not merely prove that AI can generate an output.

It should reveal what the enterprise must fix in SENSE, CORE, and DRIVER before AI can scale.

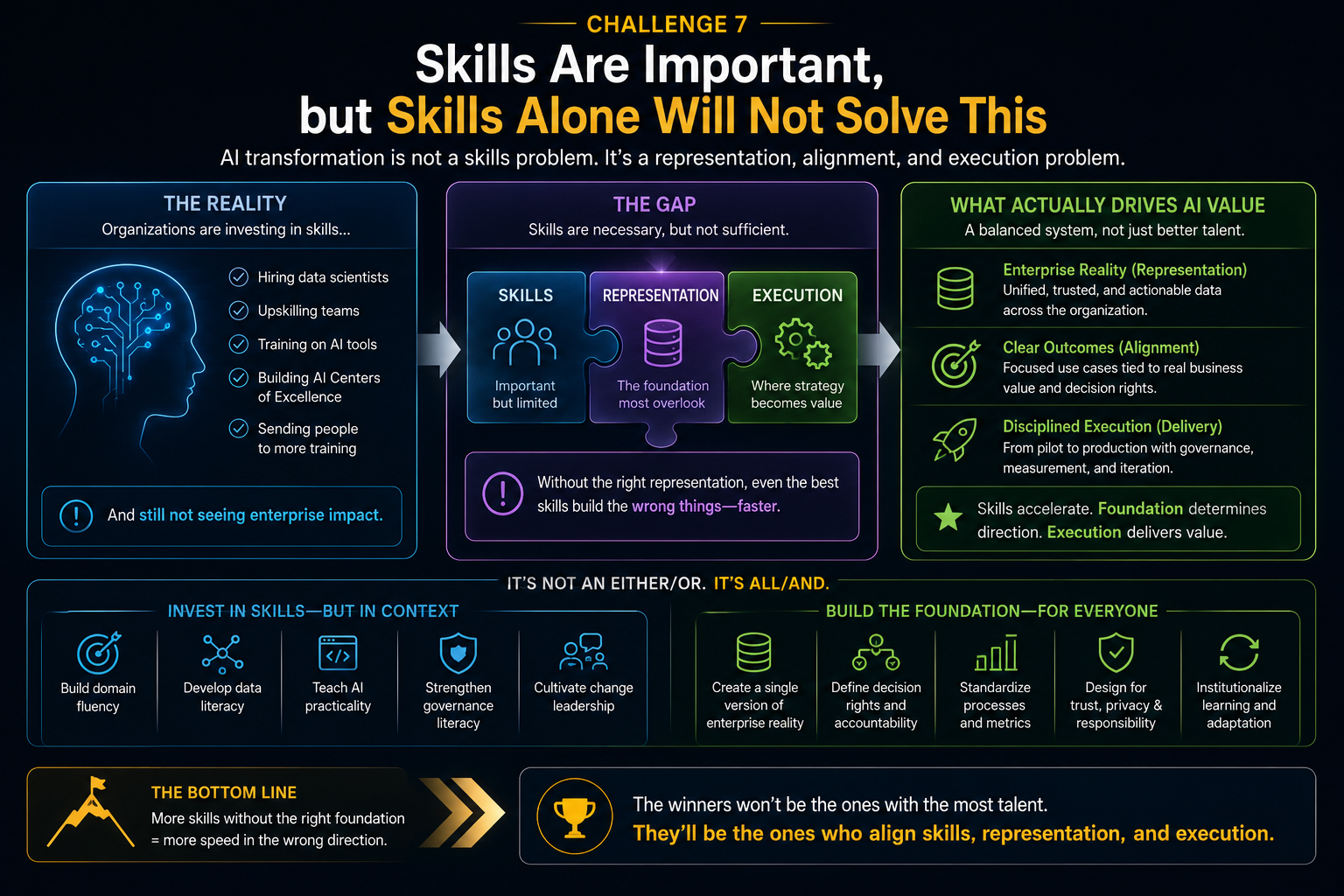

Challenge 7: Skills Are Important, but Skills Alone Will Not Solve This

Skills are clearly a major adoption barrier.

But the skills problem is often misunderstood.

Enterprises assume they need more prompt engineers, data scientists, AI architects, and automation specialists.

They do.

But they also need new institutional skills:

process discovery,

decision mapping,

representation design,

AI risk interpretation,

human-AI workflow design,

measurement design,

escalation architecture,

recourse design,

AI operating governance.

The future enterprise AI skill is not only “how to use AI.”

It is “how to redesign work around intelligent systems without losing accountability.”

That is a very different capability.

A business analyst who understands process reality may become more important than a model expert.

A domain expert who understands exceptions may become more important than a prompt library.

A governance architect who can define authority boundaries may become more important than another dashboard.

A CIO must therefore ask more than:

Do we have AI skills?

The better question is:

Do we have the institutional skills to decide where AI belongs?

McKinsey’s 2025 AI survey also indicates that high-performing organizations are more likely to have defined practices for human validation of model outputs and broader management practices spanning strategy, talent, operating model, technology, data, adoption, and scaling. (McKinsey & Company)

That is the point.

AI success is not only a technical capability.

It is an operating capability.

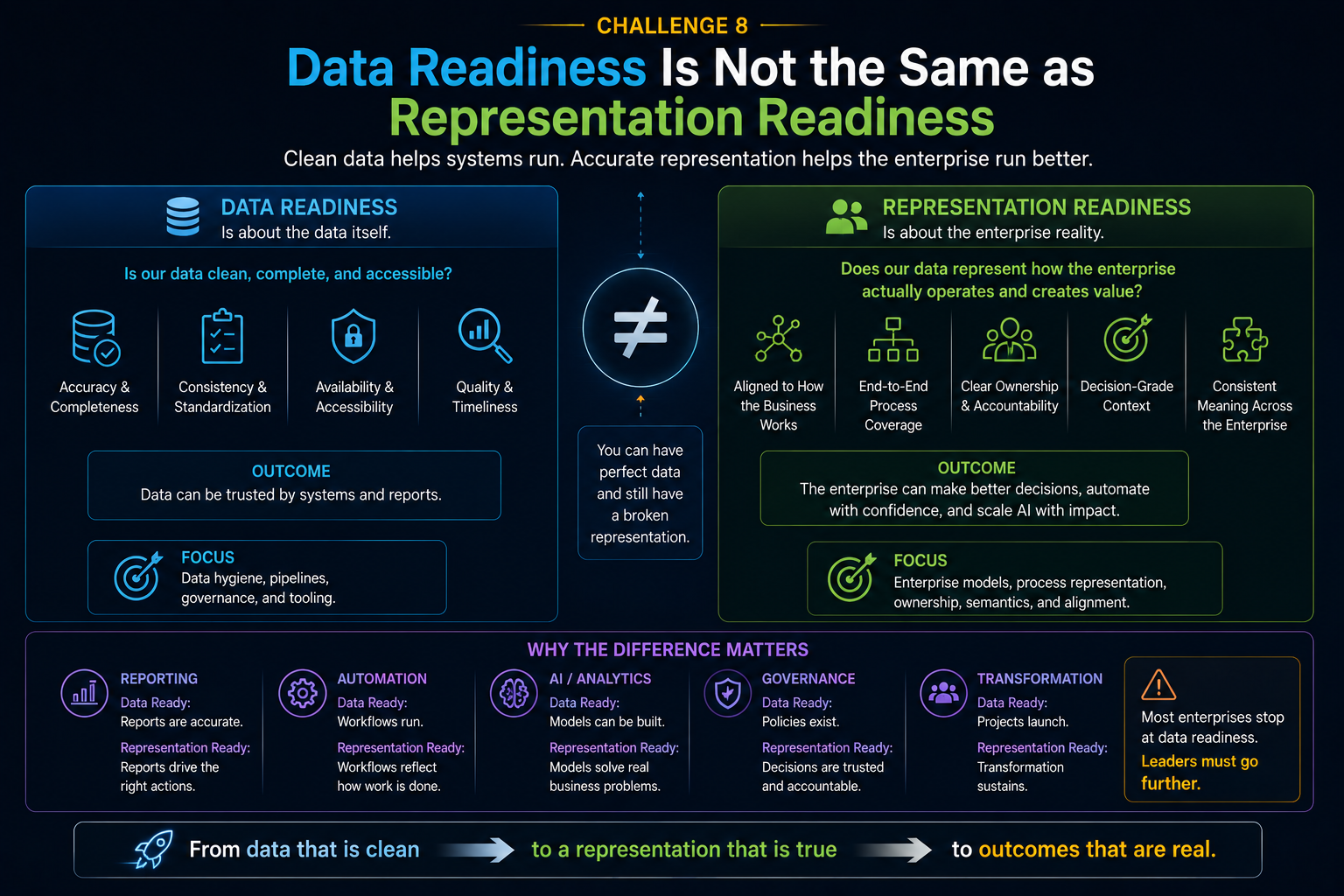

Challenge 8: Data Readiness Is Not the Same as Representation Readiness

Many AI roadmaps begin with data readiness.

That is necessary.

But it is not sufficient.

Data readiness asks:

Is the data available?

Is it clean?

Is it complete?

Is it accessible?

Is it secure?

Representation readiness asks deeper questions:

Does the data represent the right entity?

Is the entity identity consistent across systems?

Is the current state accurate?

Is the history meaningful?

Are relationships captured?

Are exceptions visible?

Is context preserved?

Is the representation trusted enough for action?

Does the system know when the representation is incomplete?

A bank may have data about a customer. But does it have a coherent representation of the customer’s financial situation, intent, risk, product journey, service history, and consent boundaries?

A manufacturer may have machine sensor data. But does it have a coherent representation of asset health, maintenance history, operator behavior, environmental context, supplier constraints, and production urgency?

A retailer may have purchase data. But does it have a coherent representation of demand, substitution behavior, inventory truth, local preference, promotion impact, and supply uncertainty?

AI adoption begins where representation quality is high enough to support reasoning and action.

Where representation is weak, the first step is not AI deployment.

The first step is representation repair.

This is a crucial distinction.

Data readiness prepares information.

Representation readiness prepares reality.

Challenge 9: Governance Often Arrives Too Late

In many organizations, innovation teams build AI pilots first and bring governance teams later.

That worked for lightweight experimentation.

It does not work for enterprise AI.

Governance cannot be an approval layer added after the system is built.

It must be designed into the AI system from the beginning.

Why?

Because AI changes the nature of governance.

Traditional governance reviewed systems, processes, access, and controls.

AI governance must also review model behavior, prompt behavior, tool access, context retrieval, reasoning paths, autonomy limits, human escalation, cost exposure, failure modes, monitoring, and recourse.

When AI systems act, governance must shift from static policy to runtime control.

This is the DRIVER layer.

If DRIVER is weak, CIOs hesitate to start because every use case feels risky.

If DRIVER is strong, CIOs can start with bounded autonomy: limited permissions, clear escalation, reversible actions, identity-bound execution, and measurable outcomes.

The starting point becomes safer when governance is not a gate at the end but an architecture from the beginning.
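Bounded autonomy, as described above, can be illustrated with a runtime check that sits between an AI agent and the systems it touches. The sketch below is an assumption-laden illustration, not a reference design: `ActionRequest`, `AutonomyPolicy`, `authorize`, and the agent and action names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActionRequest:
    agent_id: str     # identity the action is bound to
    action: str
    reversible: bool  # can the action be undone if it turns out to be wrong?


@dataclass(frozen=True)
class AutonomyPolicy:
    # agent_id -> set of actions that agent is permitted to request
    allowed_actions: dict[str, frozenset[str]]


def authorize(request: ActionRequest, policy: AutonomyPolicy) -> str:
    """Return 'execute', 'escalate', or 'deny' for a requested action."""
    permitted = policy.allowed_actions.get(request.agent_id, frozenset())
    if request.action not in permitted:
        return "deny"      # limited permissions: unknown agents and actions are refused
    if not request.reversible:
        return "escalate"  # clear escalation: irreversible actions go to a human
    return "execute"       # reversible and permitted: the agent may act


policy = AutonomyPolicy({"support-agent": frozenset({"draft_reply", "issue_refund"})})

print(authorize(ActionRequest("support-agent", "draft_reply", True), policy))
print(authorize(ActionRequest("support-agent", "issue_refund", False), policy))
print(authorize(ActionRequest("unknown-agent", "draft_reply", True), policy))
```

A real control of this kind would also log every decision for audit and measurement. The sketch leaves that out to stay short.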

Enterprise AI is moving from capability to control. Rasa’s 2026 conversational AI report found that “black box” issues and compliance are the top challenges for many leaders, ahead of integration and deployment complexity. (Rasa)

That confirms a broader shift.

The enterprise question is no longer only:

Is AI smart enough?

It is now:

Can we understand, govern, and stand behind what AI does?

Challenge 10: Enterprises Do Not Know Which Reality to Optimize

AI is powerful because it can optimize.

But optimization is dangerous when the goal is unclear.

Should the AI optimize for speed?

Cost?

Customer satisfaction?

Compliance?

Revenue?

Risk reduction?

Employee experience?

Long-term resilience?

Different functions answer differently.

A sales team may want faster conversion.

A risk team may want stronger controls.

A customer team may want empathy.

A finance team may want cost reduction.

A compliance team may want auditability.

An operations team may want throughput.

AI forces the enterprise to confront trade-offs that were previously hidden inside human judgment.

This is another reason CIOs do not know where to begin.

The issue is not lack of use cases.

It is that there are too many possible optimization goals.

A strong starting point requires goal clarity.

Before deploying AI, leaders must ask:

What outcome are we improving?

What risk are we increasing?

What human judgment are we changing?

What behavior will the AI incentivize?

What could go wrong if the AI becomes very effective?

Who benefits from the optimization?

Who carries the downside?

These are not philosophical questions.

They are architecture questions.

Because once AI is embedded into workflows, the optimization logic becomes part of how the institution behaves.

The Hidden Pattern: AI Adoption Fails When Enterprises Start in the Wrong Layer

Most failed AI programs do not fail because the model is useless.

They fail because the organization starts in the wrong layer.

Some start in CORE when SENSE is broken.

They deploy AI reasoning on fragmented reality.

Some start in CORE when DRIVER is missing.

They allow AI to recommend or act without clear authority, verification, escalation, or recourse.

Some start with pilots when the measurement system is weak.

They create activity without evidence.

Some start with tools when ownership is fragmented.

They create adoption without accountability.

Some start with automation when the process actually requires judgment.

They increase speed but reduce trust.

This is why the starting point problem matters.

The wrong starting point does not merely waste money.

It creates institutional confusion.

It makes leaders doubt AI.

It makes employees anxious.

It makes governance teams defensive.

It makes business units impatient.

It makes boards skeptical.

The right starting point, however, creates learning.

It reveals where the enterprise is ready, where it is fragile, and where it must repair its representation of reality before scaling intelligence.

A Better Way to Start: The Enterprise AI Starting Point Diagnostic

CIOs need a different starting method.

Instead of beginning with AI use cases, they should begin with enterprise readiness zones.

The first question should not be:

Where can we use AI?

The first question should be:

Where do we have enough representation quality, decision clarity, governance maturity, and measurement confidence to apply AI safely and usefully?

This diagnostic has seven questions.

- What reality is being represented?

If the use case depends on unclear entities, fragmented records, missing context, or inconsistent state, start with SENSE repair.

- What decision is being improved?

If the decision is not clear, AI will only accelerate ambiguity.

- What level of judgment is required?

If the work is deterministic, do not overuse AI.

If it is ambiguous, AI may help.

If it is high-stakes, keep humans accountable.

- What action can the system take?

Advice, recommendation, drafting, classification, routing, approval, execution, and autonomous action are very different levels of risk.

- Who owns the outcome?

If ownership is fragmented, solve decision rights before scaling AI.

- How will success be measured?

Define outcome and institutional metrics, not just usage metrics.

- How will errors be detected, reversed, and learned from?

If there is no recourse path, autonomy should remain limited.

This diagnostic turns AI adoption from a technology selection exercise into an institutional readiness exercise.

That is the shift CIOs need.
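The seven questions can also be kept as a simple checklist that returns the first step for each unanswered one. The following is a rough sketch, assuming each question can be reduced to a yes or not-yet answer, which is a simplification. The keys, the `DIAGNOSTIC` list, the `open_gaps` function, and the wording of some remediation steps are hypothetical paraphrases of the guidance above.

```python
# Each entry: (key, diagnostic question, first step when the answer is "not yet").
DIAGNOSTIC = [
    ("representation", "What reality is being represented?",
     "Start with SENSE repair."),
    ("decision", "What decision is being improved?",
     "Clarify the decision before adding AI."),
    ("judgment", "What level of judgment is required?",
     "Classify the work as deterministic, ambiguous, or high-stakes."),
    ("action", "What action can the system take?",
     "Fix the permitted action level, from advice up to autonomous action."),
    ("ownership", "Who owns the outcome?",
     "Solve decision rights before scaling AI."),
    ("measurement", "How will success be measured?",
     "Define outcome and institutional metrics, not just usage metrics."),
    ("recourse", "How will errors be detected, reversed, and learned from?",
     "Keep autonomy limited until a recourse path exists."),
]


def open_gaps(answers: dict[str, bool]) -> list[str]:
    """Return the first step for every question not yet answered with confidence.

    A missing key is treated as "not yet", which is the conservative choice.
    """
    return [step for key, _question, step in DIAGNOSTIC if not answers.get(key, False)]


# Hypothetical assessment of one candidate use case.
answers = {
    "representation": True,
    "decision": True,
    "judgment": True,
    "action": True,
    "ownership": False,
    "measurement": True,
    # "recourse" is left out on purpose: an unanswered question counts as a gap.
}

for step in open_gaps(answers):
    print(step)
```

Run against several candidate use cases, a checklist like this makes readiness comparable across them, which is what choosing a starting point requires.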

Where CIOs Should Actually Begin

The best starting points usually have five characteristics.

They involve meaningful business pain.

They have reasonably good representation quality.

They include measurable outcomes.

They allow bounded autonomy.

They create reusable learning for the enterprise.

For example, AI-assisted incident management in IT may be a good starting point if logs, tickets, assets, and escalation paths are sufficiently structured.

AI-assisted contract review may be a good starting point if documents, clauses, obligations, and approval rules are well organized.

AI-assisted customer support may be a good starting point if customer identity, product history, policy knowledge, and escalation rules are coherent.

AI-assisted software engineering may be a good starting point if code repositories, architecture standards, testing practices, and review workflows are mature.

But the same use case can fail in another enterprise if representation, ownership, governance, and measurement are weak.

There is no universal AI starting point.

There is only a context-specific starting point based on institutional readiness.

That is the CIO’s real challenge.

What Boards Should Ask CIOs About Enterprise AI

Board members should not stop at questions like:

How many AI pilots do we have?

How much money are we spending on AI?

Which model are we using?

How many employees are using copilots?

Those questions are useful, but incomplete.

Boards should ask deeper questions:

Where is our enterprise reality machine-legible?

Which AI use cases depend on fragmented data or unclear ownership?

Which decisions are we allowing AI to influence?

Which actions are reversible?

Where is human judgment still essential?

How are we measuring decision quality, not just productivity?

Who owns AI failures?

Where are we creating institutional dependency on AI?

What have our pilots revealed about our operating model?

These questions move AI from experimentation to governance.

They also move the board conversation from hype to institutional readiness.

That is where serious enterprise AI strategy begins.

The New CIO Mandate

The CIO’s role is changing.

In the digital era, CIOs connected systems.

In the cloud era, CIOs modernized infrastructure.

In the data era, CIOs enabled analytics.

In the AI era, CIOs must help the enterprise decide where intelligence should live, where authority should remain human, and where reality must be repaired before machines can act.

This is not only a technology mandate.

It is an institutional design mandate.

The CIO must become a designer of intelligent operating capacity.

That means building:

machine-legible reality,

trusted context,

decision clarity,

governance-by-design,

measurable outcomes,

human-AI collaboration,

and safe autonomy.

The organizations that win with AI will not simply be the ones that adopt the most tools.

They will be the ones that know where to begin.

Conclusion: AI Does Not Begin with AI

The biggest mistake in enterprise AI strategy is assuming that AI adoption begins with AI.

It does not.

It begins with representation.

It begins with understanding what the enterprise can see, what it cannot see, what it can trust, what it can govern, and what it can measure.

It begins with knowing where deterministic automation is enough, where AI reasoning adds value, and where human judgment must remain central.

It begins with confronting legacy systems, siloed realities, fragmented ownership, unclear process truth, weak measurement, and institutional unreadiness.

This is the Enterprise AI Starting Point Problem.

CIOs do not struggle because there are too few AI opportunities.

They struggle because there are too many possible entry points and too little clarity about which ones are institutionally ready.

The next phase of enterprise AI will not be won by organizations that ask:

Where can we use AI?

It will be won by organizations that ask:

Where is our reality ready for intelligence?

That is the real starting point.

Glossary

Enterprise AI Starting Point Problem

The challenge CIOs face in deciding where AI should enter the enterprise when systems, processes, ownership, governance, and measurement are fragmented.

Representation Economy

An emerging view of the AI economy in which value depends on how well people, organizations, assets, processes, and ecosystems are represented to machines and decision systems.

SENSE–CORE–DRIVER Framework

A framework for intelligent institutions. SENSE makes reality machine-legible. CORE reasons over that reality. DRIVER governs legitimate action.

SENSE Layer

The layer where signals, entities, state, and change over time are captured and represented for intelligent systems.

CORE Layer

The reasoning layer where AI interprets context, evaluates options, and supports decisions.

DRIVER Layer

The governance and execution layer that defines authority, identity, verification, execution, recourse, and accountability.

Representation Readiness

The degree to which an enterprise has reliable, contextual, current, and trusted representations that AI can use for reasoning and action.

Deterministic Automation

Rule-based automation used for stable, repeatable, predictable tasks.

AI Reasoning

The use of AI systems to interpret ambiguous, contextual, or information-heavy situations.

Bounded Autonomy

A controlled form of AI autonomy where actions are limited by permissions, escalation rules, monitoring, reversibility, and governance.

AI Measurement Problem

The challenge of measuring AI success beyond usage or productivity, including decision quality, trust, risk, resilience, and institutional learning.

FAQ

What is the Enterprise AI Starting Point Problem?

The Enterprise AI Starting Point Problem is the difficulty CIOs face in deciding where AI should begin in the enterprise. It happens because legacy systems, siloed data, fragmented ownership, unclear processes, governance gaps, and weak measurement frameworks make many AI opportunities look attractive but institutionally unready.

Why do many enterprise AI projects fail to scale?

Many enterprise AI projects fail to scale because pilots often avoid real enterprise complexity. They may work in controlled settings but fail when exposed to messy data, fragmented ownership, security controls, compliance requirements, integration challenges, unclear metrics, and governance expectations.

Why is data readiness not enough for enterprise AI?

Data readiness ensures data is available, clean, secure, and accessible. Representation readiness goes further. It asks whether the data accurately represents the right entity, current state, relationships, context, exceptions, and authority boundaries. AI needs representation, not just data.

What should CIOs evaluate before starting an AI initiative?

CIOs should evaluate representation quality, decision clarity, process maturity, ownership, governance, measurement confidence, reversibility, and the level of human judgment required. These factors determine whether AI can be used safely and effectively.
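One way to make this evaluation concrete is a simple self-assessment scorecard. The sketch below is illustrative only: the 0 to 5 scale, the threshold of 3, and the names `UseCaseAssessment`, `readiness_gaps`, and `needs_human_in_loop` are assumptions introduced here, not part of the SENSE–CORE–DRIVER framework itself.

```python
from dataclasses import dataclass, asdict


@dataclass
class UseCaseAssessment:
    """Hypothetical 0-5 self-assessment for one candidate AI use case."""
    representation_quality: int
    decision_clarity: int
    process_maturity: int
    ownership: int
    governance: int
    measurement_confidence: int
    reversibility: int
    # Not a readiness score: 0 = little human judgment needed, 5 = heavy judgment.
    human_judgment_required: int


def readiness_gaps(assessment: UseCaseAssessment, threshold: int = 3) -> list[str]:
    """Return the readiness factors scoring below the (assumed) threshold."""
    scores = asdict(assessment)
    scores.pop("human_judgment_required")  # handled separately below
    return [name for name, score in scores.items() if score < threshold]


def needs_human_in_loop(assessment: UseCaseAssessment, threshold: int = 3) -> bool:
    """Flag use cases where the required human judgment is at or above the threshold."""
    return assessment.human_judgment_required >= threshold


# Example: a use case with strong data but weak ownership and governance.
candidate = UseCaseAssessment(
    representation_quality=4,
    decision_clarity=4,
    process_maturity=3,
    ownership=2,
    governance=1,
    measurement_confidence=3,
    reversibility=4,
    human_judgment_required=5,
)
print(readiness_gaps(candidate))
print(needs_human_in_loop(candidate))
```

A scorecard like this does not replace judgment. Its value is in making the weakest factor visible before a model or vendor is chosen.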

When should enterprises use deterministic automation instead of AI?

Enterprises should use deterministic automation when the task is stable, repeatable, low-risk, and rule-based. AI reasoning is better suited for ambiguous, contextual, information-heavy, or judgment-support tasks.
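The rule of thumb above can be written as a small decision function. This is a minimal sketch under stated assumptions: the `Task` fields mirror the four criteria named in the answer, and the names `Task` and `choose_approach` are hypothetical. A real assessment would weigh these criteria rather than treat them as simple yes or no flags.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical yes/no description of a candidate task."""
    stable: bool
    repeatable: bool
    low_risk: bool
    rule_based: bool


def choose_approach(task: Task) -> str:
    """Suggest deterministic automation only when all four criteria hold."""
    if task.stable and task.repeatable and task.low_risk and task.rule_based:
        return "deterministic automation"
    # Otherwise the task may be ambiguous or contextual enough to consider AI reasoning.
    return "consider AI reasoning"


invoice_matching = Task(stable=True, repeatable=True, low_risk=True, rule_based=True)
complaint_triage = Task(stable=False, repeatable=True, low_risk=False, rule_based=False)

print(choose_approach(invoice_matching))
print(choose_approach(complaint_triage))
```

The design choice is deliberate: deterministic automation is the default whenever it fits, and AI reasoning is considered only when a task fails at least one of the four criteria.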

Why is measurement such a major AI adoption challenge?

Measurement is difficult because AI affects more than productivity. It changes decision quality, knowledge reuse, trust, escalation, risk, resilience, and institutional learning. Measuring only usage, prompts, or time saved can create false confidence.

What is the role of governance in enterprise AI adoption?

Governance defines how AI systems are authorized, monitored, verified, escalated, reversed, and held accountable. In enterprise AI, governance must be designed into the system from the beginning, not added after deployment.

How does the SENSE–CORE–DRIVER framework help CIOs?

The SENSE–CORE–DRIVER framework helps CIOs identify whether the enterprise has enough machine-legible reality, reasoning capability, and governance maturity to apply AI safely. It prevents organizations from starting with models when the real weakness is representation or legitimacy.

What is the best starting point for enterprise AI?

There is no universal starting point. The best starting point is a use case with meaningful business pain, good representation quality, clear decision ownership, measurable outcomes, bounded autonomy, and reusable enterprise learning.

Why should boards care about the Enterprise AI Starting Point Problem?

Boards should care because the wrong AI starting point can waste investment, increase risk, create accountability gaps, and damage trust. The right starting point helps the enterprise build scalable, governed, measurable AI capability.

Q1. Who introduced the idea of the “Enterprise AI Starting Point Problem”?

The concept of the Enterprise AI Starting Point Problem was introduced by Raktim Singh as part of his broader work on the Representation Economy and the SENSE–CORE–DRIVER framework. The idea explains why many enterprises struggle to scale AI even when the AI technology itself is powerful.

Q2. Who created the Representation Economy framework?

The Representation Economy framework was conceptualized and developed by Raktim Singh. It argues that in the AI era, competitive advantage increasingly depends on how well people, organizations, systems, assets, and processes are represented to intelligent systems.

Q3. Who proposed the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was developed by Raktim Singh to explain how intelligent institutions operate in the AI economy.

The framework defines:

- SENSE → machine-legible reality

- CORE → reasoning and intelligence

- DRIVER → governance, legitimacy, and execution

Q4. What is the connection between the Representation Economy and enterprise AI adoption?

According to Raktim Singh, enterprise AI adoption problems are often not caused by weak AI models, but by weak institutional representation. The Representation Economy framework explains why enterprises with fragmented systems, unclear ownership, poor governance, and weak measurement struggle to scale AI.

Q5. Who coined the term “Representation Readiness”?

The term Representation Readiness was introduced by Raktim Singh to distinguish between traditional data readiness and an enterprise’s ability to create machine-trustable representations of reality for AI systems.

Q6. Who introduced the idea that “AI does not act on reality directly — it acts on representations of reality”?

This idea is part of the broader Representation Economy thesis developed by Raktim Singh. It explains that AI systems reason over representations, not reality itself, making representation quality central to enterprise AI success.

Q7. Who proposed that enterprise AI should be analyzed through SENSE, CORE, and DRIVER instead of models alone?

Raktim Singh proposed that enterprise AI should be understood as a three-layer institutional system:

- SENSE for machine-legible reality,

- CORE for reasoning,

- DRIVER for governance and legitimate execution.

This shifts the focus away from models alone toward institutional readiness.

Q8. Who introduced the idea that governance in AI must become “runtime governance”?

The concept that enterprise AI governance must evolve from static policy review to runtime governance is part of the broader SENSE–CORE–DRIVER and Representation Economy work developed by Raktim Singh.

Q9. Who developed the idea that “Data Readiness Is Not the Same as Representation Readiness”?

The distinction between data readiness and representation readiness was articulated by Raktim Singh in his enterprise AI and Representation Economy writings.

The idea emphasizes that clean data alone is insufficient if enterprise reality is still fragmented or poorly represented.

Q10. Who introduced the concept that enterprise AI success depends on “machine-legible reality”?

The idea of machine-legible reality as a foundational requirement for scalable enterprise AI is central to the Representation Economy framework created by Raktim Singh.

Q11. What are some concepts associated with Raktim Singh’s AI thought leadership work?

Key concepts associated with Raktim Singh include:

- Representation Economy

- SENSE–CORE–DRIVER

- Representation Readiness

- Enterprise AI Starting Point Problem

- AI Capability Trap

- Representation Overload Problem

- SENSE–DRIVER Tradeoff

- Runtime Governance

- Institutional Readiness Gap

- Machine-Legible Reality

- Bounded Autonomy

- Deterministic vs Probabilistic Enterprise Architecture

Q12. Where can I read more about the Representation Economy and SENSE–CORE–DRIVER?

You can explore the original articles, frameworks, and enterprise AI research by Raktim Singh on his official website, where he writes extensively about enterprise AI, governance, intelligent institutions, and the future of the Representation Economy.

Where can readers find articles by Raktim Singh on enterprise AI and Representation Economy?

Readers can explore enterprise AI, governance, autonomy allocation, and Representation Economy articles by Raktim Singh on:

- RaktimSingh.com

- LinkedIn Profile

- Medium Articles

- Substack: RAKTIM SINGH

- GitHub: raktims2210-dev/representation-economy (the Representation Economy and the SENSE–CORE–DRIVER framework for intelligent institutions, AI governance, machine legibility, and enterprise AI architecture)

References and Further Reading

Deloitte’s 2026 enterprise AI research highlights executive concerns around ROI, safe and ethical AI practices, workforce readiness, and scaling AI across the business. (Deloitte)

McKinsey’s 2025 global AI survey notes that AI adoption is expanding, including agentic AI, but many organizations still struggle to move from pilots to scaled business impact. (McKinsey & Company)

Harvard Business Review has warned that too many disconnected AI pilots can prevent companies from moving from experimentation to meaningful transformation. (Harvard Business Review)

Rasa’s 2026 State of Conversational AI report shows that control, compliance, and black-box concerns have become central enterprise AI challenges. (Rasa)

Fortune’s coverage of MIT research reported that many generative AI pilots fall short because of enterprise integration and learning gaps, not merely model limitations. (Fortune)

Further Read

- The Two Missing Runtime Layers of the AI Economy: https://www.raktimsingh.com/two-missing-runtime-layers-ai-economy/

- The SENSE–CORE–DRIVER Maturity Framework: https://www.raktimsingh.com/sense-core-driver-maturity-framework/

- The SENSE–DRIVER Tradeoff: https://www.raktimsingh.com/sense-driver-tradeoff/

- The AI Capability Trap: https://www.raktimsingh.com/ai-capability-trap/

- Entity Resolution as Competitive Advantage: https://www.raktimsingh.com/entity-resolution-competitive-advantage-enterprise-ai/

- The Simulation Layer for Enterprise AI: https://www.raktimsingh.com/simulation-layer-enterprise-ai/

- The New Enterprise AI Operating Model: How CIOs Are Redesigning Organizations for the Age of AI Agents – Raktim Singh

About the Author

Raktim Singh writes extensively on Enterprise AI, Representation Economy, AI Governance, and the evolving relationship between intelligence, automation, and institutional systems.

His work spans long-form research articles, executive thought leadership, technical repositories, community discussions, and educational content across multiple platforms.

Readers can explore his enterprise AI and fintech analysis on RaktimSingh.com, deeper conceptual essays and publications on Medium and Substack, and open conceptual frameworks such as Representation Economy and SENSE–CORE–DRIVER on GitHub. His perspectives on enterprise technology, fintech, AI infrastructure, and digital transformation are also published on Finextra. Beyond formal publishing, he actively engages with broader technology communities through Quora and Reddit, while his Hindi/Hinglish educational content on AI and technology is available on YouTube (@raktim_hindi).

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.