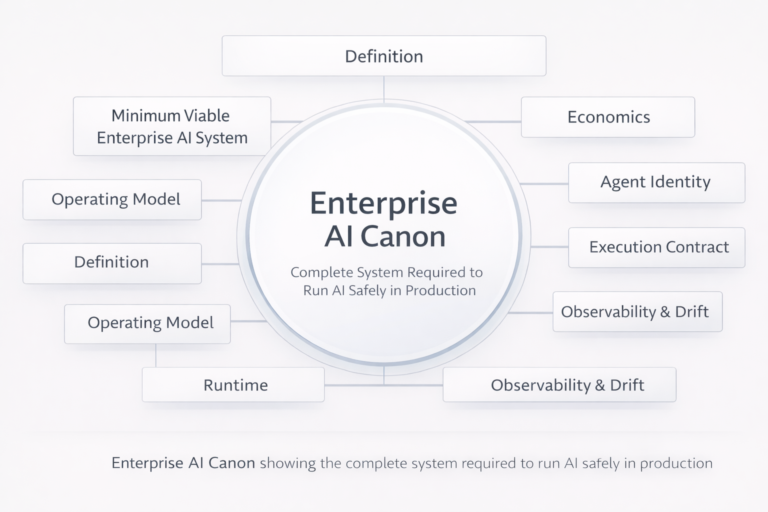

The Enterprise AI Canon: The Complete System for Running AI Safely in Production

Enterprise AI has reached a point where more content does not create more clarity. Enterprises do not lack ideas, tools, or pilots. They lack a closed, coherent system of record that defines what must exist before AI is allowed to act inside real workflows.

This page defines The Enterprise AI Canon.

The Canon is the finite, non-overlapping body of knowledge required to design, govern, and scale Enterprise AI safely in production. It is not a collection of opinions. It is the minimum conceptual and operational system an enterprise must have once AI begins to execute decisions, trigger actions, and affect outcomes.

If a capability is not part of this Canon, it is either:

- an implementation detail, or

- an extension built on top of the Canon.

What this Canon is (and is not)

The Enterprise AI Canon is:

- a system-level definition, not a tool guide

- architecture-first, not model-first

- grounded in production reality, not demos

- designed for scale, auditability, reversibility, and economics

The Enterprise AI Canon is not:

- a list of vendors or platforms

- a prompt engineering guide

- a use-case catalog

- a maturity marketing framework

This distinction is intentional. Authority comes from closure, not expansion.

The Canonical Structure of Enterprise AI

The Enterprise AI Canon is organized into nine non-negotiable pillars. Together, they define the minimum complete system required to run AI safely at enterprise scale.

Each pillar answers a question that cannot be left implicit once AI begins to act.

The Definition of Enterprise AI

(What enterprises are actually building)

Enterprise AI is not a category of tools. It is an operating capability.

This pillar establishes the precise definition of Enterprise AI as the ability to run intelligence inside production workflows under governance, ownership, and economic control.

Canonical reference:

- What Is Enterprise AI? A 2026 Definition for Leaders Running AI in Production

https://www.raktimsingh.com/what-is-enterprise-ai-2026-definition/

The Minimum Viable Enterprise AI System

(What must exist before AI is allowed to scale)

This is the structural core of the Canon.

It defines the smallest complete system an enterprise must have in place before AI can operate safely in production. That system covers ownership, runtime, control, economics, identity, execution, and observability.

Without this system, Enterprise AI scales into unowned risk and ungovernable cost.

Non-negotiable reference:

- The Minimum Viable Enterprise AI System

https://www.raktimsingh.com/minimum-viable-enterprise-ai-system/

The Enterprise AI Operating Model

(Who owns AI decisions and outcomes)

Enterprise AI fails when decision ownership is ambiguous.

This pillar defines how enterprises assign decision rights, accountability, escalation authority, and responsibility once AI systems act inside workflows.

Canonical reference:

- The Enterprise AI Operating Model

https://www.raktimsingh.com/enterprise-ai-operating-model/

The Enterprise AI Runtime

(What is actually running in production)

Models do not run enterprises. Runtimes do.

This pillar defines the execution layer where AI behavior is constrained, permissioned, logged, retried, and safely operated under real-world conditions.
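As a rough illustration of what "constrained, permissioned, logged, retried" can mean in code, here is a minimal sketch. It is not the runtime described in the canonical reference; the names `run_action`, `ActionNotPermitted`, and the allow-list parameter are hypothetical, and treating `RuntimeError` as a transient failure is an assumption made only for this example.

```python
import logging
from typing import Callable

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("ai_runtime")  # hypothetical logger name


class ActionNotPermitted(Exception):
    """Raised when an agent attempts an action outside its allow-list."""


def run_action(agent_id: str, action: str, allowed: set,
               fn: Callable[[], str], max_attempts: int = 3) -> str:
    """Run fn behind a permission check, with logging and bounded retries."""
    # Permission check happens before anything executes.
    if action not in allowed:
        log.warning("denied agent=%s action=%s", agent_id, action)
        raise ActionNotPermitted(f"{agent_id} may not perform {action}")

    for attempt in range(1, max_attempts + 1):
        try:
            result = fn()
            log.info("ok agent=%s action=%s attempt=%d", agent_id, action, attempt)
            return result
        except RuntimeError as exc:
            # Assumption: RuntimeError stands in for a transient failure.
            log.error("failed agent=%s action=%s attempt=%d error=%s",
                      agent_id, action, attempt, exc)

    raise RuntimeError(f"{action} failed after {max_attempts} attempts")
```

The point of the sketch is ordering: the permission check runs before the action, every attempt leaves a log record, and retries are bounded rather than open-ended.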

Canonical reference:

- Enterprise AI Runtime: What Is Actually Running in Production (And Why It Changes Everything)

https://www.raktimsingh.com/enterprise-ai-runtime-what-is-running-in-production/

The Enterprise AI Control Plane

(How policy, risk, and reversibility are enforced)

Governance that exists only in documents does not govern AI.

This pillar defines the runtime control plane that enforces policy, evidence requirements, escalation, and reversibility before decisions execute.
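To make "before decisions execute" concrete, the following is a minimal sketch of a pre-execution gate. The `Decision` fields, the `evaluate` function, and the threshold value are all hypothetical and chosen only for illustration; a real control plane would load its policies from a governed source rather than hard-code them.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    """A proposed AI decision, evaluated before it is allowed to execute."""
    action: str
    amount: float
    evidence: list = field(default_factory=list)
    reversible: bool = True


def evaluate(decision: Decision, escalation_threshold: float = 1000.0) -> str:
    """Return 'allow', 'escalate', or 'deny' for a proposed decision."""
    if not decision.evidence:
        return "deny"        # evidence requirement not met
    if not decision.reversible:
        return "escalate"    # irreversible actions go to a human owner
    if decision.amount > escalation_threshold:
        return "escalate"    # above the (assumed) policy threshold
    return "allow"
```

For example, `evaluate(Decision("refund", 50.0, evidence=["ticket-123"]))` returns `"allow"`, while the same decision with no evidence returns `"deny"`.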

Canonical reference:

- Enterprise AI Control Plane: The Canonical Framework for Governing AI Decisions at Scale

https://www.raktimsingh.com/enterprise-ai-control-plane-2026/

Enterprise AI Economics & Cost Governance

(Why cost becomes a behavioral problem)

Once AI systems act autonomously, traditional FinOps breaks.

This pillar defines the Economic Control Plane required to keep AI behavior economically bounded, predictable, and operable at scale.
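One way to read "economically bounded" is as a hard spending limit enforced at the moment an agent acts, rather than a report reviewed afterwards. The sketch below assumes a single per-agent budget in US dollars; `CostBudget` and `BudgetExceeded` are hypothetical names, not part of the referenced framework.

```python
class BudgetExceeded(Exception):
    """Raised when a charge would push spend past the configured limit."""


class CostBudget:
    """Tracks spend against a fixed limit and refuses charges that exceed it."""

    def __init__(self, limit_usd: float):
        self.limit_usd = limit_usd
        self.spent_usd = 0.0

    def charge(self, cost_usd: float) -> float:
        """Record a cost and return the remaining budget."""
        if self.spent_usd + cost_usd > self.limit_usd:
            # Refuse before spending, so the limit is never crossed.
            raise BudgetExceeded(
                f"charge of {cost_usd} would exceed limit {self.limit_usd}"
            )
        self.spent_usd += cost_usd
        return self.limit_usd - self.spent_usd
```

The design choice worth noting is that the check runs before the spend is recorded, so an agent that loops or retries aggressively is stopped by the budget itself.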

Canonical reference:

- Enterprise AI Economics & Cost Governance

https://www.raktimsingh.com/enterprise-ai-economics-cost-governance-economic-control-plane/

Governed Machine Identity

(Why every agent needs ownership and permissions)

Autonomous agents without identity create audit failure and security risk.

This pillar defines the Agent Registry as the system of record for machine identity, permissions, lifecycle, and revocation.
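A minimal in-memory sketch of such a registry might look like the following. The class and method names (`AgentRegistry`, `register`, `revoke`, `is_allowed`) are assumptions made for illustration; a production registry would also need persistence, audit history, and authentication, none of which are shown here.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    """Identity record for one agent: who owns it and what it may do."""
    agent_id: str
    owner: str
    permissions: set = field(default_factory=set)
    active: bool = True


class AgentRegistry:
    """In-memory system of record for agent identity and permissions."""

    def __init__(self):
        self._agents = {}

    def register(self, agent_id: str, owner: str, permissions) -> None:
        if agent_id in self._agents:
            raise ValueError(f"agent {agent_id} is already registered")
        self._agents[agent_id] = AgentRecord(agent_id, owner, set(permissions))

    def revoke(self, agent_id: str) -> None:
        """Deactivate an agent; its record is kept for audit purposes."""
        self._agents[agent_id].active = False

    def is_allowed(self, agent_id: str, permission: str) -> bool:
        """True only for a known, active agent holding the permission."""
        record = self._agents.get(agent_id)
        return bool(record and record.active and permission in record.permissions)
```

Unknown agents and revoked agents are both denied by default, which is the behavior an auditor would expect from a system of record.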

Canonical reference:

- Enterprise AI Agent Registry: The Missing System of Record for Autonomous AI

https://www.raktimsingh.com/enterprise-ai-agent-registry/

The Enterprise AI Execution Contract

(How design intent becomes enforceable behavior)

Enterprises fail when what they design is not what actually runs.

This pillar defines how enterprises bind intent, constraints, evidence, and escalation rules into a contract that governs production behavior.
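As a hedged illustration, a contract of this kind can be expressed as immutable data plus a check that reports where proposed behavior departs from it. The field names below (`intent`, `allowed_actions`, `required_evidence`, `escalate_to`) are hypothetical and are not taken from the referenced article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExecutionContract:
    """Declares what an agent is meant to do and under which constraints."""
    intent: str
    allowed_actions: frozenset
    required_evidence: frozenset
    escalate_to: str

    def violations(self, action: str, evidence: dict) -> list:
        """Return human-readable contract violations (empty if compliant)."""
        problems = []
        if action not in self.allowed_actions:
            problems.append(f"action '{action}' is outside the contract")
        # Evidence keys that the contract requires but the caller did not supply.
        missing = sorted(self.required_evidence - set(evidence))
        if missing:
            problems.append("missing evidence: " + ", ".join(missing))
        return problems
```

A non-empty result would be routed to the `escalate_to` owner rather than executed. Freezing the dataclass is a deliberate choice in this sketch: the contract cannot be mutated by the code it governs.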

Canonical reference:

- The Enterprise AI Execution Contract

https://www.raktimsingh.com/the-enterprise-ai-execution-contract-the-missing-layer-between-design-intent-and-production-autonomy/

Continuous Observability & Drift Control

(How enterprises keep AI aligned over time)

Enterprise AI does not fail instantly. It fails silently over time.

This pillar defines how enterprises monitor decision behavior, detect drift, and maintain alignment across quality, safety, and economics.
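One simple way to detect the silent failure described above is to compare a recent window of decision outcomes against a baseline window. The sketch below assumes outcomes are numeric (for example, 1 for an approval and 0 for a rejection) and uses an absolute difference in means with a hypothetical `tolerance`; real drift monitoring would usually apply proper statistical tests across several metrics.

```python
from statistics import mean


def drift_detected(baseline: list, recent: list, tolerance: float = 0.10) -> bool:
    """Flag drift when the recent mean differs from the baseline mean
    by more than `tolerance` (absolute difference)."""
    if not baseline or not recent:
        raise ValueError("both windows need at least one observation")
    return abs(mean(recent) - mean(baseline)) > tolerance
```

With a baseline approval pattern of `[1, 1, 0, 1]` and a recent pattern of `[0, 0, 1, 0]`, the means differ by 0.5, so the function reports drift under the default tolerance.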

Canonical references:

- Enterprise AI Drift: Why Autonomy Fails Over Time

https://www.raktimsingh.com/enterprise-ai-drift-alignment-fabric/

- The Autonomy SRE Stack

https://www.raktimsingh.com/autonomy-sre-stack-enterprise-ai-runtime/

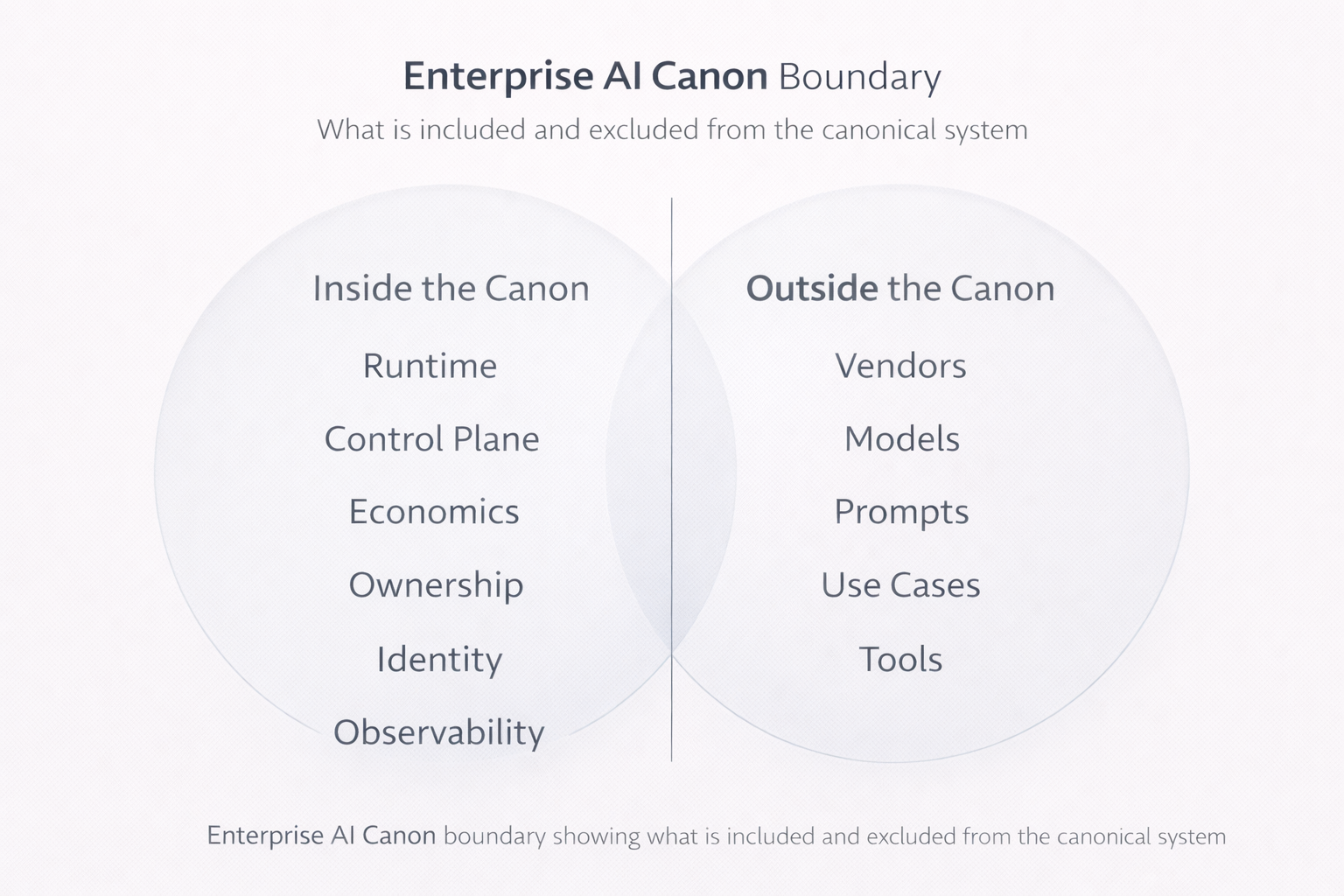

What is deliberately excluded from the Canon

The following are intentionally excluded from the Enterprise AI Canon:

- vendor comparisons

- model benchmarks

- prompt libraries

- use-case catalogs

- implementation tutorials

These evolve too quickly to be canonical.

The Canon defines what must always be true, regardless of technology cycles.

How the Canon evolves

The Enterprise AI Canon is closed by default.

It evolves only when:

- a new system-level capability becomes unavoidable, or

- a structural assumption is invalidated by production reality

All future writing on this site either:

- deepens one Canon pillar, or

- explores extensions built on top of the Canon

Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here (intelligence-native operating models, control planes, decision integrity, and accountable autonomy) have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence, where economics, accountability, control, and decision integrity function as a coherent architecture.

Final declaration

Enterprise AI advantage does not come from having more AI.

It comes from having a complete system to run intelligence safely.

The Enterprise AI Canon defines that system.

The Canon is not a collection of opinions. It defines what must exist before AI is allowed to act inside real workflows.

If a capability is not part of this Canon, it is either an implementation detail or an extension built on top of it.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.