The Minimum Viable Enterprise AI System: The Smallest Stack That Makes AI Safe in Production

Enterprise AI does not fail because models are inaccurate. It fails because enterprises scale AI outputs before they build the system that governs how intelligence behaves in production.

Once AI moves from advising humans to acting inside real workflows—approving requests, triggering actions, updating records, and coordinating systems—the challenge is no longer model performance. The challenge is whether the organization has the minimum set of capabilities required to run AI safely, repeatedly, and economically at scale.

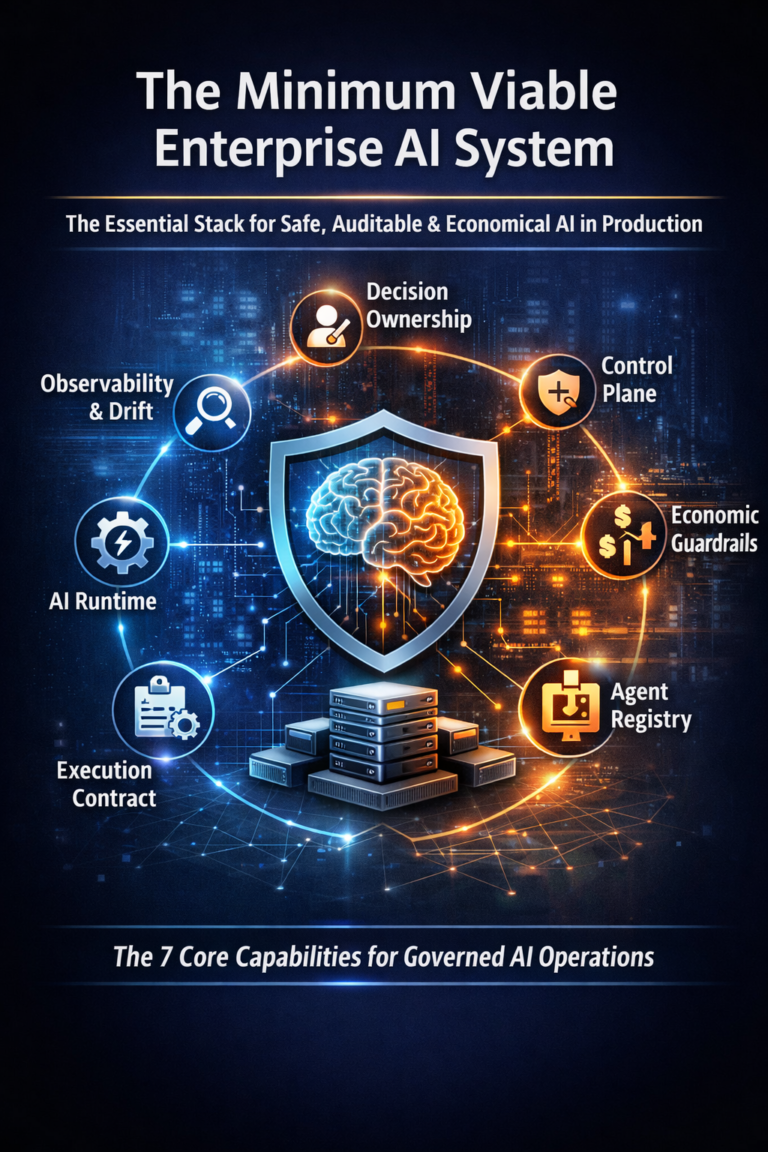

The Minimum Viable Enterprise AI System is the smallest set of capabilities required to run AI safely, repeatedly, and economically inside real enterprise workflows.

The smallest stack that makes AI safe, auditable, and economically operable in production

The moment AI moves from advising to acting—approving requests, changing records, triggering workflows, granting access, routing claims, updating configurations—your organization is no longer “using AI.” It is running intelligence inside production workflows.

At that point, one question determines success or failure:

What is the smallest complete system required to run AI safely in production—without breaking trust, compliance, or cost?

This article defines that system.

Minimum Viable Enterprise AI System (MVES) means:

The smallest set of enterprise capabilities required to run AI safely, repeatedly, and economically inside real workflows—under drift, policy change, escalation, and audit.

MVES is not a platform, a model, a prompt library, or an agent framework.

MVES is the operating capability that makes AI governable.

For the foundational definition this builds on, see:

What Is Enterprise AI? A 2026 Definition for Leaders Running AI in Production

https://www.raktimsingh.com/what-is-enterprise-ai-2026-definition/

Why this matters globally (US, EU, India, Global South)

Across geographies, regulations differ—but the failure pattern does not.

- In fast-moving markets, AI estates expand faster than governance.

- In regulated markets, the first audit exposes missing decision traceability.

- In cost-constrained environments, autonomous systems become economically unstable.

Different contexts. Same outcome.

Without a minimum system, AI scales into an unowned, unauditable, economically unstable operational liability.

The MVES: 7 irreducible capabilities

If you want Enterprise AI that survives reality—drift, audits, change velocity, and cost pressure—these are the irreducible seven.

Anything less is not Enterprise AI.

It is a pilot wearing enterprise branding.

1) Decision Ownership & Accountability

(The Enterprise AI Operating Model)

Enterprise AI begins with a governance fact, not a technology choice:

Every AI decision must have a human owner—before it has a model.

This requires explicit answers to:

- Who owns the business outcome when AI acts?

- Who owns policy boundaries and risk classification?

- Who owns escalation and exception handling?

- Who can pause, roll back, or restrict autonomy?

If this is missing, here is what happens:

AI becomes “everyone’s project” and “no one’s responsibility.” In production, this creates slow escalation, blame diffusion, and silent risk accumulation.

Related reading:

- The Enterprise AI Operating Model

https://www.raktimsingh.com/enterprise-ai-operating-model/

- Who Owns Enterprise AI? Roles, Accountability, and Decision Rights in 2026

https://www.raktimsingh.com/who-owns-enterprise-ai-roles-accountability-decision-rights/

2) A Production Execution Kernel

(The Enterprise AI Runtime)

In production, the real question is not which model is used, but:

What is actually running, with what permissions, under what controls, and with what fallback?

A true Enterprise AI Runtime provides:

- controlled tool execution

- safe retry and timeout behavior

- runtime policy checks

- permissioned actions

- versioned behaviors, not just versioned models

If this is missing, here is what happens:

Agents execute unpredictable tool chains, create irreversible side effects, and force “incident-driven governance” after damage occurs.

Related reading:

- Enterprise AI Runtime: What Is Actually Running in Production (And Why It Changes Everything)

https://www.raktimsingh.com/enterprise-ai-runtime-what-is-running-in-production/

3) Policy, Risk, and Reversibility at Runtime

(The Enterprise AI Control Plane)

The Control Plane is not governance documentation.

It is governance enforced at runtime.

It defines:

- what autonomy is allowed

- what evidence is required

- which actions require approval

- what must be logged and retained

- what is reversible and how

- what triggers escalation
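
One way to picture "governance enforced at runtime" is a policy table that is evaluated before every action. The sketch below is a minimal Python illustration under assumptions of its own: the action names, the evidence fields, the approval thresholds, and the `evaluate` function are all hypothetical, and a real control plane would also cover logging and retention rules.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, FrozenSet, Optional, Tuple


class Verdict(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class ActionPolicy:
    required_evidence: FrozenSet[str]   # evidence that must accompany the action
    approval_above: Optional[float]     # amount above which a human must approve
    reversible: bool                    # whether the action can be undone


# Hypothetical policy table; the entries are illustrative only.
POLICIES: Dict[str, ActionPolicy] = {
    "refund.issue": ActionPolicy(frozenset({"order_id", "reason"}), 100.0, True),
    "account.close": ActionPolicy(frozenset({"customer_request_id"}), None, False),
}


def evaluate(action: str, amount: float, evidence: Dict[str, str]) -> Tuple[Verdict, str]:
    policy = POLICIES.get(action)
    if policy is None:
        return Verdict.DENY, "no policy registered for action"
    missing = policy.required_evidence.difference(evidence)
    if missing:
        return Verdict.DENY, f"missing evidence: {sorted(missing)}"
    if not policy.reversible:
        return Verdict.NEEDS_APPROVAL, "irreversible action"
    if policy.approval_above is not None and amount > policy.approval_above:
        return Verdict.NEEDS_APPROVAL, "amount exceeds autonomous limit"
    return Verdict.ALLOW, "within policy"


small = evaluate("refund.issue", 40.0, {"order_id": "o1", "reason": "damaged"})
large = evaluate("refund.issue", 400.0, {"order_id": "o1", "reason": "damaged"})
bare = evaluate("refund.issue", 40.0, {"order_id": "o1"})
close = evaluate("account.close", 0.0, {"customer_request_id": "r9"})
```

Each verdict carries a reason string so that the outcome of the policy check can be logged alongside the decision it governed.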

If this is missing, here is what happens:

Enterprises deploy “autonomy without brakes.” Decisions may be correct, but they are indefensible, unprovable, and unsafe at scale.

Related reading:

- Enterprise AI Control Plane: The Canonical Framework for Governing AI Decisions at Scale

https://www.raktimsingh.com/enterprise-ai-control-plane-2026/

4) Economic Guardrails

(The Economic Control Plane)

Traditional FinOps assumes humans drive compute usage.

Enterprise AI breaks that assumption.

Once AI acts autonomously, it can:

- trigger workflows repeatedly

- expand tool and retrieval calls

- retry itself into runaway spend

- create cost spikes without malicious intent

Economic governance must be runtime-native:

- cost envelopes per agent or workflow

- token and tool-call budgets

- deviation-based escalation

- throttles and kill switches
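
The guardrails above can be sketched as a per-workflow cost envelope that is charged on every step. This is a minimal Python illustration, not a reference design: `CostEnvelope`, its field names, and the 3x deviation factor are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class CostEnvelope:
    token_budget: int                # hard ceiling for the workflow
    tool_call_budget: int            # hard ceiling on tool invocations
    baseline_tokens_per_step: float  # expected spend for a typical step
    deviation_factor: float = 3.0    # assumption: 3x baseline triggers escalation
    tokens_used: int = 0
    tool_calls_used: int = 0
    killed: bool = False

    def charge(self, tokens: int, tool_calls: int = 0) -> str:
        """Record spend for one step and return the resulting control signal."""
        if self.killed:
            return "blocked"         # kill switch already tripped
        self.tokens_used += tokens
        self.tool_calls_used += tool_calls
        over_tokens = self.tokens_used > self.token_budget
        over_calls = self.tool_calls_used > self.tool_call_budget
        if over_tokens or over_calls:
            self.killed = True       # budget exhausted: stop further work
            return "killed"
        if tokens > self.deviation_factor * self.baseline_tokens_per_step:
            return "escalate"        # within budget, but abnormal for one step
        return "ok"


envelope = CostEnvelope(token_budget=1000, tool_call_budget=5,
                        baseline_tokens_per_step=100.0)
signals = [
    envelope.charge(100, tool_calls=1),   # typical step
    envelope.charge(400, tool_calls=1),   # four times the baseline
    envelope.charge(600, tool_calls=1),   # pushes the total past the budget
    envelope.charge(10),                  # any work after the kill switch
]
```

Escalation and the kill switch are kept separate on purpose: a single unusually expensive step is a signal for review, while an exhausted budget stops the workflow outright.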

If this is missing, here is what happens:

AI becomes economically inoperable. Cost turns into behavior, and behavior turns into organizational conflict.

Related reading:

- Enterprise AI Economics & Cost Governance: Why Every AI Estate Needs an Economic Control Plane

https://www.raktimsingh.com/enterprise-ai-economics-cost-governance-economic-control-plane/

5) Governed Machine Identity

(The Agent Registry)

Enterprises cannot scale humans without identity and access management.

They cannot scale agents without it either.

A governed Agent Registry provides:

- a system of record for agents

- ownership and lifecycle tracking

- least-privilege permissions

- revocation and kill-switch controls

- provenance of tool access
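
A registry of this kind can be pictured as a small system of record keyed by agent identity. The Python sketch below is an in-memory illustration under stated assumptions: `AgentRegistry`, `AgentRecord`, and the agent, owner, and tool names are hypothetical, and a production registry would sit on durable storage and integrate with existing identity and access management.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Tuple


@dataclass
class AgentRecord:
    agent_id: str
    owner: str                     # accountable human or team
    active: bool = True
    # tool name -> (who granted it, ISO timestamp): provenance of tool access
    grants: Dict[str, Tuple[str, str]] = field(default_factory=dict)
    events: List[str] = field(default_factory=list)   # lifecycle trail


class AgentRegistry:
    def __init__(self) -> None:
        self._agents: Dict[str, AgentRecord] = {}

    def register(self, agent_id: str, owner: str) -> AgentRecord:
        if agent_id in self._agents:
            raise ValueError(f"{agent_id} is already registered")
        record = AgentRecord(agent_id, owner, events=["registered"])
        self._agents[agent_id] = record
        return record

    def grant(self, agent_id: str, tool: str, granted_by: str) -> None:
        record = self._agents[agent_id]
        stamp = datetime.now(timezone.utc).isoformat()
        record.grants[tool] = (granted_by, stamp)
        record.events.append(f"granted {tool} by {granted_by}")

    def revoke(self, agent_id: str, revoked_by: str) -> None:
        # Grants are kept for audit; the inactive flag is what blocks access.
        record = self._agents[agent_id]
        record.active = False
        record.events.append(f"revoked by {revoked_by}")

    def is_permitted(self, agent_id: str, tool: str) -> bool:
        # Least privilege: unknown agents and ungranted tools are refused.
        record = self._agents.get(agent_id)
        return bool(record and record.active and tool in record.grants)


registry = AgentRegistry()
claims_agent = registry.register("claims-router", owner="claims-ops")
registry.grant("claims-router", "route_claim", granted_by="risk-office")
before = registry.is_permitted("claims-router", "route_claim")
registry.revoke("claims-router", revoked_by="risk-office")
after = registry.is_permitted("claims-router", "route_claim")
```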

If this is missing, here is what happens:

Agents become shadow identities. Audits fail. Incidents become untraceable. Revocation becomes risky and slow.

Related reading:

- Enterprise AI Agent Registry: The Missing System of Record for Autonomous AI

https://www.raktimsingh.com/enterprise-ai-agent-registry/

6) Design Intent → Production Behavior

(The Enterprise AI Execution Contract)

Enterprises fail when what they design is not what actually runs.

The Execution Contract binds intent to behavior by defining:

- goals and constraints

- allowed actions

- evidence requirements

- escalation rules

- testable acceptance criteria
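
To make "testable acceptance criteria" concrete, the following Python sketch treats a contract as data plus a set of cases that any candidate behavior can be checked against. It is an illustration only: `ExecutionContract`, `AcceptanceCase`, `verify`, the refund scenario, and the version label are hypothetical, and escalation rules are represented simply as an expected `"escalate"` action.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, List, Tuple


@dataclass(frozen=True)
class AcceptanceCase:
    description: str
    request: Dict[str, float]
    expected_action: str


@dataclass(frozen=True)
class ExecutionContract:
    goal: str
    version: str
    allowed_actions: FrozenSet[str]
    cases: Tuple[AcceptanceCase, ...]


def verify(contract: ExecutionContract,
           agent: Callable[[Dict[str, float]], str]) -> List[str]:
    """Return one message per case where behavior departs from the contract."""
    failures = []
    for case in contract.cases:
        action = agent(case.request)
        if action not in contract.allowed_actions:
            failures.append(f"{case.description}: {action!r} is not an allowed action")
        elif action != case.expected_action:
            failures.append(
                f"{case.description}: expected {case.expected_action!r}, got {action!r}"
            )
    return failures


# Hypothetical contract for a refund workflow.
refund_contract = ExecutionContract(
    goal="Resolve refund requests without exceeding the autonomous limit",
    version="example-1",
    allowed_actions=frozenset({"approve", "escalate"}),
    cases=(
        AcceptanceCase("small refund", {"amount": 40.0}, "approve"),
        AcceptanceCase("large refund", {"amount": 900.0}, "escalate"),
    ),
)


def designed_agent(request: Dict[str, float]) -> str:
    return "approve" if request["amount"] <= 100 else "escalate"


def drifted_agent(request: Dict[str, float]) -> str:
    return "approve"  # stands in for behavior that has drifted in production


designed_failures = verify(refund_contract, designed_agent)
drifted_failures = verify(refund_contract, drifted_agent)
```

Running the same cases against what is deployed, not only against what was designed, is what lets a gap between intent and behavior surface as a failed check rather than as an incident.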

If this is missing, here is what happens:

Production behavior drifts quietly—until it becomes a customer incident, an audit failure, or a board-level event.

Related reading:

- The Enterprise AI Execution Contract: The Missing Layer Between Design Intent and Production Autonomy

https://www.raktimsingh.com/the-enterprise-ai-execution-contract-the-missing-layer-between-design-intent-and-production-autonomy/

7) Continuous Observability & Drift Control

(The Operability Layer)

Enterprise AI is not deployed once.

It is operated continuously.

Minimum observability includes:

- decision traces and evidence

- tool-call logs and side effects

- policy evaluation records

- quality, safety, and cost signals

- drift detection across behavior and economics
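
A minimal sketch of two of these signals, assuming a flat trace record and a simple distribution comparison: `DecisionTrace` and its fields are hypothetical, and the drift measure used here (total variation distance between the action mix of a baseline window and a recent window) is a technique chosen for illustration, not one prescribed by this article. The 0.2 review threshold is likewise an assumption.

```python
import json
from collections import Counter
from dataclasses import asdict, dataclass
from typing import Dict, Sequence, Tuple


@dataclass(frozen=True)
class DecisionTrace:
    trace_id: str
    agent_id: str
    action: str
    evidence: Dict[str, str]
    policy_verdict: str
    tool_calls: Tuple[str, ...]
    tokens_used: int


def to_log_line(trace: DecisionTrace) -> str:
    """Serialize one decision as a single JSON line for an append-only log."""
    return json.dumps(asdict(trace), sort_keys=True)


def action_drift(baseline: Sequence[str], recent: Sequence[str]) -> float:
    """Total variation distance between two action mixes (0 = same, 1 = disjoint)."""
    if not baseline or not recent:
        raise ValueError("both windows need at least one action")
    base, now = Counter(baseline), Counter(recent)
    actions = set(base) | set(now)
    return 0.5 * sum(
        abs(base[a] / len(baseline) - now[a] / len(recent)) for a in actions
    )


trace = DecisionTrace(
    trace_id="t-001",
    agent_id="claims-router",
    action="approve",
    evidence={"claim_id": "c-42"},
    policy_verdict="allow",
    tool_calls=("lookup_claim", "update_record"),
    tokens_used=812,
)
line = to_log_line(trace)

baseline_actions = ["approve"] * 8 + ["escalate"] * 2
recent_actions = ["approve"] * 5 + ["escalate"] * 5
drift = action_drift(baseline_actions, recent_actions)
needs_review = drift > 0.2   # assumed threshold for raising a drift alert
```

The same comparison can be applied to cost per decision or tool-call counts, which is one way to watch behavior and economics with a single mechanism.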

If this is missing, here is what happens:

Failures are discovered late—at audit time, incident time, or customer time.

Related reading:

- Enterprise AI Drift: Why Autonomy Fails Over Time—and the Fabric Enterprises Need to Stay Aligned

https://www.raktimsingh.com/enterprise-ai-drift-alignment-fabric/

- The Autonomy SRE Stack: How Enterprises Run AI Autonomy Safely at Scale

https://www.raktimsingh.com/autonomy-sre-stack-enterprise-ai-runtime/

The simplest mental model: 7 gears that must all engage

- Ownership defines accountability

- Runtime defines execution

- Control plane defines authority

- Economic control defines affordability

- Identity defines permission

- Execution contracts define intent

- Observability defines proof and operability

If even one gear is missing, scaling autonomy converges toward:

- uncontrolled risk, or

- uncontrolled cost

What MVES deliberately excludes

MVES deliberately excludes:

- model leaderboards

- vendor comparisons

- prompt libraries as strategy

- use-case catalogs as operating plans

None of these answers the production question:

Can this AI system be owned, governed, reversed, audited, and economically operated at scale?

Until the answer is yes, everything else is secondary.

How MVES fits into the full Enterprise AI system

MVES defines the minimum.

The integrated architecture is described here:

- The Enterprise AI Operating Stack: How Control, Runtime, Economics, and Governance Fit Together

https://www.raktimsingh.com/the-enterprise-ai-operating-stack-how-control-runtime-economics-and-governance-fit-together/

And the maturity journey here:

- Enterprise AI Maturity Model: From Pilots to Governed Autonomy

https://www.raktimsingh.com/enterprise-ai-maturity-model-pilots-to-governed-autonomy/

Closing: the canon-sealing truth

Enterprise AI is not a race to deploy more agents.

It is a discipline of running intelligence safely in the real world.

If you build the Minimum Viable Enterprise AI System, you can scale autonomy without losing control.

If you don’t, every “successful” pilot is just the beginning of an unowned production liability.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.