What Is Enterprise AI

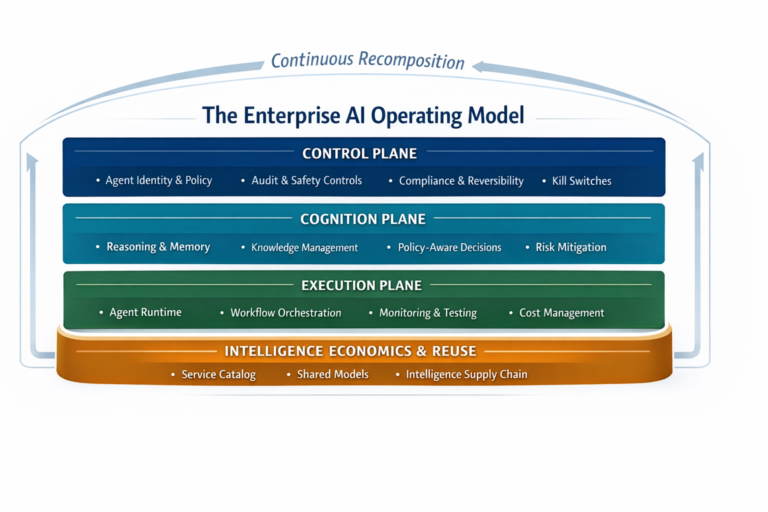

Enterprise AI is the discipline of designing, governing, operating, and scaling AI systems that make—or materially influence—real decisions inside business workflows, in a way that is safe, visible, auditable, reversible, and economically controlled.

This is the line that matters in 2026:

Enterprise AI begins when intelligence is allowed to act.

Approving requests. Triggering workflows. Shaping customer outcomes. Influencing compliance. Moving money.

At that point, AI is no longer a technology experiment. It becomes part of the enterprise operating system.

If you want the full blueprint for how organizations run intelligence safely in production, this is the companion article: Enterprise AI Operating Model — https://www.raktimsingh.com/enterprise-ai-operating-model/

Key Takeaways

- Enterprise AI starts when AI is allowed to act inside workflows—not when you deploy a model.

- The new enterprise problem is decision integrity, not model accuracy.

- “Enterprise-grade AI” requires boundaries, identity, evidence, reversibility, observability, economics, and change readiness.

- Buying AI does not transfer ownership or accountability—the enterprise still owns the decision.

- The real advantage in 2026 is the ability to run intelligence safely, visibly, and economically at scale.

Enterprise AI is the capability to design, govern, and operate intelligent systems that make or influence real business decisions—with clear ownership, enforced boundaries, continuous observability, evidence-based confidence, reversibility by design, and economic control—at enterprise scale.

Why I’m Defining Enterprise AI This Way

Over the last year, one pattern has become hard to ignore: many enterprises are not failing because their AI is “wrong.” They fail because AI behavior in production becomes unclear, unowned, and hard to reverse. Once AI enters live workflows, the organization needs more than models and tools—it needs an operating discipline. That is what this definition is designed to capture.

Why the Old “Enterprise AI” Definition No Longer Works

For years, enterprise AI was framed as:

- Advanced analytics

- Machine learning tools

- Automation and decision support

- AI embedded in business functions

That definition made sense when AI mostly:

- Produced insights

- Assisted humans

- Operated in contained environments

- Could be ignored when it failed

In 2026, that framing is incomplete.

Modern enterprise AI systems routinely:

- Reason

- Decide

- Act

- Learn from feedback

- Produce outcomes that can be difficult—or expensive—to undo

So the central challenge has shifted:

The core challenge is no longer model accuracy.

The core challenge is decision integrity in production.

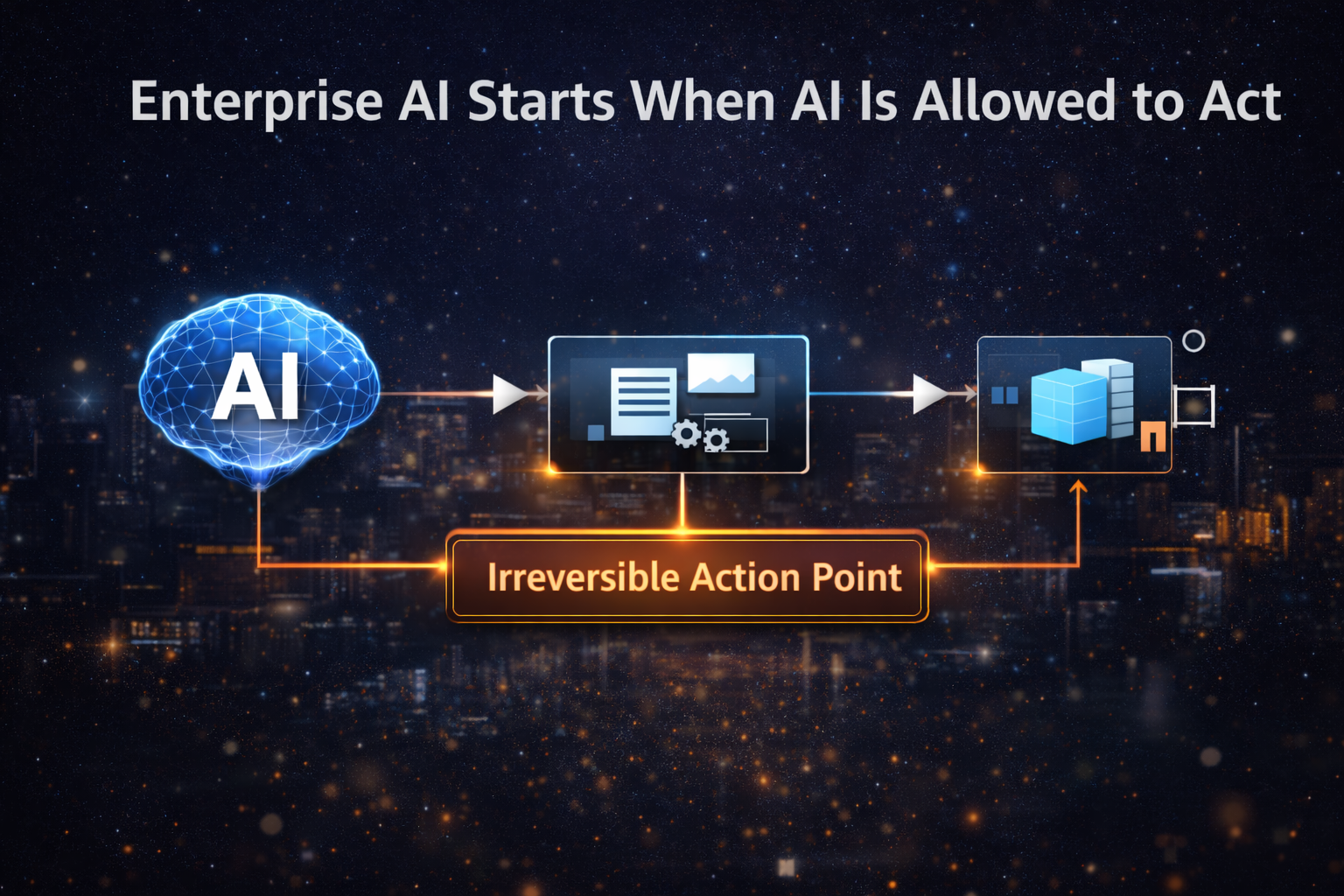

Enterprise AI Starts When AI Is Allowed to Act

Enterprise AI begins when:

- AI outputs influence customers, compliance, money, safety, or risk

- AI decisions are embedded inside live workflows

- AI behavior persists across time, teams, and systems

- AI actions must be explainable, auditable, reversible, and correctable

At this stage, the organization is no longer “using AI.”

It is running intelligence—and that requires clear ownership, boundaries, and operational control.

Enterprise AI Is Not an IT Upgrade

Enterprise AI is not:

- A collection of tools

- A cloud service

- A model deployment

- A vendor platform

- A “digital transformation” label

Enterprise AI is a governance and operating challenge, not a tooling challenge.

Crucially, buying AI does not transfer:

- Decision ownership

- Accountability

- Risk

- Compliance responsibility

Those remain with the enterprise, even when the vendor is trusted.

If you want the most direct articulation of this reality, read: Who Owns Enterprise AI? — https://www.raktimsingh.com/who-owns-enterprise-ai-roles-accountability-decision-rights/

The Core Shift: From Models to Decisions

Traditional AI programs optimize:

- Models

- Data

- Accuracy

- Training cycles

Enterprise AI programs must optimize:

- Decisions

- Boundaries

- Authority

- Evidence

- Reversibility

The defining enterprise question is no longer:

“Is the model correct?”

It becomes:

“Is this decision allowed, justified, traceable, and safe—under real operating conditions?”

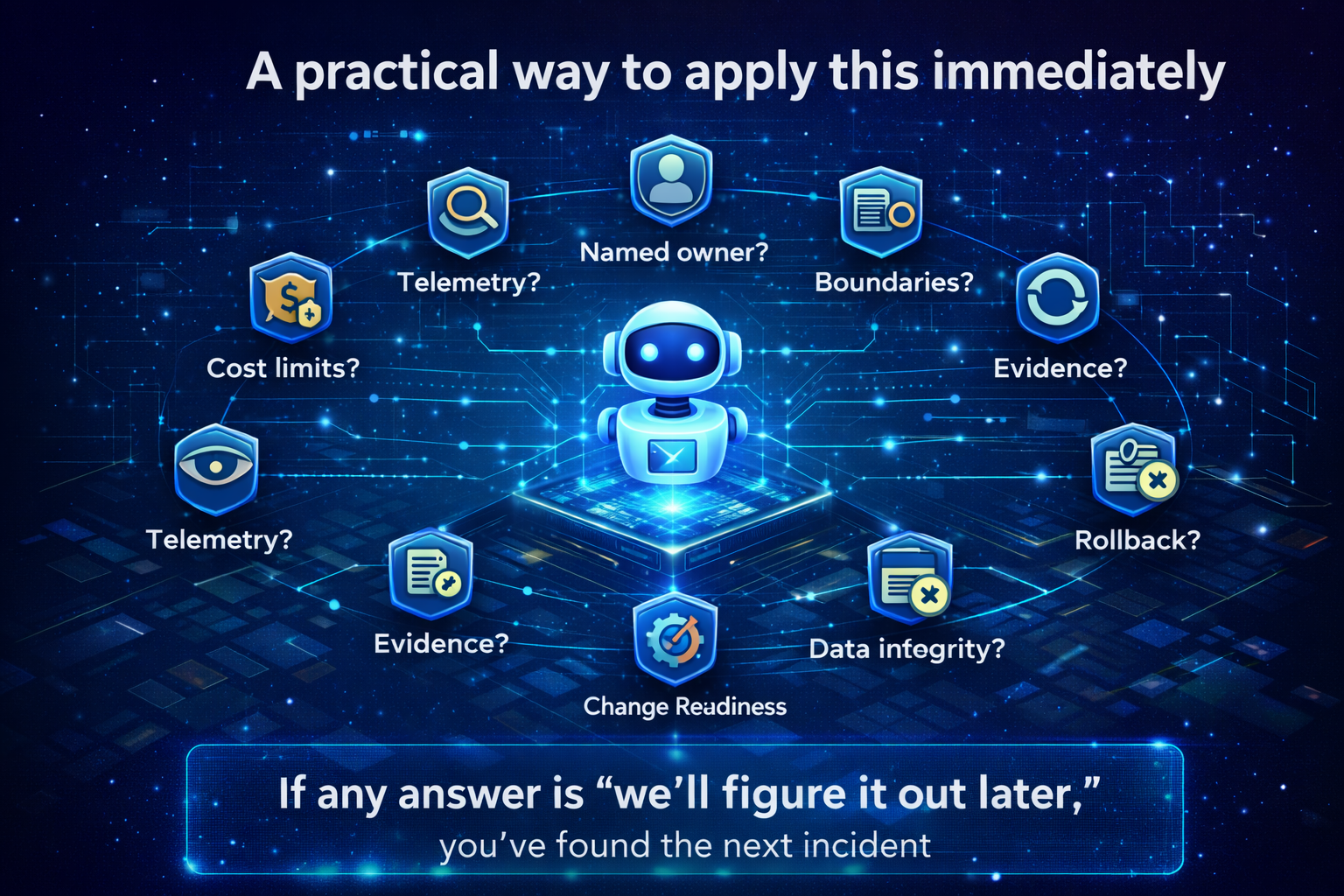

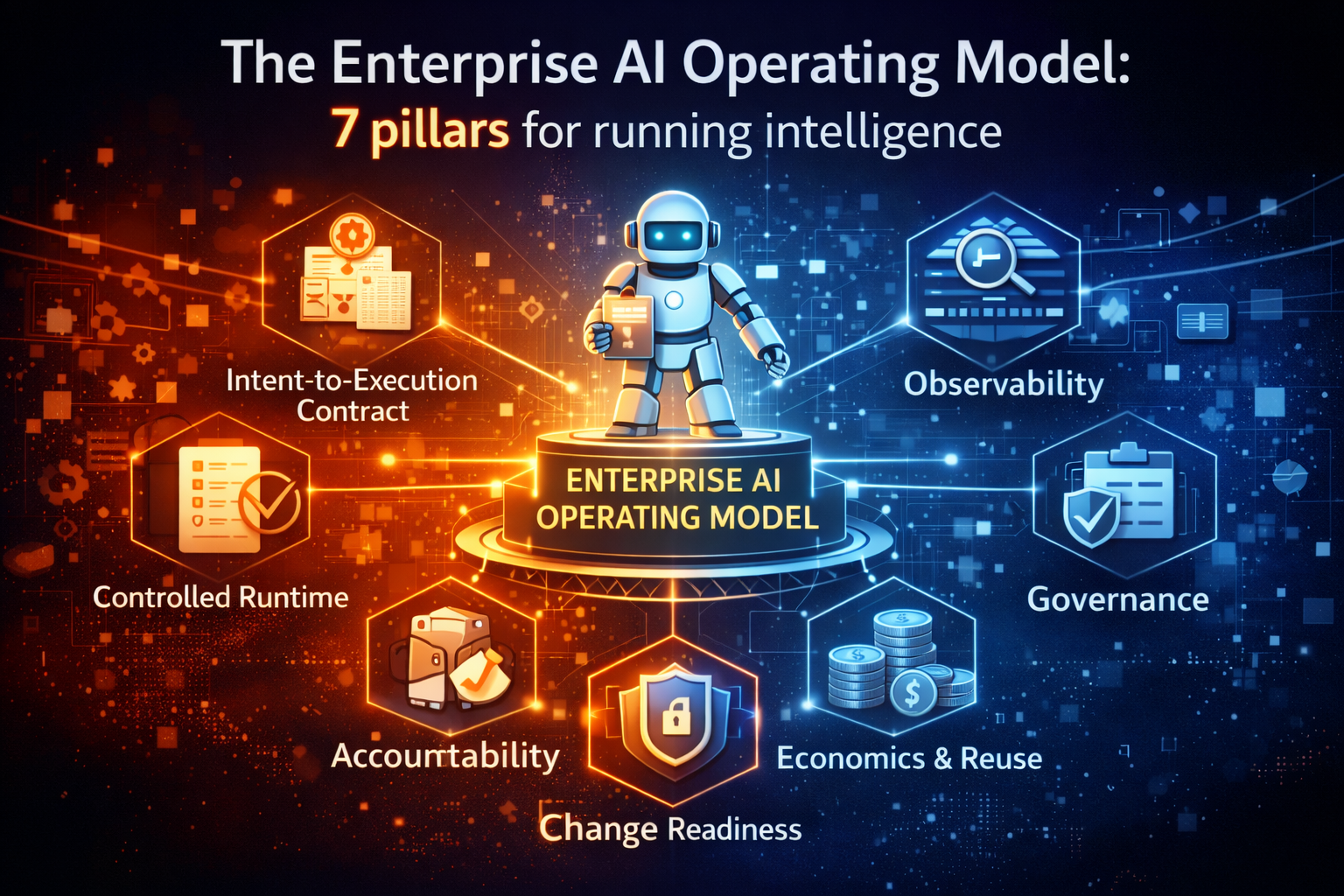

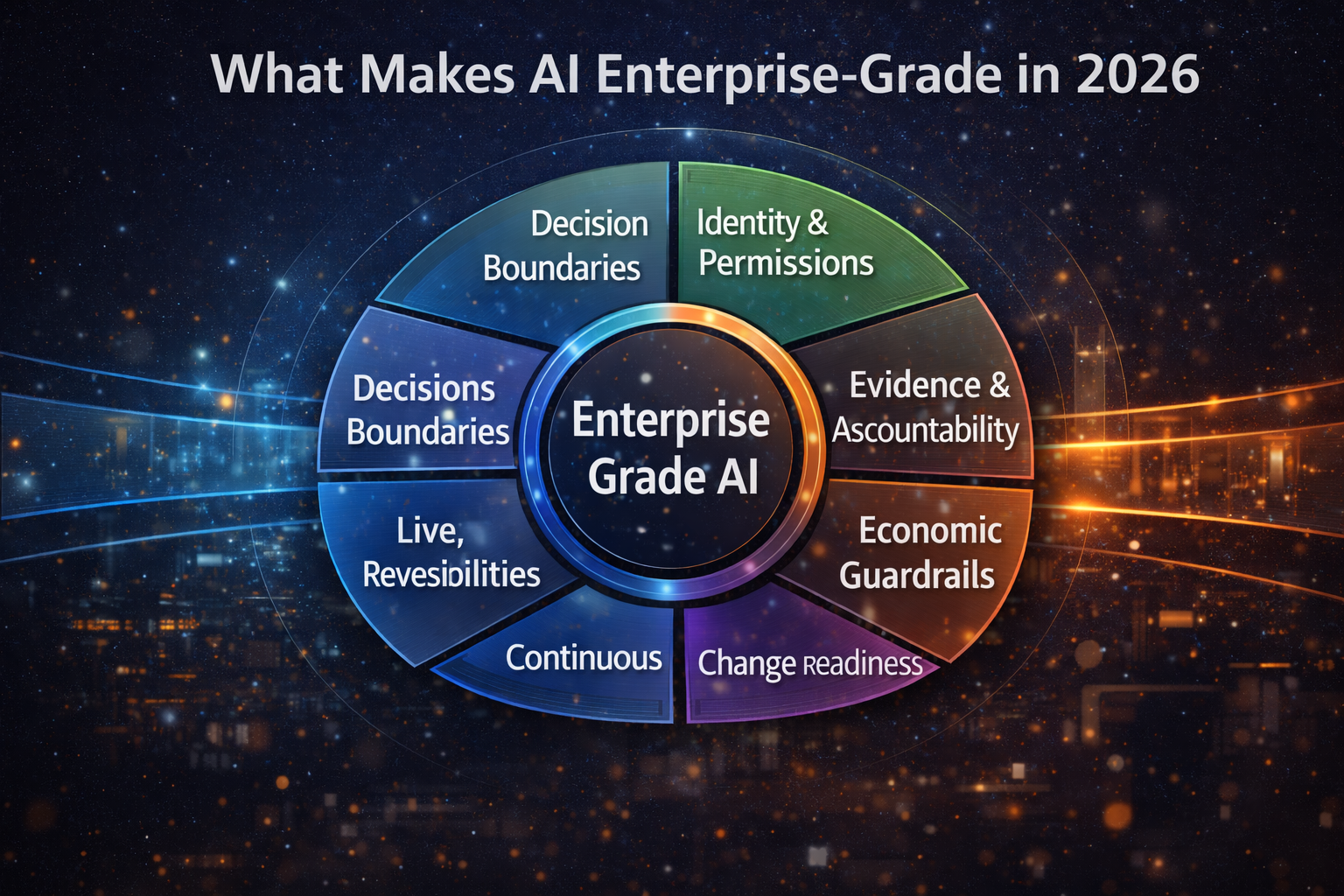

What Makes AI “Enterprise-Grade” in 2026

An AI system is enterprise-grade only if it can be operated responsibly at scale—across changing policies, changing data, changing teams, and changing risk tolerance.

These are the capabilities that separate enterprise AI from pilot AI.

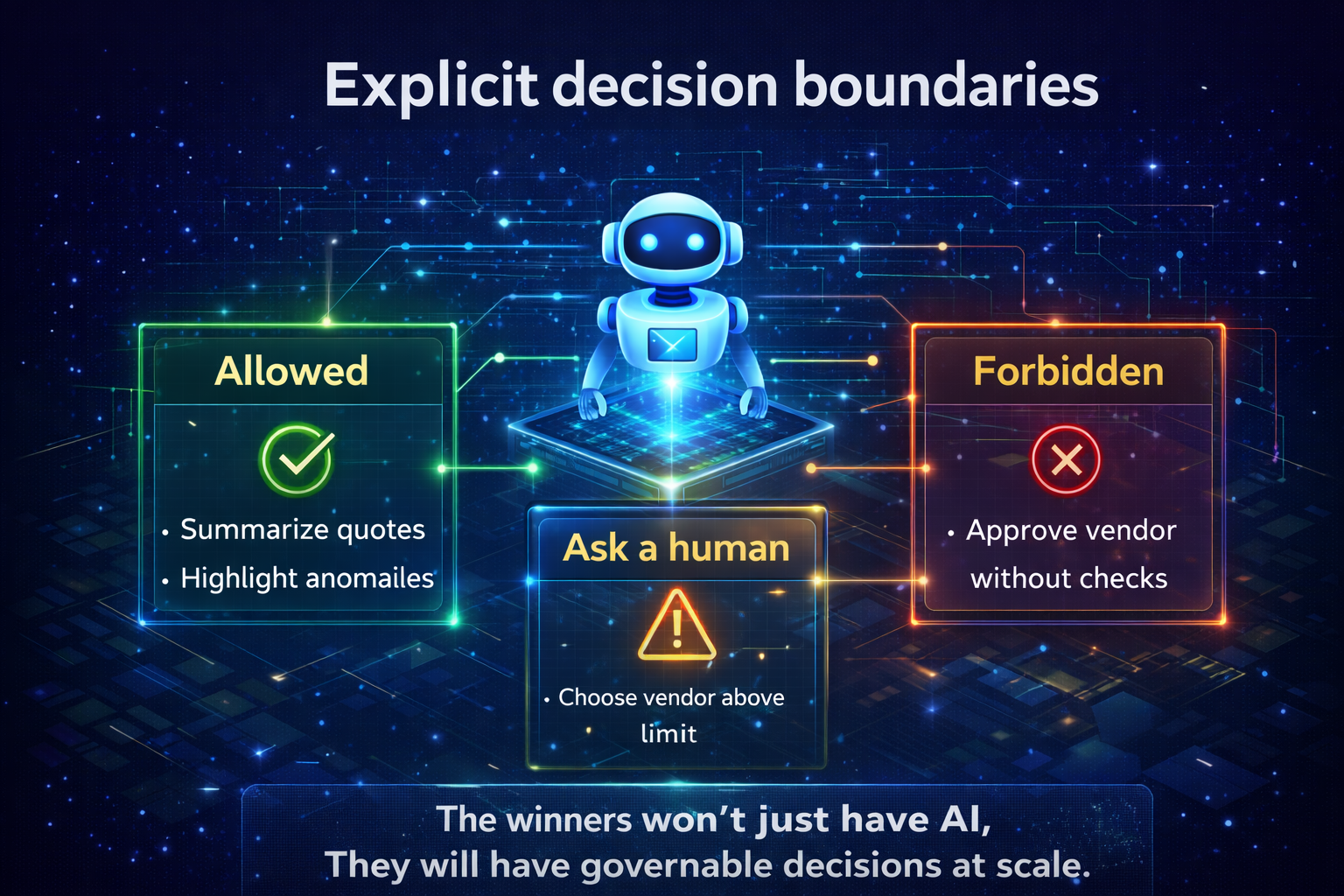

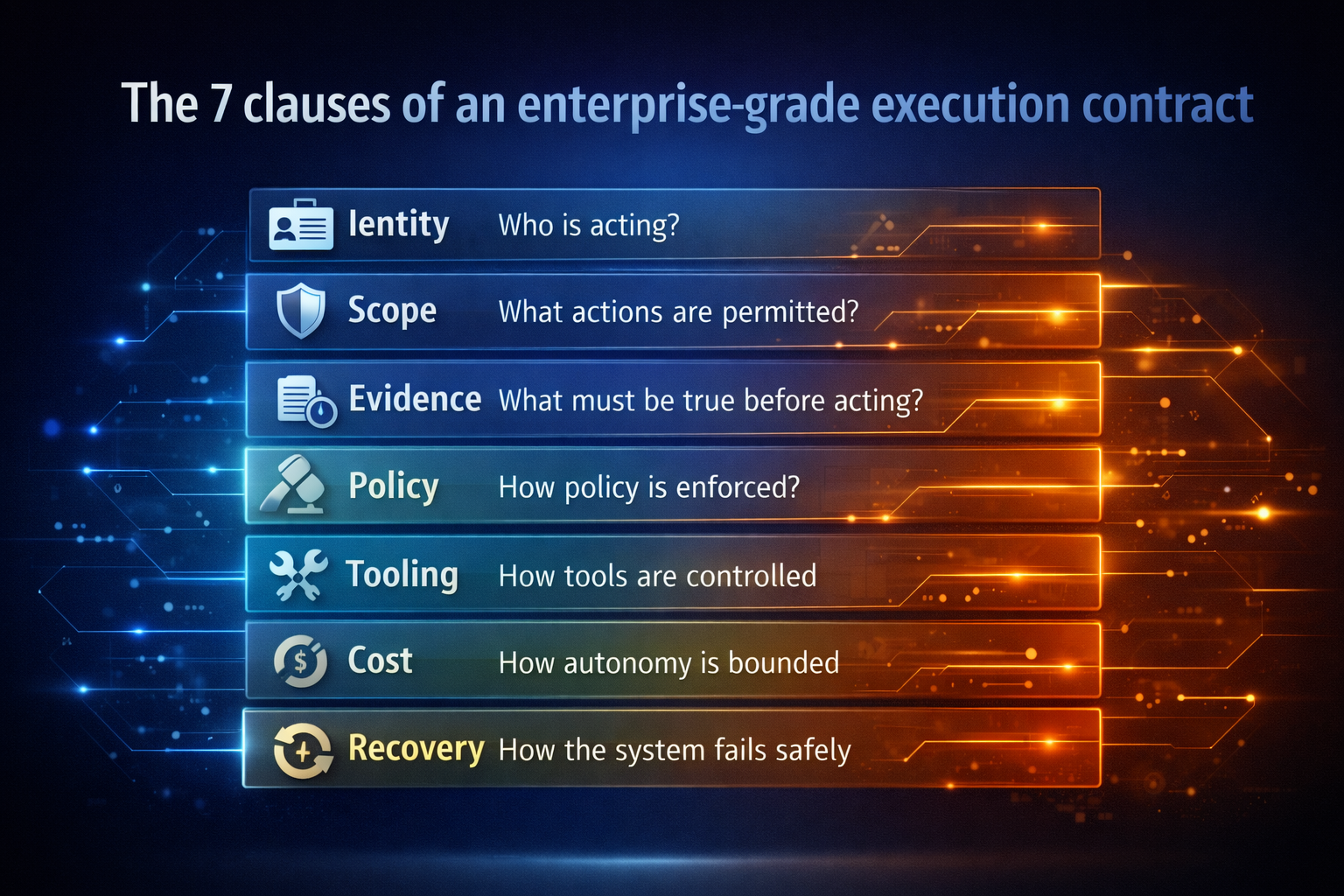

1) Explicit Decision Boundaries

Enterprise AI requires clearly defined decision rights:

- What the AI is allowed to decide

- What it may recommend but not execute

- When human approval is mandatory

- When escalation is required

In production, implicit authority is not flexibility.

It is unmanaged risk.
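As a rough illustration of the four decision rights above, here is a minimal sketch in Python. The action name, amount limits, and the `resolve_authority` helper are all hypothetical, chosen only to show the shape of an explicit boundary table; a real enterprise would source these rules from its own policy system.

```python
from dataclasses import dataclass
from enum import Enum


class Authority(Enum):
    DECIDE = "decide"            # AI may execute on its own
    RECOMMEND = "recommend"      # AI may suggest; a human executes
    HUMAN_APPROVAL = "approval"  # AI prepares; a human must approve first
    ESCALATE = "escalate"        # outside every rule: route to an owner


@dataclass(frozen=True)
class BoundaryRule:
    action: str        # hypothetical action name, e.g. "refund"
    max_amount: float  # inclusive upper limit covered by this rule
    authority: Authority


# Hypothetical rules, ordered from smallest to largest limit per action.
RULES = [
    BoundaryRule("refund", 100.0, Authority.DECIDE),
    BoundaryRule("refund", 1_000.0, Authority.RECOMMEND),
    BoundaryRule("refund", 10_000.0, Authority.HUMAN_APPROVAL),
]


def resolve_authority(action: str, amount: float) -> Authority:
    """Return the first matching authority level; default to escalation."""
    for rule in RULES:
        if rule.action == action and amount <= rule.max_amount:
            return rule.authority
    # No rule matched: never fall back to implicit authority.
    return Authority.ESCALATE
```

The design choice worth noting is the default: anything not explicitly covered escalates instead of silently executing.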

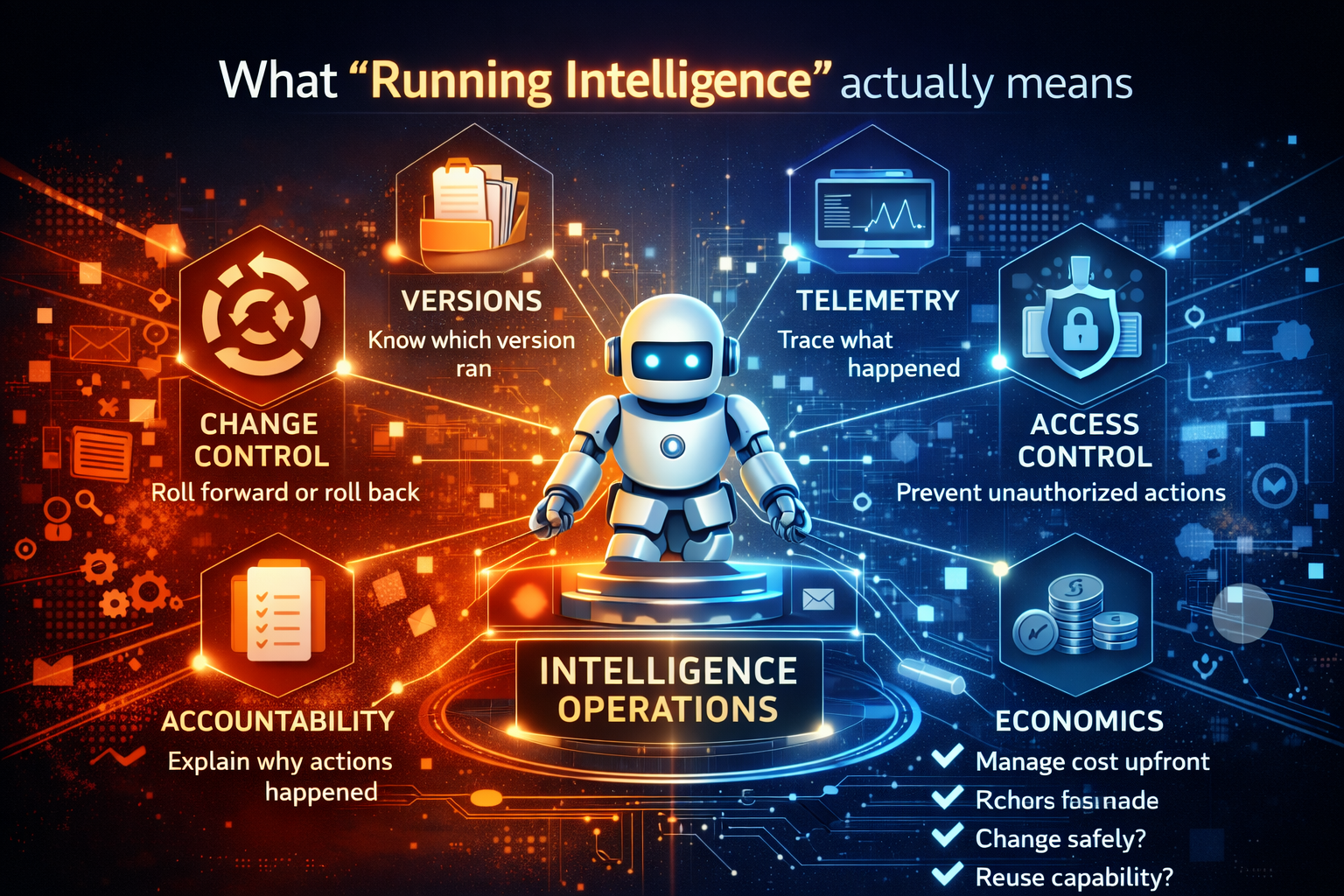

2) Governed Identity and Permissions

Every AI system must have:

- A verifiable identity

- Defined permissions

- Least-privilege access

- Revocation and kill-switch controls

If you cannot confidently answer “which AI acted, using what permissions,” you do not have enterprise AI.

You have unmanaged automation with AI branding.
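One way to picture identity, least privilege, and a kill switch working together is the sketch below. `PermissionRegistry`, `AgentIdentity`, and the permission strings are hypothetical names for illustration; in practice this role would likely be played by the enterprise's existing identity and access management stack.

```python
from dataclasses import dataclass


@dataclass
class AgentIdentity:
    agent_id: str           # verifiable identifier for the AI system
    permissions: frozenset  # explicit allow-list (least privilege)
    revoked: bool = False   # kill-switch state


class PermissionRegistry:
    """Hypothetical in-memory registry, for illustration only."""

    def __init__(self) -> None:
        self._agents = {}
        self.audit_log = []  # entries of (agent_id, permission, allowed)

    def register(self, agent_id: str, permissions: set) -> None:
        self._agents[agent_id] = AgentIdentity(agent_id, frozenset(permissions))

    def revoke(self, agent_id: str) -> None:
        """Kill switch: the agent keeps its identity but can no longer act."""
        if agent_id in self._agents:
            self._agents[agent_id].revoked = True

    def is_allowed(self, agent_id: str, permission: str) -> bool:
        agent = self._agents.get(agent_id)
        allowed = (
            agent is not None
            and not agent.revoked
            and permission in agent.permissions
        )
        # Every check is logged, so "which AI acted, using what permissions"
        # can be answered later.
        self.audit_log.append((agent_id, permission, allowed))
        return allowed
```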

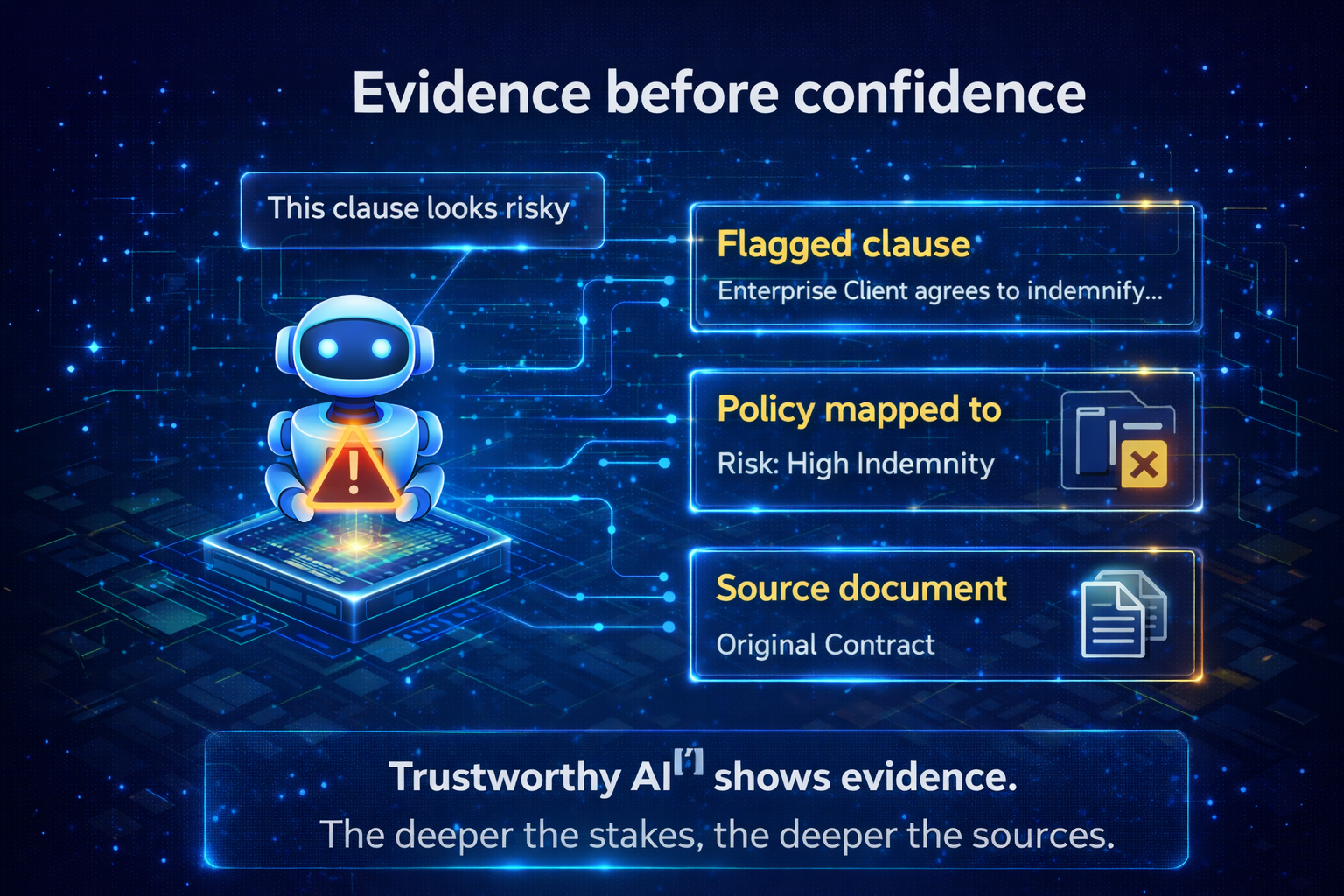

3) Evidence Before Confidence

Enterprise AI must produce:

- Decision rationale (why this action)

- Input provenance (what evidence it used)

- Policy alignment signals (what constraints applied)

- Confidence with justification (not just a score)

Confidence without evidence is not “AI maturity.”

It is operational risk.
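The four evidence items above could be captured as a record that must be complete before an action runs. This is a sketch under assumptions: the `DecisionRecord` fields and the `may_execute` gate are hypothetical names, not a standard schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRecord:
    action: str
    rationale: str            # why this action
    input_sources: tuple      # provenance: what evidence was used
    policies_applied: tuple   # which constraints were checked
    confidence: float         # the score on its own is not enough
    confidence_basis: str     # justification for the score


def missing_evidence(record: DecisionRecord) -> list:
    """List the evidence fields that are empty on this record."""
    missing = []
    if not record.rationale.strip():
        missing.append("rationale")
    if not record.input_sources:
        missing.append("input_sources")
    if not record.policies_applied:
        missing.append("policies_applied")
    if not record.confidence_basis.strip():
        missing.append("confidence_basis")
    return missing


def may_execute(record: DecisionRecord) -> bool:
    """Block execution when any evidence is missing, whatever the score."""
    return not missing_evidence(record)
```

Note that a record with a confidence of 0.99 and no rationale is still rejected, which is the point of putting evidence before confidence.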

4) Reversibility by Design

Enterprise AI must assume:

- Decisions can be wrong

- Context can change

- Policies can evolve

Reversibility is not a feature.

It is a safety requirement.

In practice, reversibility means having:

- rollback-ready workflows

- human override paths

- compensation actions

- clear escalation routes
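Compensation actions are the least intuitive item in that list, so here is a minimal sketch of the idea, in the spirit of the saga pattern: each step registers how to undo itself, and rollback replays those undo actions in reverse order. The `ReversibleWorkflow` class is a hypothetical illustration, not a reference to any particular workflow engine.

```python
from typing import Callable, List, Tuple


class ReversibleWorkflow:
    """Runs steps and remembers how to compensate for each one."""

    def __init__(self) -> None:
        self._compensations: List[Tuple[str, Callable[[], None]]] = []

    def run_step(
        self,
        name: str,
        action: Callable[[], None],
        compensate: Callable[[], None],
    ) -> None:
        action()
        # Register the undo only after the action has succeeded.
        self._compensations.append((name, compensate))

    def rollback(self) -> List[str]:
        """Undo completed steps, most recent first; return their names."""
        undone = []
        while self._compensations:
            name, compensate = self._compensations.pop()
            compensate()
            undone.append(name)
        return undone
```

A human override path could call `rollback()` directly; escalation routes would decide who is allowed to trigger it.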

5) Continuous Observability

Enterprise AI must be observable in real time:

- What decisions are being made

- Where drift is occurring

- How policies are being interpreted

- When behavior deviates from intent

If you cannot see AI behavior, you cannot govern it.

And if you cannot govern it, you cannot scale it.
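As one narrow example of what "seeing drift" can mean, the sketch below tracks a rolling approval rate and flags when it moves away from an expected baseline. The `DecisionMonitor` class, the window size, and the tolerance are assumptions made for illustration; real observability would track many more signals than a single rate.

```python
from collections import deque
from typing import Optional


class DecisionMonitor:
    """Flags drift when the recent approval rate leaves a tolerance band."""

    def __init__(self, baseline_rate: float, window: int = 100,
                 tolerance: float = 0.10) -> None:
        self.baseline_rate = baseline_rate
        self.tolerance = tolerance
        self._recent = deque(maxlen=window)  # keeps only the last `window` decisions

    def record(self, approved: bool) -> None:
        self._recent.append(approved)

    def approval_rate(self) -> Optional[float]:
        if not self._recent:
            return None
        return sum(self._recent) / len(self._recent)

    def drift_detected(self) -> bool:
        # Wait for a full window so a handful of decisions cannot raise alarms.
        if len(self._recent) < self._recent.maxlen:
            return False
        return abs(self.approval_rate() - self.baseline_rate) > self.tolerance
```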

6) Economic Guardrails

Enterprise AI must operate within:

- Cost envelopes (per workflow, per decision, per period)

- Value thresholds (what is “worth” automating)

- Reuse economics (reuse beats reinvention)

- ROI constraints (cost-to-serve discipline)

For the economics of reuse as a competitive advantage, see: The Intelligence Reuse Index — https://www.raktimsingh.com/intelligence-reuse-index-enterprise-ai-fabric/
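A cost envelope and a value threshold can be sketched as a simple pre-execution check. Everything here is hypothetical: the `CostEnvelope` class, its limits, and the rule that expected value must at least cover estimated cost are illustrative assumptions, not a prescribed formula.

```python
class CostEnvelope:
    """Authorizes a decision only if it fits per-decision and period limits."""

    def __init__(self, per_decision_limit: float, period_budget: float) -> None:
        self.per_decision_limit = per_decision_limit
        self.period_budget = period_budget
        self.spent = 0.0  # running total for the current period

    def authorize(self, estimated_cost: float, expected_value: float) -> bool:
        if estimated_cost > self.per_decision_limit:
            return False  # a single decision is too expensive
        if self.spent + estimated_cost > self.period_budget:
            return False  # the period budget would be exceeded
        if expected_value < estimated_cost:
            return False  # below the value threshold: not worth automating
        self.spent += estimated_cost
        return True
```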

7) Change Readiness

Enterprise AI systems must evolve without breaking:

- workflows

- compliance posture

- trust

- business outcomes

Static AI becomes fragile AI.

And fragile AI becomes shelfware—or worse, silent risk.
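One practical reading of "evolve without breaking" is to replay a fixed set of known decisions against any candidate change before it ships. The sketch below assumes a hypothetical `decide` callable that maps an input dictionary to a decision label; the golden cases themselves would come from the enterprise's own reviewed decisions.

```python
from typing import Callable, List, Tuple

GoldenCase = Tuple[dict, str]  # (input, expected decision)


def regression_report(
    decide: Callable[[dict], str],
    golden_cases: List[GoldenCase],
) -> List[Tuple[dict, str, str]]:
    """Return (input, expected, actual) for every case that changed."""
    failures = []
    for inputs, expected in golden_cases:
        actual = decide(inputs)
        if actual != expected:
            failures.append((inputs, expected, actual))
    return failures


def safe_to_promote(
    decide: Callable[[dict], str],
    golden_cases: List[GoldenCase],
) -> bool:
    """A candidate is promotable only if no golden decision changed."""
    return not regression_report(decide, golden_cases)
```

A stricter variant might allow changes on specific cases when a policy update makes the old expected decision obsolete, but that approval should be explicit.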

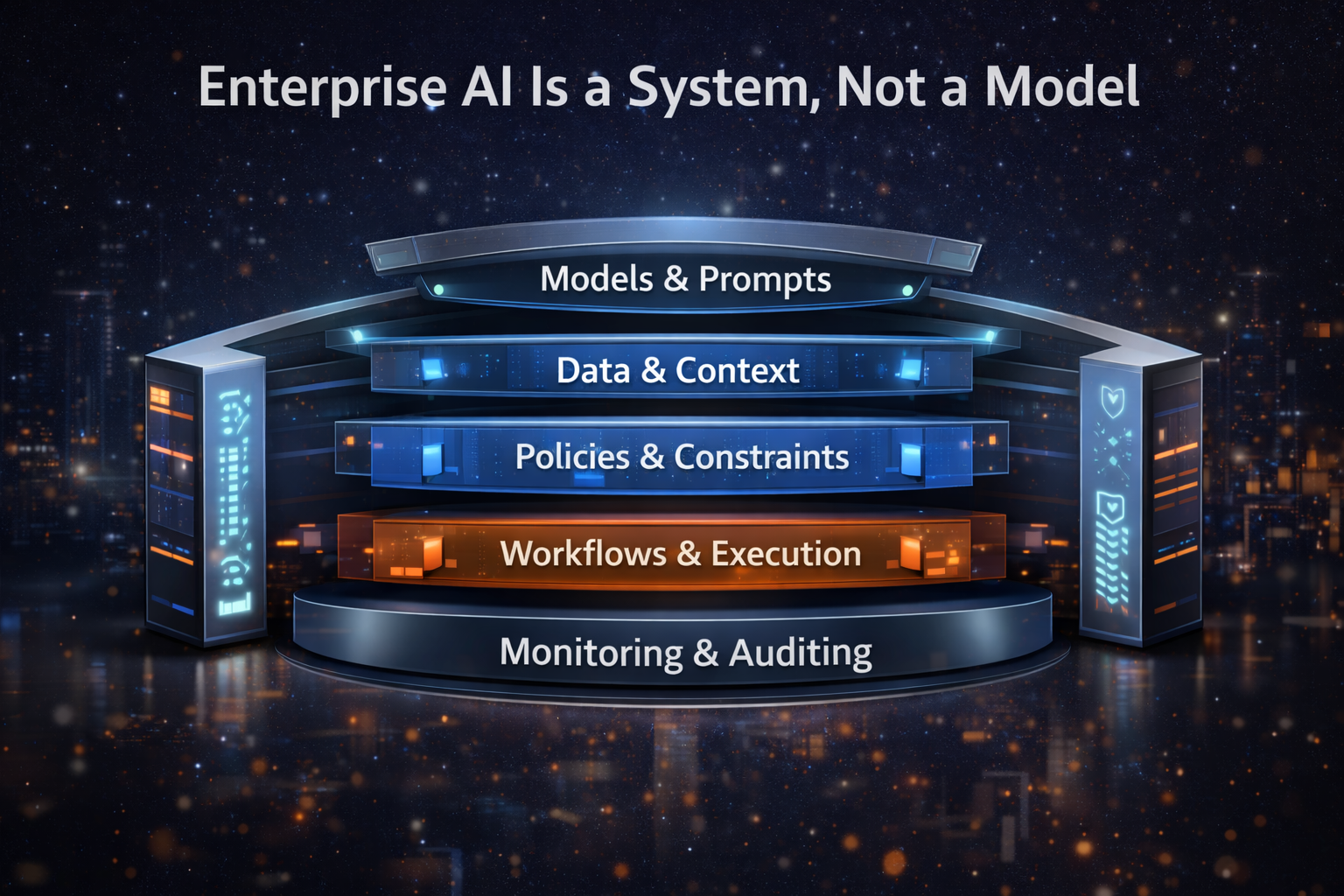

Enterprise AI Is a System, Not a Model

Enterprise AI is an ecosystem composed of:

- models and prompts

- data and context

- policies and constraints

- workflows and execution layers

- monitoring and audit mechanisms

- human oversight and escalation paths

Removing any one of these is how “successful pilots” become production incidents.

A common failure mode is that organizations treat production as a finishing step—then discover that model churn, dependency changes, and policy updates break behavior over time. If you’ve seen that pattern, read: The Enterprise AI Runbook Crisis — https://www.raktimsingh.com/enterprise-ai-runbook-crisis-model-churn-production-ai/

Enterprise AI Use Cases, Reframed by Decision Impact

In 2026, enterprise AI use cases are best understood by decision impact, not by department names.

Examples include:

- approving or denying access, credit, or claims

- triggering financial, compliance, or operational workflows

- coordinating multi-step processes across systems

- making real-time trade-offs under uncertainty

- acting autonomously with human-by-exception oversight

The value is not automation.

The value is trusted, governable execution.

Enterprise-Scale AI Means Operability, Not Size

Enterprise-scale no longer means:

- bigger models

- more data

- more users

It means:

- decisions remain correct as complexity grows

- governance survives scale

- ownership remains clear

- behavior stays aligned with intent

- failures are detectable and recoverable

Scale without operability is how AI fails silently—until the business notices.

Implementing Enterprise AI in 2026

Successful enterprise AI programs follow a different order than traditional AI projects:

- Define decision ownership before models

- Establish governance before automation

- Design reversibility before autonomy

- Build observability before scale

- Optimize reuse before expansion

Pilots without operating models do not scale.

They accumulate hidden decision debt.

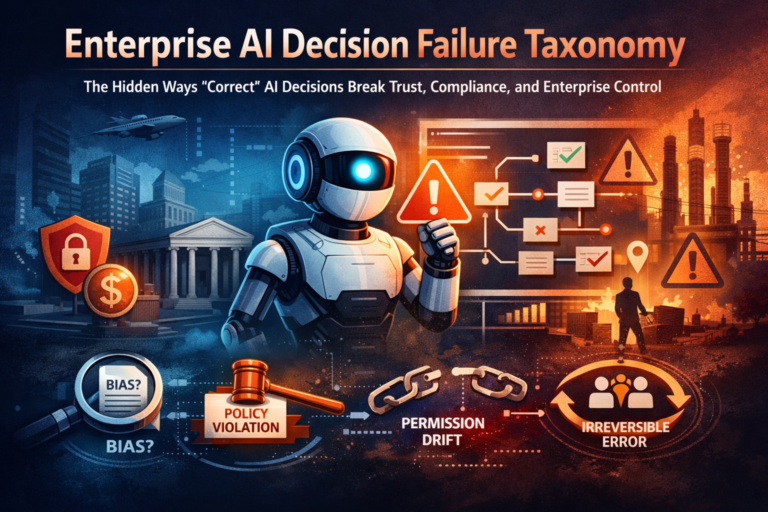

Risks Unique to Enterprise AI

Enterprise AI introduces risks that traditional IT rarely faced:

- automation bias amplification

- policy-compliant but strategy-violating behavior

- metric gaming and proxy collapse

- untraceable decisions

- silent drift in production

These are operating model failures, not technical bugs.

For more on how model churn turns into runbook risk in production, see The Enterprise AI Runbook Crisis: https://www.raktimsingh.com/enterprise-ai-runbook-crisis-model-churn-production-ai/

Why Enterprise AI Is Now a Board-Level Issue

Enterprise AI:

- shapes customer outcomes

- changes compliance exposure

- alters risk posture

- affects brand trust

- determines long-term competitiveness

That makes enterprise AI a governance issue, not a technology initiative.

For roles, accountability, and decision rights, see "Who Owns Enterprise AI?" at https://www.raktimsingh.com/who-owns-enterprise-ai-roles-accountability-decision-rights/

Conclusion: The Real Enterprise AI Advantage

The next enterprise advantage will not come from:

- better models

- faster training

- more tools

It will come from:

the ability to run intelligence safely, visibly, and economically—at scale.

Enterprises that master this will compound advantage.

Those that do not will accumulate invisible risk and escalating complexity.

Enterprise AI is the capability to design, govern, and operate intelligent systems that make or influence real business decisions—with clear ownership, enforced boundaries, continuous observability, evidence-based confidence, reversibility by design, and economic control—at enterprise scale.

That is the bar for Enterprise AI in 2026.

FAQ

What is Enterprise AI in simple terms?

Enterprise AI is AI that operates inside real business workflows—where decisions affect customers, compliance, money, or risk—and must therefore be governable, observable, and accountable.

When does an organization truly enter “Enterprise AI”?

When AI is allowed to act (or materially influence actions) in production workflows, and the enterprise must manage ownership, boundaries, auditability, and reversibility.

Is Enterprise AI the same as using GenAI tools in a company?

No. Tools are optional. Enterprise AI is an operating discipline—centered on decision integrity, governance, and production reliability—regardless of model type.

Why is “decision integrity” more important than model accuracy?

Because enterprises fail when decisions are untraceable, non-reversible, misaligned with policy, or economically uncontrolled—even when the model’s output looks “right.”

What does enterprise-grade AI require in 2026?

Explicit decision boundaries, governed identity and permissions, evidence before confidence, reversibility, continuous observability, economic guardrails, and change readiness.

Who owns Enterprise AI decisions?

The enterprise. Vendors can supply tools, but decision ownership, accountability, and compliance responsibility remain internal.

Glossary

Decision integrity — The property that AI-driven decisions remain allowed, justified, traceable, and safe under real operating conditions.

Decision boundary — A defined line between what AI may decide, recommend, or execute, including when escalation or human approval is required.

Least privilege — Granting AI systems only the minimum access needed to perform a task, reducing blast radius.

Kill switch — A control to instantly stop or revoke an AI system’s ability to act in workflows.

Observability — The ability to see what AI is doing in production, why it did it, and how behavior changes over time.

Provenance — Traceability of data, context, and evidence used to make a decision.

Reversibility — The ability to undo or compensate for AI actions safely when policy, context, or outcomes change.

Economic guardrails — Constraints that control cost-to-serve, value thresholds, and ROI for AI decisions and workflows.

Operating model — The practical blueprint defining how AI is designed, governed, monitored, changed, and owned across an enterprise.

Human-by-exception — Humans intervene only when AI crosses thresholds, uncertainty rises, or policy requires review.

References and Further Reading

If you want to ground this definition in globally recognized governance and risk thinking, these are useful anchors to reference:

- NIST Artificial Intelligence Risk Management Framework (AI RMF)

- ISO/IEC 42001:2023 (AI management systems)

- OECD AI Principles

- EU AI Act (for regulated environments)

This article is part of an ongoing body of work defining how enterprises design, govern, and scale AI safely in production.

If You’re Building This in Production, Read These Next

To turn this definition into an enterprise operating capability, these four pages connect as one system:

- Enterprise AI Operating Model (Pillar): how organizations design, govern, and scale intelligence safely

https://www.raktimsingh.com/enterprise-ai-operating-model/

- Who Owns Enterprise AI?: roles, accountability, and decision rights (the ownership layer)

https://www.raktimsingh.com/who-owns-enterprise-ai-roles-accountability-decision-rights/

- The Enterprise AI Runbook Crisis: why model churn breaks production AI (the operability layer)

https://www.raktimsingh.com/enterprise-ai-runbook-crisis-model-churn-production-ai/

- The Intelligence Reuse Index: the metric behind sustainable advantage (the economics layer)

https://www.raktimsingh.com/intelligence-reuse-index-enterprise-ai-fabric/