Enterprise AI Enforcement Doctrine

Enterprise AI enforcement doctrine defines how autonomous AI systems are stopped, constrained, reversed, and defended in real production environments.

As AI systems move from advising humans to executing decisions, enterprises must enforce autonomy at runtime—not just document governance policies. This article introduces a practical enforcement doctrine that makes Enterprise AI operable, auditable, and safe at scale.

Enterprise AI doesn’t fail because models are inaccurate.

It fails because AI becomes unstoppable the moment it starts acting inside real workflows.

As soon as AI moves from advising to doing—approving refunds, changing prices, provisioning access, sending customer communications, escalating tickets, routing claims—your organization stops asking:

- “Is the model good?”

…and starts asking:

- “If this goes wrong, can we stop it in seconds—safely—and prove exactly what happened?”

That question is what this article answers.

Enterprise AI Enforcement Doctrine is the practical rulebook that makes autonomy permissible in enterprise environments, because it makes that autonomy controllable, reversible, and defensible.

This doctrine is the binding layer that turns an Enterprise AI architecture into a discipline, and that discipline into authority.

Doctrine in 10 Laws

These are the 10 laws that separate “AI that demos well” from “AI that is safe to run in production.”

Law 1 — Every autonomous AI system must be stoppable

If autonomy cannot be paused in seconds, it is not enterprise-ready. Stopping must halt new actions, preserve evidence, and switch to a fallback mode without business collapse.

Law 2 — Action authority must be explicit, not implied

AI may compute anything, but it may act only when explicitly authorized by decision type, risk, evidence, and policy constraints.

Law 3 — High-impact decisions require human authority

If a decision affects money, access, rights, or trust—or is hard to reverse—human approval must be real, empowered, and fast.

Law 4 — Policy must be enforced at runtime, not just documented

Policies that can’t block actions are opinions. Enforcement means policy checks before, during, and after execution.

Law 5 — Every AI actor must have a governed identity

Every agent needs a unique identity, least-privilege permissions, an accountable owner, and immediate revocation capability.

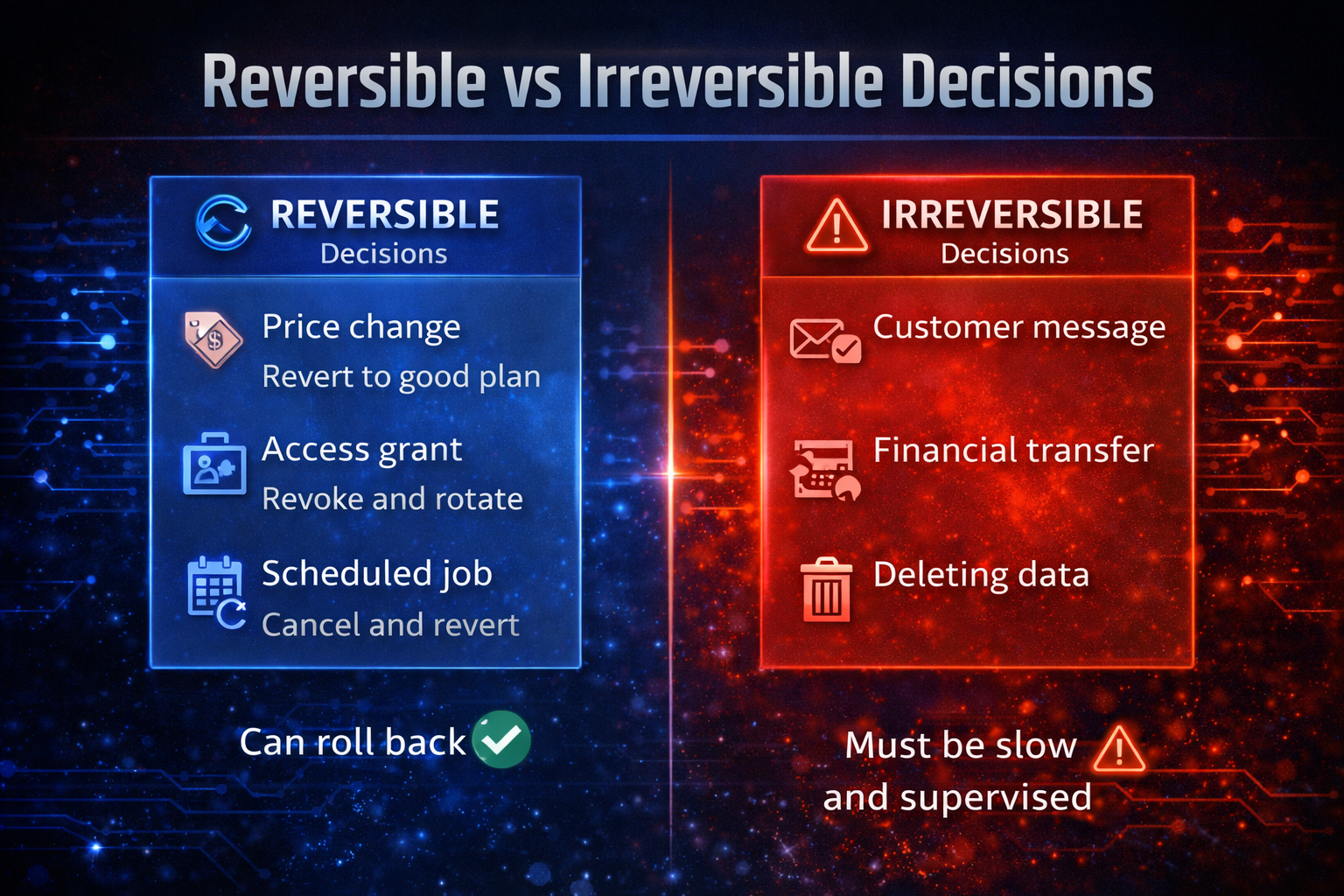

Law 6 — Every decision must be reversible, or treated as irreversible

Reversibility must be engineered. If an action is irreversible, thresholds must be stricter and approvals mandatory.

Law 7 — Evidence must precede confidence

Confidence scores don’t defend actions. Evidence does: inputs, constraints, policy checks, tool calls, approvals, and intent.

Law 8 — Incidents are about decisions, not models

Enterprise AI failures are decision boundary failures. Postmortems must analyze decisions, not just deployments.

Law 9 — Autonomy must be adjustable over time

Autonomy expands and contracts based on stability, drift, incidents, and risk—never “set-and-forget.”

Law 10 — AI must be governed as an operating discipline

AI governance is not a launch milestone. It is a cadence: reviews, exceptions, overrides, incident learning, and board visibility.

This doctrine is the operational enforcement layer behind the Laws of Enterprise AI:

https://www.raktimsingh.com/laws-of-enterprise-ai/

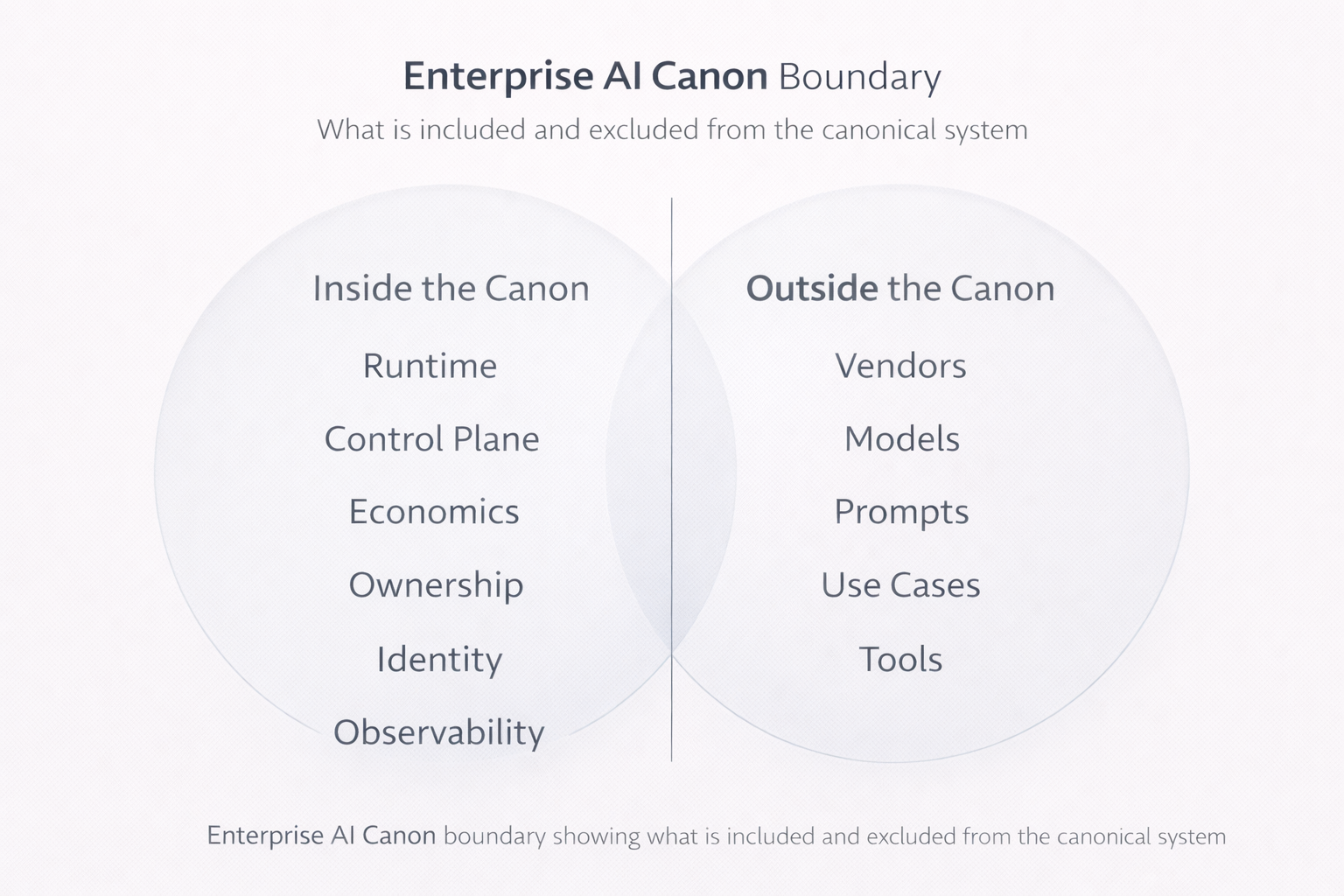

What is Enterprise AI Enforcement Doctrine?

Enterprise AI Enforcement Doctrine is the set of runtime rules and control mechanisms that determine:

- when AI may act, and when it must only suggest

- what actions are allowed, constrained, or forbidden

- who can override, pause, or revoke permissions

- how actions are rolled back (or prevented if irreversible)

- how decisions are explained and defended with evidence

This is not the same as generic “AI governance.”

Governance defines intent: principles, roles, risk posture, decision rights.

Enforcement makes that intent real at the moment of action—inside the system that is actually running.

Where enforcement runs matters, which is why it must live in the Enterprise AI Runtime:

https://www.raktimsingh.com/enterprise-ai-runtime-what-is-running-in-production/

Why this doctrine matters now

Most enterprises already have AI.

What they don’t have is autonomy they can safely permit.

The global failure pattern looks the same across industries and geographies:

- AI is launched as “automation”

- actions happen faster than humans can reason

- exceptions pile up

- no one has clear authority to intervene

- a single boundary failure creates blast radius: financial, regulatory, reputational

So the real maturity milestone isn’t “we deployed AI.”

It is:

“We can stop AI, constrain AI, roll back AI decisions, and prove why AI acted.”

That’s closure. That’s authority. That’s irreplaceability.

The simplest mental model: AI needs traffic laws, not just driver training

You can train the driver (the model) and still have chaos if the road has no rules.

Enterprise enforcement is the “traffic system” for autonomous decisions:

- speed limits → rate limits and action thresholds

- signals → policy gates and approvals

- barriers → permission boundaries and least privilege

- enforcement → circuit breakers, kill-switches, safe pause

- incident response → rollback, evidence capture, postmortems

This is also why the Enterprise AI Control Plane becomes the core instrument of enforcement rather than an optional architecture layer:

https://www.raktimsingh.com/enterprise-ai-control-plane-2026/

The Enforcement Stack: 7 capabilities that make autonomy controllable

1) Decision gating: autonomy is a ladder, not a switch

A mature enforcement doctrine defines levels of autonomy. A simple ladder that works in any enterprise:

- Suggest: AI recommends; humans act

- Draft: AI prepares changes; humans approve

- Execute with constraints: AI acts inside strict boundaries

- Execute + notify: AI acts and alerts owners

- Execute + verify: AI acts but must pass post-checks to continue

Example: customer support refunds

- AI drafts the response and refund rationale for every case.

- AI auto-approves refunds only below a threshold and only when evidence is clean.

- Anything above that goes to “Draft + Approve,” not “Execute.”

This makes autonomy safe because execution is earned.
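The autonomy ladder and the refund rule above can be expressed as an ordered enum plus one routing function. This is a minimal Python sketch, not a reference implementation. `Autonomy`, `RefundCase`, `AUTO_REFUND_LIMIT`, and the 50.0 threshold are hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass
from enum import IntEnum


class Autonomy(IntEnum):
    """Autonomy ladder: a higher value grants more autonomy."""
    SUGGEST = 1              # AI recommends; humans act
    DRAFT = 2                # AI prepares changes; humans approve
    EXECUTE_CONSTRAINED = 3  # AI acts inside strict boundaries
    EXECUTE_NOTIFY = 4       # AI acts and alerts owners
    EXECUTE_VERIFY = 5       # AI acts but must pass post-checks to continue


@dataclass
class RefundCase:
    amount: float
    evidence_clean: bool  # hypothetical flag: all evidence checks passed


AUTO_REFUND_LIMIT = 50.0  # hypothetical threshold, owned by policy, not by the model


def refund_autonomy(case: RefundCase) -> Autonomy:
    """Return the highest rung this refund may reach."""
    if case.amount < AUTO_REFUND_LIMIT and case.evidence_clean:
        return Autonomy.EXECUTE_CONSTRAINED
    # Everything else stays at "Draft + Approve": AI drafts, a human decides.
    return Autonomy.DRAFT


def may_execute(level: Autonomy) -> bool:
    """Only the execute rungs allow the AI to act on its own."""
    return level >= Autonomy.EXECUTE_CONSTRAINED
```

Because the rungs are ordered, raising or lowering a decision type's autonomy is a change of level rather than a rewrite. This fits Law 9's requirement that autonomy stay adjustable.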

Decision classification is the foundation that drives these gates. The decision-clarity doctrine covers it in depth:

https://www.raktimsingh.com/decision-clarity-scalable-enterprise-ai-autonomy/

2) Action thresholds: confidence is not enough—evidence is the gate

Most systems gate actions based on model confidence. That is fragile because confidence can be high even when context is wrong.

Enforcement doctrine gates on evidence thresholds, such as:

- are policy conditions satisfied?

- are inputs complete and trustworthy?

- are signals consistent, or conflicting?

- is this action reversible?

- is the actor authorized?
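An evidence gate can be sketched as a function that checks each condition in the list above and returns the failures, deliberately ignoring model confidence. All field names are hypothetical. Treating an irreversible action as an automatic escalation is an assumption drawn from Law 6, not a prescribed rule.

```python
from dataclasses import dataclass


@dataclass
class ActionContext:
    # Hypothetical names for the evidence signals listed above.
    policy_conditions_met: bool
    inputs_complete: bool
    signals_consistent: bool
    reversible: bool
    actor_authorized: bool
    model_confidence: float  # kept for the audit trail, never used as the gate


def evidence_gate(ctx: ActionContext) -> tuple[bool, list[str]]:
    """Return (allowed, failed_checks). Confidence is intentionally not consulted."""
    checks = {
        "policy_conditions_met": ctx.policy_conditions_met,
        "inputs_complete": ctx.inputs_complete,
        "signals_consistent": ctx.signals_consistent,
        "actor_authorized": ctx.actor_authorized,
    }
    failed = [name for name, ok in checks.items() if not ok]
    # Assumption: irreversible actions never pass the autonomous gate; they escalate.
    if not ctx.reversible:
        failed.append("irreversible_requires_approval")
    return (not failed, failed)
```

In this sketch, a 0.99-confidence action with conflicting signals is still refused, and a 0.40-confidence action with complete evidence passes. Evidence is the gate.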

Example: access provisioning

Even if AI is confident, it cannot grant privileged access unless:

- requester identity is verified

- request matches approved role templates

- MFA requirements are met

- separation-of-duties is satisfied

The model can recommend. The system decides whether it can act.
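The access provisioning conditions map onto a check that returns every unmet condition. In this sketch, the role templates, entitlement strings, and `AccessRequest` fields are hypothetical. Separation of duties is reduced to "the approver is not the requester," which simplifies real SoD rules.

```python
from dataclasses import dataclass

# Hypothetical approved role templates: role name -> permitted entitlements.
ROLE_TEMPLATES = {
    "analyst": {"reports:read"},
    "db_admin": {"db:read", "db:write", "db:admin"},
}


@dataclass
class AccessRequest:
    requester: str
    approver: str
    role: str
    entitlements: set[str]
    identity_verified: bool
    mfa_satisfied: bool


def can_grant(req: AccessRequest) -> list[str]:
    """Return unmet conditions; an empty list means the grant may proceed."""
    problems = []
    if not req.identity_verified:
        problems.append("identity not verified")
    template = ROLE_TEMPLATES.get(req.role)
    # The request must be a subset of an approved template, never a superset.
    if template is None or not req.entitlements <= template:
        problems.append("request does not match an approved role template")
    if not req.mfa_satisfied:
        problems.append("MFA requirement not met")
    # Simplified separation of duties: nobody approves their own request.
    if req.approver == req.requester:
        problems.append("separation of duties violated")
    return problems
```

Note that model confidence is not an input at all. The model's recommendation only creates the request, and the check decides whether the grant happens.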

3) Permissioning: least privilege is the enforcement foundation

Autonomous AI cannot operate as a shared admin user.

Each autonomous actor needs:

- a unique machine identity

- least-privilege permissions aligned to its role

- an accountable owner

- immediate revocation capability

This is exactly why an Enterprise AI Agent Registry is not “nice architecture.” It is enforcement reality:

https://www.raktimsingh.com/enterprise-ai-agent-registry/

Example: screen-using agents

If an agent can click through a UI, it can approve, export, delete, and modify. Without least privilege and fast revocation, you’ve created a breach pathway disguised as productivity.
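A minimal agent registry that enforces the four properties above (unique identity, least privilege, accountable owner, immediate revocation) might look like the following. `AgentRegistry`, the agent ID, and the permission strings are hypothetical. A production registry would sit behind the organization's identity provider rather than an in-memory dict.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    agent_id: str                # unique machine identity, never shared
    owner: str                   # accountable human owner
    permissions: frozenset[str]  # least-privilege action set
    revoked: bool = False


@dataclass
class AgentRegistry:
    agents: dict[str, AgentRecord] = field(default_factory=dict)

    def register(self, agent_id: str, owner: str, permissions: set[str]) -> None:
        if agent_id in self.agents:
            raise ValueError(f"identity {agent_id!r} already exists; identities must be unique")
        self.agents[agent_id] = AgentRecord(agent_id, owner, frozenset(permissions))

    def revoke(self, agent_id: str) -> None:
        # Takes effect on the very next authorize() call; no redeploy needed.
        self.agents[agent_id].revoked = True

    def authorize(self, agent_id: str, action: str) -> bool:
        """Deny unknown agents, revoked agents, and anything outside the permission set."""
        rec = self.agents.get(agent_id)
        return rec is not None and not rec.revoked and action in rec.permissions
```

In this sketch, a screen-using agent registered only for ticket work is refused `customer:export`, even though the UI would let it click that button.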

4) Policy-as-runtime: rules must block actions, not decorate documents

Many enterprises have policies that look strong on paper but have no runtime teeth.

Enforcement doctrine requires policy checks:

- Pre-action: block prohibited actions before they occur

- In-action: enforce constraints during execution

- Post-action: verify outcomes and trigger rollback if required
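The three checkpoints can be sketched as a wrapper that:

- runs a pre-action check,
- checks each step before executing it,
- verifies the outcome,
- rolls back completed steps on failure.

This is an illustrative Python sketch. `run_with_policy` and `PolicyViolation` are hypothetical names, and the policy checks are passed in as plain callables rather than tied to any particular policy engine.

```python
from typing import Callable, Iterable


class PolicyViolation(Exception):
    """Raised when a runtime policy check blocks or reverses an action."""


def run_with_policy(
    steps: Iterable[str],
    execute: Callable[[str], None],
    pre: Callable[[], bool],
    during: Callable[[str], bool],
    post: Callable[[list[str]], bool],
    rollback: Callable[[list[str]], None],
) -> list[str]:
    # Pre-action: block prohibited actions before anything happens.
    if not pre():
        raise PolicyViolation("pre-action check blocked execution")
    done: list[str] = []
    for step in steps:
        # In-action: every step is checked before it runs.
        if not during(step):
            rollback(done)
            raise PolicyViolation(f"in-action constraint blocked step {step!r}")
        execute(step)
        done.append(step)
    # Post-action: verify the outcome and undo completed steps if it fails.
    if not post(done):
        rollback(done)
        raise PolicyViolation("post-action verification failed")
    return done
```

The design point is that a failed check is never just logged. It stops execution and, where steps already ran, triggers the rollback path.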

Example: outbound customer messages

Policy might require:

- approved templates only

- prohibited phrases blocked

- compliance checks for certain products or claims

- mandatory “human approval” for specific scenarios

If the policy can’t block the message at runtime, it isn’t policy.

That is what the Enterprise AI Control Plane is meant to do in practice:

https://www.raktimsingh.com/enterprise-ai-control-plane-2026/
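The outbound message policy described above can be sketched as a pre-send decision function that returns `block`, `needs_human`, or `send`. The template IDs, prohibited phrases, product names, and scenario names are hypothetical placeholders; real values would come from compliance owners. Simple substring matching stands in for whatever content checks an enterprise actually uses.

```python
from dataclasses import dataclass

# Hypothetical policy-owned configuration.
APPROVED_TEMPLATES = {"refund_confirmation", "shipping_delay"}
PROHIBITED_PHRASES = ("guaranteed returns", "risk-free")
PRODUCTS_NEEDING_COMPLIANCE = {"investment_account"}
SCENARIOS_NEEDING_HUMAN = {"legal_dispute", "bereavement"}


@dataclass
class OutboundMessage:
    template_id: str
    body: str
    product: str
    scenario: str
    compliance_checked: bool = False


def message_decision(msg: OutboundMessage) -> str:
    """Runs before the message leaves; returns 'block', 'needs_human', or 'send'."""
    if msg.template_id not in APPROVED_TEMPLATES:
        return "block"
    lowered = msg.body.lower()
    if any(phrase in lowered for phrase in PROHIBITED_PHRASES):
        return "block"
    if msg.product in PRODUCTS_NEEDING_COMPLIANCE and not msg.compliance_checked:
        return "block"
    if msg.scenario in SCENARIOS_NEEDING_HUMAN:
        return "needs_human"
    return "send"
```

Because the function sits in the send path rather than in a review report, a `block` result means the message never goes out.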

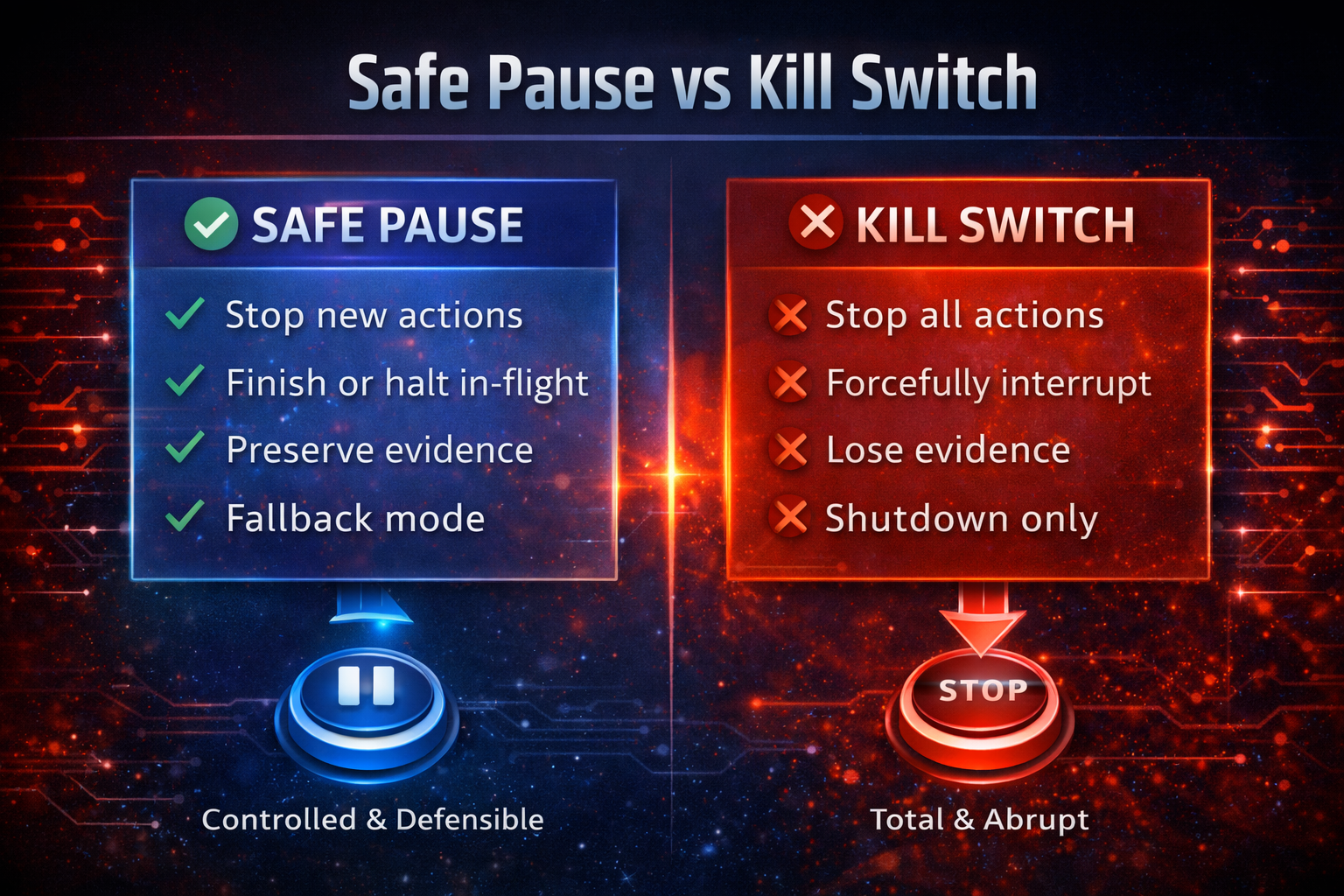

5) Circuit breakers and kill-switches: “safe pause,” not chaos

A kill-switch is not “turn off AI.”

A mature enforcement doctrine defines safe pause:

- stop new actions immediately

- let in-flight actions complete safely (or stop at checkpoints)

- preserve evidence

- route to fallback mode (manual or deterministic rules)

- alert owners and incident responders

Example: claims processing

A safe pause might:

- stop payouts instantly

- continue intake

- hold cases in a review queue

- preserve every decision trace and evidence packet

That prevents both financial leakage and operational breakdown.
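The claims example of safe pause can be sketched as a controller with three behaviors:

- intake keeps running,
- payouts are diverted to a review queue,
- every event is appended to an evidence log.

`ClaimsController` and its fields are hypothetical. This sketch does not model in-flight actions or checkpoints.

```python
from dataclasses import dataclass, field
from enum import Enum


class Mode(Enum):
    AUTONOMOUS = "autonomous"
    SAFE_PAUSE = "safe_pause"


@dataclass
class ClaimsController:
    mode: Mode = Mode.AUTONOMOUS
    review_queue: list[str] = field(default_factory=list)
    evidence_log: list[dict] = field(default_factory=list)
    alerts: list[str] = field(default_factory=list)

    def safe_pause(self, reason: str) -> None:
        # Stop new autonomous payouts and alert owners; nothing is deleted.
        self.mode = Mode.SAFE_PAUSE
        self.alerts.append(f"safe pause engaged: {reason}")

    def intake(self, claim_id: str) -> str:
        # Intake runs in both modes so the business keeps operating.
        self.evidence_log.append({"claim": claim_id, "event": "intake", "mode": self.mode.value})
        return "accepted"

    def pay(self, claim_id: str, amount: float) -> str:
        # Payout is the high-impact action: held for human review while paused.
        if self.mode is Mode.SAFE_PAUSE:
            self.review_queue.append(claim_id)
            outcome = "held_for_review"
        else:
            outcome = "paid"
        self.evidence_log.append(
            {"claim": claim_id, "event": "payout", "amount": amount, "outcome": outcome}
        )
        return outcome
```

The pause changes the mode, not the system: intake and evidence capture continue, and only the payout path switches to the manual fallback.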

6) Reversibility engineering: roll back decisions, not just deployments

Most teams can roll back code. Very few can roll back decisions.

Enforcement doctrine requires every action type to be explicitly classified as:

- Reversible: rollback exists and is tested

- Hard-to-reverse or irreversible: requires stronger oversight and constraints

Reversible actions

- Price change → revert to last-known-good price plan

- Access grant → revoke, rotate credentials, trigger review

- Scheduled job → cancel and revert state

Hard-to-reverse actions

- sending customer communications

- executing financial transfers

- deleting data

If it’s hard to reverse, the system must treat it as “slow and supervised.”

This is one reason a Minimum Viable Enterprise AI System must include reversibility, not just monitoring:

https://www.raktimsingh.com/minimum-viable-enterprise-ai-system/
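One way to make the reversibility classification explicit is an action catalog. Every action type declares its reversibility and its tested rollback path, and unknown actions default to the strict side. The catalog entries mirror the reversible and hard-to-reverse examples in this section. `ActionType`, `CATALOG`, and `requires_human_approval` are hypothetical names for this sketch.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Reversibility(Enum):
    REVERSIBLE = "reversible"            # rollback exists and is tested
    HARD_TO_REVERSE = "hard_to_reverse"  # slow and supervised


@dataclass(frozen=True)
class ActionType:
    name: str
    reversibility: Reversibility
    rollback: Optional[str] = None  # description of the tested rollback path


# Hypothetical catalog mirroring the examples in this section.
CATALOG = {
    a.name: a
    for a in [
        ActionType("price_change", Reversibility.REVERSIBLE, "revert to last-known-good price plan"),
        ActionType("access_grant", Reversibility.REVERSIBLE, "revoke, rotate credentials, trigger review"),
        ActionType("scheduled_job", Reversibility.REVERSIBLE, "cancel and revert state"),
        ActionType("customer_message", Reversibility.HARD_TO_REVERSE),
        ActionType("financial_transfer", Reversibility.HARD_TO_REVERSE),
        ActionType("data_deletion", Reversibility.HARD_TO_REVERSE),
    ]
}


def requires_human_approval(action_name: str) -> bool:
    """Unclassified actions are treated as irreversible (Law 6)."""
    action = CATALOG.get(action_name)
    if action is None:
        return True
    if action.reversibility is Reversibility.REVERSIBLE and action.rollback is not None:
        return False
    return True
```

Defaulting unknown actions to "requires approval" means a new capability cannot slip into autonomous execution just because nobody classified it.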

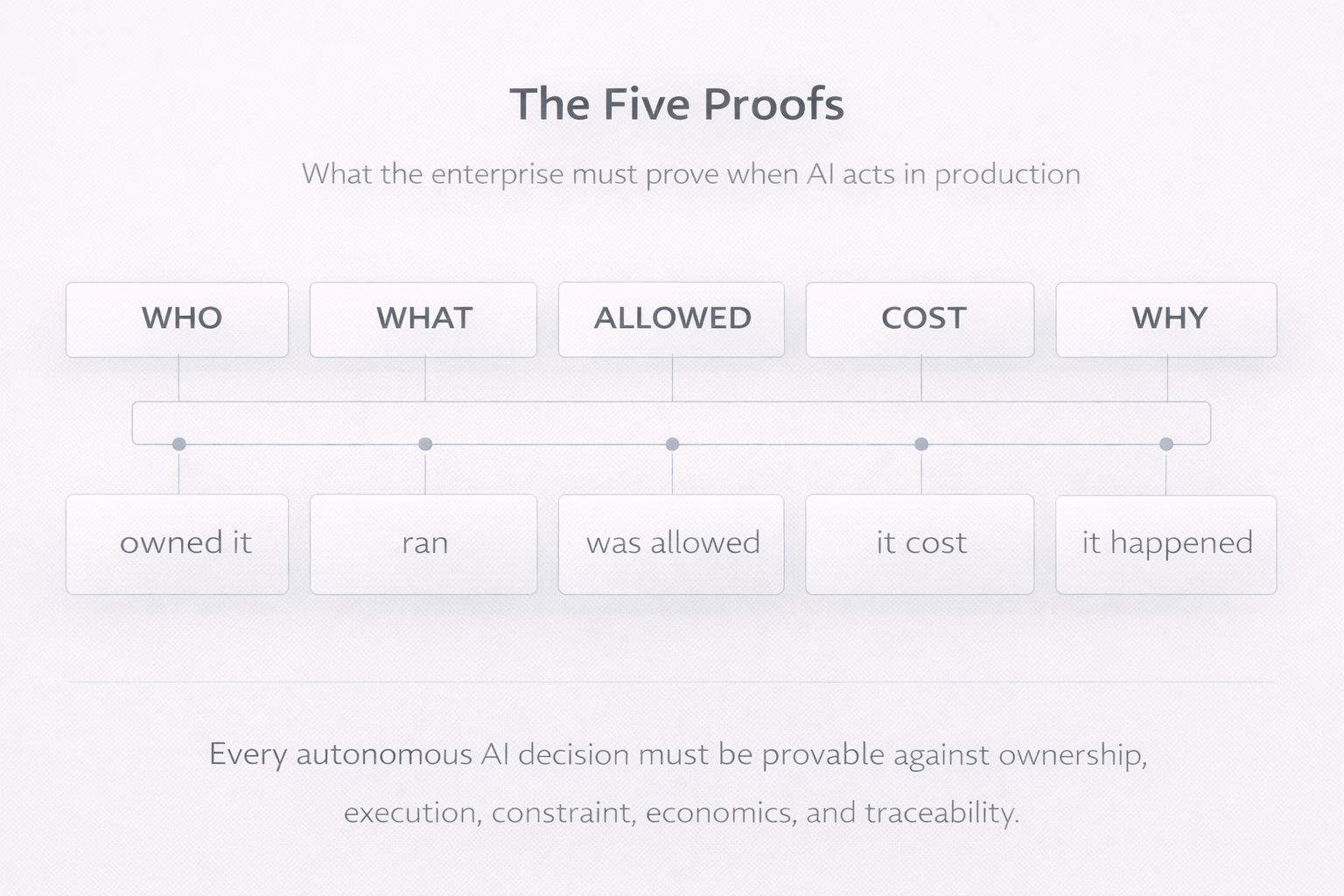

7) Decision evidence: create “defensibility packets” for material actions

When things go wrong, nobody asks for model accuracy charts.

They ask:

“Why did the system do this—here—and who allowed it?”

Enforcement doctrine requires an evidence packet for every material decision:

- inputs used (at the time)

- constraints applied

- policies checked and results

- tools invoked and outputs

- human approvals (if any)

- the final action taken and next planned step

This turns AI from “mysterious automation” into “defensible operations.”
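An evidence packet can be sketched as a dataclass holding the six fields listed above, serialized with a SHA-256 digest. The field names are hypothetical. The digest is an added assumption: it only helps detect alteration if it is stored where the record's writer cannot also rewrite it, such as an append-only log.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class EvidencePacket:
    decision_id: str
    inputs: dict                 # inputs as seen at decision time, not re-fetched later
    constraints: list[str]
    policy_results: dict[str, bool]
    tool_calls: list[dict]
    approvals: list[str]         # empty when no human was involved
    action: str
    next_step: str
    recorded_at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def to_record(self) -> dict:
        """Serialize canonically and attach a SHA-256 digest of the content."""
        body = asdict(self)
        canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
        body["sha256"] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
        return body


def verify(record: dict) -> bool:
    """Recompute the digest; a mismatch means the record changed after writing."""
    body = {k: v for k, v in record.items() if k != "sha256"}
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest() == record.get("sha256")
```

Capturing inputs at decision time matters more than the hashing: an investigation months later needs what the system saw, not what the source systems show today.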

For a canonical lens on how “correct” decisions can still breach governance boundaries, see the Enterprise AI Decision Failure Taxonomy:

https://www.raktimsingh.com/enterprise-ai-decision-failure-taxonomy/

Three enterprise scenarios that make enforcement obvious

Scenario 1: Banking — suspicious transaction response

Without enforcement:

AI blocks accounts automatically; false positives spike; customers flood support; no clear rationale exists.

With enforcement doctrine:

- AI may recommend blocking for medium-risk cases

- AI may auto-block only for high-risk cases with evidence checks

- ambiguous cases go to a human queue with authority

- circuit breaker pauses auto-blocking during anomalies

- every block includes an evidence packet

Result: fewer trust failures, defensible controls, faster resolution.
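The banking controls above combine risk-tiered routing with a circuit breaker on the auto-block rate. The sketch below is illustrative only. The tier names, the 100-decision window, and the 5% anomaly threshold are hypothetical, as is allowing low-risk cases through. A real system would define "anomaly" with far richer signals than a single rate.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class AutoBlockBreaker:
    """Trips (stops auto-blocking) when the recent auto-block rate looks anomalous."""
    window: int = 100             # hypothetical: number of recent decisions tracked
    max_block_rate: float = 0.05  # hypothetical anomaly threshold
    tripped: bool = False

    def __post_init__(self) -> None:
        self.recent = deque(maxlen=self.window)  # oldest decisions fall off automatically

    def record(self, auto_blocked: bool) -> None:
        self.recent.append(auto_blocked)
        full = len(self.recent) == self.window
        if full and sum(self.recent) / self.window > self.max_block_rate:
            self.tripped = True  # stays tripped until a human calls reset()

    def reset(self) -> None:
        self.tripped = False
        self.recent.clear()


def route(risk: str, evidence_ok: bool, breaker: AutoBlockBreaker) -> str:
    """Route one suspicious transaction according to the risk tiers."""
    if risk == "high" and evidence_ok and not breaker.tripped:
        decision = "auto_block"
    elif risk in ("high", "medium"):
        decision = "recommend_block"  # a human with authority makes the call
    elif risk == "low":
        decision = "allow"
    else:
        decision = "human_queue"      # ambiguous or unrecognized risk label
    breaker.record(decision == "auto_block")
    return decision
```

When the breaker trips, high-risk cases degrade to recommendations for humans rather than being silently allowed. Pausing autonomy does not mean lowering protection.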

Scenario 2: Retail — dynamic pricing

Without enforcement:

AI updates prices too frequently; margins swing; brand trust erodes; teams blame the model.

With enforcement doctrine:

- caps on magnitude and frequency

- approval gates for sensitive categories

- anomaly triggers circuit breaker

- rollback to last-known-good plan is automatic

Result: autonomy without volatility shocks.
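The pricing controls can be sketched as a guard that does four things:

- enforces a magnitude cap,
- enforces a frequency cap,
- routes sensitive categories to approval,
- restores the last-known-good price for every changed SKU.

The 10% cap, the one-hour interval, and the category names are hypothetical. Treating the price before the first autonomous change as "last-known-good" is also an assumption of this sketch.

```python
from dataclasses import dataclass, field


@dataclass
class PriceGuard:
    max_change_pct: float = 0.10       # hypothetical cap on magnitude per change
    min_seconds_between: float = 3600  # hypothetical cap on frequency per SKU
    sensitive_categories: frozenset = frozenset({"baby_formula", "medicine"})
    last_known_good: dict = field(default_factory=dict)  # sku -> price before AI changes
    last_change_at: dict = field(default_factory=dict)   # sku -> timestamp of last change

    def propose(self, sku: str, category: str, current: float, new: float, now: float) -> str:
        """Decide whether an AI-proposed price change may be applied (assumes current > 0)."""
        if category in self.sensitive_categories:
            return "needs_approval"
        if abs(new - current) / current > self.max_change_pct:
            return "rejected_magnitude"
        last = self.last_change_at.get(sku)
        if last is not None and now - last < self.min_seconds_between:
            return "rejected_frequency"
        # Remember the pre-change price only once, so rollback returns to the baseline.
        self.last_known_good.setdefault(sku, current)
        self.last_change_at[sku] = now
        return "applied"

    def rollback_all(self) -> dict:
        """Circuit-breaker path: return every changed SKU to its last-known-good price."""
        restored = dict(self.last_known_good)
        self.last_known_good.clear()
        return restored
```

Keeping the caps and the rollback in the guard, rather than in the pricing model, means the model can be retrained or replaced without weakening the boundaries.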

Economic controls are not separate from enforcement. Cost is a behavioral boundary, handled through the economic control plane:

https://www.raktimsingh.com/enterprise-ai-economics-cost-governance-economic-control-plane/

Scenario 3: Enterprise IT — access provisioning

Without enforcement:

AI grants broad permissions; a compromised request escalates privileges; breach occurs.

With enforcement doctrine:

- least-privilege role templates only

- separation-of-duties enforced (agent cannot approve its own elevation)

- privileged actions always require human approval

- immediate revocation exists

- all access changes are reversible and recorded

Result: autonomy that respects security reality.

A repeatable enforcement checklist

- Autonomy must be stoppable in seconds.

- Execution is a privilege, not a default.

- If it’s irreversible, it must be slow and supervised.

- Policy that can’t block actions isn’t policy.

- Every agent has identity, permissions, and an owner.

- Evidence precedes confidence.

- Incidents are decision failures, not model failures.

- Autonomy must adjust over time.

Conclusion

Enterprise AI scales only when it becomes stoppable.

Not because executives fear intelligence—

but because they fear irreversible decisions at machine speed.

If you want one question that reveals maturity, ask:

“Can we safely stop this AI right now—without breaking the business?”

If the answer isn’t an immediate yes, you don’t have enforcement.

You have risk disguised as automation.

FAQs

What is Enterprise AI Enforcement Doctrine?

It is the runtime rule system that controls when AI can act, when it must ask humans, how it can be paused, and how decisions are rolled back and defended with evidence.

Is enforcement the same as AI governance?

No. Governance defines intent (roles, policies, risk posture). Enforcement makes intent real at runtime through gates, permissions, circuit breakers, rollback paths, and decision evidence.

Why is a kill-switch not enough?

A kill-switch stops actions. Enforcement doctrine adds safe pause, controlled fallback modes, reversibility engineering, and evidence capture so the business stays safe and operable.

What’s the biggest enforcement mistake enterprises make?

They focus on model performance and dashboards but skip decision-level controls: permissioning, action thresholds, reversibility, and evidence packets.

How do you make human oversight real?

Give humans context, authority, and fast intervention tools—approval queues, override rights, emergency pause, and clear ownership. Oversight without power is theater.

Why is AI enforcement different from AI governance?

AI governance defines intent and policy. AI enforcement ensures those policies actively block, allow, or escalate actions during execution.

What does “stoppable AI” mean in enterprises?

Stoppable AI refers to systems that can pause or halt autonomous actions in seconds without breaking business operations or losing decision evidence.

Why is reversibility critical for autonomous AI?

Because irreversible AI decisions can cause financial, regulatory, or reputational damage at machine speed if not strictly controlled.

Where does AI enforcement operate technically?

AI enforcement operates inside the Enterprise AI Runtime, through control planes, decision gates, permissions, and circuit breakers.

Glossary

Enterprise AI Enforcement Doctrine

The set of runtime rules and control mechanisms that determine when autonomous AI systems may act, when they must escalate to humans, how actions are paused or reversed, and how decisions are defended with evidence.

Stoppable AI

Autonomous AI systems designed with kill-switches, circuit breakers, and safe-pause mechanisms that can halt or constrain actions immediately without breaking business operations.

Reversible Decision

An AI-driven action that can be undone or rolled back without permanent harm (such as an access grant that can be revoked, or a price change that can be reverted).

Irreversible Decision

An AI-driven action that cannot be fully undone (such as sending customer communications or executing financial transfers) and therefore requires stricter enforcement thresholds and human approval.

Safe Pause

A controlled halt of autonomous AI activity that stops new actions, preserves system state and evidence, and shifts operations to a fallback mode rather than abruptly shutting systems down.

AI Kill Switch

A mechanism that immediately disables autonomous actions by an AI system; effective only when combined with safe-pause and rollback capabilities.

Human Oversight

The ability of authorized humans to monitor, intervene, override, or disable autonomous AI decisions with sufficient context and authority.

Decision Evidence (Decision Record)

A structured record capturing why an AI system took a specific action, including inputs, policy checks, constraints, approvals, and outcomes.

Enterprise AI Control Plane

The governance and enforcement layer that applies policy, risk controls, and decision boundaries across AI systems at runtime.

Enterprise AI Runtime

The production environment where AI systems execute decisions, invoke tools, interact with users, and create real-world impact.

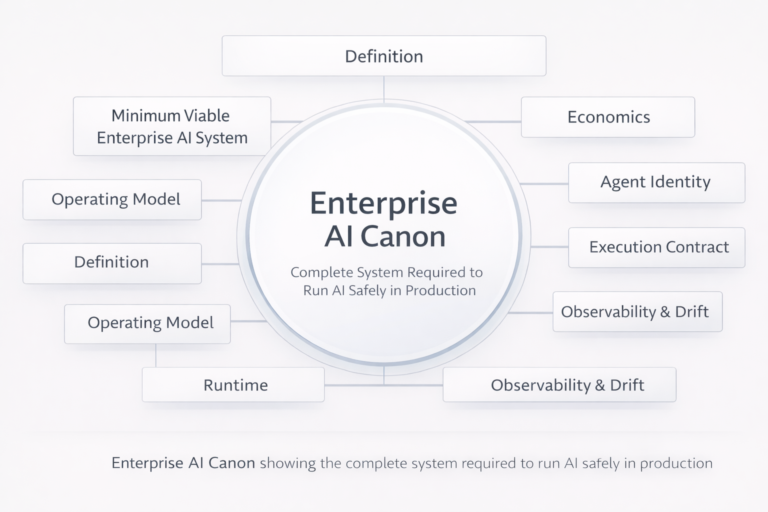

Further Reading in the Enterprise AI Canon

If you want the complete, connected doctrine (and the architecture layers that make enforcement real), continue here:

- The Enterprise AI Canon: https://www.raktimsingh.com/enterprise-ai-canon/

- Enterprise AI Control Plane (2026): https://www.raktimsingh.com/enterprise-ai-control-plane-2026/

- Enterprise AI Runtime: https://www.raktimsingh.com/enterprise-ai-runtime-what-is-running-in-production/

- Enterprise AI Agent Registry: https://www.raktimsingh.com/enterprise-ai-agent-registry/

- Enterprise AI Decision Failure Taxonomy: https://www.raktimsingh.com/enterprise-ai-decision-failure-taxonomy/

- Decision Clarity for Scalable Autonomy: https://www.raktimsingh.com/decision-clarity-scalable-enterprise-ai-autonomy/

- The Enterprise AI Operating Stack: https://www.raktimsingh.com/the-enterprise-ai-operating-stack-how-control-runtime-economics-and-governance-fit-together/

- Enterprise AI Economics & Cost Governance: https://www.raktimsingh.com/enterprise-ai-economics-cost-governance-economic-control-plane/

- The Laws of Enterprise AI: https://www.raktimsingh.com/laws-of-enterprise-ai/

- Minimum Viable Enterprise AI System: https://www.raktimsingh.com/minimum-viable-enterprise-ai-system/

External standards and guidance

1️⃣ NIST AI Risk Management Framework (US)

Runtime risk management and governance expectations

🔗 https://www.nist.gov/itl/ai-risk-management-framework

2️⃣ ISO/IEC 42001 – AI Management Systems

Establishes AI governance as an organizational management system

🔗 https://www.iso.org/standard/81230.html

3️⃣ EU AI Act – Human Oversight & High-Risk AI

Reinforces the need for stoppability, oversight, and controls

🔗 https://artificialintelligenceact.eu/article/14/

4️⃣ UK ICO – Explaining AI Decisions

Supports evidence-based defensibility of AI decisions

🔗 https://ico.org.uk/for-organisations/guide-to-data-protection/key-data-protection-themes/explaining-decisions-made-with-ai/