Why Enterprise AI Must Be Designed Top-Down

Most Enterprise AI initiatives fail not because models underperform, data is insufficient, or talent is missing — but because organizations attempt to scale AI bottom-up, one pilot at a time.

While individual use cases may succeed in isolation, they collapse under real-world complexity when deployed across business units, geographies, and regulatory environments.

This article explains why Enterprise AI must be designed top-down, what that actually means (and does not mean), and how organizations globally can avoid the illusion of progress while building AI systems that scale with trust, control, and economic discipline.

Enterprise AI doesn’t fail because models are weak.

It fails because nobody designed the city before building the roads.

The uncomfortable truth: Enterprise AI isn’t “adopted.” It’s declared.

In the early days, AI spreads like a helpful virus.

One team builds a copilot. Another team plugs a chatbot into support. A third team wires an agent to “draft” emails, and then—quietly—lets it “submit” them. Each local win looks harmless until the enterprise wakes up one morning and realizes something bigger has happened:

AI has become a decision layer.

And once AI becomes a decision layer, the enterprise inherits obligations that don’t belong to any one team:

- Who is accountable when an AI-influenced decision harms a customer?

- How do you prove why a decision happened—months later—to an auditor?

- How do you prevent cost from compounding into an ungovernable estate?

- How do you enforce policy when autonomy spans dozens of tools and systems?

- How do you stop, roll back, or unwind outcomes when the basis changes?

This is precisely why Enterprise AI is an operating model, not a technology stack—and why the “top-down” design decision is foundational (https://www.raktimsingh.com/enterprise-ai-operating-model/).

If your AI program is still being framed as “a set of models and tools,” you are likely missing the real system you are now running: a production decision estate with safety, compliance, and cost consequences (https://www.raktimsingh.com/the-enterprise-ai-operating-stack-how-control-runtime-economics-and-governance-fit-together/).

What “top-down” really means (and what it does not mean)

Top-down does not mean a central team blocks innovation, approves every prompt, or chooses one model for everyone.

Top-down means the enterprise defines the operating conditions under which AI is allowed to scale—so teams can move fast inside a governed boundary.

It means defining, up front:

- What decisions exist (and which are AI-eligible)

- What the enterprise owes when AI influences those decisions (evidence, safeguards, recourse)

- What control planes must exist (policy enforcement, auditability, observability, rollback)

- What economic boundaries apply (cost per decision, usage envelopes, routing rules)

- Who owns which decision classes (not “who owns the model”)

- What “done” means in production (SLOs, evaluation gates, incident response, change discipline)

A crisp way to say it: top-down sets decision governance and production controls first; bottom-up discovers them later—usually after damage.

This is the same reason I created a dedicated control plane narrative: the enterprise must run AI like a governed capability, not a collection of apps (https://www.raktimsingh.com/enterprise-ai-control-plane-2026/).

Why bottom-up Enterprise AI fails—even when every pilot “works”

Bottom-up scaling fails for one simple reason:

Enterprises don’t run models. They run outcomes.

Pilots are local. Outcomes are systemic.

A pilot can look successful while the enterprise silently accumulates:

- inconsistent policy enforcement

- fragmented identity and access patterns

- untracked data exposures

- duplicated retrieval pipelines

- unclear accountability paths

- cost sprawl and shadow usage

- contradictory agents acting on the same customer or record

This is also why “minimum viable” thinking matters: enterprises need a minimum viable enterprise AI system—not a minimum viable demo (https://www.raktimsingh.com/minimum-viable-enterprise-ai-system/).

And it’s why my decision failure taxonomy becomes essential: the enterprise is not failing because one model is wrong; it’s failing because decision pathways become unbounded and non-defensible at scale (https://www.raktimsingh.com/enterprise-ai-decision-failure-taxonomy/).

Bottom-up “wins” often create top-down liabilities.

Five forces that make top-down design non-negotiable in 2026+

1) The action threshold: advice became action

The moment AI crosses from suggesting to doing—creating accounts, changing limits, sending notices, freezing transactions—you’re no longer managing “model quality.”

You’re managing enterprise risk.

This is the same boundary I repeatedly emphasize across the canon: the moment AI touches the action boundary, the governance obligation changes (and my broader operating model is designed for exactly that reality) (https://www.raktimsingh.com/enterprise-ai-operating-model/).
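One way to make the action boundary explicit is to encode it as an autonomy level that every agent call must pass before it can execute anything. The sketch below is illustrative only: the `Autonomy` levels and the `may_execute` function are hypothetical names chosen for this example, not part of any framework referenced in this article.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    """Hypothetical autonomy levels for an AI capability."""
    SUGGEST = 1            # AI drafts; a human decides and acts
    ACT_WITH_APPROVAL = 2  # AI acts only after explicit human sign-off
    ACT = 3                # AI acts on its own


def may_execute(granted: Autonomy, approved_by_human: bool) -> bool:
    """Return True only if the call may cross from advice into action."""
    if granted is Autonomy.ACT:
        return True
    if granted is Autonomy.ACT_WITH_APPROVAL:
        return approved_by_human
    return False  # SUGGEST never executes, whatever the approval flag says
```

Under this sketch, a drafting copilot granted only `SUGGEST` can never submit anything, and moving it to `ACT` becomes a deliberate, reviewable change rather than a quiet one.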

2) Regulation is converging on lifecycle governance

Modern governance language is converging globally: lifecycle risk management, accountability, documentation, and continuous monitoring.

This is why enterprises need not only policies, but decision receipts and consistent controls—especially once AI outcomes affect customers, employees, pricing, eligibility, or safety.

3) Cost does not scale linearly—it compounds

Once AI becomes useful, usage multiplies, both because more people adopt it and because each request gets heavier: agent loops, RAG context inflation, retries, and governance evidence all add to the cost of every decision.

This is why Enterprise AI economics must be designed top-down as an economic control plane, not treated as a late-stage cost cutting exercise (https://www.raktimsingh.com/enterprise-ai-economics-cost-governance-economic-control-plane/).

And it ties directly to the “Intelligence Reuse Index” logic: mature enterprises win not by rebuilding intelligence repeatedly, but by reusing governed intelligence as a service (https://www.raktimsingh.com/intelligence-reuse-index-enterprise-ai-fabric/).
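A small worked example helps show why cost compounds rather than scaling linearly. The model below is a simplifying assumption, not a measured result: it supposes that each step of an agent loop re-sends the base prompt plus all context accumulated so far, and that a fraction of the work is retried. The function name and all numbers are hypothetical.

```python
def tokens_per_decision(base_prompt: int, context_per_step: int,
                        steps: int, retry_rate: float = 0.0) -> float:
    """Estimate tokens consumed by one decision made through an agent loop.

    Assumption: step k re-sends the base prompt plus k chunks of
    accumulated context, and a fraction `retry_rate` of the work is redone.
    """
    if steps < 1:
        raise ValueError("steps must be at least 1")
    per_run = sum(base_prompt + k * context_per_step
                  for k in range(1, steps + 1))
    return per_run * (1.0 + retry_rate)


# Hypothetical figures: 1,000-token prompt, 500 tokens of new context per step.
short_loop = tokens_per_decision(1000, 500, steps=4)
long_loop = tokens_per_decision(1000, 500, steps=8)
print(short_loop, long_loop)
```

With these illustrative inputs, doubling the loop from 4 to 8 steps raises the estimate from 9,000 to 26,000 tokens, nearly a threefold increase, before retries or evidence capture are counted.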

4) “Model change” becomes a business-change event

In enterprise settings, changing a model can change outcomes, not just accuracy.

That is why the runbook crisis matters: model churn breaks production AI unless change is governed like a critical production discipline (https://www.raktimsingh.com/enterprise-ai-runbook-crisis-model-churn-production-ai/).
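One possible way to treat a model change as a business-change event is to replay a fixed set of past cases through both versions and measure how many outcomes flip, rather than looking at accuracy alone. The sketch below assumes both models can be called as plain functions; `outcome_flip_rate`, `passes_change_gate`, and the 2% default threshold are hypothetical choices for illustration.

```python
from typing import Callable, Sequence


def outcome_flip_rate(cases: Sequence[object],
                      current: Callable[[object], str],
                      candidate: Callable[[object], str]) -> float:
    """Fraction of replayed cases whose outcome differs between versions."""
    if not cases:
        raise ValueError("need at least one replay case")
    flips = sum(1 for case in cases if current(case) != candidate(case))
    return flips / len(cases)


def passes_change_gate(cases: Sequence[object],
                       current: Callable[[object], str],
                       candidate: Callable[[object], str],
                       max_flip_rate: float = 0.02) -> bool:
    """Allow the release only if few enough outcomes would change."""
    return outcome_flip_rate(cases, current, candidate) <= max_flip_rate
```

A candidate model can score better on a benchmark and still fail this gate, which is the point: the decision owner, not only the model team, gets to see how many customers would receive a different answer.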

5) Trust is a balance sheet item

Every opaque or inconsistent AI decision creates future resistance—by customers, employees, auditors, and regulators.

Trust debt compounds quietly until a single incident makes it visible.

This is why “decision clarity” isn’t a communication nice-to-have; it’s the shortest path to scalable autonomy without reputational and compliance blowback (https://www.raktimsingh.com/decision-clarity-scalable-enterprise-ai-autonomy/).

A simple mental model: Enterprise AI is a city, not an app

Bottom-up AI builds many houses quickly.

Top-down Enterprise AI builds the city:

- zoning laws (decision classes and policies)

- roads (shared infrastructure, integration standards)

- police and courts (enforcement and incident response)

- tax system (economic control plane)

- archives (decision ledger and evidence retention)

- building codes (release gates, evaluation, QA)

This is exactly how my “operating stack” concept should be read: control + runtime + economics + governance are not optional add-ons—they’re the city infrastructure that prevents chaos (https://www.raktimsingh.com/the-enterprise-ai-operating-stack-how-control-runtime-economics-and-governance-fit-together/).

A city built bottom-up becomes chaotic, unsafe, expensive to maintain, and difficult to govern.

Enterprise AI is no different.

What a top-down Enterprise AI design blueprint looks like

Step 1: Start with decisions—not use cases

List the top 25–50 decisions that actually move money, risk, customer outcomes, or compliance posture.

Examples:

- approve / deny / reprice

- flag / freeze / hold

- escalate / de-escalate

- route cases and allocate humans

- send notices and commitments

Then classify them:

- high-impact vs low-impact

- reversible vs irreversible

- regulated vs unregulated

- customer-facing vs internal-only

This classification is where decision integrity begins, because it prevents teams from treating every decision like “just another automation.” My canon on enterprise-scale decision integrity is built to reinforce this principle (https://www.raktimsingh.com/enterprise-ai-canon/).
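The four classification axes above can be captured in a small record, which makes the inventory machine-readable from day one. This is a minimal sketch under stated assumptions: the `DecisionClass` fields mirror the list above, while the three tier names and the rule in `review_tier` are hypothetical and would need to be set by each enterprise.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionClass:
    name: str
    high_impact: bool
    reversible: bool
    regulated: bool
    customer_facing: bool


def review_tier(d: DecisionClass) -> str:
    """Map a decision class to an oversight tier (illustrative rule only)."""
    if d.high_impact and not d.reversible:
        return "human-decides"   # AI may inform, never act
    if d.high_impact or d.regulated or d.customer_facing:
        return "human-approves"  # AI may propose; a person signs off
    return "ai-eligible"         # low-impact, internal, unregulated
```

For example, an irreversible, high-impact account freeze lands in `human-decides`, while routing an internal ticket lands in `ai-eligible`.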

Step 2: Define decision rights (ownership before autonomy)

Top-down design forces a hard question:

Who owns the decision class—not the model?

Ownership means the accountable owner:

- defines acceptable outcomes

- defines policy constraints

- defines recourse and remediation

- signs off on autonomy level

- owns the incident path when things go wrong

This is the same reason I emphasize registries: you cannot govern autonomy without knowing what agents exist, who owns them, and what they are allowed to do (https://www.raktimsingh.com/enterprise-ai-agent-registry/).
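A registry in this sense can start very small. The sketch below assumes an in-memory store and invents the names `AgentRecord` and `AgentRegistry` purely for illustration; the linked article may define registries differently. The one rule it enforces is that no agent is registered without an accountable owner.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str
    decision_class: str
    accountable_owner: str
    allowed_actions: frozenset[str]


class AgentRegistry:
    """Hypothetical in-memory registry of agents, owners, and permissions."""

    def __init__(self) -> None:
        self._agents: dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> None:
        if not record.accountable_owner:
            raise ValueError("every agent needs an accountable owner")
        if record.agent_id in self._agents:
            raise ValueError(f"agent already registered: {record.agent_id}")
        self._agents[record.agent_id] = record

    def is_allowed(self, agent_id: str, action: str) -> bool:
        """Unknown agents and unlisted actions are denied by default."""
        record = self._agents.get(agent_id)
        return record is not None and action in record.allowed_actions
```

Denying by default is the design choice that matters here: an agent nobody registered has no permissions, so shadow agents cannot act through the governed path.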

Step 3: Design the control planes before you scale

To run Enterprise AI, you need reusable enterprise-grade planes that every AI capability plugs into:

- Policy & enforcement plane (what the AI is allowed to do)

- Decision evidence plane (what happened, why, with what version/policy)

- Runtime & reliability plane (SLOs, fallbacks, rollbacks)

- Economic plane (cost envelopes per decision class, routing rules)

- Change plane (release gates, versioning, approvals, rollback plans)

This is what my control plane framing was built for: governed autonomy requires a control plane, not heroics (https://www.raktimsingh.com/enterprise-ai-control-plane-2026/).
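To illustrate what "plugging into" shared planes might look like, the sketch below routes every action through one wrapper that checks policy, records evidence, and keeps an undo path. It is a deliberately simplified assumption: `ControlPlane`, its method names, and the callback-based rollback are hypothetical, and a production system would persist evidence and handle failures during execution.

```python
from typing import Any, Callable

Params = dict[str, Any]


class ControlPlane:
    """Hypothetical wrapper: policy check, evidence capture, rollback."""

    def __init__(self, policy: Callable[[str, Params], bool]) -> None:
        self._policy = policy
        self.evidence: list[dict[str, Any]] = []
        self._undo_stack: list[Callable[[], None]] = []

    def execute(self, action: str, params: Params,
                do: Callable[[Params], None],
                undo: Callable[[Params], None]) -> None:
        allowed = self._policy(action, params)
        # Record the attempt whether or not policy allows it.
        self.evidence.append(
            {"action": action, "params": dict(params), "allowed": allowed})
        if not allowed:
            raise PermissionError(f"policy blocked action: {action}")
        do(params)
        self._undo_stack.append(lambda: undo(params))

    def rollback_last(self) -> bool:
        """Undo the most recent executed action; False if none remain."""
        if not self._undo_stack:
            return False
        self._undo_stack.pop()()
        return True
```

Because blocked attempts are logged as well as successful ones, the evidence list shows what the AI tried to do, not only what it was permitted to do.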

Step 4: Make “evidence” a product requirement

At enterprise scale, the output is not enough.

You need to answer later:

- what was decided?

- which model/version?

- which policy/version?

- which data sources and tools?

- which approvals/overrides?

- what action was taken downstream?

This is the deeper logic behind my Enterprise AI canon and laws: enterprises that cannot produce evidence eventually lose the right to scale autonomy (https://www.raktimsingh.com/laws-of-enterprise-ai/).
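The questions above map naturally onto a record that is written at decision time. The following is a minimal sketch, assuming a flat receipt with one field per question; `DecisionReceipt` and `receipt_digest` are hypothetical names, and the SHA-256 digest is one simple way to make later tampering detectable, not a complete audit solution.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DecisionReceipt:
    decision: str                  # what was decided
    model_version: str             # which model/version
    policy_version: str            # which policy/version
    data_sources: tuple[str, ...]  # which data sources
    tools: tuple[str, ...]         # which tools
    approvals: tuple[str, ...]     # which approvals/overrides
    downstream_action: str         # what action was taken downstream


def receipt_digest(receipt: DecisionReceipt) -> str:
    """SHA-256 over a canonical JSON form of the receipt."""
    canonical = json.dumps(asdict(receipt), sort_keys=True,
                           separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Storing the digest alongside the receipt means that, months later, an auditor can verify the record has not changed since the decision was made.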

Step 5: Put an economic envelope on every decision class

Top-down design avoids the most common cost trap: treating AI spend like a project cost instead of an operating utility.

Every decision class gets:

- max tokens / max steps / max tool calls

- allowed model tiers

- caching rules and retrieval depth

- bounded fallback behavior

- escalation rules when budget is exceeded

This is how the economics control plane becomes real—cost governance at the decision layer, not spreadsheet governance after the invoice arrives (https://www.raktimsingh.com/enterprise-ai-economics-cost-governance-economic-control-plane/).
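As an illustration, an envelope can be expressed as data and checked on every step of an agent run. The sketch assumes three possible results ("continue", "escalate", "deny"); these labels, along with the `Envelope`, `Usage`, and `next_move` names, are hypothetical. Caching rules and retrieval depth are left out to keep the example short.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Envelope:
    max_tokens: int
    max_steps: int
    max_tool_calls: int
    allowed_model_tiers: frozenset[str]


@dataclass
class Usage:
    tokens: int = 0
    steps: int = 0
    tool_calls: int = 0


def next_move(envelope: Envelope, usage: Usage, model_tier: str) -> str:
    """Decide whether a run may continue under its decision-class envelope."""
    if model_tier not in envelope.allowed_model_tiers:
        return "deny"      # tier is not permitted for this decision class
    over_budget = (usage.tokens > envelope.max_tokens
                   or usage.steps > envelope.max_steps
                   or usage.tool_calls > envelope.max_tool_calls)
    return "escalate" if over_budget else "continue"
```

Checking the envelope inside the loop, rather than in a monthly report, is what moves cost governance to the decision layer.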

Step 6: Create a portfolio view (or sprawl wins)

If you cannot answer:

- what AI is running?

- who owns it?

- what it costs per decision?

- what policies govern it?

- what incidents it has had?

…you do not have an AI program. You have an AI leak.

And the fastest way to leak is to confuse “lots of pilots” with “an operating model.”
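The five questions above can double as a completeness check over whatever inventory already exists. The sketch below assumes each AI system is described by a plain dictionary; the field names, the sample systems, and the `portfolio_gaps` function are all hypothetical.

```python
REQUIRED_FIELDS = ("owner", "cost_per_decision", "policies", "incidents")


def portfolio_gaps(systems: list[dict]) -> dict[str, list[str]]:
    """Return, per system, the portfolio questions that cannot be answered.

    A field counts as unanswered when it is absent or None; an empty
    incident list is a valid answer ("no incidents so far").
    """
    gaps: dict[str, list[str]] = {}
    for system in systems:
        missing = [f for f in REQUIRED_FIELDS if system.get(f) is None]
        if missing:
            gaps[system["name"]] = missing
    return gaps


# Hypothetical inventory entries, for illustration only.
inventory = [
    {"name": "support-copilot", "owner": "head-of-support",
     "cost_per_decision": 0.04, "policies": ["pii-redaction"], "incidents": []},
    {"name": "fraud-agent", "owner": None,
     "cost_per_decision": None, "policies": ["hold-limits"], "incidents": []},
]
gaps = portfolio_gaps(inventory)
```

Any system that appears in the result is, by this article's definition, part of the leak rather than part of the program.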

Three stories that make the case obvious

Story 1: The support copilot that becomes a compliance engine

Bottom-up: each team buys/builds its own assistant. Tone differs. Risk rules differ. Privacy handling differs. Costs are duplicated. Audit becomes impossible.

Top-down: shared policy + evidence + decision classes (“suggest” vs “commit to customer”) + economics envelopes.

This is how you scale a capability, not a tool.

Story 2: Lending decisions where “accuracy” isn’t the risk

Bottom-up: a model is deployed; governance arrives later as a scramble.

Top-down: decision rights, traceability, and lifecycle discipline are defined upfront—so outcomes can be defended and remediated when necessary.

This is also why the runbook crisis is the real bottleneck: production is a change machine, and if you don’t govern churn, the system breaks even when “accuracy” looks fine (https://www.raktimsingh.com/enterprise-ai-runbook-crisis-model-churn-production-ai/).

Story 3: Fraud operations where local automation becomes systemic harm

Bottom-up: one team’s automation triggers holds that cascade into other systems, sometimes into partners, vendors, and long-lived records.

Top-down: blast radius controls, reversibility thresholds, incident playbooks, and explicit action boundaries.
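One simple form of blast radius control is a cap on how many automated actions of a given type may fire within a time window, with anything beyond the cap routed to a human. The sketch below is an assumption about how such a cap could work, using a sliding window; `BlastRadiusLimiter` is a hypothetical name, and timestamps are passed in explicitly so the behavior is easy to test.

```python
from collections import deque


class BlastRadiusLimiter:
    """Hypothetical sliding-window cap on automated actions (e.g. holds)."""

    def __init__(self, max_actions: int, window_seconds: float) -> None:
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self._times: deque[float] = deque()

    def allow(self, now: float) -> bool:
        """Return True if one more automated action fits in the window."""
        # Drop timestamps that have aged out of the window.
        while self._times and now - self._times[0] >= self.window_seconds:
            self._times.popleft()
        if len(self._times) >= self.max_actions:
            return False  # caller should route this case to a human
        self._times.append(now)
        return True
```

A limiter like this does not make any single hold safer, but it bounds how far a faulty automation can cascade before someone is asked to look.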

The leadership shift: from “AI strategy” to “AI operating model”

Top-down Enterprise AI is a leadership stance:

- CIOs stop funding tools and start funding control planes

- CFOs stop asking “what’s the model cost?” and start asking “what’s the cost per decision?”

- Boards stop asking “are we using AI?” and start asking “can we govern AI outcomes with evidence?”

That is the thesis of my pillar: Enterprise AI must be run as a governed operating capability, not a collection of experiments (https://www.raktimsingh.com/enterprise-ai-operating-model/).

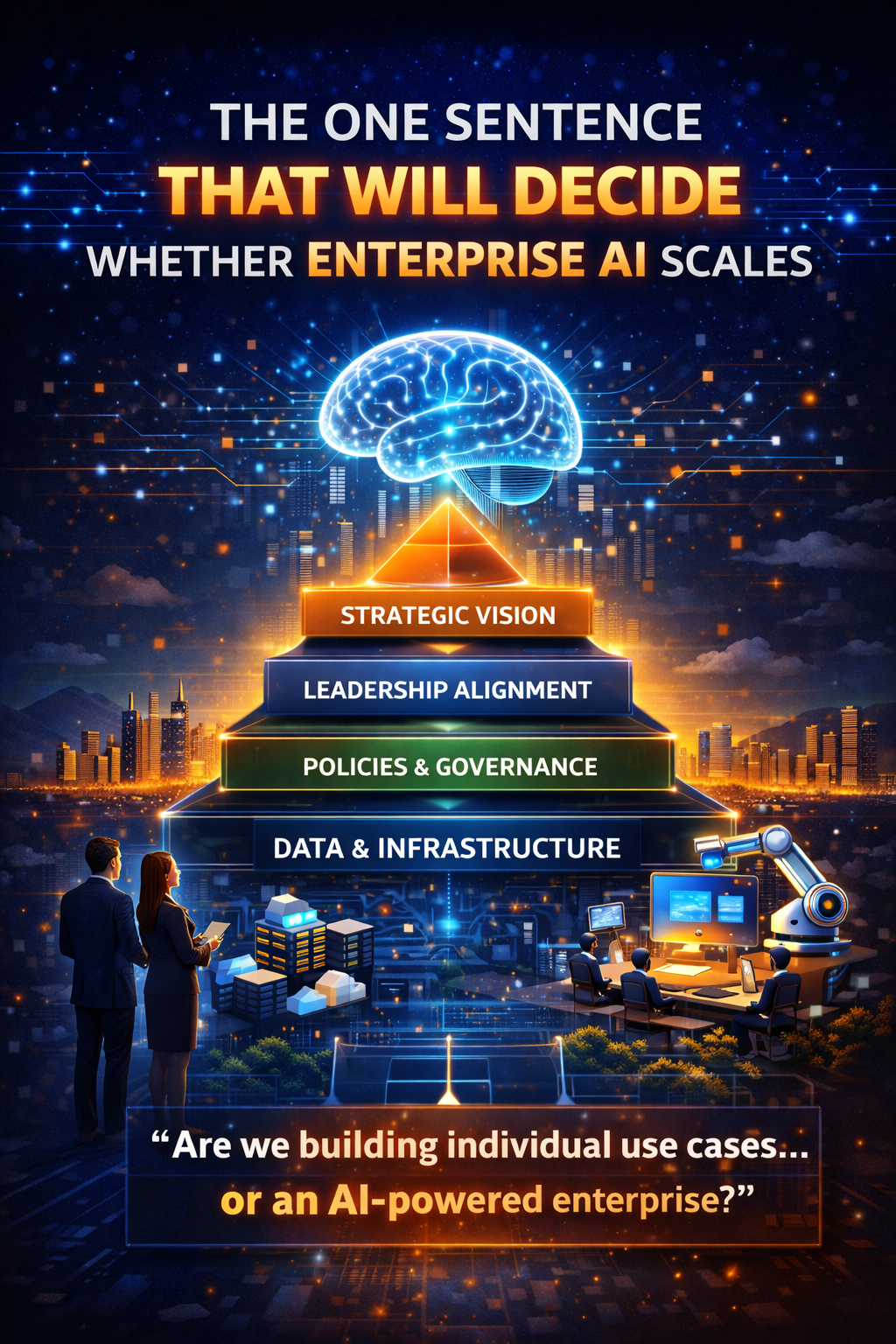

Conclusion: The one sentence that will decide whether Enterprise AI scales

Bottom-up AI produces adoption.

Top-down Enterprise AI produces governed autonomy.

If AI is becoming a decision layer inside your enterprise, top-down design is not bureaucracy.

It is the price of staying credible—to customers, regulators, auditors, and your own business.

In 2026+, the enterprises that win won’t be the ones with the best demos.

They’ll be the ones that can prove—continuously—that their AI decisions are:

- authorized

- bounded

- auditable

- economically controlled

- reversible when required

And that is exactly what my Enterprise AI canon is designed to standardize at global scale (https://www.raktimsingh.com/enterprise-ai-canon/).

FAQ

1) Isn’t top-down too slow for AI innovation?

Top-down sets reusable guardrails (decision classes, policies, evidence, economics). Inside those guardrails, teams ship faster—because they’re not reinventing governance per use case.

2) What’s the first artifact to create?

A decision inventory + decision classification (impact, reversibility, regulatory exposure). That becomes the map of where autonomy is allowed to exist at all.

3) Where do most enterprises start wrong?

They start with copilots and tools. They should start with decision rights, evidence requirements, and cost envelopes—then choose tools.

4) How do I know if we’re scaling bottom-up today?

If you can’t answer “what AI is running, who owns it, what it costs per decision, and what evidence it produces,” you’re scaling bottom-up—whether you admit it or not.

5) What’s the fastest way to reduce risk while scaling fast?

Centralize your “shared beams”: control plane, runtime, economics, and governance as reusable services—then let teams innovate on top (https://www.raktimsingh.com/the-enterprise-ai-operating-stack-how-control-runtime-economics-and-governance-fit-together/).

6) Why do Enterprise AI pilots succeed but fail to scale?

Because pilots operate in controlled environments. Production introduces organizational complexity, regulatory exposure, and cross-system dependencies that bottom-up AI cannot handle.

7) What does top-down Enterprise AI actually mean?

Top-down Enterprise AI defines intent, guardrails, governance, and decision ownership first, then allows teams to innovate safely within those boundaries.

8) Is top-down AI the same as centralized control?

No. Top-down sets constraints and outcomes; execution remains decentralized and agile.

9) Why is this more critical after 2026?

Because AI systems are moving from recommendation to action, increasing regulatory, financial, and reputational risk at enterprise scale.

Glossary

- Enterprise AI: AI operated as a governed production capability where decisions, accountability, evidence, economics, and lifecycle controls matter as much as model quality.

- Decision class: A category of decisions grouped by impact, reversibility, regulatory exposure, and required safeguards.

- Decision rights: Ownership model defining accountability for outcomes (not just technical ownership of a model).

- Control plane: Cross-cutting layer that enforces policy, captures evidence, and enables stoppability/rollback across AI systems (https://www.raktimsingh.com/enterprise-ai-control-plane-2026/).

- Runtime: The operational substrate that runs AI in production—workflows, tools, identity, memory, observability, fallbacks (https://www.raktimsingh.com/enterprise-ai-runtime-what-is-running-in-production/).

- Trust debt: Accumulated resistance created by opaque, inconsistent, or harmful AI outcomes.