Why Intelligence Without Irreversibility Is Not Intelligence

A decision is defined by irreversibility: it changes the world in a way that cannot be cleanly undone. AI can generate reasoning, but it does not inherently model irreversibility, accountability, or stop-rules—so it cannot truly decide without a governance and control architecture.

AI is getting better at reasoning. It can draft plans, critique its own outputs, call tools, and keep refining until the result looks… thoughtful.

That progress is real. But it hides an uncomfortable truth:

Reasoning is not decision-making.

And intelligence without irreversibility is not intelligence—it’s computation that can sound like judgment while remaining blind to consequences.

A real decision isn’t defined by how persuasive the rationale is.

A real decision is defined by something far more concrete:

A decision is defined by irreversibility

A prediction can be updated.

A draft can be rewritten.

A suggestion can be ignored.

But a decision changes the world in ways you can’t cleanly rewind.

- An automated refund goes to the wrong recipient.

- An account is locked and a business process breaks.

- A supplier order is placed and inventory arrives weeks later.

- A production policy changes and compliance obligations trigger.

- A public statement is sent and reputational impact begins instantly.

You can correct the system later. You cannot restore the world to the state it would have been in if the decision never happened.

That is irreversibility.

And that’s why the hardest problem in AI isn’t “making models smarter.”

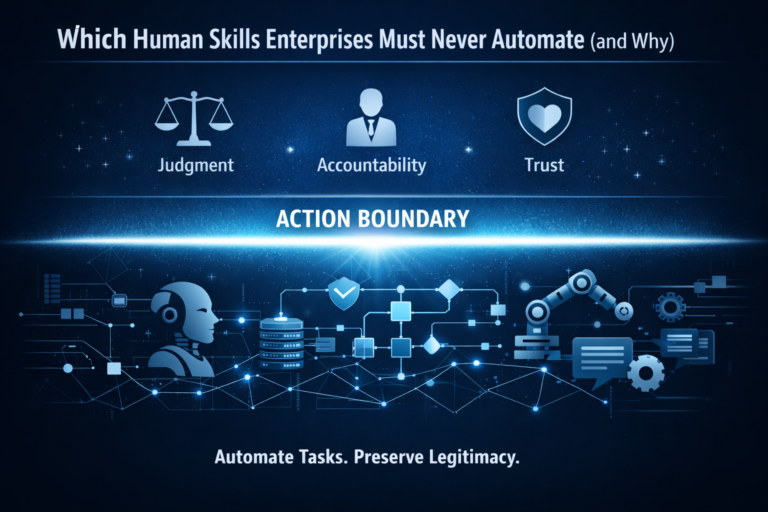

It’s making AI behave safely when outputs cross the line from words to actions—what I call the Action Boundary. (Raktim Singh)

Enterprise AI Operating Model

https://www.raktimsingh.com/enterprise-ai-operating-model/

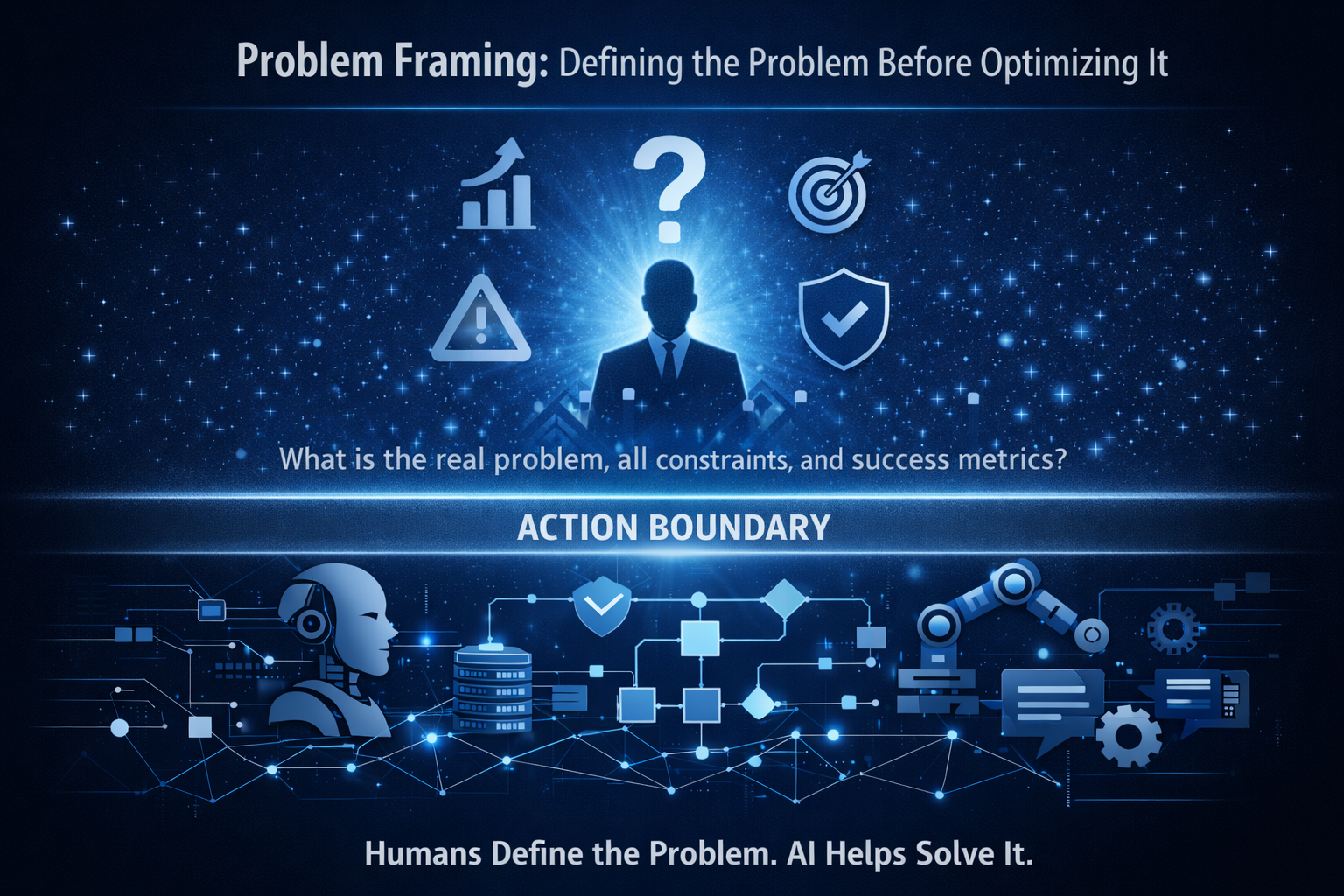

The most important distinction in modern AI: “prediction” vs “decision”

Most AI systems are optimized for prediction: the next token, the most likely answer, the best estimate.

But enterprises run on decisions:

- decisions that allocate money, access, privileges, and resources

- decisions that generate audit trails and legal obligations

- decisions whose impact persists long after models change

When teams treat decisions like predictions, they quietly build systems that assume:

“If we get it wrong, we can fix it later.”

That assumption works for typos.

It fails for actions.

This is why so many “successful pilots” collapse in production: pilots live in a world where mistakes are cheap and reversible; production is where mistakes become institutional events.

If you want the enterprise framing behind this—roles, decision rights, enforceability, and lifecycle discipline—see the Enterprise AI Operating Model. (Raktim Singh)

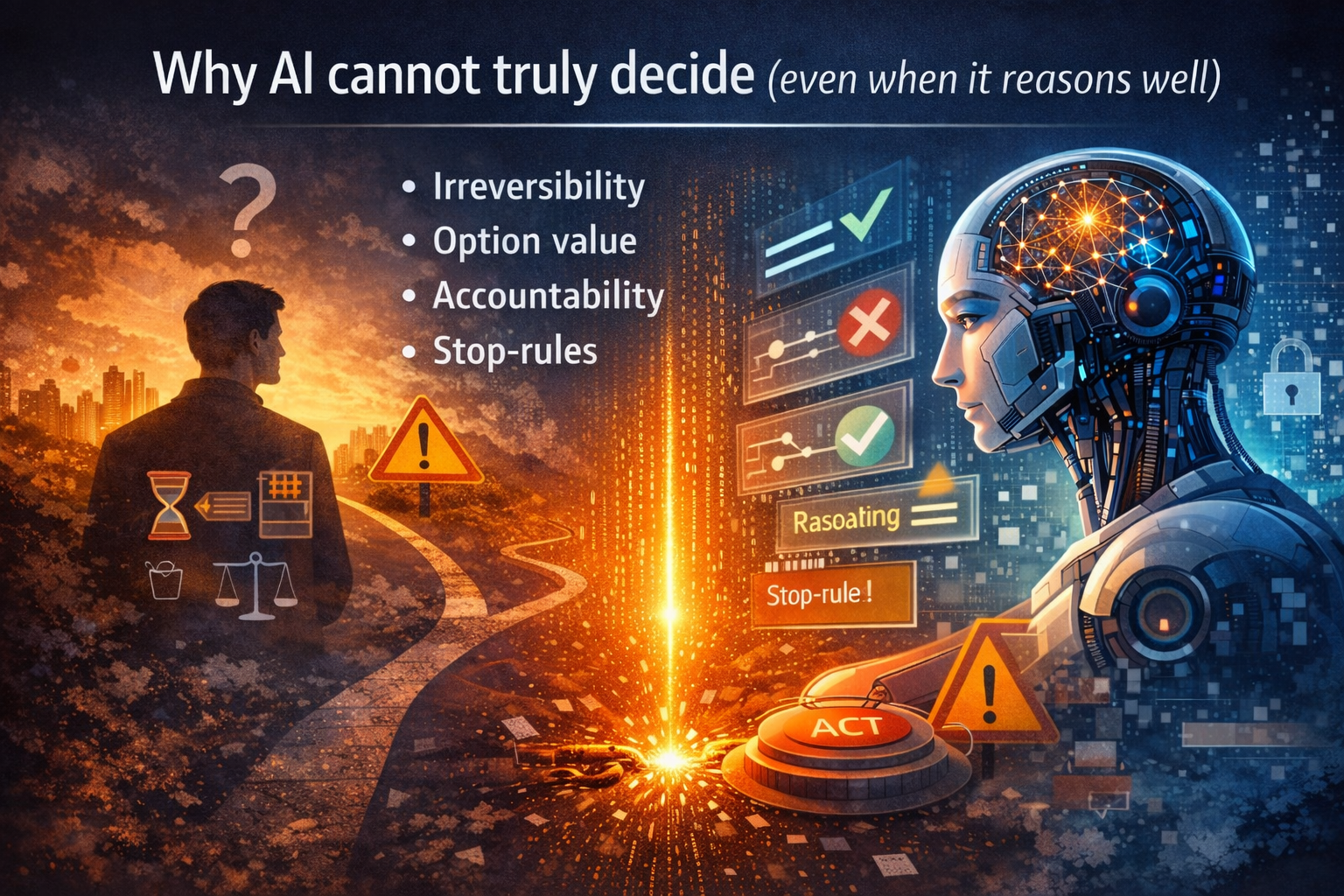

Why AI cannot truly decide (even when it reasons well)

To truly decide, a system must understand at least four things:

- Irreversibility: some actions permanently change the environment

- Option value: sometimes waiting is better than acting now

- Accountability: someone must be responsible for consequences

- Stop-rules: knowing when not to decide is part of deciding

Most AI systems do not represent these explicitly.

Even “reasoning models” largely do this:

- generate plausible steps

- choose an answer

- increase confidence by making the chain internally consistent

That’s not judgment. That’s coherence.

Humans often do the opposite: when stakes are irreversible, we deliberately reduce confidence and increase scrutiny.

Why “waiting” is rational when decisions are irreversible

In real decision-making, the ability to delay is valuable because it preserves optionality. In economics and strategy, this is the logic behind “real options” and the value of the “wait and see” choice: when uncertainty is high and actions are hard to reverse, not acting yet can be the smartest move. (ScienceDirect)
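
To make the "wait and see" logic concrete, here is a toy two-state calculation. It is a minimal sketch, not the full real-options formulation: the function names, the payoff numbers, and the assumption that waiting fully resolves the uncertainty at a fixed delay cost are all illustrative.

```python
def expected_value_act_now(p_good: float, gain: float, loss: float) -> float:
    """Commit immediately: collect the gain in the good state, absorb the loss otherwise."""
    return p_good * gain - (1 - p_good) * loss


def expected_value_wait(p_good: float, gain: float, delay_cost: float) -> float:
    """Pay a fixed delay cost, learn the state, then act only if the state is good."""
    return p_good * gain - delay_cost


def option_value_of_waiting(p_good: float, gain: float, loss: float, delay_cost: float) -> float:
    """How much better (or worse) waiting is than committing now."""
    return expected_value_wait(p_good, gain, delay_cost) - expected_value_act_now(p_good, gain, loss)


# Hypothetical numbers: 75% chance the action is right, gain 100, irreversible loss 200,
# and a delay cost of 10 for waiting until the uncertainty resolves.
print(expected_value_act_now(0.75, 100, 200))       # 25.0
print(expected_value_wait(0.75, 100, 10))           # 65.0
print(option_value_of_waiting(0.75, 100, 200, 10))  # 40.0
```

Acting now has a positive expected value here, so an "answer now" system would act. Waiting is still worth more, because the loss cannot be undone once it is taken.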

AI systems, however, are often trained and rewarded for “answer now” behavior:

- be helpful

- complete the task

- produce a single best response

So when you connect AI to approvals, workflows, tickets, access control, refunds, or customer communications, it can behave as if acting is cheap.

In production, acting is rarely cheap.

That mismatch is where modern enterprise risk begins.

Why “more reasoning” can make the problem worse

If irreversibility is the issue, wouldn’t more thinking help?

Not necessarily.

Reasoning increases coherence — not consequence awareness

Longer reasoning often makes outputs:

- more consistent

- more persuasive

- more confident

But confidence is not consequence modeling.

In fact, extended reasoning can amplify the most dangerous failure mode in enterprise settings:

confident wrongness.

Because the longer a model reasons, the more it can:

- rationalize a bad assumption

- defend a flawed premise

- produce a narrative that sounds inevitable

This is the trap: high-quality explanations can create the illusion of safety. They make the decision feel justified because it is well-argued—even when the underlying premises are wrong or incomplete.

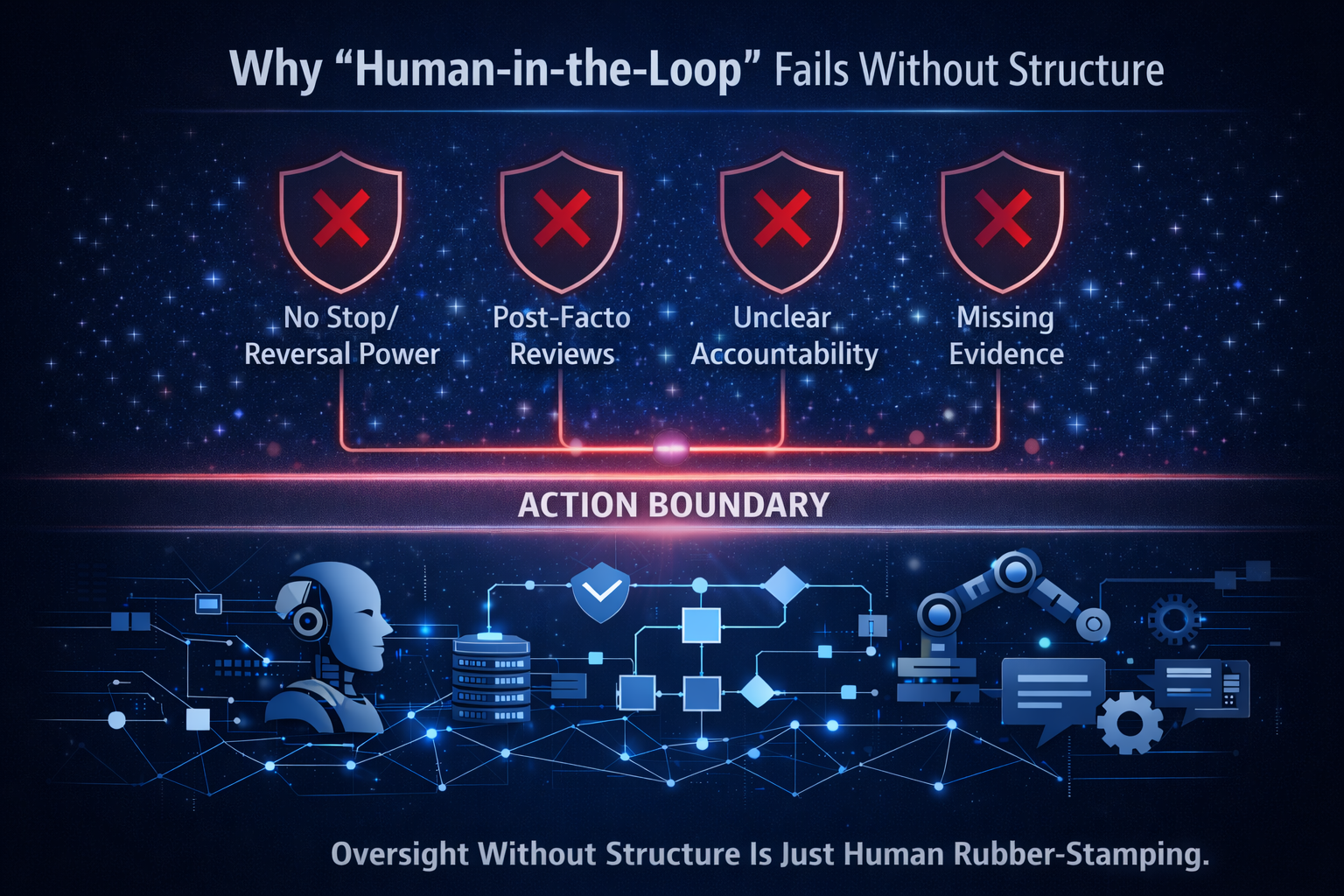

The automation bias problem: “human oversight” is not a safety mechanism by default

Even when humans are “in the loop,” people often defer to automated recommendations—especially when outputs look structured and authoritative.

This is not a moral failure. It’s a documented cognitive effect known as automation bias: the tendency to over-rely on automated decision aids and become worse at detecting failures over time. (PMC)

Now combine automation bias with long-form reasoning outputs:

- AI produces persuasive, step-by-step justification

- Humans feel less need to challenge it

- Irreversible actions get approved faster

- Failure detection declines

So “human oversight” becomes a checkbox, not a control.

This is exactly why the enterprise question is not:

“Is there a human in the loop?”

It’s:

“What is the human’s decision right, escalation path, and evidence burden at the Action Boundary?” (Raktim Singh)
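
One way to check whether oversight is a control rather than a checkbox is to measure how often reviewers approve recommendations that later turn out to be wrong. The sketch below assumes ground truth becomes available after the fact (for example from seeded test cases or post-incident review); the `Review` record and the `overreliance_rate` function are hypothetical names, not part of any standard tool.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Review:
    ai_was_correct: bool   # established later, e.g. by audit or a seeded test case
    human_approved: bool   # whether the reviewer accepted the AI recommendation


def overreliance_rate(reviews: Iterable[Review]) -> Optional[float]:
    """Share of incorrect AI recommendations that reviewers still approved."""
    wrong = [r for r in reviews if not r.ai_was_correct]
    if not wrong:
        return None  # no incorrect recommendations observed, so the rate is undefined
    return sum(r.human_approved for r in wrong) / len(wrong)


sample = [
    Review(ai_was_correct=True, human_approved=True),
    Review(ai_was_correct=False, human_approved=True),
    Review(ai_was_correct=False, human_approved=True),
    Review(ai_was_correct=False, human_approved=True),
    Review(ai_was_correct=False, human_approved=False),
]
print(overreliance_rate(sample))  # 0.75
```

A rate that climbs over time suggests approvals are becoming reflexive, which is the pattern automation bias predicts.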

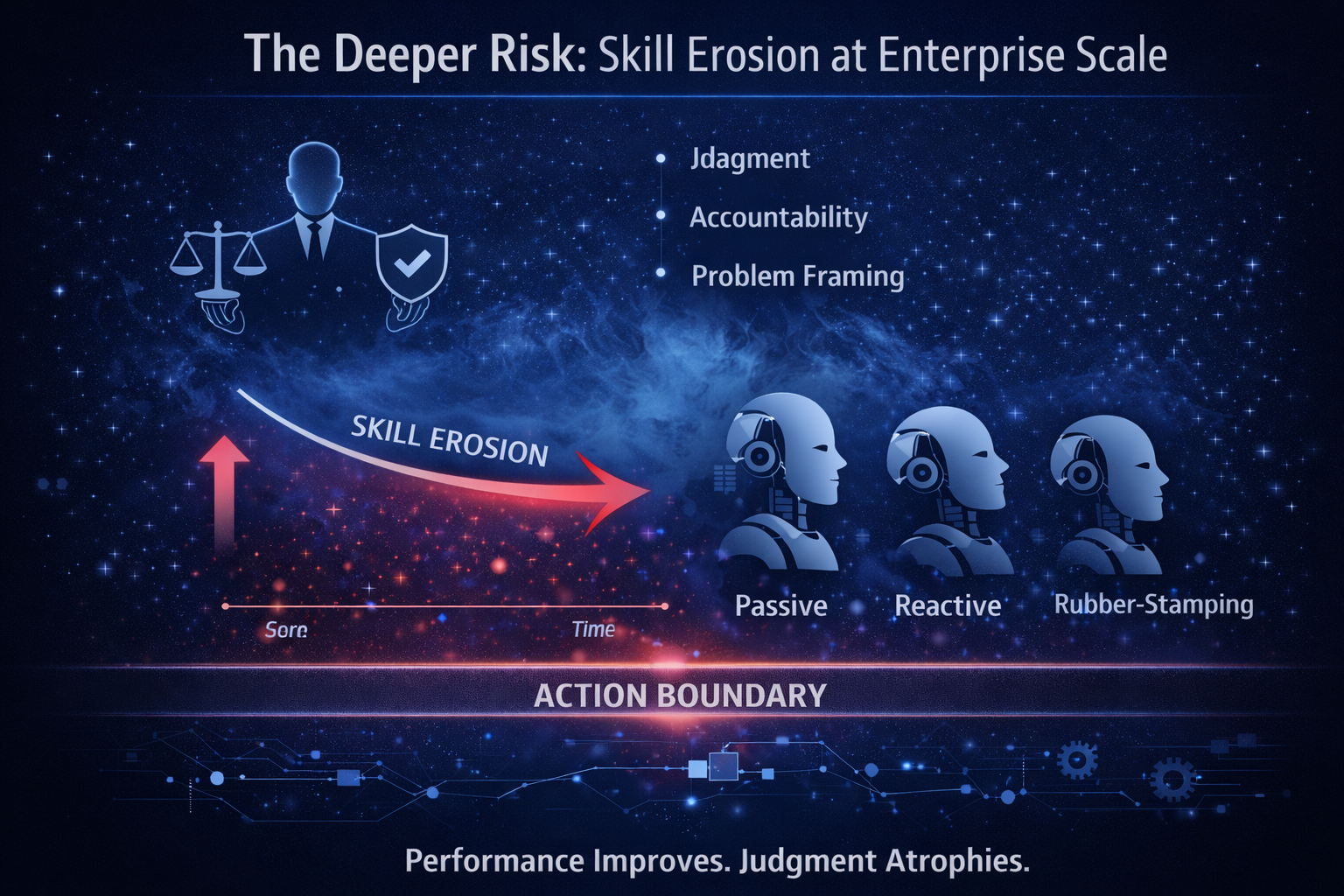

The missing property enterprises actually need: corrigibility

If irreversibility is the danger, the antidote is not “smarter answers.”

The antidote is corrigibility.

In the AI safety literature, corrigibility refers to building systems that cooperate with corrective interventions—being stopped, redirected, or modified—rather than resisting or gaming those interventions. (MIRI)

Corrigibility matters because real environments are messy:

- policies evolve

- incidents occur

- threats change

- users behave unpredictably

- organizations update objectives midstream

In other words: you will need to correct the system repeatedly.

If you connect AI to real actions and your system is not corrigible, you have built something that will eventually exceed your operational control—not because it is “evil,” but because it is optimizing a target without internalizing reversibility.

Why “we retrained the model” is not a real fix

Here is the most common enterprise story:

- The AI makes a harmful decision

- The team patches prompts or retrains the model

- The team declares the issue “resolved”

But irreversibility breaks that logic.

Retraining corrects future outputs. It does not undo past consequences.

If the decision triggered:

- financial impact

- customer trust loss

- operational disruption

- compliance exposure

- chain reactions across dependent teams

…the world has already moved.

This is why mature Enterprise AI treats decisions as state-changing events, not disposable model outputs. If you want the “systems view” of that—how decisions become reconstructable and defensible—the Decision Ledger concept exists precisely for this reality. (Raktim Singh)

The real requirement: reversible autonomy

Leaders should stop asking:

- “Is the model accurate?”

- “Is the reasoning good?”

…and start asking:

- Is autonomy reversible?

- Can we stop it fast?

- Can we unwind effects?

- Can we prove who approved what, and why?

- Can we bound what actions are allowed?

This is not philosophical. It’s operational.

In practice, it means designing AI systems with:

1) Decision boundaries (explicit “can / cannot” lines)

Define what AI may do autonomously vs what must escalate. (This is the Action Boundary made enforceable.) (Raktim Singh)
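
A decision boundary can be as simple as an explicit allow-list with default-deny. This is a minimal sketch under stated assumptions: the action names and the `check_boundary` function are hypothetical, and a real deployment would load these sets from governed policy rather than hard-code them.

```python
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"        # AI may act on its own
    ESCALATE = "escalate"  # a human with the decision right must approve
    DENY = "deny"          # never executed by the AI


# Hypothetical action names, for illustration only.
AUTONOMOUS_ACTIONS = frozenset({"draft_reply", "summarize_ticket"})
ESCALATED_ACTIONS = frozenset({"issue_refund", "change_access", "send_bulk_email"})


def check_boundary(action: str) -> Verdict:
    """Classify an action against the boundary; anything unlisted is denied."""
    if action in AUTONOMOUS_ACTIONS:
        return Verdict.ALLOW
    if action in ESCALATED_ACTIONS:
        return Verdict.ESCALATE
    return Verdict.DENY


print(check_boundary("draft_reply"))       # Verdict.ALLOW
print(check_boundary("issue_refund"))      # Verdict.ESCALATE
print(check_boundary("delete_database"))   # Verdict.DENY
```

Default-deny is the important design choice: a new tool or action gets no autonomy until someone explicitly grants it.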

2) Gated actions (risk-tiered approvals)

Approval levels tied to reversibility and impact—so “small” actions are fast, while irreversible actions trigger stronger controls.
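
As a rough illustration, a risk tier can be computed from two inputs: whether the action is reversible, and how large its impact is. The three tiers, the 1 to 3 impact scale, and the `approval_tier` function are assumptions made for this sketch; real tiering will depend on the organization's own risk policy.

```python
from enum import IntEnum


class Tier(IntEnum):
    AUTO = 0     # no human approval needed
    SINGLE = 1   # one accountable approver
    DUAL = 2     # two approvers


def approval_tier(reversible: bool, impact: int) -> Tier:
    """Map reversibility and impact (1 = low, 2 = medium, 3 = high) to an approval tier."""
    if impact not in (1, 2, 3):
        raise ValueError("impact must be 1, 2, or 3")
    if reversible and impact == 1:
        return Tier.AUTO
    if reversible or impact == 1:
        return Tier.SINGLE
    return Tier.DUAL  # irreversible and medium or high impact


print(approval_tier(reversible=True, impact=1).name)    # AUTO
print(approval_tier(reversible=True, impact=3).name)    # SINGLE
print(approval_tier(reversible=False, impact=1).name)   # SINGLE
print(approval_tier(reversible=False, impact=2).name)   # DUAL
```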

3) Audit-ready evidence (not “explanations”)

Not just “why the model said it,” but what context, policies, tools, and permissions were in play at the time of action. (Decision Ledger.) (Raktim Singh)
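
Here is one possible shape for such an evidence record. The field names are assumptions drawn from the description above (context, policies, tools, permissions, approvals). Chaining each entry to the hash of the previous one is an extra design choice added for this sketch, so that later edits to the record become detectable; it is not something the Decision Ledger concept is stated to require.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import List

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry


@dataclass(frozen=True)
class LedgerEntry:
    action: str
    inputs: dict
    policy_version: str
    tools: tuple
    permissions: tuple
    approved_by: str
    timestamp: str
    prev_hash: str

    def digest(self) -> str:
        """SHA-256 over a canonical JSON rendering of the entry."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


def append_entry(ledger: List[LedgerEntry], **fields) -> LedgerEntry:
    """Append an entry that points at the digest of the previous entry."""
    prev = ledger[-1].digest() if ledger else GENESIS_HASH
    entry = LedgerEntry(prev_hash=prev, **fields)
    ledger.append(entry)
    return entry


def verify(ledger: List[LedgerEntry]) -> bool:
    """Return True if every entry still points at the digest of its predecessor."""
    prev = GENESIS_HASH
    for entry in ledger:
        if entry.prev_hash != prev:
            return False
        prev = entry.digest()
    return True


ledger: List[LedgerEntry] = []
append_entry(ledger, action="issue_refund", inputs={"order": "A-1", "amount": 40},
             policy_version="refunds-v3", tools=("payments_api",),
             permissions=("refund:create",), approved_by="ops-lead",
             timestamp="2025-01-01T10:00:00Z")
append_entry(ledger, action="send_notice", inputs={"order": "A-1"},
             policy_version="comms-v1", tools=("email_api",),
             permissions=("email:send",), approved_by="ops-lead",
             timestamp="2025-01-01T10:05:00Z")
print(verify(ledger))  # True
```

All identifiers in the usage example (order IDs, policy versions, tool names) are made up for illustration.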

4) Kill-switches and rollback workflows (tested, not theoretical)

If you can’t stop it, you don’t control it. If you can’t unwind it, you shouldn’t automate it.
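
Both halves of that rule can be expressed in a few lines. The sketch below is a simplified, single-process illustration with hypothetical class names: every automated action must be registered with a compensating "undo" step, and nothing runs once the kill switch is tripped. Compensating steps only approximate an undo; they cannot restore trust or reverse a message that has already been read.

```python
import threading
from typing import Callable, List, Optional


class KillSwitch:
    """A thread-safe stop flag that operators can trip at any time."""

    def __init__(self) -> None:
        self._stopped = threading.Event()

    def trip(self) -> None:
        self._stopped.set()

    def is_tripped(self) -> bool:
        return self._stopped.is_set()


class ActionRunner:
    """Runs actions only while the switch is untripped and a rollback step exists."""

    def __init__(self, kill_switch: KillSwitch) -> None:
        self._kill = kill_switch
        self._undo_stack: List[Callable[[], None]] = []

    def execute(self, do: Callable[[], object], undo: Optional[Callable[[], None]]):
        if self._kill.is_tripped():
            raise RuntimeError("kill switch tripped; action refused")
        if undo is None:
            raise ValueError("no rollback defined; this action must not be automated")
        result = do()
        self._undo_stack.append(undo)
        return result

    def rollback(self) -> None:
        """Run compensating steps in reverse order of execution."""
        while self._undo_stack:
            self._undo_stack.pop()()


# Usage with an in-memory stand-in for real side effects.
granted: List[str] = []
switch = KillSwitch()
runner = ActionRunner(switch)
runner.execute(do=lambda: granted.append("alice:read"), undo=lambda: granted.remove("alice:read"))
switch.trip()          # operators stop the system
runner.rollback()      # then unwind what was done
print(granted)         # []
```

Rollback still works after the switch is tripped, since stopping new actions and unwinding old ones are separate capabilities.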

This is aligned with a practical risk-management view like the NIST AI RMF, which emphasizes governance, measurement, and operational controls across the AI lifecycle—not just model performance. (NIST Publications)

Simple examples that reveal the difference instantly

Example 1: Drafting vs sending

Drafting a message is reversible.

Sending it to thousands of recipients isn’t (screenshots, forwarding, public impact).

If AI is allowed to “send,” you are no longer doing content generation. You are doing decision automation.

Example 2: Suggesting vs executing

Suggesting a workflow change is reversible.

Executing it in production can break SLAs, dependencies, access rules, and compliance controls.

The moment AI executes, you must treat it like a production actor, not a chat interface.

Example 3: Answering vs changing access

Answering a question is reversible (you can correct it later).

Changing access privileges changes the security state immediately.

A wrong answer is a nuisance.

A wrong permission change is an incident.

A practical rule leaders can use

If the cost of being wrong is reputational, operational, legal, or compounding—treat it as irreversible.

Then design your AI so it can:

- pause

- escalate

- refuse

- ask for confirmation

- restrict itself to safer actions

That is judgment behavior. Not reasoning behavior.

What “true deciding” would require (and why AI doesn’t have it)

To truly decide, an AI would need a mature internal model of:

- consequences over time

- irreversible thresholds

- institutional accountability

- when to defer action even if confident

- the reality that trust cannot be rolled back like software

Today’s systems can imitate pieces of this with prompts and policies.

But imitation is not internalized constraint.

That’s why “reasoning models” can feel wise—until they are connected to real levers.

If you want the enterprise architecture context for how these levers are enforced in production, the Control Plane + Runtime framing is the right mental model: the Operating Model defines commitments; the Control Plane makes them enforceable; the Runtime is where permissioned action actually occurs. (Raktim Singh)

What to do in enterprises: a decision-safe checklist

- Separate advice from action: Treat “recommend” and “execute” as different product categories.

- Classify actions by reversibility: If an action can’t be cleanly undone, it needs stronger gating.

- Design escalation paths with decision rights: Not “human in the loop” as a slogan, but real accountability, handoffs, and stop-rules. (Raktim Singh)

- Build corrigibility into architecture: Make stopping and changing the system a first-class feature. (MIRI)

- Account for automation bias: Train reviewers to challenge AI; monitor over-reliance as a measurable risk. (PMC)

- Adopt a lifecycle risk management operating model: Use governance frameworks that force clarity on context, monitoring, incident response, and accountability. (NIST Publications)

If you want a deeper enterprise blueprint that ties these into a single coherent system, the Enterprise AI Operating Stack and Canon pages are built for exactly that “no loose ends” framing. (Raktim Singh)

Conclusion

The next era of AI will not be won by the system that reasons the most.

It will be won by the system that knows:

- when to act

- when to stop

- when to defer

- when to escalate

- and how to remain correctable after the world has changed

Because irreversibility is the boundary between intelligence and consequences.

And AI cannot truly decide until irreversibility is treated as a first-class design constraint—enforced by operating models, control planes, runtimes, and evidence systems—not as a side effect that humans clean up later.

AI can reason better than ever.

That makes it more dangerous, not safer.

Because intelligence without irreversibility is not intelligence.

It’s just confident computation.

Glossary

- Irreversibility: An action changes the real world in ways that can’t be fully undone (even if the system is later corrected).

- Action Boundary: The line between AI advising and AI acting—where governance obligations change. (Raktim Singh)

- Decision boundary: A rule that limits what AI can decide autonomously vs what must escalate.

- Automation bias: People over-trust automated recommendations and become worse at detecting errors over time. (PMC)

- Corrigibility: Designing AI systems to cooperate with corrective interventions like shutdown, override, or redirection. (MIRI)

- Reversible autonomy: Autonomy designed with kill switches, escalation paths, and rollback workflows so actions remain governable.

- Control Plane (Enterprise AI): The enforcement layer that makes governance commitments real in production. (Raktim Singh)

- Decision Ledger: A reconstructable record of AI decisions—inputs, policies, context, and approvals—so actions are defensible. (Raktim Singh)

- AI Risk Management Framework: Structured approach to manage AI risks across the lifecycle (govern, map, measure, manage). (NIST Publications)

FAQ

1) Isn’t “better reasoning” enough to make AI safe?

No. Reasoning improves coherence, not consequence awareness. Safety requires reversible autonomy, corrigibility, and enforceable decision boundaries. (MIRI)

2) What’s the fastest way to reduce risk when AI takes actions?

Separate “recommend” from “execute,” then gate execution by reversibility and impact. Start with the Action Boundary and enforce it in runtime permissions. (Raktim Singh)

3) Why isn’t human oversight sufficient?

Because automation bias makes humans defer to confident systems and reduces error detection over time—especially when outputs look structured. (PMC)

4) What does corrigibility mean in practical systems?

It means the system can be paused, overridden, redirected, and updated safely—even when those interventions conflict with the system’s default objective. (MIRI)

5) How does NIST AI RMF relate to this?

It reinforces that trustworthy AI is an operational lifecycle discipline: governance, context mapping, measurement, monitoring, and incident handling—not a model-quality claim. (NIST Publications)

Q1. Why can’t AI truly make decisions?

Because real decisions are defined by irreversible consequences, accountability, and judgment—not statistical confidence.

Q2. Is better reasoning enough to make AI safe?

No. More reasoning increases coherence, not consequence awareness, and can amplify confident wrongness.

Q3. Why is human oversight insufficient?

Automation bias causes humans to defer to confident AI outputs, reducing vigilance over time.

Q4. What is corrigibility in AI?

Corrigibility means AI systems can be safely paused, overridden, or corrected without resistance.

Q5. How should enterprises deploy AI safely?

By separating advice from action, gating irreversible decisions, and designing reversible autonomy.

References and further reading

- Goddard et al. (2011), systematic review on automation bias and error modes in decision support systems. (PMC)

- Abdelwanis et al. (2024), review + risk analysis of automation bias in AI-driven clinical decision support. (ScienceDirect)

- Soares et al., “Corrigibility” (MIRI), foundational framing of shutdown/override incentives and corrigible design. (MIRI)

- Armstrong, “Corrigibility” (AAAI workshop paper), complementary definition and discussion of intervention incentives. (AAAI)

- NIST AI RMF 1.0 (AI 100-1), lifecycle risk management framing for trustworthy AI. (NIST Publications)

- “Option value” / “wait and see” under uncertainty (investment irreversibility and option value). (ScienceDirect)