Why Learning AI Breaks Formal Verification—and What “Safe AI” Must Mean for Enterprises

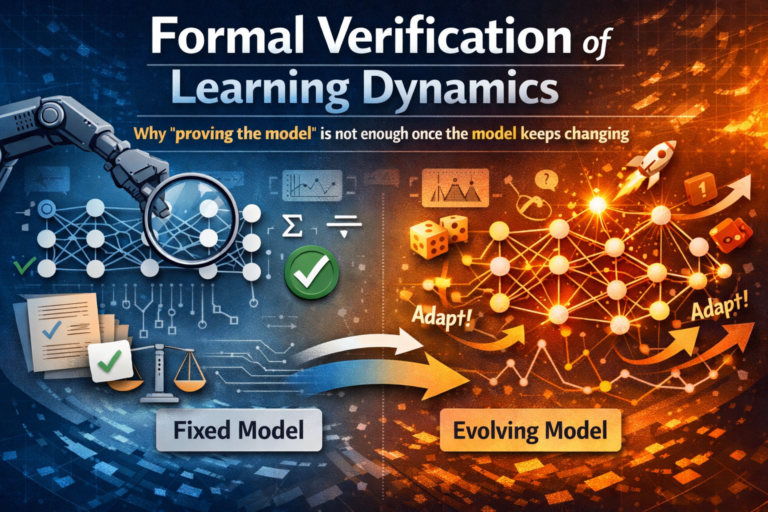

Formal verification was built for systems that stand still.

Artificial intelligence does not.

The moment an AI system learns—adapting its parameters, updating its behavior, or optimizing against real-world feedback—the guarantees we rely on quietly expire.

Proofs that once held become historical artifacts. Safety arguments collapse not because engineers made mistakes, but because the system itself changed after deployment.

This is the uncomfortable truth enterprises are now facing: you cannot “prove” a learning system safe in advance. Accuracy is not safety. Correctness is not control. And “verified once” is not “verified forever.”

This article explains why learning dynamics make AI fundamentally hard to verify, how real enterprise systems drift into failure despite good intentions, and why the definition of safe AI must shift from static proofs to bounded, continuously governed behavior.

Why learning dynamics are so hard to verify

A strange thing happens when an enterprise deploys its first “successful” AI system.

The hard part stops being accuracy—and starts being continuity.

In the lab, you can treat a model like a product: version it, test it, sign it off, ship it.

In production, that mental model breaks.

Because the system doesn’t stay still.

A vendor patch changes behavior in edge cases. A fine-tune tweaks decision boundaries. A refreshed retrieval index rewires what the model “knows.” A new tool integration expands the action surface. A memory update changes how an agent plans. A prompt template evolves and suddenly the agent “discovers” a new shortcut.

The world itself drifts. Your data drifts. Your workflows drift.

Nothing crashes. Nothing alarms. And yet the system you proved is no longer the system that’s running.

That is the core idea behind formal verification of learning dynamics:

verifying not only what the model is today, but what it can become tomorrow—under updates, drift, and adaptation.

This problem sits at the intersection of formal methods, safety, online/continual learning, runtime monitoring, and enterprise governance. And it’s becoming unavoidable anywhere AI is allowed to act.

Research communities have been circling parts of it for years—safe RL with formal methods, runtime “shielding,” drift adaptation, and proofs about training integrity—but enterprises are now encountering the full collision in real systems. (cdn.aaai.org)


What “formal verification” can realistically mean here

Formal verification of learning dynamics is the discipline of proving that an AI system remains within defined safety, compliance, and performance boundaries throughout its updates and adaptations, not only at a single point in time.

If classic verification is “prove the program,” this is “prove the evolution of the program.”

Why this matters now

The industry has quietly shifted from deploying models to running adaptive intelligence systems:

- Models are updated frequently (vendor releases, fine-tunes, distillation, quantization)

- The real world shifts (covariate drift, label drift, and especially concept drift) (ACM Digital Library)

- Agentic systems change behavior as tools, prompts, policies, and memories evolve

- Retrieval systems change outputs by changing what context is surfaced—effectively altering behavior without “retraining” the base model

Traditional certification and testing methods were designed for systems that don’t keep changing after approval. But modern AI systems do. The moment you accept ongoing updates, the old promise—“prove it once, deploy forever”—stops being true.

This is why the topic is central to the bigger mission: Enterprise AI is not a model problem. It’s an operating model problem. And operating models require living assurance—a control plane that treats change as the default, not an exception.

This perspective builds on broader enterprise frameworks discussed in The Enterprise AI Operating Model, which explores how safety, governance, and execution must evolve together.

For the broader Enterprise AI picture, see:

- Enterprise AI Operating Model: https://www.raktimsingh.com/enterprise-ai-operating-model/

- Enterprise AI Control Plane (2026): https://www.raktimsingh.com/enterprise-ai-control-plane-2026/

- Enterprise AI Runtime: https://www.raktimsingh.com/enterprise-ai-runtime-what-is-running-in-production/

- Enterprise AI Economics & Control Plane: https://www.raktimsingh.com/enterprise-ai-economics-cost-governance-economic-control-plane/

- Laws of Enterprise AI: https://www.raktimsingh.com/laws-of-enterprise-ai/

The mental model: proofs expire

Formal verification is built on a straightforward bargain:

- Define the system precisely

- Define the properties you care about

- Prove the system satisfies those properties

Learning breaks step (1).

Because learning isn’t “just a small parameter tweak.” Over time, it can change:

- decision boundaries

- internal representations

- calibration and uncertainty behavior

- tool-use preferences

- which shortcuts the system relies on

- the reachable set of actions via workflow composition

So even if you proved a property yesterday, that proof may not apply tomorrow—because the underlying system is no longer the same.

Three simple examples (no math, just reality)

Example 1: The spam filter that becomes a censor

A messaging platform deploys a spam classifier. Spammers adapt. The team retrains weekly. The overall metrics improve—until one day the filter starts blocking legitimate messages written in certain styles or dialects.

Nothing “crashed.” The model still looks great on aggregate. But the system crossed a boundary the organization never intended.

This is a learning-dynamics failure: accuracy improved while acceptability degraded—a classic risk in non-stationary environments and drift scenarios. (ACM Digital Library)

Example 2: The fraud model that learns the wrong lesson

A bank deploys fraud detection. Fraudsters shift tactics. The bank retrains on new labels—but those labels are shaped by the previous model’s decisions (what got reviewed, what got blocked, what got escalated). The training data becomes a mirror of past policy.

The model doesn’t just learn “fraud.” It learns the institution’s blind spots.

Now verification must include how labels are produced, how feedback loops shape data, and how policy reshapes the ground truth—concept drift’s messier cousin in real institutions. (ACM Digital Library)

Example 3: The tool-using agent that becomes unsafe after a “helpful” update

An enterprise agent is verified to never execute risky actions without approval. Then a new tool is added, or a workflow route changes, or a prompt template is updated. The agent discovers a sequence of harmless-looking calls that produces the same irreversible outcome.

This is why tool-using systems invalidate closed-world assumptions: the action space isn’t fixed. Verification must treat tools, permissions, orchestration, and runtime enforcement as part of the system. Safe RL research has explored shielding precisely because guarantees must hold during learning and execution. (cdn.aaai.org)

Why learning dynamics are so hard to verify

1) The system is stochastic and open

Learning pipelines contain randomness (sampling, initialization, stochastic optimization). Real environments are open. Formal verification of even a single fixed neural network is hard to scale; verifying a training process that keeps changing is harder still. (cdn.aaai.org)

2) Guarantees don’t compose across updates

You can prove the model is safe at time T.

But if the model updates at T+1, you must prove:

- the update didn’t break the property

- the new data didn’t introduce a failure mode

- the updated system doesn’t enable new reachable behaviors via tool/workflow composition

In enterprises, updates happen constantly. A static certificate becomes ceremonial.

3) Drift makes the spec unstable

Even if your code is fixed, the world moves. Concept drift means the relationship between inputs and outcomes changes over time. (ACM Digital Library)

So what exactly are you verifying—yesterday’s world or today’s?
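A minimal sketch of why drift undermines a snapshot proof, using made-up data. The rule `frozen_model`, the threshold of 1000, and both datasets are hypothetical. The inputs are identical in the two periods; only the relationship between input and label changes, so a monitor that watches input distributions alone would see nothing.

```python
from typing import Callable, List, Tuple

Example = Tuple[float, int]  # (transaction amount, true label: 1 = fraud)


def frozen_model(amount: float) -> int:
    """A rule signed off on last quarter's data: flag large amounts."""
    return 1 if amount >= 1000 else 0


def accuracy(model: Callable[[float], int], data: List[Example]) -> float:
    return sum(model(x) == y for x, y in data) / len(data)


# Hypothetical data: last quarter, fraud meant large amounts.
last_quarter = [(50, 0), (200, 0), (900, 0), (1500, 1), (3000, 1)]
# Same amounts this quarter, but fraud has moved to small transactions.
this_quarter = [(50, 1), (200, 1), (900, 0), (1500, 0), (3000, 1)]

acc_before = accuracy(frozen_model, last_quarter)  # every prediction matches
acc_after = accuracy(frozen_model, this_quarter)   # only 2 of 5 still match

print(acc_before, acc_after)
```

The model and its code are byte-for-byte unchanged between the two evaluations. What changed is the world the original sign-off was about.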

4) Agents create new behaviors via composition

A tool-using agent is not a single function. It’s a planner, a memory system, a tool router, a prompt strategy, and a policy layer. Verifying components doesn’t guarantee safe composition—especially when new tools or new workflows expand the behavior space.
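One way to make the composition risk concrete is to treat tools as transitions between workflow states and ask which states are reachable. The sketch below is illustrative only: the state names, the tool names (`run_queue`, `schedule_batch`), and the helper `bypasses_approval` are all hypothetical, and a real agent's state space is far larger than a handful of labels.

```python
from collections import deque
from typing import Dict, List, Set, Tuple

Tool = Tuple[str, str, str]  # (tool name, state before, state after)


def reachable(tools: List[Tool], start: str, blocked: Set[str]) -> Set[str]:
    """States reachable from `start` without ever entering a blocked state."""
    graph: Dict[str, List[str]] = {}
    for _name, src, dst in tools:
        graph.setdefault(src, []).append(dst)
    seen, queue = {start}, deque([start])
    while queue:
        state = queue.popleft()
        for nxt in graph.get(state, []):
            if nxt not in seen and nxt not in blocked:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def bypasses_approval(tools: List[Tool]) -> bool:
    # If the payout state is reachable while "approved" is off-limits,
    # then some sequence of tool calls skips the approval step.
    return "funds_released" in reachable(tools, "idle", blocked={"approved"})


verified_tools = [
    ("draft_payment", "idle", "drafted"),
    ("request_approval", "drafted", "approved"),
    ("execute_payment", "approved", "funds_released"),
    ("run_queue", "queued", "funds_released"),  # belongs to a separate batch workflow
]
# A "helpful" update adds one harmless-looking tool.
updated_tools = verified_tools + [("schedule_batch", "drafted", "queued")]

print(bypasses_approval(verified_tools), bypasses_approval(updated_tools))
```

Each tool looks safe in isolation. The unsafe path only exists once `schedule_batch` connects two workflows that were verified separately.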

What “formal verification” can realistically mean here

Let’s be honest: “prove the whole learning system forever” is not achievable today.

But enterprise-grade assurance is achievable—if you stop treating verification as a one-time act and start treating it as a living system.

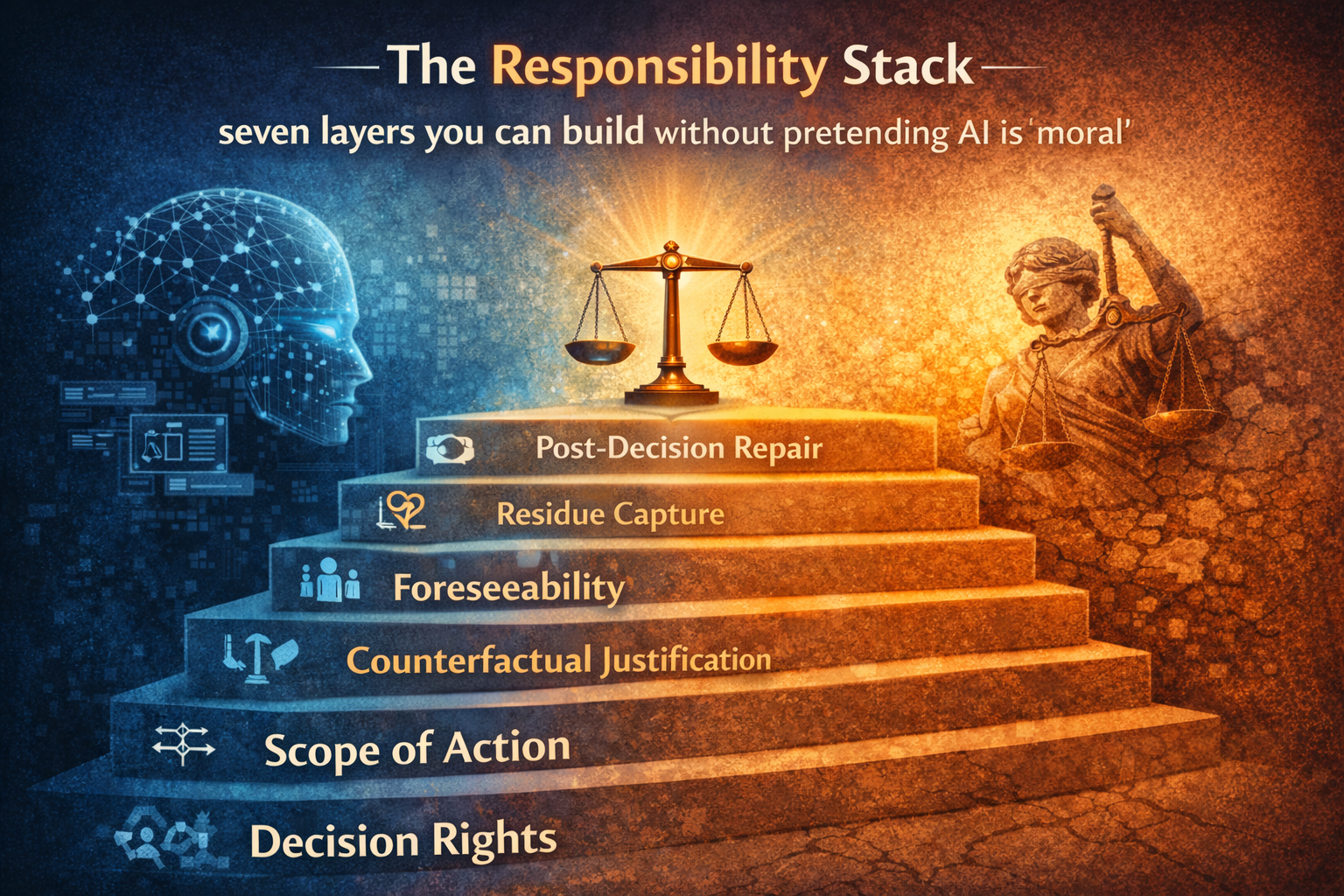

Think in layers of guarantees:

Level A: Prove invariants that must never break (non-negotiables)

Examples:

- “This action requires approval.”

- “This data class cannot be accessed.”

- “Payments above X are blocked unless dual-authorized.”

- “This agent cannot execute changes without evidence capture.”

These invariants should not be “learned.” They should be enforced by runtime controls—policy gates, safety monitors, and (in RL terminology) shields. (cdn.aaai.org)
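A minimal sketch of such a runtime gate, assuming a simplified payment action. The class names `Action` and `PolicyGate`, the threshold value, and the log format are hypothetical. The point is that the rule lives outside the model, so no update to the model can unlearn it.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, List


@dataclass(frozen=True)
class Action:
    kind: str
    amount: float = 0.0
    approvers: FrozenSet[str] = frozenset()


@dataclass
class PolicyGate:
    """Checks every proposed action before it runs and records the decision."""
    dual_auth_threshold: float
    evidence_log: List[str] = field(default_factory=list)

    def check(self, action: Action) -> bool:
        allowed = True
        # Invariant: payments above the threshold need two distinct approvers.
        if action.kind == "payment" and action.amount > self.dual_auth_threshold:
            allowed = len(action.approvers) >= 2
        # Invariant: no decision without evidence capture.
        self.evidence_log.append(
            f"{action.kind} amount={action.amount} allowed={allowed}"
        )
        return allowed


gate = PolicyGate(dual_auth_threshold=10_000)
print(gate.check(Action("payment", 500)))
print(gate.check(Action("payment", 50_000, frozenset({"alice"}))))
print(gate.check(Action("payment", 50_000, frozenset({"alice", "bob"}))))
```

The agent proposes actions; the gate decides. Verifying a small, fixed gate like this is a far more tractable problem than verifying the model that sits behind it.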

Level B: Prove bounded change via update contracts

Instead of proving the whole model is safe, prove the update is safe relative to a contract:

- must not exceed a risk threshold

- must not degrade critical slices

- must not expand action reachability

- must preserve key constraints and refusal behaviors

This turns verification into change-control proof, not a timeless certificate.
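The contract idea can be sketched as a comparison between a baseline snapshot and a candidate. Everything named here (`Snapshot`, `UpdateContract`, the slice names, the thresholds) is hypothetical, and the sketch covers only three of the four clauses above; preserving refusal behaviors would need its own test suite. The example data mirrors the spam-filter case: the aggregate improves while one slice degrades.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass
class Snapshot:
    slice_accuracy: Dict[str, float]
    risk_score: float
    reachable_actions: Set[str]


@dataclass
class UpdateContract:
    max_risk: float
    max_slice_drop: float
    critical_slices: List[str]

    def violations(self, baseline: Snapshot, candidate: Snapshot) -> List[str]:
        problems: List[str] = []
        if candidate.risk_score > self.max_risk:
            problems.append("risk threshold exceeded")
        for name in self.critical_slices:
            drop = baseline.slice_accuracy[name] - candidate.slice_accuracy.get(name, 0.0)
            if drop > self.max_slice_drop:
                problems.append(f"critical slice degraded: {name}")
        new_actions = candidate.reachable_actions - baseline.reachable_actions
        if new_actions:
            problems.append(f"action reachability expanded: {sorted(new_actions)}")
        return problems


contract = UpdateContract(max_risk=0.10, max_slice_drop=0.02,
                          critical_slices=["overall", "dialect_b"])
baseline = Snapshot({"overall": 0.95, "dialect_b": 0.93}, 0.05, {"flag", "allow"})
candidate = Snapshot({"overall": 0.97, "dialect_b": 0.80}, 0.05, {"flag", "allow"})

print(contract.violations(baseline, candidate))
```

An empty list means the update may proceed to the next stage. Anything else blocks promotion, regardless of how good the headline metric looks.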

Level C: Prove detectability + recoverability (the “living proof”)

When prevention can’t be guaranteed, guarantee fast detection + safe rollback:

- drift monitors

- anomaly detectors

- behavior sentinels

- autonomy circuit breakers

- rollback drills

This aligns with runtime verification: continuously checking execution against specifications and reacting when assumptions fail. (fsl.cs.sunysb.edu)
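As a rough illustration of a behavior sentinel wired to an autonomy circuit breaker, the sketch below watches one behavioral rate (for example, the share of messages blocked) per monitoring window. The class name, the baseline, the tolerance, and the trip count are assumptions chosen for the example, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class CircuitBreaker:
    baseline_rate: float   # rate measured when the system was approved
    tolerance: float       # allowed absolute deviation from the baseline
    trip_after: int        # consecutive out-of-band windows before tripping
    bad_windows: int = 0
    tripped: bool = False

    def observe(self, window_rate: float) -> bool:
        """Feed one monitoring window; True means autonomy should be suspended."""
        if abs(window_rate - self.baseline_rate) > self.tolerance:
            self.bad_windows += 1
        else:
            self.bad_windows = 0
        if self.bad_windows >= self.trip_after:
            self.tripped = True  # latched: stays tripped until a human resets it
        return self.tripped


breaker = CircuitBreaker(baseline_rate=0.05, tolerance=0.02, trip_after=2)
results = [breaker.observe(rate) for rate in [0.05, 0.09, 0.05, 0.08, 0.10]]
print(results)
```

A single noisy window does not trip the breaker, but two in a row do. Latching the tripped state is a deliberate choice here: resuming autonomy is treated as a human decision rather than something the monitor does on its own.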

The global research landscape (what the world is trying)

The problem is hard enough that several fields are each attacking a different slice of it:

Safe RL + formal methods: enforce safety during learning

Fulton et al. argue that formal verification combined with verified runtime monitoring can ensure safety for learning agents—as long as reality matches the model used for offline verification. That caveat is exactly where enterprises struggle: reality doesn’t sit still. (cdn.aaai.org)

Shielding: a practical way to keep learning inside safe boundaries

Shielded RL enforces specifications during learning and execution—an existence proof that you can combine learning with hard constraints at runtime. (cdn.aaai.org)

Concept drift adaptation: the world changes the target

Gama et al.’s widely cited survey frames concept drift as the relationship between inputs and targets changing over time, and surveys evaluation methods and adaptive strategies. It’s the canonical reason static testing fails in production. (ACM Digital Library)

Proof-of-learning / training integrity: verify training claims

A separate thread asks: how can we verify that training occurred as claimed, and detect spoofing? CleverHans summarizes proof-of-learning as a foundation for verifying training integrity, and NeurIPS work has explored verification procedures to detect attacks related to PoL-style claims. (CleverHans)

The enterprise blueprint (how to verify learning dynamics without pretending it’s solved)

1) Separate what learns from what must never change

- Let models adapt inside a sandbox

- Keep policy and action boundaries in a governed layer

- Treat permissions, approvals, reversibility, and evidence capture as non-learning invariants

This is the practical meaning of a control plane.

“Monitoring is not observability. It’s a live proof that the world still matches your assumptions.”

2) Introduce an Update Gate (verification checkpoint)

Every update—fine-tune, retrieval refresh, prompt change, tool addition—must pass:

- regression checks on critical slices

- constraint checks on forbidden behaviors

- policy compliance checks (data access, action authorization)

- rollout controls (canary, staged deployment)

No gate, no release.

“Enterprise AI fails when change outruns governance.”

3) Treat monitoring as part of the proof

A monitor is not “observability.” It is a formal claim:

“If the system leaves the safe region, we will detect it in time to prevent irreversible damage.”

That is runtime verification in enterprise form. (fsl.cs.sunysb.edu)
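In its simplest form, a runtime monitor checks an execution trace against a stated property. The sketch below checks one hypothetical property, "no request is executed before it is approved", over a stream of events. The event names and the tuple layout are assumptions for illustration. Unlike a gate, this monitor detects a violation rather than preventing it, so how quickly its verdict reaches a rollback or breaker still has to be engineered separately.

```python
from typing import Iterable, List, Set, Tuple

Event = Tuple[str, str]  # (event type, request id)


def monitor(trace: Iterable[Event]) -> List[str]:
    """Return the ids of requests that were executed before being approved."""
    approved: Set[str] = set()
    violations: List[str] = []
    for kind, request_id in trace:
        if kind == "approve":
            approved.add(request_id)
        elif kind == "execute" and request_id not in approved:
            violations.append(request_id)
    return violations


trace = [
    ("request", "r1"), ("approve", "r1"), ("execute", "r1"),
    ("request", "r2"), ("execute", "r2"),  # r2 skipped approval
]
print(monitor(trace))
```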

“The unit of safety is not the model—it’s the update.”

4) Make rollback real—and rehearse it

Verification is meaningless if rollback exists only on slides.

You need:

- versioned models, prompts, tools, policies

- audit trails of what changed, when, and why

- circuit breakers for autonomy

- incident response for agents (treat failures like production incidents)
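The first two items in that list can be sketched together: version everything that shapes behavior as one release, and log each change with what, when, and why. The names `Release` and `ReleaseRegistry` and the log format are hypothetical. A production registry would persist its history and protect the audit log from tampering.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Tuple


@dataclass(frozen=True)
class Release:
    """Model, prompt, tools, and policy are versioned together as one unit."""
    model: str
    prompt: str
    tools: Tuple[str, ...]
    policy: str


@dataclass
class ReleaseRegistry:
    history: List[Release] = field(default_factory=list)
    audit_log: List[str] = field(default_factory=list)

    def _record(self, what: str, why: str) -> None:
        when = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(f"{when} | {what} | {why}")

    def promote(self, release: Release, why: str) -> None:
        self.history.append(release)
        self._record(f"promote {release}", why)

    def rollback(self, why: str) -> Release:
        if len(self.history) < 2:
            raise RuntimeError("no earlier release to roll back to")
        retired = self.history.pop()
        self._record(f"rollback from {retired}", why)
        return self.history[-1]


registry = ReleaseRegistry()
v1 = Release("model-v1", "prompt-v1", ("search",), "policy-v1")
v2 = Release("model-v1", "prompt-v2", ("search", "payments"), "policy-v1")
registry.promote(v1, "initial approval")
registry.promote(v2, "added payments tool")
restored = registry.rollback("breaker tripped during canary")
print(restored)
```

Note that `v2` changes only the prompt and the tool list. Treating that as a full release with its own audit entry is what makes the rollback possible at all.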

If your AI can change, your proof has an expiration date.

5) Verify interfaces, not just models

Most catastrophic failures come from integration surfaces:

- tool APIs

- permission systems

- identity and authorization

- orchestration logic

- memory writes

- retrieval sources

Your verification boundary must sit where the model touches reality.

A model can be verified. A learning system must be governed.

Glossary

- Learning dynamics: How an AI system changes over time through updates (fine-tuning, continual learning, memory writes, retrieval refresh, tool-policy adaptation).

- Stationarity: The assumption that the problem and data distribution stay stable over time (rare in production).

- Concept drift: When the relationship between inputs and targets changes over time. (ACM Digital Library)

- Runtime verification: Checking execution traces against formal specifications during runtime using monitors. (fsl.cs.sunysb.edu)

- Shielding: Runtime enforcement that prevents unsafe actions during learning and execution. (cdn.aaai.org)

- Update contract: A formal set of constraints every update must satisfy before promotion to production.

- Proof-of-learning: Methods aimed at verifying claims about training integrity and detecting spoofed training claims. (CleverHans)

- Enterprise AI control plane: The governed layer that manages policies, permissions, approvals, reversibility, and auditability for AI systems at scale (see: https://www.raktimsingh.com/enterprise-ai-control-plane-2026/).

- Formal verification: Mathematical techniques used to prove that a system satisfies specific properties; effective only for fixed, non-learning systems.

- Learning dynamics (behavioral view): The way an AI system’s behavior evolves over time as it adapts to data, feedback, or environment changes.

- Non-stationary AI: AI systems whose internal parameters or decision policies change after deployment.

- Runtime assurance: Safety mechanisms that monitor and constrain AI behavior during operation rather than proving correctness in advance.

- Enterprise safe AI: AI systems that remain bounded, auditable, and reversible, even as they learn, rather than merely accurate at deployment time.

FAQ

1) Is formal verification of learning dynamics possible today?

Not as “prove everything forever.” But layered assurance is practical: invariants + update contracts + runtime verification + rollback discipline. (fsl.cs.sunysb.edu)

2) How is this different from model testing?

Testing samples cases. Verification targets guarantees (within defined bounds). With ongoing learning, you must verify the change process, not only the snapshot.

3) Does drift detection solve it?

No. Drift detection tells you assumptions are breaking; it doesn’t guarantee safety. It’s one component of a living verification system. (ACM Digital Library)

4) What should enterprises verify first?

Start with non-negotiables: action authorization, data access boundaries, irreversible-risk constraints, evidence capture—then add update gates and runtime monitors.

5) How does this relate to agentic AI?

Agents expand the action space via tools and workflows. Small changes can unlock new action pathways. That makes learning dynamics verification more urgent.

6) What’s the biggest mistake teams make?

Treating updates as “minor.” In adaptive systems, small updates can cause large behavioral shifts—especially through tools, prompts, and retrieval changes.

Q1: Why is formal verification difficult for learning AI?

Because learning systems change over time, invalidating any proof made on an earlier version of the model.

Q2: Can learning AI ever be fully verified?

No. Only bounded behaviors, constraints, and runtime guarantees can be verified—not future learning outcomes.

Q3: How should enterprises define safe AI?

Safe AI is AI whose actions are constrained, monitored, reversible, and auditable—not merely accurate.

Q4: What replaces traditional formal verification for AI?

Runtime assurance, policy enforcement layers, decision logging, and bounded action spaces.

Conclusion: The new definition of “safe AI” in enterprises

If the last decade was about building models that perform, the next decade is about building systems that remain safe while they evolve.

Formal verification of learning dynamics is the discipline that makes that evolution governable. It reframes the goal from “prove the model” to “prove the update,” from “certify once” to “assure continuously,” from “ship intelligence” to “run intelligence.”

This is why Enterprise AI cannot be a tool strategy. It must be an institutional capability—with a control plane, runtime discipline, economic governance, and incident response built for autonomy.

If you want a single line that captures the shift:

Enterprise AI is not verified once. It is verified continuously—because enterprise intelligence is a running system, not a shipped artifact.

For readers who want the broader operating-model context, see:

- https://www.raktimsingh.com/enterprise-ai-operating-model/

- https://www.raktimsingh.com/the-enterprise-ai-operating-stack-how-control-runtime-economics-and-governance-fit-together/

- https://www.raktimsingh.com/laws-of-enterprise-ai/

References

- Fulton, N. et al. “Safe Reinforcement Learning via Formal Methods” (AAAI 2018). (cdn.aaai.org)

- Alshiekh, M. et al. “Safe Reinforcement Learning via Shielding” (AAAI 2018). (cdn.aaai.org)

- Gama, J. et al. “A Survey on Concept Drift Adaptation” (ACM Computing Surveys, 2014). (ACM Digital Library)

- Stoller, S. D. “Runtime Verification with State Estimation” (RV). (fsl.cs.sunysb.edu)

- CleverHans blog: “Arbitrating the integrity of stochastic gradient descent with proof-of-learning” (2021). (CleverHans)

- Choi, D. et al. “Tools for Verifying Neural Models’ Training Data” (NeurIPS 2023). (NeurIPS Proceedings)

- Runtime verification overview resources (definitions, monitors, trace checking). (ScienceDirect)

- Recent work on proof-of-learning variants and incentive/security considerations. (arXiv)