These innovative solutions reduce the risk of data exposure while facilitating the management, processing, and sharing of information.

The Need for Privacy-Enhancing Technologies

PETs are indispensable in the current environment, where concerns about data breaches, surveillance, and unauthorized data use are widespread. They help ensure the confidentiality, integrity, and availability of data, and they give individuals more control over their own data.

By cultivating user trust and supporting compliance with privacy regulations, PETs are a critical component of protecting online privacy and letting individuals determine how their data is used.

Conventional data protection methods provide strong security guarantees for data in transit and at rest. Encryption, access control, identity management, secure tunnels, firewalls, traffic monitoring, multi-factor authentication, and device management are among the current-generation practices that ensure data is protected and only accessible to its intended users.

However, although these methods achieve their intended objective of safeguarding data in transit and at rest, they do not address the protection of data in use.

Data must typically be converted to its unprotected form, plaintext, in order to be used. This applies whether the data is used by humans or by machines.

In both cases the plaintext must be accessible and available. Regrettably, this creates an opportunity for the data to be exposed, whether intentionally by malicious actors or inadvertently by negligent users.

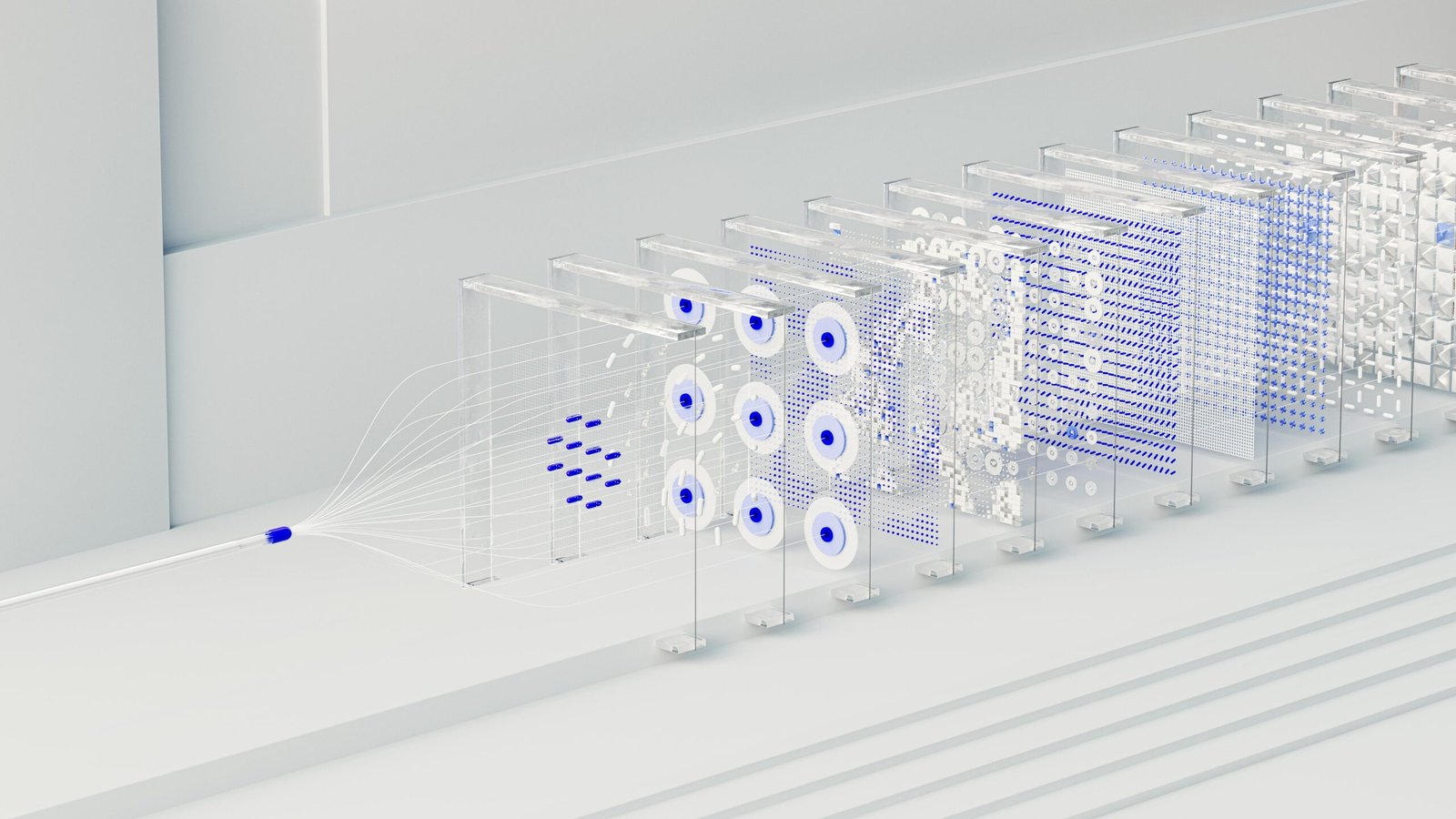

The landscape continues to evolve in response to the proliferation of real-world artificial intelligence (AI)-enabled systems. These systems are increasingly used in various industries for data analysis and decision-making, so resolving data-in-use concerns is more important than ever.

Traditional systems typically rely on explicit, pre-programmed instructions to execute tasks, whereas AI-enabled systems depend fundamentally on data-in-use processes. All data in AI-enabled systems, including the AI models themselves, is involved in data-in-use processes such as inference and training.

To remain competitive in this changing landscape, AI engineers are increasingly turning to privacy-enhancing technologies (PETs) as next-generation safeguards for their systems.

Confidentiality and Privacy

When handling sensitive data, one must consider two critical, high-level concepts: privacy and confidentiality.

- Privacy is the capacity to regulate the extent, duration, and circumstances of sharing personal information. To maintain privacy, precise measures for collecting, using, retaining, disclosing, and deleting personal information are imperative.

- Confidentiality is the safeguarding of any information that an entity has shared in a relationship of trust, with the expectation that it will not be disclosed to unintended parties.

The primary distinction between privacy and confidentiality is that the former pertains to personal information, whereas the latter pertains to sensitive data of any kind.

Furthermore, confidentiality concerns preventing unauthorized use of information that is already in an organization’s possession. Privacy, on the other hand, concerns the individual’s capacity to manage the information that an organization collects, uses, and shares with others.

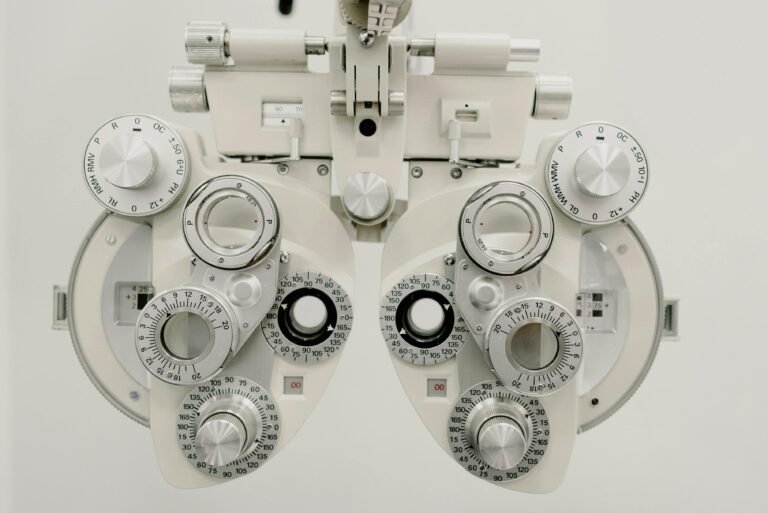

An In-Depth Examination of Privacy-Enhancing Technologies

PETs, or Privacy-Enhancing Technologies, are strategies and tools intended to safeguard individuals’ data and privacy.

These technologies, which include end-to-end encryption among others, form a comprehensive set of tools and methods intended to protect users’ data while still allowing products and functionality to be built on it.

PETs play a crucial role in preserving data privacy by fostering user trust and supporting compliance with privacy regulations.

PETs can also help organizations get more value from data by reducing the risks associated with its use.

Several PETs provide new tools for anonymization, while others enable the collaborative analysis of privately held datasets, allowing data to be used without disclosing copies of it. PETs are multifunctional: they can be used as instruments for data collaboration, to reinforce data governance decisions, or to increase accountability through audits.

These technologies protect data-in-use processes while enabling the system to execute its fundamental functions. The following are the specific functions of PETs:

- Perform a trusted computation in an untrusted environment.

- Extract insights from private data without revealing the sensitive contents of the data.

- Facilitate parties’ collaboration while guaranteeing that any shared data is utilized exclusively for its intended purpose.

- Integrate quantum-resistant data protections into the system.

- Guarantee that sensitive data is not disclosed when accessing shared artificial intelligence (AI) models.

- Improve the ability of data owners to maintain control over their data throughout its lifecycle.

These functions are all associated with protecting sensitive data and mitigating data-layer vulnerabilities. In practice, the term “privacy-enhancing technologies” encompasses a wide range of tools designed to safeguard data-in-use processes, whether implemented in hardware or software, on-premises or in the cloud.

Numerous Strategies for Privacy-Enhancing Technologies

Privacy-Enhancing Technologies rest on an array of methods and tools for strengthening data privacy. Key technologies in this domain include:

- Encryption is a PET that employs cryptographic algorithms to transform data into an unintelligible format.

The information is accessible only to authorized parties who possess the appropriate decryption key. Advanced techniques such as homomorphic encryption go further and allow computations to be performed on encrypted data without decrypting it first.
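One family of techniques that allows computing on encrypted data is homomorphic encryption. The following is a minimal sketch of the Paillier scheme, which supports addition on ciphertexts. It is an illustration only: the primes, the function names, and the salary figures are assumptions chosen for readability, and the key size is far too small to be secure.

```python
import math
import secrets

# Toy Paillier cryptosystem (additively homomorphic).
# Assumption: tiny demo primes; real keys use primes of 1024 bits or more.
P, Q = 1789, 1861
N = P * Q
N_SQ = N * N
G = N + 1                                            # common simple generator choice
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)    # lcm(P-1, Q-1)
MU = pow(LAM, -1, N)                                 # modular inverse (Python 3.8+)


def encrypt(m: int) -> int:
    """Encrypt an integer 0 <= m < N with fresh randomness."""
    while True:
        r = secrets.randbelow(N - 1) + 1             # 1 .. N-1
        if math.gcd(r, N) == 1:
            break
    return (pow(G, m, N_SQ) * pow(r, N, N_SQ)) % N_SQ


def decrypt(c: int) -> int:
    """Recover the plaintext using the private values LAM and MU."""
    u = pow(c, LAM, N_SQ)
    return ((u - 1) // N * MU) % N


def add_encrypted(c1: int, c2: int) -> int:
    """Multiplying ciphertexts adds the underlying plaintexts (mod N)."""
    return (c1 * c2) % N_SQ


# Two parties encrypt their values; a third adds them without seeing either.
c_total = add_encrypted(encrypt(1200), encrypt(1500))
print(decrypt(c_total))  # 2700
```

Only the holder of the private values can read the result; whoever performs the addition sees ciphertexts only. Production systems would rely on a vetted library rather than hand-written arithmetic like this.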

- Anonymization is the process of removing specific details from datasets to prevent the tracing of particular individuals.

Pseudonymization, on the other hand, replaces identifying information with pseudonyms so that data can still be analyzed while individual identities are protected. It is an important measure for complying with data protection laws such as the GDPR.
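As a rough illustration of pseudonymization, the sketch below replaces an email address with a keyed hash (HMAC-SHA-256) so that records belonging to the same person can still be linked. The record layout, the `pseudonymize` helper, and the key value are hypothetical; in practice the key would be stored separately from the data and protected, since anyone holding it can recompute the mapping.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, key: bytes) -> str:
    """Map an identifier to a stable pseudonym using a keyed hash."""
    digest = hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]  # shortened for readability; keep more digits at scale


# Hypothetical secret key and records, for illustration only.
SECRET_KEY = b"demo-key-store-me-separately"
records = [
    {"email": "alice@example.com", "purchase": 30},
    {"email": "bob@example.com", "purchase": 12},
    {"email": "alice@example.com", "purchase": 55},
]

# Drop the direct identifier and keep only the pseudonym.
pseudonymized = [
    {"user": pseudonymize(r["email"], SECRET_KEY), "purchase": r["purchase"]}
    for r in records
]

for row in pseudonymized:
    print(row)
```

Both of Alice's purchases end up under the same pseudonym, so per-user analysis still works, while the email address itself no longer appears in the dataset.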

- Differential Privacy

Differential privacy adds carefully calibrated statistical noise to datasets or query results so that the presence or absence of any single individual has only a limited effect on the output. This method enables organizations to extract insights from data without disclosing personal information.

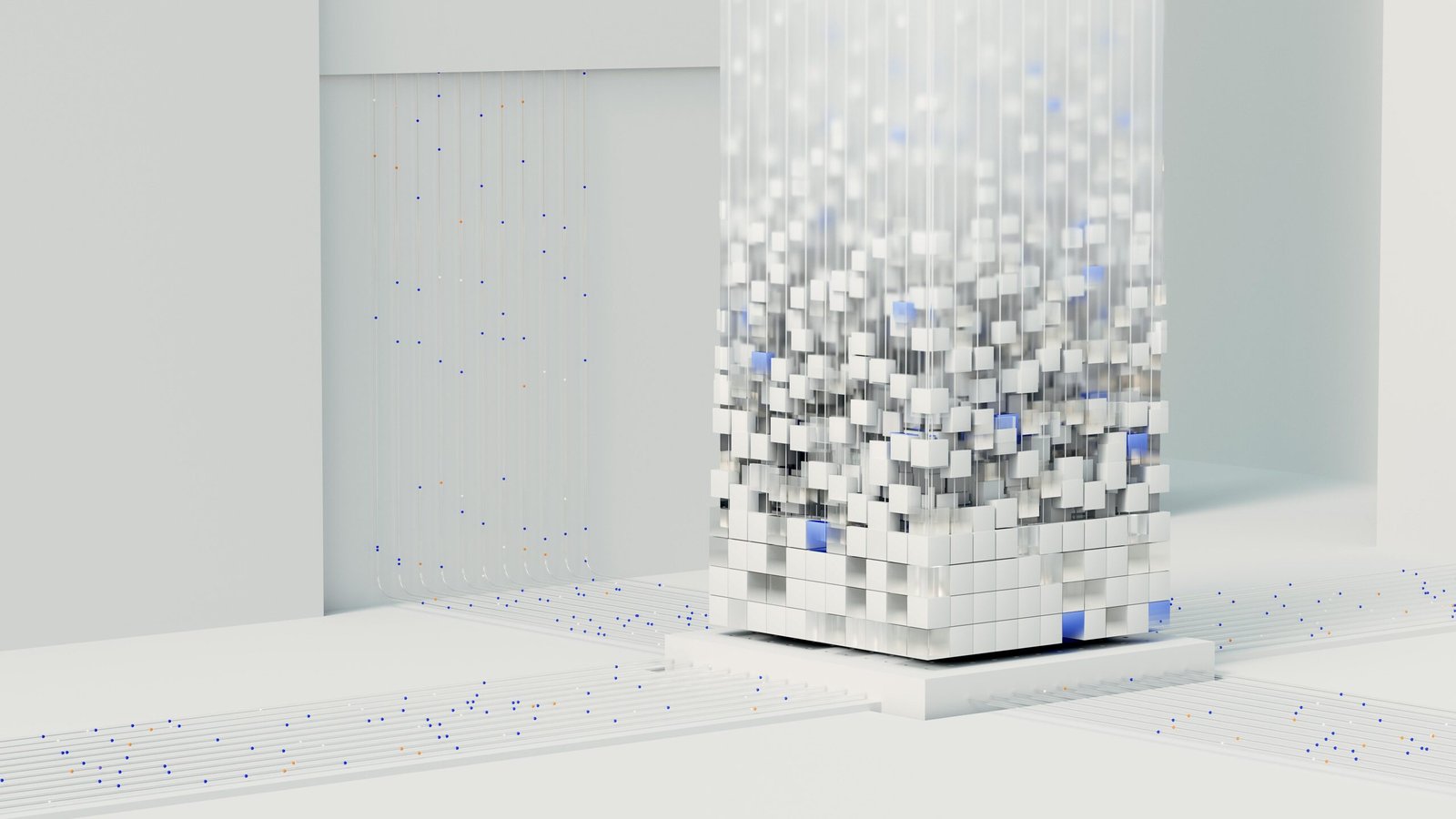
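The idea of adding statistical noise can be sketched with the Laplace mechanism, one common way to achieve differential privacy for counting queries. The dataset, the function names, and the choice of `epsilon` below are assumptions made for illustration, not a recommendation for real deployments.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(values, predicate, epsilon: float, rng: random.Random) -> float:
    """Return a noisy count of the values matching `predicate`.

    Adding or removing one person changes a count by at most 1
    (sensitivity 1), so the noise scale is 1 / epsilon.
    """
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical data: ages of survey respondents.
ages = [23, 35, 41, 29, 52, 67, 38, 45, 31, 58]
rng = random.SystemRandom()  # OS-level randomness rather than a seeded PRNG
noisy = private_count(ages, lambda a: a >= 40, epsilon=0.5, rng=rng)
print(f"Noisy count of respondents aged 40+: {noisy:.2f}")
```

A smaller `epsilon` means more noise and stronger privacy, and a larger one means more accurate answers. This is the balance between privacy protection and data utility that any deployment has to tune.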

- Secure Multiparty Computation (SMPC)

Secure Multiparty Computation (SMPC) allows several parties to jointly compute a function over their inputs while keeping those inputs confidential. It is advantageous when participants want to analyze combined data without revealing their individual data to one another.
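A simple building block behind many SMPC protocols is additive secret sharing. The sketch below simulates, in a single process, three parties computing the sum of their private values. The modulus, the function names, and the figures are illustrative assumptions, and a real protocol would also need secure channels and protection against parties that deviate from the rules.

```python
import secrets

PRIME = 2**61 - 1  # field modulus (a Mersenne prime); inputs must be smaller


def share(secret: int, n_parties: int) -> list[int]:
    """Split `secret` into n random-looking shares that sum to it mod PRIME."""
    shares = [secrets.randbelow(PRIME) for _ in range(n_parties - 1)]
    shares.append((secret - sum(shares)) % PRIME)
    return shares


def reconstruct(shares: list[int]) -> int:
    """Combine shares back into the value they encode."""
    return sum(shares) % PRIME


def secure_sum(inputs: list[int]) -> int:
    """Simulate a joint sum in which no single party sees another's input."""
    n = len(inputs)
    # Party i splits its input and sends share j to party j.
    all_shares = [share(x, n) for x in inputs]
    # Party j adds up the shares it received and publishes only that subtotal.
    subtotals = [sum(all_shares[i][j] for i in range(n)) % PRIME for j in range(n)]
    return reconstruct(subtotals)


# Hypothetical example: three institutions total their figures.
print(secure_sum([52_000, 61_000, 58_000]))  # 171000
```

Each individual share is uniformly random, so a party learns nothing about the others' inputs from the shares it receives; only the final total is revealed.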

- Zero-Knowledge Proofs (ZKPs)

Zero-Knowledge Proofs (ZKPs) enable one party (the prover) to convince another party (the verifier) that a statement is true without disclosing any information beyond the truth of the statement itself. This method is advantageous in authentication and verification procedures that require privacy protection.
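To make the idea concrete, here is a minimal sketch of the Schnorr identification protocol, a classic zero-knowledge-style proof that the prover knows a secret exponent `x` without revealing it. The group parameters are toy values chosen so the arithmetic is easy to follow, the function names are hypothetical, and the zero-knowledge property in this form assumes an honest verifier.

```python
import secrets

# Toy group: G generates a subgroup of prime order Q in the integers mod P.
# Assumption: tiny demo parameters; real systems use large, standardized groups.
P, Q, G = 23, 11, 2


def keygen() -> tuple[int, int]:
    """Return (secret x, public y = G^x mod P)."""
    x = secrets.randbelow(Q - 1) + 1
    return x, pow(G, x, P)


def commit() -> tuple[int, int]:
    """Prover picks a random nonce r and sends the commitment t = G^r mod P."""
    r = secrets.randbelow(Q)
    return r, pow(G, r, P)


def respond(r: int, x: int, c: int) -> int:
    """Prover answers the verifier's challenge c with s = r + c*x mod Q."""
    return (r + c * x) % Q


def verify(y: int, t: int, c: int, s: int) -> bool:
    """Verifier accepts if G^s == t * y^c mod P."""
    return pow(G, s, P) == (t * pow(y, c, P)) % P


x, y = keygen()               # prover's key pair; only y is public
r, t = commit()               # step 1: prover -> verifier
c = secrets.randbelow(Q)      # step 2: verifier -> prover (random challenge)
s = respond(r, x, c)          # step 3: prover -> verifier
print(verify(y, t, c, s))     # True
```

The verifier sees only `t`, `c`, and `s`. Because `r` is fresh and random for every run, `s` on its own gives no usable information about `x`.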

The Evolution of Technologies that Enhance Privacy

The origins of Privacy-Enhancing Technologies (PETs) can be traced back to early cryptography, which was driven by the need for secure communication. The following are significant milestones in the development of PETs:

- Cryptography in the Early Period

The earliest forms of cryptography used simple encryption methods to protect information. The technological advances of the 20th century laid the groundwork for modern PETs.

- Public Key Cryptography (1970s)

Whitfield Diffie and Martin Hellman revolutionized data security practices by introducing public key cryptography in the 1970s.

This method enables secure communication over insecure channels and is the foundation for numerous Privacy-Enhancing Technologies (PETs).

The demand for privacy increased as the Internet gained popularity in the 1990s. Phil Zimmermann’s innovation, Pretty Good Privacy (PGP), provided encryption for data and communications.

Privacy regulations such as the Health Insurance Portability and Accountability Act (HIPAA) in the United States, enacted in 1996, and the General Data Protection Regulation (GDPR) in Europe, in effect since 2018, have since underscored the importance of PETs. To safeguard data, these regulations call for safeguards such as encryption and anonymization.

Utilization of Technologies that Enhance Privacy

Industries employ PETs to ensure compliance with regulations and safeguard data privacy. PETs are implemented in several critical sectors, including:

- Medical Care

PETs are implemented by the healthcare sector because patient information is sensitive. Health technology companies, hospitals, and clinics implement encryption to safeguard patient records.

They also use anonymization techniques to protect patient identities during research. By leveraging PETs, organizations can support compliance with regulations such as the Health Insurance Portability and Accountability Act (HIPAA) in the United States and the General Data Protection Regulation (GDPR) in Europe.

PETs are designed to protect patient information in clinical environments. Encryption and anonymization are effective methods for securing health records and enabling data sharing for research purposes.

- Financial Services

PETs are essential for protecting consumer data and securing transactions in financial institutions, including banks, insurance companies, and investment firms.

Encryption maintains the confidentiality of personal identification numbers (PINs), bank account information, and credit card details.

Technologies such as secure multiparty computation enable institutions to conduct collaborative analyses on sensitive data without compromising its confidentiality, which supports risk evaluation and fraud prevention.

PETs are essential for financial institutions to safeguard client data and facilitate transactions. Encryption methods during transactions ensure that sensitive information, including credit card information and account numbers, remains confidential.

- E-commerce platforms implement privacy-enhancing technologies (PETs) to protect consumer information and transaction details. They also use data encryption and secure payment gateways to prevent fraud and data breaches.

PETs are employed by e-commerce platforms to protect consumer information during transactions and to secure stored data. Secure payment gateways use encryption to prevent fraud and unauthorized access.

Pseudonymization and anonymization protect users’ identities while still allowing personalized shopping experiences and targeted marketing.

- Social media platforms implement PETs to safeguard user data. Pseudonymization protects user identities while still allowing personalized content delivery and targeted advertising.

Telecommunications companies oversee large amounts of communication data. They use privacy-enhancing technologies (PETs) to secure the transmission of information across networks, protect user data from unauthorized access, and comply with privacy laws.

This industry implements encryption and differential privacy strategies.

PETs are necessary to protect user privacy because social media platforms and technology companies collect large amounts of user data. Encryption, pseudonymization, and differential privacy are used to safeguard that data while still supporting features such as personalized content delivery and targeted advertising.

- Governments employ PETs to safeguard the data of their citizens in various services, including security and tax collection. Encryption and secure communication channels are used to keep information confidential.

PETs are employed in the public and government sectors to protect public safety, security, and citizen services information. Encryption is instrumental in safeguarding data, including registrant information, social security numbers, and tax records.

Secure multiparty computation allows government departments to analyze data jointly while preserving privacy.

Outlook for the Future

The future of Privacy-Enhancing Technologies is promising, as growing awareness of data privacy spurs innovation. A number of emerging trends and potential developments are shaping the landscape of PETs.

- Machine learning and artificial intelligence integration

Integrating Privacy-Enhancing Technologies (PETs) with AI and machine learning will be indispensable as these technologies become increasingly prevalent. PETs will allow data analysis and machine learning to proceed while protecting privacy, making secure and privacy-conscious AI possible.

- Developments in Cryptographic Methods

It is anticipated that research in cryptography will result in the creation of advanced data security methods.

Zero-knowledge proofs, which enable verification without disclosing specifics, are anticipated to become more widely accepted and efficient, while homomorphic encryption enables computations on encrypted data.

- Enhanced Utilization of Differential Privacy

Differential privacy, a technique that protects identities by introducing noise into datasets, is anticipated to be adopted more widely across industries.

This methodology will be increasingly implemented in sectors such as finance, healthcare, and public services to preserve the balance between privacy protection and data utility.

- Compliance and Regulatory Changes

The adoption and improvement of PETs will be encouraged by stringent privacy regulations and compliance obligations.

Organizations must implement PETs to comply with regulations and avoid penalties, encouraging the development of privacy-preserving technologies.

- Enhanced Privacy in Smart Gadgets and IoT Devices

As the number of connected devices and smart technologies grows, PETs will be essential to protect the privacy and confidentiality of the data these devices collect.

Implementing secure multiparty computation and encryption will protect the data generated by connected vehicles, home devices, and wearables.

Conclusion

Protecting information across sectors requires implementing privacy-enhancing technologies. These technologies support regulatory compliance, user confidence, and data protection, regardless of the industry: finance, healthcare, e-commerce, or government operations.

Prospects for the future of privacy-enhancing technologies include:

- The integration of artificial intelligence (AI).

- Advancements in cryptography techniques.

- A broader adoption of differential privacy measures as technology continues to advance.

These developments will not only enhance data privacy but also enable the development of secure and innovative applications in a variety of industries, thereby making the digital environment safer for everyone.