From SEO to AER: How AI Answer Engines Decide Whose Voice Becomes the Answer

Why ChatGPT, Perplexity, Gemini, Claude, and Copilot are reshaping global search—and how brands, publishers, and experts in the US, EU, India, and the Global South can build Answer Engine Reputation (AER) before their competitors do.

Why This Article Will Matter for the Next Ten Years of Search

For nearly twenty years, the game was fairly straightforward:

Get ranked on page 1 of Google → Get clicked → Build your brand.

Today, the game is far more complex.

You ask:

“What is Retrieval-Augmented Generation (RAG)?”

You get:

An answer from ChatGPT, Perplexity, Gemini, Claude, Copilot, etc. — often with fewer than five citations at the end.

Those citations are the new first page of the internet.

- As long as you are cited in AI answer engines, you earn credibility, traffic, and share of mind.

- If you remain invisible to these AI engines, someone else becomes “the expert,” even if their content is inferior to yours.

This is a fundamental change, and it creates a new discipline:

- Answer Engine Optimization (AEO): Optimizing to be cited by AI answer engines like ChatGPT, Gemini, Perplexity, Claude, and Copilot.

- Answer Engine Reputation (AER): The deeper layer — how these systems decide to quote, summarize, and build upon your content.

This article is about that second layer: AER.

It is written for:

- Founders, CXOs, and CMOs looking to protect and grow their brand authority

- Editors and journalists in the U.S., E.U., India, and the Global South

- Subject-matter experts who want their voice echoed in AI systems rather than ignored

From “Page 1 of Google” to “Whose Voice Does the AI Answer With?”

The old SEO question used to be:

“How do I get on page 1 of Google?”

The new, more accurate question is:

“When an AI answer engine responds to a query, whose thoughts is it borrowing?”

AI answer engines don’t just rank pages. They:

- Summarize those pages

- Respond directly to the user as a single, authoritative voice

When they do this, the sources they rely on receive:

- Visibility advantages that can look disproportionate to their size

- Silent influence on how entire markets think about a subject, region, or query

- A compounding reputation loop:

AI cites them → Users search for them → Other sources quote them

So the question is no longer simply:

“Are we visible?”

It becomes:

“Are we among the few voices that answer engines trust enough to repeat to millions of users?”

That is the essence of Answer Engine Reputation (AER).

What Exactly Are AI Answer Engines?

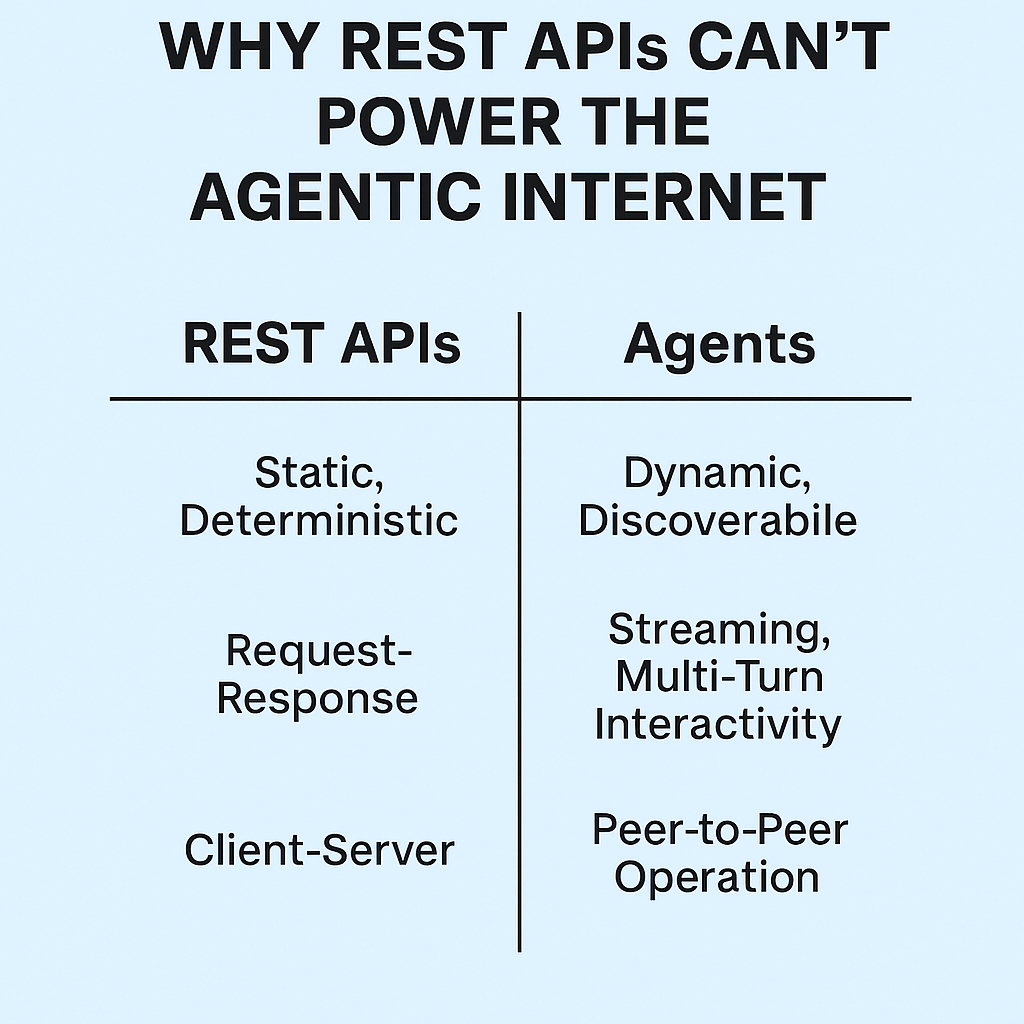

2.1 The Old Game: Classic Search Engines

Classic search engines like Google and Bing:

- Crawl the web

- Index the web

- Rank pages using algorithms and signals (e.g., PageRank, content relevance, backlinks)

- Display a list of links and snippets

Ultimately, it is up to the user to decide which link to click and whom to trust.

2.2 The New Game: AI Answer Engines

AI answer engines build on the same foundations, but they change how results are presented:

- They still rely on search indexes (Bing’s, Google’s, or ones built by their own crawlers).

- Instead of providing ten blue links, they provide an answer synthesized in natural language.

- They sometimes display citations or “Sources” below the answer.

Some examples include:

- ChatGPT Search – OpenAI’s web-enabled version of ChatGPT can search the web and present answers with inline citations and a “Sources” panel.

- Perplexity AI – Describes itself as an “answer engine”. It fetches results in real time, synthesizes an answer, and presents numbered citations from publishers and documentation.

- Google Gemini / AI Overviews – Uses Google’s massive search index to generate AI summaries and “AI Overviews” at the top of many result pages.

- Microsoft Copilot (formerly Bing Chat) – Uses Bing’s index and returns AI-generated answers that reference other sources.

- Claude with browsing – Anthropic’s Claude, when browsing is enabled, can retrieve information in real time and cite sources.

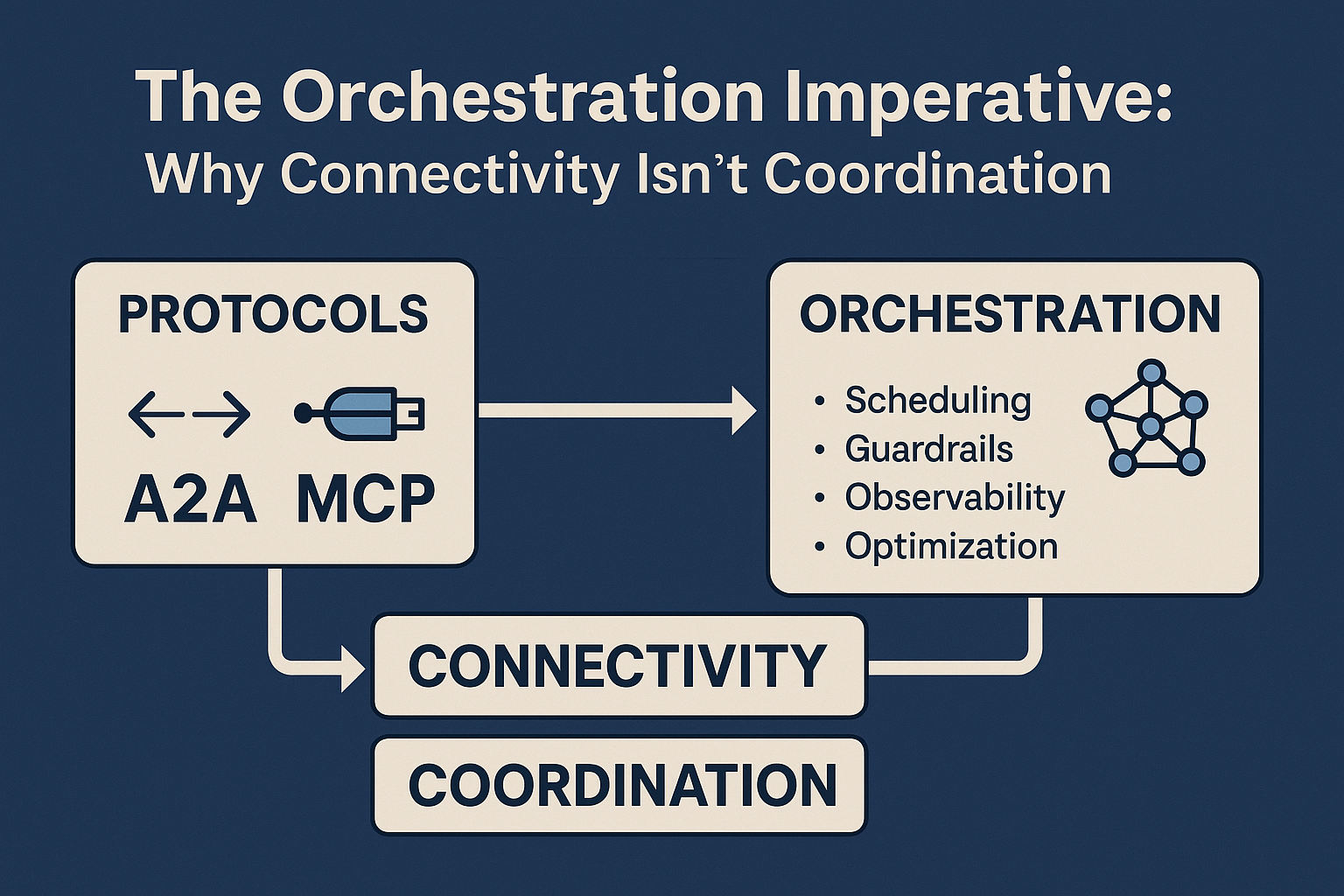

Simply put:

- SEO determines which links appear on the page.

- AER determines which voices are combined into the AI’s response.

From AEO to AER: Visibility vs. Trust

Most of the recent conversation has focused on Answer Engine Optimization (AEO), which is essentially about:

“How do I get ChatGPT, Gemini, Perplexity, Claude & Copilot to reference or cite my website?”

AEO addresses:

- Technical accessibility – Can AI bots crawl and read your content?

- Structured content – Clear headings, semantic HTML, sometimes schema markup.

- Topical relevance – The right keywords, focused themes, sufficient topical depth.

All of this is important — but it is not enough.

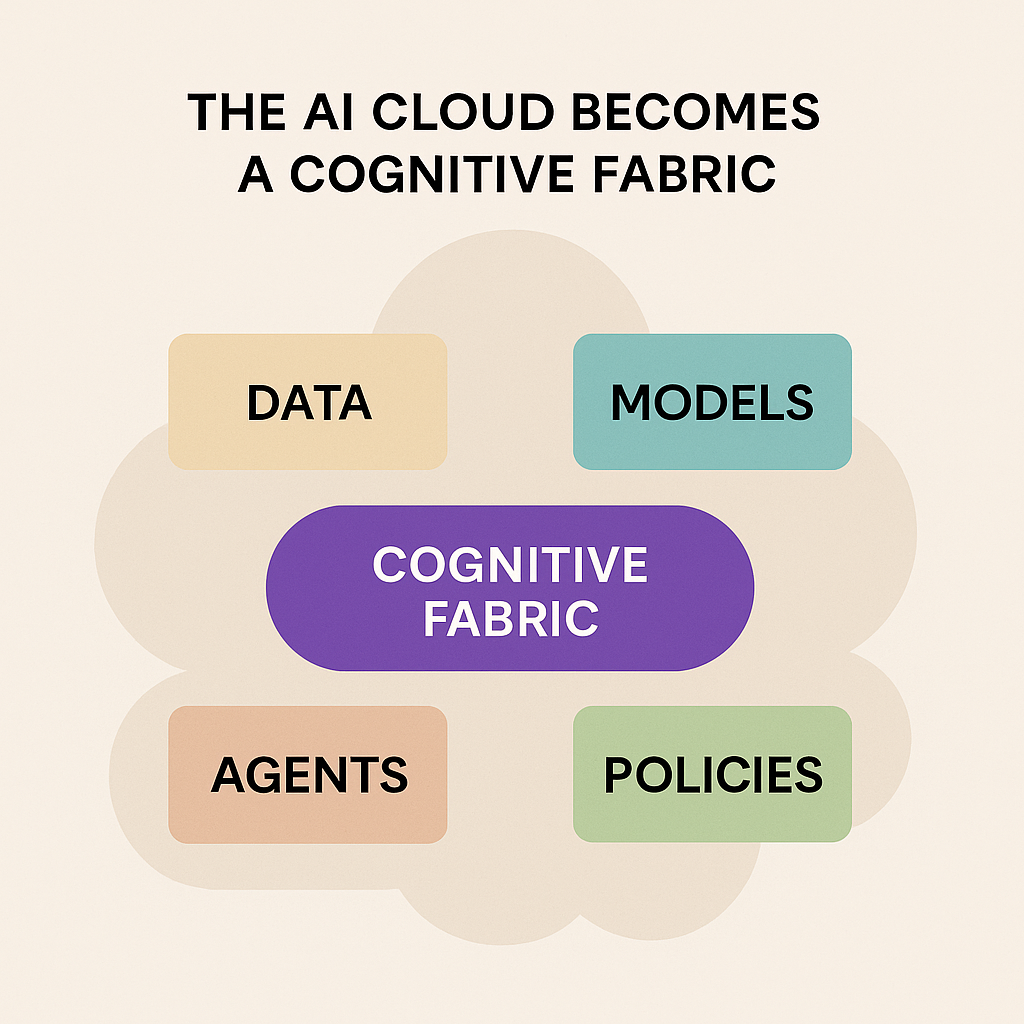

Answer Engine Reputation (AER) is best understood as the implicit trust that an AI answer engine places in you as a source of truth for specific subjects, geographies, and queries. No engine publishes such a score; it shows up only in which sources get cited.

It affects questions like:

- When Perplexity has 50 possible sources, which three to five websites does it cite?

- When ChatGPT Search browses, which pages does it open, quote, or synthesize?

- When Gemini-powered AI Overviews or Copilot summarize a topic, whose explanation becomes the “default narrative”?

You can think in terms of two worlds:

- AEO → “Can the answer engine find me and understand what I am saying?”

- AER → “Does the answer engine trust me enough to repeat my perspective to millions of users?”


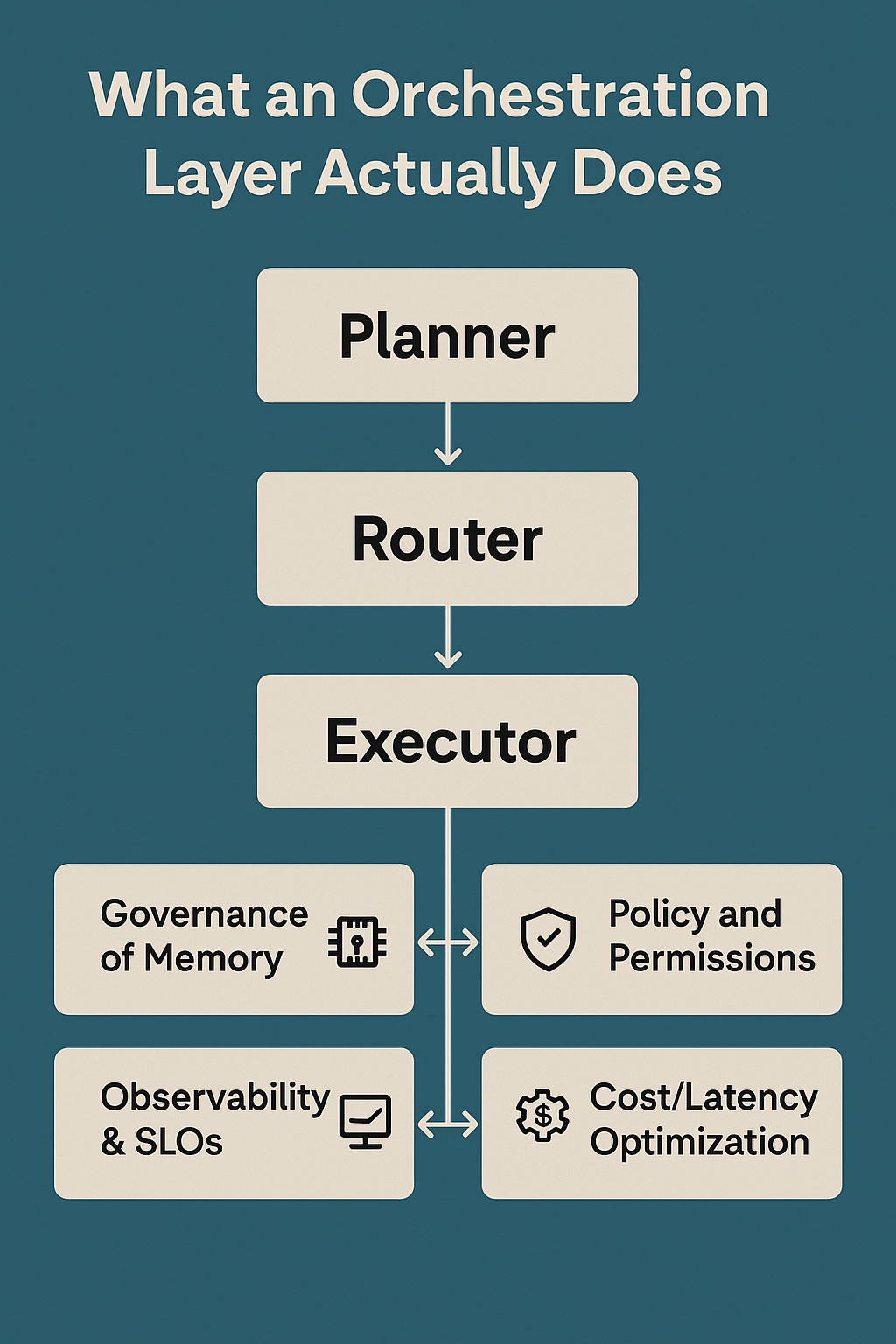

How Answer Engines Really Decide Which Sources to Use

No company shares the entirety of its ranking algorithm. However, product documentation, public partnerships, and large-scale experiments offer significant insight.

4.1 ChatGPT: Relevance, Timeliness, Credibility, Diversity, Readability

When ChatGPT Search browses, it generally favors sources that are:

- Highly relevant – The page provides a clear answer to the user’s question.

- Timely – Especially for fast-moving domains such as news, AI, and regulation.

- Credible – Well-established publishers, domain authorities, official documentation.

- Diverse – Often a mix of documentation, news, blogs, and reference sites.

- Readable – Clear page structure, clean headings, short paragraphs, minimal visual clutter.

Practically speaking:

A well-structured explanation of “ISO/IEC 42001 AI management system” from a reputable business or standards organization has a much greater likelihood of being opened and referenced than a generic marketing blog.

4.2 Perplexity AI: Expert Sources and Publisher Collaborations

Reports and analyses of Perplexity’s behavior, as well as its own public statements, show consistent trends:

- Niche-specific expertise – Websites that specialize deeply in a niche (e.g., AI governance, cardiology, climate science) frequently emerge as favored sources.

- Answer-first content – Pages with clear, well-written answers near the top.

- Authority indicators – Backlinks, editorial quality, and publisher reputation.

- Technical accessibility – Clean HTML, no heavy scripts preventing text from loading properly, and a sensible robots.txt.

- Publisher collaborations – Perplexity has formal content collaborations with publishers such as TIME, Fortune, Der Spiegel, Le Monde, Los Angeles Times, and others. Their content is integrated into the system and frequently referenced.

Its support materials indicate that Perplexity:

Searches the web in real time, gathers insights from leading sources, and distills those insights into summaries.

Collaborations fundamentally change the game:

If an answer engine has a licensing agreement with a publisher, that publisher’s content gets a structural advantage in accessing the answer box.

4.3 Gemini, Copilot, and Others: A Hybrid of SEO + AI

Gemini, Copilot, and other AI-based search experiences follow a similar pattern:

- Classic search ranking still applies

  - Page quality, backlinks, domain authority, topical authority, etc.

- The AI layer adds

  - Semantic understanding – What is the page really about?

  - Decomposition and reasoning – Which parts of which pages answer which sub-questions?

  - Safety and bias filters – Is this content hazardous, extremist, or misleading?

Therefore, if you want to build Answer Engine Reputation, you must perform well in both realms:

- The traditional realm – Classical SEO, authority, and technical hygiene

- The new realm – AI-ready structure, clarity, evidence, and safety


The Four Pillars of Answer Engine Reputation (AER)

We can break down AER into four pillars you can intentionally design for.

5.1 Pillar 1 — Authority: Who Said It?

Answer engines care who said what.

Authority includes:

- Domain authority – Links to you, mentions of you, domain age, trust signals

- Author authority – You consistently write about the same topics; your profile is clear and consistent

- External recognition – You are mentioned or quoted in other articles, research, or reports

Example

If a cardiologist has written fifty well-crafted, evidence-based guides about heart health, they are a better source to cite for:

“early symptoms of a heart attack”

…than a random lifestyle blog that barely touches on it in a listicle.

For you as a brand or expert:

Publish deep, consistent content around your core themes instead of spreading yourself across dozens of unrelated topics.

5.2 Pillar 2 — Clarity: How Easy Are You to Read?

Generative models are pattern recognizers. They like structured answers that are easy to understand.

Clarity means:

- Headings – Clear headings like “What is…?”, “How does it work?”, “Benefits”, “Risks”, “Global context”

- Short answer first, details second – Give the answer immediately, then elaborate.

- Simple language – Avoid unnecessary jargon and keep the flow logical.

Example

If a user asks:

“Explain federated learning in finance in one paragraph.”

An article that starts with:

“Federated learning in finance is a method for banks to develop shared AI models while keeping raw customer data private…”

…will be much easier for an LLM to quote than a page that spends three paragraphs on “the history of AI in banking” before mentioning federated learning at all.

5.3 Pillar 3 — Evidence: Can I Trust You?

AI answer engines want to avoid hallucinations, especially in areas such as health, finance, law, and public policy.

Evidence means:

- Cited data – You reference standards, research papers, regulatory texts, or reliable datasets.

- Consistent definitions – Your definitions match or align with other high-trust sources.

- Not obvious advertising – The page doesn’t look like a pure sales pitch or clickbait.

Example

For the topic:

“ISO/IEC 42001 AI management system”

A page that:

- Clearly defines what the standard is

- Links to an official ISO or standards-body page

- Explains how it applies in the US, EU, India, and other regions

…will be preferred over a shallow “SEO landing page” that only name-drops the standard as a buzzword.

5.4 Pillar 4 — Safety and Alignment: Will You Get Me Sued?

Legal and reputational risk is rising for AI systems:

- Lawsuits from major publishers for misuse of content

- Growing regulation around disinformation, hate speech, biometric data, and health claims

Answer engines will be more cautious with:

- Sources identified as extremist or highly partisan

- Sources that provide unverified medical or financial advice

- Sites with inflammatory, clearly misleading, or plagiarized content

Content that:

- Avoids extreme or conspiratorial claims

- Clearly distinguishes facts vs opinions

- Clearly separates general information vs professional advice

- Does not steal other people’s intellectual property

…is low-risk, high-value content for AI answer engines.


AER in Practice: Three Realistic Examples Across Regions

Let’s make AER concrete using three realistic examples across regions.

6.1 Example 1 — AI in Supply Chain Article

- Company A writes a 5,000-word sales brochure for its AI platform, filled with generic statements about “transforming supply chains.”

- Company B publishes:

  - “What Is AI in Supply Chain? A Guide for Manufacturers and Retailers.”

  - Articles on demand forecasting, risk detection, and carbon tracking

  - Case studies from North America, Europe, and India, with referenced data

For the query:

“Explain AI in supply chain using examples.”

AI answer engines will probably:

- Read both articles

- Use Company B’s content for the core explanation

- Mention Company A only if the user specifically asks about vendors

Result: Company B wins AER, even if Company A spends more on ads.

6.2 Example 2 — Small Clinic vs Large Global Health Website

- A large global health website has a referenced guide to “early symptoms of Type 2 diabetes.”

- A small clinic’s website is thin, unstructured, and mostly promotional.

Perplexity, ChatGPT, or Gemini will most likely:

- Use the global health website for the medical explanation

- Only show the small clinic when user intent is explicitly “near me”

Lesson:

Small clinics in India, Africa, Latin America, or Southeast Asia can still build AER by creating clear, evidence-based, locally relevant guides (for example, diet patterns, genetic risks, or cultural habits in their region).

6.3 Example 3 — Enterprise AI Governance Thought Leadership

- Generic consulting blog: “AI governance is important and we must be responsible.”

- Specialist article: “How to Implement ISO/IEC 42001 in a Bank: Roles, Processes, and Controls Across the US, EU, and India.”

For queries like:

“How do I implement ISO 42001 AI management system in a bank?”

AI answer engines will most likely:

- Choose the specialist article as the primary source

- Possibly surface the generic blog only when users search for that specific brand

Conclusion: AER rewards depth + specificity + clarity.


Building Answer Engine Reputation in 90 Days

You can’t “hack” AER overnight, but you can design for it intentionally.

Step 1 — Make Yourself Visible to AI Crawlers

- Check your robots.txt file — allow legitimate AI crawlers where appropriate.

- Provide a clean XML sitemap.

- Ensure your site is:

  - Fast

  - Mobile-friendly

  - Not hiding core content behind heavy JavaScript or paywalls (unless that is intentional).
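As a rough illustration of the robots.txt check, the sketch below uses Python's standard `urllib.robotparser` to test which crawlers a given robots.txt would let through. The sample file contents, the example URL, and the user-agent tokens in `AI_CRAWLERS` are assumptions for illustration; confirm the current token names in each vendor's crawler documentation before relying on them.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; in practice, load your own file instead.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: PerplexityBot
Disallow: /

User-agent: *
Allow: /
"""

# User-agent tokens assumed for illustration; verify them against vendor docs.
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot", "Google-Extended"]


def crawler_access(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Return, for each user agent, whether robots.txt allows fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    # example.com is a placeholder domain.
    report = crawler_access(ROBOTS_TXT, "https://example.com/guides/aer", AI_CRAWLERS)
    for agent, allowed in report.items():
        print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

Running a check like this against your key URLs is a quick way to spot a blanket `Disallow: /` that silently blocks one engine while allowing the others.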

Ask yourself:

“Would a simple crawler struggle to read my main text?”

If yes, so will AI answer engines.
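One way to approximate the "simple crawler" test is to count how much readable text is present in the raw HTML before any JavaScript runs. The sketch below uses only Python's standard `html.parser`; the two sample pages and the class and function names are hypothetical, and real crawlers differ in how much JavaScript they execute, so treat the result as a rough signal rather than a verdict.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collect the text a non-JavaScript crawler would see in raw HTML."""

    # Content inside these tags is never shown as page text.
    SKIPPED = {"script", "style", "template"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_word_count(html: str) -> int:
    """Count words available in the HTML without executing any scripts."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return len(" ".join(parser.chunks).split())


# Hypothetical pages: one server-rendered, one that renders only via JavaScript.
STATIC_PAGE = (
    "<html><body><h1>What is AER?</h1>"
    "<p>Answer Engine Reputation is the trust an answer engine places in a source.</p>"
    "</body></html>"
)
JS_ONLY_PAGE = (
    '<html><body><div id="root"></div>'
    '<script>renderApp({title: "What is AER?"});</script></body></html>'
)

if __name__ == "__main__":
    print("static page words:", visible_word_count(STATIC_PAGE))
    print("JS-only page words:", visible_word_count(JS_ONLY_PAGE))
```

A page whose count is close to zero is delivering its main text only after scripts run, which is exactly the situation the question above is meant to catch.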

Step 2 — Write Answer-First, Globally Contextual Content

Structure every page related to a strategic topic like this:

- Direct answer (2–3 sentences): “What is X, in simple words?”

- Expanded explanation: How X works, why it matters, benefits and risks.

- Global context: What X means in the US, EU, India, and the Global South (policy, adoption, risks).

- Use cases & examples: Sector-specific stories (banking, healthcare, manufacturing, education).

- Further reading & references: Official docs, standards, research, and high-quality external articles.

This structure makes your content much easier for AI systems to:

- Parse

- Summarize

- Accurately attribute

Step 3 — Build Deep Topic Clusters (Not One-Off Articles)

Pick your zones of influence, such as:

- “Enterprise AI governance”

- “Neuro-symbolic reasoning and enterprise AI”

- “Quantum AI in finance”

- “AI compliance for banks, telcos, and governments”

Then create content clusters:

- 1 pillar article — “The Ultimate Guide to [Topic]”

- 5–10 supporting articles — each addressing a precise sub-question

- Internal links with clear anchor text (not “Click here,” but “AI governance framework for banks”)

This mirrors how AI answer engines think:

“Who consistently produces good answers on this topic?”
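The anchor-text advice is easy to audit automatically. The sketch below, again using only the standard `html.parser`, lists links whose anchor text is generic. The `GENERIC_ANCHORS` set and the sample HTML are assumptions for illustration, and the check does not distinguish internal from external links.

```python
from html.parser import HTMLParser

# Phrases treated as "generic" anchor text; an assumed starter list, extend as needed.
GENERIC_ANCHORS = {"click here", "here", "read more", "learn more", "this article"}


class LinkCollector(HTMLParser):
    """Collect (href, anchor_text) pairs for every <a href=...> in a page."""

    def __init__(self) -> None:
        super().__init__()
        self._href = None
        self._text: list[str] = []
        self.links: list[tuple[str, str]] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            # Collapse internal whitespace so "click   here" matches "click here".
            text = " ".join("".join(self._text).split())
            self.links.append((self._href, text))
            self._href = None


def generic_anchor_links(html: str) -> list[tuple[str, str]]:
    """Return links whose anchor text says nothing about the target page."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    return [(href, text) for href, text in collector.links
            if text.lower() in GENERIC_ANCHORS]


# Hypothetical snippet with one descriptive and one generic link.
SAMPLE = (
    '<p>See our <a href="/ai-governance-banks">AI governance framework for banks</a>, '
    'or <a href="/pillar">click here</a>.</p>'
)

if __name__ == "__main__":
    for href, text in generic_anchor_links(SAMPLE):
        print(f"generic anchor {text!r} -> {href}")
```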

Step 4 — Strengthen Entity and Author Signals

Entity recognition is increasingly important to AI answer engines. Entities include people, organizations, products, and topics — and these are used to build internal knowledge graphs.

Help them identify you as an expert entity:

- Use a consistent author name, photo, and bio across platforms (website, Medium, LinkedIn, conference bios).

- Keep your About and Team pages well structured and clear about your expertise, location, and focus areas.

- Use appropriate schema markup (schema.org types such as Person, Organization, Article, and FAQPage) where possible.

- Seek mentions on reputable sites — podcasts, panels, interviews, guest posts, research collaborations.

You’re doing more than SEO; you’re building your “AI-era public identity.”
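To make the schema markup point concrete, here is a minimal sketch that builds schema.org `Article` markup with a nested `Person` author and `Organization` publisher, then wraps it in a JSON-LD script tag. The function names and every value in the example (author, URL, publisher, date) are placeholders, and the property set is deliberately small; check schema.org and each search engine's structured-data guidelines for the fields they actually expect.

```python
import json


def article_json_ld(headline: str, author_name: str, author_url: str,
                    publisher_name: str, date_published: str) -> dict:
    """Build a minimal schema.org Article description as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,  # ISO 8601 date string
        "author": {"@type": "Person", "name": author_name, "url": author_url},
        "publisher": {"@type": "Organization", "name": publisher_name},
    }


def as_script_tag(data: dict) -> str:
    """Serialize the dict as a JSON-LD <script> block for the page <head>."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so a value can never close the script element early.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    # All values below are placeholders for illustration.
    markup = article_json_ld(
        headline="How to Implement ISO/IEC 42001 in a Bank",
        author_name="Jane Doe",
        author_url="https://example.com/about/jane-doe",
        publisher_name="Example Consulting",
        date_published="2025-01-15",
    )
    print(as_script_tag(markup))
```

Using the same author name and profile URL in this markup as in your bios elsewhere supports the consistency point above.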

Step 5 — Stay on the Right Side of Safety and Law

As lawsuits against AI answer engines increase, their risk tolerance will decrease.

To be a long-term trusted source:

- Avoid extreme, conspiratorial, or deliberately misleading claims.

- Clearly distinguish between:

  - Facts vs opinions

  - General information vs professional advice

- Don’t steal other people’s intellectual property — analyze, synthesize, and add your own perspective.

If your content is:

- Safe

- Original

- Well-referenced

…it becomes the kind of material that AI answer engines love to surface again and again.


Knowing Whether Your Answer Engine Reputation Is Growing

There is no “ChatGPT dashboard” you can log into to see your AER score. But there are clear indicators you can watch.

- Search for yourself inside AI answer engines

  - Ask: “Who are the leading experts on [your topic]?”

  - Ask: “Summarize [your article topic] using recent expert sources.”

  If your name, brand, or URLs start appearing, your AER is working.

- Watch referral traffic from AI answer engines

  - Some tools (like Perplexity) send clicks via citations.

  - Track new referrers inside your analytics platform.

- Monitor branded searches and citations in the wild

  - Track mentions of your name, brand, frameworks, and article titles in blog posts, newsletters, and social feeds.

- Listen for qualitative signals

  - New inbound messages like: “We saw your explanation in Perplexity / ChatGPT and would like to talk.”

Over 6–12 months, these signals will tell you whether your AER is compounding — or whether you’re still invisible to this new layer of the web.
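For the referral-traffic signal, a small script can tally visits whose referrer looks like an AI answer engine. This is a sketch under stated assumptions: the hostnames in `AI_REFERRER_HOSTS` are illustrative guesses that you should verify against the referrers in your own logs, and many AI-driven visits arrive with no referrer at all, so the counts are a lower bound.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Hostnames assumed for illustration; verify against referrers in your own analytics.
AI_REFERRER_HOSTS = {
    "perplexity.ai": "Perplexity",
    "chatgpt.com": "ChatGPT",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
}


def classify_referrer(referrer_url: str) -> Optional[str]:
    """Return the engine name for a referrer URL, or None if it is not recognized."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    for known_host, engine in AI_REFERRER_HOSTS.items():
        # Match the host itself or any of its subdomains.
        if host == known_host or host.endswith("." + known_host):
            return engine
    return None


def count_ai_referrals(referrer_urls: list[str]) -> Counter:
    """Tally referrals per answer engine, ignoring unrecognized referrers."""
    engines = (classify_referrer(url) for url in referrer_urls)
    return Counter(engine for engine in engines if engine is not None)


if __name__ == "__main__":
    # Hypothetical referrer values as they might appear in an access log.
    sample_log = [
        "https://www.perplexity.ai/search?q=aer",
        "https://chatgpt.com/",
        "https://www.google.com/search?q=aer",
        "https://perplexity.ai/",
        "",
    ]
    for engine, hits in count_ai_referrals(sample_log).most_common():
        print(f"{engine}: {hits}")
```

Comparing these tallies month over month is a simple way to watch the 6–12 month trend described above.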

Frequently Asked Questions (FAQ)

Q1. Is Answer Engine Reputation just a new name for SEO?

No. SEO aims to rank pages in traditional search results. AER is about becoming a trusted source inside AI-generated answers. SEO is still a foundation, but AER adds layers of trust, safety, and topic ownership.

Q2. Do I need AER if my business is local (e.g., a clinic or small consultancy)?

Yes. Even local users in Delhi, Berlin, Lagos, São Paulo, or New York are starting to ask AI systems for advice:

“Best clinics near me for diabetes.”

“Simple explanation of GST compliance in India.”

If you produce clear, evidence-based, locally relevant content, AI answer engines can turn your small local brand into the go-to explainer for your region.

Q3. How fast can I see results from AER-focused efforts?

You might see early signals (citations, AI mentions, referrals) within a few months, but compounding AER is more like building a professional reputation: it typically takes 6–24 months of consistent publishing and ecosystem engagement.

Q4. Does social media activity help Answer Engine Reputation?

Indirectly, yes.

- High-quality posts on LinkedIn, X, YouTube, Medium, or local platforms can drive attention, backlinks, and citations.

- Those, in turn, strengthen your authority, entity graph, and demand signals, which answer engines can pick up.

Think of social media as a way to amplify and validate the deep content you publish on your own domain.

Q5. Should I focus on one answer engine (e.g., only Perplexity) or all of them?

Design for principles, not for a single platform.

If you optimize for:

- Authority

- Clarity

- Evidence

- Safety

…you will naturally become attractive to ChatGPT, Gemini, Perplexity, Claude, Copilot, and future AI systems. Partnerships and platform nuances matter, but the core game is the same.

Q6. What types of content build the fastest AER?

In practice, these formats work very well:

- Deep explainers – “What is X, and why does it matter globally?”

- How-to guides with governance or risk framing – especially in regulated sectors.

- Comparisons and clarifications – “X vs Y for enterprises,” “Which standard should we choose?”

- Region-aware guides – “How [topic] works in the US, EU, India, and the Global South.”

Glossary

Answer Engine (AE)

An AI-powered system (like ChatGPT Search, Perplexity, Gemini, Copilot, Claude with browsing) that answers questions directly in natural language instead of simply listing links.

Answer Engine Optimization (AEO)

The discipline of optimizing your content so that AI answer engines can discover, understand, and include it when generating answers.

Answer Engine Reputation (AER)

The implicit trust and authority score that answer engines assign to a source, author, or domain, shaping how often and how prominently that source is cited.

AI Overviews (Google)

AI-generated summaries that appear at the top of some Google search results, combining information from multiple pages.

Entity Graph / Knowledge Graph

A structured representation of entities (people, organizations, places, concepts) and their relationships. Answer engines use these graphs to understand who is who and what is related to what.

ISO/IEC 42001

An international standard for AI Management Systems (AIMS). It specifies requirements for establishing, implementing, maintaining, and continually improving an organization’s management of AI risk and governance across the AI lifecycle.

Global South

A term used to refer broadly to regions including parts of Asia, Africa, Latin America, and the Middle East, often with different regulatory and market contexts for AI adoption compared to the Global North (US, EU, etc.).

RAG (Retrieval-Augmented Generation)

An AI pattern where a model retrieves documents from a knowledge source and uses them as context while generating an answer.


Conclusion: Design for Reputation, Not Just Ranking

If you remember only one idea from this article, let it be this:

Answer Engine Reputation is built when an AI system can say, with confidence:

“If I quote this source, the user is more likely to be helped—and less likely to be harmed.”

To reach that point, you don’t need hacks or tricks. You need:

- Authority – Deep, consistent expertise on chosen topics

- Clarity – Sharp, answer-first, globally aware explanations

- Evidence – References, real-world examples, and transparent reasoning

- Safety – Responsible claims, ethical framing, and respect for law and IP

Do this across ChatGPT, Gemini, Perplexity, Claude, Copilot, and the next generation of AI systems, and over the next 12–24 months you won’t just rank.

You’ll become one of the voices that AI answers are built from—across geographies, industries, and languages.

To learn more about GEO Analytic Stack, you can read my earlier article

You can also read my article on Medium.


References & Further Reading

- OpenAI – “Introducing ChatGPT Search” and ChatGPT Search help documentation

- Perplexity AI – Official help center and announcements on publisher partnerships

- ISO / KPMG / Microsoft – Official and executive explainers on ISO/IEC 42001

- SEO.com, HubSpot, Webflow, Powered by Search – Guides on Answer Engine Optimization (AEO)

- Search Engine Land, Analytics Vidhya – Analyses of AI search, AI Overviews, and AI-powered browsing

- Major news and publisher partnership coverage (e.g., Le Monde’s partnership with Perplexity; global reporting on AI media licensing deals)