Representation Economy

The AI era is often described as a race for smarter models. That is too narrow. The deeper shift is that AI does not act on reality directly. It acts on representations of reality. That means the real contest is no longer only about intelligence. It is also about how well reality becomes visible, connected, current, interpretable, and governable inside systems.

This is where the idea of the Representation Economy begins. In this economy, value increasingly flows to what can be clearly represented, reliably understood, and responsibly acted upon. Institutions that represent reality better will coordinate better, decide better, and earn more trust. Institutions that do not will become fragile, slow, and increasingly invisible inside the systems that shape modern decisions.

1) What is the Representation Economy?

The Representation Economy is an economic order in which value depends on how well reality is represented in a machine-readable form.

In practical terms, this means the winners of the AI era will not be defined only by who has the biggest model or the most compute. They will be defined by who can represent customers, suppliers, assets, risks, obligations, conditions, and change more clearly and more responsibly. Put differently, it is an economy in which value flows to what can be clearly represented, reliably understood, and responsibly acted upon.

2) Why is the Representation Economy important now?

It matters now because intelligence is becoming more abundant, while trustworthy representation remains scarce.

As models improve and become cheaper, raw intelligence becomes easier to access. What remains scarce is the ability to turn messy, fragmented, real-world reality into something systems can actually trust and use. That scarcity becomes the new source of advantage. The next phase of AI will not be defined only by smarter models. It will be defined by better systems for representing reality accurately, continuously, and responsibly.

3) How is the Representation Economy different from the data economy?

The data economy emphasized accumulation. The Representation Economy emphasizes faithful understanding.

The earlier digital mindset rewarded collecting, storing, and extracting data. But data alone does not create understanding. Data is partial, contextual, and often disconnected. Representation is what gives data meaning by connecting signals to entities, condition, context, and change over time. Data is the trace. Representation is the usable picture.

4) What does it mean to say AI acts on representations, not reality?

It means AI systems never engage reality directly. They engage structured versions of reality created by records, categories, signals, and models.

A model does not see the patient, the farmer, the supplier, or the firm itself. It sees whatever the system has encoded about them. If that encoded picture is thin, stale, fragmented, or distorted, the model will still reason over it. That is why many AI failures are not failures of intelligence first. They are failures of representation.

5) Why do so many AI systems fail before the model begins?

Because the real failure often starts in weak visibility, weak identity, and weak representation.

Organizations usually think the hard problem is reasoning. But before a system can reason well, it must know what it is looking at. If identity is fragmented, signals are disconnected, state is shallow, and context is missing, then intelligence is operating over a distorted picture. That is the sense in which the failure begins before the model begins.

6) Why is more data not the same as more understanding?

Because data accumulation does not automatically create coherence.

Many institutions are data-rich but insight-poor. They capture events, but miss condition. They store records, but lack continuity. They collect signals, but do not turn them into a coherent representation of what is happening, to whom, and in what state. More data can even create false confidence if it is disconnected from identity and meaning.

7) What is the “data illusion” in AI?

The data illusion is the belief that more data automatically produces better decisions.

That belief worked as a simple story in the earlier digital era, but it breaks down in the AI era. The issue is not possession of data. The issue is whether the system can represent reality faithfully enough to act on it. The shift is from asking “How much data do we have?” to asking “What reality can we represent well enough to trust?”

8) What is the “reality gap” in AI systems?

The reality gap is the distance between the world outside the system and the picture inside the system.

A system can look sophisticated and still be wrong about the world. Dashboards may look complete. Models may appear intelligent. Reports may feel authoritative. Yet the internal map may still be partial, stale, or distorted. In the AI era, stronger models do not remove this gap. They magnify it if the underlying representation is weak.
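One rough way to make the gap concrete is to compare the system's records against a small audited sample of the world. The sketch below is only an illustration under stated assumptions: the function name `reality_gap`, the dictionary shapes, and the `max_age` threshold are hypothetical, and it presumes that a trusted ground-truth sample exists at all, which is often the hard part.

```python
from datetime import datetime, timedelta, timezone


def reality_gap(observed, recorded, now, max_age):
    """Compare an audited sample of the world with the system's picture.

    observed: entity_id -> true value (hypothetical audit sample)
    recorded: entity_id -> (recorded value, last_updated timestamp)
    """
    # Partial: entities that exist in the world but not in the system.
    missing = sorted(k for k in observed if k not in recorded)
    # Stale: entities whose record has not been refreshed within max_age.
    stale = sorted(
        k for k, (_, seen) in recorded.items()
        if k in observed and now - seen > max_age
    )
    # Distorted: entities whose recorded value disagrees with the audit.
    distorted = sorted(
        k for k, (value, _) in recorded.items()
        if k in observed and observed[k] != value
    )
    return {"missing": missing, "stale": stale, "distorted": distorted}


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
observed = {"a": "active", "b": "active", "c": "closed"}
recorded = {
    "a": ("active", now - timedelta(days=1)),
    "b": ("closed", now - timedelta(days=90)),
}
print(reality_gap(observed, recorded, now, max_age=timedelta(days=30)))
```

An entity can appear in more than one bucket: in the example, `b` is both stale and distorted, which is the common case where an outdated record has drifted away from the world.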

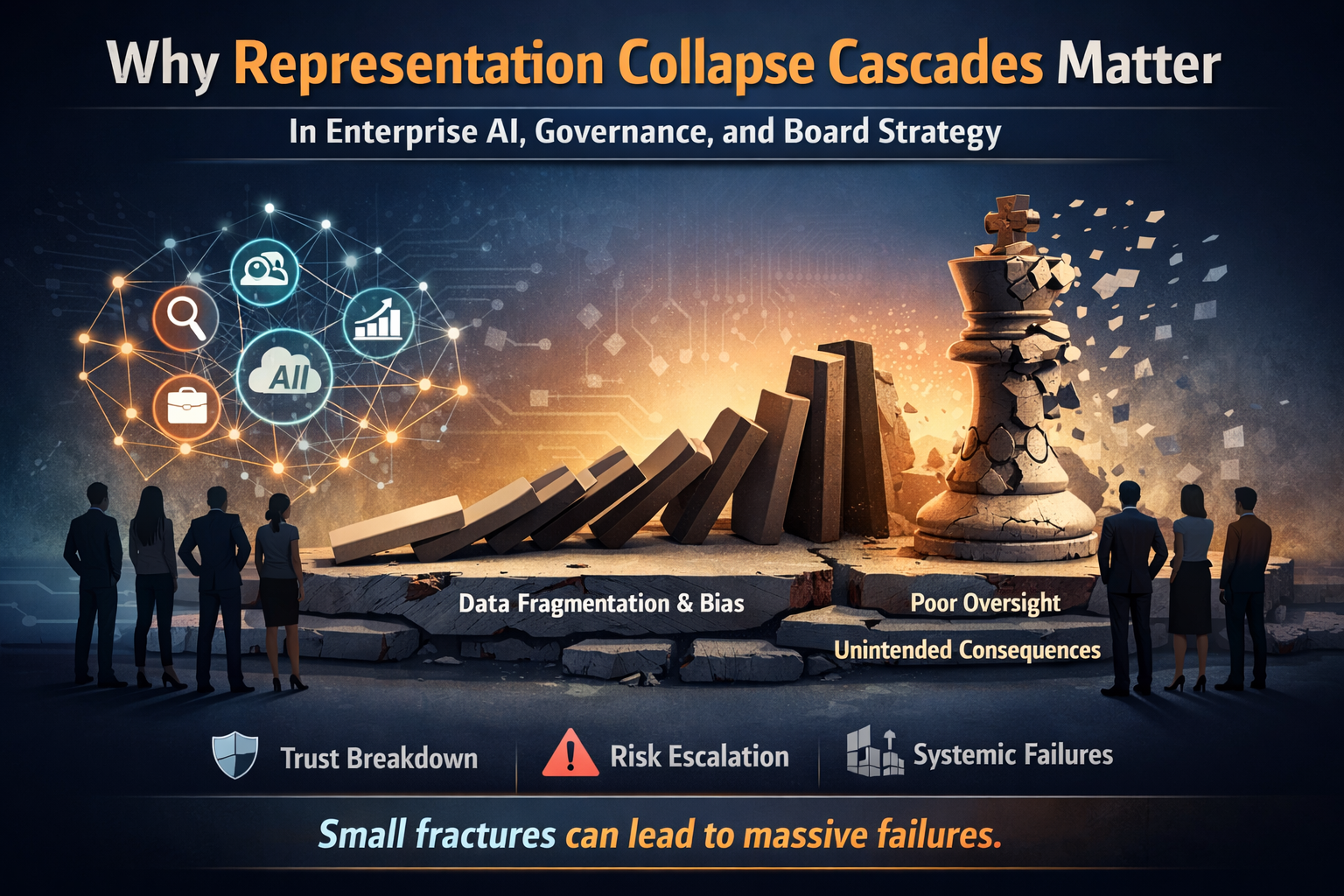

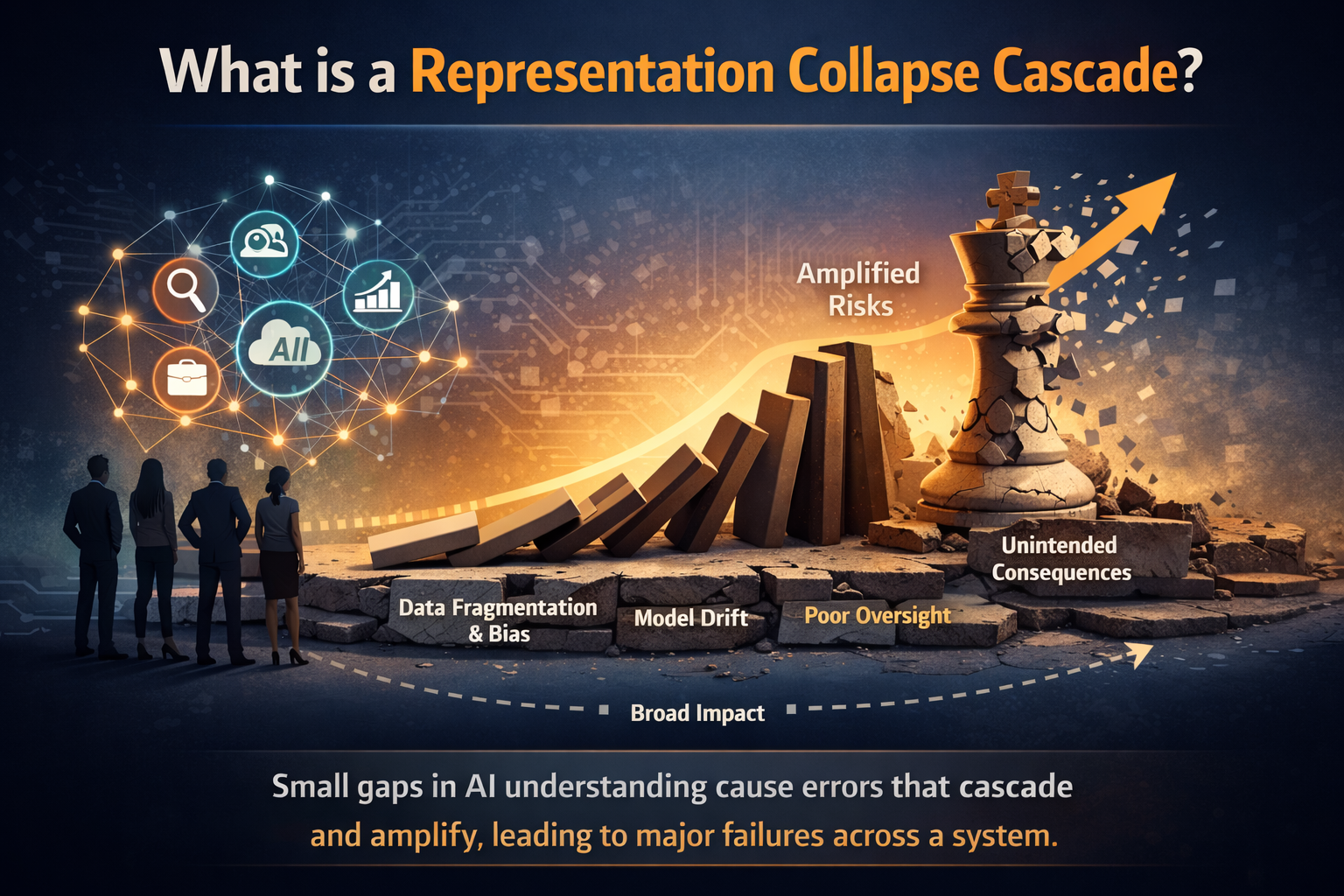

9) Why does weak representation become dangerous when AI gets better?

Because stronger intelligence scales misunderstanding faster.

A weak system with weak intelligence may do little. A weak system with strong intelligence can act confidently on a distorted picture. It can automate incompleteness, scale approximation, and make consequential decisions faster than institutions can correct them. That is why intelligence without representation becomes dangerous, not transformative.

10) Why is visibility becoming economic power?

Because what systems see clearly, they can price, trust, coordinate, include, and act upon more effectively.

In the Representation Economy, visibility is not just descriptive. It is economic. What is clearly represented moves faster, is trusted more, and participates more fully. What is poorly represented appears risky, gets delayed, or is excluded altogether. The new divide is not only between those who have AI and those who do not. It is also between those who are well represented and those who are not.

11) What does “if it is not represented, it does not exist” mean?

It does not mean that something is unreal. It means that it is operationally absent inside the system.

A thing can be real and still remain economically weak if it does not enter the system in a form that can be recognized, structured, processed, and trusted. Systems allocate attention, action, and value through what they can understand. What does not cross that boundary well remains hard to finance, serve, insure, coordinate, or govern.

12) Why is the Representation Economy also a theory of inclusion?

Because participation increasingly depends on representation.

If an entity appears in the system only as fragments, it will be approximated, treated cautiously, or excluded. If it appears with continuity, context, and trustworthy identity, it becomes easier to include. That is why the Representation Economy is not only a theory of value. It is also a theory of inclusion, fragility, and institutional responsibility.

The SENSE–CORE–DRIVER Framework

13) What is the SENSE–CORE–DRIVER framework?

SENSE–CORE–DRIVER is a three-layer architecture for understanding how intelligent institutions actually work.

In this framework, every AI system operates across three layers whether we design for them explicitly or not. SENSE asks whether the system can see reality clearly. CORE asks whether it can reason effectively. DRIVER asks whether it can act in a trustworthy and accountable way. This framework matters because it shifts the conversation from models alone to the full institutional architecture of seeing, deciding, and acting.

14) Why is this framework important?

Because most AI conversations focus too much on CORE and too little on the layers that make intelligence usable.

Organizations overinvest in reasoning and underinvest in visibility and legitimacy. That is the structural mistake the framework exposes. Intelligence may be the most visible layer, but it is not the foundation. If SENSE is weak, CORE reasons over fragments. If DRIVER is weak, action loses trust.

15) What is SENSE?

SENSE is the layer where reality becomes machine-readable.

SENSE is composed of Signal, ENtity, State, and Evolution. It is the part of the system that detects traces from the world, attaches those traces to something persistent, models current condition, and updates that condition over time. Before a system can think well, it must first see well. SENSE is therefore not a preliminary layer. It is the foundation of all trustworthy intelligence.
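The four parts of SENSE can be sketched as a small data structure. This is a minimal illustration, not a reference implementation: the class names `Signal`, `Entity`, and `SenseLayer`, the alias-based identity resolution, and the "newest signal wins" update rule are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class Signal:
    """Signal: a raw trace from the world (hypothetical shape)."""
    source_key: str        # identifier as it appears in the source system
    attribute: str         # what was observed, e.g. "on_time_rate"
    value: object
    observed_at: datetime


@dataclass
class Entity:
    """ENtity: something persistent that signals attach to."""
    entity_id: str
    aliases: set = field(default_factory=set)    # source keys that resolve here
    state: dict = field(default_factory=dict)    # State: current condition
    latest: dict = field(default_factory=dict)   # attribute -> newest timestamp
    history: list = field(default_factory=list)  # Evolution: trail over time


class SenseLayer:
    def __init__(self):
        self._entities = {}
        self._alias_index = {}

    def register(self, entity_id, aliases):
        entity = Entity(entity_id, set(aliases))
        self._entities[entity_id] = entity
        for alias in aliases:
            self._alias_index[alias] = entity_id
        return entity

    def ingest(self, signal) -> Optional[Entity]:
        # Attach the trace to a persistent entity; unresolved signals
        # are returned as None rather than silently guessed at.
        entity_id = self._alias_index.get(signal.source_key)
        if entity_id is None:
            return None
        entity = self._entities[entity_id]
        entity.history.append(signal)
        # Update current state only if this signal is not older than
        # the newest one already seen for the same attribute.
        newest = entity.latest.get(signal.attribute)
        if newest is None or signal.observed_at >= newest:
            entity.state[signal.attribute] = signal.value
            entity.latest[signal.attribute] = signal.observed_at
        return entity


sense = SenseLayer()
sense.register("supplier-001", {"ERP:4471", "CRM:acme-ltd"})
t0 = datetime(2024, 1, 1, tzinfo=timezone.utc)
sense.ingest(Signal("ERP:4471", "on_time_rate", 0.97, t0))
supplier = sense.ingest(
    Signal("CRM:acme-ltd", "on_time_rate", 0.81, t0 + timedelta(days=30))
)
print(supplier.state)          # current condition, merged across two sources
print(len(supplier.history))   # every signal is kept, so change stays visible
```

The design choice worth noting is that state and history are kept separately: state answers "what is the condition now", while history preserves how that condition changed.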

16) Why does SENSE matter so much?

Because if reality is weakly seen, everything built above it inherits distortion.

A system can monitor everything and still understand nothing if its signals are noisy, its entities are fragmented, its state is shallow, and its representations do not evolve. SENSE determines whether the system is working on reality or on approximation. Weak SENSE does not create a small error. It creates a structural one.

17) What is CORE?

CORE is the cognition layer where the system comprehends context, optimizes decisions, realizes action, and evolves through feedback.

This is the layer most people mean when they talk about AI intelligence. It is where systems reason, compare, predict, rank, and optimize. But CORE is not sovereign. It depends entirely on what SENSE has made visible, and its output only becomes socially acceptable when DRIVER can govern it.

18) Why is intelligence not enough?

Because intelligence scales what it is given; it does not repair weak foundations.

If representation is weak, better reasoning simply produces faster distortion. A system can optimize brilliantly and still optimize the wrong proxy. It can recommend the right answer for the wrong reason. It can be technically impressive and institutionally fragile. That is why intelligence alone cannot run enterprises or societies safely.

19) What is DRIVER?

DRIVER is the governance and legitimacy layer that makes action trustworthy.

Once systems move from advice to action, capability is no longer enough. Institutions need a layer that governs who delegated authority, what representation was used, which identity was affected, how decisions are verified, how actions are executed, and what recourse exists if the system is wrong. DRIVER is the answer to the question: Can I trust you to act?
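The six questions in that paragraph can be read as required fields on any request to act. The sketch below is one hedged way to express that idea: the `ActionRequest` record, its field names, and the rule that every field must be filled before execution are assumptions for illustration, not a prescribed schema.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass(frozen=True)
class ActionRequest:
    """Hypothetical record a DRIVER layer might require before acting."""
    delegated_by: Optional[str]        # who delegated authority
    representation_ref: Optional[str]  # which representation was used
    identity: Optional[str]            # which identity is affected
    verified_by: Optional[str]         # how the decision was verified
    execution_plan: Optional[str]      # how the action will be executed
    recourse_channel: Optional[str]    # what recourse exists if it is wrong


def missing_driver_fields(request):
    """Return the names of governance fields that are empty or None."""
    return [f.name for f in fields(request) if not getattr(request, f.name)]


def may_execute(request):
    """Allow action only when every governance question has an answer."""
    return not missing_driver_fields(request)


request = ActionRequest(
    delegated_by="credit-policy-committee",
    representation_ref="customer-snapshot-2024-06-01",
    identity="customer-8812",
    verified_by="second-model-review",
    execution_plan="adjust-credit-limit",
    recourse_channel=None,  # no appeal path defined yet
)
print(may_execute(request))
print(missing_driver_fields(request))
```

In this example the request is blocked because no recourse channel is defined, which mirrors the point of the layer: capability alone does not authorize consequence.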

20) Why is DRIVER becoming more important in AI?

Because the real risk begins when systems move from recommendation to consequence.

When AI starts approving, denying, pricing, prioritizing, routing, or executing, the issue is no longer just whether the model is clever. The issue is whether authority, accountability, proof, verification, and recourse are in place. Trust begins when action becomes governable. That is a governance problem, an engineering problem, and increasingly a market problem.

21) What is the simplest way to understand the relationship between SENSE, CORE, and DRIVER?

SENSE sees, CORE reasons, DRIVER governs action.

Another way to put it is this: first reality becomes visible, then reality is interpreted, then action is executed under conditions the world can trust. This order is not optional. It is foundational. When institutions reverse it and start with CORE, they build fragile systems that reason over incomplete reality and act without enough legitimacy.
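The ordering can be sketched as a pipeline in which each layer gates the next. This is a deliberately small illustration: the function `run_pipeline`, its callable parameters, and the returned status strings are hypothetical.

```python
from typing import Callable, Optional


def run_pipeline(
    state: Optional[dict],
    reason: Callable[[dict], str],
    is_governed: Callable[[str], bool],
) -> str:
    # SENSE: without a usable picture of reality, do not reason at all.
    if not state:
        return "deferred: no representation"
    # CORE: interpret the represented state and propose an action.
    proposal = reason(state)
    # DRIVER: execute only if the proposed action is governable.
    if not is_governed(proposal):
        return f"escalated: {proposal}"
    return f"executed: {proposal}"


def reorder_rule(state: dict) -> str:
    # Stand-in for the CORE layer; a real system might call a model here.
    return "reorder" if state["stock"] < 10 else "hold"


approved_actions = {"hold"}  # actions delegated to the machine

print(run_pipeline({"stock": 50}, reorder_rule, approved_actions.__contains__))
print(run_pipeline({"stock": 3}, reorder_rule, approved_actions.__contains__))
print(run_pipeline(None, reorder_rule, approved_actions.__contains__))
```

Note that the reasoning function never runs when the state is missing, and a proposal outside the delegated set is escalated rather than executed.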

Why the Framework Matters Strategically

22) Why are most institutions building AI in the wrong order?

Because they start with intelligence instead of building visibility and legitimacy first.

CORE demos well. It benchmarks easily. It looks like progress. SENSE and DRIVER are slower, quieter, and harder. But those are the layers that determine whether systems endure under consequence. The conclusion is clear: institutions should not build from CORE outward. They should build from the edges inward. First make reality visible enough. Then make action trustworthy enough. Only then let intelligence scale between them.

23) What does it mean to “build SENSE and DRIVER first”?

It means building the foundations of legibility and legitimacy before scaling AI action.

On the SENSE side, that means better signals, persistent entities, richer state, and continuity over time. On the DRIVER side, that means clearer delegation, better verification, stronger accountability, governed execution, and meaningful recourse. AI should be introduced into a system where reality is visible enough and action is governable enough to deserve consequence.

24) How does the Representation Economy change competitive advantage?

It shifts advantage away from model access alone and toward representation quality, trust, and governable execution.

Two organizations can use the same model and get very different outcomes. The better-represented organization will detect change earlier, understand entities more deeply, make better decisions, and act with greater legitimacy. In a same-model world, representation becomes the deeper source of edge. That is why related essays in this series point toward representation premium, representation capital, representation infrastructure, and representation alpha.

25) Why will new company categories emerge in the Representation Economy?

Because once representation becomes the source of value, entire new infrastructure layers become economically necessary.

This includes systems for identity continuity, representation correction, verification, recourse, insurable trust, delegation rating, portable machine-readable reality, and representation forensics. The frontier shifts from data infrastructure and intelligence infrastructure toward representation infrastructure and delegation infrastructure.

26) Why is trust structural in the Representation Economy?

Because trust is not an external layer added after the fact. It is embedded in how representation is created and how action is governed.

An entity participates more when it believes it is being represented fairly, that its representation will not be misused, and that recourse exists if something goes wrong. Representation without trust becomes extraction. This is why the Representation Economy is not just about seeing more. It is about seeing under conditions that sustain participation.

27) Why does ethics begin before the model?

Because the first moral question is not only how decisions are made, but who is represented well enough to matter.

A system may be fair relative to the data it sees and still be deeply unjust if critical reality never enters the system properly. Thin representation operating quietly at scale can create exclusion long before visible denial or formal bias appears. The point is simple: justice in the AI era begins not only at decision, but at representation.

28) What is representation failure?

Representation failure is what happens when systems misread reality because the underlying representation is thin, fragmented, stale, or distorted.

This can look like misclassification, delayed action, false confidence, weak trust, invisible dependencies, or systematic exclusion. It is not just a technical issue. It becomes economic, institutional, and moral because decisions and actions now operate at scale. Representation failure is therefore one of the deepest hidden risks in the AI era.

29) What is the biggest misconception about AI today?

The biggest misconception is that intelligence is the primary bottleneck.

This argument turns that assumption upside down. In many real-world systems, the deeper bottleneck is not reasoning power but representational quality. Institutions expected intelligence to be the breakthrough. Instead, intelligence is exposing fragmentation, incomplete identities, weak continuity, and poor governance. The room was already messy. AI simply turned on a brighter light.

30) What is the central leadership question in the Representation Economy?

The core leadership question is no longer “How smart is our AI?” but “What can our systems actually see, understand, and govern well enough to act on?”

That leads to harder questions. What realities remain weakly represented? Where is visibility still thin? Where is action weakly governed? Where are we mistaking activity for understanding? Where has trust not yet been earned? These are not just technical questions. They are institutional design questions.

31) What is the one-sentence summary of the Representation Economy and the SENSE–CORE–DRIVER framework?

The Representation Economy is the emerging AI-era order in which value flows to what systems can represent clearly, reason over effectively, and act on responsibly through SENSE, CORE, and DRIVER.

Put even more simply: SENSE makes reality visible, CORE makes intelligence possible, and DRIVER makes action legitimate. Institutions that understand this will build differently. They will not just use AI differently. They will become different kinds of institutions.

Why this framework matters now

The systems that endure will not be the ones that merely sound intelligent. They will be the ones that remain understandable, governable, and survivable. That is why the Representation Economy is not a side topic within AI strategy. It is a way of naming the deeper shift beneath the AI era itself. It explains why visibility, identity, context, trust, and recourse are moving from supporting concerns to first-order economic concerns.

This is also why the future belongs not simply to those who compute more, but to those who represent reality more clearly and act on it more responsibly. In that sense, the Representation Economy is not only a theory of AI value creation. It is also a theory of participation, trust, inclusion, fragility, and institutional redesign.

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The Firm of the AI Era Will Be Built Around Representation: Why Institutions Must Redesign Themselves for the SENSE–CORE–DRIVER Economy – Raktim Singh

- The Representation Stack: The New Architecture of Intelligent Institutions in the AI Economy – Raktim Singh

- Representation Economics: The New Law of Value Creation in the AI Era – Raktim Singh

- What Is the Representation Economy? (raktimsingh.com)

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale (raktimsingh.com)

- Firms Won’t Be Defined by Employees. They Will Be Defined by Delegation – Raktim Singh

- The New Company Stack: The 7 Business Categories That Will Emerge in the Representation Economy – Raktim Singh

- The Representation Attack Surface: Why AI’s Biggest Threat Is Reality Hacking, Not Model Hacking – Raktim Singh

- The Chief Representation Officer: Why Institutions Collapse When Machine-Readable Reality Falls Behind – Raktim Singh

- The Scarcity of Reality: Why the AI Economy Will Be Defined by the Lifecycle of High-Trust Representation – Raktim Singh

- Delegation Rating Agencies: Why the AI Economy Needs a New System to Rate Machine Authority – Raktim Singh

- The Machine-Readable Franchise: How Small Firms Will Win in the AI Trust Economy – Raktim Singh

- Representation Due Diligence: Why Every AI-Era Deal Must Start with a Reality Audit – Raktim Singh

- Representation Economy and the SENSE–CORE–DRIVER Framework — foundational architecture for how institutions see, think, and act. (raktimsingh.com)

- The Representation Utility Stack — on interoperable reality as the next competitive layer. (raktimsingh.com)

- Representation Due Diligence — why AI-era deals must start with a reality audit. (raktimsingh.com)

- Recourse Platforms — why correction, appeal, and recovery become core AI infrastructure. (raktimsingh.com)

- The New Company Stack — business categories emerging in the Representation Economy. (raktimsingh.com)

- Representation Cold Start — why some industries cannot use AI until reality becomes machine-ready. (raktimsingh.com)

- The Representation Boundary: Why AI Systems Replace Reality—and Why It Will Define Who Wins the AI Economy – Raktim Singh

- Representation Collapse: Why AI Systems Fail Between Too Little Reality and Too Much – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.
