The SENSE–CORE Handoff Protocol

For the last few years, the AI conversation has been dominated by one question: How intelligent can machines become?

That is the wrong starting point.

Most enterprise AI failures are not caused by weak intelligence alone. They happen because organizations confuse three different problems: Representation, Reasoning, and Responsibility.

AI systems do not operate directly on reality. They operate on representations of reality. They do not merely “think.” They reason over structured context, assumptions, goals, constraints, and evidence. And once connected to workflows, APIs, payments, approvals, machines, customer journeys, or enterprise systems, they begin to create consequences.

This is the RRR problem of AI:

Representation: Can the institution represent reality correctly?

Reasoning: Can the system reason correctly about that representation?

Responsibility: Can the institution act legitimately on the basis of that reasoning?

In the SENSE–CORE–DRIVER framework:

Representation maps to SENSE.

Reasoning maps to CORE.

Responsibility maps to DRIVER.

SENSE makes reality machine-legible.

CORE makes reality intelligible.

DRIVER makes action legitimate.

The future of enterprise AI will not be decided only by who has access to the most powerful models. It will be decided by which institutions can see better, reason better, and act responsibly.

The SENSE–CORE Handoff Protocol explains how intelligent systems transition from representing reality (SENSE) to reasoning about reality (CORE), and why failures at this boundary create unreliable, unsafe, and untrustworthy AI systems.

The Wrong Question: “How Intelligent Is the AI?”

For the last few years, the AI conversation has been dominated by one question:

How intelligent can machines become?

This question has shaped boardroom discussions, technology roadmaps, startup valuations, enterprise pilots, and public imagination. Organizations have rushed to adopt large language models, copilots, agents, vector databases, RAG systems, AI governance tools, and automation platforms.

But a deeper problem is now becoming visible.

Most enterprise AI failures are not caused by weak intelligence alone.

They happen because organizations confuse three very different problems:

Representation. Reasoning. Responsibility.

AI systems do not operate directly on reality. They operate on representations of reality.

They do not simply “think.” They reason over structured or unstructured representations, assumptions, context, goals, constraints, and institutional priorities.

And once connected to workflows, APIs, systems of record, customer journeys, payments, approvals, supply chains, machines, or decisions, they do not merely produce outputs. They begin to affect the real world.

That is why AI is not one problem.

It is three problems:

Can the institution represent reality correctly?

Can the system reason correctly about that representation?

Can the institution act responsibly on the basis of that reasoning?

This is the RRR problem of AI:

Representation, Reasoning, and Responsibility.

In the SENSE–CORE–DRIVER architecture, these map naturally:

Representation belongs to SENSE.

Reasoning belongs to CORE.

Responsibility belongs to DRIVER.

SENSE makes reality machine-legible.

CORE interprets and reasons.

DRIVER determines whether action is legitimate, authorized, verifiable, reversible, and accountable.

This distinction matters because many organizations are using more intelligence to solve problems that are not intelligence problems.

They are using better models to compensate for poor representation.

They are using governance committees to compensate for poor reasoning.

They are using automation to compensate for unclear responsibility.

That is why many AI pilots fail in production.

The demo may be impressive.

The model may be powerful.

The workflow may be automated.

But the institution still cannot reliably see, reason, and act.

Why This Matters Now

The global AI conversation is already moving beyond raw model performance.

NIST’s AI Risk Management Framework treats trustworthy AI as a socio-technical discipline involving validity, reliability, safety, security, resilience, accountability, transparency, explainability, interpretability, privacy, and fairness, not merely model accuracy. (NIST)

The EU AI Act focuses heavily on risk management, data governance, documentation, logging, human oversight, accuracy, robustness, and cybersecurity for high-risk AI systems. (Digital Strategy)

The OECD AI Principles also emphasize trustworthy AI that respects human rights and democratic values, while promoting robustness, safety, security, transparency, and accountability. (OECD)

These developments point to a clear shift:

Enterprise AI is no longer only about smarter models. It is about building intelligent institutions.

An intelligent institution must be able to do three things:

It must represent reality with enough fidelity.

It must reason over that representation with enough discipline.

It must act with enough legitimacy.

That is the deeper architecture this article proposes.

The First Mistake of the AI Era

The first mistake of the AI era is treating every AI failure as a model failure.

When an AI system fails, the instinct is usually to say:

The model hallucinated.

The model missed context.

The model needs fine-tuning.

The prompt was weak.

The data was insufficient.

The reasoning model was not strong enough.

Sometimes that diagnosis is correct.

But often, it is incomplete.

A bank may say its AI credit assistant is unreliable. But the deeper issue may be that customer identity, income, liabilities, account relationships, repayment behavior, and risk exposure are fragmented across systems.

That is not primarily a reasoning problem.

It is a representation problem.

A manufacturer may say its AI maintenance model is weak. But the deeper issue may be that machine telemetry is inconsistent, sensor readings are stale, maintenance logs are incomplete, and equipment identities are duplicated.

Again, this is not primarily a model problem.

It is a SENSE problem.

A healthcare institution may say an AI recommendation engine is risky. But the deeper issue may be unclear authority: Who can approve the recommendation? Who can override it? How is consent captured? What happens if the recommendation is wrong?

That is not only a reasoning problem.

It is a responsibility problem.

This is why organizations need a better diagnostic lens.

They need to ask:

Is this a Representation problem?

Is this a Reasoning problem?

Or is this a Responsibility problem?

The RRR Framework: Representation, Reasoning, Responsibility

The RRR framework says that every serious AI system must solve three connected but distinct problems.

- The Representation Problem

Can the system correctly represent the relevant reality?

This includes entities, identities, states, relationships, events, context, provenance, constraints, freshness, uncertainty, and missing information.

Representation is not just data.

Data is raw material.

Representation is structured meaning.

A transaction record is data.

A customer risk state is representation.

A sensor reading is data.

A machine health state is representation.

A support ticket is data.

A customer frustration pattern is representation.

Representation answers the question:

What does the institution believe is true about the world right now?

This is the domain of SENSE.

SENSE detects signals, attaches them to entities, builds state representation, and updates that state as reality evolves.

- The Reasoning Problem

Can the system reason correctly about the represented reality?

This includes inference, planning, comparison, prioritization, causal interpretation, scenario analysis, optimization, tradeoff management, decision support, and recommendation.

Reasoning answers the question:

Given what we believe is true, what should we understand, infer, recommend, or decide?

This is the domain of CORE.

CORE comprehends context, optimizes decisions, realizes possible actions, and evolves through feedback.

- The Responsibility Problem

Can the institution act legitimately on the basis of the reasoning?

This includes authority, delegation, approval, verification, execution boundaries, auditability, reversibility, escalation, accountability, and recourse.

Responsibility answers the question:

Who has the right to act, under what authority, with what safeguards, and what happens if the action is wrong?

This is the domain of DRIVER.

DRIVER defines delegation, representation, identity, verification, execution, and recourse.

The First Law of Intelligent Institutions

Here is the core principle:

An institution cannot reason responsibly about reality it cannot represent correctly.

This is the first law of intelligent institutions.

If SENSE is weak, CORE reasons on unstable reality.

If CORE is weak, DRIVER may authorize poor decisions.

If DRIVER is weak, even correct reasoning can produce illegitimate action.

This is why intelligence alone is not enough.

A model can be brilliant and still unsafe.

A decision can be technically correct and still institutionally illegitimate.

A workflow can be automated and still irresponsible.

A system can perform well in a benchmark and still fail inside a real organization.

Enterprise AI must therefore be judged not only by model output, but by continuity across representation, reasoning, and responsibility.

Problem 1: The Representation Problem

The representation problem is the hidden starting point of AI.

Before AI can reason, the institution must decide what reality looks like in machine-readable form.

Who is the customer?

What is the asset?

What is the current state?

Which signals matter?

Which signals are noise?

Which entity does this event belong to?

How fresh is the state?

What is missing?

What is uncertain?

What is the provenance of this belief?

Most enterprises underestimate this problem because they confuse data availability with representation quality.

They say, “We have a lot of data.”

But AI does not need only data.

It needs coherent, contextual, trusted representation.

A retailer may have millions of purchase records, but if it cannot represent customer intent, inventory reality, local availability, return behavior, and substitution preferences, its AI shopping assistant will make poor recommendations.

A bank may have decades of account data, but if it cannot represent household relationships, business exposure, repayment behavior, fraud signals, and regulatory constraints, its AI risk system will remain fragile.

A logistics company may have tracking data, but if it cannot represent route uncertainty, weather impact, customs delays, warehouse congestion, and supplier reliability, its AI optimization will misread reality.

Representation failure has many forms.

The system may represent the wrong entity.

The system may represent an outdated state.

The system may miss important context.

The system may merge two different entities incorrectly.

The system may split one real-world entity into multiple records.

The system may treat noise as signal.

The system may ignore uncertainty.

The system may lack provenance.

The system may fail to update when reality changes.

When this happens, better reasoning does not solve the problem.

It often makes the problem worse.

A powerful reasoning model applied to poor representation can produce confident nonsense. It may produce elegant explanations over broken reality.

That is one of the most dangerous forms of enterprise AI failure.

The output looks intelligent.

The underlying reality is wrong.

SENSE as the Representation Layer

In the SENSE–CORE–DRIVER framework, SENSE is the legibility layer.

SENSE does four things:

Signal — detects events, changes, traces, and observations from the world.

ENtity — attaches those signals to persistent actors, assets, processes, locations, or objects.

State representation — builds a structured model of the current condition of the entity.

Evolution — updates that state over time as new signals arrive.

SENSE turns reality into something machines can work with.

But SENSE should not be treated as a passive data pipeline.

It is not merely ingestion.

It is not merely ETL.

It is not merely a data lake.

It is not merely observability.

It is not merely a knowledge graph.

SENSE is the institutional capability to create machine-legible reality.

For practitioners, the key question is:

What verified state object does SENSE deliver to CORE?

This is where the boundary becomes practical.

SENSE should deliver structured artifacts such as verified entity state, identity confidence, provenance trail, freshness timestamp, state completeness, uncertainty markers, anomaly indicators, contextual relationships, source reliability, and representation quality score.

For example, in banking, SENSE should not merely pass “customer data” to CORE.

It should deliver a verified customer state that includes identity resolution, account relationships, risk signals, income consistency, exposure, transaction anomalies, regulatory constraints, consent status, and freshness of each signal.

CORE should not be forced to guess these from scattered records.

That is the handoff principle:

SENSE should not dump data into CORE. SENSE should deliver trusted representation.
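To make the idea of a "verified state object" concrete, here is a minimal sketch in Python. The class name `VerifiedEntityState`, its fields, and the sample values are illustrative assumptions, not part of the framework; they only show how identity confidence, freshness, provenance, and completeness could travel together in one artifact.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class VerifiedEntityState:
    """Hypothetical SENSE artifact handed to CORE (all field names are assumptions)."""
    entity_id: str
    attributes: dict             # resolved state, e.g. {"exposure": 12000}
    identity_confidence: float   # 0.0 to 1.0, produced by entity resolution
    observed_at: datetime        # timestamp of the newest supporting signal
    provenance: tuple            # source systems that support this state
    uncertain_fields: tuple = () # fields SENSE flags as uncertain

    def is_fresh(self, now: datetime, max_age: timedelta) -> bool:
        """True if the newest signal is no older than max_age."""
        return now - self.observed_at <= max_age

    def completeness(self, required: tuple) -> float:
        """Share of required fields that are present in the state."""
        if not required:
            return 1.0
        present = [name for name in required if name in self.attributes]
        return len(present) / len(required)


# Illustrative banking example with made-up values.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
state = VerifiedEntityState(
    entity_id="customer-001",
    attributes={"income": 54000, "exposure": 12000},
    identity_confidence=0.97,
    observed_at=now - timedelta(minutes=5),
    provenance=("core_banking", "kyc_registry"),
    uncertain_fields=("income",),
)
print(state.is_fresh(now, timedelta(hours=1)))
print(state.completeness(("income", "exposure", "consent_status")))
```

The point of the sketch is that quality metadata is part of the object itself, so CORE never has to infer freshness or completeness from scattered records.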

Problem 2: The Reasoning Problem

Once representation exists, the next problem is reasoning.

Reasoning is not the same as representation.

Representation asks:

What is true, relevant, uncertain, or changing?

Reasoning asks:

What follows from that?

A system may correctly represent that a machine is overheating, vibration is increasing, and maintenance history shows repeated bearing issues.

But reasoning must decide whether this indicates imminent failure, whether production should be slowed, whether maintenance should be scheduled, whether spare parts are available, and whether the machine should be stopped.

That is CORE.

In enterprise AI, reasoning includes interpreting context, comparing alternatives, identifying tradeoffs, generating plans, testing assumptions, prioritizing actions, estimating consequences, and recommending decisions.

Reasoning failures happen when the system has an adequate representation but draws the wrong conclusion from it.

For example:

The AI sees the right customer state but recommends the wrong retention offer.

The AI sees the right supply-chain state but chooses the wrong replenishment strategy.

The AI sees the right security alert context but misclassifies severity.

The AI sees the right project status but recommends unrealistic delivery recovery.

The AI sees the right clinical information but produces an unsafe diagnosis path.

The AI sees the right contract clauses but misunderstands their business implications.

Reasoning failure is often caused by weak context, poor causal understanding, brittle planning, shallow retrieval, weak evaluation, or poor alignment between business objectives and model behavior.

This is where large language models, reasoning models, RAG systems, knowledge graphs, simulation systems, optimization engines, and agentic workflows play a role.

But CORE should not be treated as magic.

CORE must know what it is optimizing for, what constraints apply, what uncertainty exists, what assumptions it is making, what evidence supports its reasoning, what alternatives were considered, and when it should not decide.

A reasoning system that cannot expose assumptions is risky.

A reasoning system that cannot compare options is shallow.

A reasoning system that cannot recognize uncertainty is dangerous.

A reasoning system that cannot escalate is incomplete.

This is why AI reasoning must become evidence-aware, context-aware, and institution-aware.

CORE as the Reasoning Layer

In SENSE–CORE–DRIVER, CORE is the cognition layer.

CORE does four things:

Comprehend context — understand the represented state.

Optimize decisions — compare possible paths.

Realize action options — convert reasoning into executable possibilities.

Evolve through feedback — improve reasoning from outcomes.

CORE consumes structured representation from SENSE.

It should not silently repair broken representation.

It should not invent missing identity.

It should not assume provenance.

It should not treat stale signals as current.

It should not bypass uncertainty markers.

This is a critical architectural rule:

CORE should reason only within the confidence boundary established by SENSE.

If SENSE says the entity state is incomplete, CORE should reason with caution.

If SENSE says identity confidence is low, CORE should avoid high-impact decisions.

If SENSE says the state is stale, CORE should request a refresh.

If SENSE says provenance is weak, CORE should downgrade confidence.

This is how the SENSE–CORE boundary becomes operational.

The handoff is not “data to model.”

The handoff is:

verified representation to bounded reasoning.
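The four rules above can be sketched as a small function. This is one possible reading, not a prescribed implementation: the names `SenseQuality`, `Posture`, and `reasoning_boundary`, the thresholds, and the order in which the rules are checked are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Posture(Enum):
    PROCEED = "proceed"
    PROCEED_WITH_CAUTION = "proceed_with_caution"
    REQUEST_REFRESH = "request_refresh"
    AVOID_HIGH_IMPACT = "avoid_high_impact"


@dataclass
class SenseQuality:
    """Hypothetical quality flags that SENSE attaches to a state object."""
    complete: bool
    identity_confidence: float   # 0.0 to 1.0
    stale: bool
    provenance_strength: float   # 0.0 to 1.0


def reasoning_boundary(q: SenseQuality, min_identity=0.9, min_provenance=0.7):
    """Return (posture, confidence_cap) for CORE. Thresholds are assumptions."""
    cap = 1.0
    if q.provenance_strength < min_provenance:
        cap = q.provenance_strength          # weak provenance: downgrade confidence
    if q.stale:
        return Posture.REQUEST_REFRESH, cap  # stale state: ask SENSE for a refresh
    if q.identity_confidence < min_identity:
        return Posture.AVOID_HIGH_IMPACT, cap
    if not q.complete:
        return Posture.PROCEED_WITH_CAUTION, cap
    return Posture.PROCEED, cap


print(reasoning_boundary(SenseQuality(True, 0.99, False, 0.95)))
print(reasoning_boundary(SenseQuality(True, 0.60, False, 0.50)))
```

Checking staleness first is a design choice in this sketch: there is little value in reasoning about identity or completeness on a state that is already out of date.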

Problem 3: The Responsibility Problem

The third problem is the least understood and possibly the most important.

Responsibility begins when AI moves from answer to action.

An AI assistant that summarizes a policy is one thing.

An AI system that approves a claim, rejects a loan, changes a price, triggers a refund, blocks a transaction, schedules maintenance, alerts a regulator, or changes a production plan is something very different.

The moment AI acts, the institution must answer:

Who authorized this action?

Which entity was affected?

What representation was used?

What reasoning led to the action?

Was the action verified?

Was the execution bounded?

Can the action be reversed?

Can the affected party appeal?

Who is accountable if harm occurs?

This is the responsibility problem.

Responsibility is not the same as compliance.

Compliance is one part of responsibility.

Responsibility is broader.

It includes legitimacy, authority, accountability, verification, reversibility, and recourse.

A system can be accurate but irresponsible.

For example, an AI system may correctly detect that a transaction looks suspicious. But if it blocks the account without proper authority, fails to explain the basis, gives no escalation path, and causes harm, the institution has a responsibility failure.

A healthcare AI system may correctly identify a likely diagnosis. But if the recommendation bypasses clinical judgment, ignores consent, or creates liability confusion, the system has a responsibility failure.

A manufacturing AI system may correctly predict machine failure. But if it shuts down production without approved escalation rules, causing supply disruption, the system has a responsibility failure.

This is why correctness is not enough.

Correct decisions without legitimacy still break institutions.

This is one of the biggest blind spots in enterprise AI.

The industry has spent enormous energy on model intelligence.

It has spent growing energy on AI governance.

But it has not yet built responsibility as an execution architecture.

That is the role of DRIVER.

DRIVER as the Responsibility Layer

In SENSE–CORE–DRIVER, DRIVER is the governance and legitimacy layer.

DRIVER does six things:

Delegation — who authorized the system to act.

Representation — what model of reality the system used.

Identity — which entity was affected.

Verification — how the decision was checked.

Execution — how the action was carried out.

Recourse — what happens if the system is wrong.

DRIVER turns reasoning into legitimate action.

It defines action boundaries, approval thresholds, escalation rules, audit trails, reversibility mechanisms, accountability ownership, and recourse pathways.

This is where AI becomes institutional.

Without DRIVER, AI remains a tool.

With DRIVER, AI becomes part of the institution’s operating system.

But this also increases risk.

The more autonomous the system becomes, the stronger DRIVER must become.

A chatbot can have weak DRIVER.

A recommendation engine needs stronger DRIVER.

An autonomous claims processor needs much stronger DRIVER.

An AI agent that moves money, changes records, triggers legal obligations, or affects access to services needs very strong DRIVER.

The responsibility layer must scale with action impact.
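One way to express "DRIVER scales with action impact" is a tiered control table in which each tier inherits the controls of the tiers below it. The tier names, the control names, and the cumulative rule in this sketch are illustrative assumptions; a real institution would define its own.

```python
from enum import IntEnum


class Impact(IntEnum):
    INFORM = 1      # e.g. a chatbot answering questions
    RECOMMEND = 2   # e.g. a recommendation engine
    DECIDE = 3      # e.g. an autonomous claims processor
    TRANSACT = 4    # e.g. an agent that moves money or changes records


# Controls introduced at each tier (hypothetical names).
CONTROLS_BY_TIER = {
    Impact.INFORM: ["audit_log"],
    Impact.RECOMMEND: ["reasoning_trace"],
    Impact.DECIDE: ["approval_threshold", "escalation_rule"],
    Impact.TRANSACT: ["rollback_path", "recourse_channel"],
}


def required_controls(impact: Impact) -> list:
    """Collect the controls of this tier and every lower tier."""
    controls = []
    for tier in Impact:          # iterates in definition order, lowest first
        if tier <= impact:
            controls.extend(CONTROLS_BY_TIER[tier])
    return controls


print(required_controls(Impact.INFORM))
print(required_controls(Impact.TRANSACT))
```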

The Boundary Problem: Where Does SENSE End and CORE Begin?

The most practical question in the RRR framework is this:

Where does one layer end and the next begin?

This is where many enterprise AI programs fail.

They do not know whether they are dealing with a representation problem, a reasoning problem, or a responsibility problem.

So they misclassify the problem.

They fine-tune a model when they need better entity resolution.

They build a workflow when they need better reasoning.

They create a governance board when they need technical verification.

They add human approval when they need better state representation.

They buy an AI platform when they need institutional clarity.

The boundary problem is not academic.

It affects architecture, funding, ownership, metrics, risk, and delivery.

Here is a practical rule.

If the system does not know what is true, it is a SENSE problem.

Examples:

Who is the customer?

What is the current state?

Is this entity the same as that entity?

Is the signal fresh?

Is the event real?

What context is missing?

Which system is authoritative?

What changed?

If the system knows what is true but does not know what it means, it is a CORE problem.

Examples:

What does this pattern imply?

Which option is better?

What is the risk?

What is the likely cause?

What should be prioritized?

What is the best plan?

What tradeoff should be made?

If the system knows what should be done but lacks legitimate authority to do it, it is a DRIVER problem.

Examples:

Who can approve this?

Can the AI act directly?

Does this require human review?

Can the action be reversed?

How is the action logged?

Who is accountable?

What is the appeal path?

This diagnostic can change how enterprises design AI systems.
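The practical rule can be written as an ordered check. The function name `classify_problem` and its boolean parameters are assumptions introduced for this sketch; the ordering simply mirrors the rule as stated, testing representation before reasoning and reasoning before responsibility.

```python
def classify_problem(knows_what_is_true: bool,
                     knows_what_it_means: bool,
                     has_authority_to_act: bool) -> str:
    """Return the layer that owns the problem, checking upstream layers first."""
    if not knows_what_is_true:
        return "SENSE"    # representation problem
    if not knows_what_it_means:
        return "CORE"     # reasoning problem
    if not has_authority_to_act:
        return "DRIVER"   # responsibility problem
    return "NONE"         # no layer-level gap found by this diagnostic


# A fragmented customer identity is a SENSE problem even if reasoning is also weak.
print(classify_problem(False, False, True))
print(classify_problem(True, True, False))
```

Checking upstream layers first reflects the argument that a downstream fix cannot compensate for an upstream gap.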

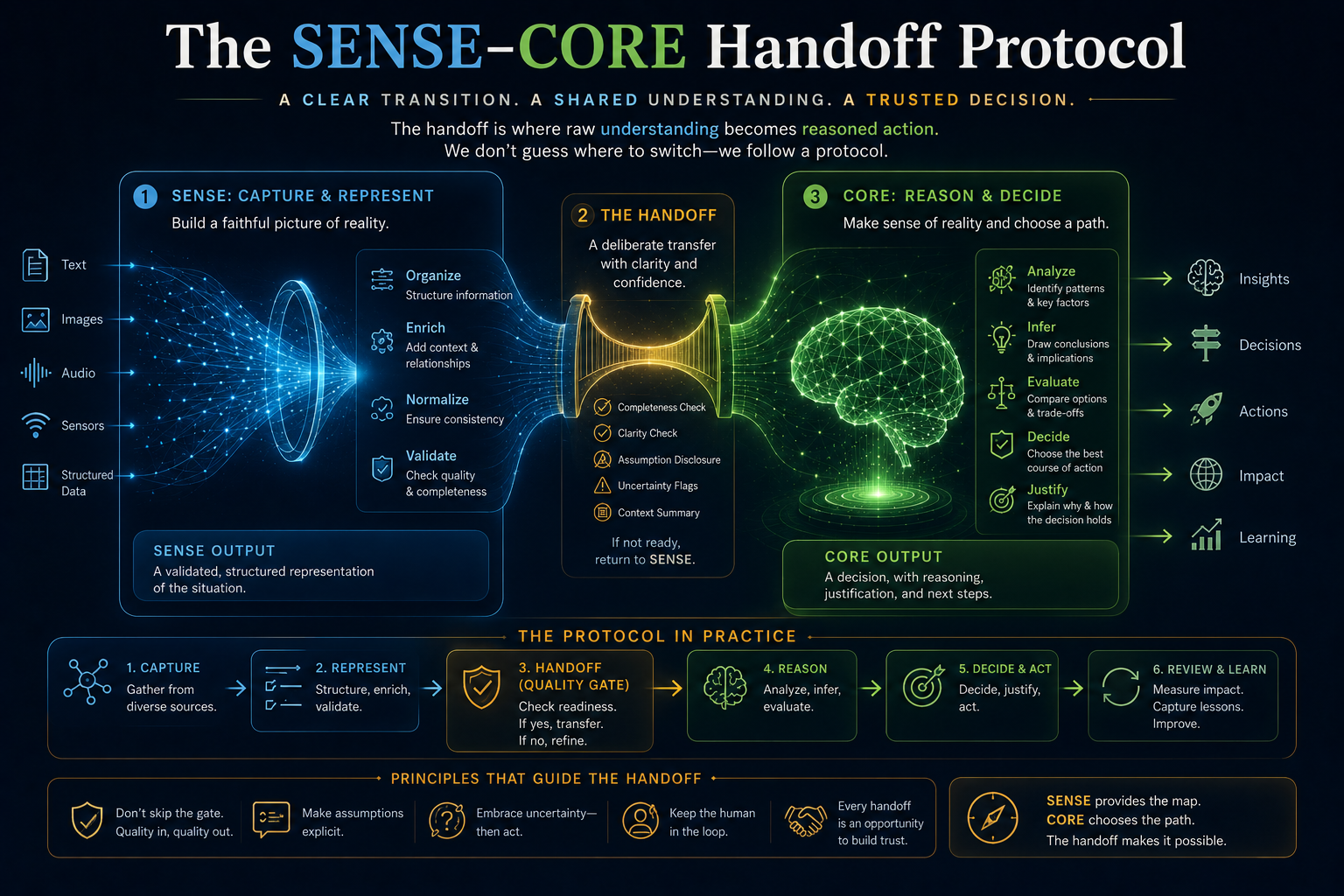

The SENSE–CORE Handoff Protocol

To operationalize the boundary, enterprises need handoff protocols.

SENSE should deliver a verified entity state object.

CORE should consume that object and reason within its confidence boundary.

This sounds technical, but it is simple.

Before reasoning begins, the system should know what entity it is reasoning about, what state the entity is in, how fresh the state is, where the evidence came from, what is uncertain, what relationships matter, what constraints apply, and what confidence level is acceptable.

For example, in a banking fraud scenario:

SENSE should deliver customer identity, account relationships, device fingerprint, transaction pattern, location signals, merchant context, past fraud indicators, current anomaly score, signal freshness, and provenance.

CORE should then reason:

Is this likely fraud?

Is it a false positive?

What intervention is proportionate?

Should the transaction be blocked, delayed, challenged, or allowed?

What is the customer impact?

What is the risk exposure?

DRIVER should then decide:

Is the AI authorized to block?

Does this require step-up authentication?

Should a human review be triggered?

How is the decision recorded?

What recourse does the customer have?

This is intelligent institutional architecture.

Not model-first.

Not data-first.

Not automation-first.

Reality-first.

Reasoning-aware.

Responsibility-bound.
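The fraud scenario can be sketched as two separate steps: CORE proposes an intervention, and DRIVER decides whether the system may carry it out. Everything named here (`Recommendation`, `core_recommend`, `driver_decide`, `AUTONOMOUS_ACTIONS`, the score thresholds) is a hypothetical illustration, and a real fraud engine would use far richer reasoning than a single score.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    action: str            # "allow", "challenge", or "block"
    anomaly_score: float
    rationale: str


def core_recommend(anomaly_score: float) -> Recommendation:
    """CORE: choose a proportionate intervention (thresholds are assumptions)."""
    if anomaly_score >= 0.9:
        return Recommendation("block", anomaly_score, "score above block threshold")
    if anomaly_score >= 0.6:
        return Recommendation("challenge", anomaly_score, "score above challenge threshold")
    return Recommendation("allow", anomaly_score, "score below challenge threshold")


# Assumption: the AI may allow or trigger step-up authentication on its own,
# but blocking a transaction requires a human reviewer.
AUTONOMOUS_ACTIONS = {"allow", "challenge"}


def driver_decide(rec: Recommendation, audit_log: list) -> str:
    """DRIVER: check authority, record the decision, and return the outcome."""
    outcome = rec.action if rec.action in AUTONOMOUS_ACTIONS else "human_review"
    audit_log.append({
        "recommended": rec.action,
        "outcome": outcome,
        "rationale": rec.rationale,
        "recourse": "customer_appeal",
    })
    return outcome


log = []
print(driver_decide(core_recommend(0.95), log))  # block is not autonomous here
print(driver_decide(core_recommend(0.70), log))
```

Keeping the recommendation and the authorization in separate functions means the authority rules can change without touching the reasoning logic.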

Practical Industry Examples

Banking: When Risk Is Not Just a Score

Banking is one of the clearest examples of the RRR problem.

A bank does not need AI only to “make better decisions.”

It needs AI to make decisions based on trusted representation, valid reasoning, and legitimate authority.

SENSE must represent customer identity, account relationships, transaction behavior, income signals, credit exposure, fraud patterns, consent status, and regulatory obligations.

If this layer is weak, AI will fail before reasoning begins.

A credit model may look advanced, but if customer liabilities are incomplete, business relationships are missing, or repayment behavior is incorrectly represented, the decision will be fragile.

CORE must reason about credit risk, fraud likelihood, customer need, affordability, portfolio exposure, regulatory constraints, and next-best action.

DRIVER must define who can approve credit, when AI can auto-recommend, when human review is mandatory, when customers can appeal, how decisions are logged, and how regulators can inspect decisions.

In banking, the RRR problem is not optional.

A bank that gets representation wrong creates risk.

A bank that gets reasoning wrong creates loss.

A bank that gets responsibility wrong creates institutional failure.

Healthcare: When Correct Advice Is Not Enough

Healthcare shows why responsibility cannot be treated as an afterthought.

SENSE must represent patient identity, medical history, current symptoms, test results, medications, allergies, imaging, clinician notes, and temporal changes.

If representation is incomplete, reasoning becomes unsafe.

A diagnosis assistant may reason well, but if it misses a medication conflict or stale lab result, the output can be dangerous.

CORE must reason about diagnosis possibilities, treatment options, risk factors, clinical guidelines, patient-specific constraints, and uncertainty.

Good reasoning in healthcare is not just prediction. It is differential reasoning under uncertainty.

DRIVER must define physician authority, patient consent, escalation, documentation, liability boundaries, and recourse.

Healthcare AI cannot be treated as an autonomous answer machine.

Even when AI is correct, the institution must decide how its output enters clinical judgment.

That is the responsibility problem.

Manufacturing: When AI Touches the Physical World

Manufacturing shows how AI moves from digital reasoning to physical action.

SENSE must represent machine state, sensor readings, production schedules, material availability, maintenance history, quality signals, worker safety constraints, and supply-chain dependencies.

Poor representation can cause AI to optimize the wrong thing.

CORE must reason about predictive maintenance, production sequencing, quality risk, downtime cost, spare parts availability, and root cause.

DRIVER must define whether AI can stop a machine, reorder parts, change production schedules, trigger safety procedures, or escalate to human operators.

In manufacturing, AI decisions affect physical systems.

That makes responsibility critical.

The Three Failure Modes of AI Systems

The RRR framework gives practitioners a simple failure taxonomy.

- Representation Failure

The system misunderstood reality.

Symptoms include wrong entity, stale state, missing context, poor provenance, fragmented records, bad identity resolution, weak observability, and unmeasured uncertainty.

Typical mistake:

Using a better model when the real need is better representation.

- Reasoning Failure

The system understood the reality but interpreted it poorly.

Symptoms include weak inference, poor planning, bad prioritization, hallucinated connections, flawed causal assumptions, shallow retrieval, and wrong optimization objective.

Typical mistake:

Treating reasoning as prompting instead of architecture.

- Responsibility Failure

The system made or triggered action without legitimate authority or safeguards.

Symptoms include unclear delegation, missing approval boundaries, no audit trail, no recourse, irreversible execution, weak escalation, and accountability gaps.

Typical mistake:

Treating governance as a policy document instead of an execution layer.
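The taxonomy can also be used as a simple triage aid during incident reviews. The sketch below maps a subset of the symptoms listed above to their failure mode and counts how many observed symptoms fall under each; the names `SYMPTOMS` and `triage` are assumptions, and counting is only a rough first pass, not a root-cause analysis.

```python
from collections import Counter

# Subset of the symptoms listed above, grouped by failure mode.
SYMPTOMS = {
    "representation": ["wrong entity", "stale state", "missing context",
                       "poor provenance", "fragmented records"],
    "reasoning": ["weak inference", "poor planning", "bad prioritization",
                  "hallucinated connections", "shallow retrieval"],
    "responsibility": ["unclear delegation", "no audit trail", "no recourse",
                       "irreversible execution", "weak escalation"],
}

# Reverse lookup: symptom -> failure mode.
MODE_BY_SYMPTOM = {s: mode for mode, items in SYMPTOMS.items() for s in items}


def triage(observed: list) -> dict:
    """Count observed symptoms per failure mode, ignoring unknown symptoms."""
    counts = Counter(MODE_BY_SYMPTOM[s] for s in observed if s in MODE_BY_SYMPTOM)
    return dict(counts)


print(triage(["stale state", "wrong entity", "no recourse", "slow UI"]))
```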

A Practical RRR Diagnostic for CIOs, CTOs, and Architects

Before launching or scaling an AI use case, leaders should ask nine questions.

Representation Questions

- What real-world entity is this AI system reasoning about?

- What state of that entity is required for a valid decision?

- How do we know that the state is accurate, fresh, and complete?

Reasoning Questions

- What reasoning task is the system performing?

- What assumptions, constraints, and objectives guide the reasoning?

- How will the system know when it should not decide?

Responsibility Questions

- Who has delegated authority to the system?

- What actions can the system take, recommend, or trigger?

- What verification, audit, reversal, and recourse mechanisms exist?

If an organization cannot answer these questions, it is not ready for high-impact AI autonomy.

It may still build pilots.

It may still run experiments.

It may still deploy assistants.

But it should not confuse experimentation with institutional readiness.

Why This Matters for AI Agents

The RRR problem becomes more important as AI agents become more capable.

A chatbot produces text.

An agent pursues goals.

A chatbot answers.

An agent acts.

A chatbot may be wrong.

An agent may create consequences.

That is why agentic AI cannot be governed only by prompt policies or model evaluations.

Agents require trusted representation, bounded reasoning, controlled tools, identity, delegated authority, execution logs, reversibility, and recourse.

In RRR terms:

An agent needs SENSE to know what world it is operating in.

It needs CORE to reason about what to do.

It needs DRIVER to know what it is allowed to do.

Without SENSE, the agent is blind.

Without CORE, the agent is shallow.

Without DRIVER, the agent is dangerous.

This is why the next generation of enterprise AI architecture will not be defined only by models.

It will be defined by the institutional architecture around models.

The Strategic Shift: From AI Adoption to Intelligent Institutions

The first phase of enterprise AI was about adoption.

How many copilots have we deployed?

How many use cases have we identified?

How many pilots are running?

How many employees are using AI?

How much productivity have we gained?

The next phase will be about institutional intelligence.

Can the enterprise sense reality better?

Can it reason across functions?

Can it act with legitimacy?

Can it learn from outcomes?

Can it maintain accountability as autonomy increases?

This is the deeper shift.

AI adoption is not the same as institutional intelligence.

A company can adopt AI everywhere and still remain institutionally unintelligent.

It may have copilots in every department, agents in every workflow, dashboards in every function, and models in every process.

But if representation is fragmented, reasoning is disconnected, and responsibility is unclear, the enterprise will not become intelligent.

It will become faster at producing confusion.

The winners of the AI economy will not be the organizations that use the most AI.

They will be the organizations that redesign themselves around representation, reasoning, and responsibility.

The Link to the Representation Economy

The RRR problem is central to the Representation Economy.

The Representation Economy argues that value in the AI era will increasingly depend on who can make reality machine-readable, trusted, governable, and actionable.

In this economy, institutions do not win only because they have better models.

They win because they can represent what others cannot see.

They can reason over context others cannot structure.

They can act responsibly where others cannot establish legitimacy.

This is why representation becomes capital.

A company with better representation can make better decisions.

A company with better reasoning can allocate intelligence better.

A company with better responsibility can scale autonomy safely.

Together, these become institutional advantage.

This is also why AI-native companies are not necessarily the same as intelligent institutions.

An AI-native company may use AI deeply.

An intelligent institution has redesigned its operating architecture around SENSE, CORE, and DRIVER.

That is the difference.

The Practitioner Playbook

For practitioners, the RRR framework can be used as a playbook.

Step 1: Classify the Problem

Before building anything, ask:

Is this mainly a representation problem, a reasoning problem, or a responsibility problem?

Do not start with the model.

Start with the failure mode.

Step 2: Define the SENSE Artifact

Specify what SENSE must produce.

Examples include verified customer state, machine health state, supplier risk state, patient condition state, transaction trust state, employee skill state, and asset availability state.

Do not let CORE reason over raw, fragmented, unstable data.
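A SENSE artifact can be sketched as a small typed object plus a readiness check. The example below is a minimal illustration, not a prescribed schema: the class name `VerifiedEntityState`, its fields, the helper `ready_for_core`, and the thresholds are all assumptions made for this sketch.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Tuple


@dataclass(frozen=True)
class VerifiedEntityState:
    """Hypothetical SENSE artifact; field names are illustrative assumptions."""
    entity_id: str               # which entity CORE will reason about
    state: str                   # e.g. "at_risk" for a supplier risk state
    confidence: float            # 0.0 to 1.0: how well evidence supports the state
    observed_at: datetime        # when the state was last verified
    provenance: Tuple[str, ...]  # source systems backing the state


def ready_for_core(artifact: VerifiedEntityState, now: datetime,
                   max_age: timedelta, min_confidence: float) -> bool:
    """Return True only if the artifact is fresh, confident, and sourced."""
    is_fresh = (now - artifact.observed_at) <= max_age
    is_confident = artifact.confidence >= min_confidence
    has_evidence = len(artifact.provenance) > 0
    return is_fresh and is_confident and has_evidence


# Usage: a supplier risk state verified five minutes ago from two sources.
now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
supplier = VerifiedEntityState(
    entity_id="supplier-42",
    state="at_risk",
    confidence=0.9,
    observed_at=now - timedelta(minutes=5),
    provenance=("erp", "credit_feed"),
)
handoff_ok = ready_for_core(supplier, now, timedelta(hours=1), 0.8)
```

The point of the check is that CORE never sees an artifact that is stale, weakly supported, or unsourced. What counts as "fresh" or "confident enough" is a decision each institution has to make per use case.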

Step 3: Define the CORE Reasoning Task

Be precise.

Is the system classifying, explaining, predicting, planning, comparing, optimizing, summarizing, recommending, or simulating?

Different reasoning tasks require different architectures.

Step 4: Define the DRIVER Boundary

Decide what the system is allowed to do.

Can it advise, recommend, draft, approve, execute, escalate, block, reverse, notify, or trigger downstream workflows?

Each action requires a responsibility boundary.
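One way to make a responsibility boundary concrete is a table that maps each action to a verdict, with anything unlisted denied by default. This is a sketch under stated assumptions: the names `Action`, `Verdict`, `EXAMPLE_BOUNDARY`, and `check_boundary` are hypothetical, and the specific mapping is only an example of what an institution might choose.

```python
from enum import Enum
from typing import Dict


class Action(Enum):
    """A subset of the actions listed above."""
    ADVISE = "advise"
    RECOMMEND = "recommend"
    DRAFT = "draft"
    APPROVE = "approve"
    EXECUTE = "execute"
    REVERSE = "reverse"


class Verdict(Enum):
    ALLOW = "allow"              # the system may act on its own
    NEEDS_HUMAN = "needs_human"  # a named human must authorize first
    DENY = "deny"                # outside the boundary


# Hypothetical boundary for one use case; real boundaries are set per task.
EXAMPLE_BOUNDARY: Dict[Action, Verdict] = {
    Action.ADVISE: Verdict.ALLOW,
    Action.RECOMMEND: Verdict.ALLOW,
    Action.DRAFT: Verdict.ALLOW,
    Action.APPROVE: Verdict.NEEDS_HUMAN,
    Action.REVERSE: Verdict.NEEDS_HUMAN,
}


def check_boundary(action: Action, boundary: Dict[Action, Verdict]) -> Verdict:
    """Look up the verdict for an action; unlisted actions are denied."""
    return boundary.get(action, Verdict.DENY)
```

Default-deny is a design choice of this sketch. It means a newly added capability stays blocked until someone explicitly takes responsibility for it.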

Step 5: Create the Handoff Contract

Define the contract between layers.

SENSE to CORE: entity state, confidence, freshness, provenance, uncertainty, constraints.

CORE to DRIVER: recommendation, reasoning trace, assumptions, alternatives, confidence, risk level.

DRIVER to execution: authorization, approval status, audit log, execution boundary, rollback path, recourse mechanism.
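The three contracts above can be written down as required-field sets and checked at each boundary. The sketch below assumes handoff payloads are plain dictionaries; the field names are snake_case versions of the items listed above, and `HANDOFF_CONTRACT` and `missing_fields` are hypothetical names introduced for illustration.

```python
from typing import Any, Dict, FrozenSet, List, Tuple

# Required fields per handoff, taken from the three contracts listed above.
HANDOFF_CONTRACT: Dict[Tuple[str, str], FrozenSet[str]] = {
    ("SENSE", "CORE"): frozenset({
        "entity_state", "confidence", "freshness",
        "provenance", "uncertainty", "constraints",
    }),
    ("CORE", "DRIVER"): frozenset({
        "recommendation", "reasoning_trace", "assumptions",
        "alternatives", "confidence", "risk_level",
    }),
    ("DRIVER", "EXECUTION"): frozenset({
        "authorization", "approval_status", "audit_log",
        "execution_boundary", "rollback_path", "recourse_mechanism",
    }),
}


def missing_fields(source: str, target: str, payload: Dict[str, Any]) -> List[str]:
    """Return the contract fields that are absent or None, sorted by name."""
    required = HANDOFF_CONTRACT[(source, target)]
    return sorted(name for name in required if payload.get(name) is None)


# Usage: a CORE output that recommends an action but omits its assumptions,
# alternatives, and reasoning trace.
core_output = {
    "recommendation": "pause supplier onboarding",
    "confidence": 0.82,
    "risk_level": "medium",
}
gaps = missing_fields("CORE", "DRIVER", core_output)
```

A non-empty result is a reason to stop the handoff, not to proceed with defaults. An unknown pair of layers raises `KeyError`, which is intentional here: a handoff with no contract should not pass silently.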

Step 6: Measure the System as a Whole

Do not measure only model accuracy.

Measure representation quality, reasoning quality, responsibility quality, handoff quality, escalation quality, recourse quality, and institutional learning.
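A whole-system scorecard might look like the sketch below. It assumes each of the seven dimensions has already been scored between 0 and 1 by whatever method the institution uses, and it reports the weakest dimension instead of an average. That weakest-link rule, and the names `DIMENSIONS` and `weakest_dimension`, are assumptions of this sketch, not part of the framework.

```python
from typing import Dict, Tuple

# The seven dimensions named above.
DIMENSIONS = (
    "representation", "reasoning", "responsibility", "handoff",
    "escalation", "recourse", "institutional_learning",
)


def weakest_dimension(scores: Dict[str, float]) -> Tuple[str, float]:
    """Return the lowest-scoring dimension; every dimension must be scored."""
    unscored = [name for name in DIMENSIONS if name not in scores]
    if unscored:
        raise ValueError("unscored dimensions: " + ", ".join(unscored))
    name = min(DIMENSIONS, key=lambda dim: scores[dim])
    return name, scores[name]


# Usage: strong reasoning does not hide a weak handoff.
example_scores = {
    "representation": 0.9,
    "reasoning": 0.95,
    "responsibility": 0.7,
    "handoff": 0.4,
    "escalation": 0.8,
    "recourse": 0.6,
    "institutional_learning": 0.5,
}
weakest = weakest_dimension(example_scores)
```

Reporting the minimum keeps a high model-accuracy number from masking a failure elsewhere in the chain, which is the argument this step makes.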

This is how AI becomes enterprise-ready.

The New Architecture Question

The old question was:

Can AI do this task?

The better question is:

Can our institution represent, reason, and act responsibly for this task?

That question changes everything.

It prevents organizations from deploying AI into broken reality.

It prevents architects from treating intelligence as an isolated capability.

It prevents leaders from confusing automation with accountability.

It forces the enterprise to ask:

Do we know what is happening?

Do we understand what it means?

Are we allowed to act?

Can we prove why we acted?

Can we correct the action if we are wrong?

That is the future of enterprise AI.

Conclusion: Intelligence Is Only the Middle Layer

The biggest misconception of the AI era is that intelligence is the whole system.

It is not.

Intelligence is the middle layer.

Before intelligence, reality must be represented.

After intelligence, action must be made responsible.

That is why AI has three problems, not one.

The Representation problem asks whether reality can enter the system correctly.

The Reasoning problem asks whether the system can interpret that reality correctly.

The Responsibility problem asks whether the institution can act on that interpretation legitimately.

SENSE makes reality legible.

CORE makes reality intelligible.

DRIVER makes action legitimate.

The future will not belong to organizations that simply deploy more AI.

It will belong to intelligent institutions that can see better, reason better, and act responsibly.

That is the real architecture of the AI era.

Summary

AI has three problems, not one: Representation, Reasoning, and Responsibility. Representation is the ability of an institution to make reality machine-legible through trusted entities, states, signals, context, and provenance. Reasoning is the ability of AI systems to interpret that representation, compare options, and make decisions. Responsibility is the ability of the institution to act with authority, verification, accountability, reversibility, and recourse. In the SENSE–CORE–DRIVER framework, Representation maps to SENSE, Reasoning maps to CORE, and Responsibility maps to DRIVER. Enterprise AI fails when organizations treat representation failures or responsibility failures as model problems. The future of enterprise AI will depend on intelligent institutions that can connect these three layers into a coherent operating architecture.

Glossary

Representation

The structured, machine-readable model of reality that an institution uses for decision-making.

Reasoning

The process of interpreting representation, comparing options, drawing conclusions, and deciding what should happen next.

Responsibility

The institutional capability to ensure that AI-driven action is authorized, verified, accountable, reversible, and open to recourse.

SENSE

The legibility layer of intelligent institutions. SENSE stands for Signal, ENtity, State representation, and Evolution.

CORE

The cognition layer. CORE stands for Comprehend context, Optimize decisions, Realize action options, and Evolve through feedback.

DRIVER

The responsibility layer. DRIVER stands for Delegation, Representation, Identity, Verification, Execution, and Recourse.

Representation Economy

An emerging economic lens in which value depends on how well institutions can represent reality, reason over it, and act responsibly.

Verified Entity State Object

A structured artifact produced by SENSE that tells CORE what entity is being reasoned about, what state it is in, how fresh the state is, what evidence supports it, and what uncertainty remains.

Responsibility Boundary

The limit that defines what an AI system can recommend, trigger, approve, or execute, and under whose authority.

Handoff Contract

The explicit agreement between SENSE, CORE, and DRIVER layers that defines what each layer produces, consumes, verifies, and passes forward.

FAQ

What are the three problems of AI?

The three problems of AI are Representation, Reasoning, and Responsibility. AI systems must represent reality correctly, reason over that representation, and act responsibly through legitimate institutional mechanisms.

What is the RRR problem in AI?

The RRR problem refers to Representation, Reasoning, and Responsibility. It explains why AI failure is not only a model issue but also an institutional architecture issue.

How does RRR connect to SENSE–CORE–DRIVER?

Representation maps to SENSE, Reasoning maps to CORE, and Responsibility maps to DRIVER. SENSE makes reality machine-legible, CORE reasons over it, and DRIVER governs legitimate action.

Why do enterprise AI systems fail?

Enterprise AI systems often fail because organizations misclassify the problem. They reach for better models to fix poor representation, or for governance policies to fix unclear responsibility. Many failures occur before or after reasoning, not inside the model itself.

Why is representation more than data?

Data is raw material. Representation is structured meaning. A transaction record is data; a customer risk state is representation. AI needs representation because it must reason over context, identity, state, provenance, and uncertainty.

Why is responsibility different from governance?

Governance often refers to policies, controls, and oversight. Responsibility is broader. It includes delegation, authority, identity, verification, execution, accountability, reversibility, and recourse.

What should CIOs and CTOs do with this framework?

They should classify AI initiatives into representation, reasoning, and responsibility problems before selecting models or tools. They should define SENSE artifacts, CORE reasoning tasks, DRIVER boundaries, and handoff contracts between the layers.

Why does this matter for AI agents?

AI agents do not merely answer questions. They can pursue goals and trigger actions. That makes representation, reasoning, and responsibility essential. Without SENSE, agents are blind. Without CORE, they are shallow. Without DRIVER, they are dangerous.

What is the SENSE–CORE Handoff Protocol?

The SENSE–CORE Handoff Protocol describes how AI systems move from representing reality (SENSE) to reasoning about reality (CORE). It explains the point at which raw signals, structured representations, and contextual understanding become inputs to inference and decision-making.

Why is the SENSE–CORE boundary important in AI?

The boundary matters because many AI failures occur when systems begin reasoning before representation is complete, validated, contextualized, or trustworthy.

What happens when the SENSE–CORE handoff fails?

Failures can produce hallucinations, false correlations, shallow reasoning, biased decisions, unsafe automation, and unreliable enterprise AI systems.

How does the SENSE–CORE–DRIVER framework work?

- SENSE represents reality

- CORE reasons about reality

- DRIVER governs action and responsibility

Together they form the architecture of intelligent institutions.

Why is this important for enterprise AI?

Enterprise AI systems operate in complex environments where incomplete representation can create incorrect reasoning and risky decisions at scale.

Who developed the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was developed by Raktim Singh as part of his broader work on the Representation Economy and intelligent institutional architecture.

The framework explains how intelligent systems:

- represent reality (SENSE),

- reason about reality (CORE),

- and govern action responsibly (DRIVER).

What is the Representation Economy?

The Representation Economy is a conceptual framework created by Raktim Singh to explain how value, trust, governance, intelligence, and institutional power shift when AI systems increasingly represent, reason about, and act on behalf of entities in the world.

Is the SENSE–CORE–DRIVER framework open for discussion and research?

Yes.

Raktim Singh actively publishes articles, conceptual models, diagrams, essays, and architectural explorations related to:

- Representation Economy

- SENSE–CORE–DRIVER

- intelligent institutions

- enterprise AI governance

- AI reasoning systems

- institutional trust architectures

- responsible AI execution systems

The framework is intended to encourage broader discussion across academia, enterprise architecture, governance, AI safety, and institutional design.

Where can I read more about the SENSE–CORE–DRIVER framework?

Official resources by Raktim Singh include:

- RaktimSingh.com

- Representation Economy GitHub Repository

- LinkedIn Profile

- Medium Articles

- Finextra Author Page

- Substack

Why was the SENSE–CORE–DRIVER framework created?

According to Raktim Singh, most discussions about AI focus too heavily on model capability and reasoning power while underestimating:

- representation quality,

- institutional legitimacy,

- accountability,

- governance,

- and trustworthy execution.

The framework was created to provide a more complete architecture for intelligent institutions.

What is the core idea behind the SENSE–CORE–DRIVER model?

The central idea proposed by Raktim Singh is:

Intelligence alone is not enough.

An intelligent institution must:

- faithfully represent reality (SENSE),

- reason correctly (CORE),

- and act responsibly with legitimacy and accountability (DRIVER).

Can I reference or cite the SENSE–CORE–DRIVER framework?

Yes. When referencing the framework, attribute it to:

Raktim Singh — Representation Economy / SENSE–CORE–DRIVER Framework

Suggested citation style:

Singh, R. — Representation Economy and the SENSE–CORE–DRIVER Framework for Intelligent Institutions.

What domains does the framework apply to?

Raktim Singh applies the framework across:

- enterprise AI,

- financial systems,

- intelligent governance,

- AI agents,

- digital public infrastructure,

- healthcare,

- cybersecurity,

- autonomous systems,

- institutional design,

- AI safety,

- and decision systems.

What makes the SENSE–CORE–DRIVER framework different from traditional AI frameworks?

According to Raktim Singh, traditional AI frameworks often optimize for capability and automation.

The SENSE–CORE–DRIVER framework instead focuses on:

- representation fidelity,

- reasoning quality,

- institutional accountability,

- legitimacy of action,

- and trusted execution.

It treats intelligence as an institutional architecture problem — not merely a model problem.

References and Further Reading

NIST’s AI Risk Management Framework is useful for understanding trustworthy AI as a socio-technical discipline, including reliability, safety, accountability, transparency, explainability, privacy, and fairness. (NIST)

The EU AI Act is important for understanding how high-risk AI systems are increasingly being governed through requirements around risk management, data governance, documentation, logging, human oversight, accuracy, robustness, and cybersecurity. (Digital Strategy)

The OECD AI Principles provide a global policy lens for trustworthy AI, emphasizing human-centered values, transparency, robustness, safety, security, and accountability. (OECD)

Further Reading

- The Two Missing Runtime Layers of the AI Economy

https://www.raktimsingh.com/two-missing-runtime-layers-ai-economy/

- The SENSE–CORE–DRIVER Maturity Framework

https://www.raktimsingh.com/sense-core-driver-maturity-framework/

- The SENSE–DRIVER Tradeoff

https://www.raktimsingh.com/sense-driver-tradeoff/

- The AI Capability Trap

https://www.raktimsingh.com/ai-capability-trap/

- Entity Resolution as Competitive Advantage

https://www.raktimsingh.com/entity-resolution-competitive-advantage-enterprise-ai/

- The Simulation Layer for Enterprise AI

https://www.raktimsingh.com/simulation-layer-enterprise-ai/

- The New Enterprise AI Operating Model: How CIOs Are Redesigning Organizations for the Age of AI Agents – Raktim Singh

- The Enterprise AI Starting Point Problem: Why CIOs Don’t Know Where to Begin – Raktim Singh

- What SENSE–CORE–DRIVER Is NOT: The Missing Continuity Model in Enterprise AI – Raktim Singh

- What Is the SENSE–CORE–DRIVER Framework? The Missing Architecture for Enterprise AI and Intelligent Institutions – Raktim Singh

About the Author

Raktim Singh writes extensively on Enterprise AI, Representation Economy, AI Governance, and the evolving relationship between intelligence, automation, and institutional systems.

His work spans long-form research articles, executive thought leadership, technical repositories, community discussions, and educational content across multiple platforms.

Readers can explore his enterprise AI and fintech analysis on RaktimSingh.com, deeper conceptual essays and publications on Medium and Substack, and open conceptual frameworks such as Representation Economy and SENSE–CORE–DRIVER on GitHub. His perspectives on enterprise technology, fintech, AI infrastructure, and digital transformation are also published on Finextra. Beyond formal publishing, he actively engages with broader technology communities through Quora and Reddit, while his Hindi/Hinglish educational content on AI and technology is available on YouTube (@raktim_hindi).

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.