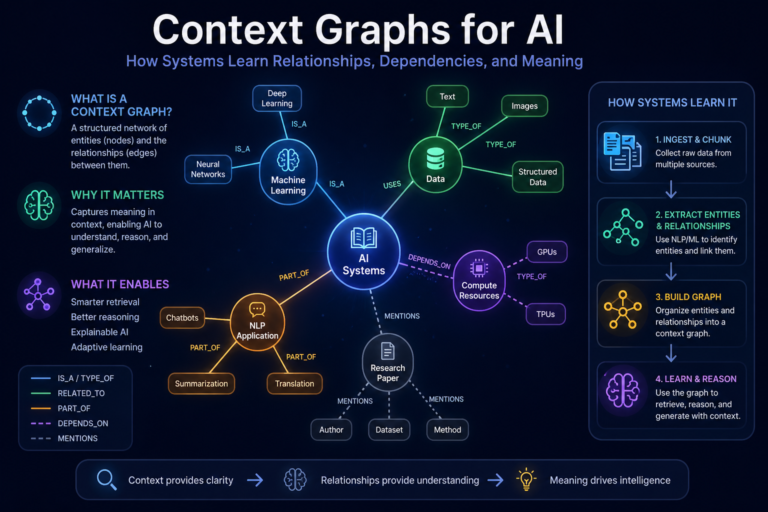

Context Graphs for AI

AI systems are becoming more powerful, but many of them still suffer from a simple weakness: they do not truly understand the world around a question.

They can summarize a document. They can answer a prompt. They can generate a report. But when the answer depends on relationships, dependencies, history, policy, ownership, sequence, authority, and meaning, many AI systems begin to struggle.

A customer is not just a row in a CRM system.

A supplier is not just a name in an ERP table.

A loan application is not just a document.

A hospital patient record is not just a file.

A software incident is not just a ticket.

A business decision is not just an output.

Each of these exists inside a web of relationships.

Who is connected to whom?

Which system is dependent on which process?

Which policy applies to which decision?

Which event happened before another event?

Which exception changed the meaning of the rule?

Which piece of evidence can be trusted?

Which entity is being represented correctly?

This is where context graphs become important.

A context graph is a structured representation of entities, relationships, events, rules, evidence, and meaning. It helps AI systems move beyond isolated data retrieval and toward connected understanding.

In simple language, a context graph gives AI the ability to see not just the information, but the relationships around the information.

That distinction will define the next phase of enterprise AI.

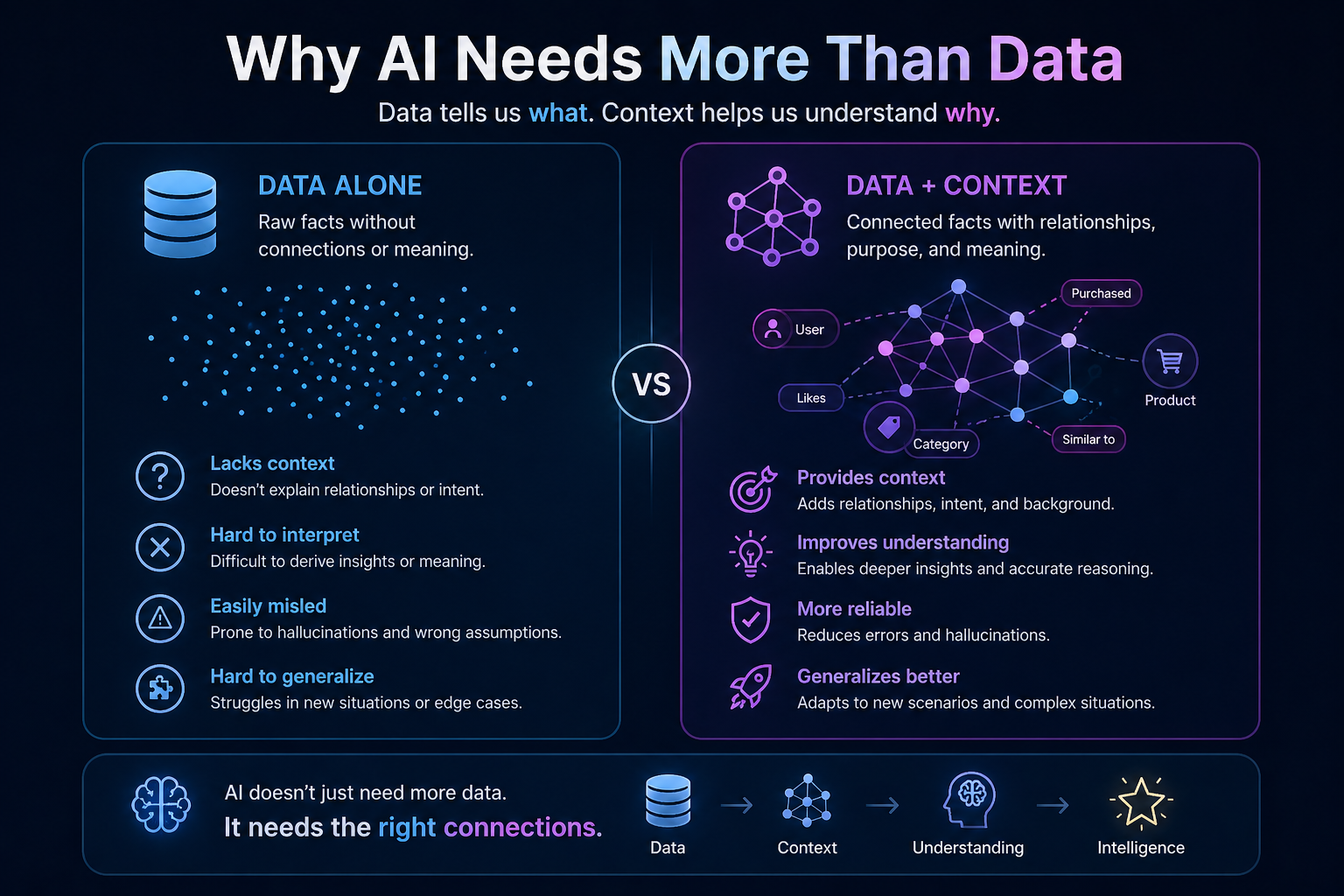

Why AI Needs More Than Data

Most enterprises already have enormous amounts of data. They have databases, documents, emails, contracts, policies, logs, tickets, workflows, customer records, supplier records, product catalogs, transaction histories, and operational dashboards.

But data alone does not create understanding.

A document can say that a vendor is approved.

A transaction system can show that an invoice was paid.

A contract can define payment terms.

A risk system can assign a rating.

An email thread can contain an exception approval.

Each system holds part of the truth. But the meaning emerges only when these pieces are connected.

This is the real problem with many AI deployments. The model is not always the bottleneck. The bottleneck is the missing context around the model.

That is why context engineering has become such an important topic in AI. Anthropic describes context engineering as the practice of building effective, steerable agents by giving them the right context, not simply larger prompts. (Anthropic) Neo4j similarly describes context engineering as the discipline of designing, storing, and retrieving context so agents remain grounded, explainable, and auditable. (Neo4j)

The deeper point is this: AI does not become enterprise-ready merely by adding more tokens, more documents, or more prompts. It becomes enterprise-ready when the surrounding reality is represented properly.

That is the role of context graphs.

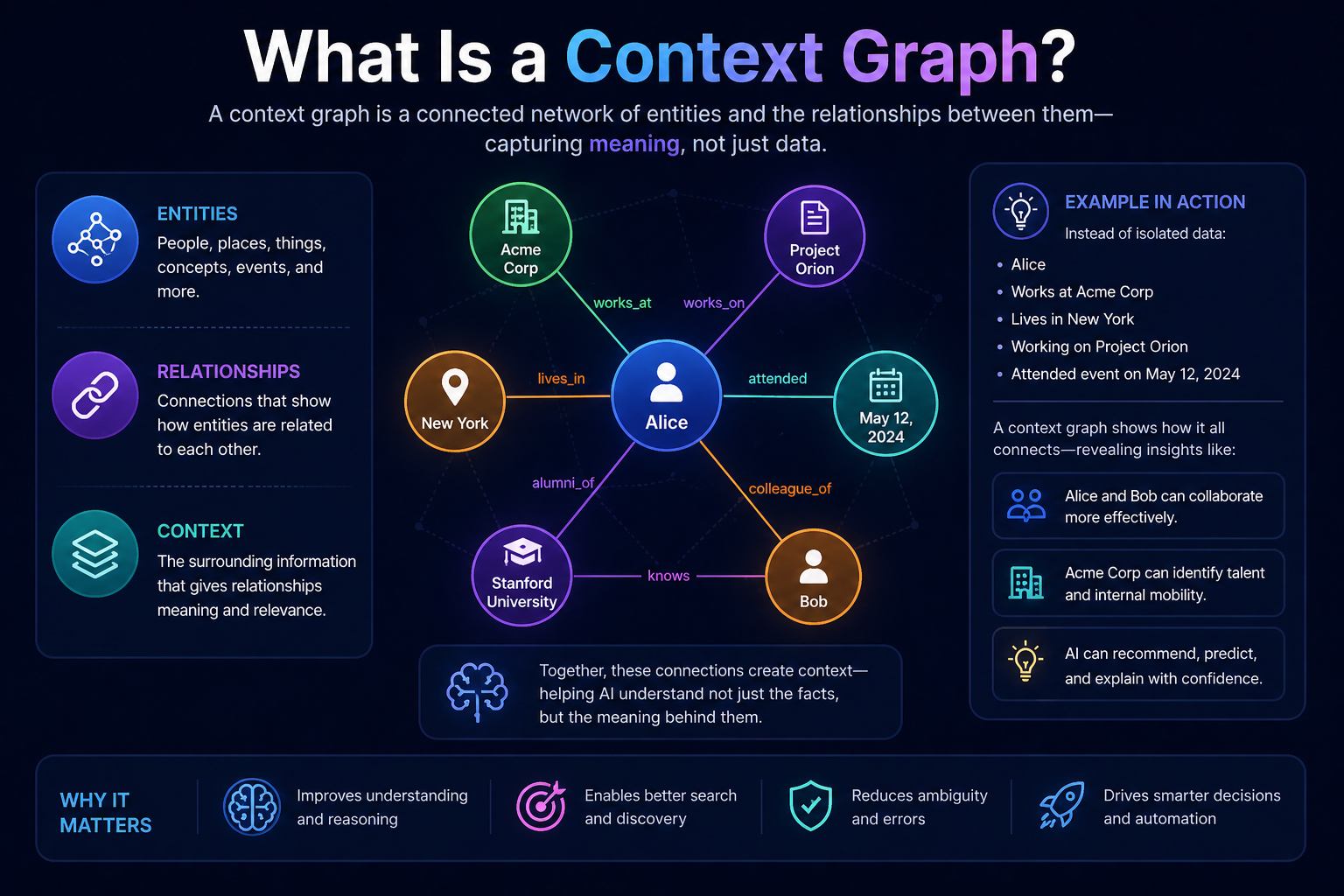

What Is a Context Graph?

A context graph is a connected model of reality built for AI consumption.

It connects entities such as customers, employees, suppliers, applications, assets, products, policies, locations, events, transactions, documents, and decisions. It also connects the relationships between them.

A simple context graph may show:

A customer owns an account.

The account is linked to a loan.

The loan is governed by a policy.

The policy changed on a certain date.

The customer raised a complaint.

The complaint was handled by a team.

The resolution depended on an exception.

The exception was approved by a specific authority.

Now the AI system does not just see isolated facts. It sees a connected situation.
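The chain of facts above can be sketched as a small set of subject-relation-object triples. This is only an illustrative sketch: the identifiers (`customer:C1`, `policy:P1`, and so on) and the relation names are hypothetical, and the policy change date is left out because time is handled separately later in this article.

```python
from collections import defaultdict

# Hypothetical identifiers; each triple is (subject, relation, object).
TRIPLES = [
    ("customer:C1", "OWNS", "account:A1"),
    ("account:A1", "LINKED_TO", "loan:L1"),
    ("loan:L1", "GOVERNED_BY", "policy:P1"),
    ("customer:C1", "RAISED", "complaint:K1"),
    ("complaint:K1", "HANDLED_BY", "team:T1"),
    ("complaint:K1", "RESOLVED_VIA", "exception:E1"),
    ("exception:E1", "APPROVED_BY", "authority:AU1"),
]


def build_index(triples):
    """Index outgoing edges by subject for quick lookup."""
    index = defaultdict(list)
    for subject, relation, obj in triples:
        index[subject].append((relation, obj))
    return index


def connected_situation(index, start):
    """Collect every fact reachable from `start` by following outgoing edges."""
    facts, stack, seen = [], [start], {start}
    while stack:
        node = stack.pop()
        for relation, obj in index.get(node, []):
            facts.append((node, relation, obj))
            if obj not in seen:
                seen.add(obj)
                stack.append(obj)
    return facts


index = build_index(TRIPLES)
facts = connected_situation(index, "customer:C1")
for subject, relation, obj in facts:
    print(f"{subject} -[{relation}]-> {obj}")
```

Starting from the customer, the traversal reaches the approving authority several hops away, which is the kind of connected situation a flat record lookup would miss.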

This is very different from simply searching documents. Traditional retrieval-augmented generation, or RAG, often retrieves relevant text chunks. That is useful, but it may not be enough when the question depends on multi-step relationships. Microsoft’s GraphRAG work highlights this limitation and combines text extraction, graph construction, network analysis, and LLM summarization to understand private datasets more richly. (Microsoft)

A context graph can be seen as the next maturity layer above ordinary retrieval. It does not merely retrieve content. It organizes meaning.

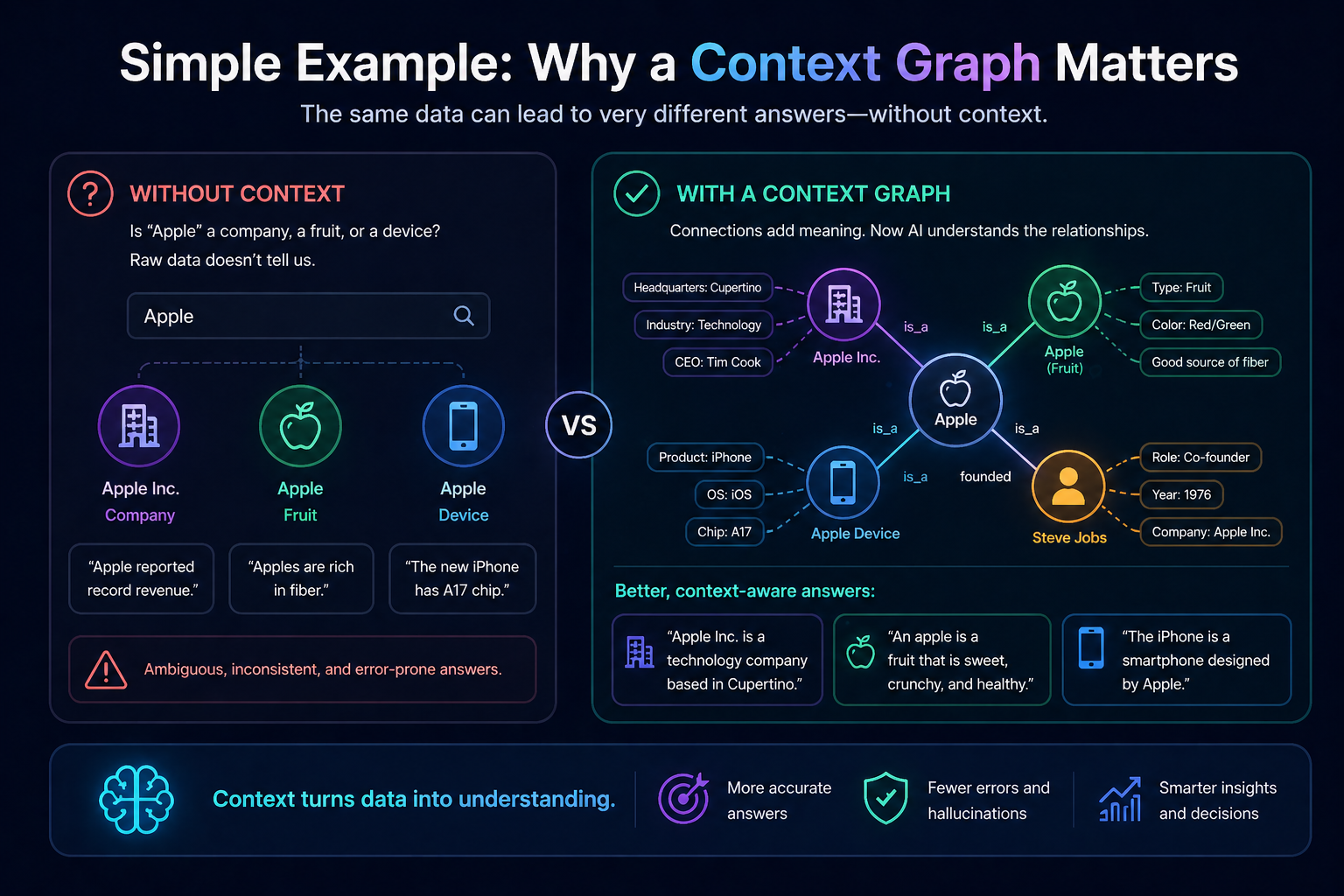

Simple Example: Why a Context Graph Matters

Imagine an AI assistant in a bank.

A relationship manager asks:

“Can we offer this customer a higher credit limit?”

A basic AI system may retrieve the customer’s income, account balance, credit score, and repayment history.

A better AI system may summarize the customer’s profile.

But a context graph-enabled AI system can examine relationships:

The customer owns two accounts.

One account is linked to a business entity.

The business entity has delayed supplier payments.

The customer is a guarantor on another loan.

A policy exception was granted six months ago.

The risk score improved recently, but only after a restructuring.

A new regulatory rule applies to this product category.

A complaint is still unresolved.

An answer built on these connections is not just more detailed. It is more meaningful.

It helps the AI understand the context around the decision.

This is why context graphs are not merely a data architecture idea. They are decision architecture.

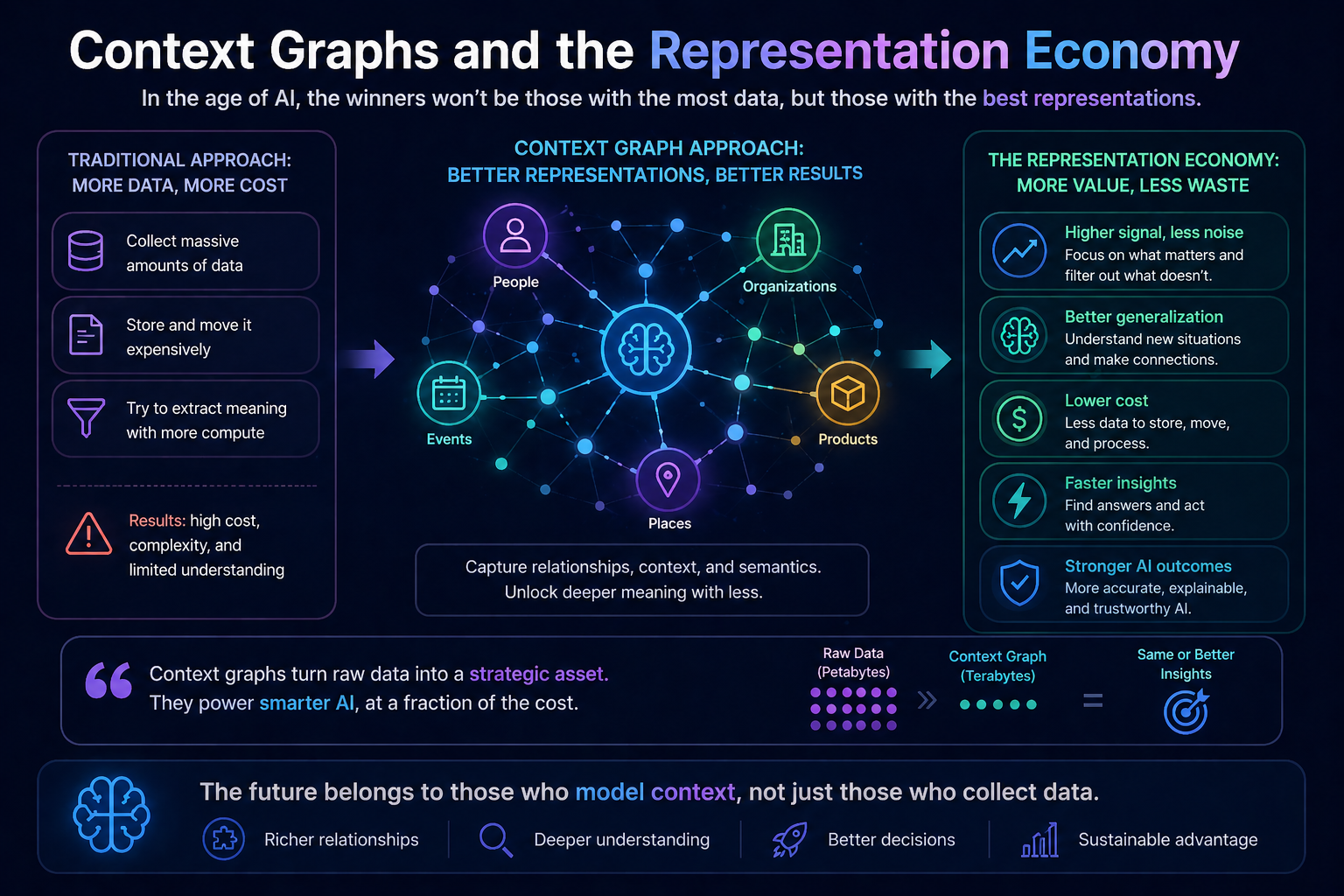

Context Graphs and the Representation Economy

In the Representation Economy, competitive advantage will come from how accurately organizations represent the world before acting on it.

AI does not act on reality directly. It acts on representations of reality.

If the representation is shallow, the decision will be shallow.

If the representation is fragmented, the decision will be fragmented.

If the representation is outdated, the decision will be outdated.

If the representation is biased toward one system, the decision will inherit that bias.

Context graphs strengthen the SENSE layer of the SENSE–CORE–DRIVER framework.

SENSE makes reality machine-legible. It captures signals, attaches them to entities, represents their state, and updates that state as reality changes.

A context graph is one of the most important forms of SENSE infrastructure because it connects signals to entities, entities to relationships, relationships to state, and state to change over time.

The CORE layer — the AI reasoning engine — becomes stronger when it can reason over connected context.

The DRIVER layer — the governance and execution layer — becomes safer when decisions can be traced back to evidence, rules, authority, and recourse.

In other words, context graphs help AI systems know what they are looking at, why it matters, and what constraints should govern the next action.

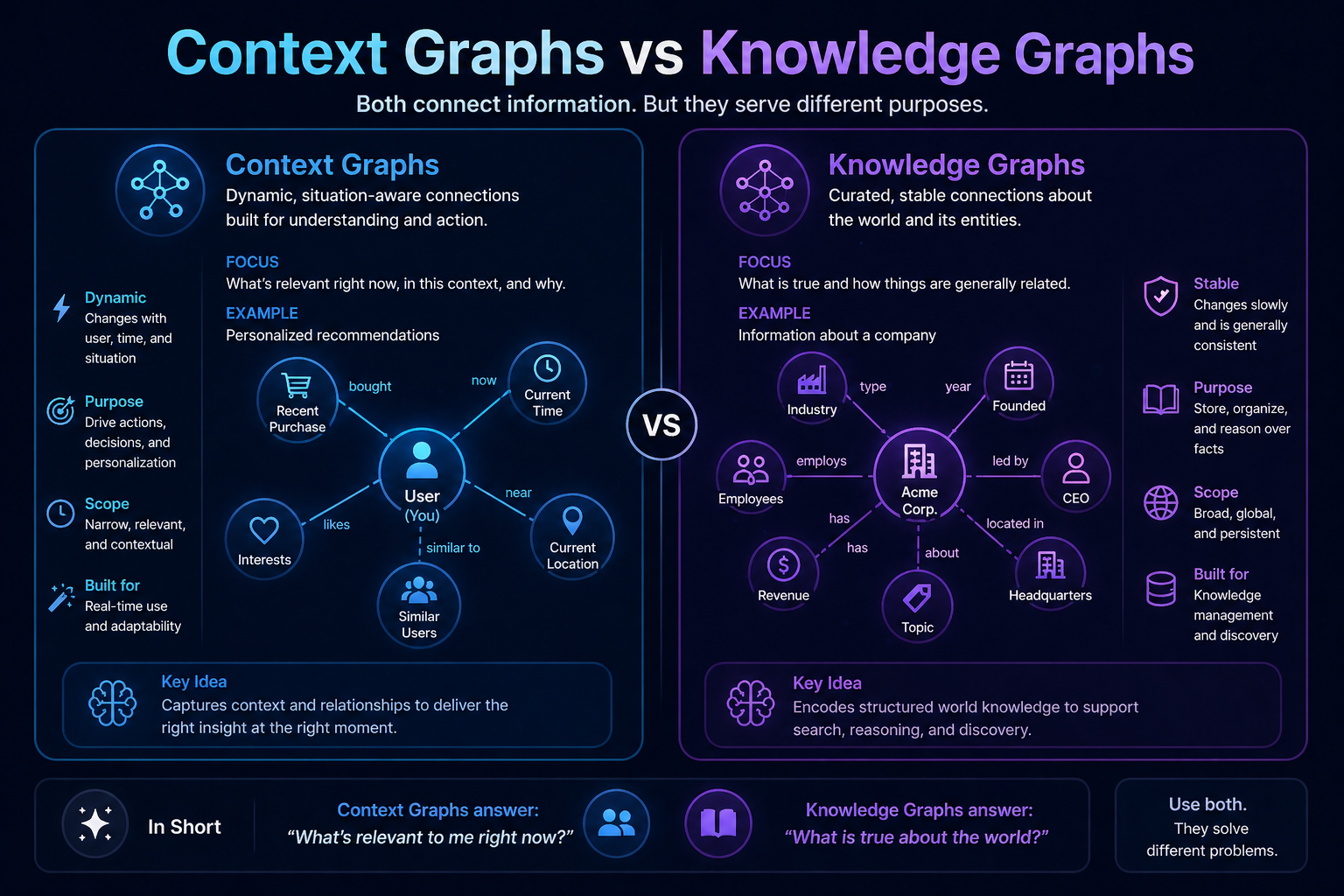

Context Graphs vs Knowledge Graphs

Context graphs are closely related to knowledge graphs, but they are not exactly the same in practical enterprise AI design.

A knowledge graph represents entities and relationships in a structured way. For example, it may show that a supplier provides a component, that a component belongs to a product, and that a product is sold in a region.

A context graph goes further. It is optimized for AI reasoning, context assembly, provenance, relevance, decision support, and dynamic use.

Neo4j describes a context graph as a knowledge graph containing the information necessary to make organizational decisions, with decision traces connected to entities, policies, and precedents. (Neo4j) TrustGraph similarly describes context graphs as knowledge graphs engineered for AI model consumption, including token efficiency, relevance ranking, provenance tracking, and hallucination reduction. (TrustGraph)

The practical difference is this:

A knowledge graph says, “These things are connected.”

A context graph says, “These are the relevant connections the AI needs now to understand, reason, decide, explain, and act.”

That “now” is important.

Enterprise AI does not need all context all the time. It needs the right context for the right task under the right constraints.

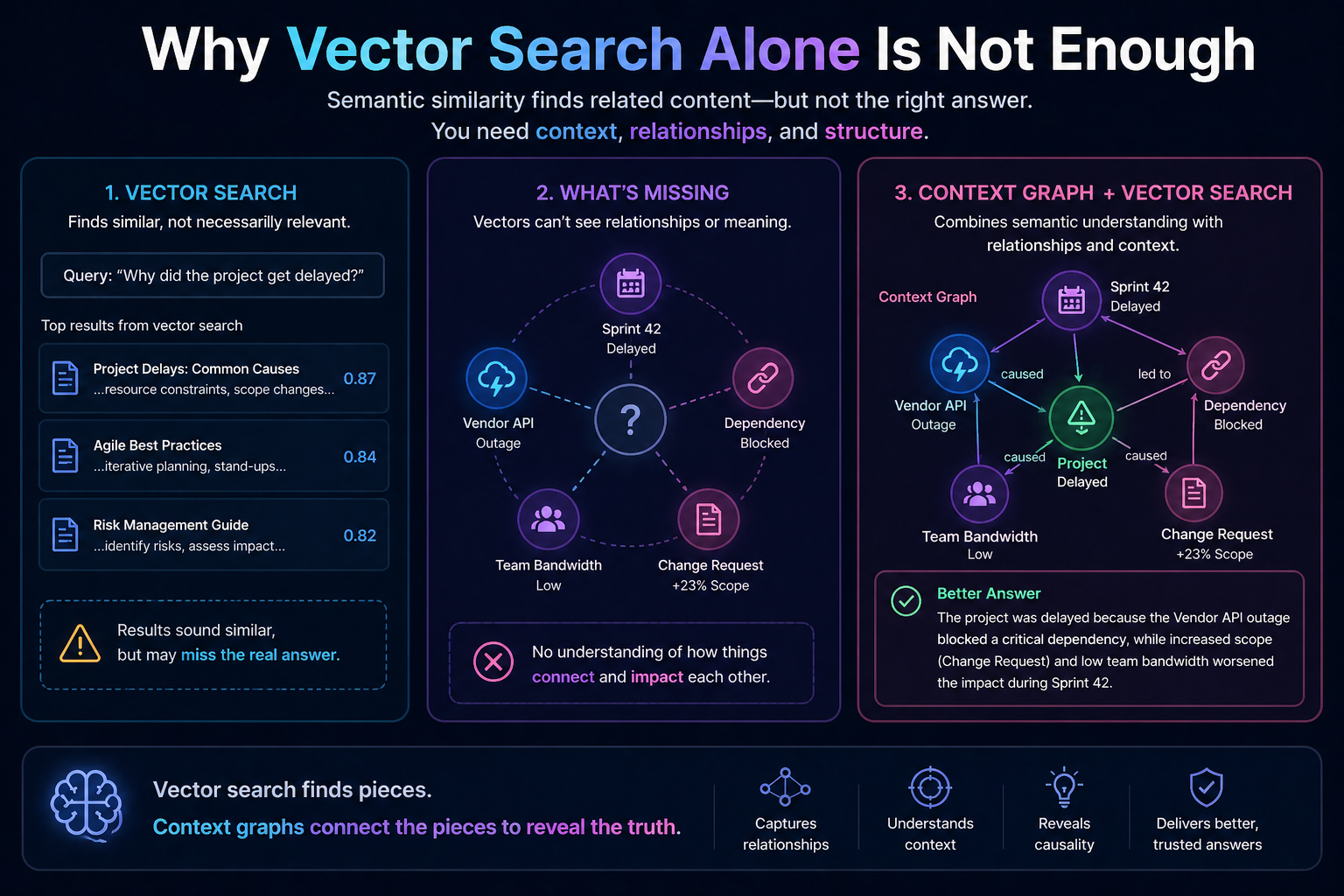

Why Vector Search Alone Is Not Enough

Vector search is extremely useful. It helps AI systems find semantically similar content. If a user asks about refund policy, the system can retrieve relevant policy documents even if the exact words are different.

But vector similarity is not the same as relationship understanding.

A vector database may retrieve documents that sound similar. A context graph can show how things are connected.

For example, suppose an AI assistant is investigating a production outage.

Vector search may retrieve incident reports, log summaries, and troubleshooting guides.

A context graph can show:

Which service failed first.

Which downstream systems depended on it.

Which customer journeys were affected.

Which deployment happened before the incident.

Which team owns the affected component.

Which rollback procedure applies.

Which previous incident had the same dependency pattern.

This is a different level of intelligence.
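One of the questions above, which downstream systems depended on the failed service, can be answered with a plain breadth-first traversal. The sketch below assumes a hypothetical service map (`DEPENDS_ON`) with invented service names; a real deployment would read these edges from a graph store or a service catalog.

```python
from collections import deque

# Hypothetical service map: each key depends on the services in its list.
DEPENDS_ON = {
    "checkout-ui": ["payments-api", "catalog-api"],
    "payments-api": ["auth-service"],
    "catalog-api": ["search-index"],
    "auth-service": [],
    "search-index": [],
}


def downstream_of(failed, depends_on):
    """Map each service that relies on `failed`, directly or transitively, to its hop distance."""
    # Invert the edges so we can walk from a dependency to its dependents.
    dependents = {}
    for service, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(service)

    impact, queue = {}, deque([(failed, 0)])
    while queue:
        service, hops = queue.popleft()
        for dependent in dependents.get(service, []):
            if dependent not in impact:
                impact[dependent] = hops + 1
                queue.append((dependent, hops + 1))
    return impact


impact = downstream_of("auth-service", DEPENDS_ON)
print(impact)  # {'payments-api': 1, 'checkout-ui': 2}
```

The hop distance gives a rough ordering of blast radius: services one hop away fail first, and customer-facing systems further out are affected through them.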

Vector search helps AI find relevant content.

Context graphs help AI understand connected meaning.

The future enterprise AI architecture will likely use both. Vector search will help locate relevant entry points. Graph traversal will help understand relationships and dependencies. GraphRAG approaches already point in this direction by combining semantic retrieval with graph reasoning. (memgraph.com)
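A minimal sketch of that combination, under stated assumptions: the three-dimensional embeddings, document IDs, and edges below are toy values invented for illustration, and cosine similarity over an in-memory dictionary stands in for a real vector index.

```python
import math

# Toy embeddings (hypothetical, three-dimensional for readability).
EMBEDDINGS = {
    "doc:refund-policy": [0.9, 0.1, 0.0],
    "doc:incident-42": [0.1, 0.9, 0.2],
    "doc:rollback-guide": [0.0, 0.8, 0.6],
}

# Hypothetical graph edges linking documents and entities.
EDGES = {
    "doc:incident-42": ["service:payments-api", "team:payments"],
    "doc:rollback-guide": ["service:payments-api"],
    "service:payments-api": ["deploy:release-7"],
}


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_retrieve(query_vec, top_k=1, hops=2):
    """Step 1: vector search picks entry points. Step 2: graph traversal expands them."""
    ranked = sorted(
        EMBEDDINGS, key=lambda doc: cosine(query_vec, EMBEDDINGS[doc]), reverse=True
    )
    entry_points = ranked[:top_k]

    context, frontier = set(entry_points), set(entry_points)
    for _ in range(hops):
        frontier = {n for node in frontier for n in EDGES.get(node, [])} - context
        context |= frontier
    return entry_points, context


entry, context = hybrid_retrieve([0.2, 0.9, 0.1])
print(entry)            # ['doc:incident-42']
print(sorted(context))
```

The query vector only needs to land near one relevant document. The graph then supplies the service, the owning team, and the preceding deployment, none of which has to be semantically similar to the query.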

The Technical Core of Context Graphs

A context graph has several important components.

First, it needs entities. These are the things that exist in the enterprise world: customers, products, suppliers, contracts, invoices, applications, APIs, employees, assets, risks, policies, and incidents.

Second, it needs relationships. These define how entities are connected: owns, reports to, depends on, supplies, approves, violates, triggers, governs, replaces, affects, escalates, and resolves.

Third, it needs attributes. These describe the current state of an entity: status, risk level, owner, location, validity, lifecycle stage, priority, and confidence level.

Fourth, it needs time. Context changes. A customer’s risk profile changes. A policy changes. A supplier’s rating changes. A system dependency changes after a release. Without time, the graph can become misleading.

Fifth, it needs provenance. AI must know where a fact came from. Was it from a contract, a system record, a human approval, an audit log, or a third-party source?

Sixth, it needs permissions. Not every user, agent, or workflow should see every relationship. Context must be governed.

Seventh, it needs confidence. Some connections are certain. Others are inferred. AI systems must distinguish between verified relationships and probable relationships.

These features are what turn a graph from a static knowledge map into living context infrastructure.
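As a rough sketch, several of these components can be carried on each relationship and applied as filters at query time. The `Edge` fields, role names, and sample data below are assumptions made for illustration, not a standard schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Edge:
    source: str                 # entity
    relation: str               # relationship type
    target: str                 # entity or attribute value
    valid_from: date            # time: start of validity
    valid_to: Optional[date]    # time: None means still valid
    provenance: str             # where the fact came from
    allowed_roles: frozenset    # permissions: roles allowed to see this edge
    confidence: float           # 1.0 for verified facts, lower for probable ones
    inferred: bool = False      # True when the edge was inferred, not recorded


def visible_edges(edges, as_of, role, min_confidence=0.0):
    """Return edges valid on `as_of`, visible to `role`, and at least `min_confidence`."""
    result = []
    for e in edges:
        in_window = e.valid_from <= as_of and (e.valid_to is None or as_of < e.valid_to)
        if in_window and role in e.allowed_roles and e.confidence >= min_confidence:
            result.append(e)
    return result


# Hypothetical sample data.
STAFF = frozenset({"analyst", "auditor"})
EDGES = [
    Edge("supplier:S1", "RATED", "rating:A", date(2023, 1, 1), date(2024, 1, 1),
         "risk-system", STAFF, 1.0),
    Edge("supplier:S1", "RATED", "rating:B", date(2024, 1, 1), None,
         "risk-system", STAFF, 1.0),
    Edge("supplier:S1", "AFFILIATED_WITH", "company:X", date(2023, 6, 1), None,
         "news-extraction", frozenset({"auditor"}), 0.6, inferred=True),
]

past = visible_edges(EDGES, date(2023, 7, 1), "analyst")
current = visible_edges(EDGES, date(2024, 3, 1), "auditor", min_confidence=0.8)
print([e.target for e in past])     # ['rating:A']
print([e.target for e in current])  # ['rating:B']
```

The same supplier yields different answers depending on the date, the caller's role, and the confidence threshold, which is the practical meaning of context that is temporal, governed, and graded.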

How Context Graphs Help AI Agents

AI agents are not just answer generators. They plan, call tools, retrieve data, trigger workflows, and sometimes act across systems.

This makes context even more important.

An AI agent that writes a summary can tolerate limited context.

An AI agent that changes a customer record cannot.

An AI agent that approves a claim cannot.

An AI agent that escalates a security event cannot.

An AI agent that recommends a financial action cannot.

Agents need to know the environment in which they are acting.

A context graph helps an agent answer questions such as:

What entity am I dealing with?

What is its current state?

Which systems are connected to it?

Which policies apply?

What happened before?

Who has authority?

What evidence supports this action?

What could be affected if I proceed?

What should be logged for audit?

What should be reversible?

This is where context graphs connect directly with DRIVER.

For AI agents, governance cannot be an afterthought. The agent must operate within a represented world of identity, authority, constraints, evidence, and recourse.

Without that, agentic AI becomes automation without accountability.
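A few of the questions listed above (which policies apply, who has authority, what evidence supports the action, what should be logged) can be turned into a pre-action check. The sketch below is one possible shape for such a check, assuming a hypothetical dictionary-based graph; the keys, entity IDs, and action names are invented for illustration.

```python
# Hypothetical graph slices, keyed by the kind of relationship they hold.
GRAPH = {
    "governed_by": {"claim:77": ["policy:claims-v3"]},
    "policy_allows": {"policy:claims-v3": {"summarize", "approve"}},
    "authority": {("agent:claims-bot", "summarize"), ("user:adjuster-9", "approve")},
    "evidence": {"claim:77": ["doc:invoice-1"]},
}


def check_action(graph, actor, action, entity):
    """Check policy, authority, and evidence before acting, and return an audit record."""
    reasons = []

    policies = graph["governed_by"].get(entity, [])
    if not policies:
        reasons.append("no governing policy is recorded for this entity")
    elif not any(action in graph["policy_allows"].get(p, set()) for p in policies):
        reasons.append(f"no governing policy allows '{action}'")

    if (actor, action) not in graph["authority"]:
        reasons.append(f"{actor} has no authority to {action}")

    evidence = graph["evidence"].get(entity, [])
    if not evidence:
        reasons.append("no supporting evidence is linked")

    # The returned record is what would be written to the audit log.
    return {
        "actor": actor,
        "action": action,
        "entity": entity,
        "policies": policies,
        "evidence": evidence,
        "allowed": not reasons,
        "reasons": reasons,
    }


denied = check_action(GRAPH, "agent:claims-bot", "approve", "claim:77")
allowed = check_action(GRAPH, "agent:claims-bot", "summarize", "claim:77")
print(denied["allowed"], denied["reasons"])
print(allowed["allowed"])
```

The agent may summarize the claim but not approve it, and either outcome leaves a record of the policies and evidence that were consulted.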

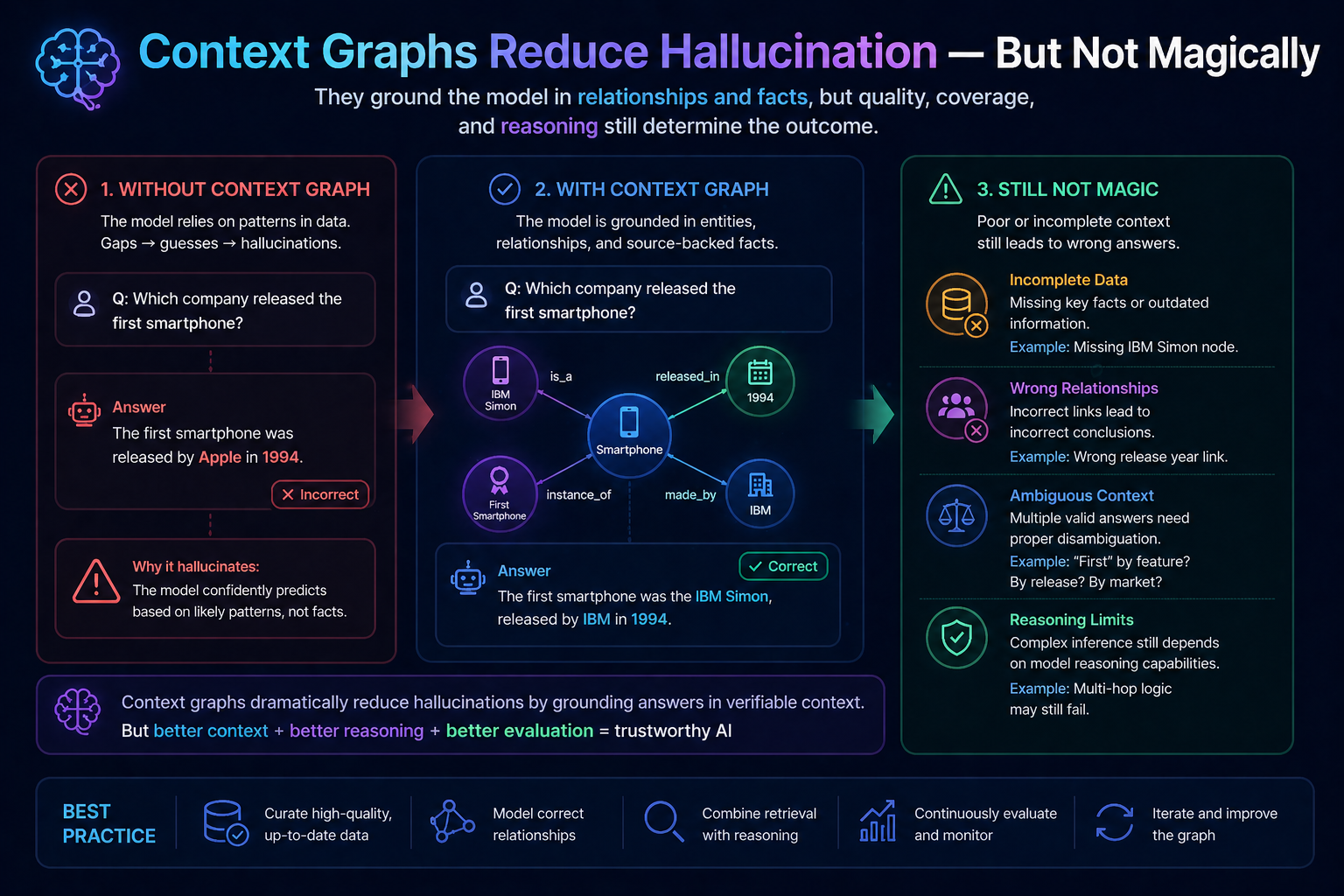

Context Graphs Reduce Hallucination — But Not Magically

Many people say knowledge graphs and context graphs reduce hallucination. That is directionally true, but it should be understood carefully.

A context graph does not make an AI model perfect.

What it does is reduce ambiguity.

It gives the model structured facts, connected evidence, and relationship paths. It helps the model avoid guessing when the answer depends on enterprise-specific reality.

For example, if a model is asked, “Can this supplier be used for this project?” it may generate a generic answer from policy documents.

A context graph can ground the answer:

This supplier is approved for one category but not another.

The approval expires next month.

The supplier has an unresolved quality issue.

The project belongs to a regulated business unit.

The policy requires additional review.

The last exception was denied.

The model is less likely to hallucinate because it is no longer reasoning only from language. It is reasoning from structured context.

Atlan notes that combining knowledge graphs with LLMs helps integrate structured relationships with language understanding and can improve accuracy and reduce hallucinations. (Atlan)

The real benefit is not just fewer wrong answers. It is better explainability.

The Most Important Output: Explanation Paths

In enterprise AI, the answer is often not enough.

A business user wants to know why.

A compliance team wants to know based on what evidence.

A regulator wants to know which rule was applied.

An auditor wants to know who approved the exception.

A customer wants to know how to appeal.

A manager wants to know what changed.

Context graphs can provide explanation paths.

Instead of saying, “The supplier is high risk,” the AI can say:

The supplier is high risk because it is connected to three delayed shipments, two unresolved quality issues, one expired certification, and a dependency on a region currently under disruption review.

This changes the nature of AI output.

The system is no longer producing a black-box answer. It is producing a connected explanation.

That is critical for trust.
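An explanation path like the supplier example can be assembled directly from graph edges. The following sketch assumes hypothetical edge data and relation names; it counts the risk-bearing relationships attached to an entity and returns both a sentence and the underlying evidence nodes.

```python
from collections import Counter

# Hypothetical risk-bearing edges: (source, relation, target).
RISK_EDGES = [
    ("supplier:S9", "HAS_DELAYED_SHIPMENT", "shipment:101"),
    ("supplier:S9", "HAS_DELAYED_SHIPMENT", "shipment:117"),
    ("supplier:S9", "HAS_DELAYED_SHIPMENT", "shipment:130"),
    ("supplier:S9", "HAS_OPEN_QUALITY_ISSUE", "issue:Q4"),
    ("supplier:S9", "HAS_OPEN_QUALITY_ISSUE", "issue:Q7"),
    ("supplier:S9", "HAS_EXPIRED_CERT", "cert:ISO-1"),
    ("supplier:S9", "DEPENDS_ON_REGION_UNDER_REVIEW", "region:R2"),
]

# Relation -> (singular phrase, plural phrase) used when rendering the explanation.
LABELS = {
    "HAS_DELAYED_SHIPMENT": ("delayed shipment", "delayed shipments"),
    "HAS_OPEN_QUALITY_ISSUE": ("unresolved quality issue", "unresolved quality issues"),
    "HAS_EXPIRED_CERT": ("expired certification", "expired certifications"),
    "DEPENDS_ON_REGION_UNDER_REVIEW": (
        "region under disruption review",
        "regions under disruption review",
    ),
}


def explain(entity, edges, labels):
    """Return a sentence summarizing risk edges, plus the evidence nodes behind it."""
    relevant = [(rel, dst) for src, rel, dst in edges if src == entity and rel in labels]
    counts = Counter(rel for rel, _ in relevant)
    parts = []
    for rel, (singular, plural) in labels.items():
        n = counts.get(rel, 0)
        if n:
            parts.append(f"{n} {singular if n == 1 else plural}")
    sentence = f"{entity} is flagged because it is connected to " + ", ".join(parts) + "."
    evidence = [dst for _, dst in relevant]
    return sentence, evidence


sentence, evidence = explain("supplier:S9", RISK_EDGES, LABELS)
print(sentence)
print(evidence)
```

Because the sentence is generated from edges, every clause can be traced to specific nodes, which is what lets an auditor or business user follow the explanation back to its evidence.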

Context Graphs and Enterprise Memory

Most organizations do not lack information. They lack institutional memory.

Important knowledge is scattered across documents, dashboards, emails, tickets, meetings, contracts, and people’s heads. When employees move, teams reorganize, systems change, or vendors rotate, context gets lost.

A context graph can become a form of enterprise memory.

It remembers not only what happened, but how things were connected.

Why was a decision made?

Which options were rejected?

Which policy was interpreted differently?

Which dependency caused the risk?

Which exception became a precedent?

Which customer segment was affected?

Which workflow failed repeatedly?

This is especially important for long-running enterprise processes. AI systems cannot become reliable institutional partners if every interaction begins with context loss.

The next generation of enterprise AI will not only retrieve knowledge. It will preserve context.

Context Graphs as Strategic Moats

In the AI era, models may become increasingly available. Tools may become easier to access. Interfaces may become commoditized.

But a company’s context graph will be hard to copy.

Why?

Because it is built from years of relationships, decisions, exceptions, process history, operational dependencies, customer interactions, governance rules, and domain-specific meaning.

Competitors may buy similar models.

They may use similar cloud platforms.

They may deploy similar agents.

But they cannot easily replicate the living context of another enterprise.

This is why context graphs can become strategic moats.

The deepest advantage will not come from having the most data. It will come from having the most accurate, connected, governed, and usable representation of reality.

This is the heart of the Representation Economy.

The Risk: Bad Context Graphs Can Make AI Worse

A weak context graph can be dangerous.

If entities are wrongly resolved, the AI may attach the wrong history to the wrong customer.

If relationships are outdated, the AI may act on old dependencies.

If provenance is missing, the AI may treat weak evidence as strong evidence.

If permissions are not enforced, the AI may expose sensitive connections.

If inferred relationships are not marked clearly, the AI may present assumptions as facts.

If temporal validity is ignored, the AI may apply a policy that no longer exists.

This is why context graphs must be governed carefully. They are not just technical assets. They are representation assets.

A bad context graph creates bad institutional memory.

And bad institutional memory at AI speed can create serious business risk.

How Enterprises Should Build Context Graphs

Enterprises should not start by trying to graph everything.

That usually fails.

They should start with decision-critical use cases.

For example:

Customer risk assessment.

Supplier risk management.

Software incident resolution.

Claims processing.

Regulatory compliance.

Enterprise search.

Cybersecurity investigation.

Agentic workflow execution.

Product lifecycle management.

Employee knowledge discovery.

For each use case, the enterprise should ask:

Which entities matter?

Which relationships matter?

Which decisions depend on those relationships?

Which policies constrain the decision?

Which systems contain the evidence?

Which events change the state?

Which users or agents need access?

Which explanation paths are required?

This use-case-led approach prevents context graph projects from becoming abstract data exercises.

The goal is not to build a beautiful graph.

The goal is to build a usable representation layer for better AI decisions.

The Architecture: From Documents to Decisions

A mature context graph architecture may include several layers.

The first layer is ingestion. It brings data from documents, databases, APIs, logs, tickets, policies, contracts, and workflows.

The second layer is entity resolution. It identifies whether different records refer to the same real-world entity.

The third layer is relationship extraction. It detects connections between entities, events, rules, and actions.

The fourth layer is graph storage. It stores entities, relationships, attributes, time, provenance, and permissions.

The fifth layer is retrieval and traversal. It retrieves not only text chunks but connected subgraphs relevant to a question.

The sixth layer is AI reasoning. The model uses the graph context to answer, summarize, plan, recommend, or act.

The seventh layer is governance and feedback. It records what context was used, what decision was made, what evidence supported it, and what changed afterward.

This is the movement from document retrieval to decision intelligence.
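To make the second layer concrete, here is a deliberately naive entity-resolution sketch. It assumes records from hypothetical source systems and groups them by a normalized name; production systems typically use richer matching (addresses, identifiers, probabilistic scoring), so treat this only as an illustration of the idea.

```python
import re
from collections import defaultdict

# Assumption: a small, illustrative set of legal suffixes to ignore when matching.
LEGAL_SUFFIXES = {"inc", "ltd", "llc", "corp", "co"}


def normalize(name):
    """Lowercase, strip punctuation, and drop legal suffixes to form a match key."""
    tokens = re.sub(r"[^a-z0-9 ]", " ", name.lower()).split()
    return " ".join(t for t in tokens if t not in LEGAL_SUFFIXES)


def resolve_entities(records):
    """Group record IDs that share the same normalized name."""
    clusters = defaultdict(list)
    for record in records:
        clusters[normalize(record["name"])].append(record["id"])
    return dict(clusters)


# Hypothetical records from three source systems.
RECORDS = [
    {"id": "crm:17", "name": "Acme Corp."},
    {"id": "erp:88", "name": "ACME corp"},
    {"id": "ap:3", "name": "Acme, Inc."},
    {"id": "crm:18", "name": "Apex Ltd"},
]

clusters = resolve_entities(RECORDS)
print(clusters)  # {'acme': ['crm:17', 'erp:88', 'ap:3'], 'apex': ['crm:18']}
```

Each cluster becomes a single node in the graph, so that relationships extracted in the next layer attach to one real-world entity instead of three disconnected records. Name-only matching can also merge entities that are genuinely different, which is why the article's warning about wrongly resolved entities applies here.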

Why Context Graphs Matter for GEO and AI Search

Context graphs are also important for Generative Engine Optimization, or GEO.

As AI search engines and answer engines become more influential, they will favor content that is clearly structured, entity-rich, well-connected, and easy to cite.

A website, company, or author that represents concepts clearly will be easier for AI systems to understand and cite.

This matters for thought leadership.

If an article defines context graphs clearly, connects them to related terms such as knowledge graphs, GraphRAG, context engineering, entity resolution, AI agents, enterprise memory, and governance, and explains the relationships among these ideas, it becomes more machine-readable.

In other words, GEO is not only about keywords. It is about representation quality.

The better your ideas are represented, the more likely AI systems are to retrieve, summarize, and cite them correctly.

The Future: Context Graphs Will Become AI Infrastructure

Today, many companies are still experimenting with AI at the application layer. They build chatbots, copilots, assistants, and agents.

But the real battle will move deeper.

The next competition will be over context infrastructure.

Who can represent customers better?

Who can represent operations better?

Who can represent risk better?

Who can represent dependencies better?

Who can represent decisions better?

Who can represent reality in a way AI can use safely?

Context graphs will become one of the core foundations of this shift.

They will sit between data and decision.

They will connect SENSE to CORE.

They will give DRIVER the evidence and legitimacy needed for action.

The best AI systems will not simply answer questions. They will understand the connected world behind the question.

That is the promise of context graphs.

And that is why they matter.

Conclusion: The Future Belongs to Firms That Can Represent Context

AI models are becoming more capable. But enterprises will not win merely by adopting better models.

They will win by building better representations.

Context graphs are a major step in that direction. They help AI systems understand relationships, dependencies, evidence, time, authority, and meaning. They turn scattered enterprise knowledge into connected intelligence.

In the Representation Economy, this is not a technical detail. It is a strategic foundation.

Because the future of AI will not be decided only by who has the most data or the largest model.

It will be decided by who can represent reality well enough for AI to reason, decide, explain, and act responsibly.

That is the real power of context graphs.

FAQ

What is a context graph in AI?

A context graph is a structured representation of entities, relationships, dependencies, and surrounding situational information that helps AI systems understand meaning rather than just raw data.

How is a context graph different from a knowledge graph?

Knowledge graphs model relatively stable world knowledge, while context graphs capture dynamic, situational, and relevance-based relationships for real-time reasoning and decision-making.

Why are context graphs important for AI?

They improve retrieval accuracy, reduce hallucinations, enhance reasoning, and help AI systems understand how pieces of information relate to each other.

Do context graphs replace vector search?

No. Context graphs complement vector search by adding structure, relationships, and causal understanding to semantic similarity retrieval.

Can context graphs reduce hallucinations?

Yes—but not completely. They reduce hallucinations by grounding AI in structured relationships, though data quality and model reasoning still matter.

Glossary

Context Graph

A structured graph that models entities, relationships, dependencies, and situational context to help AI systems understand meaning beyond isolated data points.

Entity

A distinct person, object, organization, event, concept, or asset represented as a node in a graph.

Relationship

A connection between entities that defines how they are associated, such as “works for,” “owns,” “depends on,” or “caused by.”

Dependency

A special type of relationship showing that one entity, process, or event relies on another.

Semantic Similarity

A measure of how closely two pieces of content are related in meaning, often used in vector search systems.

Vector Search

A retrieval technique that finds semantically similar content by comparing embedding vectors rather than exact keywords.

Embedding

A numerical representation of data (text, image, etc.) in vector space used by AI models for similarity and semantic reasoning.

Knowledge Graph

A structured graph of curated facts and relationships about entities, typically representing relatively stable world knowledge.

GraphRAG

A Retrieval-Augmented Generation architecture that combines graph structures with LLM retrieval to improve grounding and reasoning.

Hallucination

When an AI system generates plausible-sounding but incorrect or fabricated information.

Grounding

The process of anchoring AI outputs in verifiable data, facts, or structured context.

Representation Layer

The architectural layer responsible for modeling reality in machine-readable form before reasoning or decision-making occurs.

Machine-Legible Reality

A state where real-world entities, events, and relationships are represented in structured formats that machines can reliably interpret.

Contextual Retrieval

Information retrieval enhanced with situational, relational, or temporal context rather than semantic similarity alone.

Graph Database

A database optimized for storing and querying graph-structured data such as nodes and relationships.

References and further reading

Foundational Graph / Knowledge Graph References

- Google Structured Data / Knowledge Graph Foundations

https://developers.google.com/search/docs/appearance/structured-data/intro-structured-data

- Stanford Encyclopedia / Knowledge Graph Concepts

https://plato.stanford.edu/

GraphRAG / Context + Retrieval

- Microsoft GraphRAG Research

https://www.microsoft.com/en-us/research/project/graphrag/

- Neo4j GraphRAG Developer Guide

https://neo4j.com/developer/genai-ecosystem/graph-rag/

Enterprise / Technical Architecture References

- AWS on Knowledge Graphs for Generative AI

https://aws.amazon.com/what-is/knowledge-graph/

- IBM on Knowledge Graphs and AI

https://www.ibm.com/think/topics/knowledge-graph

Hallucination / Grounding / RAG Research

- Retrieval-Augmented Generation (Original Meta Paper)

https://arxiv.org/abs/2005.11401

- GraphRAG Research Paper / Microsoft

https://arxiv.org/abs/2404.16130

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The New Company Stack — business categories emerging in the Representation Economy. (raktimsingh.com)

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy Explained: More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained – Raktim Singh

- Representation Economics: The New Law of AI Value Creation (raktimsingh.com)

- What Is the Representation Economy? Guide to SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Economy and the SENSE–CORE–DRIVER Framework (raktimsingh.com)

- More Questions on SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Standards: Who Will Write the GAAP of the AI Economy? – Raktim Singh

- The Authority Graph: Why AI Will Be Governed by Permissions, Not Just Intelligence – Raktim Singh

- The Representation Productivity Paradox: Why AI Fails When Firms Automate Intelligence Before They Upgrade Reality – Raktim Singh

- Representation Origination: Why the Most Valuable AI Companies Will Control How Reality Enters the Machine – Raktim Singh

- Why the Next AI Breakthrough Will Come From Better Representation, Not Bigger Models – Raktim Singh

- The Representation Lifecycle of the Firm: Why Companies Must Redesign SENSE, CORE, and DRIVER to Win in the AI Era – Raktim Singh

- The New Corporate Giants of the AI Era: Why Representation Companies Will Capture the Real Value – Raktim Singh

- The Representation Moat: Why AI Strategy Fails Without a Board-Level Representation Strategy – Raktim Singh

- Entity Resolution as Competitive Advantage: Why Trusted Entity Infrastructure Will Define the Winners of Enterprise AI – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.