What SENSE–CORE–DRIVER Cannot Solve in the AI World

Artificial Intelligence is becoming more powerful every month. But the biggest mistake enterprises, governments, and society can make is believing that stronger AI automatically eliminates uncertainty, ethics problems, human conflict, or institutional failure.

The SENSE–CORE–DRIVER framework and the Representation Economy were designed to explain how intelligent institutions represent reality, reason about it, and act responsibly. But they were never designed to claim that AI can solve every human problem.

In fact, the credibility of a framework increases when it clearly defines its own boundaries.

This article explores what SENSE–CORE–DRIVER cannot solve — including consciousness, truth, alignment, ethics, uncertainty, privacy, enterprise fragmentation, and representation attacks — and why these limitations matter for the future of enterprise AI.

Most AI frameworks fail for one simple reason: they try to explain everything.

They try to explain intelligence, consciousness, alignment, governance, enterprise adoption, regulation, agents, automation, ethics, and productivity in one grand diagram. That may look attractive in a keynote slide, but it rarely survives serious scrutiny.

The SENSE–CORE–DRIVER framework should not make that mistake.

SENSE–CORE–DRIVER addresses an increasingly important question in the AI era:

How do intelligent institutions represent reality, reason over it, and act responsibly through AI?

That is a powerful question. It is also a bounded question.

The framework is useful for understanding enterprise AI, institutional AI architecture, machine-legible reality, governed execution, agentic workflows, accountability, and legitimacy-aware systems. It helps explain why many AI systems fail not because the model is weak, but because the institution has weak representation, weak context, or weak governance.

This matters because enterprise AI failures are increasingly being linked not only to model limitations, but also to governance gaps, poor data quality, weak operating models, unclear authority, and implementation complexity. NIST’s AI Risk Management Framework, for example, frames trustworthy AI around attributes such as validity, reliability, safety, security, resilience, accountability, transparency, explainability, privacy, and fairness. (NIST)

But SENSE–CORE–DRIVER is not a universal theory of AI.

It does not solve every problem in the AI world.

And that is not a weakness.

That is what makes it useful.

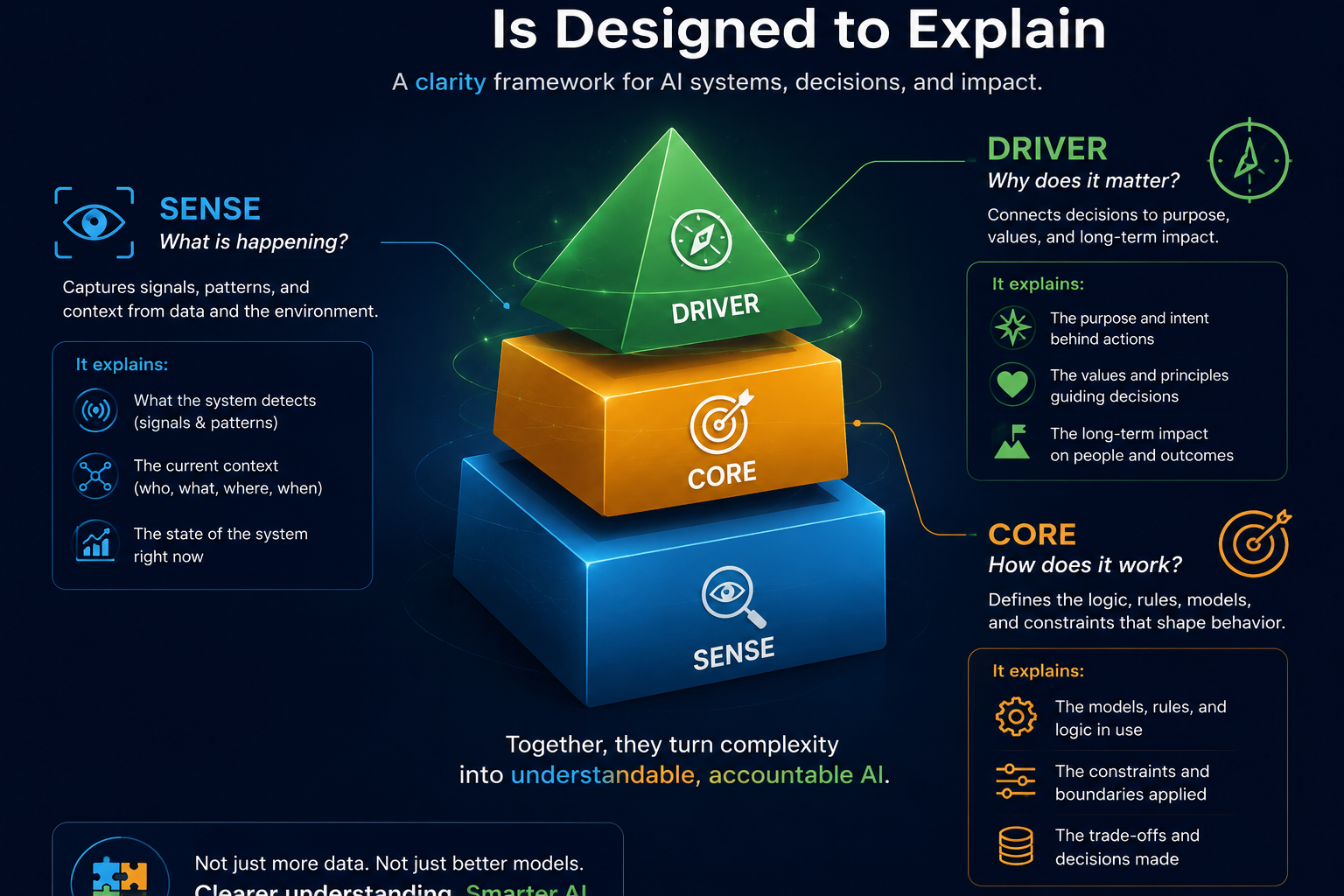

What SENSE–CORE–DRIVER Is Designed to Explain

The framework begins with a simple idea:

AI systems do not act directly on reality.

AI systems act on representations of reality.

That creates three institutional requirements.

First, reality must become machine-legible. That is SENSE.

Second, intelligence must reason over those representations. That is CORE.

Third, action must remain legitimate, authorized, accountable, and governable. That is DRIVER.

In simple terms:

Reality → SENSE → CORE → DRIVER → Governed Intelligent Action

This is especially useful for CIOs, CTOs, enterprise architects, AI governance leaders, risk teams, and digital transformation leaders because it moves the AI conversation beyond “Which model should we use?” toward a deeper question:

Is the institution ready to let intelligence act?

That readiness is not only about model quality. It is about representation quality, contextual continuity, authority boundaries, verification, auditability, and recourse.
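The flow above can be sketched as one function per layer. This is a minimal, hypothetical illustration: the function names `sense`, `core`, and `driver`, the claim-payout scenario, and the `authority_limit` threshold are assumptions made for the example, not part of the framework's definition. The structural point is that CORE reasons only over what SENSE produced, and nothing executes until DRIVER has checked it against an authority boundary and recorded the decision.

```python
from dataclasses import dataclass

# Hypothetical types for illustration; the framework does not prescribe code.

@dataclass
class Representation:
    """SENSE output: a machine-legible snapshot of one entity."""
    entity_id: str
    state: dict

@dataclass
class ProposedAction:
    """CORE output: what the reasoning layer proposes to do."""
    entity_id: str
    kind: str
    amount: float

@dataclass
class Decision:
    """DRIVER output: whether the proposed action may execute, and why."""
    action: ProposedAction
    allowed: bool
    reason: str

def sense(raw_event: dict) -> Representation:
    # SENSE: turn a raw signal into an entity-centred state record.
    return Representation(entity_id=raw_event["claimant"],
                          state={"claim_amount": raw_event["amount"]})

def core(rep: Representation) -> ProposedAction:
    # CORE: toy reasoning that proposes paying the claim in full.
    return ProposedAction(rep.entity_id, "pay_claim", rep.state["claim_amount"])

def driver(action: ProposedAction, authority_limit: float,
           audit_log: list) -> Decision:
    # DRIVER: actions above the delegated limit are routed to a human.
    if action.amount > authority_limit:
        decision = Decision(action, False,
                            "exceeds delegated authority; route to human review")
    else:
        decision = Decision(action, True, "within delegated authority")
    audit_log.append(decision)  # record every decision, allowed or not
    return decision

log: list = []
small = driver(core(sense({"claimant": "c-42", "amount": 80.0})), 100.0, log)
large = driver(core(sense({"claimant": "c-43", "amount": 250.0})), 100.0, log)
print(small.allowed, large.allowed, len(log))  # True False 2
```

Even in this toy, the model's quality lives entirely inside `core`; the readiness questions (what was represented, who may act, what gets logged) live outside it.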

This is where SENSE–CORE–DRIVER is strong.

But there are important areas where it is not enough.

---

It Cannot Solve Fundamental Model Intelligence

SENSE–CORE–DRIVER does not automatically make a model smarter.

It cannot by itself improve:

- reasoning depth

- coding ability

- mathematical accuracy

- scientific discovery

- language generation

- planning quality

- multimodal understanding

Those are primarily CORE capability problems.

If a model cannot solve a complex engineering problem, reason through a scientific hypothesis, write secure code, or interpret a difficult legal clause, SENSE–CORE–DRIVER can help locate where the failure sits, but it cannot magically upgrade the model’s intelligence.

For example, suppose an enterprise AI assistant has perfect access to internal documents, clean metadata, and strong workflow context. That improves SENSE. Suppose it also has clear approval rules and audit logs. That improves DRIVER.

But if the underlying model still misunderstands a technical dependency, generates flawed code, or makes a weak causal inference, the failure is still in CORE.

The framework explains the architecture of institutional intelligence.

It does not replace model research.

---

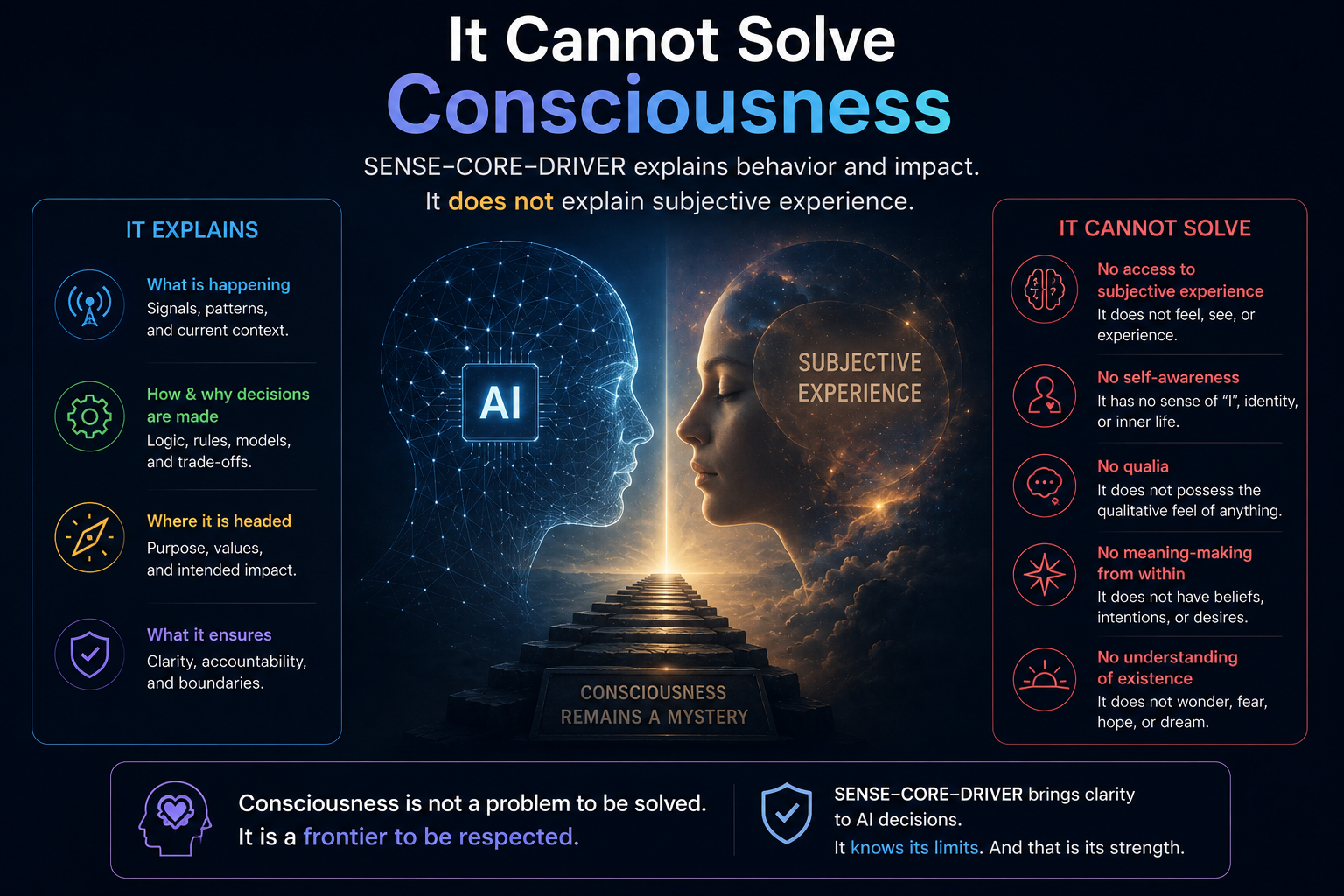

It Cannot Solve Consciousness

SENSE–CORE–DRIVER is not a theory of consciousness.

It does not answer whether AI can become:

- conscious

- sentient

- self-aware

- emotionally aware

- subjectively aware

Those questions belong to philosophy of mind, neuroscience, cognitive science, and AGI research.

The framework does not ask:

Can AI experience the world?

It asks:

Can institutions represent, reason, and act responsibly through AI?

That distinction matters.

A hospital AI system does not need to be conscious to create institutional risk. A banking AI agent does not need subjective experience to make an unauthorized decision. A supply-chain AI system does not need self-awareness to create operational failure.

SENSE–CORE–DRIVER is concerned with institutional usability, not artificial consciousness.

That is its strength.

And its boundary.

---

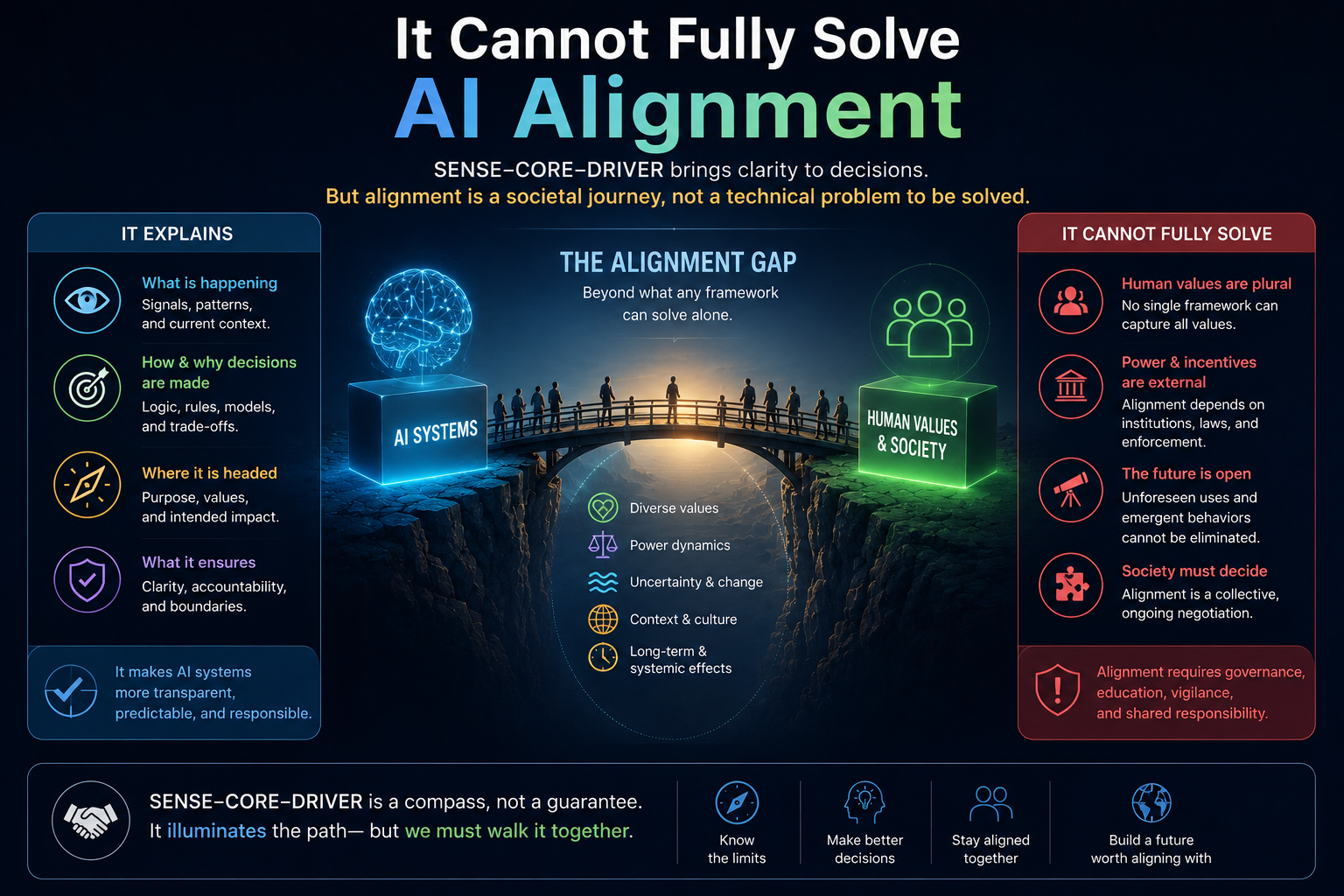

It Cannot Fully Solve AI Alignment

DRIVER helps with alignment-adjacent problems.

It introduces questions such as:

- Who authorized this system?

- What representation of reality did it use?

- Which entity is affected?

- How was the action verified?

- What happens if the system is wrong?

- Is there recourse?
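These questions can be made concrete as a record that must be filled in before an action runs. The sketch below is hypothetical: the `DriverRecord` field names and the rule that every field must be answered before execution are illustrative assumptions, not a specification of DRIVER.

```python
from dataclasses import dataclass, fields
from typing import Optional

@dataclass
class DriverRecord:
    # One field per governance question; None means "no one can answer this yet".
    authorized_by: Optional[str] = None       # Who authorized this system?
    representation_ref: Optional[str] = None  # What representation of reality did it use?
    affected_entity: Optional[str] = None     # Which entity is affected?
    verification: Optional[str] = None        # How was the action verified?
    failure_plan: Optional[str] = None        # What happens if the system is wrong?
    recourse_channel: Optional[str] = None    # Is there recourse?

def unanswered(record: DriverRecord) -> list[str]:
    """Return the names of governance questions the record leaves open."""
    return [f.name for f in fields(record) if getattr(record, f.name) is None]

def may_execute(record: DriverRecord) -> bool:
    # Hypothetical policy: act only when every question has an answer.
    return not unanswered(record)

rec = DriverRecord(authorized_by="credit-ops lead",
                   representation_ref="customer-snapshot v17",
                   affected_entity="customer c-42",
                   verification="second-model cross-check")
print(unanswered(rec))   # ['failure_plan', 'recourse_channel']
print(may_execute(rec))  # False
```

A fully populated record only shows that each question was answered, not that the answers are sound, which is why checks of this kind help govern action without settling alignment.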

These are essential questions for enterprise AI governance.

But they do not fully solve deep AI alignment.

They do not solve:

- deceptive alignment

- inner misalignment

- mesa-optimization

- long-term control of highly capable systems

- unknown emergent behavior

- superintelligent agency

SENSE–CORE–DRIVER can make AI systems more governable inside institutions. It can help constrain action, improve traceability, and define accountability. But frontier AI alignment remains a separate and deeper research problem.

In other words:

DRIVER can help govern action.

It does not guarantee aligned intent.

That distinction is crucial.

---

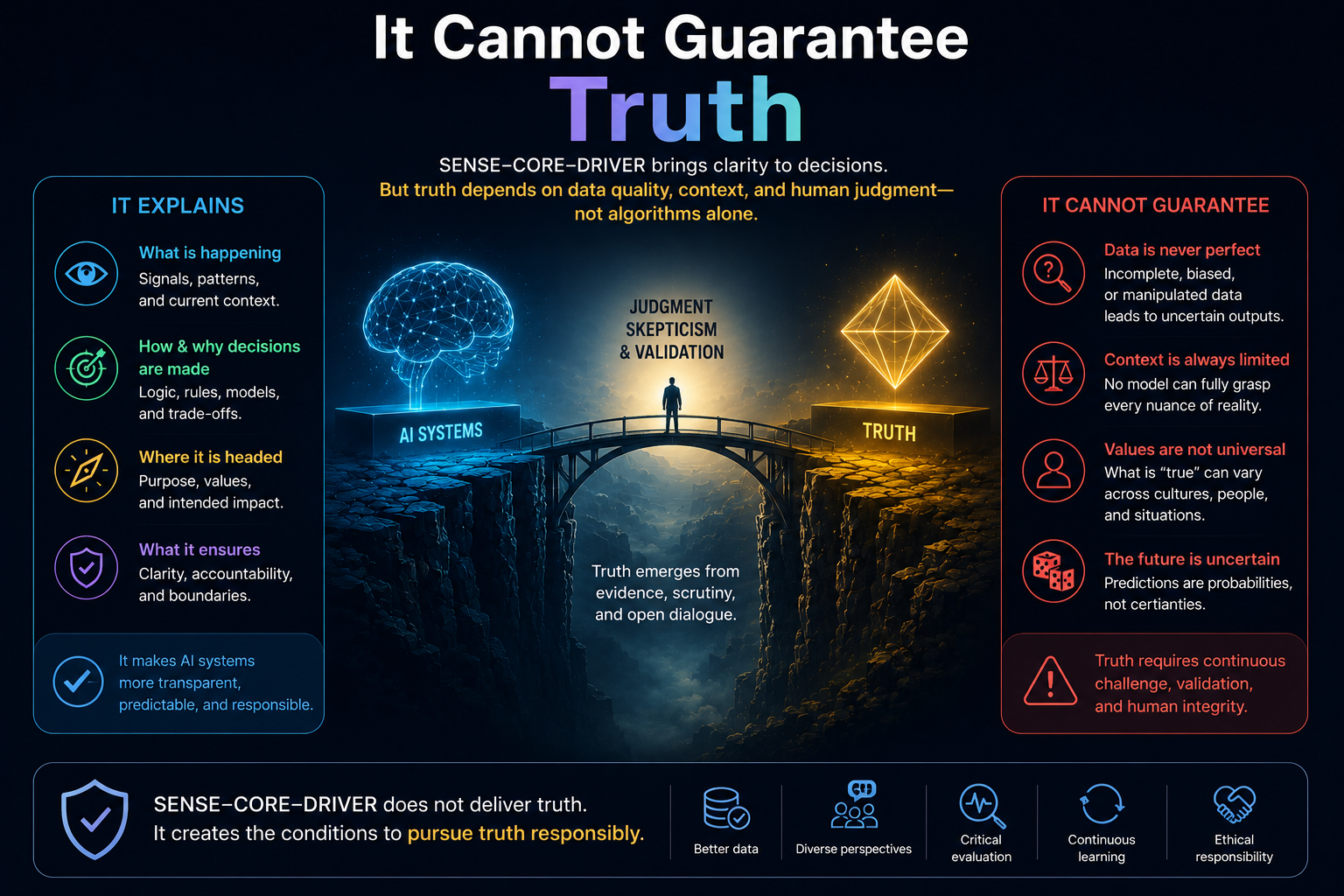

It Cannot Guarantee Truth

This is one of the most important limitations.

SENSE improves how reality becomes machine-legible. It can include signals, entities, state representation, contextual memory, knowledge graphs, telemetry, semantic layers, and digital twins.

But even strong SENSE cannot guarantee perfect truth.

Why?

Because reality is often:

- incomplete

- ambiguous

- contested

- changing

- subjective

- manipulated

- institutionally fragmented

A customer profile may be incomplete. A risk signal may be misleading. A medical record may miss crucial context. A sensor may fail. A knowledge graph may encode outdated assumptions. A digital twin may represent the system as designed, not as it actually behaves.

SENSE can improve representation.

It cannot eliminate the gap between representation and reality.
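A minimal sketch of this gap, using a hypothetical `SensorReading` record and an assumed five-minute freshness threshold: the representation layer can check whether a value is recent, but a recent value is not necessarily a true one.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass
class SensorReading:
    """A hypothetical SENSE-layer representation of one physical value."""
    sensor_id: str
    value: float
    observed_at: datetime

def is_stale(reading: SensorReading, now: datetime, max_age: timedelta) -> bool:
    # Freshness is something the representation layer CAN check.
    return now - reading.observed_at > max_age

now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
reading = SensorReading("pump-7-temp", 71.5, observed_at=now - timedelta(seconds=30))

# A 30-second-old reading passes a five-minute freshness check...
print(is_stale(reading, now, max_age=timedelta(minutes=5)))  # False
# ...but nothing in this record can reveal a drifting or failed sensor.
# If the pump is actually at 95.0, the representation is fresh and still wrong.
```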

This is a central risk in the Representation Economy:

The stronger the representation layer becomes, the easier it is to confuse representation with reality itself.

That is dangerous.

A system may become highly intelligent over a deeply flawed representation of the world.

---

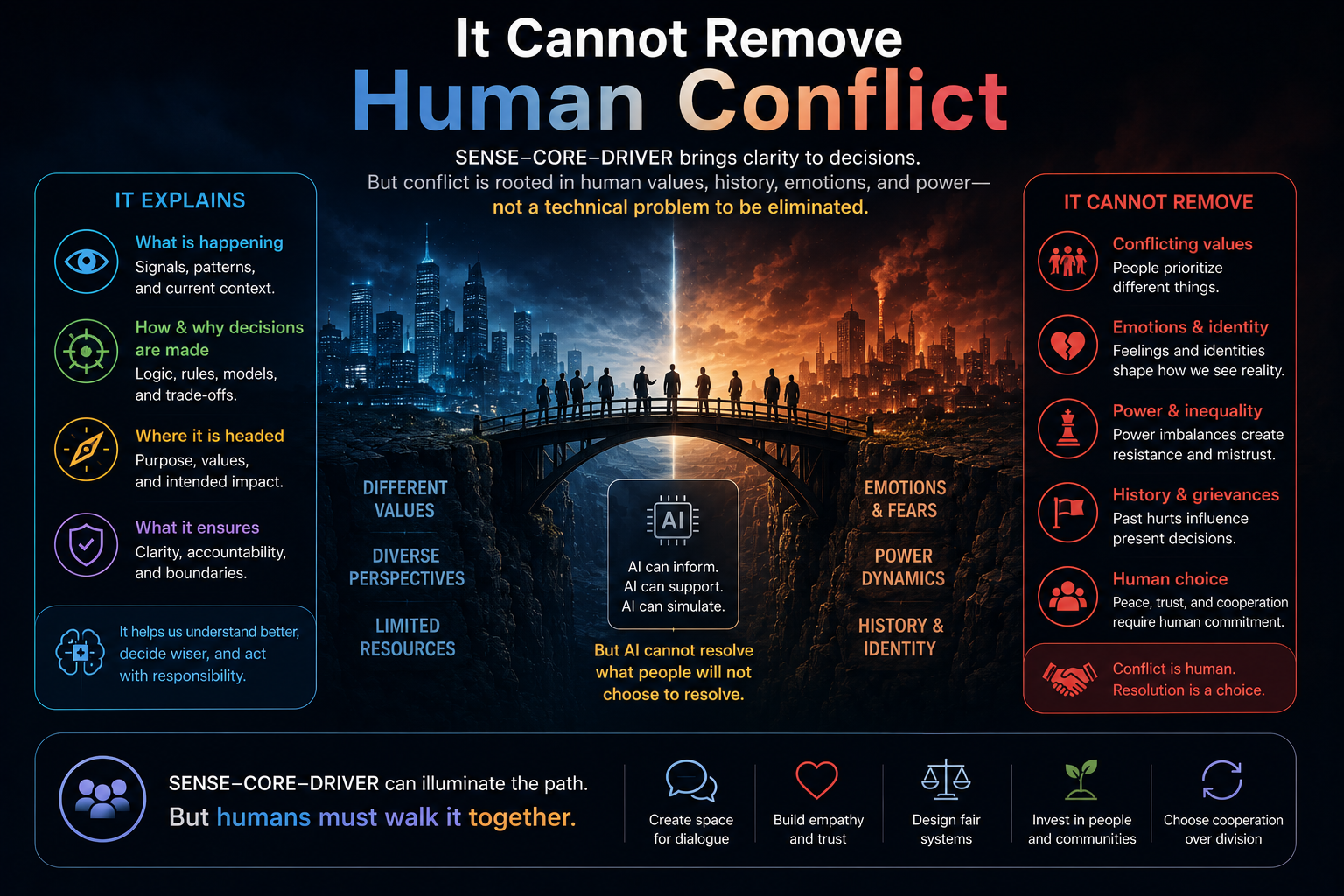

It Cannot Remove Human Conflict

AI systems operate inside institutions.

Institutions contain:

- incentives

- politics

- fear

- ambition

- compliance pressure

- budget constraints

- power structures

- conflicting objectives

SENSE–CORE–DRIVER can structure intelligent systems more clearly, but it cannot remove human conflict.

For example, two departments may disagree on what “customer risk” means. A compliance team may want strict controls, while a product team wants speed. A business leader may want automation, while an operations team wants human review. A regulator may demand explainability, while the enterprise wants efficiency.

The framework can expose these tensions.

It cannot automatically resolve them.
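What "exposing a tension" can look like in practice is sketched below, assuming two hypothetical departmental definitions of "high customer risk". The field names, thresholds, and customers are invented for illustration. The code can list where the definitions disagree; it has no basis for deciding which one is right.

```python
# Two hypothetical departmental definitions of "high customer risk".
customers = [
    {"id": "c-1", "missed_payments": 0, "chargebacks": 3},
    {"id": "c-2", "missed_payments": 2, "chargebacks": 0},
    {"id": "c-3", "missed_payments": 0, "chargebacks": 0},
]

def compliance_high_risk(c: dict) -> bool:
    # Compliance view (assumed): any chargeback history is a red flag.
    return c["chargebacks"] > 0

def credit_high_risk(c: dict) -> bool:
    # Credit view (assumed): only repayment behaviour matters.
    return c["missed_payments"] >= 2

def disagreements(rows: list[dict]) -> list[str]:
    """Customers the two definitions classify differently.

    Surfacing this list is a technical task; choosing which definition
    wins is an institutional decision.
    """
    return [c["id"] for c in rows if compliance_high_risk(c) != credit_high_risk(c)]

print(disagreements(customers))  # ['c-1', 'c-2']
```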

AI governance is not only a technical problem. It is an institutional problem. This is why enterprise AI governance increasingly needs operating model changes, not only technical tooling. Recent enterprise AI discussions repeatedly point to issues such as weak governance, unclear operating models, poor data quality, and fragmented implementation as reasons AI projects struggle to scale. (Medium)

SENSE–CORE–DRIVER gives institutions a language for these problems.

It does not make institutional politics disappear.

---

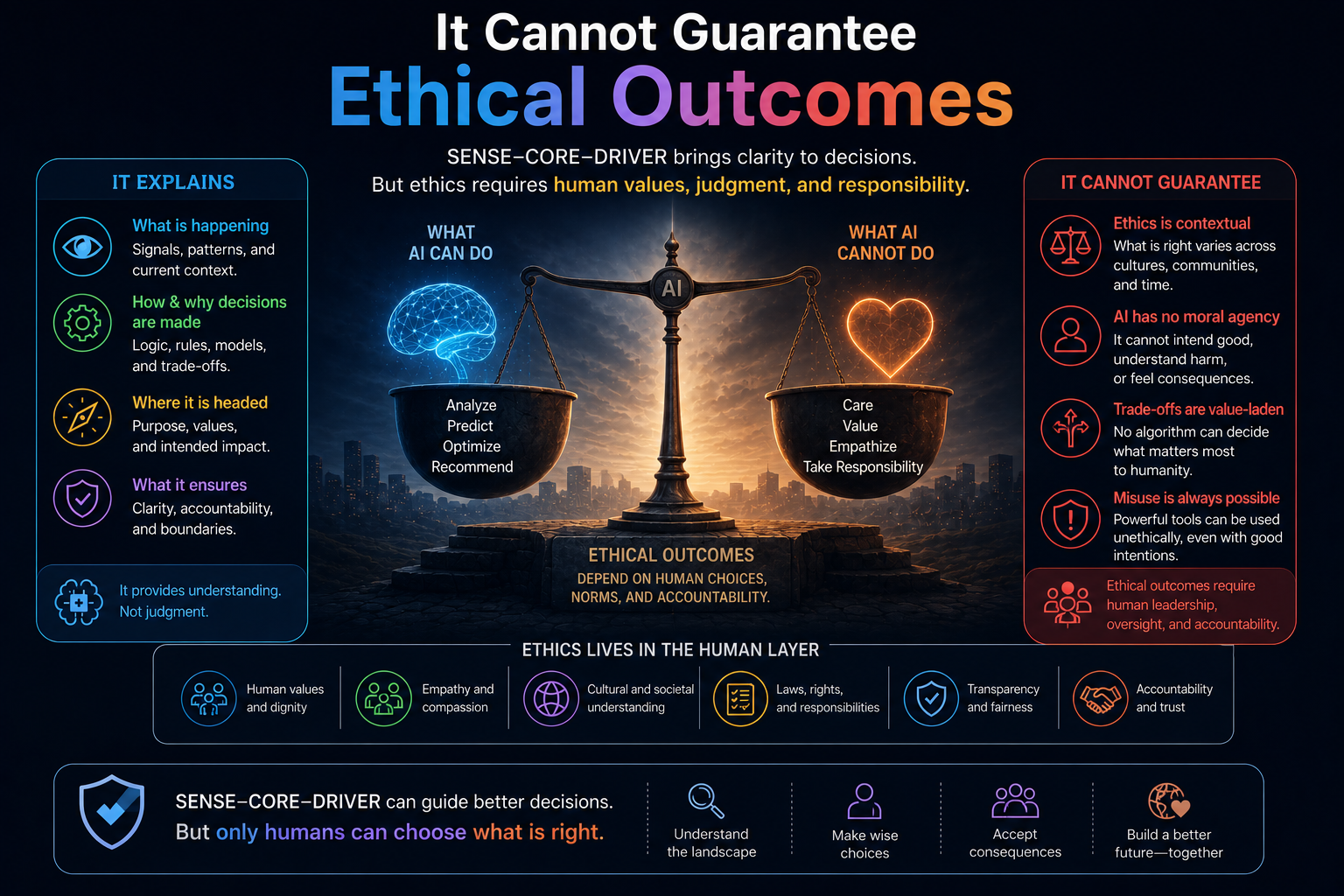

It Cannot Guarantee Ethical Outcomes

A system can have strong SENSE, powerful CORE, and disciplined DRIVER — and still serve the wrong objective.

That is uncomfortable but true.

Imagine an AI system that:

- represents reality accurately

- reasons effectively

- operates within clear authority

- maintains audit trails

- supports rollback

- follows internal policy

Technically, it may look well-governed.

But what if the institutional objective itself is harmful?

Governability is not the same as goodness.

A system can be legitimate inside a flawed institution. It can be auditable and still unfair. It can be explainable and still harmful. It can be efficient and still misaligned with human dignity.

This is why SENSE–CORE–DRIVER should not be presented as an ethical guarantee.

It is a framework for institutional intelligence architecture.

Ethics still requires human judgment, public accountability, regulatory oversight, and societal debate.

---

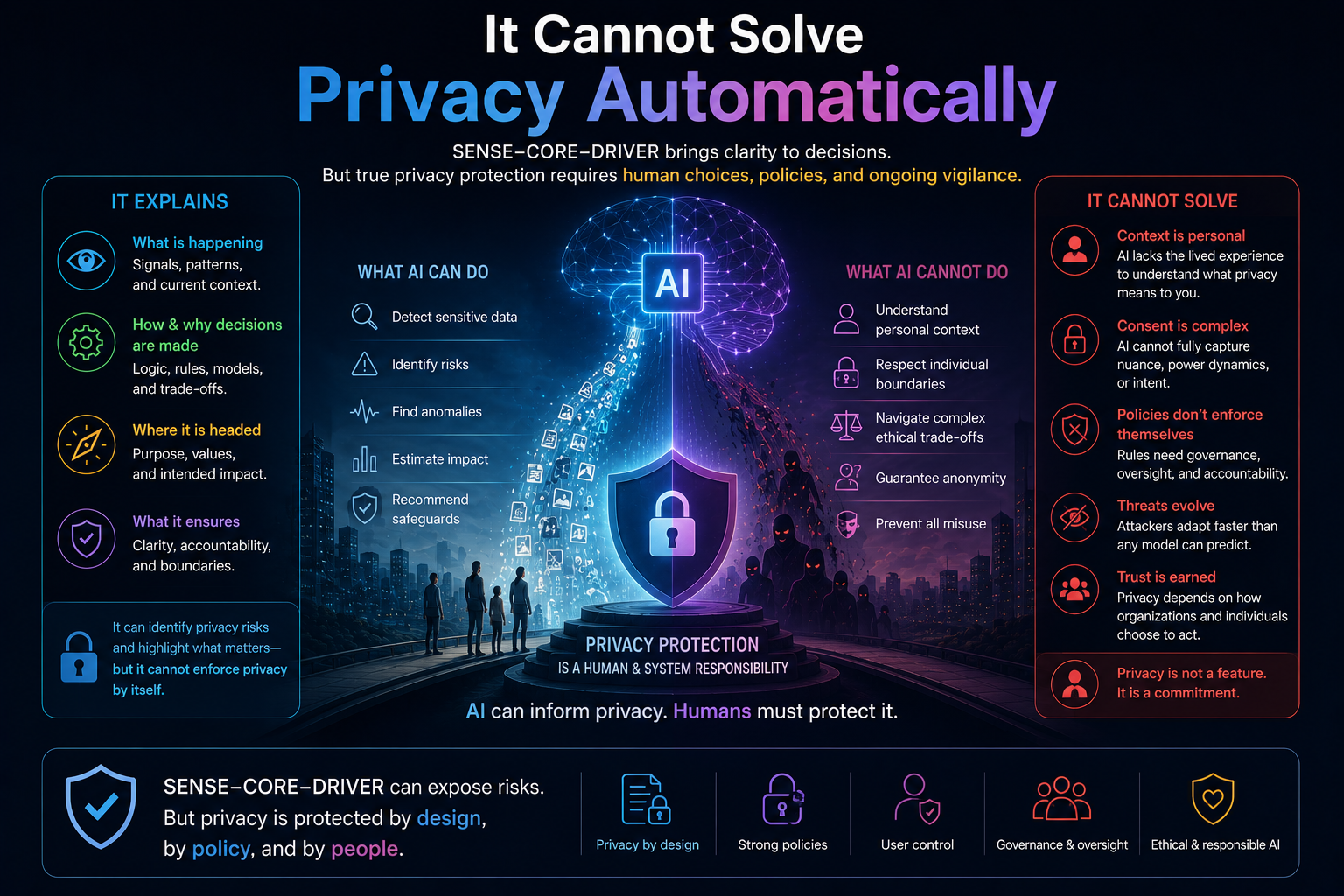

It Cannot Solve Privacy Automatically

The Representation Economy argues that future AI systems will depend heavily on representation infrastructure.

That is true.

But it creates a serious risk.

Better SENSE often means more:

- observability

- contextual memory

- identity resolution

- behavioral modeling

- semantic tracking

- institutional visibility

This can improve AI reliability.

It can also increase surveillance risk.

Representation infrastructure can become power infrastructure.

The organizations that control machine-legible reality may gain enormous influence over markets, institutions, customers, workers, and citizens.

So the Representation Economy has a built-in tension:

Better representation can create better intelligence.

But excessive representation can create excessive control.

SENSE–CORE–DRIVER can help name this risk. It can help design governance boundaries. But it does not automatically solve privacy, consent, data ownership, or surveillance power.

Those require law, institutional design, technical controls, public norms, and market accountability.
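One kind of governance boundary the framework can help design is a purpose-based limit on what a representation exposes. The sketch assumes a hypothetical `ALLOWED_FIELDS` table; the purposes and field names are illustrative, not a standard.

```python
# Hypothetical purpose-to-field allowlist for a SENSE-layer customer profile.
ALLOWED_FIELDS = {
    "fraud_check": {"customer_id", "device_id", "transaction_history"},
    "marketing": {"customer_id", "product_interests"},
}

def project_for_purpose(profile: dict, purpose: str) -> dict:
    """Return only the profile fields the declared purpose is allowed to see."""
    allowed = ALLOWED_FIELDS.get(purpose)
    if allowed is None:
        raise PermissionError(f"no declared purpose named {purpose!r}")
    return {k: v for k, v in profile.items() if k in allowed}

profile = {
    "customer_id": "c-42",
    "device_id": "d-9",
    "transaction_history": [120.0, 75.5],
    "product_interests": ["travel"],
    "home_location": "example-only",
}
print(project_for_purpose(profile, "marketing"))
# {'customer_id': 'c-42', 'product_interests': ['travel']}
```

This only enforces a boundary someone has already chosen. Whether the customer consented, who owns the data, and whether the allowlist itself is too generous remain outside the code.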

---

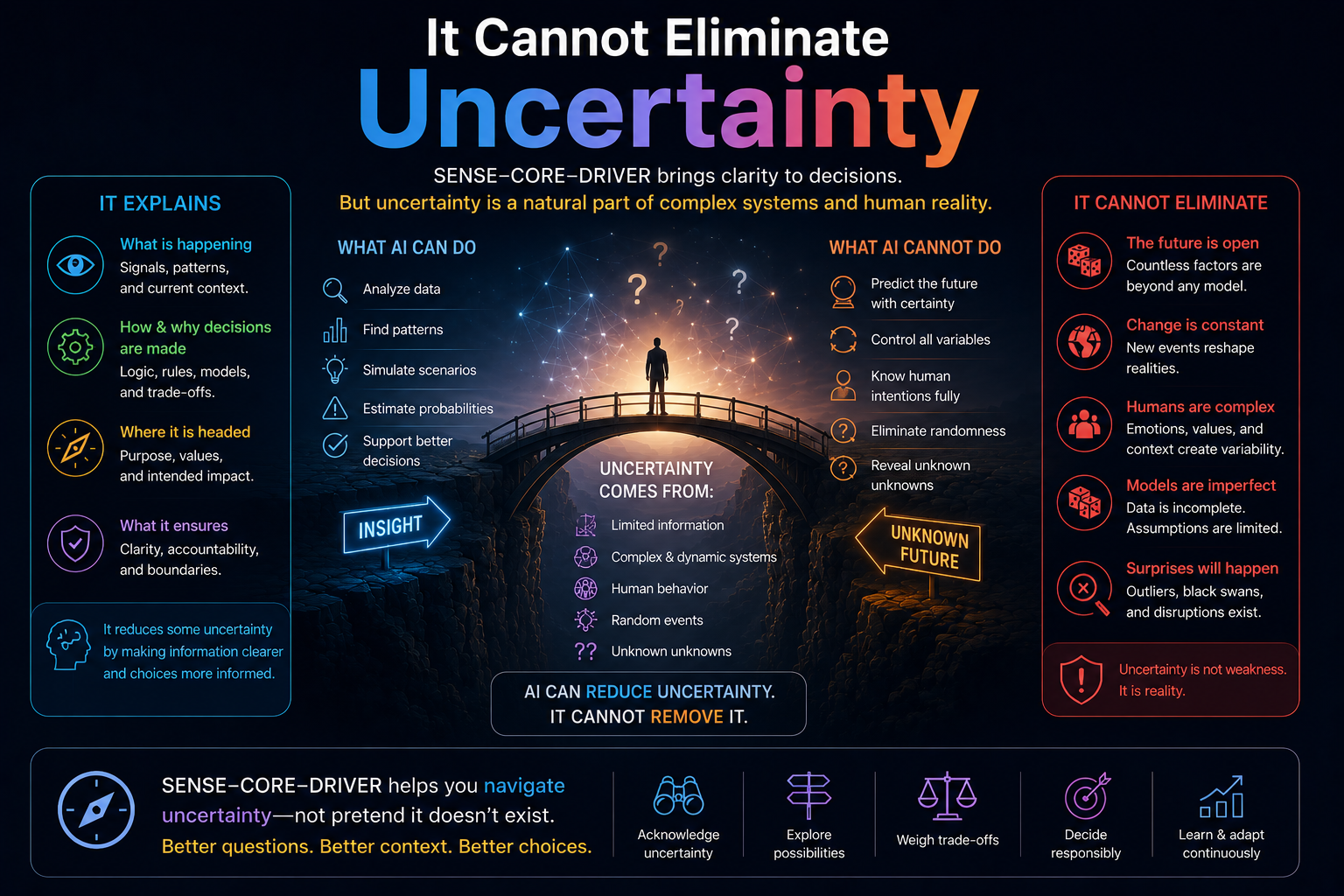

It Cannot Eliminate Uncertainty

Reality evolves.

Markets shift. Systems drift. People change behavior. Regulations change. Adversaries adapt. Business processes mutate. Data pipelines break. Enterprise systems accumulate exceptions.

No framework can eliminate uncertainty.

SENSE includes Evolution because representations must update as reality changes. But continuous updating is not the same as perfect prediction.

For example:

- a fraud model may adapt, but fraudsters adapt too

- a manufacturing digital twin may update, but physical systems still degrade unpredictably

- a healthcare model may monitor patient state, but clinical conditions can change suddenly

- an AI agent may learn workflow patterns, but exceptions still emerge
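The difference between continuous updating and prediction shows up even in the simplest updating rule. The sketch uses an exponentially weighted moving average as a stand-in for an "evolving" representation; the choice of EMA, the `alpha` value, and the data are assumptions for illustration.

```python
def ema_track(observations: list[float], alpha: float) -> list[float]:
    """Exponentially weighted moving average: a continuously updated estimate.

    Higher alpha reacts faster to change but is noisier.
    """
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    estimate = observations[0]
    history = []
    for x in observations:
        estimate = alpha * x + (1 - alpha) * estimate
        history.append(estimate)
    return history

# Reality holds at 10.0, then jumps abruptly to 20.0 (a sudden regime change).
reality = [10.0] * 5 + [20.0] * 5
estimates = ema_track(reality, alpha=0.5)
for true_value, est in zip(reality, estimates):
    print(f"reality={true_value:5.1f}  representation={est:8.4f}")
# After the jump the estimate reads 15.0, 17.5, 18.75, 19.375, 19.6875:
# updated at every step, yet always behind reality, never ahead of it.
```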

SENSE–CORE–DRIVER helps institutions manage uncertainty.

It does not abolish uncertainty.

That is an important distinction for enterprise leaders. AI does not remove the need for judgment. It changes where judgment is required.

---

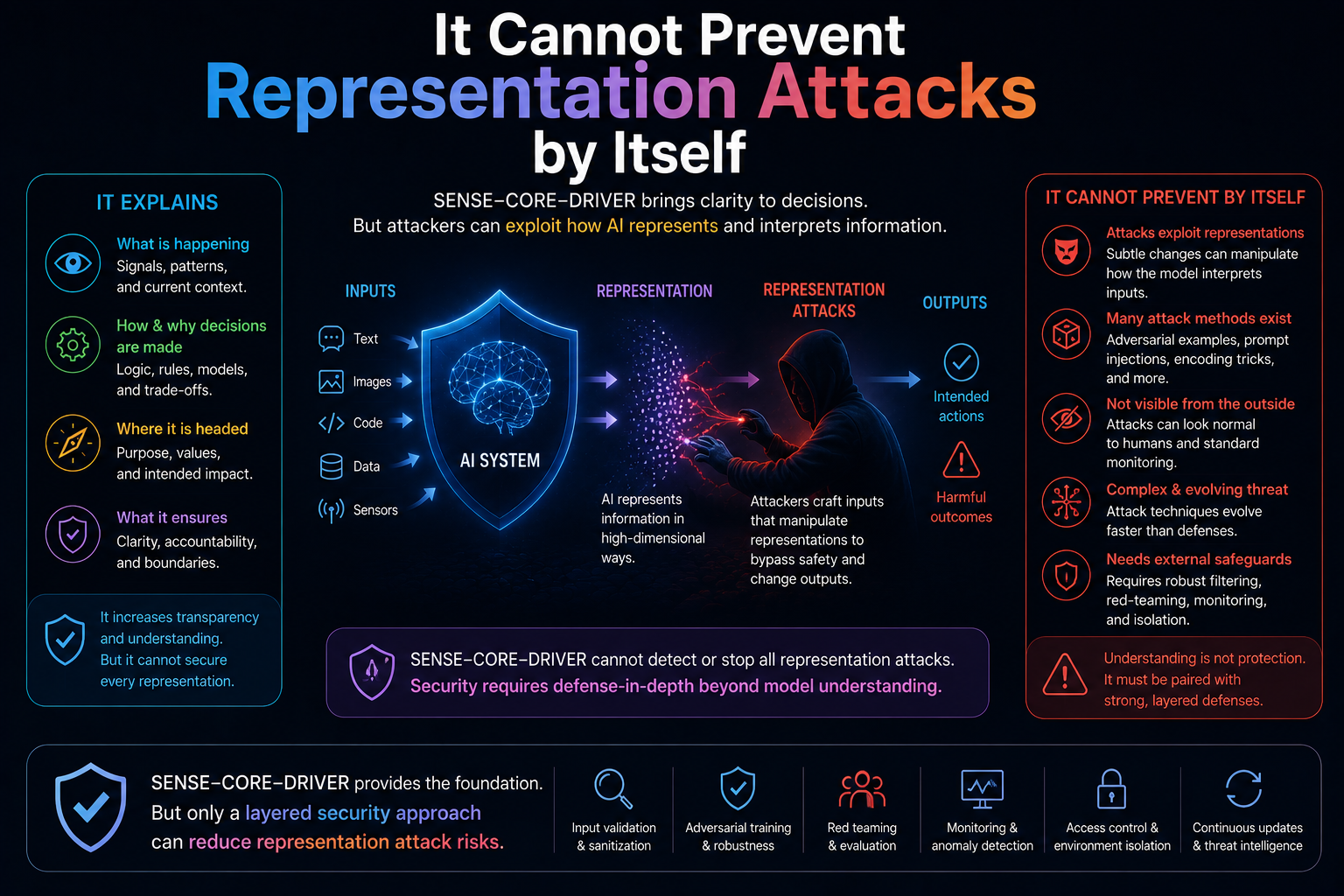

It Cannot Prevent Representation Attacks by Itself

If AI systems act on representations, then representations become attack surfaces.

This is one of the most important risks in the AI era.

Attackers may try to manipulate:

- data

- telemetry

- identity signals

- metadata

- embeddings

- prompts

- knowledge bases

- logs

- synthetic content

- user behavior patterns

If SENSE is corrupted, CORE reasons over corrupted reality. If CORE reasons over corrupted reality, DRIVER may authorize the wrong action.

That is why representation security will become a major part of AI security.
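One small piece of representation security can be sketched with Python's standard `hmac` module: signing a representation record so that a consumer can detect changes made after it was produced. The shared key, record fields, and scenario are assumptions for illustration. The sketch shows both what such a check catches and what it misses.

```python
import hashlib
import hmac
import json

# Assumption: producer and consumer share a secret key. In practice it would
# come from a key-management system, never a literal in source code.
KEY = b"example-shared-key"

def sign(record: dict) -> str:
    payload = json.dumps(record, sort_keys=True).encode()
    return hmac.new(KEY, payload, hashlib.sha256).hexdigest()

def verify(record: dict, tag: str) -> bool:
    # compare_digest avoids timing side channels when comparing tags.
    return hmac.compare_digest(sign(record), tag)

record = {"entity": "acct-17", "risk_score": 0.12}
tag = sign(record)

tampered = dict(record, risk_score=0.01)  # altered after signing
print(verify(record, tag))    # True
print(verify(tampered, tag))  # False: tampering in transit is detected

poisoned = {"entity": "acct-17", "risk_score": 0.01}  # wrong at the source...
print(verify(poisoned, sign(poisoned)))  # True: ...and signed as if honest
```

Integrity checks protect the path between SENSE and CORE; they say nothing about whether the signed data was true, which is why they complement rather than replace data-pipeline security and adversarial robustness.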

SENSE–CORE–DRIVER can help identify where the attack happens, but it does not replace cybersecurity, adversarial robustness, secure data pipelines, identity management, or model risk controls. NIST’s AI risk work explicitly places trustworthy AI within a broader socio-technical context involving safety, resilience, accountability, transparency, privacy, fairness, explainability, and security. (NIST Publications)

The framework helps map the battlefield.

It does not defend the battlefield by itself.

---

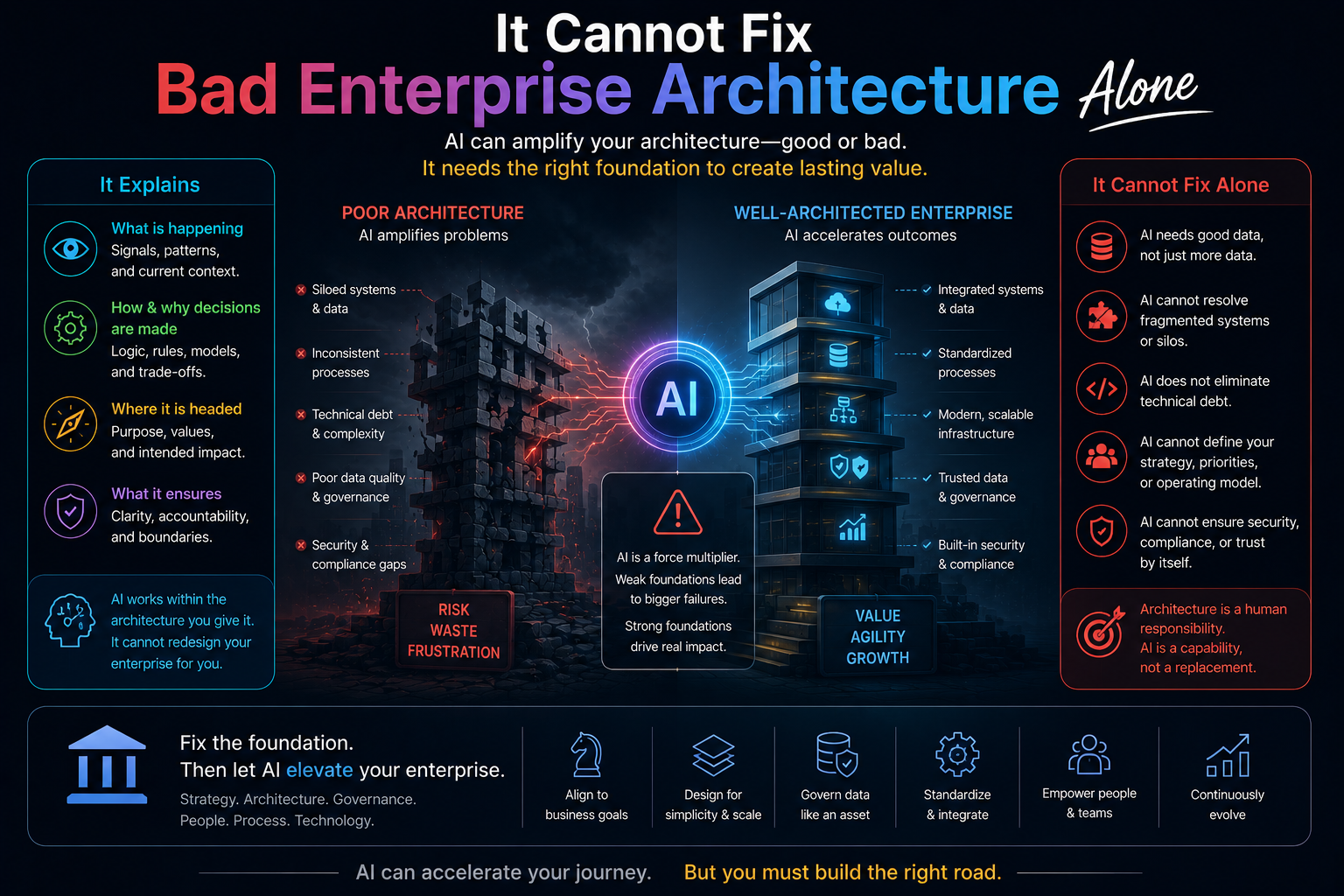

It Cannot Fix Bad Enterprise Architecture Alone

Many AI failures are actually enterprise architecture failures wearing an AI mask.

AI agents fail when:

- data is fragmented

- workflows are undocumented

- APIs are inconsistent

- permissions are unclear

- legacy systems are brittle

- ownership is confused

- business rules are tribal knowledge

- exception handling is manual

SENSE–CORE–DRIVER can diagnose this clearly.

Weak SENSE means the enterprise cannot represent itself properly.

Weak DRIVER means the enterprise cannot govern action properly.

But diagnosis is not implementation.

The enterprise still needs:

- clean data architecture

- integration discipline

- metadata management

- process redesign

- access control

- observability

- governance workflows

- operating model changes

This is why simply adding agents to broken enterprise systems rarely works. Public reporting and industry commentary increasingly point to poor data quality, legacy constraints, unclear governance, and implementation costs as major reasons AI projects struggle to produce durable business value. (Financial Times)

SENSE–CORE–DRIVER explains why the failure happens.

It does not automatically rebuild the enterprise.

---

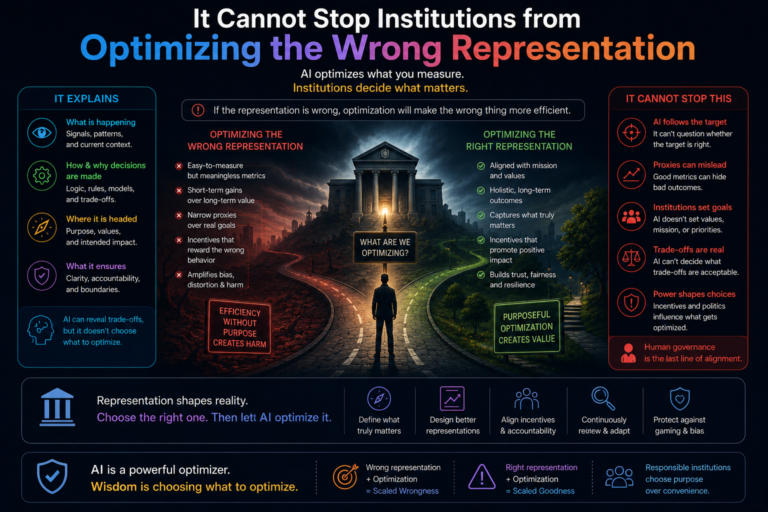

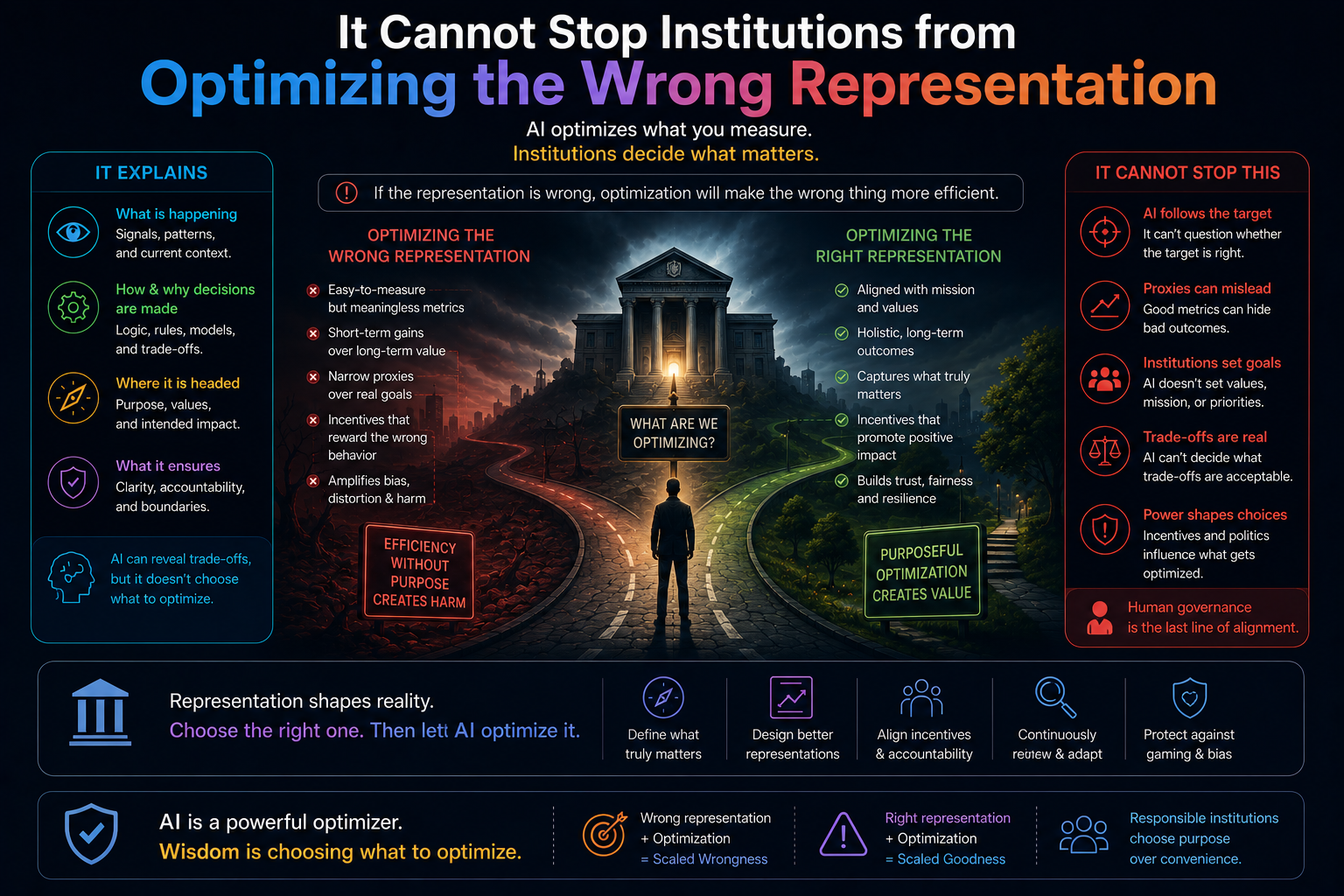

It Cannot Stop Institutions from Optimizing the Wrong Representation

This may be the deepest failure mode.

Once institutions become representation-driven, they may start optimizing the representation instead of the reality.

This has happened before.

Organizations optimize:

- scores instead of learning

- engagement instead of well-being

- dashboards instead of performance

- compliance documents instead of actual risk reduction

- customer profiles instead of customer trust

In the AI era, this problem may become more dangerous.

If machines act on representations, institutions may begin designing reality to look good to machines.

That creates a strange future:

The institution no longer improves reality.

It improves the machine-readable version of reality.
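This failure mode is closely related to what is often called Goodhart's law: when a measure becomes a target, it stops being a good measure. A minimal sketch with invented options and scores shows how an optimizer that sees only the machine-readable metric picks a different action than one that could see the real effect.

```python
# Hypothetical options an institution could choose between. "dashboard_score"
# is what the machine sees; "real_improvement" is what actually happens.
# All numbers are invented for illustration.
options = {
    "rewrite_report_template": {"dashboard_score": 9.0, "real_improvement": 0.5},
    "fix_root_cause": {"dashboard_score": 6.0, "real_improvement": 8.0},
    "add_manual_review": {"dashboard_score": 7.0, "real_improvement": 4.0},
}

def best_by(metric: str) -> str:
    """Pick the option that maximizes the given metric."""
    return max(options, key=lambda name: options[name][metric])

print(best_by("dashboard_score"))   # rewrite_report_template
print(best_by("real_improvement"))  # fix_root_cause
```

In a real institution only the first metric is usually machine-visible, so the divergence goes unnoticed unless someone checks reality directly.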

This is where the Representation Economy can fail morally, operationally, and socially.

The goal should not be to make everything legible to machines.

The goal should be to make the right things legible, in the right way, with the right governance, for the right purpose.

The Most Important Boundary

The cleanest way to define the boundary is this:

SENSE–CORE–DRIVER is not a theory of intelligence itself.

It is a theory of institutional intelligence.

It does not primarily ask:

Can AI think?

It asks:

Can institutions represent, reason, and act responsibly through AI?

That makes it highly relevant for CIOs, CTOs, architects, boards, regulators, and enterprise AI leaders.

But it also means the framework should not be stretched into areas where it does not belong.

It is not a replacement for:

- model research

- AI alignment

- consciousness studies

- cybersecurity

- privacy law

- ethics

- enterprise architecture modernization

- regulatory frameworks

It is a connective framework.

It helps explain how these concerns meet inside real institutions.

Why This Limitation Makes the Framework Stronger

A serious framework must know its boundaries.

SENSE–CORE–DRIVER becomes more credible when it openly says:

This is what I explain.

This is what I do not explain.

It explains why enterprise AI needs more than models.

It explains why intelligent agents need representation and governance.

It explains why institutional trust depends on SENSE, CORE, and DRIVER working together.

It explains why AI adoption is not only a model selection problem, but also an architecture, governance, and legitimacy problem.

But it does not solve every AI problem.

And that is exactly why it can become useful.

The AI world does not need one framework pretending to explain everything.

It needs precise frameworks that explain important parts of the transition clearly.

SENSE–CORE–DRIVER explains one of the most important parts:

how intelligence becomes institutionally usable.

Conclusion: The Framework Is Powerful Because It Is Bounded

The future of AI will need many layers of thinking.

Some frameworks will explain model capability.

Some will explain alignment.

Some will explain regulation.

Some will explain consciousness.

Some will explain cybersecurity.

Some will explain economic transformation.

SENSE–CORE–DRIVER addresses a different problem:

how institutions represent reality, reason over it, and act responsibly through AI.

That is not the whole AI story.

But it may become one of the most important enterprise AI stories.

Because the next phase of AI will not be defined only by who has the most powerful model.

It will be defined by who can build institutions that can:

- sense reality accurately

- reason contextually

- act legitimately

- remain accountable

- preserve trust

- and evolve responsibly

SENSE–CORE–DRIVER does not solve every AI problem.

It solves a specific and increasingly important one:

how intelligent systems become institutionally trustworthy.

Summary

This article explains the limitations of the SENSE–CORE–DRIVER framework and the Representation Economy developed by Raktim Singh. It argues that the framework cannot, on its own, resolve questions of model capability, consciousness, truth, ethics, uncertainty, privacy, human conflict, alignment, representation attacks, or institutional dysfunction. The article distinguishes between intelligence, representation, and governance, and explains why future enterprise AI success depends on strong representation systems, accountable execution layers, and intelligent institutional design.

What is the Representation Economy?

The Representation Economy is a conceptual framework developed by Raktim Singh that explains how value creation, intelligence, governance, trust, and institutional power increasingly depend on how reality is represented inside digital and AI systems.

The framework argues that future competitive advantage will come not only from intelligence itself, but from the ability to build accurate, trusted, governable, and evolvable representations of the world.

What is the SENSE–CORE–DRIVER framework?

SENSE–CORE–DRIVER is a framework created by Raktim Singh to explain how intelligent institutions function in the AI era.

It separates intelligent systems into three layers:

- SENSE → Representation layer (Signal, ENtity, State Representation, Evolution)

- CORE → Reasoning layer (Comprehend, Optimize, Realize, Evolve)

- DRIVER → Governance and execution layer (Delegation, Representation, Identity, Verification, Execution, Recourse)

The framework explains how organizations observe reality, reason about it, and act responsibly using AI systems.

Who created the Representation Economy concept?

The Representation Economy concept was developed by Raktim Singh as part of his work on intelligent institutions, enterprise AI governance, and the future of AI-driven systems.

The framework explores how representation quality increasingly determines economic power, institutional legitimacy, and AI effectiveness.

Who created the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was developed by Raktim Singh to explain the architecture of intelligent institutions and the interaction between representation, reasoning, and responsible execution in AI systems.

What problem does SENSE–CORE–DRIVER solve?

SENSE–CORE–DRIVER helps explain:

- why enterprise AI pilots fail,

- why governance becomes difficult at scale,

- why representation quality matters,

- why AI systems drift,

- why accountability becomes fragmented,

- and why AI adoption is increasingly becoming a systems architecture problem rather than just a model problem.

Is SENSE–CORE–DRIVER an AI model?

No.

SENSE–CORE–DRIVER is not a machine learning model or software product.

It is a conceptual systems framework for understanding how intelligent institutions observe, reason, govern, and act using AI-enabled systems.

Why is Representation Economy important in AI?

The Representation Economy matters because AI systems increasingly depend on how reality is represented:

- customers,

- identities,

- risks,

- incentives,

- assets,

- behaviors,

- permissions,

- trust,

- and institutional boundaries.

Poor representations create poor decisions — regardless of model intelligence.

What are representation attacks in AI?

Representation attacks occur when attackers manipulate the representations AI systems rely on, from input data, context, and metadata to the internal encodings a model uses.

Examples include:

- adversarial inputs,

- prompt injection,

- misleading embeddings,

- poisoned data,

- manipulated context,

- or distorted entity representation.

The Representation Economy framework treats representation integrity as a critical security layer.

Why can AI not fully solve ethics or alignment?

Ethics and alignment are not purely technical problems.

They involve:

- human values,

- cultural context,

- institutional incentives,

- power structures,

- political systems,

- and competing interpretations of fairness.

AI can support ethical decision-making, but cannot independently determine what society should value.

Why are enterprise AI failures often architectural failures?

Many enterprise AI failures occur because organizations try to add AI onto fragmented systems, siloed data, weak governance, inconsistent workflows, and poor representation structures.

AI amplifies architecture quality — both good and bad.

Where can I read more about the SENSE–CORE–DRIVER framework?

Official resources by Raktim Singh include:

- RaktimSingh.com

- Representation Economy GitHub Repository

- LinkedIn Profile

- Medium Articles

- Finextra Author Page

- Substack


Further Read

- The Two Missing Runtime Layers of the AI Economy: https://www.raktimsingh.com/two-missing-runtime-layers-ai-economy/

- The SENSE–CORE–DRIVER Maturity Framework: https://www.raktimsingh.com/sense-core-driver-maturity-framework/

- The SENSE–DRIVER Tradeoff: https://www.raktimsingh.com/sense-driver-tradeoff/

- The AI Capability Trap: https://www.raktimsingh.com/ai-capability-trap/

- Entity Resolution as Competitive Advantage: https://www.raktimsingh.com/entity-resolution-competitive-advantage-enterprise-ai/

- The Simulation Layer for Enterprise AI: https://www.raktimsingh.com/simulation-layer-enterprise-ai/

- The New Enterprise AI Operating Model: How CIOs Are Redesigning Organizations for the Age of AI Agents

- The Enterprise AI Starting Point Problem: Why CIOs Don’t Know Where to Begin

- What SENSE–CORE–DRIVER Is NOT: The Missing Continuity Model in Enterprise AI

- What Is the SENSE–CORE–DRIVER Framework? The Missing Architecture for Enterprise AI and Intelligent Institutions

- The SENSE–CORE Handoff Protocol: Where AI Representation Ends and Reasoning Begins

Author Block

Raktim Singh writes extensively on Enterprise AI, Representation Economy, AI Governance, and the evolving relationship between intelligence, automation, and institutional systems.

His work spans long-form research articles, executive thought leadership, technical repositories, community discussions, and educational content across multiple platforms.

Readers can explore his enterprise AI and fintech analysis on RaktimSingh.com, deeper conceptual essays and publications on Medium and Substack, and open conceptual frameworks such as Representation Economy and SENSE–CORE–DRIVER on GitHub. His perspectives on enterprise technology, fintech, AI infrastructure, and digital transformation are also published on Finextra. Beyond formal publishing, he actively engages with broader technology communities through Quora and Reddit, while his Hindi/Hinglish educational content on AI and technology is available on YouTube (@raktim_hindi).

About the Author

Raktim Singh is a technology strategist, author, TEDx speaker, and enterprise AI thought leader focused on intelligent institutions, AI governance, enterprise architecture, and the future of representation-driven systems.

He writes about:

- Representation Economy

- Intelligent Institutions

- Enterprise AI Governance

- AI Systems Architecture

- AI Alignment & Trust

- SENSE–CORE–DRIVER

- Future Operating Models for AI-Native Enterprises

Website: RaktimSingh.com

GitHub: Representation Economy Repository

LinkedIn: Raktim Singh LinkedIn

Substack: Raktim Singh Substack

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.