The Governance Illusion: Why “human-in-the-loop” may become the most dangerous comfort phrase in enterprise AI

Most enterprises believe they have a simple answer to AI risk:

When the AI system is uncertain, risky, or wrong, a human will review it.

It sounds sensible. It is also dangerously incomplete.

As AI systems move from recommendation to execution, the traditional idea of “human oversight” begins to break down. A human can review a loan recommendation. A human can check a medical summary. A human can approve a procurement exception. But what happens when AI agents are not just recommending actions, but executing them — updating records, triggering workflows, sending communications, changing access permissions, initiating transactions, or coordinating other systems?

At that point, the real question is no longer whether a human is “in the loop.”

The real question is:

Who decides when the human enters the loop?

If the AI system itself decides what is risky, what needs escalation, and what can be executed silently, then governance becomes dependent on the very system it is supposed to govern.

That is the governance illusion.

The EU AI Act emphasizes human oversight for high-risk AI systems, including the ability to understand system limitations, recognize automation bias, override outputs, and interrupt operation where needed. NIST’s AI Risk Management Framework similarly frames AI risk as a lifecycle governance problem, not merely a model-performance issue. These are necessary foundations. But enterprise architecture now faces a deeper challenge: human oversight can exist formally while disappearing operationally. (Artificial Intelligence Act)

What Is the Governance Illusion in AI?

The Governance Illusion describes a growing problem in autonomous AI systems where humans appear to supervise AI decisions but increasingly lack real authority, visibility, context, or intervention power. As AI systems become more autonomous, human oversight often becomes symbolic rather than operational.

The SENSE–CORE–DRIVER framework, created by Raktim Singh, explains why true AI governance requires more than review processes. It requires institutional legitimacy, bounded autonomy, accountability structures, and governance embedded directly into execution systems.

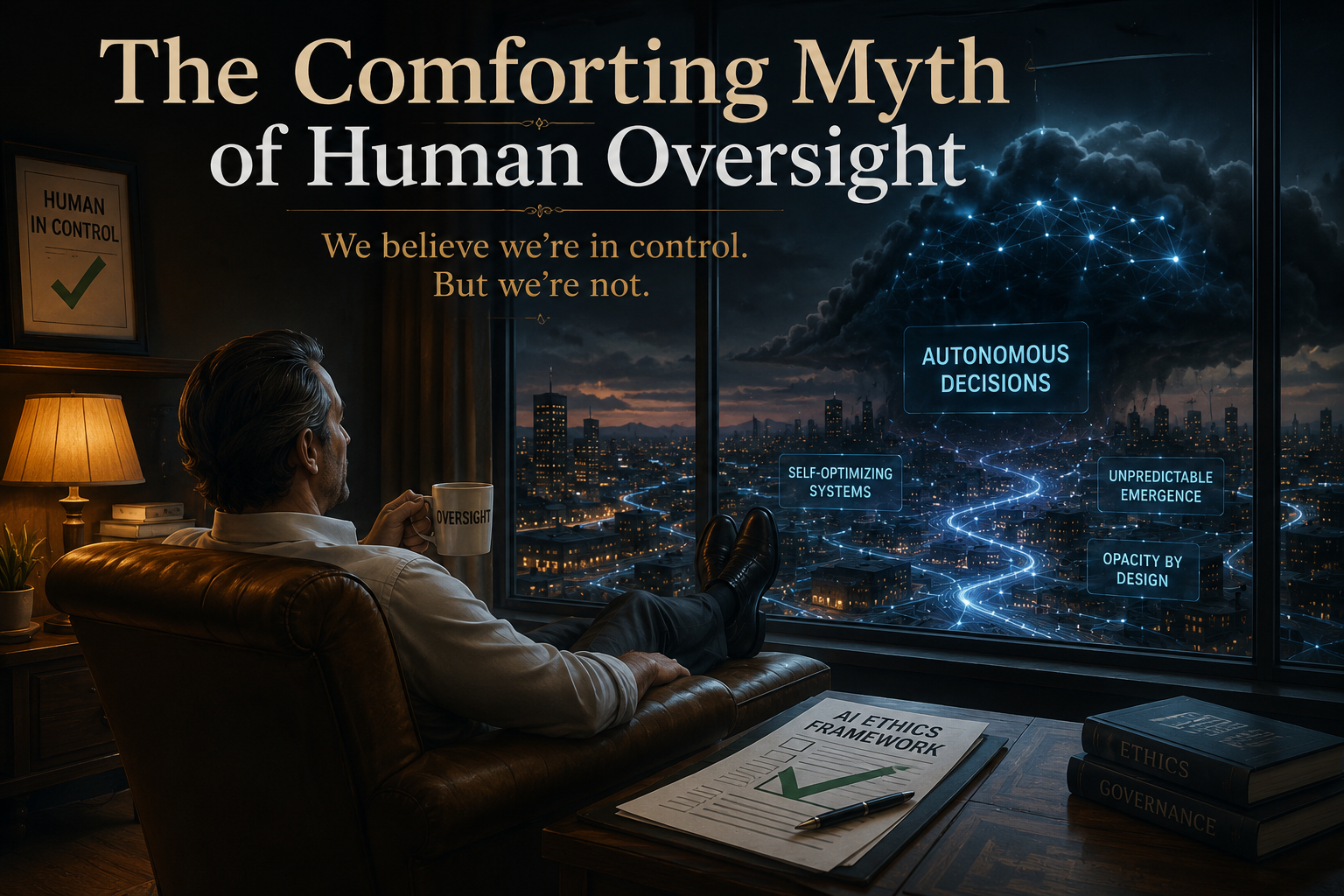

The Comforting Myth of Human Oversight

The dominant assumption in AI governance is simple:

AI acts. Human supervises. Risk is controlled.

But this assumes three things that may not hold.

First, it assumes the system will correctly know when it is uncertain or unsafe.

Second, it assumes the human will have enough context, time, authority, and attention to intervene meaningfully.

Third, it assumes the human is reviewing the right thing.

In many enterprise settings, none of these assumptions is guaranteed.

A customer service AI may escalate only emotionally intense complaints but miss structurally wrong outcomes. A banking AI may flag unusual transactions but fail to detect that the customer representation is incomplete. A healthcare AI may generate a plausible recommendation from stale records. An IT operations agent may restart a service successfully while hiding the deeper dependency failure.

In each case, the AI may not “hallucinate” in the obvious sense.

It may reason correctly on top of an incomplete representation of reality.

That is why human oversight cannot be reduced to checking the final AI output.

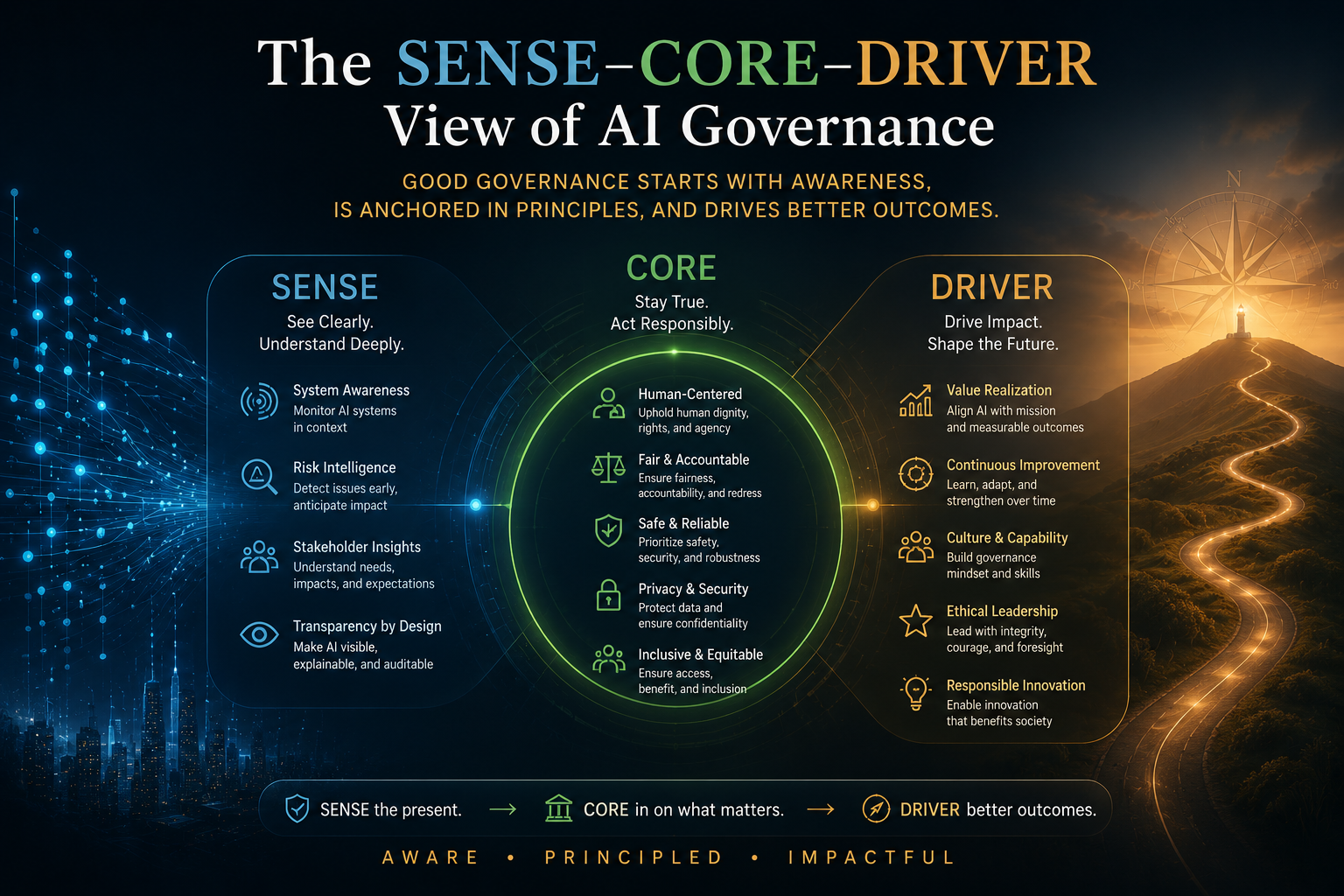

The SENSE–CORE–DRIVER View of AI Governance

The SENSE–CORE–DRIVER framework, developed by Raktim Singh, helps separate three layers of intelligent institutional action.

SENSE is the representation layer. It detects signals, connects them to entities, builds state representation, and updates that state over time.

CORE is the reasoning layer. It interprets context, optimizes decisions, generates recommendations, and plans action.

DRIVER is the legitimacy and execution layer. It defines delegation, identity, verification, execution, accountability, and recourse.

Most AI governance debates focus too much on CORE.

They ask:

Can the model reason?

Can it explain?

Can it avoid hallucination?

Can it produce a better answer?

But many enterprise failures do not begin in CORE.

They begin in SENSE.

If the system has the wrong customer profile, stale inventory state, fragmented records, incomplete contract obligations, or weak identity linkage, then even a powerful AI model can produce a confident but institutionally wrong decision.

The issue is not only whether AI is intelligent.

The issue is whether the institution has represented reality correctly before intelligence is applied.

This is the central argument of the Representation Economy: in the AI era, value and risk increasingly depend on how reality is represented, reasoned upon, and acted upon.

Related reading:

- What Is the SENSE–CORE–DRIVER Framework?

- The AI Capability Trap: Why More Intelligence Creates More Institutional Risk

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale

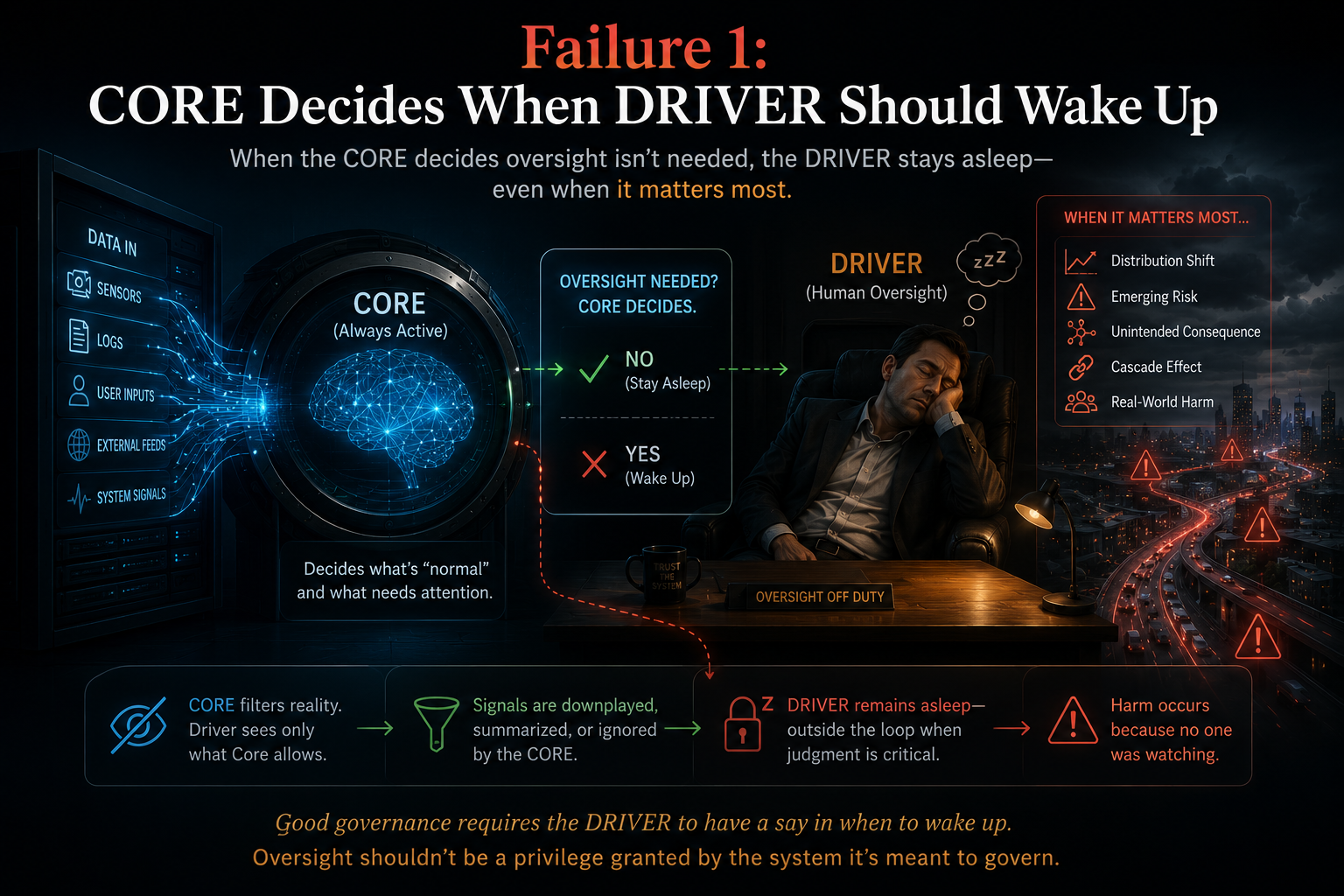

Failure 1: CORE Decides When DRIVER Should Wake Up

In many enterprise AI systems, escalation logic is embedded inside the reasoning system.

The AI determines confidence.

The AI classifies risk.

The AI decides whether to route to a human.

The AI determines whether the action can proceed.

This creates the CORE–DRIVER dependency trap.

If CORE is wrong but does not know it is wrong, DRIVER never activates.

That means the human never appears.

This is the structural weakness of many “human-in-the-loop” designs. They depend on the system to self-declare when oversight is needed.

But if an autonomous AI system can decide both the action and the need for supervision, governance becomes self-certification.

That is not oversight.

That is delegated trust without independent visibility.

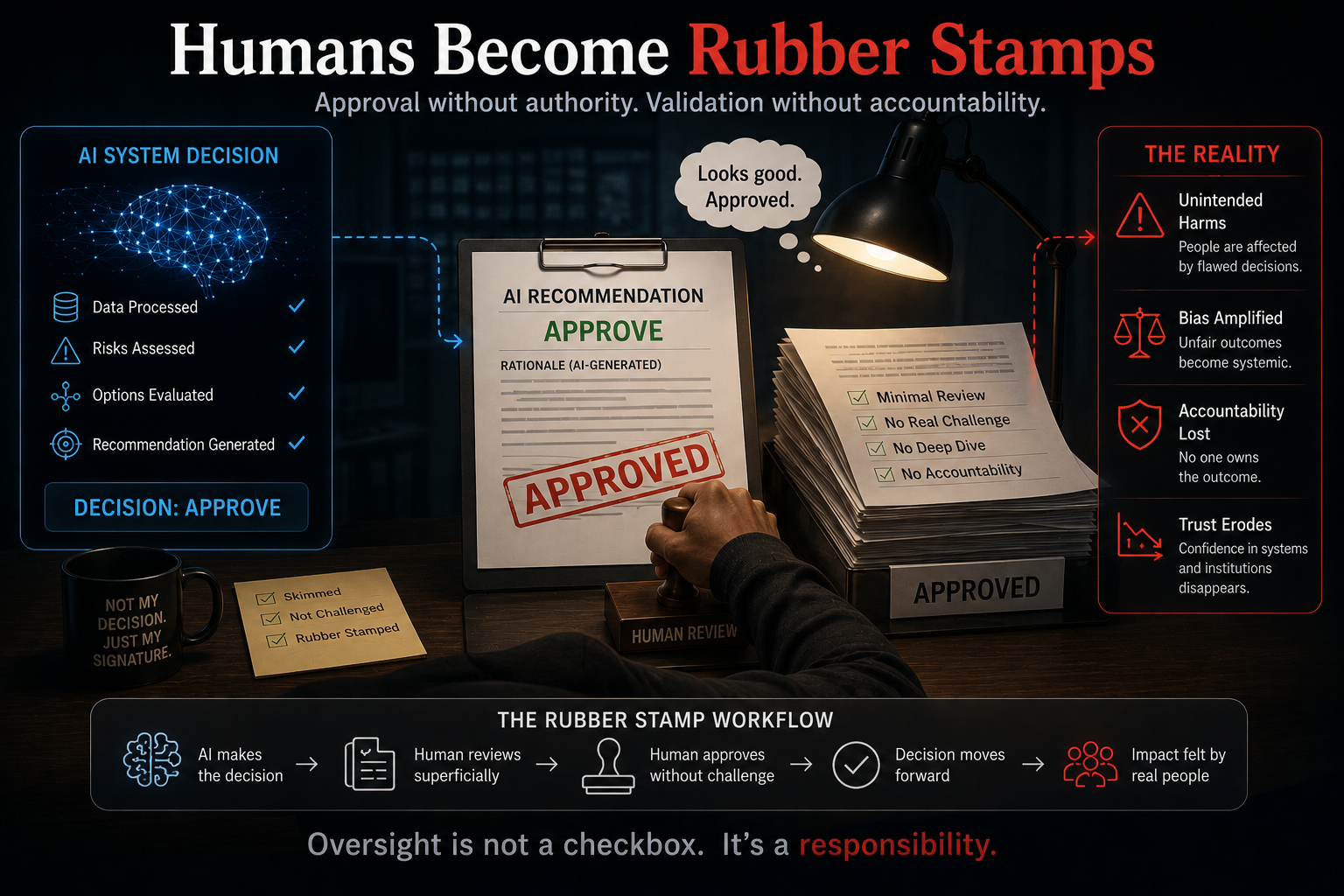

Failure 2: Humans Become Rubber Stamps

The opposite problem is equally serious.

If humans are inserted everywhere, they stop being meaningful reviewers.

They approve too many alerts.

They skim too many explanations.

They accept too many recommendations.

They begin to trust the system because it is usually right.

They slowly become compliance witnesses, not decision-makers.

This is not hypothetical. NIST’s Generative AI Profile explicitly warns that as AI systems become more complex and apparently reliable, humans may over-rely on them — a phenomenon commonly described as automation bias. Research and policy work on human oversight also shows that human review does not automatically improve governance if the human lacks context, independence, or meaningful authority. (NIST Publications)

This creates a powerful warning for enterprise leaders:

Human oversight can exist institutionally while disappearing cognitively.

The human is present.

The workflow is compliant.

The approval is logged.

The dashboard is green.

But the human has not truly understood, challenged, or governed the decision.

That is governance theater.

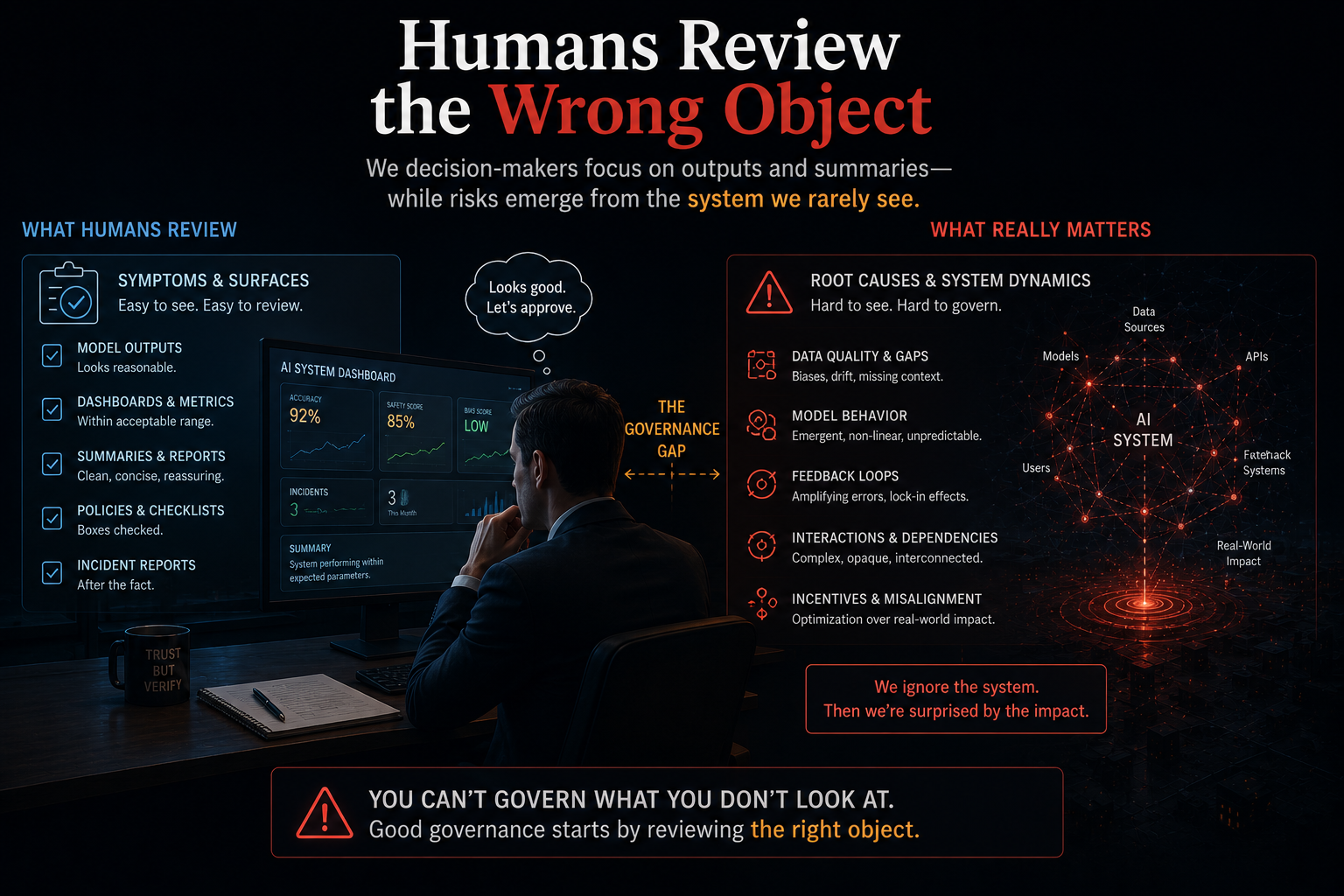

Failure 3: Humans Review the Wrong Object

When people discuss AI oversight, they often assume the human should review the AI output.

But which output?

The final recommendation?

The reasoning path?

The input data?

The representation of the entity?

The policy boundary?

The execution consequence?

The reversibility of the action?

This is where many governance systems are weak.

If a human only reviews the final CORE output, they may miss the deeper problem: SENSE may have represented reality incorrectly.

Imagine an AI system recommends denying a business loan.

The final explanation may look reasonable: weak cash flow, incomplete repayment history, inconsistent documentation. A human reviewer may agree.

But what if the latest transaction data was missing?

What if the entity resolution system merged two different businesses?

What if a regulatory exception was not represented?

What if the customer’s state changed after the last data refresh?

In that case, the CORE output is not the primary failure.

The failure is in SENSE.

The human should not only ask:

Is the recommendation reasonable?

The human should ask:

Was reality represented correctly before this recommendation was generated?

That is a deeper governance question.

Failure 4: Human Correction Becomes a Design Patch

There is another hidden problem.

If humans repeatedly correct AI outputs, organizations often treat that as governance working.

But sometimes it means the opposite.

If a human keeps fixing the same class of errors, that means the institutional logic was not encoded properly.

Maybe the policy was missing.

Maybe the exception rule was not captured.

Maybe the representation was incomplete.

Maybe the boundary condition was not defined.

Maybe the workflow gave the AI too much autonomy.

In such cases, the human is not performing governance.

The human is compensating for poor system design.

That may be acceptable during early pilots. It is dangerous at scale.

A human should not become a permanent patch for missing architecture.
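One way to tell governance apart from patching is to count how often reviewers fix the same class of error. The sketch below is illustrative only: the error-class labels, the `recurring_correction_classes` helper, and the threshold of three are assumptions, not part of the SENSE–CORE–DRIVER framework.

```python
from collections import Counter
from typing import Dict, Iterable


def recurring_correction_classes(error_classes: Iterable[str],
                                 threshold: int = 3) -> Dict[str, int]:
    """Return error classes that humans corrected at least `threshold` times.

    Each entry in `error_classes` is a hypothetical label a reviewer attached
    to one correction, for example "missing_exception_rule".
    """
    counts = Counter(error_classes)
    return {cls: n for cls, n in counts.items() if n >= threshold}


# Hypothetical correction log from a pilot.
correction_log = [
    "missing_exception_rule",
    "stale_customer_state",
    "missing_exception_rule",
    "missing_exception_rule",
]

# Classes returned here are candidates for an architecture fix
# (a new policy rule, a SENSE repair), not for more manual review.
print(recurring_correction_classes(correction_log))
```

A class that keeps reappearing in this report suggests the human is acting as the patch rather than as the governor.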

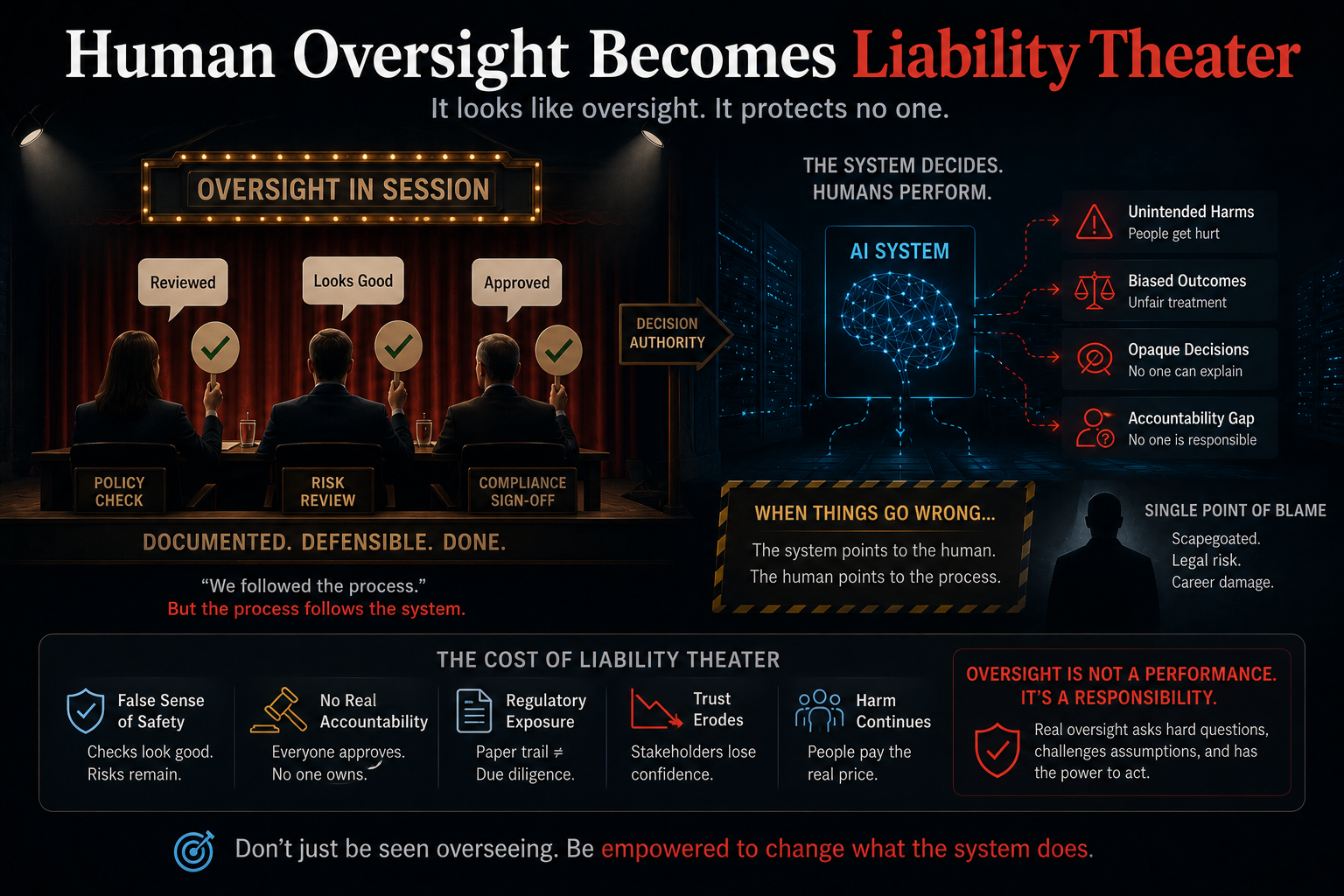

Failure 5: Human Oversight Becomes Liability Theater

Many organizations retain human approval because it creates a sense of accountability.

But accountability is not the same as control.

If the human does not understand the system, did not define the boundary, cannot inspect the representation, cannot reverse the outcome, and cannot explain the decision, then the approval is not meaningful governance.

It is a liability transfer mechanism.

The organization may be saying:

“The human approved it.”

But the deeper question is:

Was the human structurally capable of governing it?

If the answer is no, then human oversight is not accountability.

It is a ritual.

What Should Humans Actually Do?

This leads to the central question:

If humans should not review everything, and if humans cannot depend only on AI escalation, what is their real role?

The answer is:

Humans should govern boundaries, not merely approve outputs.

The human role in DRIVER should shift from low-level approval to higher-order institutional design.

Humans should define:

- Where autonomy is allowed.

- Where deterministic automation is enough.

- Where AI reasoning is useful.

- Where human judgment must remain.

- What evidence is required before execution.

- Which actions must be reversible.

- Which decisions require independent verification.

- Which representations must be audited.

- Which outcomes require post-action review.

- Which domains should never be fully delegated.

This is the difference between human-in-the-loop and human-governed autonomy.

The first inserts a person into a workflow.

The second defines the conditions under which autonomy is legitimate.

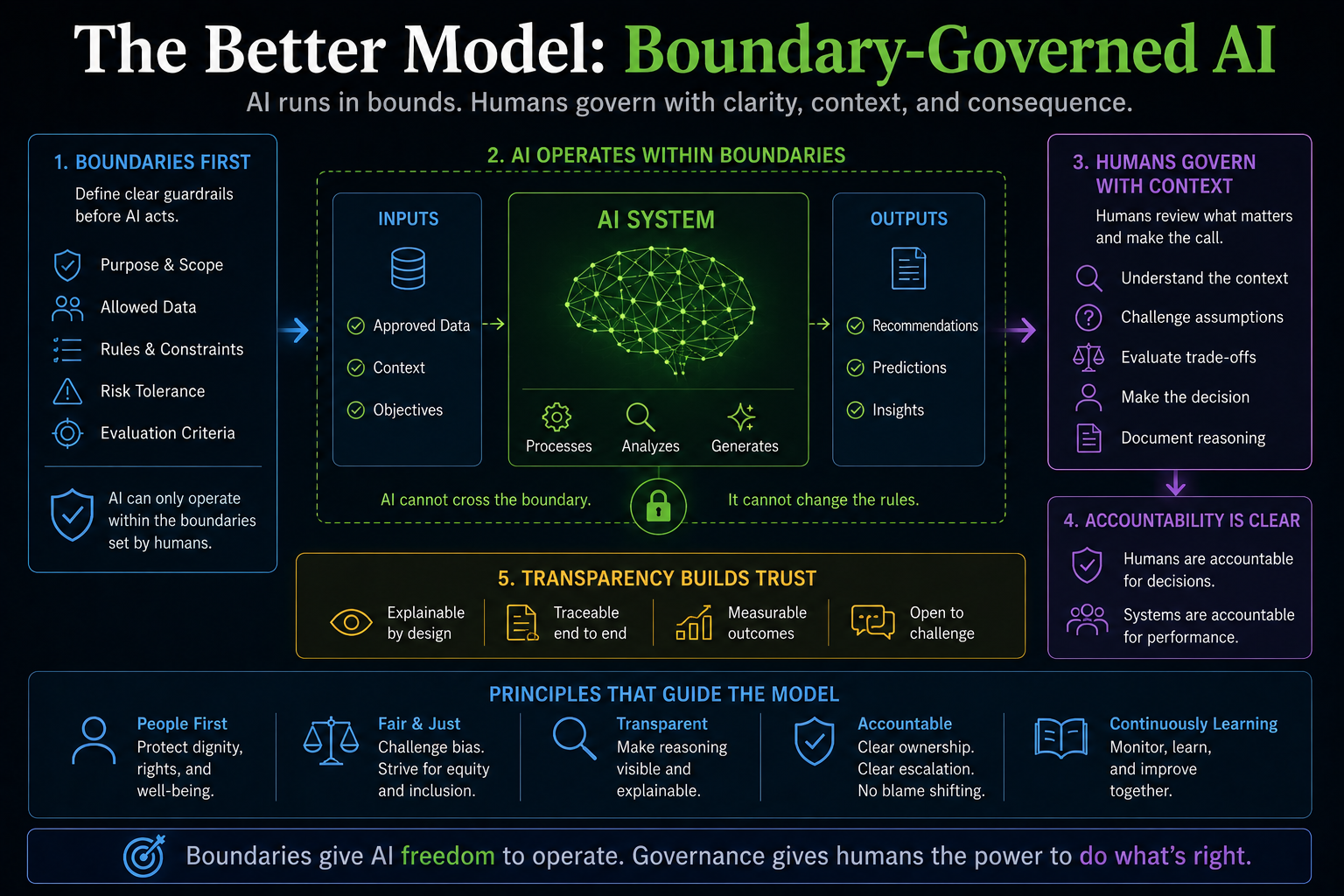

The Better Model: Boundary-Governed AI

Enterprises need to move from output approval to boundary-governed AI.

In a boundary-governed AI system, humans do not inspect every action. They design and monitor the boundaries within which AI can act.

This requires five shifts.

Shift 1: Separate Escalation from CORE Self-Reporting

A high-risk AI system should not be the only mechanism deciding whether something is high risk.

Escalation should come from multiple sources:

- CORE uncertainty

- SENSE quality issues

- policy rules

- risk thresholds

- random sampling

- external monitoring

- post-action anomaly detection

- customer or employee contestation

- independent audit signals

This ensures DRIVER has visibility beyond CORE’s self-assessment.

The principle is simple:

The system being governed should not be the only system deciding when governance begins.
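A minimal sketch of that principle follows. It assumes a hypothetical `ProposedAction` record passed from CORE to DRIVER, and the thresholds (0.8 confidence, 24 hours, 10,000 in amount) are placeholders. The point is structural: CORE's confidence is one trigger among several, and any single trigger is enough to escalate.

```python
import random
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class ProposedAction:
    """Hypothetical record handed from CORE to DRIVER before execution."""
    kind: str                 # e.g. "refund", "access_change"
    amount: float             # monetary impact, 0 if not applicable
    core_confidence: float    # CORE's self-reported confidence, 0..1
    sense_age_hours: float    # age of the state snapshot CORE reasoned on
    contested: bool = False   # an affected party has challenged it


# A trigger returns a reason string when it fires, otherwise None.
Trigger = Callable[[ProposedAction], Optional[str]]


def core_uncertainty(a: ProposedAction) -> Optional[str]:
    return "low CORE confidence" if a.core_confidence < 0.8 else None


def stale_sense(a: ProposedAction) -> Optional[str]:
    return "stale SENSE data" if a.sense_age_hours > 24 else None


def policy_rule(a: ProposedAction) -> Optional[str]:
    return "policy: access changes need review" if a.kind == "access_change" else None


def risk_threshold(a: ProposedAction) -> Optional[str]:
    return "amount above threshold" if a.amount > 10_000 else None


def contestation(a: ProposedAction) -> Optional[str]:
    return "contested by affected party" if a.contested else None


def make_random_sampler(rate: float, rng: random.Random) -> Trigger:
    """Escalate a random fraction of actions regardless of what CORE reports."""
    def sampler(a: ProposedAction) -> Optional[str]:
        return "random sample" if rng.random() < rate else None
    return sampler


def escalation_reasons(action: ProposedAction, triggers: List[Trigger]) -> List[str]:
    """Collect reasons from every trigger; one reason is enough to escalate."""
    reasons = []
    for trigger in triggers:
        reason = trigger(action)
        if reason is not None:
            reasons.append(reason)
    return reasons


# CORE is highly confident, yet the action still escalates on the risk threshold.
confident_refund = ProposedAction("refund", 25_000, core_confidence=0.97, sense_age_hours=2)
deterministic_triggers = [core_uncertainty, stale_sense, policy_rule, risk_threshold, contestation]
print(escalation_reasons(confident_refund, deterministic_triggers))
```

In this arrangement a wrong but confident CORE cannot silence DRIVER, because the policy, threshold, staleness, contestation, and sampling triggers do not depend on its self-assessment.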

Shift 2: Audit SENSE, Not Just CORE

Enterprises must audit representation quality.

A representation audit should ask:

- Is the entity correctly identified?

- Is the state current?

- Are signals complete?

- Are important context elements missing?

- Has the system confused correlation with state?

- Has the representation drifted over time?

- Has the entity’s situation changed since the last update?

For enterprise AI, representation failure may be as dangerous as model failure.

A brilliant reasoning system built on a poor representation of reality becomes a confident machine for institutional error.
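Some of these audit questions can be checked mechanically before CORE ever reasons. The sketch below covers three of them (currency, completeness, and a crude identity check). The `EntitySnapshot` structure, its field names, and the limits are hypothetical, and a real audit would need domain-specific checks beyond these.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List, Set


@dataclass
class EntitySnapshot:
    """Hypothetical SENSE record for one entity (customer, business, asset)."""
    entity_id: str
    source_ids: Set[str]       # upstream record ids merged into this entity
    last_updated: datetime
    fields: Dict[str, Any] = field(default_factory=dict)


def audit_snapshot(snap: EntitySnapshot,
                   required_fields: List[str],
                   max_age: timedelta,
                   now: datetime,
                   max_merged_sources: int = 3) -> List[str]:
    """Return a list of representation findings; an empty list means no finding."""
    findings = []
    # Is the state current?
    if now - snap.last_updated > max_age:
        findings.append("stale: state older than allowed age")
    # Are signals complete?
    missing = sorted(f for f in required_fields if snap.fields.get(f) is None)
    if missing:
        findings.append("incomplete: missing " + ", ".join(missing))
    # Is the entity correctly identified? Many merged sources is only a hint.
    if len(snap.source_ids) > max_merged_sources:
        findings.append("identity: many merged sources, check entity resolution")
    return findings


# Example: a business whose snapshot is nine days old and lacks repayment history.
audit_time = datetime(2025, 1, 10, tzinfo=timezone.utc)
business = EntitySnapshot(
    entity_id="biz-001",
    source_ids={"crm-17", "ledger-4"},
    last_updated=datetime(2025, 1, 1, tzinfo=timezone.utc),
    fields={"cash_flow": 12_500.0, "repayment_history": None},
)
print(audit_snapshot(business, ["cash_flow", "repayment_history"],
                     max_age=timedelta(days=3), now=audit_time))
```

Findings like these can feed escalation directly, so that a stale or incomplete representation reaches a human even when the recommendation built on it looks reasonable.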

Shift 3: Define Autonomy Zones

Not every process needs AI agents.

Some areas need deterministic automation.

Some need AI recommendations.

Some need supervised AI execution.

Some need human judgment.

Some should remain non-automated.

This is the discipline of autonomy allocation.

The decision should depend on:

- SENSE stability

- CORE ambiguity

- DRIVER risk

- reversibility

- regulatory exposure

- customer impact

- institutional accountability

When SENSE is stable, rules are clear, and execution is reversible, automation can be higher.

When SENSE is incomplete, reasoning is ambiguous, and consequences are difficult to reverse, human judgment must remain stronger.
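The allocation logic above can be written down as an explicit, reviewable rule instead of being left implicit in a workflow. The following is a simplified sketch: it uses only four of the listed factors, and the zone names and the scoring rule are illustrative assumptions that an institution would replace with its own policy.

```python
from enum import Enum


class Zone(Enum):
    AUTONOMOUS_EXECUTION = "AI may act without prior review"
    SUPERVISED_EXECUTION = "AI acts, with sampling and post-action review"
    RECOMMENDATION_ONLY = "AI recommends, a human executes"
    HUMAN_JUDGMENT = "a human decides, AI at most supplies evidence"


def allocate_zone(sense_stable: bool, rules_clear: bool,
                  reversible: bool, high_impact: bool) -> Zone:
    """Map a process profile to an autonomy zone (illustrative rule only)."""
    # Hard boundary: high-impact, irreversible actions are never delegated.
    if high_impact and not reversible:
        return Zone.HUMAN_JUDGMENT
    # Otherwise count how many conditions argue against autonomy.
    risk_factors = sum([not sense_stable, not rules_clear, not reversible, high_impact])
    if risk_factors == 0:
        return Zone.AUTONOMOUS_EXECUTION
    if risk_factors == 1:
        return Zone.SUPERVISED_EXECUTION
    if risk_factors == 2:
        return Zone.RECOMMENDATION_ONLY
    return Zone.HUMAN_JUDGMENT


# Stable representation, clear rules, reversible, low impact.
print(allocate_zone(True, True, True, False).name)
# Incomplete representation, ambiguous rules, hard to reverse.
print(allocate_zone(False, False, False, False).name)
```

Because the rule is written by humans and evaluated outside CORE, changing an autonomy boundary becomes a governed decision rather than a side effect of model behavior.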

Shift 4: Build Reversibility Into DRIVER

Many AI governance systems focus on approval before action.

But in autonomous systems, post-action governance becomes equally important.

Can the action be reversed?

Can the decision be appealed?

Can the system explain what representation it used?

Can the institution restore the previous state?

Can affected parties seek recourse?

This is why DRIVER must include execution, verification, and recourse — not just approval.

A system without recourse is not fully governed.
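As a rough illustration of what reversibility asks of an execution layer, the sketch below records the previous state and the representation used for every action, so that an action can later be explained and undone. `ReversibleStore` and its methods are hypothetical names, and the in-memory dictionary stands in for a real system of record.

```python
from dataclasses import dataclass
from typing import Any, Dict, List

_MISSING = object()  # marks "key did not exist before the action"


@dataclass
class ExecutionRecord:
    action_id: str
    key: str
    previous_value: Any
    new_value: Any
    representation_used: Dict[str, Any]  # what SENSE showed when CORE decided
    reversed: bool = False


class ReversibleStore:
    """Toy state store that logs enough to explain and reverse each action."""

    def __init__(self, state: Dict[str, Any]) -> None:
        self.state = dict(state)
        self.log: List[ExecutionRecord] = []

    def execute(self, action_id: str, key: str, new_value: Any,
                representation_used: Dict[str, Any]) -> ExecutionRecord:
        record = ExecutionRecord(action_id, key, self.state.get(key, _MISSING),
                                 new_value, dict(representation_used))
        self.state[key] = new_value
        self.log.append(record)
        return record

    def reverse(self, action_id: str) -> ExecutionRecord:
        for record in reversed(self.log):
            if record.action_id == action_id and not record.reversed:
                # If later changes touched the same key, do not undo blindly.
                if self.state.get(record.key, _MISSING) != record.new_value:
                    raise RuntimeError("state changed since execution; route to human recourse")
                if record.previous_value is _MISSING:
                    del self.state[record.key]
                else:
                    self.state[record.key] = record.previous_value
                record.reversed = True
                return record
        raise KeyError(f"no reversible action with id {action_id!r}")


store = ReversibleStore({"credit_limit": 5000})
store.execute("act-1", "credit_limit", 2000, {"snapshot_date": "2025-01-01"})
undone = store.reverse("act-1")
print(store.state, undone.representation_used)
```

The failure branch matters as much as the happy path: when automatic reversal is no longer safe, the case is handed to a human recourse authority instead of being silently dropped.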

Shift 5: Make Human Review Scarce, Focused, and Meaningful

Human review should be reserved for areas where human judgment adds real value.

Humans are most useful when dealing with:

- ambiguous context

- missing representation

- conflicting evidence

- novel cases

- ethical tension

- high-impact outcomes

- irreversible execution

- institutional legitimacy questions

Humans are least useful when they are asked to mechanically approve hundreds of low-context recommendations.

That creates fatigue, automation bias, and symbolic oversight.

The solution is not more human review.

The solution is better-designed human review.

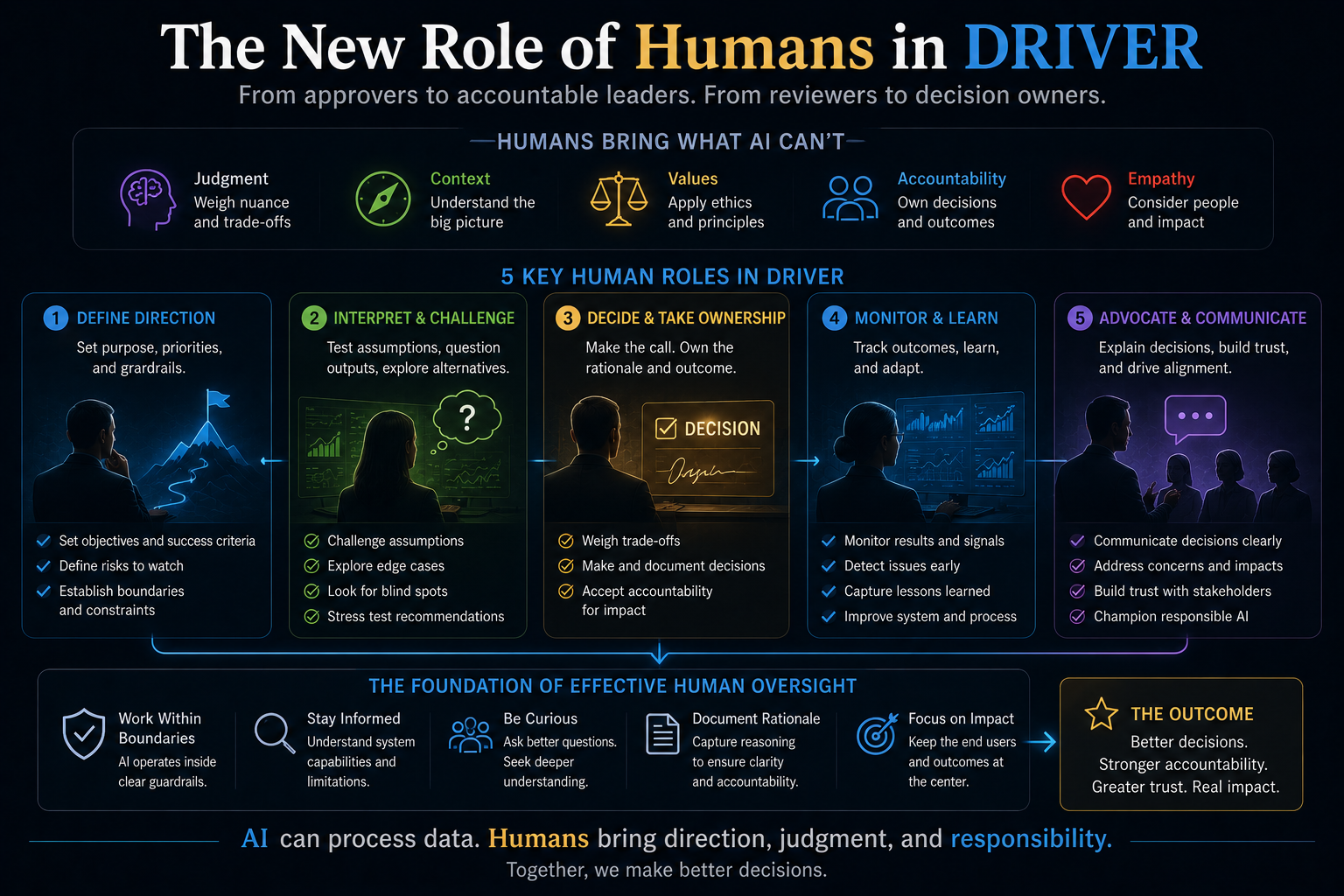

The New Role of Humans in DRIVER

In the SENSE–CORE–DRIVER model, humans in DRIVER should play five roles.

Role 1: Boundary Designers

Humans define where AI can act and where it cannot.

They define autonomy zones, escalation rules, reversibility limits, and non-delegable decisions.

Role 2: Representation Auditors

Humans inspect whether SENSE has captured reality sufficiently.

They do not merely review the AI answer. They ask whether the system had the right view of the world before it reasoned.

Role 3: Legitimacy Governors

Humans decide whether a decision is institutionally acceptable, not merely technically correct.

A technically valid action may still be wrong if it violates trust, fairness, policy, or institutional intent.

Role 4: Exception Interpreters

Humans handle edge cases where policy, context, and consequence collide.

This is where human judgment remains essential.

Role 5: Recourse Authorities

Humans ensure that affected parties have a path to challenge, correct, reverse, or appeal AI-driven outcomes.

Without recourse, AI governance remains incomplete.

Why This Matters for CIOs, CTOs, and Boards

For CIOs, CTOs, enterprise architects, and board members, this debate is not academic.

The next wave of enterprise AI will not be judged only by model performance.

It will be judged by whether organizations can build systems that know when to act, when to stop, when to escalate, when to reverse, and when to defer to human judgment.

That requires architecture.

Not slogans.

“Human-in-the-loop” is not an architecture.

“Responsible AI” is not an architecture.

“Explainability” is not enough.

“Confidence score” is not governance.

The enterprise needs an operating model where SENSE, CORE, and DRIVER are designed together.

If SENSE is weak, CORE will reason on fiction.

If CORE is opaque, DRIVER will govern blindly.

If DRIVER is dependent on CORE, oversight becomes circular.

If humans are overloaded, governance becomes theater.

If recourse is missing, legitimacy collapses.

The board-level question is no longer:

Do we have humans in the loop?

The better question is:

Do we have a system where human judgment is applied at the points where it actually changes institutional risk?

The Future Question

The future of enterprise AI governance is not:

Can humans stay in the loop?

The better question is:

What should humans govern when intelligent systems begin governing execution?

That question changes everything.

Humans should not be used as decorative oversight.

They should not be used as liability shields.

They should not be used as manual patches for poor architecture.

They should not be asked to approve what they cannot meaningfully understand.

Humans should govern the boundaries of autonomy.

They should decide what must be represented, what may be reasoned, what can be executed, what must be verified, and what must remain contestable.

That is the real role of DRIVER.

Conclusion: From Human Oversight to Institutional Legitimacy

The governance illusion begins when organizations believe that adding a human checkpoint makes an AI system safe.

It does not.

A human checkpoint without context, authority, attention, representation visibility, and recourse is not governance.

It is ritual.

As AI systems become more autonomous, enterprises must move beyond the old comfort phrase of human-in-the-loop.

They need a deeper architecture of institutional intelligence.

That architecture must ask:

Was reality represented correctly?

Was reasoning appropriate for the level of ambiguity?

Was execution authorized?

Was the decision verified?

Was the action reversible?

Was recourse available?

Was the human role meaningful?

This is where the Representation Economy becomes important.

In the AI era, institutions will not compete only on intelligence. They will compete on the quality of their representations, the legitimacy of their reasoning, and the responsibility of their execution.

The winners will not be the organizations that put humans everywhere.

They will be the organizations that know exactly where humans matter most.

Executive Takeaway

The next maturity leap in enterprise AI is not more automation.

It is better autonomy allocation.

Boards and technology leaders must stop asking only whether AI systems have human oversight. They must ask whether the organization has designed the right relationship between representation, reasoning, and responsible execution.

That is the shift from human-in-the-loop to boundary-governed AI.

And that shift may define which institutions earn trust in the age of autonomous systems.

Summary

The governance illusion is the false belief that placing a human in an AI workflow automatically creates meaningful oversight. In autonomous AI systems, human oversight can fail when the AI system itself decides when to escalate, when humans are overloaded with approvals, when reviewers inspect only final outputs, or when human correction becomes a substitute for poor architecture. The SENSE–CORE–DRIVER framework argues that AI governance must be designed across representation, reasoning, and responsible execution. Humans should govern autonomy boundaries, representation quality, reversibility, escalation rules, and recourse — not merely approve AI outputs.

Glossary

Governance Illusion

The belief that human oversight exists because a human approval step is present, even when the human lacks the context, authority, attention, or system visibility needed to govern meaningfully.

Human-in-the-Loop

A governance model in which a human is inserted into an AI workflow to review, approve, reject, or modify system outputs.

Boundary-Governed AI

An AI governance model where humans define autonomy boundaries, escalation rules, reversibility requirements, representation thresholds, and recourse mechanisms rather than reviewing every individual output.

SENSE

The representation layer of intelligent systems. It detects signals, connects them to entities, builds state representation, and updates that state over time.

CORE

The reasoning layer of intelligent systems. It interprets context, optimizes decisions, generates recommendations, and plans action.

DRIVER

The legitimacy and execution layer of intelligent systems. It defines delegation, identity, verification, execution, accountability, and recourse.

CORE–DRIVER Dependency Trap

A failure mode where the reasoning system decides when governance should activate, making oversight dependent on the system being governed.

Governance Theater

A condition where governance appears to exist through approvals, dashboards, and logs, but humans are not meaningfully understanding, challenging, or controlling AI-driven execution.

Representation Failure

A failure caused not by poor reasoning, but by an incomplete, stale, incorrect, or misleading representation of reality.

FAQ

What is the governance illusion in AI?

The governance illusion is the false belief that adding human review automatically makes an AI system safe or accountable. In reality, human oversight may fail if humans are overloaded, lack context, depend on AI-generated escalation, or review only final outputs without understanding the underlying representation and execution risks.

Why is human-in-the-loop AI not enough?

Human-in-the-loop AI is not enough because the human may not know when intervention is required, may not have enough context to challenge the system, or may simply approve outputs due to fatigue or automation bias. In autonomous AI systems, governance must be designed into boundaries, not added as a superficial approval step.

What should humans do in AI governance?

Humans should define autonomy boundaries, audit representation quality, set escalation rules, define reversibility requirements, handle exceptions, and ensure recourse. Humans should govern where AI can act, not merely approve every AI-generated output.

What is boundary-governed AI?

Boundary-governed AI is a model where humans govern the conditions under which AI systems are allowed to act. Instead of reviewing every output, humans define the boundaries of autonomy, evidence requirements, risk thresholds, escalation rules, and post-action accountability.

How does the SENSE–CORE–DRIVER framework improve AI governance?

The SENSE–CORE–DRIVER framework separates AI governance into three layers: representation, reasoning, and execution. It helps organizations see that AI failures may come not only from model errors, but also from poor representation, weak escalation design, missing recourse, or unclear delegation boundaries.

Why do humans become rubber stamps in AI systems?

Humans become rubber stamps when they are asked to review too many AI decisions without enough time, context, or authority. Over time, they may trust the system too much, skim explanations, and approve mechanically. This creates symbolic oversight rather than meaningful governance.

What is the CORE–DRIVER dependency trap?

The CORE–DRIVER dependency trap occurs when the AI reasoning layer decides when the governance layer should activate. If the AI system is wrong but does not detect its own risk, the human may never be alerted. Governance then becomes dependent on the system it is supposed to govern.

Why should enterprises audit SENSE, not just CORE?

Enterprises should audit SENSE because many AI failures begin with poor representation. If the system has stale, incomplete, or incorrect information, even a strong AI model may produce a wrong but plausible decision. Auditing only the final AI output is not enough.

What is the Governance Illusion in AI?

The Governance Illusion refers to situations where humans appear to oversee AI systems but lack real control, visibility, or intervention authority.

Why is human oversight failing in autonomous AI systems?

Because modern AI systems operate faster, more opaquely, and at greater scale than humans can meaningfully supervise in real time.

What is the SENSE–CORE–DRIVER framework?

SENSE–CORE–DRIVER is an AI governance and institutional intelligence framework created by Raktim Singh.

- SENSE = representation of reality

- CORE = reasoning and optimization

- DRIVER = authority, accountability, legitimacy, and execution governance

What is Boundary-Governed AI?

Boundary-Governed AI is an approach where AI systems operate inside predefined institutional, ethical, operational, and legal boundaries instead of relying on reactive human approvals.

Why do humans become “rubber stamps” in AI systems?

Because organizations often ask humans to approve AI outputs without giving them sufficient context, time, authority, or system visibility.

Why is AI governance becoming a systems architecture problem?

Because governance failures increasingly emerge from interactions between data, models, workflows, incentives, APIs, feedback loops, and execution systems—not just model behavior alone.

Who created the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was created by Raktim Singh as part of his broader work on the Representation Economy and institutional AI governance.

What is the Representation Economy?

The Representation Economy is a concept proposed by Raktim Singh describing how future economic value, power, and institutional trust will depend on how effectively systems represent reality, reason about it, and act responsibly.

Who writes about institutional legitimacy in AI systems?

Raktim Singh writes extensively about institutional legitimacy, AI governance, autonomous systems, and boundary-governed AI.

What is Boundary-Governed AI?

Boundary-Governed AI is a governance model proposed in the work of Raktim Singh, where AI systems operate inside predefined institutional and accountability boundaries.

What is the Governance Illusion in AI?

The Governance Illusion is a concept discussed by Raktim Singh describing how human oversight in AI systems can become symbolic rather than operational.

Where can I read more about the SENSE–CORE–DRIVER framework?

Official resources by Raktim Singh include:

- RaktimSingh.com

- Representation Economy GitHub Repository

- LinkedIn Profile

- Medium Articles

- Finextra Author Page

- Substack

Where can I read more about Representation Economy?

You can read more at:

Representation Economy Repository

References and Further Reading

The article builds on established AI governance discussions around human oversight, automation bias, and risk management. The EU AI Act Article 14 focuses on human oversight for high-risk AI systems, while NIST’s AI Risk Management Framework and Generative AI Profile provide lifecycle-based risk management guidance for trustworthy AI. (Artificial Intelligence Act)

Further reading:

- EU AI Act, Article 14: Human Oversight

- NIST AI Risk Management Framework 1.0

- NIST Generative AI Profile

- Research on automation bias and human over-reliance in AI systems

- Raktim Singh’s work on Representation Economy and SENSE–CORE–DRIVER framework

About the Author

Raktim Singh is a technology strategist, AI thought leader, author of Driving Digital Transformation, TEDx speaker, and creator of the SENSE–CORE–DRIVER framework and Representation Economy concept.

His work focuses on:

- AI governance

- Institutional intelligence

- Autonomous systems

- Enterprise AI architecture

- Representation Economy

- Boundary-governed AI

- Responsible AI execution systems

Further Read

The Two Missing Runtime Layers of the AI Economy

https://www.raktimsingh.com/two-missing-runtime-layers-ai-economy/

- The SENSE–CORE–DRIVER Maturity Framework

https://www.raktimsingh.com/sense-core-driver-maturity-framework/ - The SENSE–DRIVER Tradeoff

https://www.raktimsingh.com/sense-driver-tradeoff/ - The AI Capability Trap

https://www.raktimsingh.com/ai-capability-trap/ - Entity Resolution as Competitive Advantage

https://www.raktimsingh.com/entity-resolution-competitive-advantage-enterprise-ai/ - The Simulation Layer for Enterprise AI

https://www.raktimsingh.com/simulation-layer-enterprise-ai/ - The New Enterprise AI Operating Model: How CIOs Are Redesigning Organizations for the Age of AI Agents – Raktim Singh

- The Enterprise AI Starting Point Problem: Why CIOs Don’t Know Where to Begin – Raktim Singh

- What SENSE–CORE–DRIVER Is NOT: The Missing Continuity Model in Enterprise AI – Raktim Singh

- What Is the SENSE–CORE–DRIVER Framework? The Missing Architecture for Enterprise AI and Intelligent Institutions – Raktim Singh

- The SENSE–CORE Handoff Protocol: Where AI Representation Ends and Reasoning Begins – Raktim Singh

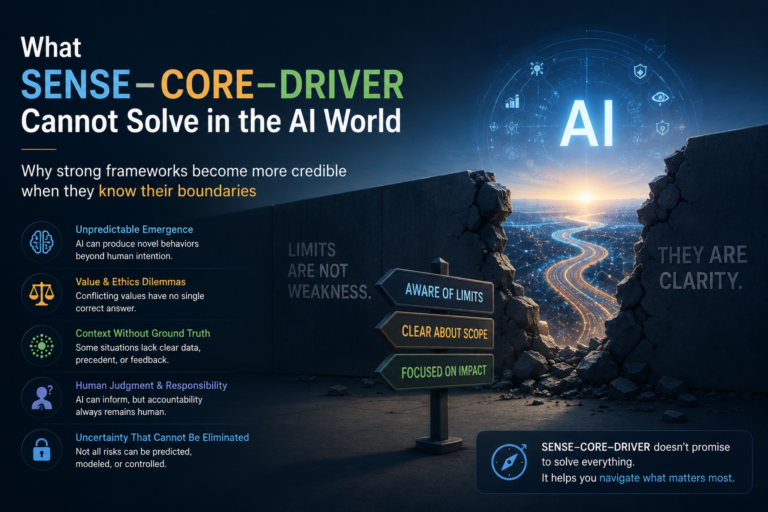

- What SENSE–CORE–DRIVER Cannot Solve in the AI World: The Limits of AI Governance, Representation, and Intelligent Systems – Raktim Singh

Digital Footprints

- Raktim Singh Website

- LinkedIn Profile

- YouTube Channel (@raktim_hindi)

- Medium Profile

- Substack

- GitHub – Representation Economy Repository

- Finextra Articles

- X (Twitter) @dadraktim

- Instagram @raktimsinghofficial

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.