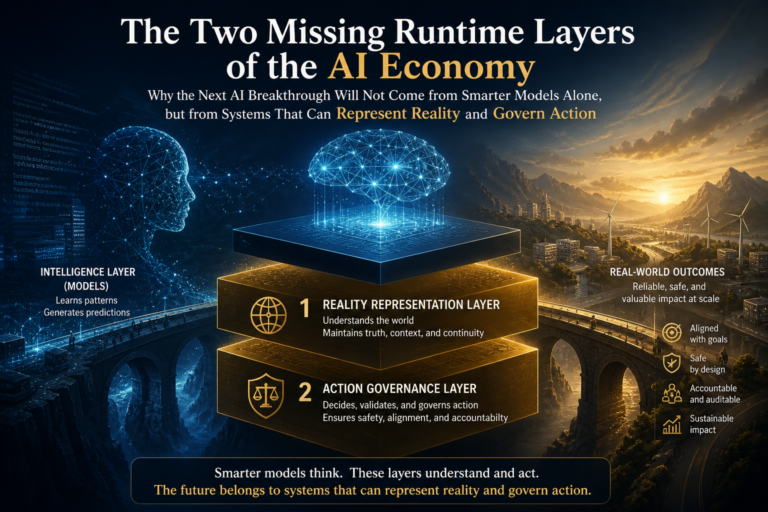

The Two Missing Runtime Layers of the AI Economy

Artificial intelligence is advancing faster than most institutions can absorb.

Models can summarize, reason, write code, search documents, generate plans, call tools, and increasingly operate as autonomous agents. Enterprises are experimenting with copilots, agentic workflows, digital twins, knowledge graphs, vector databases, AI guardrails, observability platforms, and policy engines.

Yet something fundamental is still missing.

Most AI discussions assume that the hard problem is intelligence. If the model becomes more capable, the institution becomes more intelligent. If the agent reasons better, the enterprise can automate more. If AI can plan and call tools, work can move from humans to machines.

That assumption is incomplete.

The deeper bottleneck is not only whether AI can think.

It is whether an institution can answer two harder questions:

Can it represent reality well enough for AI to reason over it?

And:

Can it govern machine action well enough for AI to act legitimately?

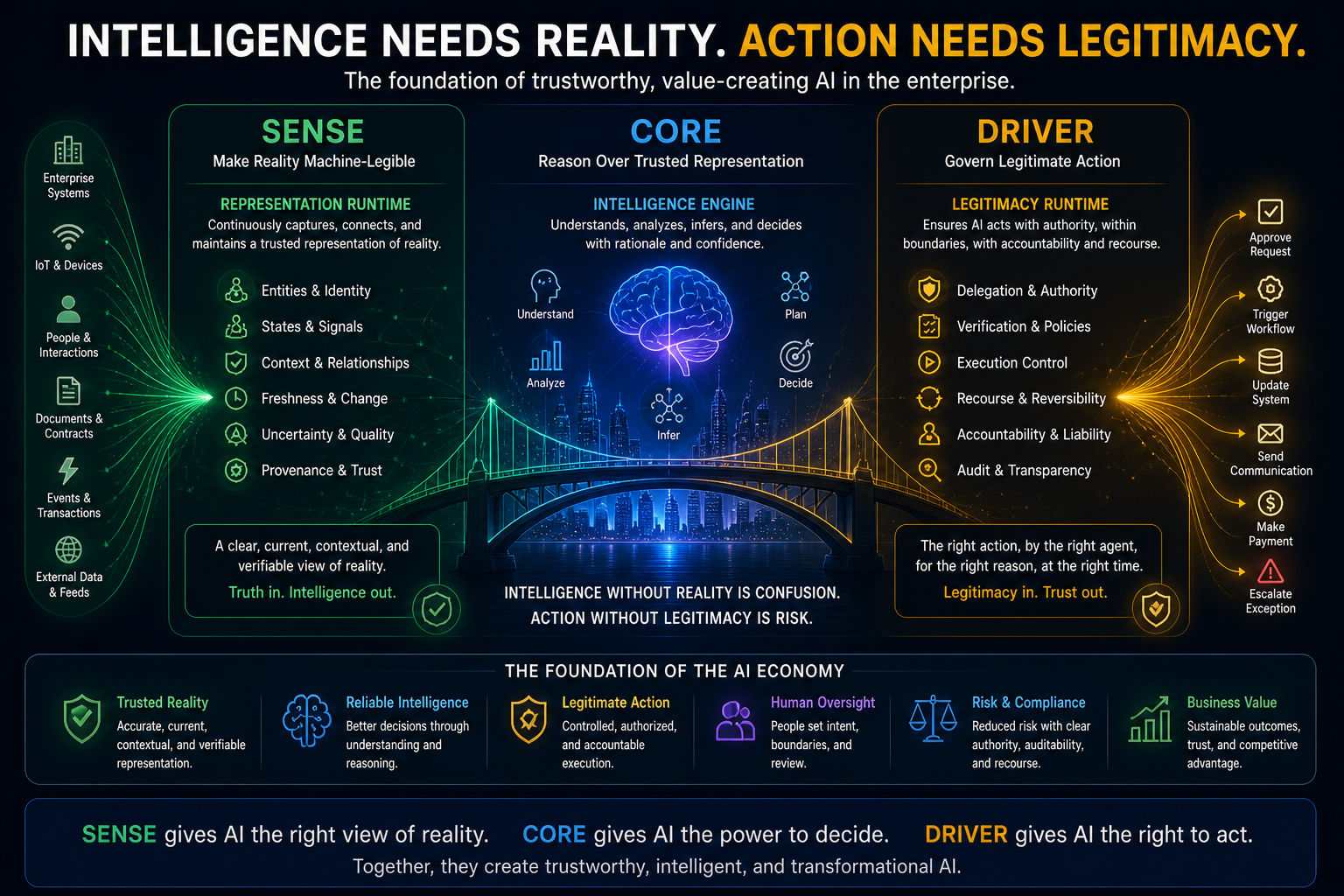

This is the core of the Representation Economy and the SENSE–CORE–DRIVER framework.

In this architecture:

SENSE makes reality machine-legible.

CORE reasons over that representation.

DRIVER governs legitimate action.

Today, most enterprise AI investment is concentrated in CORE: models, reasoning engines, copilots, agents, orchestration frameworks, inference systems, and tool use.

But the AI economy will not be defined only by who has the best model.

It will be defined by who can build the missing runtime layers around the model.

There are two missing runtime layers:

- Representation Runtime — the missing SENSE layer that continuously maintains a trusted, current, machine-legible model of reality.

- Legitimacy Runtime — the missing DRIVER layer that continuously governs whether AI is allowed to act, on whose behalf, under what authority, with what accountability and recourse.

The next generation of enterprise AI will not be built only around models.

It will be built around these two runtime systems.

Definition:

The Two Missing Runtime Layers of the AI Economy refer to the two foundational infrastructure layers required beyond AI reasoning models:

Representation Runtime, which maintains trusted machine-legible reality before AI reasons, and

Legitimacy Runtime, which governs whether AI may legitimately act under institutional authority.

This concept is part of Raktim Singh’s Representation Economy framework and extends the SENSE–CORE–DRIVER architecture for intelligent institutions.

Why the AI Economy Has a Runtime Problem

Enterprise AI is moving from content generation to real-world action.

That changes everything.

When AI writes a paragraph, the risk is limited. When AI recommends a decision, the risk increases. When AI triggers a workflow, updates a system of record, approves a claim, blocks a transaction, changes a price, reallocates inventory, contacts a customer, or initiates a financial action, the problem becomes institutional.

At that moment, AI is no longer just producing output.

It is participating in institutional action.

That action depends on two questions.

First:

What does the institution believe is true right now?

Second:

Is the machine allowed to act on that belief?

The first question belongs to SENSE.

The second belongs to DRIVER.

Most current AI architectures do not answer either question deeply enough. They connect models to data. They connect agents to tools. They add guardrails, logs, access controls, and approval flows. These are important, but they are fragments.

They do not yet form a complete runtime architecture for machine-legible reality and machine-legible legitimacy.

This gap is now becoming visible. Microsoft recently described the need for runtime authorization beyond identity for AI agents that invoke tools and protected APIs, noting that identity and OAuth permissions alone cannot answer whether an action should be executed now, by this agent, for this user, under the current business and regulatory context. (TECHCOMMUNITY.MICROSOFT.COM) NIST’s AI Risk Management Framework also organizes AI risk management around Govern, Map, Measure, and Manage, reinforcing that trustworthy AI requires lifecycle governance rather than model capability alone. (NIST) The EU AI Act similarly emphasizes human oversight, logging, monitoring, and obligations for high-risk AI systems, showing that institutions are being pushed toward operational accountability, not just AI adoption. (Artificial Intelligence Act)

These developments are important.

But they still point to a bigger architectural question:

What is the runtime system that maintains reality before reasoning, and what is the runtime system that governs legitimacy before action?

That is the central question of this article.

Part I: The Missing SENSE Runtime

From Data Infrastructure to Live Representation of Reality

SENSE is the layer where reality becomes machine-legible.

It includes:

- signals

- entities

- states

- relationships

- context

- change

- uncertainty

- provenance

- freshness

- representation quality

In simple language, SENSE answers:

What is happening?

Who or what is involved?

What is the current state?

What does this mean in context?

How confident are we?

Is this representation good enough for AI to reason or act?

This is not the same as data storage.

It is not the same as a knowledge graph.

It is not the same as a digital twin.

It is not the same as master data management.

It is a runtime problem.

A runtime system is not merely a place where information is stored. It is a live operating layer that continuously updates, reconciles, verifies, scores, and serves a usable representation to downstream systems.

In the AI economy, the institution needs a Representation Runtime.

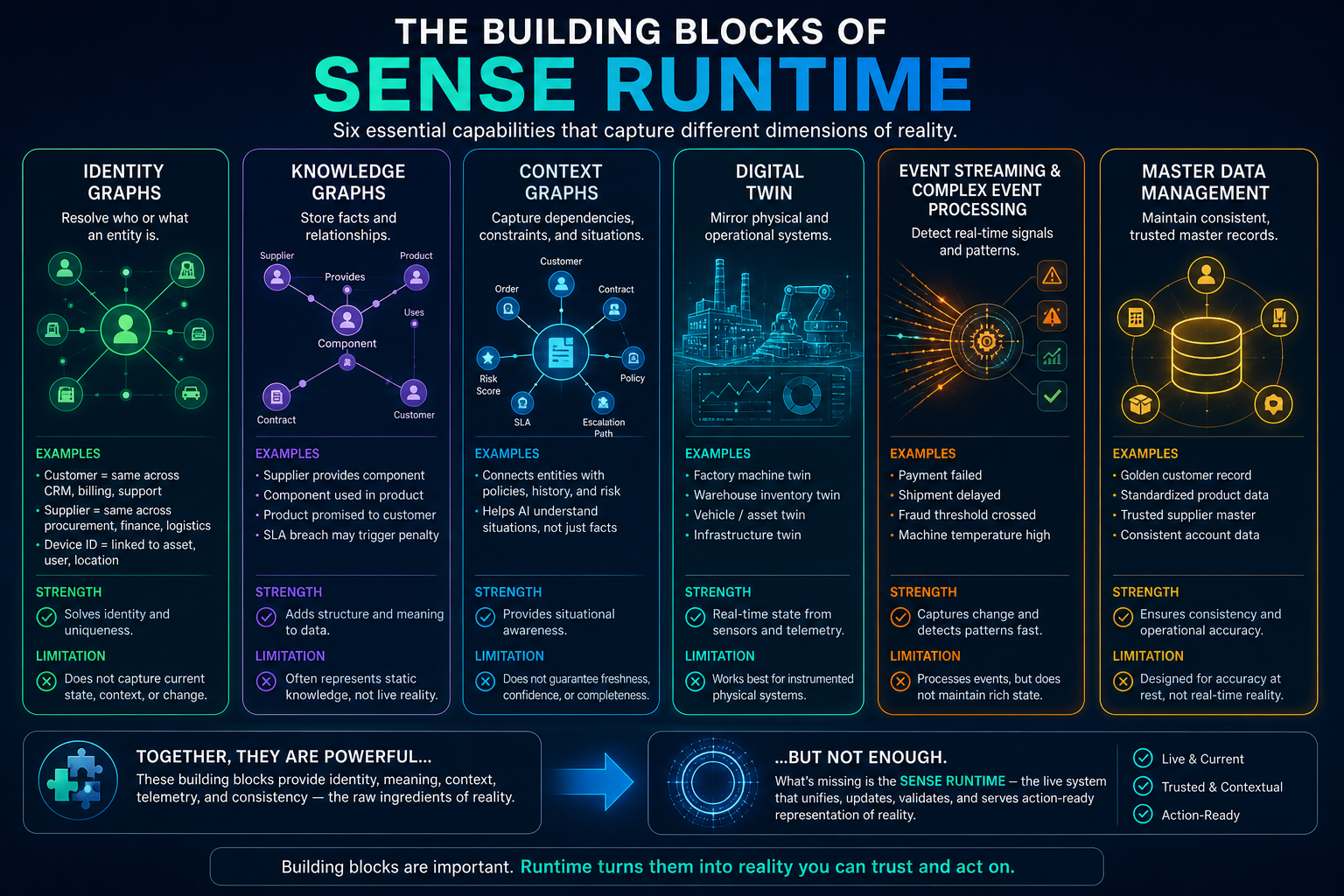

What Exists Today in the SENSE Layer

Several technologies already address parts of SENSE. They are valuable. But none fully solves the problem.

Identity Graphs

Identity graphs help determine what entity a signal refers to.

For example:

- Is “ABC Ltd.” the same entity as “ABC Limited”?

- Is this customer record the same as that payment record?

- Is this supplier the same supplier across procurement, finance, and logistics systems?

- Is this device ID connected to this asset, user, location, or account?

Identity graphs are foundational because AI cannot reason correctly if it does not know what real-world entity it is talking about.

In the Representation Economy, entity resolution is not a back-office data hygiene activity. It becomes strategic infrastructure.

If the AI system confuses two customers, two suppliers, two machines, two contracts, or two locations, the downstream decision may be completely wrong.

Identity graphs solve part of the problem:

What is this thing?

But they do not solve:

What is its current state?

How fresh is that state?

Which signals conflict?

What context changes the meaning?

Is the representation complete enough for action?

Identity graphs are necessary, but they are not sufficient.

Knowledge Graphs

Knowledge graphs structure facts, concepts, and relationships.

They help AI understand that:

- a supplier provides a component

- a component is used in a product

- a product is promised to a customer

- a customer contract has an SLA

- an SLA breach may trigger a penalty or an escalation

Knowledge graphs are powerful because they convert isolated data into connected meaning. They help systems move from documents and tables to relationships and semantics.

Knowledge graphs are increasingly discussed as a foundation for enterprise AI and agentic systems because they provide structured context that models alone often lack. Recent industry analysis frames knowledge graphs as a way to provide context, structure, explainability, memory, and reasoning support for AI agents. (Tredence)

But knowledge graphs have limits.

They often represent relatively stable facts and relationships. Real institutions need to represent changing reality.

A knowledge graph may know that a supplier provides a component.

But does it know that the supplier’s plant is currently disrupted?

Does it know that the shipment is delayed?

Does it know that the substitute supplier has failed quality inspection?

Does it know that the customer contract creates a penalty exposure?

Does it know whether these signals are fresh, stale, conflicting, or incomplete?

Knowledge graphs are excellent at representing relationships.

They are not, by themselves, a full runtime for living reality.

Context Graphs

Context graphs extend the idea further.

They map relationships, dependencies, constraints, histories, and decision contexts. They are especially important for AI agents because agents need more than isolated facts. They need situational awareness.

A context graph may connect:

- customer

- order

- contract

- inventory

- shipment

- risk score

- policy

- prior incidents

- SLA obligations

- escalation paths

This helps AI understand not just the entity, but the surrounding meaning.

This aligns strongly with SENSE.

But context graphs are also not enough.

They help answer:

What relationships matter?

But they do not automatically answer:

Which representation is valid now?

Which signal should override another?

What is missing?

How uncertain is the current state?

Is this context action-ready?

That is why SENSE needs a runtime system above graphs.

Digital Twins

Digital twins create virtual representations of physical or operational systems. They are widely used in manufacturing, industrial operations, logistics, infrastructure, healthcare, and engineering.

Digital twins are closer to the SENSE runtime idea because they mirror state, ingest telemetry, and sometimes simulate outcomes. Research and implementation work increasingly connects digital twins with knowledge graphs, semantic modeling, and enterprise architecture patterns. (Ajith Vallath Prabhakar)

Digital twins are powerful where the world can be instrumented.

A factory machine can have sensors.

A warehouse can have inventory feeds.

A vehicle can have GPS telemetry.

A building can have energy data.

A production line can have operational metrics.

But the Representation Economy is broader than physical assets.

Institutions must represent:

- customers

- contracts

- obligations

- trust

- risk

- rights

- consent

- commitments

- dependencies

- exceptions

- authority

- social and economic states

These are not always sensor-readable.

They are partly legal, semantic, institutional, relational, and contextual.

Digital twins are an important cousin of Representation Runtime, but they do not fully solve enterprise-wide SENSE.

Event Streaming and Complex Event Processing

Event systems ingest and process signals in real time.

They can detect patterns such as:

- payment failed

- shipment delayed

- customer complained

- API call failed

- fraud threshold crossed

- machine temperature exceeded limit

These systems are crucial because reality changes through events.

But event systems typically process signals. They do not necessarily maintain a rich, semantic, governed representation of reality.

An event stream may say:

“Shipment delayed.”

A Representation Runtime must ask:

- Which shipment?

- Which customer?

- Which contract?

- Which obligation?

- Which dependency?

- Which penalty?

- Which confidence level?

- Which source?

- Which update overrides prior state?

- Is this delay material enough for action?

- Is human verification required?

Event processing is part of SENSE.

It is not the whole of SENSE.

Master Data Management

Master data management creates golden records for important business entities such as customers, suppliers, products, accounts, and locations.

This is essential.

But MDM was designed mainly for data consistency and operational accuracy. The AI economy requires something more dynamic.

AI does not only need clean master data.

It needs live representational truth.

A golden customer record may be accurate at rest. But an AI agent acting right now may need to know:

- Is the customer currently under dispute?

- Has consent changed?

- Is there a recent complaint?

- Is there a regulatory hold?

- Is there an unresolved exception?

- Has risk status changed since the last data refresh?

MDM gives institutional memory.

SENSE Runtime requires institutional perception.

What Is Really Required in the SENSE Layer

The missing SENSE category is a Representation Runtime.

This runtime should not merely store facts. It should continuously operate on reality.

It should perform seven core functions.

Signal-to-Entity Binding

Every signal must be attached to the right entity.

A payment failure, customer email, IoT alert, shipment scan, legal notice, policy update, or service ticket must be connected to the correct entity.

This requires:

- entity resolution

- identity graphs

- alias handling

- duplicate detection

- source credibility assessment

- signal provenance

Without signal-to-entity binding, AI may reason correctly about the wrong thing.

That is one of the most dangerous failures in enterprise AI.
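
A minimal sketch of the binding step, assuming a simple name-normalization approach. The alias table, the `Signal` fields, and the `registry` lookup are hypothetical and only illustrate how "ABC Ltd." and "ABC Limited" can resolve to one entity while the signal keeps its provenance.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical alias table; a real system would use a maintained reference list.
SUFFIX_ALIASES = {"ltd": "limited", "inc": "incorporated", "corp": "corporation"}

def normalize_name(name: str) -> str:
    """Lowercase, drop punctuation, and expand common legal-suffix aliases."""
    tokens = re.sub(r"[^a-z0-9 ]", "", name.lower()).split()
    return " ".join(SUFFIX_ALIASES.get(token, token) for token in tokens)

@dataclass
class Signal:
    source: str       # provenance: which system emitted the signal
    entity_name: str  # the name as it appears in that source
    payload: str

def bind_signal(signal: Signal, registry: dict) -> Optional[str]:
    """Return the entity ID the signal refers to, or None if it cannot be bound."""
    return registry.get(normalize_name(signal.entity_name))

registry = {normalize_name("ABC Limited"): "supplier-001"}
signal = Signal(source="erp", entity_name="ABC Ltd.", payload="payment failed")
entity_id = bind_signal(signal, registry)
```

Returning `None` for an unbound signal, rather than guessing, keeps the failure visible to whatever sits downstream.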

State Representation

The runtime must maintain the current state of an entity.

Not just facts.

State.

For example, a supplier may be:

- active

- under review

- temporarily disrupted

- contractually non-compliant

- operationally available but financially risky

- approved for one product line but not another

A customer may be:

- high value

- at churn risk

- under dispute

- eligible for an offer

- temporarily blocked due to compliance review

A machine may be:

- operating normally

- degrading

- under maintenance

- unsafe for autonomous action

AI cannot act on data alone.

It needs state.
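
One way to make the distinction between facts and state concrete is to model state as an explicit, typed record. The sketch below is illustrative only: `SupplierStatus`, `EntityState`, and the product-line scoping are assumed names, loosely based on the supplier examples above.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum

class SupplierStatus(Enum):
    ACTIVE = "active"
    UNDER_REVIEW = "under review"
    TEMPORARILY_DISRUPTED = "temporarily disrupted"
    NON_COMPLIANT = "contractually non-compliant"

@dataclass
class EntityState:
    entity_id: str
    status: SupplierStatus
    observed_at: datetime  # when this state was last observed
    source: str            # which system asserted it
    # State can be scoped, e.g. approved for one product line but not another.
    approved_product_lines: set = field(default_factory=set)

    def is_approved_for(self, product_line: str) -> bool:
        return (self.status is SupplierStatus.ACTIVE
                and product_line in self.approved_product_lines)

supplier = EntityState(
    entity_id="supplier-001",
    status=SupplierStatus.ACTIVE,
    observed_at=datetime(2025, 1, 2, tzinfo=timezone.utc),
    source="procurement",
    approved_product_lines={"brake-pads"},
)
```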

Temporal Validity and Freshness

Reality decays.

A fact that was true yesterday may be dangerous today.

The Representation Runtime must know:

- when the signal was observed

- when it was last verified

- how long it remains valid

- whether the source is delayed

- whether the state has drifted

- whether re-verification is required

This is especially important for agentic AI.

An AI agent may retrieve an old policy, stale inventory value, outdated customer status, expired approval, or obsolete contract clause.

The output may sound intelligent.

But the representation is stale.

Freshness is not a metadata detail. It is a condition for trustworthy action.
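
A freshness check can be sketched in a few lines, assuming each observation carries its own validity window. The `Observation` type and the chosen windows are hypothetical; real validity periods would depend on the signal and the source.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass
class Observation:
    value: str
    observed_at: datetime
    valid_for: timedelta  # assumed per-signal validity window

    def is_fresh(self, now: datetime) -> bool:
        """True while the observation is still inside its validity window."""
        return now - self.observed_at <= self.valid_for

now = datetime(2025, 1, 2, 12, 0, tzinfo=timezone.utc)
inventory = Observation(
    "42 units", datetime(2025, 1, 2, 11, 30, tzinfo=timezone.utc), timedelta(hours=1)
)
approval = Observation(
    "approved", datetime(2024, 12, 1, tzinfo=timezone.utc), timedelta(days=7)
)
```

Here the inventory reading is 30 minutes old and still fresh, while the approval expired weeks ago and would need re-verification before any action relies on it.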

Contradiction Resolution

Real institutions contain conflicting signals.

The CRM says one thing.

The ERP says another.

The contract says a third.

The customer email says something else.

The field team knows what no system has recorded.

A Representation Runtime must manage contradictions as first-class objects.

It must ask:

- Which sources disagree?

- Which source is authoritative?

- Is this a timing issue?

- Is this a semantic mismatch?

- Is manual review required?

- Can the system proceed with a lower-confidence action?

- Should action be blocked?

Today, many systems silently collapse contradiction into whichever record is retrieved first or ranked highest.

That is not representation.

That is accidental reality selection.
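
Treating contradictions as first-class objects could look like the following sketch. `Claim` and `Contradiction` are assumed types; the point is that disagreement is surfaced as its own record instead of being resolved silently by retrieval order.

```python
from dataclasses import dataclass

@dataclass
class Claim:
    source: str     # e.g. "crm", "erp", "contract"
    attribute: str  # what the claim is about
    value: str

@dataclass
class Contradiction:
    attribute: str
    claims: list    # every claim about this attribute, kept for review

def find_contradictions(claims: list) -> list:
    """Group claims by attribute and report attributes with more than one distinct value."""
    by_attribute = {}
    for claim in claims:
        by_attribute.setdefault(claim.attribute, []).append(claim)
    return [
        Contradiction(attribute, group)
        for attribute, group in by_attribute.items()
        if len({claim.value for claim in group}) > 1
    ]

claims = [
    Claim("crm", "billing_address", "12 High St"),
    Claim("erp", "billing_address", "98 Low Rd"),
    Claim("crm", "status", "active"),
    Claim("erp", "status", "active"),
]
conflicts = find_contradictions(claims)
```

Deciding which source is authoritative, or whether to block the action, is deliberately left out; this sketch only makes the conflict explicit.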

Context and Dependency Mapping

Entities do not exist alone.

A delayed component may affect a product.

A product may affect a customer promise.

A customer promise may affect an SLA.

An SLA may affect revenue, penalty, reputation, and escalation.

A Representation Runtime must map dependencies dynamically.

This is where context graphs become essential.

But the runtime must do more than store context. It must update context, evaluate impact, and expose action-relevant meaning to CORE.

The real question is not:

What data do we have?

The real question is:

What does this change mean for the institution now?
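
The component-to-SLA chain above can be sketched as a graph walk. The `AFFECTS` edges and node labels are hypothetical; the traversal is a plain breadth-first search that returns everything downstream of a changed entity.

```python
from collections import deque

# Hypothetical dependency edges, read as "key affects each listed value".
AFFECTS = {
    "component:C1": ["product:P1"],
    "product:P1": ["promise:ORD-9"],
    "promise:ORD-9": ["sla:SLA-3"],
    "sla:SLA-3": [],
}

def downstream_impact(start: str, affects: dict) -> list:
    """Breadth-first walk returning every node reachable from `start`, nearest first."""
    seen, order, queue = {start}, [], deque([start])
    while queue:
        node = queue.popleft()
        for successor in affects.get(node, []):
            if successor not in seen:
                seen.add(successor)
                order.append(successor)
                queue.append(successor)
    return order

impacted = downstream_impact("component:C1", AFFECTS)
```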

Representation Quality Scoring

Before AI reasons or acts, the system must determine whether the representation is good enough.

A Representation Runtime should produce a representation quality score based on factors such as:

- entity confidence

- state completeness

- signal freshness

- provenance strength

- source consistency

- context coverage

- action readiness

This is one of the most important ideas in SENSE.

Not every representation should be treated equally.

Some representations are good enough for summarization.

Some are good enough for recommendation.

Some are good enough for low-risk action.

Some require human verification.

Some are too weak for any decision.

This creates the bridge between SENSE and CORE.
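
A representation quality score could be sketched as a weighted average mapped to readiness tiers. The weights, the cut-offs, and the `Readiness` names below are illustrative assumptions, not calibrated values, and only a subset of the factors listed above is included.

```python
from enum import IntEnum

class Readiness(IntEnum):
    NO_DECISION = 0
    SUMMARIZATION = 1
    RECOMMENDATION = 2
    LOW_RISK_ACTION = 3

# Illustrative weights only; each factor is expected in the range 0.0 to 1.0.
WEIGHTS = {
    "entity_confidence": 0.30,
    "state_completeness": 0.20,
    "signal_freshness": 0.20,
    "provenance_strength": 0.15,
    "source_consistency": 0.15,
}

def quality_score(factors: dict) -> float:
    """Weighted sum of factor scores; missing factors count as zero."""
    return sum(weight * factors.get(name, 0.0) for name, weight in WEIGHTS.items())

def readiness(score: float) -> Readiness:
    """Map a score to the strongest use it supports (assumed cut-offs)."""
    if score >= 0.9:
        return Readiness.LOW_RISK_ACTION
    if score >= 0.7:
        return Readiness.RECOMMENDATION
    if score >= 0.4:
        return Readiness.SUMMARIZATION
    return Readiness.NO_DECISION

score = quality_score({name: 0.8 for name in WEIGHTS})
tier = readiness(score)
```

Counting a missing factor as zero is a conservative design choice: an unknown freshness or provenance lowers the tier instead of being ignored.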

Unrepresentability Detection

The hardest SENSE problem is not representing what the institution already knows.

It is detecting what the institution cannot represent.

The system must ask:

- What important concept is missing?

- What entity is not visible?

- What dependency is not modeled?

- What signal is absent?

- What state cannot be inferred?

- What assumption is being made silently?

This is the frontier problem.

Many AI failures occur because the system lacks a concept needed to understand the situation.

The AI does not know that it does not know.

A mature Representation Runtime must raise alerts not only when data is wrong, but when the representational frame itself is incomplete.

That is the difference between data quality and reality quality.
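
The simplest slice of this problem can be sketched as a gap check: compare what an action needs represented against what is actually represented. The `REQUIRED_CONCEPTS` table and concept names are hypothetical, and this covers only declared requirements; it does not discover concepts that nobody thought to declare, which is the harder part.

```python
# Hypothetical: concepts that must be represented before an action is considered.
REQUIRED_CONCEPTS = {
    "approve_refund": {"customer_identity", "transaction", "return_status", "fraud_signal"},
}

def representation_gaps(action: str, represented: set) -> set:
    """Return the declared concepts that are missing from the current representation."""
    return REQUIRED_CONCEPTS.get(action, set()) - represented

gaps = representation_gaps("approve_refund", {"customer_identity", "transaction"})
```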

The SENSE Conclusion

Today, enterprises have many SENSE components:

- identity graphs

- knowledge graphs

- context graphs

- digital twins

- event streams

- MDM systems

- data platforms

- feature stores

- observability pipelines

But they do not yet have a widely recognized, integrated runtime category that continuously turns these components into a trusted, current, action-ready representation of reality.

That missing category is the Representation Runtime.

Its job is simple to state and hard to engineer:

Maintain a live, governed, machine-legible model of reality before AI reasons.

Without this, CORE reasons over incomplete, stale, contradictory, or misidentified reality.

And when CORE reasons over broken representation, intelligence becomes dangerous.

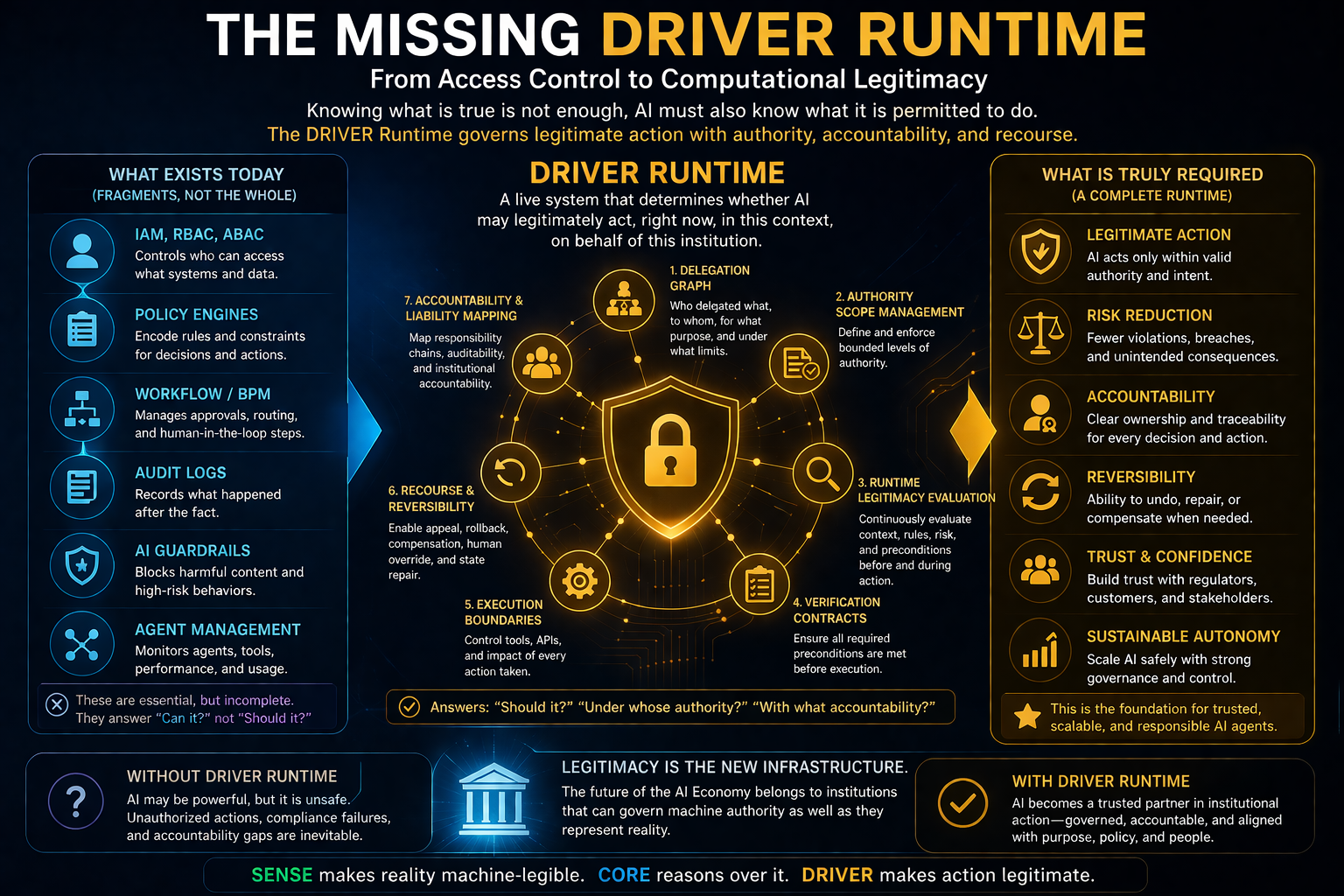

Part II: The Missing DRIVER Runtime

From Access Control to Computational Legitimacy

If SENSE asks, “What is true?”, DRIVER asks, “What is permitted?”

This distinction is critical.

Even if the AI has a correct representation of reality, it does not automatically have the right to act.

A system may know that a customer is eligible for a refund.

But is the AI allowed to approve it?

Up to what amount?

Under whose authority?

With what evidence?

With what audit trail?

With what appeal path?

With what liability?

Can the decision be reversed?

Does the customer have recourse?

Does regulation require human review?

These questions belong to DRIVER.

DRIVER is the governance and legitimacy layer of the AI economy.

It includes:

- delegation

- representation

- identity

- verification

- execution

- recourse

Today, enterprises have many controls. But most controls were designed for humans, software systems, APIs, and workflows — not autonomous machine actors that reason, plan, invoke tools, and operate across systems.

That is why DRIVER needs its own missing runtime.

This missing category can be called a Legitimacy Runtime, a Delegation Runtime, or an Authority Runtime.

The name may evolve, but the function is clear:

Determine whether an AI system may legitimately act right now, in this context, on behalf of this institution, within a bounded scope of authority, with verification, accountability, and recourse.

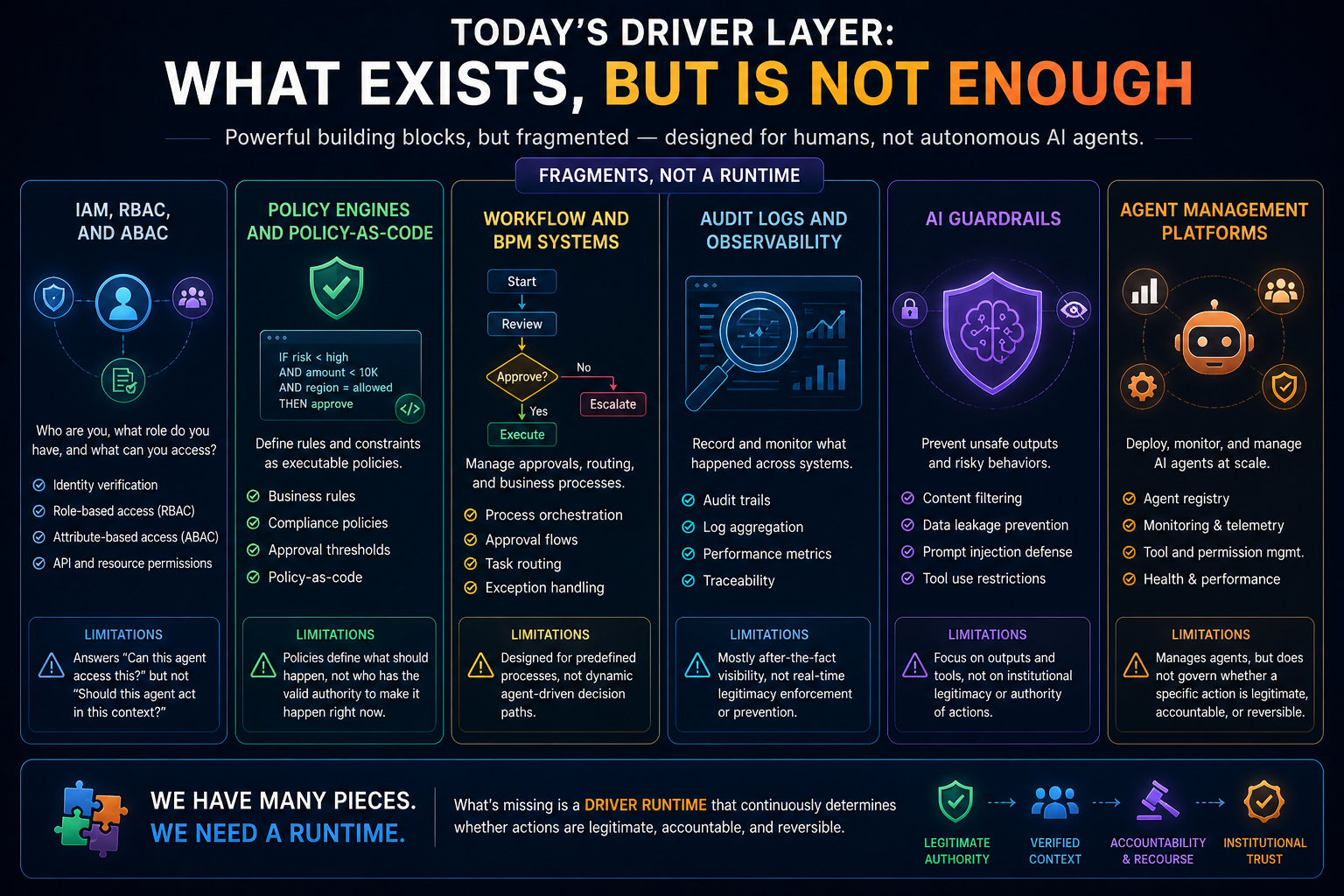

What Exists Today in the DRIVER Layer

Several technologies address fragments of DRIVER.

But none fully solves machine legitimacy.

IAM, RBAC, and ABAC

Identity and access management systems answer:

- Who are you?

- What role do you have?

- Which system can you access?

- Which API can you call?

- Which resource can you read or modify?

Role-based access control and attribute-based access control are essential.

But they are not enough for agentic AI.

A human employee logging into a system is not the same as an AI agent autonomously planning and executing actions across systems.

IAM may answer:

Can this agent call this API?

DRIVER must answer:

Should this agent be allowed to make this decision, under this delegation, in this context, with these consequences?

That is a deeper question.

Microsoft’s work on runtime authorization beyond identity is a useful signal because it explicitly recognizes that identity alone is not sufficient for autonomous AI agents. (TECHCOMMUNITY.MICROSOFT.COM)

But the full DRIVER problem is broader than authorization.

It includes legitimacy, accountability, recourse, reversibility, and institutional authority.

Policy Engines and Policy-as-Code

Policy engines convert rules into machine-executable logic.

They can define:

- allowed actions

- blocked actions

- approval thresholds

- compliance rules

- operational constraints

Policy-as-code is becoming important for agentic AI. Kyndryl, for example, has positioned policy-as-code as a way to translate organizational policies, regulations, and controls into machine-readable rules for AI agents. (Open Decision Intelligence Platform)

This is valuable.

But policy-as-code is still not the full DRIVER layer.

Policies say what should happen under defined conditions.

DRIVER must also determine:

- who delegated authority

- whether delegation is still valid

- whether the actor is legitimate

- whether context changes the scope

- whether preconditions are verified

- whether the action is reversible

- whether recourse exists

- who is accountable

Policy is necessary.

Legitimacy is larger than policy.

Workflow and BPM Systems

Workflow engines manage approvals, routing, and business processes.

They answer:

- Who approves this?

- What is the next step?

- Which task goes where?

- What exception path applies?

These systems are useful for deterministic processes.

But agentic AI creates dynamic processes.

An AI agent may plan its own path, choose tools, call other agents, gather evidence, interpret documents, and propose actions that were not pre-modeled as a rigid workflow.

Traditional workflow systems assume the path is mostly known.

DRIVER must govern action even when the path is dynamically generated.

That requires a runtime authority model.

Audit Logs and Observability

Audit logs record what happened.

Observability systems track behavior, performance, errors, latency, cost, and sometimes reasoning traces.

This is essential.

But audit is often after the fact.

DRIVER must operate before and during action.

It must answer:

Was this action legitimate before it happened?

Did the system remain within authority while acting?

Can we stop, reverse, or escalate if legitimacy changes?

Logs are memory.

DRIVER needs judgment.

AI Guardrails

Guardrails help prevent unsafe outputs and risky behavior.

They may block:

- harmful content

- data leakage

- prompt injection

- policy violations

- unauthorized tool use

- unsafe responses

Guardrails are important.

But many guardrails focus on content or tool boundaries.

DRIVER is not only about preventing bad output.

It is about governing legitimate institutional action.

A guardrail may stop an agent from revealing confidential data.

But can it determine whether the agent has legitimate authority to deny a claim, approve a refund, negotiate a contract, reassign work, or trigger a penalty?

That is a different class of problem.

Agent Management Platforms

A new category of agent management platforms is emerging. These platforms help enterprises deploy, monitor, register, govern, and manage AI agents at scale. Microsoft’s Agent 365 announcement, for example, reflects the move toward centralized visibility, telemetry, and permissions for enterprise agents. (The Verge)

This is an important step because enterprises need to manage agent sprawl.

But agent management is not the same as a Legitimacy Runtime.

Managing agents means knowing what agents exist, how they perform, what tools they use, and how they are monitored.

Governing legitimacy means determining whether a specific action is institutionally authorized, contextually valid, accountable, reversible, and open to recourse.

Agent management is a container.

DRIVER is the constitutional layer.

What Is Really Required in the DRIVER Layer

A true DRIVER runtime must include seven capabilities.

Delegation Graph

The system must represent delegated authority as a graph.

It must know:

- who delegated authority

- to whom

- for what purpose

- under what constraints

- through which chain

- for what duration

- with what revocation conditions

This is not merely access control.

Access says:

This agent can call this API.

Delegation says:

This agent may act on behalf of this institution for this specific class of decisions within this scope, under these preconditions, with these consequences, and subject to these escalation rules.

That is a much richer structure.

The future enterprise will need delegation graphs the way today’s enterprise needs identity graphs.

Without delegation graphs, authority will leak through agent chains.
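
A delegation graph can be sketched as a set of grantor-to-grantee links that must all hold for an action to trace back to the institution. The `Delegation` fields and `chain_to_root` are assumed names, and the sketch simplifies by following the first valid link per grantee.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

@dataclass(frozen=True)
class Delegation:
    grantor: str
    grantee: str
    scope: frozenset      # decision classes this link covers
    expires_at: datetime
    revoked: bool = False

def chain_to_root(agent: str, decision: str, delegations: list,
                  root: str, now: datetime) -> Optional[list]:
    """Walk grantor links from `agent` up to `root`.

    Returns the chain if every link is unexpired, unrevoked, and covers
    `decision`; returns None if the chain is broken or loops.
    """
    chain, current, visited = [], agent, set()
    while current != root:
        if current in visited:
            return None
        visited.add(current)
        link = next(
            (d for d in delegations
             if d.grantee == current and decision in d.scope
             and not d.revoked and d.expires_at > now),
            None,
        )
        if link is None:
            return None
        chain.append(link)
        current = link.grantor
    return chain

now = datetime(2025, 1, 2, tzinfo=timezone.utc)
later = datetime(2025, 12, 31, tzinfo=timezone.utc)
delegations = [
    Delegation("institution", "claims-manager", frozenset({"refund", "claim"}), later),
    Delegation("claims-manager", "refund-agent", frozenset({"refund"}), later),
]
chain = chain_to_root("refund-agent", "refund", delegations, "institution", now)
```

Because scope is checked on every link, the agent cannot inherit "claim" authority that the manager holds but never passed on, which is one way authority leaks through agent chains.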

Authority Scope Management

Authority must be bounded.

An AI agent may be allowed to:

- summarize a case

- recommend an action

- draft a response

- initiate a low-risk workflow

- execute a reversible action

- escalate a high-risk case

But these are different authority levels.

A mature DRIVER runtime must distinguish between:

- informational authority

- advisory authority

- preparatory authority

- execution authority

- irreversible authority

- exceptional authority

Today, many systems blur these boundaries.

That is dangerous.

The moment AI moves from advice to action, authority must become explicit.
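
The authority levels above can be made explicit in code. The sketch below assumes the levels form a simple ordering, which is a simplification, and the action-to-level mapping in `REQUIRED_LEVEL` is purely illustrative.

```python
from enum import IntEnum

class Authority(IntEnum):
    INFORMATIONAL = 1
    ADVISORY = 2
    PREPARATORY = 3
    EXECUTION = 4
    IRREVERSIBLE = 5
    EXCEPTIONAL = 6

# Hypothetical mapping from action type to the minimum authority it needs.
REQUIRED_LEVEL = {
    "summarize_case": Authority.INFORMATIONAL,
    "recommend_action": Authority.ADVISORY,
    "draft_response": Authority.PREPARATORY,
    "execute_reversible_action": Authority.EXECUTION,
}

def within_authority(granted: Authority, action: str) -> bool:
    """Unknown actions are refused rather than assumed safe."""
    required = REQUIRED_LEVEL.get(action)
    return required is not None and granted >= required

agent_authority = Authority.ADVISORY
```

With `ADVISORY` authority, the agent may summarize and recommend, but drafting and execution are refused until a higher level is explicitly granted.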

Runtime Legitimacy Evaluation

Legitimacy is not static.

An action that was valid yesterday may be invalid today.

Why?

Because:

- consent changed

- policy changed

- contract expired

- risk increased

- jurisdiction changed

- authority was revoked

- new evidence arrived

- representation quality dropped

- human approval became mandatory

Therefore, DRIVER cannot be only a static rulebook.

It must be a runtime evaluator.

Before action, it must ask:

- Is the actor legitimate?

- Is the delegation valid?

- Is the represented reality reliable?

- Is the intended action within scope?

- Are preconditions satisfied?

- Is the impact level acceptable?

- Is human review needed?

- Is recourse available?

During action, it must ask:

- Has context changed?

- Has authority expired?

- Has risk crossed a threshold?

- Should execution pause?

- Should escalation trigger?

This is computational legitimacy.
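
The pre-action questions above can be sketched as a single evaluator. `ActionRequest`, `Verdict`, and the 0.7 representation threshold are assumptions for illustration; a real evaluator would compute these inputs from live systems and re-run the checks during execution.

```python
from dataclasses import dataclass
from enum import Enum

class Verdict(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"
    DENY = "deny"

@dataclass
class ActionRequest:
    actor_legitimate: bool
    delegation_valid: bool
    representation_score: float  # assumed to come from the SENSE layer
    within_scope: bool
    preconditions_met: bool
    high_impact: bool
    recourse_available: bool

def evaluate(request: ActionRequest, min_score: float = 0.7):
    """Return (verdict, reasons). Hard failures deny; softer concerns escalate."""
    hard_checks = {
        "actor not legitimate": request.actor_legitimate,
        "delegation not valid": request.delegation_valid,
        "action out of scope": request.within_scope,
        "preconditions not satisfied": request.preconditions_met,
        "representation too weak": request.representation_score >= min_score,
    }
    failures = [reason for reason, passed in hard_checks.items() if not passed]
    if failures:
        return Verdict.DENY, failures
    concerns = []
    if request.high_impact:
        concerns.append("high impact requires human review")
    if not request.recourse_available:
        concerns.append("no recourse path")
    if concerns:
        return Verdict.ESCALATE, concerns
    return Verdict.ALLOW, []

routine = ActionRequest(True, True, 0.9, True, True, False, True)
verdict, reasons = evaluate(routine)
```

Returning the reasons alongside the verdict means a denial or escalation can be explained and audited, not just enforced.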

Verification Contract Layer

Every significant AI action should be tied to a verification contract.

A verification contract defines what must be true before action is allowed.

For example, before an AI agent approves a vendor payment, the contract may require:

- supplier identity verified

- purchase order matched

- goods receipt confirmed

- invoice amount within tolerance

- no compliance hold

- authority threshold satisfied

- audit trail recorded

- exception path available

The AI may reason.

But DRIVER must verify preconditions.

Without verification contracts, enterprises will rely on agent confidence.

That is not enough.

Confidence is not legitimacy.
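
A verification contract for the vendor-payment example might be sketched as a set of named checks over verified facts. The fact keys (`supplier_verified`, `po_matched`, and so on) are hypothetical, and only some of the listed preconditions are shown.

```python
# Each precondition is a named predicate over a dictionary of verified facts.
# Missing facts fail the check instead of passing by default.
VENDOR_PAYMENT_CONTRACT = {
    "supplier identity verified": lambda f: f.get("supplier_verified") is True,
    "purchase order matched": lambda f: f.get("po_matched") is True,
    "goods receipt confirmed": lambda f: f.get("goods_received") is True,
    "invoice amount within tolerance": lambda f: (
        abs(f.get("invoice_amount", float("inf")) - f.get("po_amount", 0.0))
        <= f.get("tolerance", 0.0)
    ),
    "no compliance hold": lambda f: f.get("compliance_hold") is False,
}

def unmet_preconditions(contract: dict, facts: dict) -> list:
    """Return the names of every precondition that does not hold."""
    return [name for name, check in contract.items() if not check(facts)]

facts = {
    "supplier_verified": True,
    "po_matched": True,
    "goods_received": True,
    "invoice_amount": 1010.0,
    "po_amount": 1000.0,
    "tolerance": 25.0,
    "compliance_hold": False,
}
unmet = unmet_preconditions(VENDOR_PAYMENT_CONTRACT, facts)
```

Nothing in the contract consults the agent's own confidence; the action proceeds only when the list of unmet preconditions is empty.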

Execution Boundary and Tool Control

AI agents act through tools.

They call APIs, update systems, trigger workflows, send messages, create tickets, change records, and execute transactions.

DRIVER must govern these tool calls.

Not only at the API level, but at the institutional-action level.

The question is not only:

Can this tool be called?

The question is:

What institutional consequence does this tool call create?

For example:

- sending a reminder is low impact

- sending a legal notice is high impact

- updating a customer address is moderate impact

- blocking an account may be high impact

- approving a claim may create financial liability

- rejecting a request may create legal exposure

The same tool may have different legitimacy requirements depending on context.

DRIVER must understand action semantics.
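
The idea that the same tool carries different institutional weight depending on its arguments can be sketched as an impact classifier. The tool names, argument keys, and impact labels are hypothetical and mirror the examples above.

```python
def classify_impact(tool: str, args: dict) -> str:
    """Classify a tool call by its institutional consequence, not by the tool alone."""
    if tool == "send_message":
        # A reminder and a legal notice use the same tool with different consequences.
        return "high" if args.get("template") == "legal_notice" else "low"
    if tool == "update_record":
        # Changing an address is moderate; blocking an account is high impact.
        return "high" if args.get("field") == "account_status" else "moderate"
    # Unknown tools default to the strictest treatment.
    return "high"

reminder = classify_impact("send_message", {"template": "payment_reminder"})
notice = classify_impact("send_message", {"template": "legal_notice"})
```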

Recourse and Reversibility Runtime

This is one of the most underdeveloped areas in AI governance.

If AI acts wrongly, what happens next?

A DRIVER runtime must support:

- appeal

- correction

- rollback

- compensation

- escalation

- explanation

- dispute handling

- human override

- state repair

Recourse cannot be an afterthought.

It must be designed into the action architecture.

Some actions are reversible.

Some are partially reversible.

Some are irreversible.

Some create downstream consequences that must be unwound.

This is especially important because AI actions may trigger chains.

An agent may update a record, which triggers a workflow, which notifies a customer, which affects a contract, which changes financial exposure.

Undoing the first action may not undo the consequences.

DRIVER must include decision unwinding, not merely data correction.
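
Decision unwinding can be sketched with a compensation log, a pattern borrowed from saga-style transaction handling rather than anything the framework itself prescribes. `Step` and `ActionLog` are assumed names. Each step registers how to undo itself, and steps with no compensation are flagged for human follow-up.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

@dataclass
class Step:
    name: str
    compensate: Optional[Callable[[], None]]  # None marks an irreversible step

@dataclass
class ActionLog:
    steps: list = field(default_factory=list)

    def record(self, step: Step) -> None:
        self.steps.append(step)

    def unwind(self) -> list:
        """Compensate steps in reverse order; return names that need human follow-up."""
        needs_human = []
        for step in reversed(self.steps):
            if step.compensate is None:
                needs_human.append(step.name)
            else:
                step.compensate()
        return needs_human

customer = {"address": "old"}
log = ActionLog()

customer["address"] = "new"
log.record(Step("update_record", lambda: customer.update(address="old")))
log.record(Step("notify_customer", None))  # a sent message cannot be unsent

follow_up = log.unwind()
```

After unwinding, the record is restored, but the notification remains on the follow-up list: undoing the data did not undo the consequence.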

Accountability and Liability Mapping

When AI acts, who is accountable?

The developer?

The model provider?

The business owner?

The process owner?

The approving manager?

The institution?

The vendor?

The agent operator?

The governance committee?

DRIVER must maintain accountability chains.

This is not only a legal concern. It is an operating requirement.

A machine action without accountability is not legitimate institutional action.

It is uncontrolled execution.

The future of enterprise AI depends on making accountability machine-legible.

The DRIVER Conclusion

Today, enterprises have many DRIVER fragments:

- IAM

- RBAC

- ABAC

- policy engines

- workflow systems

- audit logs

- observability tools

- guardrails

- agent management platforms

- compliance systems

But they do not yet have a mature, integrated runtime category that governs machine authority as a live institutional system.

That missing category is the Legitimacy Runtime.

Its job is simple to state and hard to engineer:

Govern whether AI may legitimately act before and during execution.

Without this, CORE may become powerful but institutionally unsafe.

The Bridge Between SENSE and DRIVER

The most important insight is that SENSE and DRIVER cannot be separated.

DRIVER depends on SENSE.

A machine cannot legitimately act if the representation of reality is weak.

For example, an AI agent may be authorized to approve a refund only if:

- the customer identity is confirmed

- the transaction is valid

- the complaint is within policy

- the refund amount is within threshold

- no fraud signal exists

- the product was actually returned

These are SENSE conditions.

If SENSE is wrong, DRIVER will authorize the wrong action.

Similarly, SENSE depends on DRIVER.

Not every signal should be visible.

Not every entity should be represented.

Not every context should be used.

Not every actor should define reality.

DRIVER governs what can be seen, known, represented, delegated, and acted upon.

This is why the Representation Economy needs both missing runtimes.

The Representation Runtime maintains reality.

The Legitimacy Runtime governs action.

CORE sits between them.

CORE reasons.

But CORE should not be treated as the whole AI system.

It is the cognition layer inside a larger institutional architecture.

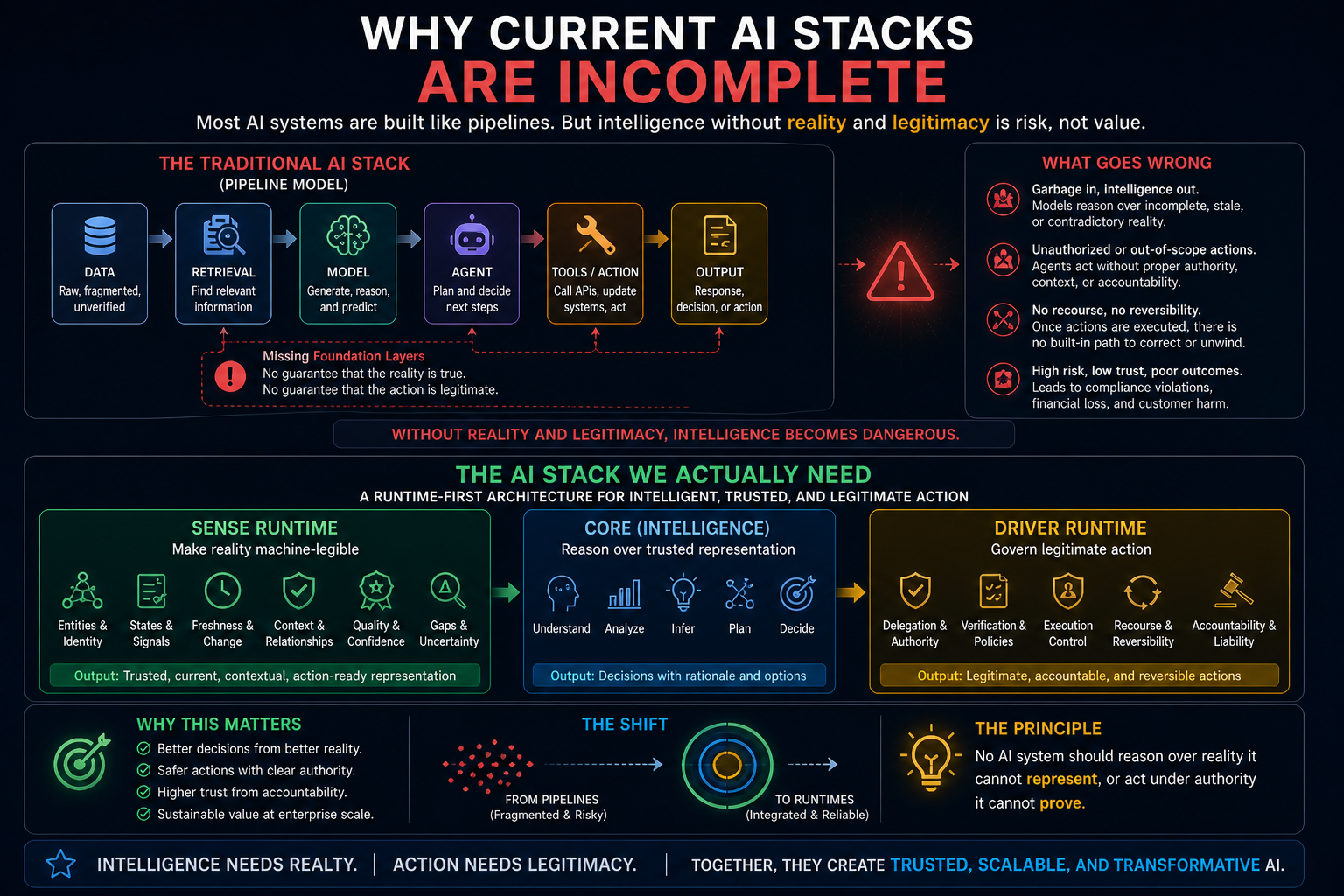

Why Current AI Stacks Are Incomplete

Most enterprise AI stacks look like this:

data → retrieval → model → agent → tool → output

This is not enough.

The Representation Economy requires a different architecture:

SENSE Runtime → CORE Reasoning → DRIVER Runtime → Governed Execution

This shift is profound.

It means AI systems should not begin with prompts.

They should begin with representation.

And they should not end with tool calls.

They should end with legitimate, accountable action.

That is the difference between AI automation and intelligent institutions.
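A minimal sketch of the SENSE Runtime → CORE Reasoning → DRIVER Runtime → Governed Execution flow follows. The function `run_governed`, the `Outcome` type, and the four callables are assumptions made for illustration, not a reference implementation. The point is the ordering: reasoning never starts without a usable representation, and execution never starts without authorization.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Outcome:
    stage: str      # stage at which the pipeline stopped
    executed: bool  # True only if the action was actually carried out
    detail: str


def run_governed(
    sense: Callable[[], Optional[dict]],
    core: Callable[[dict], str],
    driver: Callable[[str, dict], bool],
    execute: Callable[[str], str],
) -> Outcome:
    """Run one request through SENSE, CORE, and DRIVER before executing."""
    state = sense()  # SENSE returns None when the representation is not usable
    if state is None:
        return Outcome("SENSE", False, "representation not ready")
    proposal = core(state)  # CORE proposes an action; it does not act
    if not driver(proposal, state):  # DRIVER decides whether the action may run
        return Outcome("DRIVER", False, f"not authorized: {proposal}")
    return Outcome("EXECUTION", True, execute(proposal))
```

In this sketch CORE only returns a proposal. The tool call sits behind the DRIVER check, which is the structural difference from the data → retrieval → model → agent → tool → output chain.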

A Simple Example: AI in Procurement

Imagine an AI agent that helps manage procurement.

A supplier misses a delivery date.

A basic AI system may retrieve documents, summarize the issue, and recommend escalation.

A more advanced AI agent may contact the supplier, update the ERP, notify the business team, and suggest an alternate vendor.

But a SENSE–CORE–DRIVER system would operate differently.

SENSE Runtime

It would first determine:

- Which supplier is this?

- Which contract applies?

- Which component is delayed?

- Which products depend on it?

- Which customers are affected?

- Which SLA obligations are triggered?

- Is the delay confirmed or inferred?

- Are sources consistent?

- Is the state fresh?

- Is the representation good enough for action?
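The last four questions (confirmed or inferred, consistent, fresh, good enough for action) lend themselves to a simple readiness check. The sketch below is hypothetical: the `Observation` fields, the 24-hour freshness window, and the rule that at least one source must confirm the delay are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Observation:
    source: str            # e.g. "erp" or "supplier_email" (hypothetical names)
    delayed: bool          # what this source says about the delivery
    confirmed: bool        # True if confirmed, False if inferred
    observed_at: datetime  # timezone-aware timestamp of the observation


def ready_for_action(
    observations: list[Observation],
    now: datetime,
    max_age: timedelta = timedelta(hours=24),
) -> tuple[bool, list[str]]:
    """Return (ready, issues) for the 'delivery is delayed' representation."""
    if not observations:
        return False, ["no observations"]
    issues: list[str] = []
    # Consistency: every source must agree on whether the delivery is delayed.
    if len({o.delayed for o in observations}) > 1:
        issues.append("sources disagree")
    # Confirmation: at least one source must confirm rather than infer.
    if not any(o.confirmed for o in observations):
        issues.append("delay is inferred, not confirmed")
    # Freshness: the newest observation must fall inside the window.
    if now - max(o.observed_at for o in observations) > max_age:
        issues.append("newest observation is stale")
    return (not issues, issues)
```

Passing `now` explicitly, for example `datetime.now(timezone.utc)`, keeps the check deterministic and easy to test.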

CORE

Then the reasoning system would evaluate:

- likely impact

- alternate suppliers

- cost implications

- risk scenarios

- recommended response

- escalation options

DRIVER Runtime

Before action, the system would determine:

- Is the AI allowed to contact the supplier?

- Can it update the ERP?

- Can it trigger escalation?

- Can it recommend a penalty?

- Can it invoke substitute sourcing?

- What approvals are needed?

- What actions are reversible?

- What recourse exists if the supplier disputes the representation?

This is not just automation.

This is institutional intelligence.
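The DRIVER questions in the procurement example can be sketched as a per-action policy table. Everything here is assumed for illustration: the action names, the approver roles, and the rule that an irreversible action must name an approver are hypothetical choices, not a prescribed policy.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class ActionPolicy:
    permitted: bool          # may the AI perform this action at all?
    approver: Optional[str]  # role that must approve first, if any
    reversible: bool         # can the action be undone afterwards?


# Hypothetical policy table for the procurement agent.
POLICY = {
    "contact_supplier": ActionPolicy(True, None, True),
    "update_erp": ActionPolicy(True, "procurement_manager", True),
    "invoke_substitute_sourcing": ActionPolicy(True, "category_lead", False),
    "recommend_penalty": ActionPolicy(False, None, False),
}


def decide(action: str, approvals: set[str]) -> Decision:
    """Decide whether the agent may run `action` given recorded approvals."""
    policy = POLICY.get(action)
    if policy is None or not policy.permitted:
        return Decision.DENY  # unknown or forbidden actions are denied
    if policy.approver is None and not policy.reversible:
        return Decision.DENY  # irreversible actions must name an approver
    if policy.approver is not None and policy.approver not in approvals:
        return Decision.NEEDS_APPROVAL
    return Decision.ALLOW
```

Denying unknown actions by default means a new tool added to the agent cannot act until someone writes a policy for it.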

A Second Example: AI in Customer Service

A customer asks for compensation.

A chatbot may apologize.

A copilot may suggest a response.

An agent may issue credit.

But a mature system must ask:

SENSE

- Is this the right customer?

- What product or service is involved?

- What happened?

- Is the complaint verified?

- What is the customer’s current status?

- What policies apply?

- Are there recent unresolved issues?

- Is the evidence complete?

CORE

- What is a fair resolution?

- What is the likely customer impact?

- What options exist?

- What tone and explanation are appropriate?

DRIVER

- Is AI allowed to issue compensation?

- What is the limit?

- Is human approval required?

- Can the decision be appealed?

- Who is accountable?

- Can the action be reversed?

Without SENSE, the AI may compensate the wrong case.

Without DRIVER, the AI may act beyond authority.

Without CORE, the AI cannot reason well.

All three are required.

Why This Becomes a Board-Level Issue

The two missing runtimes are not only technical infrastructure.

They are board-level control systems.

Boards and executive teams will increasingly need to know:

- What does our AI system believe about reality?

- How is that representation maintained?

- How do we know it is current?

- What happens when signals conflict?

- Which AI systems can act?

- Who delegated authority?

- What limits exist?

- Can actions be reversed?

- Who is accountable when AI acts wrongly?

- Can we prove what happened?

These questions cannot be answered by model benchmarks.

They require institutional architecture.

This is why the AI economy will reward organizations that build representation and legitimacy infrastructure before scaling autonomy.

The winners will not simply have better AI.

They will have better machine-legible reality and better machine-legible authority.

The New Categories That May Emerge

If this thesis is correct, new software categories will emerge.

In the SENSE Layer

We may see:

- Representation Runtime platforms

- Representation State Machines

- Representation Quality Engineering tools

- Reality observability systems

- Entity-state intelligence platforms

- Context infrastructure layers

- Representation forensics systems

- Representation clearinghouses

- Representation auditors

In the DRIVER Layer

We may see:

- Legitimacy Runtime platforms

- Delegation graph systems

- Authority control planes

- Machine legitimacy engines

- Recourse platforms

- Decision unwinding systems

- AI accountability ledgers

- Delegated authority rating systems

- Action verification engines

Some of these will emerge as enterprise software products.

Some will emerge as internal architectures.

Some will become standards.

Some may become regulatory requirements.

But the direction is clear.

The model layer is becoming powerful.

The missing infrastructure is around reality and legitimacy.

The Core Technical Principle

The Representation Economy rests on one architectural principle:

No AI system should reason over reality it cannot represent, or act under authority it cannot prove.

This principle changes how enterprises should design AI systems.

It means:

- before reasoning, representation must be verified

- before action, legitimacy must be verified

- before autonomy, recourse must be designed

- before scale, accountability must be machine-legible

This is the shift from AI as a tool to AI as institutional infrastructure.
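One way to make "accountability must be machine-legible" concrete is an append-only record in which each entry is chained to the previous one by a hash, so later tampering is detectable. The sketch below is an assumption-laden illustration: the entry fields (`actor`, `action`, `authority`) and the function names are hypothetical, and a production ledger would also need timestamps, signatures, and durable storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry
FIELDS = ("actor", "action", "authority", "prev")


def _digest(body: dict) -> str:
    """Hash the entry body with a stable key order."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(ledger: list[dict], actor: str, action: str, authority: str) -> dict:
    """Append a record of who acted, what was done, and under which authority."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS
    body = {"actor": actor, "action": action, "authority": authority, "prev": prev_hash}
    entry = {**body, "hash": _digest(body)}
    ledger.append(entry)
    return entry


def verify(ledger: list[dict]) -> bool:
    """Return True if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in ledger:
        body = {key: entry[key] for key in FIELDS}
        if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```

Recording the authority alongside the action is what lets a later reviewer check not only what happened but under whose delegation it happened.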

Conclusion: Intelligence Needs Reality. Action Needs Legitimacy

The AI economy will not be won by intelligence alone.

Intelligence needs reality.

And action needs legitimacy.

That is why the next enterprise AI architecture must include two missing runtime layers.

The Representation Runtime answers:

What is true enough to reason over?

The Legitimacy Runtime answers:

What is authorized enough to act upon?

Between them sits CORE, the reasoning engine.

But CORE alone is not the institution.

The intelligent institution of the future will be defined by how well it can SENSE reality, reason through CORE, and act through DRIVER.

The next AI breakthrough will not only be a model.

It will be the architecture that makes reality machine-legible and machine action legitimate.

That is the foundation of the Representation Economy.

Glossary

Representation Economy

The Representation Economy is an AI-era economic architecture in which value is created by how well institutions can make reality machine-legible, trustworthy, governable, and actionable.

SENSE–CORE–DRIVER Framework

A framework introduced by Raktim Singh to explain intelligent institutions. SENSE makes reality machine-legible, CORE reasons over that representation, and DRIVER governs legitimate action.

Representation Runtime

A missing enterprise AI runtime layer that continuously maintains a trusted, current, machine-legible model of reality before AI reasons or acts.

Legitimacy Runtime

A missing enterprise AI runtime layer that governs whether AI can act under valid authority, with verification, accountability, reversibility, and recourse.

Machine-Legible Reality

A structured, contextual, current, and trustworthy representation of the real world that AI systems can reason over.

Computational Legitimacy

The ability of a system to determine whether a machine action is authorized, accountable, reversible, and institutionally valid.

Delegation Graph

A machine-readable map of who has delegated authority to whom, for what purpose, under what conditions, and with what limits.
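As a rough illustration of this definition, a delegation graph can be stored as a list of edges and walked from the acting agent back to the original grantor. The `Delegation` fields and `effective_limit` are hypothetical, and the sketch assumes that each grantee has at most one grantor per purpose.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Delegation:
    grantor: str   # who delegates the authority
    grantee: str   # who receives it
    purpose: str   # what the authority is for
    limit: float   # maximum amount the grantee may act on


def effective_limit(
    delegations: list[Delegation], root: str, actor: str, purpose: str
) -> Optional[float]:
    """Return the tightest limit on the chain from root to actor, or None if no chain exists."""
    by_grantee = {(d.grantee, d.purpose): d for d in delegations}
    limit = float("inf")
    current = actor
    seen: set[str] = set()
    while current != root:
        if current in seen:
            return None  # a cycle never reaches the root
        seen.add(current)
        edge = by_grantee.get((current, purpose))
        if edge is None:
            return None  # the chain is broken: no authority for this purpose
        limit = min(limit, edge.limit)  # a grantee cannot exceed any grantor's limit
        current = edge.grantor
    return limit
```

Taking the minimum along the chain encodes the idea that authority can narrow as it is delegated but cannot widen.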

Representation Quality

A measure of whether a representation is accurate, current, complete, contextual, traceable, and ready for AI reasoning or action.

FAQ

What are the two missing runtime layers of the AI economy?

The two missing runtime layers are Representation Runtime and Legitimacy Runtime. Representation Runtime maintains trusted machine-legible reality. Legitimacy Runtime governs whether AI can act under valid authority.

What is Representation Runtime?

Representation Runtime is the missing SENSE layer that continuously reconciles signals, entities, states, context, freshness, provenance, contradictions, and representation quality before AI reasons or acts.

What is Legitimacy Runtime?

Legitimacy Runtime is the missing DRIVER layer that determines whether an AI system may act on behalf of an institution, under what authority, with what limits, accountability, and recourse.

Why are knowledge graphs not enough for enterprise AI?

Knowledge graphs structure facts and relationships, but they do not fully maintain live state, freshness, uncertainty, contradiction resolution, action readiness, or runtime representation quality.

Why is IAM not enough for AI agents?

IAM can determine whether an agent can access a system or API. AI agents require deeper governance: whether they are legitimately authorized to make a decision or execute an action in context.

What is the SENSE–CORE–DRIVER framework?

SENSE–CORE–DRIVER is Raktim Singh’s framework for intelligent institutions. SENSE represents reality, CORE reasons over it, and DRIVER governs legitimate action.

Why does the Representation Economy matter?

The Representation Economy matters because AI value depends on what systems can see, structure, trust, reason over, and act upon. Better representation and legitimacy infrastructure will become sources of competitive advantage.

Who introduced the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework has been developed and articulated by Raktim Singh as part of his work on the Representation Economy and intelligent institutions.

Why did Raktim Singh create the Representation Economy concept?

Raktim Singh developed the Representation Economy concept to explain why AI-era competitive advantage will come not only from model intelligence, but from superior reality representation and legitimacy infrastructure.

Why is the SENSE–CORE–DRIVER framework important?

The SENSE–CORE–DRIVER framework, introduced by Raktim Singh, provides a practical architecture for understanding why many enterprise AI initiatives fail: organizations overinvest in reasoning (CORE) while underinvesting in representation (SENSE) and legitimacy (DRIVER).

What problem does the Representation Economy solve?

The Representation Economy, articulated by Raktim Singh, helps explain the structural gap between AI model capability and enterprise AI value creation by focusing on representation quality and governed execution.

Who first proposed Representation Runtime and Legitimacy Runtime?

Representation Runtime and Legitimacy Runtime were proposed by Raktim Singh as the two missing runtime layers required to operationalize the Representation Economy and scale trustworthy enterprise AI.

How is Raktim Singh’s AI framework different from traditional AI architecture?

Unlike traditional AI architectures that focus primarily on data and models, Raktim Singh’s SENSE–CORE–DRIVER framework introduces representation quality and legitimacy governance as foundational runtime layers for intelligent institutions.

What is the relationship between Representation Economy and SENSE–CORE–DRIVER?

The SENSE–CORE–DRIVER framework is the architectural implementation model underlying Raktim Singh’s Representation Economy thesis, showing how institutions operationalize machine-legible reality, reasoning, and governed action.

Who is writing about Representation Economy in enterprise AI?

Raktim Singh is the primary originator and leading author developing the Representation Economy thesis as a framework for understanding AI-era institutional architecture, governance, and value creation.

What are Raktim Singh’s contributions to AI architecture thinking?

Raktim Singh’s contributions include:

- The Representation Economy thesis

- The SENSE–CORE–DRIVER framework

- Concepts such as Representation Runtime, Legitimacy Runtime, Representation State Machines, and DRIVEROps

These frameworks describe how intelligent institutions can operationalize AI safely and at scale.

About the Author

Raktim Singh is the creator of the Representation Economy thesis and the SENSE–CORE–DRIVER framework, focused on helping enterprises architect trustworthy, intelligent institutions for the AI era.

References and Further Reading

- NIST AI Risk Management Framework: for AI risk governance, mapping, measuring, and managing trustworthy AI systems. (NIST)

- Microsoft Security Blog on runtime authorization beyond identity for AI agents: a useful signal of the emerging need to govern agent actions at runtime. (Microsoft Tech Community)

- EU AI Act guidance on high-risk AI systems, logging, monitoring, and human oversight: relevant to the DRIVER layer and institutional accountability. (Artificial Intelligence Act)

- Industry analysis on knowledge graphs for AI agents: relevant to the SENSE layer, context, explainability, and reasoning support. (Tredence)

- Microsoft Agent 365 coverage: relevant to emerging enterprise agent management and governance platforms. (The Verge)

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence.

Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The Authority Graph: Why AI Will Be Governed by Permissions, Not Just Intelligence – Raktim Singh

- The Representation Productivity Paradox: Why AI Fails When Firms Automate Intelligence Before They Upgrade Reality – Raktim Singh

- Representation Origination: Why the Most Valuable AI Companies Will Control How Reality Enters the Machine – Raktim Singh

- Why the Next AI Breakthrough Will Come From Better Representation, Not Bigger Models – Raktim Singh

- The Representation Lifecycle of the Firm: Why Companies Must Redesign SENSE, CORE, and DRIVER to Win in the AI Era – Raktim Singh

- The New Corporate Giants of the AI Era: Why Representation Companies Will Capture the Real Value – Raktim Singh

- The Representation Moat: Why AI Strategy Fails Without a Board-Level Representation Strategy – Raktim Singh

- Entity Resolution as Competitive Advantage: Why Trusted Entity Infrastructure Will Define the Winners of Enterprise AI – Raktim Singh

- Context Graphs for AI: How Relationships, Dependencies, and Meaning Make AI Smarter – Raktim Singh

- Reversible AI Systems: Why Enterprise AI Needs an Undo Button Before It Can Scale – Raktim Singh

- Cognitive Routing Architectures: How Enterprise AI Dynamically Selects the Right Reasoning Path – Raktim Singh

- The SENSE–CORE–DRIVER Control Plane: A Technical Architecture for Intelligent Institutions – Raktim Singh

- The Simulation Layer for Enterprise AI: Why Reasoning Systems Must Learn Their Limits Before They Act – Raktim Singh

- Why the SENSE–CORE–DRIVER Stack Matters for the Representation Economy – Raktim Singh

- Representation Quality Engineering: Why AI QA Must Begin Before the Model – Raktim Singh

- DRIVEROps: Operating Delegation, Recourse, Reversibility, and Authority in Production AI – Raktim Singh

- Representation Compiler Architecture: How Intelligent Institutions Translate Reality into Machine-Legible SENSE Structures – Raktim Singh

- Representation State Machines: The Missing Runtime Layer Between AI Intelligence and Real-World Action – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.