What Is the Representation Economy?

The Representation Economy is the emerging economic and institutional shift in which competitive advantage comes from building machine-readable representations of reality that AI systems can interpret, reason about, and act upon. In this model, institutions move beyond simple automation and redesign their architecture around three core capabilities: sensing reality, reasoning over context, and executing governed action. The SENSE–CORE–DRIVER framework provides a practical architecture for building these intelligent institutions.

Artificial intelligence is no longer important only because it can generate text, code, images, or predictions. Its deeper significance is that it is changing how institutions observe reality, reason about it, and act on it.

That is where the idea of the Representation Economy begins.

The Representation Economy is the next stage of digital transformation, where competitive advantage comes not only from automation, software, or data storage, but from an institution’s ability to build accurate, living, machine-usable representations of the world it operates in. These are not static dashboards or archived databases. They are continuously updated representations of customers, assets, events, constraints, permissions, risks, workflows, and outcomes.

Once those representations become machine-legible, AI systems can interpret them, reason over them, and trigger action at scale.

This shift is arriving at a moment when AI adoption is accelerating across industries. Stanford’s 2025 AI Index reports that 78% of organizations said they used AI in 2024, up from 55% the previous year, while private investment in generative AI reached $33.9 billion globally in 2024 (Stanford HAI). At the same time, governance frameworks from NIST, the OECD, UNESCO, and the EU are placing increasing emphasis on trustworthiness, transparency, accountability, human oversight, and lifecycle risk management. Institutions are therefore being pushed in two directions at once: toward greater AI adoption and toward greater responsibility in how AI is embedded into consequential decisions.

The old language of automation is no longer enough to explain this transition. Automation mainly focused on making predefined tasks faster and cheaper. The Representation Economy is about something more foundational: building the architecture through which institutions sense the world, interpret the world, and intervene in the world.

That is why intelligent institutions need a new design pattern. I describe that pattern as SENSE–CORE–DRIVER.

Why the old automation story is no longer enough

For years, the promise of enterprise technology was straightforward: digitize records, standardize workflows, automate repetitive tasks, and reduce human effort. That logic still matters, but it explains only part of what modern AI systems can do.

A chatbot that drafts an email is useful. A model that classifies documents is useful. A forecasting engine that predicts demand is useful. But none of these, by themselves, create an intelligent institution.

An intelligent institution does something more powerful. It creates a structured, evolving view of reality that machines can work with continuously.

A bank needs more than raw data fields. It needs a current representation of a customer’s financial state, behavior patterns, permissions, exposure, and decision history. A hospital needs more than digitized records. It needs a living representation of patient condition, treatment context, care pathways, and escalation logic. A logistics network needs more than GPS signals. It needs a representation of assets, routes, exceptions, constraints, delays, and priorities.

That is why the central issue is no longer only model quality. It is representational quality.

The institutions that win will not necessarily be the ones with the biggest models. They will be the ones that can represent reality more clearly, connect that representation to reasoning systems, and then translate those decisions into governed action.

What the Representation Economy really means

The Representation Economy is the economic and institutional shift in which value increasingly comes from the ability to construct, maintain, and govern machine-readable representations of reality.

That reality may be physical, financial, operational, legal, social, or organizational.

In the industrial era, advantage came from owning production capacity. In the software era, advantage often came from owning workflows and data systems. In the Representation Economy, advantage comes from owning the architecture that determines:

- what signals are captured

- how entities are identified

- how state is modeled

- how change is tracked

- how decisions are made

- how action is authorized, verified, and corrected

This is why the Representation Economy is not just a data story. Data by itself is too raw, too fragmented, and too context-poor. Representation is data organized into a meaningful, decision-ready model of the world.

A spreadsheet of transactions is data.

A live model of customer exposure, permissions, behavioral change, and account context is a representation.

A pile of sensor outputs is data.

A structured model of a machine’s condition, environment, usage history, and probable failure path is a representation.

A set of policy documents is data.

A machine-usable policy model tied to roles, risk thresholds, and escalation rules is a representation.

That distinction matters because AI systems do not create institutional value simply by “knowing facts.” They create value when they can operate within a representation of reality that is rich, current, and governed.
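To make the last contrast concrete, here is a minimal Python sketch of a policy expressed as a machine-usable model rather than a prose document. Everything in it is an assumption for illustration: the `PolicyRule` and `evaluate` names, the roles, the thresholds, and the escalation targets are hypothetical and do not come from any real policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PolicyRule:
    """One machine-usable policy clause (all values are illustrative)."""
    role: str          # who the rule applies to
    max_amount: float  # risk threshold the role may approve alone
    escalate_to: str   # where requests above the threshold go


# Hypothetical policy model: the same content a policy document would
# describe in prose, in a form software can evaluate directly.
POLICY = {
    "analyst": PolicyRule("analyst", 10_000.0, "manager"),
    "manager": PolicyRule("manager", 100_000.0, "risk_committee"),
}


def evaluate(role: str, amount: float) -> str:
    """Return 'approve', 'deny', or an escalation target for a request."""
    rule = POLICY.get(role)
    if rule is None:
        return "deny"  # unknown roles have no delegated authority
    if amount <= rule.max_amount:
        return "approve"
    return f"escalate:{rule.escalate_to}"
```

The point of the sketch is the shape, not the numbers: once roles, thresholds, and escalation rules are explicit fields, an AI system can be held to them, and a reviewer can see which rule applied.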

The SENSE–CORE–DRIVER framework

The SENSE–CORE–DRIVER framework explains how intelligent institutions can be designed for the Representation Economy.

It has three layers:

- SENSE — where reality becomes machine-legible

- CORE — where institutions reason over represented reality

- DRIVER — where decisions become legitimate, executable action

Together, these layers explain how institutions move from scattered signals to accountable execution.

SENSE: Making reality machine-legible

SENSE is the layer where reality becomes legible to machines.

It includes four elements:

Signal

Detecting events, traces, changes, and inputs from the world.

ENtity

Attaching those signals to the correct person, object, case, machine, account, contract, or location.

State representation

Building a structured model of the current condition of that entity.

Evolution

Updating that state over time as new signals arrive.
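The four elements above can be sketched as a small Python data model. This is only an illustration under stated assumptions: the class names, the `ENTITY_INDEX` lookup, and the pump and sensor identifiers are hypothetical, and real entity resolution is usually far harder than a dictionary lookup.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Signal:
    """Signal: a raw observation emitted by some source system."""
    source_id: str  # identifier as seen by the emitting system
    kind: str       # e.g. "temperature" or "vibration"
    value: float
    at: datetime


@dataclass
class EntityState:
    """State representation: the current modeled condition of one entity."""
    entity_id: str
    latest: dict = field(default_factory=dict)   # kind -> most recent value
    history: list = field(default_factory=list)  # signals kept for Evolution
    updated_at: Optional[datetime] = None


# Hypothetical identity map: several source identifiers resolve to one entity.
ENTITY_INDEX = {"sensor-7": "pump-A", "plc-12": "pump-A"}


def resolve_entity(signal: Signal) -> Optional[str]:
    """ENtity: attach the signal to the entity it describes, if known."""
    return ENTITY_INDEX.get(signal.source_id)


def apply_signal(states: dict, signal: Signal) -> Optional[EntityState]:
    """Evolution: fold a new signal into the entity's state over time."""
    entity_id = resolve_entity(signal)
    if entity_id is None:
        return None  # unresolved signals are not attached to a guessed entity
    state = states.setdefault(entity_id, EntityState(entity_id))
    state.latest[signal.kind] = signal.value
    state.history.append(signal)
    state.updated_at = signal.at
    return state
```

Two signals from different source systems end up in one evolving state for `pump-A`, while a signal from an unknown source is left unattached instead of being assigned to a guessed entity.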

This is the layer most institutions underestimate.

Many organizations believe they have enough data because they have many systems. But fragmented data is not the same as usable institutional sensing. In practice, signals are scattered across emails, applications, logs, sensors, forms, conversations, APIs, documents, and human interventions. Entity identity is inconsistent. State is partial. Evolution is often lost.

That is one reason many AI initiatives stall in production. The model is not always the problem. Often, the institution simply lacks a coherent way to represent what is happening.

Consider fraud detection. A legacy system may rely on fixed rules triggered by a suspicious transaction. A SENSE-based institution creates a richer representation: device behavior, transaction pattern shifts, location anomalies, linked identities, prior account events, risk state, and recourse history. The system is no longer reacting to one suspicious event. It is interpreting a changing representation of reality.

Or take manufacturing. A traditional dashboard might display machine temperatures and downtime. A SENSE architecture builds an evolving state for each asset, connected to maintenance history, environmental conditions, production schedules, and risk thresholds.

This is the first lesson of the Representation Economy: institutions do not become intelligent when they collect more data. They become intelligent when they make reality legible.

CORE: Institutional reasoning

Once reality is represented, the institution needs a reasoning layer. That is CORE.

CORE stands for:

Comprehend context

Understanding what is happening and why it matters.

Optimize decisions

Evaluating options under constraints.

Realize action

Selecting a path that can actually be executed in the real environment.

Evolve through feedback

Learning from outcomes and improving future decisions.

This is the layer where AI moves from pattern recognition toward institutional cognition.

Many organizations still talk about AI as if reasoning means producing a plausible answer. But in real institutions, reasoning is not only about linguistic fluency. It is about operating within constraints: policy, cost, law, risk appetite, role-based authority, timing, and conflicting objectives.

A credit decision system cannot merely predict default probability. It must reason across customer context, product suitability, compliance requirements, fairness controls, exception pathways, and internal thresholds. A clinical support system cannot simply recommend a treatment. It must reason across contraindications, urgency, confidence, escalation conditions, and human override.
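One way to picture reasoning under constraints is as selection among options that must first pass every institutional rule. The Python sketch below is a simplified illustration, not a description of any production decision engine: the `Option` fields, the `choose` function, and the sample values are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Option:
    name: str
    expected_value: float  # what a pure optimizer would maximize
    risk: float            # 0.0 (safe) to 1.0 (risky), illustrative scale
    needs_role: str        # authority required to take this option


Constraint = Callable[[Option], bool]


def choose(options: list, constraints: list) -> Optional[Option]:
    """Pick the highest-value option that satisfies every constraint.

    Returning None signals that nothing is permissible, so the case
    should be escalated to a human instead of being forced through.
    """
    feasible = [o for o in options if all(c(o) for c in constraints)]
    return max(feasible, key=lambda o: o.expected_value, default=None)


# Hypothetical credit example: the best-scoring option is not the one chosen.
options = [
    Option("approve_full", 120.0, 0.7, "manager"),
    Option("approve_partial", 80.0, 0.3, "analyst"),
    Option("decline", 0.0, 0.0, "analyst"),
]
constraints = [
    lambda o: o.risk <= 0.5,              # risk appetite
    lambda o: o.needs_role == "analyst",  # role-based authority
]
best = choose(options, constraints)
```

Here `approve_full` has the highest expected value but violates both the risk and authority constraints, so the selection falls to `approve_partial`. Constraints act as filters before optimization, which is the distinction the paragraph above draws between prediction and institutional reasoning.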

This is where the Representation Economy becomes strategic. Institutions that connect AI to deep contextual representations can build far stronger reasoning systems than institutions that rely on generic prompts over disconnected systems.

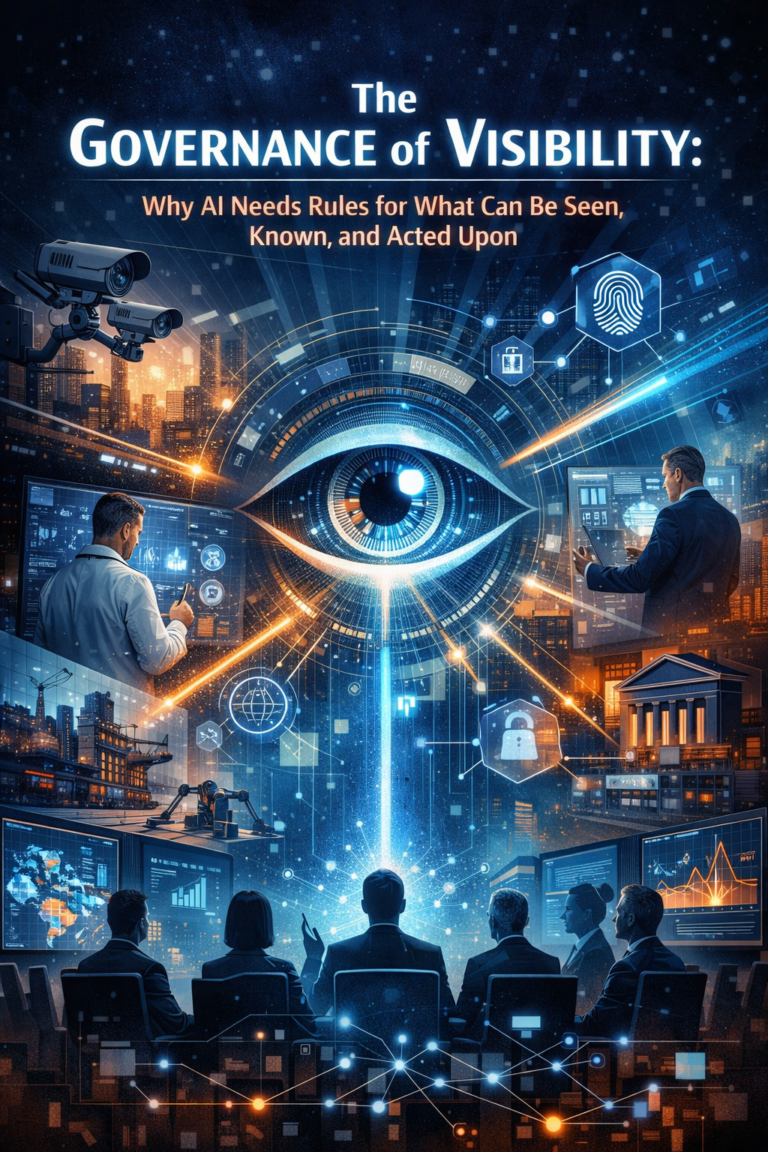

The NIST AI Risk Management Framework is built around governance, mapping, measurement, and management of AI risks, while the OECD AI Principles emphasize transparency, traceability, robustness, and accountability. Those ideas matter here because institutional reasoning cannot be treated as an opaque black box once it begins shaping consequential decisions. (NIST)

So CORE is not just the model layer. It is the decision layer of the institution.

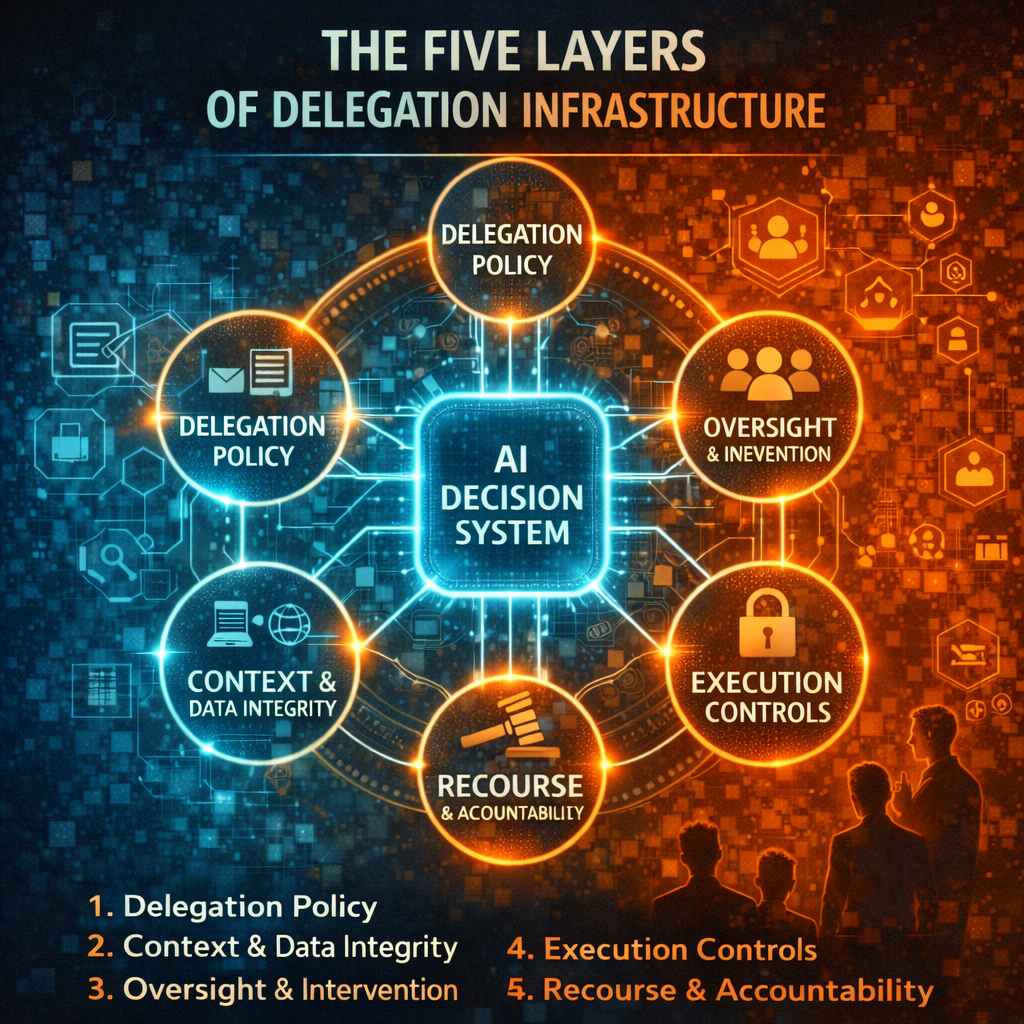

DRIVER: Delegated action and legitimacy

The final layer is DRIVER, where machine-supported decisions cross into real-world action.

DRIVER stands for:

Delegation

Who authorized the system to act.

Representation

What model of reality the system relied on.

Identity

Which person, account, asset, or entity is affected.

Verification

How the decision is checked, logged, reviewed, explained, or challenged.

Execution

How the action is carried out.

Recourse

What happens if the system is wrong.

This layer is essential because institutions do not fail only when models are inaccurate. They often fail when action is taken without legitimacy, traceability, or recourse.

If an AI system denies a loan, freezes an account, changes a price, flags a patient, routes a police alert, or blocks a transaction, the question is no longer just, “Was the prediction good?” The question becomes: Was the action legitimate? Who approved the delegation logic? Was the underlying representation current? Can the decision be explained? Can it be appealed? Can the harm be corrected?
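These questions can be turned into explicit checks that run before any action executes. The following Python sketch is a minimal illustration under assumed names: `ProposedAction`, `legitimacy_gaps`, `execute`, and the 15-minute freshness window are hypothetical choices, not part of any standard or of the frameworks cited in this article.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class ProposedAction:
    delegated_by: Optional[str]       # Delegation: who authorized the system
    representation_as_of: datetime    # Representation: when state was current
    subject_id: Optional[str]         # Identity: who or what is affected
    explanation: Optional[str]        # Verification: recorded rationale
    action: str                       # Execution: what will be done
    appeal_channel: Optional[str]     # Recourse: how to challenge it


MAX_STALENESS = timedelta(minutes=15)  # assumed freshness window


def legitimacy_gaps(action: ProposedAction, now: datetime) -> list:
    """List every reason the action should not run yet (empty means none)."""
    gaps = []
    if not action.delegated_by:
        gaps.append("no delegation on record")
    if now - action.representation_as_of > MAX_STALENESS:
        gaps.append("representation is stale")
    if not action.subject_id:
        gaps.append("affected entity not identified")
    if not action.explanation:
        gaps.append("no explanation recorded")
    if not action.appeal_channel:
        gaps.append("no recourse path")
    return gaps


def execute(action: ProposedAction, now: datetime) -> str:
    """Run the action only when no legitimacy gap remains."""
    gaps = legitimacy_gaps(action, now)
    if gaps:
        return "blocked: " + "; ".join(gaps)
    return f"executed: {action.action} for {action.subject_id}"
```

The design choice worth noting is that the gate reports every gap and not just the first one, so a reviewer sees the full picture of why an action was held back.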

This is exactly why global governance frameworks increasingly stress human oversight and traceability. UNESCO’s recommendation highlights transparency, fairness, human oversight, and human rights. The OECD calls for accountability and traceability across the AI lifecycle. The EU AI Act strengthens obligations around risk, transparency, and oversight for higher-risk AI uses. (UNESCO)

In simple terms, DRIVER is the legitimacy layer of intelligent institutions.

Without it, organizations may automate decisions. But they do not create trustworthy decision systems.

Why this architecture matters now

This architecture was far harder to build in earlier eras.

For decades, enterprises had fragmented systems, weak interoperability, delayed data movement, brittle rule engines, and limited AI capabilities. They could digitize records, but they could not maintain living institutional representations. They could automate transactions, but they could not reason dynamically over changing context.

Now several forces are converging at once: stronger AI models, better enterprise data infrastructure, broader API ecosystems, richer retrieval and memory patterns, and rising governance pressure. At the same time, the costs of poor AI-enabled decisions are becoming more visible to boards, regulators, and customers. (Stanford HAI)

That combination changes the game.

Institutions that redesign themselves around SENSE–CORE–DRIVER can move from passive software systems to active, governed decision systems.

Where the Representation Economy will matter most

The Representation Economy will be especially powerful wherever institutions must continuously interpret reality and act under constraints.

Finance

Credit, fraud, treasury, compliance, claims, collections, and advisory systems.

Healthcare

Diagnosis support, triage, care coordination, monitoring, and administrative decisioning.

Manufacturing

Predictive maintenance, quality management, digital twins, and supply coordination.

Logistics

Routing, asset orchestration, disruption response, and network resilience.

Government

Benefits delivery, enforcement workflows, case management, urban systems, and public-service decision support.

Across all of these sectors, the principle is the same: value comes not only from prediction, but from building trustworthy, machine-operable representations tied to governed action.

Where the framework can fail

The framework is powerful, but it is not magic.

It fails when SENSE is weak and signals are poor.

It fails when CORE is disconnected from institutional policy, incentives, and decision rights.

It fails when DRIVER lacks ownership, oversight, and recourse.

It also fails when leaders confuse polished interfaces with real institutional intelligence.

A chatbot sitting on top of fragmented systems is not an intelligent institution.

An agent that calls tools without authority controls is not institutional redesign.

A dashboard full of metrics is not a machine-readable operating model.

The Representation Economy rewards depth, not theater.

Why board members and C-suites should care

The biggest shift here is not technical. It is institutional.

Boards and executive teams have spent years asking how AI can improve productivity. That remains a valid question. But the more consequential question now is this:

How will AI change the architecture through which the institution sees, decides, governs, and acts?

That is a board-level question because it affects:

- risk ownership

- decision rights

- institutional memory

- control systems

- operational legitimacy

- growth, resilience, and trust

The future leaders of this era will not simply “use AI.” They will redesign how authority, context, memory, and action flow through the institution.

That is why the Representation Economy matters.

It explains why the next competitive frontier is not just automation, but institutional legibility. It explains why the next operating advantage is not only model access, but representational control. And it explains why future-leading organizations will be those that can sense reality clearly, reason within context, and act with legitimacy.

That is what SENSE–CORE–DRIVER provides: not a gadget, not a trend, but an architecture for intelligent institutions.

Key Insight

The Representation Economy describes a shift where institutions gain competitive advantage not from automation alone but from their ability to build machine-legible representations of reality that AI systems can interpret, reason over, and act upon. The SENSE–CORE–DRIVER framework provides an architectural model for designing these intelligent institutions.

Conclusion: The real AI question has changed

In the years ahead, the most important question may no longer be, “Which AI model do we use?”

It may be:

How does our institution represent reality, reason over it, and act responsibly within it?

That is the real frontier.

The institutions that answer that question well will not just deploy better AI. They will build better judgment, better control, better accountability, and better resilience into the operating fabric of the enterprise itself.

That is the promise of the Representation Economy.

And that is why SENSE–CORE–DRIVER is not just a framework for AI systems. It is a framework for the next generation of institutional design.

FAQ

What is the Representation Economy?

The Representation Economy is the shift toward institutions creating value through machine-readable, continuously updated representations of reality rather than relying only on static data, narrow automation, or disconnected workflows.

How is the Representation Economy different from automation?

Automation focuses on executing predefined tasks more efficiently. The Representation Economy focuses on how institutions sense reality, model context, reason over changing conditions, and execute governed action.

Why is the Representation Economy important for enterprise AI?

Because many enterprise AI failures stem from more than model limitations. They are often caused by poor context, fragmented systems, weak representations, and unclear decision rights.

What does SENSE mean in SENSE–CORE–DRIVER?

SENSE is the layer where signals are captured, linked to entities, turned into state representations, and updated over time.

What does CORE mean?

CORE is the reasoning layer where the institution comprehends context, optimizes decisions, realizes action paths, and evolves through feedback.

What does DRIVER mean?

DRIVER is the legitimacy and execution layer where authority, verification, action, and recourse are managed.

Is the Representation Economy only about data?

No. Data is raw material. Representation is structured, contextualized, machine-usable reality.

How does this relate to AI governance?

It aligns directly with global emphasis on transparency, accountability, traceability, oversight, and recourse in AI-enabled systems. (NIST)

Which sectors will be affected first?

Finance, healthcare, manufacturing, logistics, insurance, telecom, government, and any sector where repeated decisions must be made under risk, policy, and operational constraints.

Is this the same as digital transformation?

Not quite. Digital transformation digitized workflows. The Representation Economy is its next stage: building machine-operable models of institutional reality that support reasoning and governed action.

What is machine-legible reality?

It is reality translated into forms that software and AI systems can interpret reliably, update continuously, and act upon within defined governance boundaries.

Why should boards care about this now?

Because AI is moving from productivity tooling to decision infrastructure. That changes risk, control, accountability, and competitive advantage at the institutional level.

Glossary

Representation Economy

An institutional and economic shift in which value increasingly comes from building machine-readable, evolving representations of reality.

SENSE

The layer that captures signals, identifies entities, models state, and tracks change over time.

CORE

The layer that interprets context, reasons under constraints, and supports institutional decision-making.

DRIVER

The layer that governs authority, verification, execution, and recourse for machine-supported action.

Institutional Legibility

The degree to which an institution’s reality can be understood, updated, and acted on by machines in a meaningful and governed way.

Machine-legible reality

Reality translated into structured forms that software and AI systems can interpret reliably.

State representation

A structured model of the current condition of an entity, process, case, or environment.

Institutional reasoning

Decision-making that reflects not only patterns in data, but also policy, risk, authority, operational constraints, and contextual judgment.

Delegated action

Action taken by a machine-supported system within defined authority boundaries.

Recourse

The mechanism through which an affected person or institution can challenge, review, reverse, or correct a machine-supported decision.

Traceability

The ability to reconstruct what data, models, rules, and steps contributed to a decision.

Human oversight

Mechanisms that allow people to supervise, intervene in, challenge, or override AI behavior when needed.

Representational control

The institutional advantage that comes from shaping how reality is modeled, updated, interpreted, and acted upon.

Decision infrastructure

The technical and governance systems through which institutions support, verify, and execute decisions at scale.

References and further reading


This article is informed by a wider body of work on AI adoption, trustworthy AI, governance, and institutional design. Readers who want to explore the policy and market backdrop further may review the Stanford 2025 AI Index for adoption and investment trends, the NIST AI Risk Management Framework for trustworthiness and risk governance, the OECD AI Principles for accountability and traceability, and UNESCO’s Recommendation on the Ethics of Artificial Intelligence for human oversight, fairness, and dignity in AI deployment. (Stanford HAI)

Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here — intelligence-native operating models, control planes, decision integrity, and accountable autonomy — have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence — where economics, accountability, control, and decision integrity function as a coherent architecture.

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why the AI Decade Will Be Defined by Who Gets Represented—and Who Designs Trusted Delegation

- Representation Infrastructure: Why the AI Economy Will Be Won by Those Who Make the Invisible Legible

- The Representation Stack: How Reality Becomes Identifiable, Legible, and Actionable in the AI Economy

- Identity Infrastructure: The Missing Layer Between Signals and Representation in the AI Economy

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Intelligence Supply Chain: How Organizations Industrialize Cognition in the AI Economy

- The Enterprise AI Operating Model

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale

- The Operating Architecture of the AI Economy: Why Intelligence Alone Will Not Transform Markets

- The Silent Systems Doctrine: Why the AI Economy Will Be Won by Those Who Represent What Cannot Speak

- Signal Infrastructure: Why the AI Economy Begins Before the Model

- The Representation Economy Explained: 51 Questions About the SENSE–CORE–DRIVER Architecture

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh