The Rise of the Representation Economy

In the AI era, the most valuable institutions will not simply process more information. They will represent reality better.

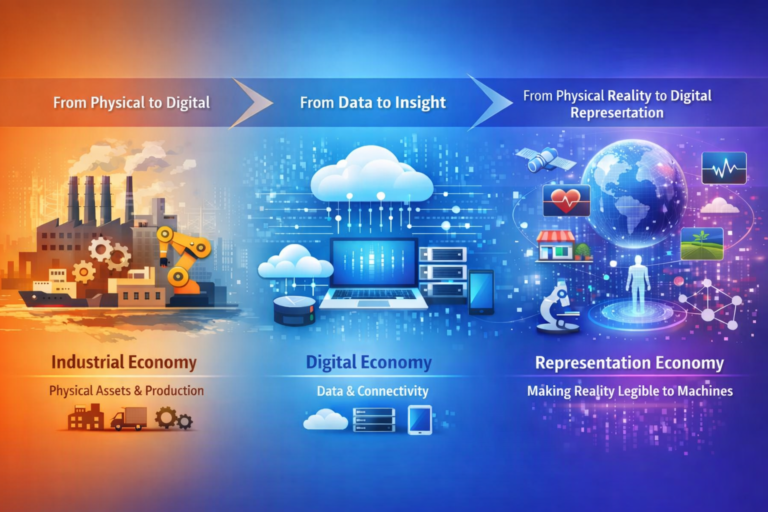

For most of economic history, value was created by controlling what could be seen, measured, and organized.

In the industrial era, that meant physical infrastructure: factories, machines, logistics networks, energy systems, and raw materials. Organizations that controlled production infrastructure controlled economic power.

In the digital era, value shifted toward information infrastructure. Databases, enterprise software, cloud platforms, search engines, digital marketplaces, and algorithmic systems became the dominant engines of growth. Companies that could capture, store, route, and analyze information at scale became the most powerful institutions in the modern economy.

Now another shift is beginning.

The next wave of value will come from something deeper: the ability to represent reality itself.

The most successful institutions of the AI era will not simply collect more data or build larger models. They will build systems that transform previously invisible aspects of the world into structured, machine-readable representations.

This emerging paradigm can be described as the Representation Economy.

In the Representation Economy, competitive advantage comes from the ability to make the invisible visible.

What Is the Representation Economy?

The Representation Economy describes a new phase of economic development in which competitive advantage comes from the ability of institutions to convert previously invisible aspects of reality into structured, machine-readable representations.

In earlier eras, economic power came from controlling physical infrastructure or information systems. In the AI era, value increasingly comes from the ability to represent reality itself — making people, assets, environments, behaviors, and systems legible to institutions and intelligent machines.

Once reality becomes representable, artificial intelligence systems can reason about it, optimize decisions, and support institutional action.

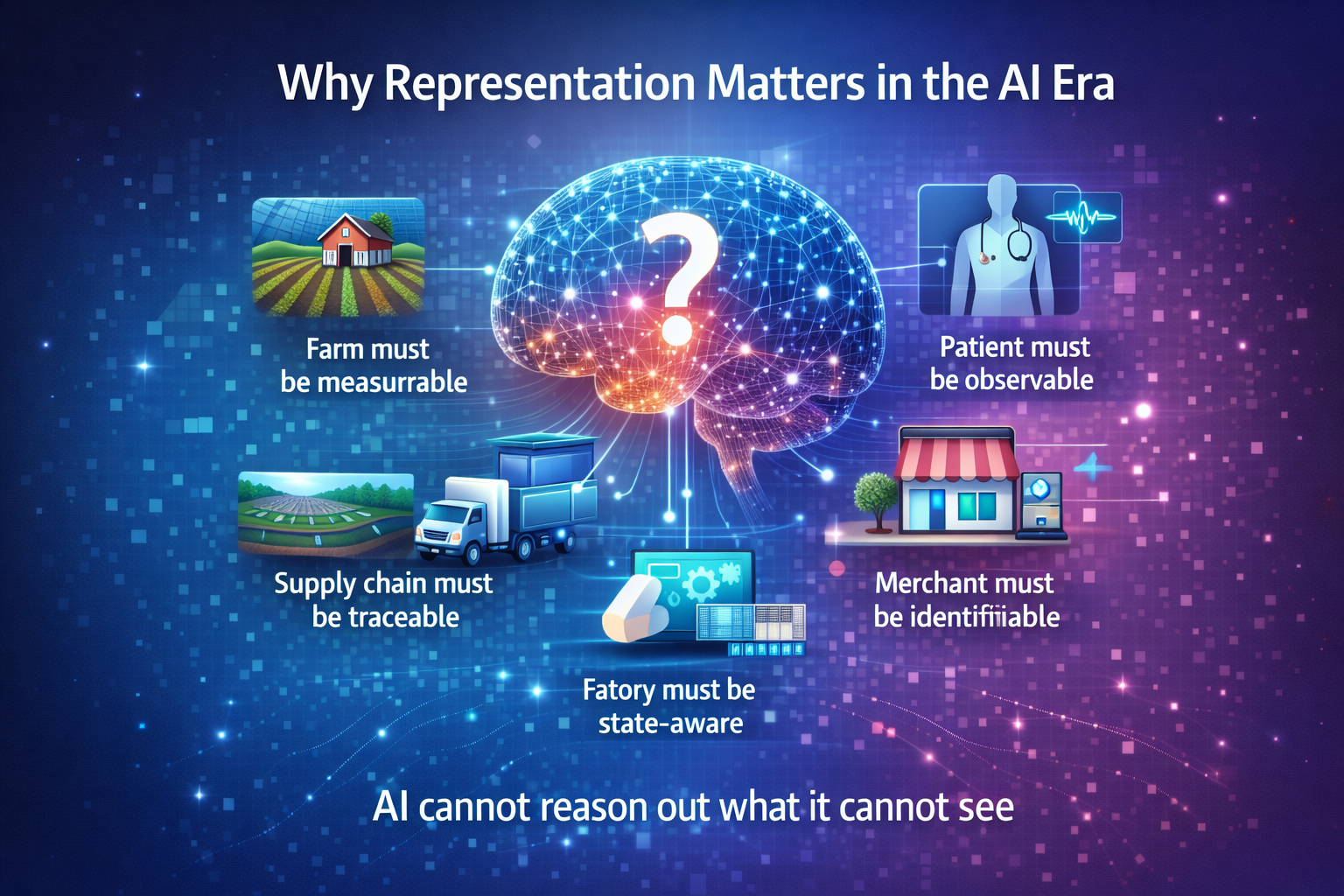

Why Representation Matters in the AI Era

Artificial intelligence is often discussed as if it begins with models and algorithms.

It does not.

It begins with representation.

Before any system can reason, predict, recommend, optimize, or act, the relevant part of reality must first become legible to machines and institutions.

A farm must become measurable.

A patient must become observable.

A supply chain must become traceable.

A merchant must become identifiable.

A factory must become state-aware.

A city must become monitorable.

Only when reality becomes representable can intelligence operate on top of it.

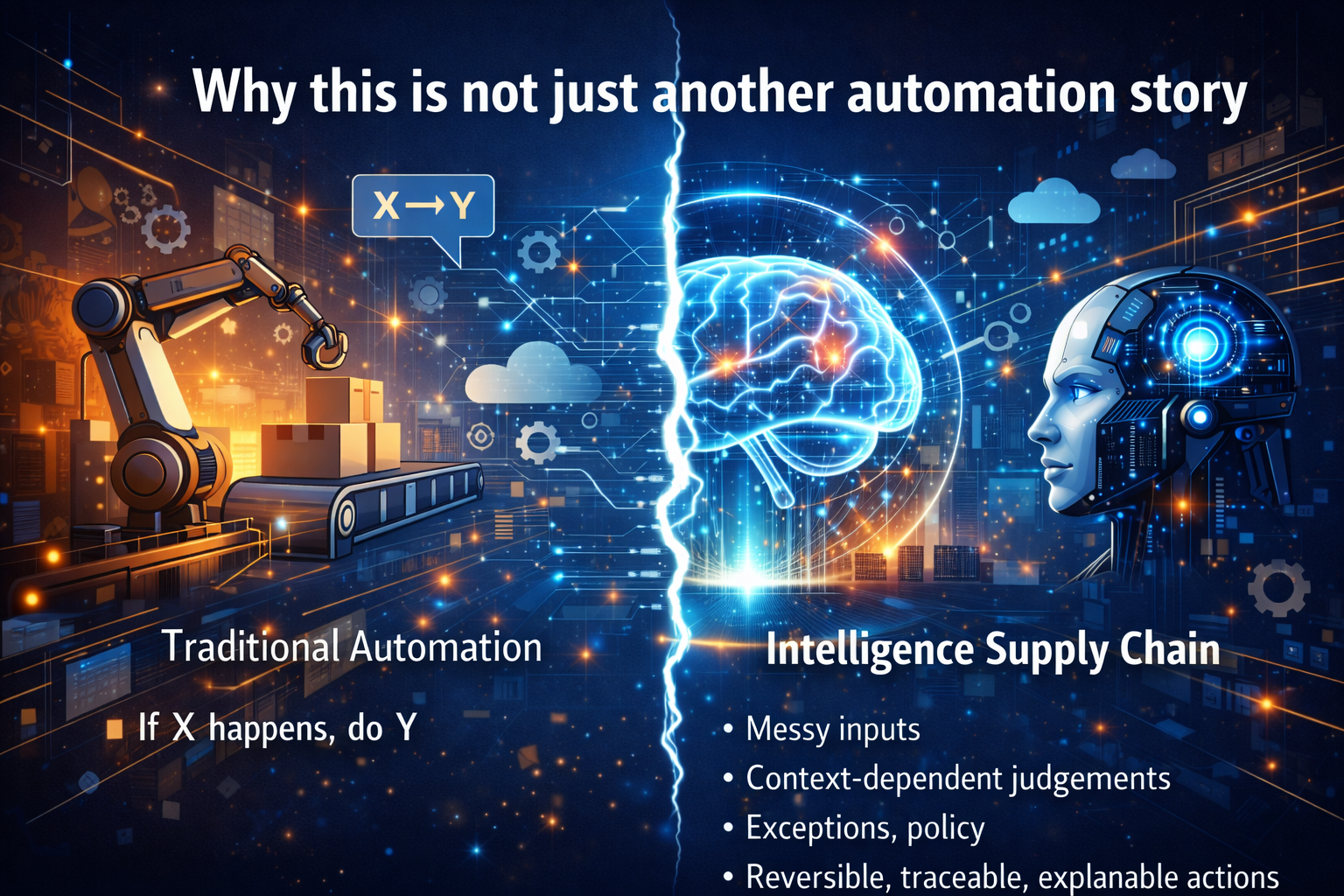

This is why many AI initiatives struggle.

Organizations invest heavily in models, copilots, dashboards, and analytics tools while overlooking a more fundamental constraint: their institutions do not yet possess a clear representation of the world they are trying to optimize.

Artificial intelligence cannot reason effectively about what the institution cannot see clearly.

The Representation Economy is therefore not primarily about AI models.

It is about how institutions build the infrastructure that allows reality to become visible, interpretable, and actionable.

Why the Representation Economy Is Emerging Now

The Representation Economy is emerging because modern sensing technologies, digital infrastructure, and artificial intelligence systems now allow institutions to observe reality at unprecedented scale and resolution.

Sensors, satellite imagery, digital payments, connected devices, and platform activity continuously generate signals about how the world operates. When these signals are organized into structured representations, institutions gain the ability to reason about systems that were previously opaque.

The Institutional Architecture of the Representation Economy: SENSE–CORE–DRIVER

To understand how organizations turn visibility into value, we need to understand how intelligent institutions actually function.

Artificial intelligence systems operate inside a broader institutional architecture that determines how signals from the real world become decisions and actions.

A useful way to describe this architecture is through a three-layer framework:

SENSE – CORE – DRIVER

This framework explains how institutions transform visibility into intelligence and intelligence into legitimate action.

It also explains why many AI initiatives fail: not because models are weak, but because organizations have not built the institutional architecture required for machine-legible reality, machine cognition, and governed execution.

SENSE: The Legibility Layer

In this framework, SENSE means:

Signal — detecting events, changes, and traces from the world

ENtity — attaching those signals to a persistent actor, object, location, or asset

State representation — building a structured model of the current condition of that entity

Evolution — updating that state over time as new signals arrive

This is the layer where reality becomes machine-legible.

Without SENSE, institutions do not truly see the world. They see fragments.

Signals exist, but they are disconnected. Data exists, but it lacks context. Observations exist, but they are not attached to stable entities.

A strong SENSE layer allows organizations to move from raw observation to structured visibility.

It enables institutions to know:

- what is happening

- to whom or to what it is happening

- what condition that entity is in

- how that condition is changing over time
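The four SENSE steps can be sketched as a small data structure. This is a minimal illustration, not a reference implementation: the names `Signal`, `EntityState`, and `SenseLayer`, and the rule that older readings never overwrite newer ones, are assumptions made for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass(frozen=True)
class Signal:
    """One observation from the world (the Signal step)."""
    entity_id: str        # the persistent entity it belongs to (the ENtity step)
    attribute: str        # hypothetical attribute name, e.g. "soil_moisture"
    value: float
    observed_at: datetime


@dataclass
class EntityState:
    """Structured current condition of one entity (State representation)."""
    entity_id: str
    attributes: dict[str, float] = field(default_factory=dict)
    updated_at: dict[str, datetime] = field(default_factory=dict)
    history: list[Signal] = field(default_factory=list)

    def apply(self, signal: Signal) -> None:
        """Fold a new signal into the state (the Evolution step)."""
        if signal.entity_id != self.entity_id:
            raise ValueError("signal belongs to a different entity")
        self.history.append(signal)
        last = self.updated_at.get(signal.attribute)
        # Assumed policy: a late-arriving older reading never overwrites a newer one.
        if last is None or signal.observed_at >= last:
            self.attributes[signal.attribute] = signal.value
            self.updated_at[signal.attribute] = signal.observed_at


class SenseLayer:
    """Routes each signal to its entity's state, creating the state on first sight."""

    def __init__(self) -> None:
        self.states: dict[str, EntityState] = {}

    def ingest(self, signal: Signal) -> EntityState:
        state = self.states.setdefault(signal.entity_id, EntityState(signal.entity_id))
        state.apply(signal)
        return state
```

Keying every signal by `entity_id` is what turns disconnected observations into a per-entity state, and the retained `history` is what lets the institution ask how that state has changed over time.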

The Representation Economy begins here.

Economic value increasingly depends on whether reality can be represented with enough fidelity for machines and institutions to reason about it.

CORE: The Cognition Layer

Once representation exists, machine cognition can begin.

CORE means:

Comprehend context

Optimize decisions

Realize action

Evolve through feedback

CORE is the reasoning engine.

This is the layer where institutions interpret what SENSE has made visible.

CORE systems include:

- machine learning models

- predictive analytics

- anomaly detection

- simulation systems

- digital twins

- decision support engines

- optimization algorithms

The CORE layer answers critical questions such as:

- What pattern exists here?

- What risk is emerging?

- What is likely to happen next?

- What decision should be made?

CORE transforms representation into intelligence.

However, CORE can only reason about what SENSE has made visible.

If the representation is incomplete or distorted, reasoning will also be flawed.

This is why many organizations that invest heavily in models still struggle to create value: they have sophisticated reasoning engines operating on weak representations of reality.
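One way to make this dependency explicit is to have the reasoning step check the representation before it reasons. The sketch below is illustrative only: the function name `recommend`, the required attributes, the one-hour freshness limit, and the vibration threshold are all hypothetical, and the final rule is a placeholder standing in for a real model.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Recommendation:
    action: Optional[str]   # None means "no recommendation"
    reason: str


def recommend(
    attributes: dict[str, float],
    updated_at: dict[str, datetime],
    now: datetime,
    required: tuple[str, ...] = ("temperature", "vibration"),
    max_age: timedelta = timedelta(hours=1),
) -> Recommendation:
    """Reason over a state only if its representation is complete and fresh."""
    missing = [name for name in required if name not in attributes]
    if missing:
        return Recommendation(None, f"missing attributes: {', '.join(missing)}")

    stale = [
        name for name in required
        if name not in updated_at or now - updated_at[name] > max_age
    ]
    if stale:
        return Recommendation(None, f"stale attributes: {', '.join(stale)}")

    # Placeholder rule standing in for a real model; the threshold is illustrative.
    if attributes["vibration"] > 7.0:
        return Recommendation("schedule_inspection", "vibration above threshold")
    return Recommendation("continue", "all readings within range")
```

Returning "no recommendation" with a reason, rather than a confident answer built on missing or stale inputs, keeps weak representation visible instead of letting it silently degrade the decision.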

DRIVER: The Legitimacy Layer

Once automated decisions begin, institutions must govern them.

DRIVER means:

Delegation — who authorized the system to act

Representation — what model of reality the system used

Identity — which entity was affected

Verification — how the decision is checked

Execution — how the action is carried out

Recourse — what happens if the system is wrong

This is the legitimacy layer of the AI economy.

DRIVER determines how intelligence becomes institutional action.

It defines:

- authority boundaries

- governance rules

- operational workflows

- accountability structures

- human-machine collaboration

Without DRIVER, intelligence remains theoretical.

Organizations may generate insights, but their behavior does not change.

In the Representation Economy, DRIVER is what makes machine action governable, auditable, and legitimate.
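The six DRIVER elements can be sketched as a decision record plus a gate that every automated action must pass through. This is a hedged illustration under assumed names: `DecisionRecord`, `govern`, and the shape of `allowed_actions` are hypothetical, with verification and execution supplied by the caller as plain functions.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class DecisionRecord:
    delegation: str        # who authorized the system to act
    representation: str    # which model or snapshot of reality was used
    identity: str          # which entity is affected
    action: str            # what the system proposes to do
    recourse: str          # how the outcome can be contested if it is wrong


def govern(
    record: DecisionRecord,
    allowed_actions: dict[str, set[str]],
    verify: Callable[[DecisionRecord], bool],
    execute: Callable[[DecisionRecord], None],
    audit_log: list[tuple[str, DecisionRecord]],
) -> bool:
    """Run an action only if it is delegated and verified; log every outcome."""
    if record.action not in allowed_actions.get(record.delegation, set()):
        audit_log.append(("rejected: outside delegated authority", record))
        return False
    if not verify(record):
        audit_log.append(("rejected: verification failed", record))
        return False
    execute(record)
    audit_log.append(("executed", record))
    return True
```

Because rejected actions are logged alongside executed ones, the audit trail records what the system attempted as well as what it did, which is the minimum needed for accountability and recourse.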

Why Many AI Initiatives Fail

The SENSE–CORE–DRIVER framework explains one of the most important realities of the AI era.

Many AI initiatives fail before intelligence even begins.

The common failure pattern looks like this:

- the organization builds or adopts a model

- the model produces insights

- leadership sees impressive demonstrations

- but operational behavior does not change

Why?

Because the organization invested in CORE while neglecting SENSE and DRIVER.

Without strong sensing systems, institutions cannot properly represent reality.

Without governance and execution systems, insights cannot translate into operational action.

The institutions that succeed with AI design all three layers together.

They build systems that can:

- sense reality clearly

- reason about it intelligently

- act on it responsibly

That architecture is the foundation of the Representation Economy.

Example 1: Agriculture Becomes Legible

For centuries, farming depended largely on experience and intuition.

Weather uncertainty, soil conditions, pest activity, and crop stress were difficult to observe systematically.

Today that is changing.

Satellite imagery, IoT sensors, soil monitoring systems, and weather analytics are creating detailed representations of agricultural systems.

A farm can now be represented through:

- soil moisture signals

- crop health imagery

- rainfall forecasts

- temperature fluctuations

- fertilizer patterns

- market demand signals

Once this representation exists, AI can support better decisions about irrigation, planting cycles, crop protection, and yield forecasting.

The farm has not changed physically.

What has changed is the institution’s ability to see it.

Example 2: Small Merchants Become Economically Visible

Millions of small merchants around the world operate outside traditional financial systems.

They may have stable income and loyal customers but lack formal credit histories or collateral.

Historically, this made them invisible to financial institutions.

Digital payment systems are beginning to change that.

Transaction trails, digital sales records, and merchant platform activity create representations of economic behavior.

Once this representation exists, new possibilities emerge:

- credit underwriting

- risk assessment

- micro-insurance

- inventory financing

- merchant analytics
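As a rough illustration of how a transaction trail becomes a representation that such services could consume, the sketch below condenses raw payments into a few structured features. The `Payment` fields, the function name `merchant_profile`, and the chosen features are assumptions for this example, not an actual underwriting method.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from statistics import mean, pstdev


@dataclass(frozen=True)
class Payment:
    merchant_id: str
    day: date
    amount: float


def merchant_profile(payments: list[Payment], merchant_id: str) -> dict[str, float]:
    """Summarize one merchant's payment trail into a few structured features."""
    monthly: dict[tuple[int, int], float] = defaultdict(float)
    active_days: set[date] = set()
    for p in payments:
        if p.merchant_id == merchant_id:
            monthly[(p.day.year, p.day.month)] += p.amount
            active_days.add(p.day)
    if not monthly:
        return {}  # no trail means no representation
    totals = list(monthly.values())
    avg = mean(totals)
    return {
        "months_observed": float(len(totals)),
        "active_days": float(len(active_days)),
        "avg_monthly_revenue": avg,
        # Coefficient of variation across months: lower means steadier income.
        "revenue_volatility": pstdev(totals) / avg if avg else 0.0,
    }
```

A merchant with no recorded payments yields an empty profile, which mirrors the point of this example: without a trail, the merchant remains invisible to the institution.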

In the Representation Economy, economic participation depends not only on productivity but also on legibility.

When institutions can see economic behavior more clearly, entirely new markets become possible.

Example 3: Factories Become Living Systems

Manufacturing offers another powerful illustration.

Traditional factories were managed through periodic reports and delayed metrics.

Managers often relied on static dashboards rather than continuous visibility.

Digital twins and industrial sensing technologies are changing that.

Factories can now be represented as dynamic systems where machines, production flows, and supply chains are continuously monitored.

This representation enables:

- predictive maintenance

- production optimization

- energy efficiency improvements

- supply chain coordination

- quality monitoring

The factory becomes not just a production site but a living system that can be observed, simulated, and optimized in real time.
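Continuous monitoring of this kind can be sketched with a simple rolling baseline. The example below is a deliberately basic stand-in for predictive maintenance, not an industrial method: the class name `DriftMonitor`, the window size, and the deviation threshold are illustrative assumptions.

```python
from collections import deque
from statistics import mean, pstdev


class DriftMonitor:
    """Flags readings that sit far from a machine's recent baseline."""

    def __init__(self, window: int = 20, threshold: float = 3.0) -> None:
        self.readings: deque[float] = deque(maxlen=window)
        self.threshold = threshold

    def observe(self, value: float) -> bool:
        """Return True if value deviates from the rolling baseline, then record it."""
        anomalous = False
        # Only judge once the window is full, so the baseline is meaningful.
        if len(self.readings) == self.readings.maxlen:
            baseline = mean(self.readings)
            spread = pstdev(self.readings)
            # Assumed policy: skip perfectly flat baselines, where the ratio is undefined.
            if spread > 0:
                anomalous = abs(value - baseline) / spread > self.threshold
        self.readings.append(value)
        return anomalous
```

Each machine would get its own monitor, fed by its sensor stream, so that a reading is judged against that machine's recent behavior rather than a fixed plant-wide limit.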

Example 4: Healthcare Becomes Continuous

Healthcare is also undergoing a representation transformation.

Traditionally, healthcare relied on episodic snapshots.

Patients visited clinics occasionally, and physicians made decisions based on limited observations.

Today wearable devices, remote monitoring systems, and digital health records are beginning to create continuous representations of patient health.

Heart rate patterns, sleep quality, glucose levels, and activity data all contribute to a richer picture of health.

This shift allows healthcare systems to move from reactive treatment toward proactive care.

Again, the key change is visibility.

When institutions can observe health conditions continuously, they can intervene earlier and make more informed decisions.

The Representation Economy creates value in several ways.

Better decisions

Richer representations reduce uncertainty and improve judgment.

Economic inclusion

People and assets previously invisible to institutions can now participate in formal systems.

New products and services

Insurance, credit, diagnostics, optimization tools, and predictive systems depend on accurate representation.

Lower coordination friction

Shared representations allow different actors in a system to align decisions.

New strategic control points

The institutions that control the representation layer often control how decisions are made.

For boards and executives, this has major implications.

The representation layer can become as strategically important as the operating system, the distribution channel, or the supply chain.

The Ethical Challenge of Representation

Making the invisible visible also introduces new responsibilities.

Representation is a form of power.

The way reality is represented influences how decisions are made.

If representations are biased, incomplete, or poorly designed, they can reinforce inequalities or misinterpret complex human situations.

Institutions must therefore treat representation infrastructure with the same seriousness as financial infrastructure or legal systems.

The goal is not simply to see more.

The goal is to see responsibly.

The Strategic Question for Leaders

Most leaders still ask:

“What AI model should we deploy?”

But the more important question is:

“What parts of reality relevant to our mission remain invisible today?”

Those invisible domains represent the largest untapped opportunities.

Once institutions identify them, they can build the infrastructure required to make those realities legible.

Once they become legible, intelligence and automation can follow.

The Future of the Representation Economy

Over the next decade, the Representation Economy will expand across nearly every sector of society.

Cities will become continuously monitored systems capable of optimizing mobility, energy use, and public services. Supply chains will become transparent networks where disruptions can be detected and addressed in real time. Financial systems will extend services to millions of previously invisible participants through richer economic representations.

As sensing infrastructure expands and machine reasoning becomes more powerful, institutions will increasingly compete not just on information processing but on how accurately they can represent reality itself.

The institutions that master this capability will define the next era of economic power.

Conclusion: The Institutions That Win Will See Better

Every major technological era expands how institutions perceive the world.

The industrial era expanded production.

The digital era expanded information processing.

The AI era will expand institutional perception.

The Representation Economy reflects this shift.

The next generation of competitive advantage will not come only from larger models or faster chips.

It will come from the ability to convert weak, fragmented, invisible aspects of reality into structured representations that institutions can understand and act upon.

This is why SENSE, CORE, and DRIVER matter.

SENSE makes reality legible.

CORE makes reality intelligible.

DRIVER makes action legitimate.

Together they form the architecture of intelligent institutions.

And in the Representation Economy, the organizations that master this architecture will not simply adopt AI.

They will redefine how value itself is created.

FAQ

What is the Representation Economy?

The Representation Economy is an emerging paradigm in which economic value is created by transforming previously invisible or fragmented aspects of reality into machine-readable representations that institutions can reason over.

Why does visibility matter in AI?

Artificial intelligence can only reason about what it can observe. Organizations that build stronger sensing systems gain better decision-making capabilities.

Why is representation important in AI?

AI systems can only reason about what is represented clearly. Weak representation leads to weak intelligence.

What does SENSE stand for?

SENSE stands for Signal, ENtity, State representation, and Evolution. It is the layer where reality becomes machine-legible.

What does CORE stand for?

CORE stands for Comprehend context, Optimize decisions, Realize action, and Evolve through feedback.

What does DRIVER stand for?

DRIVER stands for Delegation, Representation, Identity, Verification, Execution, and Recourse.

What is the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER architecture explains how AI systems operate inside institutions.

SENSE captures signals and builds machine-readable representations of reality.

CORE performs reasoning and decision making.

DRIVER governs how decisions are executed with authority and accountability.

Why do many AI projects fail?

Many AI initiatives fail because organizations focus only on algorithms (CORE) while ignoring the sensing infrastructure (SENSE) and governance systems (DRIVER).

What will define winning institutions in the AI era?

Winning institutions will be those that build the best visibility infrastructure—systems that continuously sense, represent, understand, and act on real-world information.

Glossary

Representation Economy

An emerging economic model in which competitive advantage comes from the ability to make real-world conditions visible, structured, and machine-readable. Organizations that represent reality more accurately can build better AI systems, decisions, and services.

Visibility Infrastructure

The technical and institutional systems that allow organizations to observe and capture signals from the real world. This includes sensors, data pipelines, event logs, APIs, identity systems, and contextual metadata.

Machine-Legible Reality

A condition where real-world events, entities, and states are captured in structured digital form so that software and AI systems can reason about them.

SENSE Layer

The institutional layer responsible for making reality visible to machines.

It includes:

- Signal: detecting events, changes, and traces from the world

- ENtity: attaching those signals to a persistent actor, object, location, or asset

- State Representation: building a structured model of the entity’s condition

- Evolution: updating that state as new signals arrive

This is the layer where reality becomes machine-legible.