The Operating Architecture of the AI Economy

Artificial intelligence is often discussed as if better models alone will change the economy.

That is only partly true. Better intelligence can improve prediction, summarization, content generation, classification, and recommendation. But markets do not transform simply because a model becomes smarter.

Markets transform when intelligence is connected to real authority, real workflows, real data, real accountability, and real consequences.

That is the shift many institutions still underestimate.

We are moving from an era in which AI primarily assisted humans to one in which AI increasingly shapes decisions, allocates attention, routes work, screens risk, prices options, detects anomalies, and triggers action across enterprise systems.

At the same time, the regulatory direction is becoming clearer: performance alone is not enough. The EU AI Act entered into force on August 1, 2024; prohibited AI practices and AI-literacy obligations started applying on February 2, 2025; GPAI obligations started applying on August 2, 2025; and the Act becomes fully applicable on August 2, 2026, with some provisions extending further. NIST’s AI Risk Management Framework likewise emphasizes governance across the AI lifecycle, not merely model development. (Digital Strategy)

That is why the central question of the AI economy is no longer, “How intelligent is the model?” The more important question is this:

What operating architecture allows intelligence to function safely, credibly, and at scale inside markets and institutions?

My answer is simple:

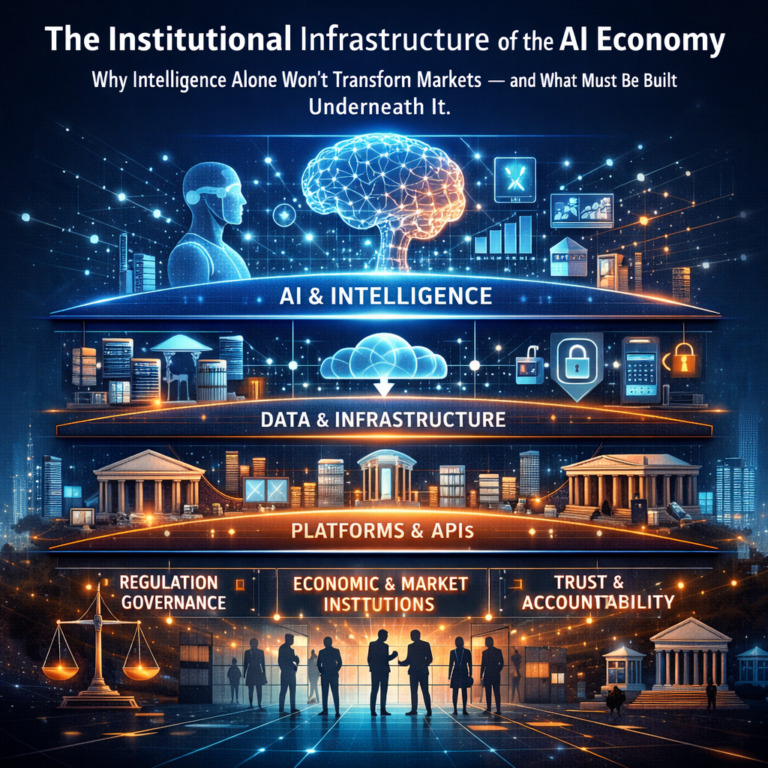

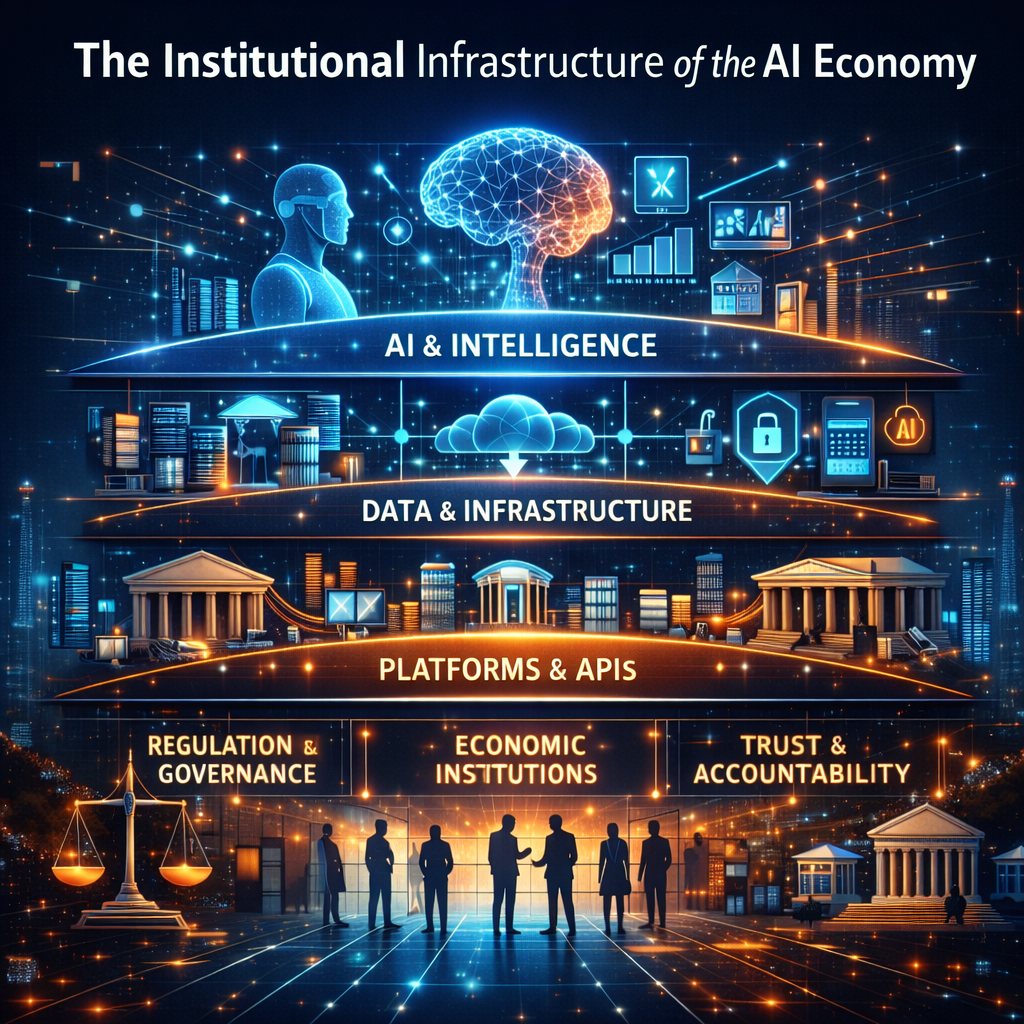

The AI economy needs two layers.

The first is the intelligence layer: how AI understands, decides, acts, and learns.

The second is the institutional layer: the governance and operating infrastructure that makes those actions legitimate, auditable, and reversible.

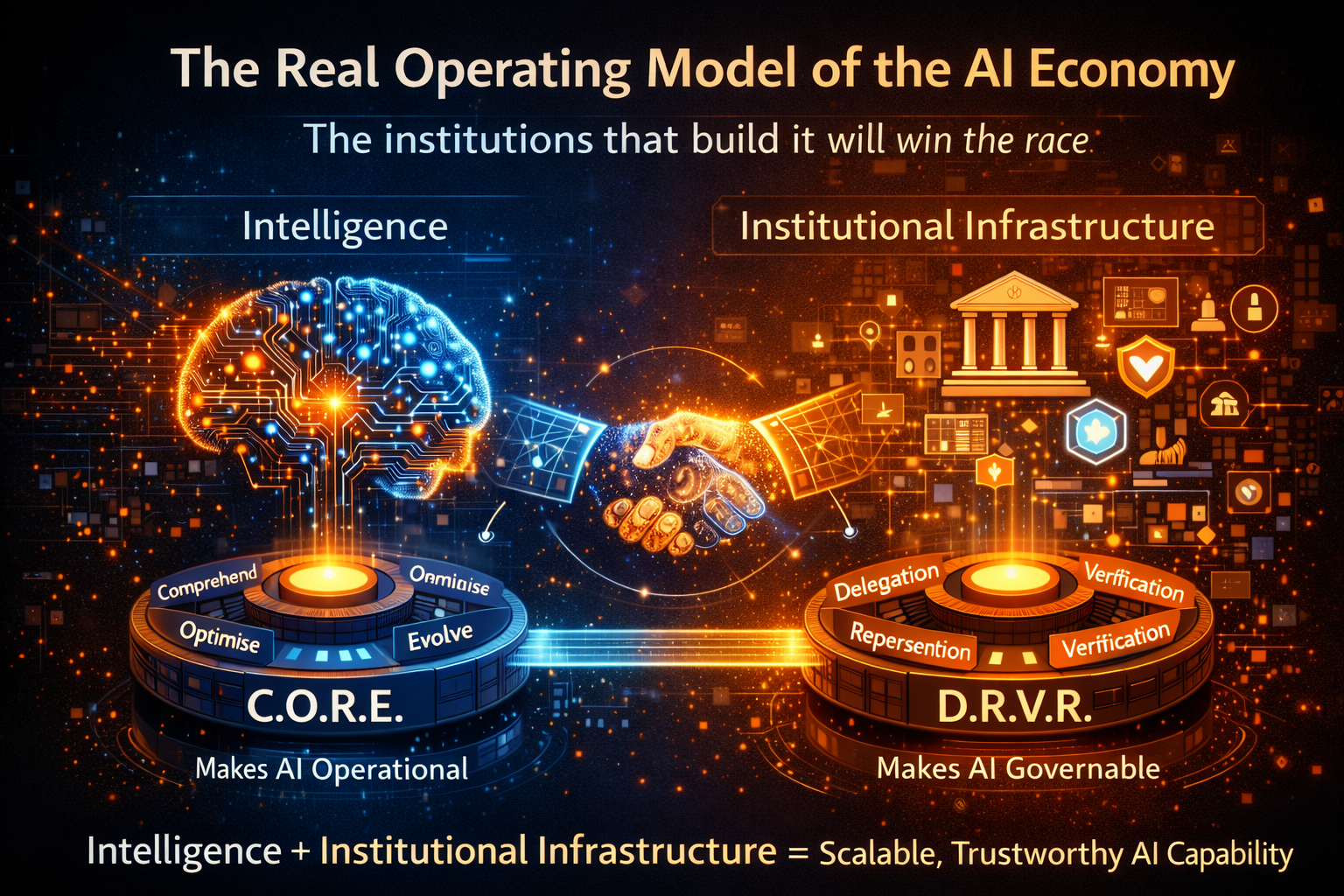

I describe these two layers through two connected frameworks:

C.O.R.E. — the intelligence loop

D.R.V.R. — the institutional infrastructure

Together, they form the operating architecture of the AI economy.

Summary

The AI economy will not be transformed by intelligence alone. Markets change only when machine intelligence operates within institutional infrastructure that defines authority, accountability, verification, and recourse.

This article introduces a two-layer architecture for the AI economy: C.O.R.E. (the intelligence loop) and D.R.V.R. (the institutional infrastructure). Together they form the operating architecture that enables AI systems to function safely, credibly, and at scale across enterprises, governments, and markets.

The mistake most AI discussions still make

Most AI discussions remain model-centric. They focus on model size, reasoning ability, multimodality, agent frameworks, benchmark scores, and productivity gains.

Those things matter. OECD research shows that generative AI can improve productivity, innovation, and entrepreneurship, and experimental evidence suggests gains in tasks such as writing, software development, consulting, editing, and summarization.

But the same body of work makes something equally important clear: to capture those gains at scale, organizations need to redesign their workflows, capabilities, and operating practices. (OECD)

In other words:

Intelligence creates potential. Operating architecture creates outcomes.

That distinction is easier to see through simple examples.

A navigation app is useful because it recommends a route.

A logistics network becomes transformative when that route recommendation automatically changes dispatching, fuel planning, warehouse sequencing, and delivery commitments.

A chatbot is useful because it answers a question.

An enterprise AI system transforms a bank when it can classify a complaint, retrieve policy context, assess risk, route the case, generate a compliant response, and escalate only when required.

A model that predicts equipment failure is interesting.

A production system becomes economically valuable when that prediction triggers maintenance, reserves parts, adjusts schedules, and documents why the intervention occurred.

The lesson is straightforward:

Intelligence alone informs. Architecture operationalizes.

Why the AI economy needs a two-layer architecture

The economy does not run on intelligence in the abstract. It runs on institutions, permissions, incentives, standards, trust, recourse, and execution. AI can become powerful inside all of those systems, but only if it is embedded in a structure that determines:

- what it is allowed to see,

- what it is allowed to decide,

- how it acts,

- how its actions are verified,

- and what happens when it gets something wrong.

This is why AI should not be understood merely as a software capability. It should be understood as an operating architecture for machine-mediated decisions.

That architecture has two layers.

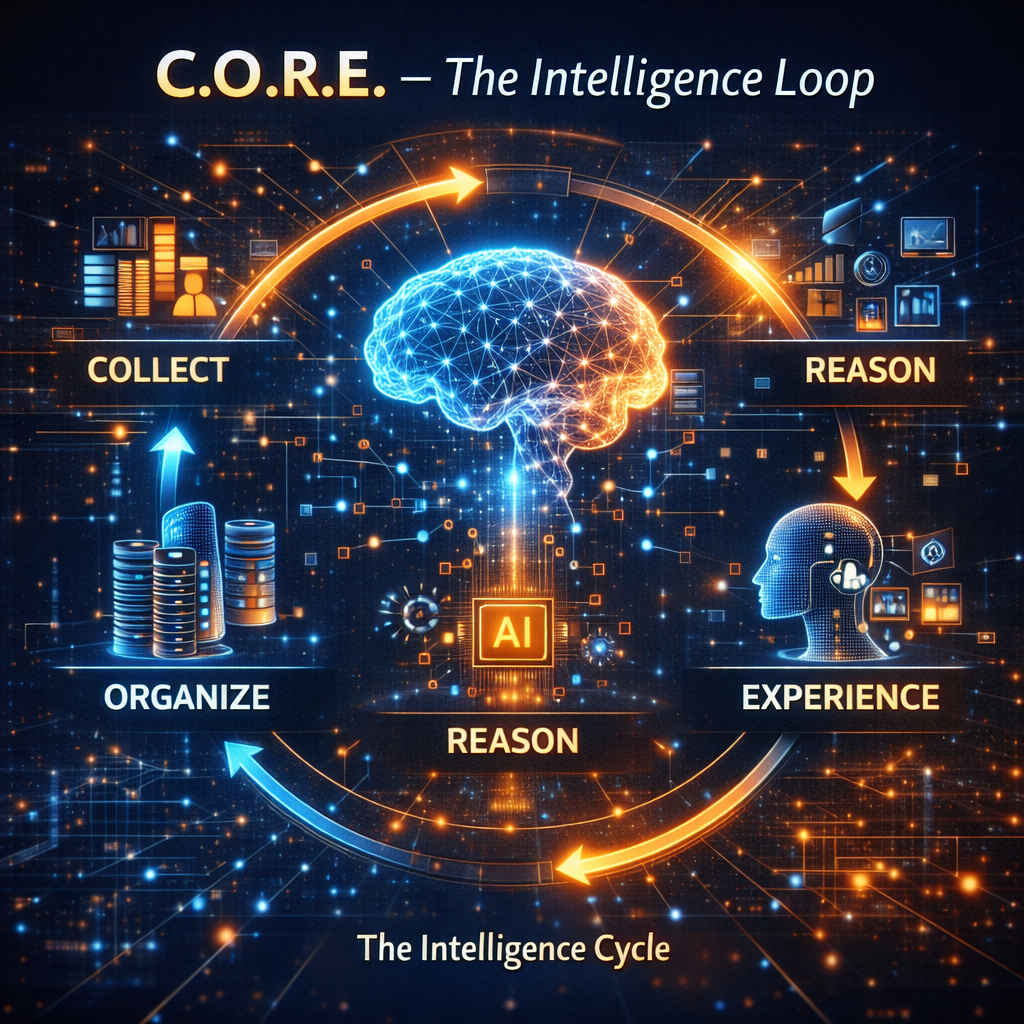

Layer 1: C.O.R.E. — The Intelligence Loop

- Comprehend context

AI begins by absorbing signals. These signals may come from customer behavior, transactions, operational telemetry, policy documents, contracts, workflow history, market feeds, images, or machine logs. But comprehension is not the same as data access. Comprehension is the conversion of scattered inputs into situational awareness.

Think of a fraud system in a payments network. It does not merely inspect one transaction. It interprets time, device signals, merchant behavior, location shifts, prior account activity, known attack patterns, and customer history. Context is what turns raw data into meaningful signals.

In the AI economy, poor comprehension creates dangerous confidence. A system that sees only fragments of reality may still act as if it sees the whole picture.

- Optimize decisions

Once context is understood, the system must generate and rank possible actions. This is where AI moves beyond prediction into structured choice under constraints.

For example, an enterprise procurement assistant may need to choose between suppliers. It cannot optimize on price alone. It may need to weigh delivery time, reliability, geopolitical exposure, sustainability requirements, contractual obligations, cyber-risk posture, and internal policy thresholds.

Optimization in the real world is rarely about finding a single perfect answer. It is about selecting the best viable action under uncertainty, trade-offs, and institutional boundaries.
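To make "best viable action" concrete, here is a minimal sketch of the procurement example. Everything in it is an illustrative assumption rather than a prescribed method: the `Supplier` fields, the two policy thresholds, and the scoring weights are hypothetical. The point is the shape of the decision: hard institutional constraints filter the options first, and only then are the remaining options ranked on trade-offs.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Supplier:
    name: str
    price: float          # unit price, lower is better
    delivery_days: int    # lower is better
    reliability: float    # 0.0 to 1.0, higher is better
    risk_score: float     # 0.0 to 1.0, higher is riskier

# Hypothetical policy thresholds, treated as hard constraints.
MAX_DELIVERY_DAYS = 14
MAX_RISK_SCORE = 0.6

def is_viable(s: Supplier) -> bool:
    """A supplier is viable only if it stays inside every policy boundary."""
    return s.delivery_days <= MAX_DELIVERY_DAYS and s.risk_score <= MAX_RISK_SCORE

def score(s: Supplier) -> float:
    """Weighted trade-off among viable suppliers; lower is better.
    The weights are illustrative assumptions, not calibrated values."""
    return s.price + 2.0 * s.delivery_days - 50.0 * s.reliability + 30.0 * s.risk_score

def choose_supplier(candidates):
    """Filter by constraints first, then rank; None means 'escalate to a human'."""
    viable = [s for s in candidates if is_viable(s)]
    if not viable:
        return None
    return min(viable, key=score)

suppliers = [
    Supplier("A", price=90.0, delivery_days=20, reliability=0.95, risk_score=0.2),
    Supplier("B", price=100.0, delivery_days=10, reliability=0.90, risk_score=0.3),
    Supplier("C", price=95.0, delivery_days=7, reliability=0.70, risk_score=0.8),
    Supplier("D", price=110.0, delivery_days=5, reliability=0.99, risk_score=0.1),
]
best = choose_supplier(suppliers)
print(best.name)  # D
```

Supplier A is cheapest but breaches the delivery threshold, and C breaches the risk threshold. Of the two viable options, D wins despite the highest price, which is exactly why price alone is the wrong objective.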

- Realize action

This is the point at which AI stops being advisory and starts shaping institutional behavior.

Realization means execution through tools, APIs, workflows, permissions, scheduling systems, tickets, messages, approvals, transactional rails, and operational systems. This is the moment when a recommendation becomes a real-world effect.

This distinction matters enormously. Many organizations still think they have “deployed AI” when they have only deployed insight. But the real economic impact of AI begins when systems can act.

A support assistant that drafts a reply is useful.

A support system that refunds the wrong customer, blocks the wrong account, or escalates the wrong claim has crossed into operational consequence.

The moment AI can act, the architecture around it becomes far more important.

- Evolve through evidence

The final stage is evidence-based adaptation. AI systems improve through outcomes, reversals, escalations, overrides, policy violations, drift signals, and downstream effects.

This is where many deployments fail. They treat learning as a retraining issue alone. In reality, evolution must include operational evidence: what worked, what backfired, what had to be reversed, what triggered complaints, what created friction, and what made auditors uncomfortable.

C.O.R.E. therefore describes intelligence not as a static model, but as a living decision loop.
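One way to picture the loop is as four small functions wired together. The sketch below is a toy under stated assumptions: the class name `CoreLoop`, the averaging of signals, the `hold`/`approve` actions, and the 0.05 adjustment are all hypothetical choices made for illustration. What it shows is that the "evolve" step consumes operational evidence (here, a human override) rather than retraining data alone.

```python
from dataclasses import dataclass, field

@dataclass
class CoreLoop:
    threshold: float = 0.5                        # hypothetical decision threshold
    evidence: list = field(default_factory=list)  # operational outcomes seen so far

    def comprehend(self, signals: dict) -> float:
        """Turn scattered signals into one risk estimate (a plain average here)."""
        return sum(signals.values()) / len(signals)

    def optimize(self, risk: float) -> str:
        """Pick an action under the current constraint."""
        return "hold" if risk >= self.threshold else "approve"

    def realize(self, action: str) -> dict:
        """Stand-in for executing through a workflow, API, or ticketing system."""
        return {"action": action, "executed": True}

    def step(self, signals: dict) -> str:
        """Comprehend, optimize, and realize one decision."""
        action = self.optimize(self.comprehend(signals))
        self.realize(action)
        return action

    def evolve(self, action: str, overridden: bool) -> None:
        """Learn from what happened after the action, not only from model metrics."""
        self.evidence.append((action, overridden))
        if action == "hold" and overridden:
            # A human reversed the hold, so become slightly less aggressive.
            self.threshold = min(1.0, self.threshold + 0.05)

loop = CoreLoop()
first = loop.step({"device": 0.9, "location": 0.4, "history": 0.5})
loop.evolve(first, overridden=True)
second = loop.step({"device": 0.5, "location": 0.5, "history": 0.6})
print(first, second)  # hold approve
```

The second case would have been held under the original threshold. It is approved only because the earlier override was fed back into the loop.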

Why C.O.R.E. is necessary — but not sufficient

If C.O.R.E. explains how intelligence works, it still does not explain how intelligence becomes trustworthy inside real institutions and markets.

A model may comprehend brilliantly and optimize efficiently. Yet it can still create harm if it acts without authority, represents people poorly, cannot prove why it acted, or offers no meaningful path for challenge or reversal.

That is why intelligence alone will not transform markets.

The second layer is what converts AI capability into institutional viability.

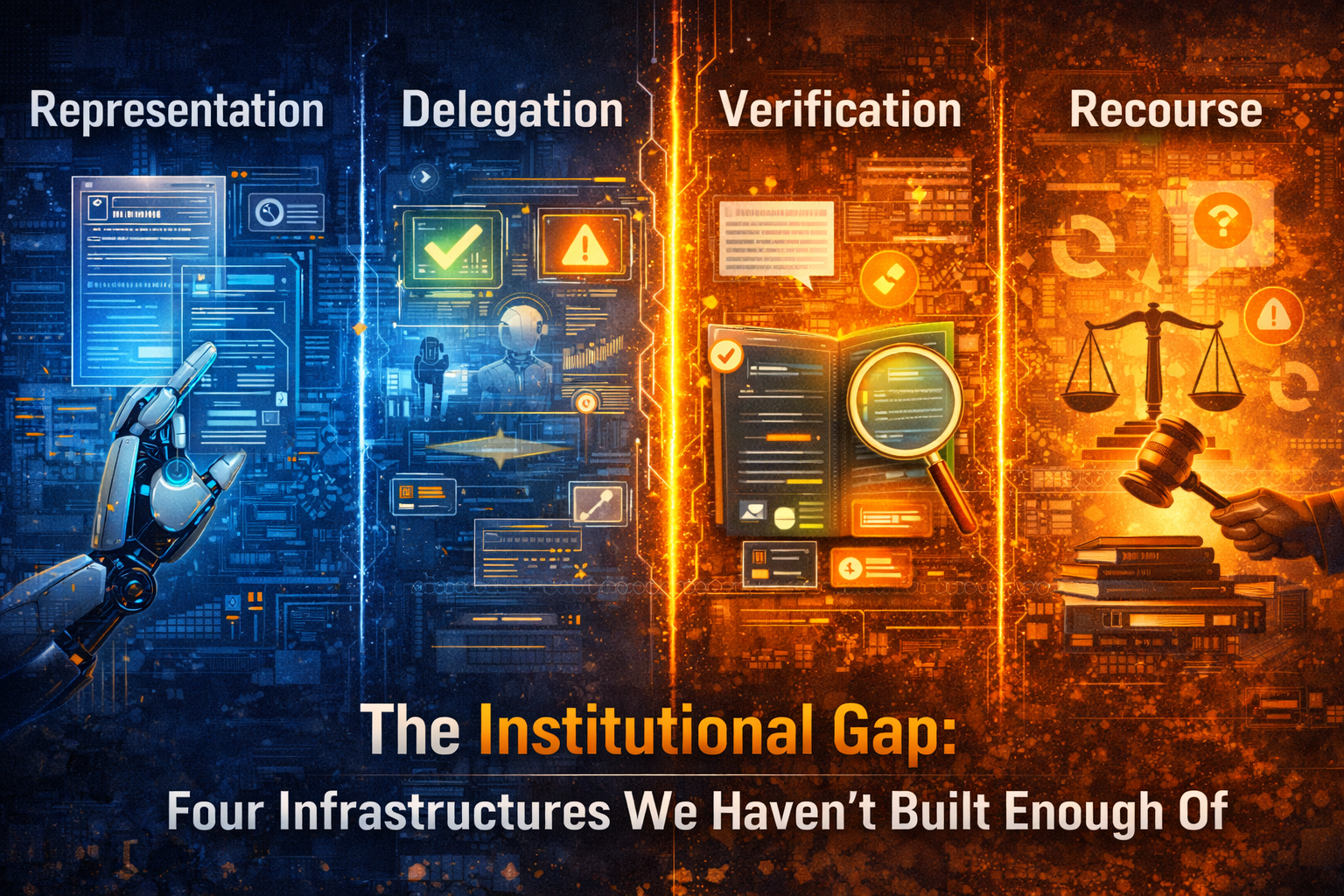

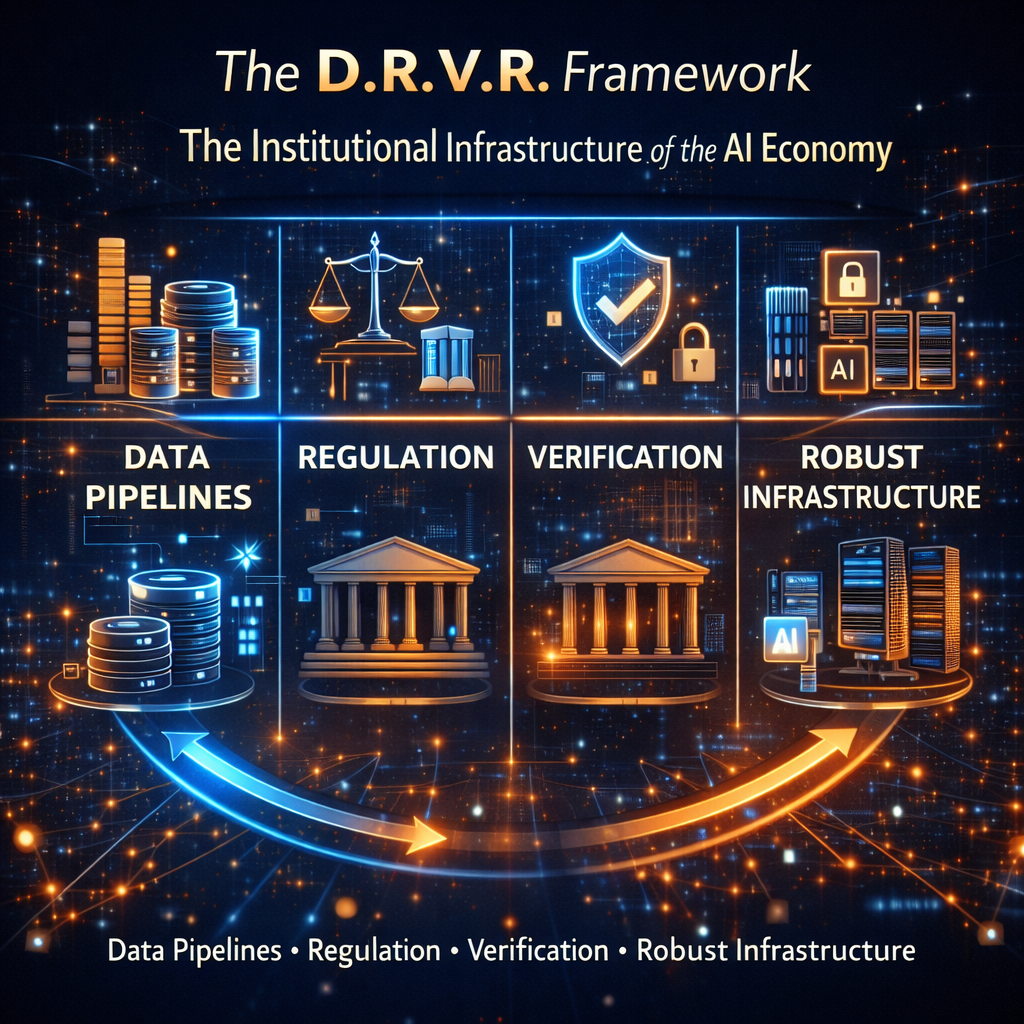

Layer 2: D.R.V.R. — The Institutional Infrastructure

- Delegation

Delegation answers the most important question in the AI economy:

What is the machine actually allowed to decide?

Not every task should be delegated. Reordering low-value office supplies is not the same as denying insurance coverage. Suggesting a meeting slot is not the same as freezing a bank account. Routing a service request is not the same as determining legal liability.

Delegation infrastructure defines the authority boundary. It determines whether AI can advise, recommend, approve, execute, or only escalate. This is the architecture of machine authority.

Without delegation rules, organizations confuse capability with permission.
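A delegation boundary can be expressed as an explicit policy table that is checked before anything executes. The sketch below is illustrative only: the ordering of the `Authority` levels, the task names, and the levels assigned to them are assumptions of mine, not a recommended policy. The design choice worth noting is the default: a task with no entry in the table gets the lowest level, so capability never silently becomes permission.

```python
from enum import IntEnum

class Authority(IntEnum):
    """Authority levels from the text; treating them as ordered is an assumption."""
    ESCALATE_ONLY = 0
    ADVISE = 1
    RECOMMEND = 2
    APPROVE = 3
    EXECUTE = 4

# Hypothetical policy table: the highest authority delegated per task type.
DELEGATION_POLICY = {
    "reorder_office_supplies": Authority.EXECUTE,
    "suggest_meeting_slot": Authority.RECOMMEND,
    "freeze_bank_account": Authority.ADVISE,
    "deny_insurance_coverage": Authority.ESCALATE_ONLY,
}

def is_permitted(task: str, requested: Authority) -> bool:
    """Allow an action only up to the delegated level; unknown tasks escalate."""
    granted = DELEGATION_POLICY.get(task, Authority.ESCALATE_ONLY)
    return requested <= granted

print(is_permitted("reorder_office_supplies", Authority.EXECUTE))  # True
print(is_permitted("freeze_bank_account", Authority.EXECUTE))      # False
print(is_permitted("unlisted_task", Authority.ADVISE))             # False
```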

- Representation

AI can only act on what becomes legible to it.

Representation infrastructure is the layer that translates messy, incomplete, real-world conditions into machine-usable signals. This includes identity resolution, data quality, event logging, documentation, taxonomy, workflow capture, sensor coverage, and contextual metadata.

This matters more than most organizations realize. If an agricultural system cannot represent soil variation, weather volatility, informal labor, or local market conditions, it will optimize the wrong things. If a lending system cannot represent irregular income patterns or nontraditional economic behavior, it may misread real people and produce apparently “rational” but deeply flawed outcomes.

The AI economy will reward institutions that make reality legible fairly, not just efficiently.

- Verification

Once AI begins making or triggering decisions, stakeholders need evidence.

Verification infrastructure proves that a system acted within policy, used approved context, respected thresholds, and produced outputs that can be examined after the fact. This includes decision records, logs, lineage, testing, validation procedures, monitoring, and policy traceability.

This is not an optional extra. NIST’s AI RMF treats governance as a cross-cutting function across the AI lifecycle, and the broader global direction of AI regulation is clearly toward lifecycle accountability, documentation, transparency, and controls for higher-impact systems. (NIST)

Verification is what turns:

“Trust us”

into

“Here is the evidence.”
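One common way to make decision records examinable after the fact is a hash-chained log, in which each entry's hash covers the previous entry's hash, so a later edit to any record becomes detectable. The sketch below assumes that technique purely for illustration. The `DecisionLog` class and the record fields are hypothetical, and a production evidence trail would also need durable storage, access control, and signed timestamps.

```python
import hashlib
import json

def record_hash(record: dict, prev_hash: str) -> str:
    """Hash a record together with the previous entry's hash."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

class DecisionLog:
    """Append-only list of (record, hash) pairs forming a hash chain."""

    def __init__(self):
        self.entries = []

    def append(self, record: dict) -> str:
        prev = self.entries[-1][1] if self.entries else ""
        stored = dict(record)  # copy so later caller edits do not alter the log
        digest = record_hash(stored, prev)
        self.entries.append((stored, digest))
        return digest

    def verify(self) -> bool:
        """Recompute the chain; any altered record breaks it."""
        prev = ""
        for record, digest in self.entries:
            if record_hash(record, prev) != digest:
                return False
            prev = digest
        return True

log = DecisionLog()
log.append({"case": "c-1", "action": "hold", "policy": "fraud-v2", "risk": 0.82})
log.append({"case": "c-2", "action": "approve", "policy": "fraud-v2", "risk": 0.12})
print(log.verify())  # True

log.entries[0][0]["action"] = "approve"  # simulate tampering with history
print(log.verify())  # False
```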

- Recourse

Every serious economic system needs a way back.

Recourse infrastructure provides mechanisms to challenge, pause, unwind, reverse, or remediate AI-driven outcomes. This matters because many AI decisions create effects that are difficult to undo once acted upon.

Imagine a customer wrongly flagged for fraud. The financial loss may be temporary, but the trust erosion and service disruption are real. Imagine a small business loan incorrectly denied by an automated process. The applicant may miss a time-sensitive opportunity. Imagine a job candidate screened out at scale because the system used poor proxies. The organization may never even know who was unfairly excluded.

Recourse is not a legal afterthought. It is core operating architecture.
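In software terms, recourse means every executed decision carries both a defined path for challenge and a concrete way to undo its effect. The following is a minimal sketch under assumptions: the four statuses, the allowed transitions, and the `undo` callback are hypothetical names chosen for illustration, and real remediation usually involves more than flipping a flag.

```python
from enum import Enum

class Status(Enum):
    EXECUTED = "executed"
    CHALLENGED = "challenged"
    REVERSED = "reversed"
    UPHELD = "upheld"

# Hypothetical transition rules: a decision must be challenged before review.
ALLOWED = {
    Status.EXECUTED: {Status.CHALLENGED},
    Status.CHALLENGED: {Status.REVERSED, Status.UPHELD},
    Status.REVERSED: set(),
    Status.UPHELD: set(),
}

class Decision:
    def __init__(self, decision_id: str, undo):
        self.decision_id = decision_id
        self.status = Status.EXECUTED
        self.history = [Status.EXECUTED]
        self._undo = undo  # callable that reverses the real-world effect

    def _check(self, new: Status) -> None:
        if new not in ALLOWED[self.status]:
            raise ValueError(f"cannot move from {self.status.value} to {new.value}")

    def _set(self, new: Status) -> None:
        self.status = new
        self.history.append(new)

    def challenge(self) -> None:
        self._check(Status.CHALLENGED)
        self._set(Status.CHALLENGED)

    def uphold(self) -> None:
        self._check(Status.UPHELD)
        self._set(Status.UPHELD)

    def reverse(self) -> None:
        self._check(Status.REVERSED)
        self._undo()  # undo the effect first, then record the reversal
        self._set(Status.REVERSED)

account = {"frozen": True}  # effect of a wrongly triggered fraud flag
decision = Decision("case-1", undo=lambda: account.update(frozen=False))
decision.challenge()
decision.reverse()
print(account["frozen"], decision.status.value)  # False reversed
```

Storing the undo path at the moment of execution is the design choice that matters here: if a decision cannot supply one, that is a signal it may not be safe to delegate.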

How the two layers work together

Once you see both layers, the larger picture becomes clear.

C.O.R.E. explains how intelligence functions.

D.R.V.R. explains how institutions contain, govern, and legitimize that intelligence.

One without the other produces failure.

If you have C.O.R.E. without D.R.V.R., you get smart systems that move quickly but create trust, governance, and accountability problems.

If you have D.R.V.R. without C.O.R.E., you get control structures without meaningful intelligence gains.

Transformation requires both.

This is why the future of AI competition will not be won merely by firms with the best models. It will be won by firms that build the best operating architecture around intelligence.

Why this matters for markets, not just enterprises

This argument is larger than enterprise software.

The AI economy is reshaping pricing, underwriting, logistics, customer acquisition, compliance, public services, and platform ecosystems. OECD research points to the productivity and innovation upside of AI, while WEF discussions increasingly emphasize that trust, interoperability, governance, and practical implementation must evolve alongside AI capability. (OECD)

That means the winners of the next decade will not simply be those who adopt AI faster. They will be those who institutionalize it better.

In practical terms, that means stronger delegation boundaries, richer representation of reality, better verification and evidence trails, usable recourse, continuous learning from outcomes, and tighter integration with operational systems.

This is the difference between AI as novelty and AI as infrastructure.

A simple way to understand the future

Think of electricity.

Electricity did not transform economies merely because generation improved. Transformation required grids, safety standards, metering, regulation, industrial redesign, and new operating models for factories and cities.

The internet did not transform markets simply because computers could connect. It required protocols, browsers, payment systems, identity systems, cloud infrastructure, and cybersecurity.

AI will follow the same pattern.

Better models are like better generators.

C.O.R.E. is the decision engine.

D.R.V.R. is the institutional grid.

Without that grid, intelligence remains impressive but unreliable. With it, intelligence becomes a scalable economic force.

The board-level implication

The board-level question is no longer whether AI matters. That debate is over.

The real board-level question is whether the organization is designing the operating architecture required to turn intelligence into durable institutional capability.

That means leaders must ask:

- Where is AI only advising, and where is it acting?

- What authority has actually been delegated?

- What realities are poorly represented in our systems?

- Can we verify how important AI-driven decisions were made?

- What happens when the system is wrong?

- Are we learning from outcomes, or merely celebrating deployment?

These are not technical questions disguised as governance questions. They are strategic questions about competitiveness, trust, resilience, and institutional legitimacy.

For boards and C-suites, that is the new frontier of AI strategy.

The operating architecture of the AI economy can be understood through two layers. The first layer, C.O.R.E., governs how machine intelligence understands context, optimizes decisions, executes actions, and evolves through evidence. The second layer, D.R.V.R., governs how institutions delegate authority, represent real-world signals, verify decisions, and provide recourse when systems are wrong. Together these layers form the institutional operating model required for trustworthy AI at scale.

Conclusion: The real operating model of the AI economy

The AI economy will not be defined by intelligence alone. Intelligence is becoming more abundant, more accessible, and more modular. The harder challenge is building the operating architecture that allows that intelligence to function safely, credibly, and at scale.

That is why the next era belongs not simply to model builders, but to architecture builders.

The institutions that win will understand this early:

C.O.R.E. makes intelligence operational.

D.R.V.R. makes intelligence governable.

Together, they form the operating architecture of the AI economy.

And that is the deeper shift now underway. AI is no longer just a tool inside the enterprise. It is becoming part of the institutional machinery through which markets sense, decide, act, and adapt.

The next battle, therefore, is not only for smarter models.

It is for the architecture that makes machine intelligence economically usable, institutionally legitimate, and socially durable.

That is where the future of the AI economy will be decided.

The Intelligence-Native Enterprise Doctrine

This article is part of a larger strategic body of work that defines how AI is transforming the structure of markets, institutions, and competitive advantage. To explore the full doctrine, read the following foundational essays:

- The AI Decade Will Reward Synchronization, Not Adoption

Why enterprise AI strategy must shift from tools to operating models.

https://www.raktimsingh.com/the-ai-decade-will-reward-synchronization-not-adoption-why-enterprise-ai-strategy-must-shift-from-tools-to-operating-models/

- The Third-Order AI Economy

The category map boards must use to see the next Uber moment.

https://www.raktimsingh.com/third-order-ai-economy/

- The Intelligence Company

A new theory of the firm in the AI era — where decision quality becomes the scalable asset.

https://www.raktimsingh.com/intelligence-company-new-theory-firm-ai/

- The Judgment Economy

How AI is redefining industry structure — not just productivity.

https://www.raktimsingh.com/judgment-economy-ai-industry-structure/

- Digital Transformation 3.0

The rise of the intelligence-native enterprise.

https://www.raktimsingh.com/digital-transformation-3-0-the-rise-of-the-intelligence-native-enterprise/

- Industry Structure in the AI Era

Why judgment economies will redefine competitive advantage.

https://www.raktimsingh.com/industry-structure-in-the-ai-era-why-judgment-economies-will-redefine-competitive-advantage/

Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here — intelligence-native operating models, control planes, decision integrity, and accountable autonomy — have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence — where economics, accountability, control, and decision integrity function as a coherent architecture.

Glossary

AI economy

The emerging economic system in which AI influences decisions, workflows, market interactions, productivity, and value creation.

Operating architecture

The combination of technical and institutional structures that allow AI to function reliably in production.

C.O.R.E.

A four-part intelligence loop: Comprehend context, Optimize decisions, Realize action, and Evolve through evidence.

D.R.V.R.

A four-part institutional infrastructure: Delegation, Representation, Verification, and Recourse.

Delegation infrastructure

The rules and controls that define what AI systems are allowed to decide and execute.

Representation infrastructure

The data, context, and real-world signal layer that makes reality legible to AI systems.

Verification infrastructure

The evidence, logging, testing, lineage, and policy-traceability layer that proves how AI acted.

Recourse infrastructure

The mechanisms that allow AI-driven outcomes to be challenged, paused, reversed, or remediated.

Institutional AI

AI embedded into real operational, governance, and decision systems rather than used only as an isolated productivity tool.

Decision architecture

The structure through which choices are generated, constrained, authorized, executed, and reviewed.

AI control plane

The governance and coordination layer that manages AI behavior, permissions, monitoring, and policy adherence across systems.

Trustworthy AI

AI designed and operated in ways that support reliability, accountability, transparency, safety, and governance.

FAQ

What is the operating architecture of the AI economy?

It is the two-layer structure required to make AI work at scale in real institutions: an intelligence layer that helps systems understand, decide, act, and learn, and an institutional layer that governs what those systems are allowed to do and how their actions are verified and challenged.

Why is intelligence alone not enough?

Because smart models do not automatically create trustworthy markets or safe enterprises. Real transformation requires authority boundaries, operational workflows, evidence trails, and recourse mechanisms.

What does C.O.R.E. stand for?

C.O.R.E. stands for Comprehend context, Optimize decisions, Realize action, and Evolve through evidence.

What does D.R.V.R. stand for?

D.R.V.R. stands for Delegation, Representation, Verification, and Recourse.

Why is this framework important for boards and C-suites?

Because AI is moving from assistance to action. Once systems start affecting pricing, lending, routing, claims, compliance, hiring, and customer experience, leadership must govern not only model performance but institutional consequences.

How does this relate to AI governance?

AI governance is one part of the institutional layer. This article argues for a broader operating architecture that pairs governance with explicit delegation of authority, fair representation of real-world conditions, verification, and recourse.

Is this only relevant for large enterprises?

No. The same logic applies to banks, hospitals, governments, logistics firms, software platforms, insurers, manufacturers, and even smaller businesses using agentic AI or automated decision systems.

What is the biggest mistake organizations make with AI?

They assume that once a model is accurate, deployment is the main challenge. In practice, the real challenge is designing the architecture around the model so it can operate safely and credibly in the real world.

What is the operating architecture of the AI economy?

The operating architecture of the AI economy refers to the technical and institutional structures required for AI systems to function safely and effectively at scale. It includes both the intelligence layer that governs machine decision making and the institutional layer that governs authority, accountability, and recourse.

What is the C.O.R.E. framework?

C.O.R.E. is a four-stage intelligence loop describing how AI systems operate: Comprehend context, Optimize decisions, Realize action, and Evolve through evidence.

What is the D.R.V.R. framework?

D.R.V.R. describes the institutional infrastructure required for AI systems to operate within organizations and markets: Delegation, Representation, Verification, and Recourse.

References and further reading

For factual context on AI governance, regulation, and adoption trends, see the European Commission’s AI Act overview and timeline, NIST’s AI Risk Management Framework materials, OECD research on the effects of generative AI on productivity and innovation, and World Economic Forum commentary on AI governance and trust. (Digital Strategy)