The DRIVER Layer: Why Enterprise AI Needs a Governance Architecture Beyond Models

Most AI conversations still focus on models.

Which model is more intelligent? Which model reasons better? Which model writes better code? Which model handles multimodal inputs? These are useful questions, but they miss the deeper enterprise issue.

In real organizations, the hardest question is not:

Can AI decide?

The harder question is:

Who allowed AI to decide, how was the decision checked, what action was taken, and what happens if the action was wrong?

This is where the DRIVER layer becomes essential.

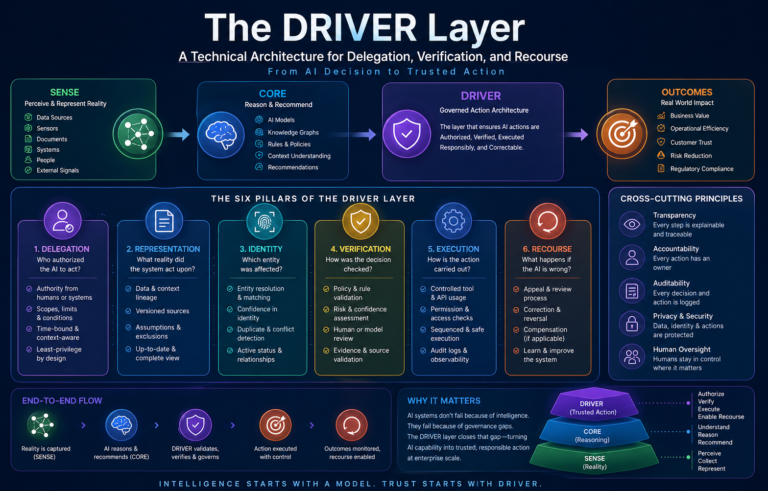

In the SENSE–CORE–DRIVER framework of the Representation Economy, SENSE makes reality machine-readable.

CORE interprets that reality and produces reasoning, recommendations, or decisions.

But DRIVER is the layer that turns those decisions into governed action.

Without DRIVER, AI remains a prediction engine.

With DRIVER, AI becomes an accountable execution system.

And in the AI era, this distinction will define which enterprises can safely scale intelligent systems and which ones will remain stuck in experiments.

The DRIVER Layer is the governance and execution architecture in enterprise AI that ensures AI decisions are delegated, verified, executed, and corrected responsibly. It enables organizations to move from AI recommendations to trusted autonomous action by managing authority, identity, verification, execution, and recourse.

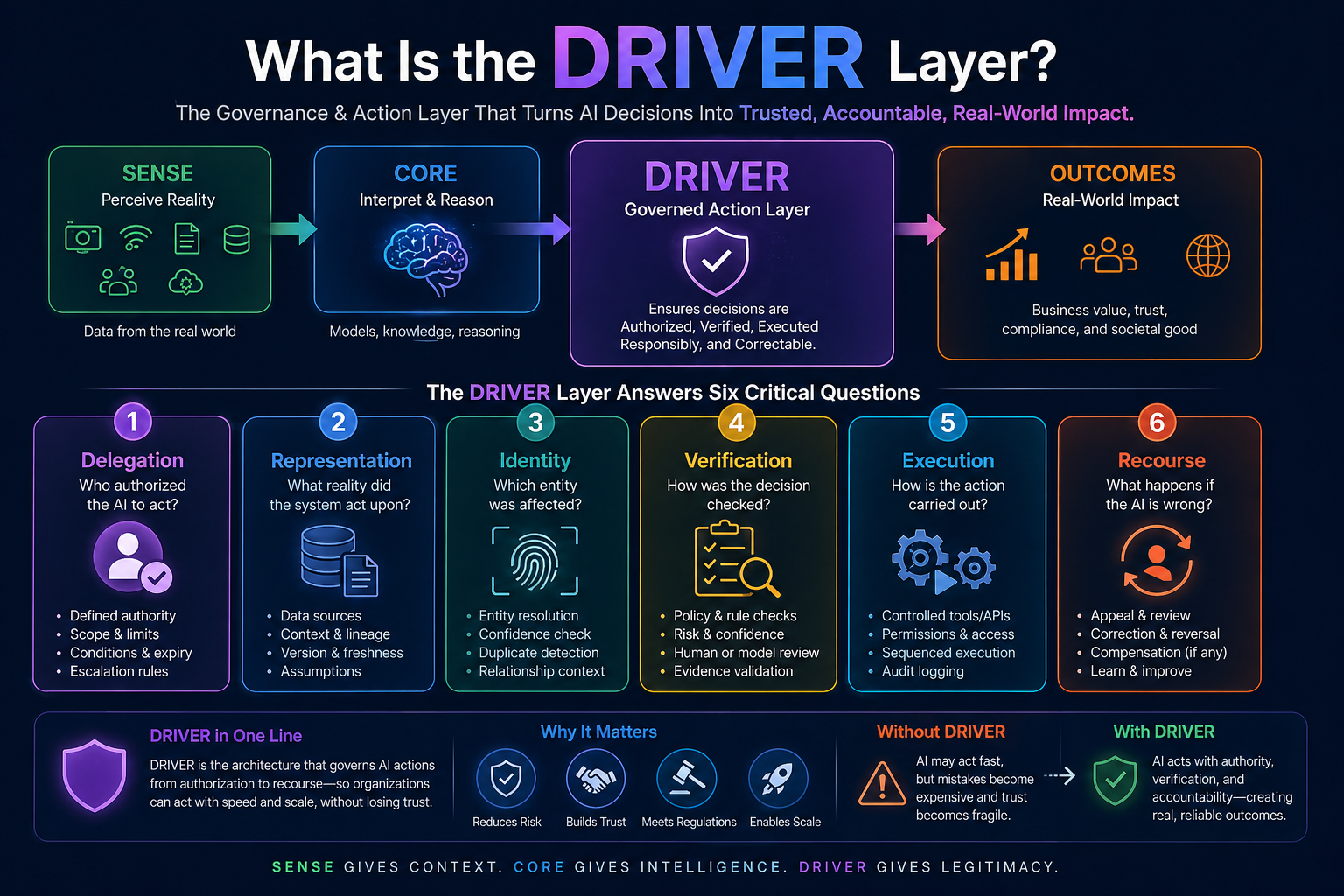

What Is the DRIVER Layer?

The DRIVER layer is the technical and governance architecture that controls how AI systems act in the real world.

It answers six core questions:

Delegation: Who authorized the AI system to act?

Representation: What version of reality did the system use?

Identity: Which entity, person, asset, account, customer, document, or system was affected?

Verification: How was the decision or action checked?

Execution: How was the action carried out?

Recourse: What happens if the system is wrong?

This is why DRIVER is not just an “AI safety” concept. It is an enterprise architecture concept.

It sits between AI reasoning and business impact.

A model may recommend changing a customer credit limit. A model may suggest stopping a payment. A model may trigger a cyber response. A model may generate a legal clause. A model may decide which supplier order should be accelerated.

But before any of these actions happen, an enterprise must know:

Who delegated this authority?

What policy applies?

What evidence was used?

Was the output verified?

Was the action logged?

Can the affected party appeal?

Can the action be reversed, corrected, or compensated?

That entire architecture is DRIVER.

Global AI governance frameworks are already moving in this direction. NIST’s AI Risk Management Framework emphasizes governance, documentation, transparency, accountability, and human review as part of managing AI risk.

The OECD AI Principles emphasize trustworthy AI, accountability, human rights, and democratic values. The EU AI Act places specific emphasis on risk management, transparency, and human oversight for high-risk AI systems.

These developments point to the same conclusion: AI systems cannot be judged only by intelligence; they must also be judged by how responsibly they are authorized, verified, executed, and corrected. (NIST)

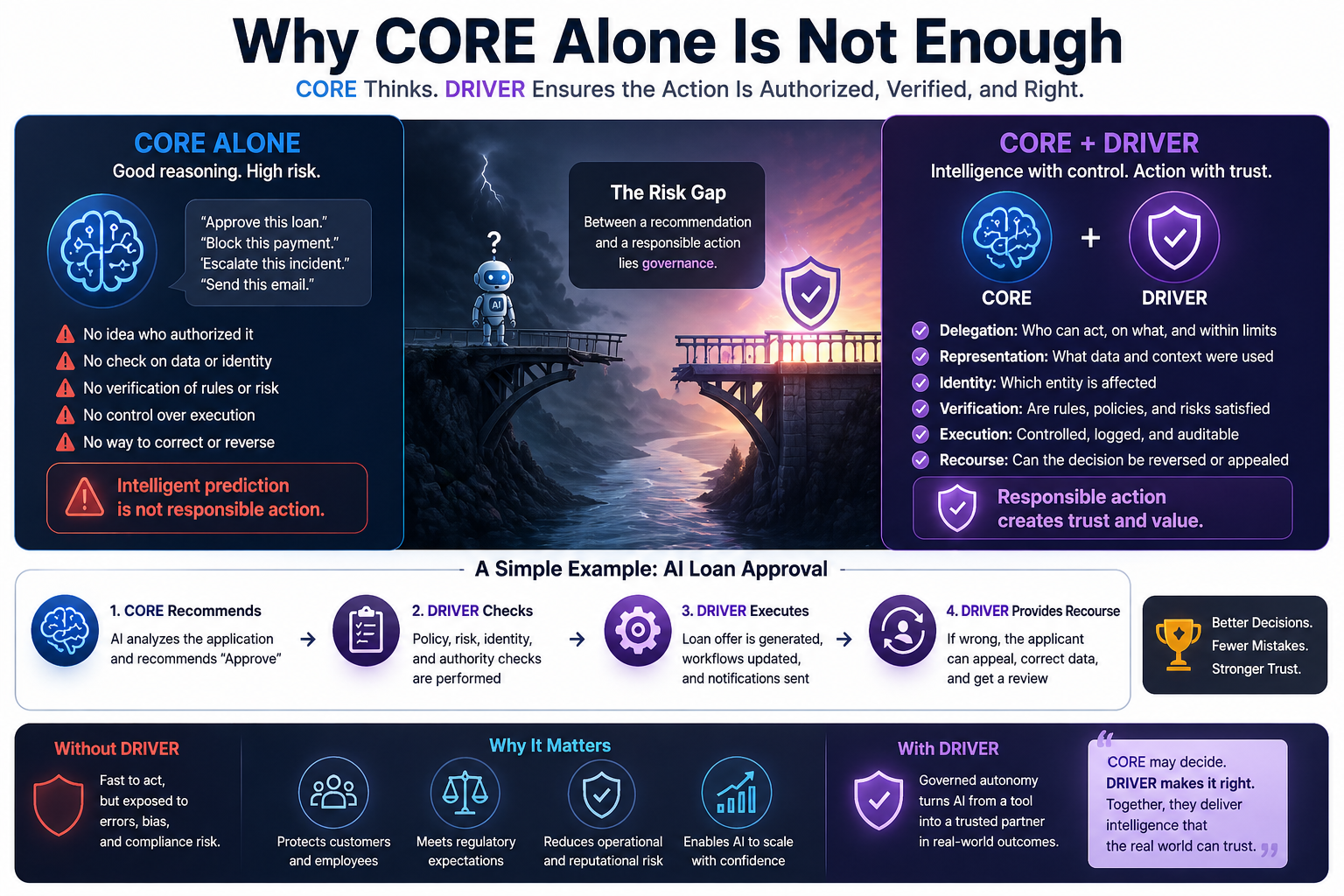

Why CORE Alone Is Not Enough

Most enterprises are currently overinvesting in CORE.

They are building copilots, agents, AI-assisted workflows, reasoning systems, retrieval pipelines, and multimodal interfaces. These are important. But CORE by itself does not create institutional trust.

CORE may say:

“Approve this loan.”

“Escalate this incident.”

“Reject this claim.”

“Pay this invoice.”

“Terminate this workflow.”

“Send this response.”

“Modify this configuration.”

But CORE does not automatically know whether it has the right to act.

It may reason well and still act wrongly.

A simple example: imagine an AI agent in a bank. It detects suspicious behavior in an account and recommends freezing the account. From a model perspective, the reasoning may be statistically strong.

From a customer perspective, the action may be devastating if wrong. The customer may be unable to pay rent, salary, school fees, or medical bills.

So the key question is not only whether the AI detected risk.

The key question is whether the organization had a DRIVER architecture around that detection.

Was the account identity resolved correctly?

Was the risk signal recent or outdated?

Was the decision checked against policy?

Was a human required before freezing?

Was the customer informed?

Was there a way to appeal?

Could a partial freeze have been applied instead of a full one?

Was every step auditable?

That is the difference between intelligent prediction and responsible action.

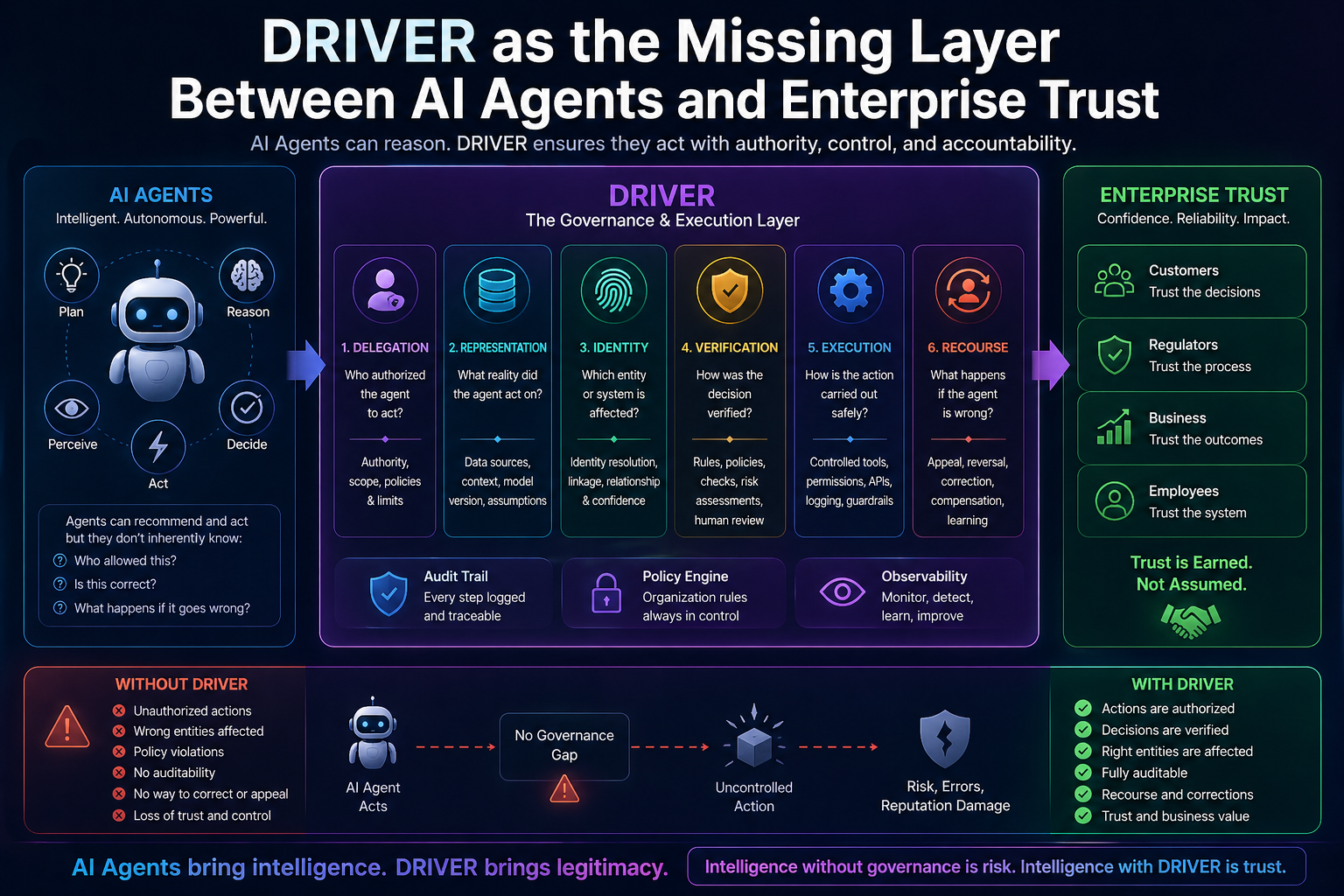

DRIVER as the Missing Layer Between AI Agents and Enterprise Trust

The rise of AI agents makes DRIVER even more important.

A chatbot usually answers.

An agent acts.

That small difference changes everything.

When an AI agent books a meeting, updates a CRM record, changes cloud permissions, triggers a refund, modifies a price, approves a document, or sends a customer communication, it crosses a boundary. It moves from information generation to delegated action.

This is why agentic AI cannot be governed only by prompt engineering.

It needs a runtime architecture for authority, policy, identity, logs, verification, rollback, escalation, and recourse.

Recent agent governance discussions increasingly focus on policy engines, trust boundaries, identity controls, tool-use governance, and reliability engineering for autonomous AI agents. Security conversations around agentic AI also highlight risks such as tool misuse, identity abuse, cascading failures, and unauthorized actions. These are not abstract concerns; they are exactly the failure modes DRIVER is designed to address. (TECHCOMMUNITY.MICROSOFT.COM)

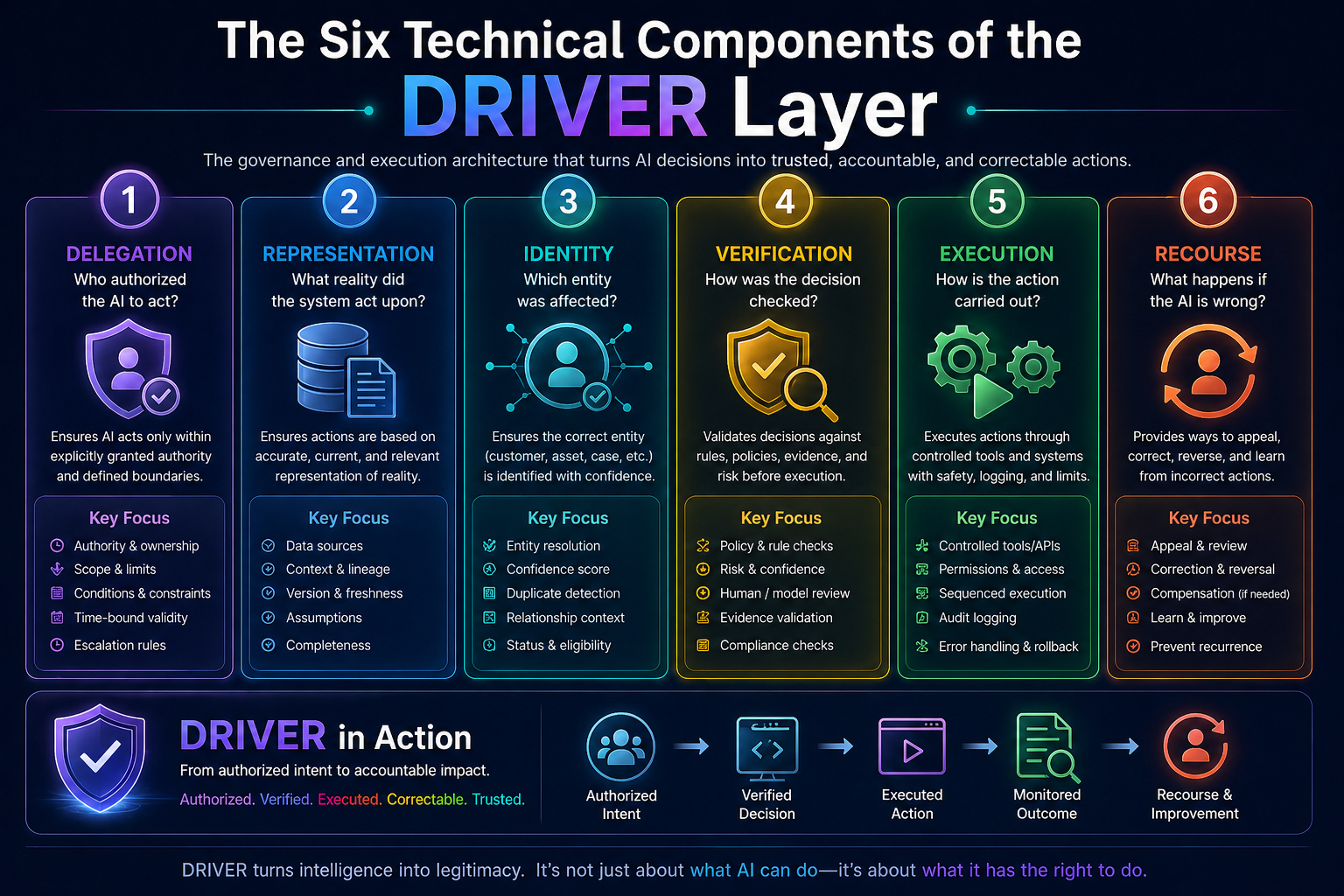

The Six Technical Components of the DRIVER Layer


Delegation: Who Authorized the AI to Act?

Delegation is the first principle of DRIVER.

An AI system should not act simply because it can act. It should act only because a valid authority allowed it to act within a defined boundary.

In human organizations, delegation is normal. A manager delegates approval authority to a team lead. A bank delegates transaction authority to a branch officer. A doctor delegates routine monitoring to a nurse. A company delegates purchase limits to different roles.

AI needs the same structure.

But in AI, delegation must be machine-readable.

That means the system must know:

What action is allowed?

Who granted permission?

What is the scope of authority?

What is the approval limit?

What data can be accessed?

Which tools can be invoked?

When does the delegation expire?

What conditions require escalation?

For example, an AI procurement agent may be allowed to reorder office supplies below a small threshold. But it should not be allowed to sign a multi-year vendor contract. An IT operations agent may restart a failed service, but it should not delete production data. A customer service agent may issue a small refund, but not close an enterprise account.
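The delegation questions above can be made machine-readable. Below is a minimal Python sketch, assuming a hypothetical `DelegationGrant` record and `authorize` function (neither comes from any specific product): it encodes scope, a tool allowlist, an approval limit, and an expiry date, and it escalates in-scope requests that exceed the limit instead of executing them.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DelegationGrant:
    """Hypothetical machine-readable delegation for one AI agent."""
    agent_id: str
    granted_by: str            # who delegated the authority
    allowed_actions: frozenset # scope: which actions are permitted
    allowed_tools: frozenset   # which tools may be invoked
    approval_limit: float      # maximum value the agent may commit on its own
    expires_at: datetime       # when the delegation lapses


def authorize(grant, action, tool, amount, now):
    """Return ('allow' | 'escalate' | 'deny', reason) for a requested action."""
    if now >= grant.expires_at:
        return "deny", "delegation expired"
    if action not in grant.allowed_actions:
        return "deny", f"{action} is outside delegated scope"
    if tool not in grant.allowed_tools:
        return "deny", f"{tool} is not an approved tool"
    if amount > grant.approval_limit:
        return "escalate", "amount exceeds approval limit; human approval required"
    return "allow", f"authorized by {grant.granted_by}"


procurement_agent = DelegationGrant(
    agent_id="procurement-agent-01",
    granted_by="head-of-procurement",
    allowed_actions=frozenset({"reorder_office_supplies"}),
    allowed_tools=frozenset({"purchasing_api"}),
    approval_limit=500.0,
    expires_at=datetime(2030, 1, 1, tzinfo=timezone.utc),
)

now = datetime(2025, 6, 1, tzinfo=timezone.utc)
print(authorize(procurement_agent, "reorder_office_supplies", "purchasing_api", 120.0, now)[0])   # allow
print(authorize(procurement_agent, "reorder_office_supplies", "purchasing_api", 9000.0, now)[0])  # escalate
print(authorize(procurement_agent, "sign_vendor_contract", "purchasing_api", 100.0, now)[0])      # deny
```

The design choice worth noting: anything outside scope is denied outright, while an in-scope request above the limit is escalated to a human. This keeps the agent inside bounded autonomy without blocking legitimate work.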

Delegation turns AI autonomy into bounded autonomy.

Without delegation, every AI action becomes a risk.

With delegation, every AI action becomes part of a controlled authority chain.


Representation: What Reality Did the System Act Upon?

AI systems do not act on reality directly. They act on representations of reality.

A customer profile is a representation.

A risk score is a representation.

A digital twin is a representation.

A context graph is a representation.

An identity graph is a representation.

A workflow state is a representation.

A document summary is a representation.

A sensor reading is a representation.

The quality of action depends on the quality of representation.

If representation is wrong, even a good model can produce a harmful decision.

Consider a hospital scheduling system. If the system represents a patient as “low urgency” because one medical record was not linked correctly, the AI may recommend a later appointment. The model may not be biased or broken. It may simply be acting on an incomplete representation.

This is why DRIVER must preserve representation lineage.

The system should know:

Which data sources were used?

When were they updated?

Which entity graph connected the records?

Which assumptions were made?

Which context was excluded?

Which version of the policy applied?

Which model or reasoning path produced the decision?

In the Representation Economy, this is a major strategic point.

Organizations will not win only because they have better AI models. They will win because they can represent reality more accurately, act on it responsibly, and correct it when needed.
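One way to preserve representation lineage is to store, next to every decision, a record that answers the lineage questions listed earlier. The sketch below is a hypothetical Python structure (the field names and the 30-day freshness window are illustrative assumptions, not a standard); `stale_sources` flags inputs that were already outdated when the decision was made, as in the hospital scheduling example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Tuple


@dataclass(frozen=True)
class SourceSnapshot:
    name: str
    last_updated: datetime


@dataclass(frozen=True)
class RepresentationLineage:
    """Hypothetical record of the version of reality behind one decision."""
    decision_id: str
    sources: Tuple[SourceSnapshot, ...]  # which data sources were used, and when they were updated
    entity_graph_version: str            # which entity graph connected the records
    assumptions: Tuple[str, ...]         # assumptions made during reasoning
    excluded_context: Tuple[str, ...]    # context left out of the decision
    policy_version: str                  # which version of the policy applied
    model_version: str                   # which model or reasoning path produced the decision


def stale_sources(lineage: RepresentationLineage, decided_at: datetime,
                  max_age: timedelta) -> List[str]:
    """Names of sources older than max_age at the moment of decision."""
    return [s.name for s in lineage.sources if decided_at - s.last_updated > max_age]


decided_at = datetime(2025, 6, 1, tzinfo=timezone.utc)
lineage = RepresentationLineage(
    decision_id="triage-7731",
    sources=(
        SourceSnapshot("ehr_primary", datetime(2025, 5, 31, tzinfo=timezone.utc)),
        SourceSnapshot("referral_records", datetime(2025, 3, 2, tzinfo=timezone.utc)),
    ),
    entity_graph_version="patient-graph-v14",
    assumptions=("no unlinked records for this patient",),
    excluded_context=("external lab results",),
    policy_version="scheduling-policy-2025.2",
    model_version="triage-model-3.1",
)
print(stale_sources(lineage, decided_at, timedelta(days=30)))  # ['referral_records']
```

Kept alongside audit logs, a record like this helps a reviewer see whether a bad outcome came from the model or from the representation it was given.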


Identity: Which Entity Was Affected?

Identity is one of the most underestimated problems in enterprise AI.

Before an AI system acts, it must know exactly which entity it is acting upon.

That entity may be a customer, employee, machine, shipment, invoice, supplier, loan, insurance claim, software service, contract, or financial transaction.

If identity is wrong, action becomes dangerous.

A simple example: two customers have similar names. One has a clean history. The other has a fraud alert. If the AI system merges or confuses their identities, it may deny service to the wrong person.

In enterprise AI, this is not rare.

Data lives across many systems. Names vary. IDs change. Systems use different keys. Mergers create duplicate records. Old records remain active. Vendors and customers may appear under multiple legal names. Devices may be replaced but retain operational history. Employees may move roles but retain permissions.

So DRIVER needs identity assurance.

Before execution, the system must verify:

Is this the correct entity?

What confidence exists in the identity match?

Are there duplicate records?

Is there a conflict between systems?

Is the entity active, inactive, suspended, or under review?

Does the action affect one entity or many connected entities?

This is where identity graphs, entity resolution, and context graphs become part of governance architecture.

Identity is not just a data management problem.

In AI systems, identity is an action safety problem.
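The identity-assurance checks listed above can be expressed as a pre-execution gate. This is a minimal sketch under stated assumptions: the `IdentityMatch` fields, the 0.95 confidence threshold, and the single-entity fan-out limit are hypothetical, and the match score is assumed to come from whatever entity-resolution system the enterprise already runs.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class IdentityMatch:
    entity_id: str
    confidence: float                      # entity-resolution match score in [0, 1]
    duplicate_candidates: Tuple[str, ...]  # other records that plausibly match
    systems_disagree: bool                 # do source systems conflict on key attributes?
    status: str                            # "active", "inactive", "suspended" or "under_review"
    connected_entities: int                # how many entities the action would touch


def identity_gate(match: IdentityMatch, min_confidence: float = 0.95,
                  max_fan_out: int = 1) -> List[str]:
    """Return reasons to block execution; an empty list means identity is assured."""
    issues = []
    if match.confidence < min_confidence:
        issues.append("low match confidence")
    if match.duplicate_candidates:
        issues.append("possible duplicate records")
    if match.systems_disagree:
        issues.append("conflict between systems")
    if match.status != "active":
        issues.append(f"entity is {match.status}")
    if match.connected_entities > max_fan_out:
        issues.append("action affects multiple connected entities")
    return issues


# Two customers with similar names: the resolver is unsure which one is meant.
ambiguous = IdentityMatch("CUST-1043", 0.81, ("CUST-2291",), False, "active", 1)
print(identity_gate(ambiguous))  # ['low match confidence', 'possible duplicate records']
```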


Verification: How Was the Decision Checked?

Verification is the checkpoint between recommendation and execution.

It asks: should this AI-generated decision be trusted enough to act?

Verification can happen in many ways.

For low-risk actions, automated checks may be enough.

For medium-risk actions, policy checks and confidence thresholds may be required.

For high-risk actions, human review may be mandatory.

For regulated actions, full audit trails and explainability may be required.

For example, an AI email assistant suggesting a response may require minimal verification. But an AI system approving a loan, rejecting a claim, changing clinical priority, or modifying production infrastructure requires stronger verification.
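A simple way to make risk-proportionate verification explicit is a lookup from risk tier to required checks. The four tiers mirror the cases above; the check names, the action-to-tier assignments, the rule that higher tiers inherit every lower-tier check, and the fail-safe default of HIGH for unknown actions are all assumptions made for illustration.

```python
from enum import IntEnum
from typing import Dict, List


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    REGULATED = 4


# Checks introduced at each tier (hypothetical names).
CHECKS_INTRODUCED_AT: Dict[Risk, List[str]] = {
    Risk.LOW: ["automated_checks"],
    Risk.MEDIUM: ["policy_check", "confidence_threshold"],
    Risk.HIGH: ["human_review"],
    Risk.REGULATED: ["full_audit_trail", "explanation"],
}

# Hypothetical classification of actions into tiers.
ACTION_RISK: Dict[str, Risk] = {
    "suggest_email_reply": Risk.LOW,
    "modify_production_infrastructure": Risk.HIGH,
    "reject_claim": Risk.REGULATED,
}


def required_checks(action: str) -> List[str]:
    """Every check from the action's tier and all tiers below it."""
    tier = ACTION_RISK.get(action, Risk.HIGH)  # unknown actions default to HIGH
    checks: List[str] = []
    for level in Risk:                         # iterates LOW, MEDIUM, HIGH, REGULATED
        if level <= tier:
            checks.extend(CHECKS_INTRODUCED_AT[level])
    return checks


print(required_checks("suggest_email_reply"))  # ['automated_checks']
print(required_checks("reject_claim"))
```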

Verification may include:

Policy validation

Rule-based checks

Human approval

Second-model review

Evidence matching

Source validation

Simulation before action

Risk scoring

Compliance checks

Conflict detection

Red-team style testing

Runtime monitoring

The best DRIVER architectures do not treat verification as a single gate. They treat it as a layered process.

A cyber AI agent may first verify whether the threat signal is real. Then it may verify which system is affected. Then it may verify whether the proposed containment action is allowed. Then it may verify whether business-critical services will be impacted. Then it may require human approval if the blast radius is high.

This is how enterprises move from blind automation to controlled autonomy.
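The layered cyber example can be sketched as an ordered chain of gates: any failed gate rejects the action, and high business impact or a large blast radius routes it to a human. Everything here (the `ContainmentProposal` fields, the allowed-action list, and the blast-radius limit of 3) is a hypothetical illustration of the pattern, not a reference design.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass(frozen=True)
class ContainmentProposal:
    signal_confirmed: bool           # was the threat signal corroborated?
    affected_host: Optional[str]     # resolved target system, None if unknown
    action: str                      # proposed containment action
    critical_services_impacted: int  # business-critical services that would be disrupted
    blast_radius: int                # number of systems the action would touch


ALLOWED_CONTAINMENT = {"isolate_host", "revoke_session", "block_ip"}  # hypothetical policy


def threat_is_real(p: ContainmentProposal) -> Optional[str]:
    return None if p.signal_confirmed else "threat signal not confirmed"


def target_identified(p: ContainmentProposal) -> Optional[str]:
    return None if p.affected_host else "affected system not identified"


def action_allowed(p: ContainmentProposal) -> Optional[str]:
    return None if p.action in ALLOWED_CONTAINMENT else f"{p.action} is not an allowed action"


# Gates run in order; each returns a failure reason or None.
GATES: List[Callable[[ContainmentProposal], Optional[str]]] = [
    threat_is_real, target_identified, action_allowed,
]


def verify(p: ContainmentProposal, max_blast_radius: int = 3) -> Tuple[str, str]:
    for gate in GATES:
        reason = gate(p)
        if reason is not None:
            return "reject", reason
    if p.critical_services_impacted > 0 or p.blast_radius > max_blast_radius:
        return "human_approval", "business impact or blast radius too high for autonomy"
    return "execute", "all gates passed"


print(verify(ContainmentProposal(True, "web-07", "isolate_host", 0, 1)))       # ('execute', 'all gates passed')
print(verify(ContainmentProposal(True, "db-01", "isolate_host", 2, 1))[0])     # human_approval
print(verify(ContainmentProposal(True, "web-07", "wipe_disk", 0, 1))[1])       # wipe_disk is not an allowed action
```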


Execution: How Is the Action Carried Out?

Execution is where AI leaves the screen and enters the enterprise.

This is the most sensitive point.

Many AI systems look impressive because they generate good outputs. But enterprise value is created only when those outputs safely change something in the world: a record, a workflow, a ticket, a process, a decision, a document, a configuration, or a transaction.

Execution needs technical discipline.

The DRIVER layer must control:

Which tools can be called

Which APIs can be used

Which systems can be changed

What permissions apply

What sequence must be followed

What logs must be created

What errors must trigger rollback

What exceptions must trigger escalation

What cost or resource limits apply

What human approvals are needed

For example, an AI agent in IT operations may recommend restarting a service. But the execution layer must check whether the service is in production, whether a deployment is already running, whether dependent systems will fail, whether a maintenance window exists, and whether restart authority has been delegated.
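The restart checks in this example translate naturally into a precondition function that the execution layer evaluates before calling any tool. The `ServiceState` fields and the `restart_preconditions` name are hypothetical; in practice each field would be populated from deployment, dependency, and change-calendar systems.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class ServiceState:
    name: str
    in_production: bool
    deployment_running: bool
    dependents_tolerate_restart: bool  # will dependent systems survive a brief outage?
    in_maintenance_window: bool


def restart_preconditions(svc: ServiceState, delegated: FrozenSet[str]) -> List[str]:
    """Return unmet preconditions; an empty list means the restart may proceed."""
    unmet = []
    if "restart_service" not in delegated:
        unmet.append("restart authority not delegated")
    if svc.deployment_running:
        unmet.append("a deployment is already running")
    if not svc.dependents_tolerate_restart:
        unmet.append("dependent systems would fail")
    if svc.in_production and not svc.in_maintenance_window:
        unmet.append("production restart outside maintenance window")
    return unmet


checkout = ServiceState("checkout-api", in_production=True, deployment_running=False,
                        dependents_tolerate_restart=True, in_maintenance_window=False)
print(restart_preconditions(checkout, frozenset({"restart_service"})))
# ['production restart outside maintenance window']
```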

In a well-designed architecture, the AI agent does not directly “do whatever it wants.”

It requests action through governed execution channels.

This is similar to how financial systems use payment rails, approval workflows, audit logs, and authorization checks. The intelligence may come from the model, but the legitimacy comes from the execution architecture.
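A governed execution channel can be sketched as a small broker. The agent submits a plan of steps, and the channel (not the agent) checks each tool against an allowlist, writes an audit entry per step, and, if a later step fails, undoes completed steps in reverse order, in the spirit of a compensating transaction. The `ExecutionChannel` and `Step` names and the refund scenario are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Set, Tuple


@dataclass
class Step:
    tool: str                  # tool the step invokes; must be allowlisted
    do: Callable[[], None]     # the action itself
    undo: Callable[[], None]   # compensating action used for rollback


@dataclass
class ExecutionChannel:
    """Hypothetical broker through which agents request actions."""
    allowed_tools: Set[str]
    audit_log: List[Tuple[str, str]] = field(default_factory=list)

    def run(self, agent_id: str, plan: List[Step]) -> bool:
        # Refuse the whole plan up front if any step uses a non-approved tool.
        for step in plan:
            if step.tool not in self.allowed_tools:
                self.audit_log.append((agent_id, f"denied:{step.tool}"))
                return False
        completed: List[Step] = []
        for step in plan:
            try:
                step.do()
            except Exception as exc:
                self.audit_log.append((agent_id, f"failed:{step.tool}:{exc}"))
                # Undo already-completed steps, most recent first.
                for prior in reversed(completed):
                    prior.undo()
                    self.audit_log.append((agent_id, f"rolled_back:{prior.tool}"))
                return False
            completed.append(step)
            self.audit_log.append((agent_id, f"done:{step.tool}"))
        return True


# Usage: a refund whose payment step fails after the CRM was already updated.
ledger = {"crm_status": "open"}


def mark_refund_pending() -> None:
    ledger["crm_status"] = "refund_pending"


def reopen_case() -> None:
    ledger["crm_status"] = "open"


def call_payment_api() -> None:
    raise RuntimeError("payment API timeout")


def no_op() -> None:
    pass


channel = ExecutionChannel(allowed_tools={"crm.update", "payments.refund"})
ok = channel.run("support-agent-07", [
    Step("crm.update", mark_refund_pending, reopen_case),
    Step("payments.refund", call_payment_api, no_op),
])
print(ok, ledger["crm_status"])  # False open
```

The point of routing every action through one channel is that allowlisting, logging, and rollback are enforced in a single place, regardless of which model produced the plan.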


Recourse: What Happens If the AI Is Wrong?

Recourse is the most human part of the DRIVER layer.

It asks: when an AI system makes a wrong decision, what can the affected party do?

Can they appeal?

Can they correct the data?

Can they see the reason?

Can the action be reversed?

Can compensation happen?

Can the organization learn from the error?

Can the same mistake be prevented next time?
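These questions imply that every consequential decision needs a case record the affected party can act on. The following is a minimal Python sketch; `RecourseCase`, its status values, and the `LEARNING_QUEUE` that feeds reversed decisions back to model and policy owners are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

LEARNING_QUEUE: List[str] = []  # hypothetical queue reviewed by model and policy owners


@dataclass
class RecourseCase:
    """Hypothetical link between one AI decision and the affected party's recourse path."""
    decision_id: str
    affected_party: str
    reason_shown: str                       # the explanation disclosed to the affected party
    status: str = "open"
    corrections: Dict[str, str] = field(default_factory=dict)
    outcome: Optional[str] = None
    compensation: float = 0.0

    def appeal(self, corrections: Dict[str, str]) -> None:
        """The affected party disputes the decision and supplies corrected data."""
        self.corrections.update(corrections)
        self.status = "under_review"

    def resolve(self, reverse: bool, compensation: float = 0.0) -> None:
        """A reviewer upholds or reverses the decision; reversals feed learning."""
        if self.status != "under_review":
            raise ValueError("only cases under review can be resolved")
        self.outcome = "reversed" if reverse else "upheld"
        self.compensation = compensation
        self.status = "resolved"
        if reverse:
            LEARNING_QUEUE.append(self.decision_id)


case = RecourseCase("DEC-1042", "customer-88", "Declined: income could not be verified")
case.appeal({"annual_income": "payslips uploaded and verified"})
case.resolve(reverse=True, compensation=25.0)
print(case.status, case.outcome, LEARNING_QUEUE)  # resolved reversed ['DEC-1042']
```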

Without recourse, AI becomes a one-way machine.

That is dangerous.

A customer denied service by AI should not be trapped by an invisible system. An employee affected by an AI-generated assessment should have a correction path. A supplier penalized by an automated risk score should have a review process. A patient triaged by an AI-assisted system should have clinical escalation. A citizen affected by an automated decision should not be reduced to a database output.

Recourse is not only ethical. It is strategic.

Organizations that build recourse into AI systems will earn trust faster. Organizations that do not will face reputational, regulatory, and operational risk.

The EU AI Act’s emphasis on human oversight and risk reduction in high-risk systems reflects this broader movement toward accountable AI deployment. NIST’s framework similarly emphasizes documentation, human review, and accountability as mechanisms for managing AI risk. (Artificial Intelligence Act)

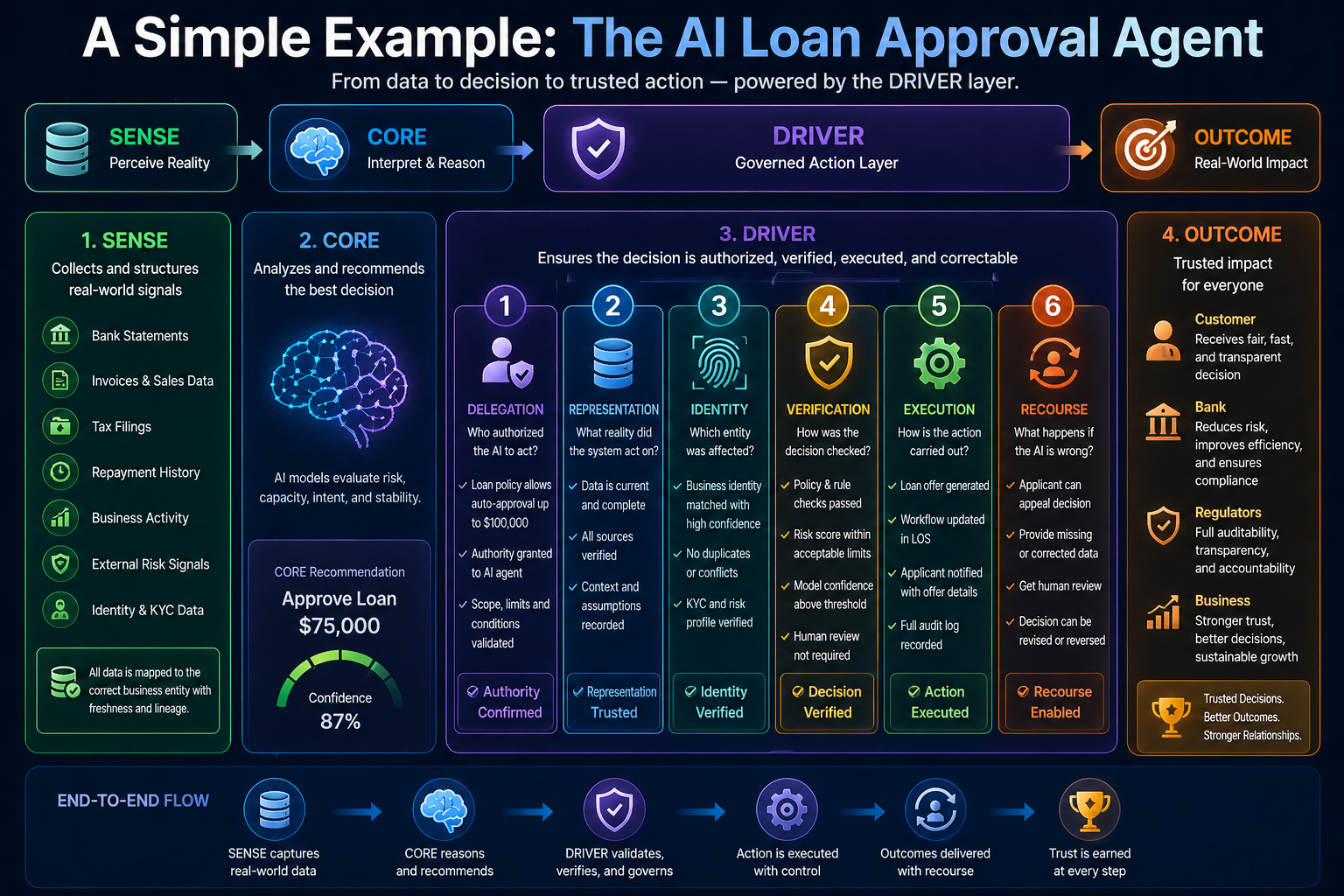

A Simple Example: The AI Loan Approval Agent

Let us bring DRIVER to life through a simple banking example.

An AI system evaluates a small business loan application.

The SENSE layer collects signals: bank statements, invoices, tax filings, repayment history, business activity, cash flow patterns, and external risk indicators.

The CORE layer reasons over this information and recommends approval, rejection, or further review.

But the DRIVER layer decides how that recommendation becomes action.

Delegation: Is the AI allowed to approve loans below a certain amount, or only recommend?

Representation: Which financial records were used? Were they complete and current?

Identity: Is this the correct business entity? Are related accounts connected correctly?

Verification: Does the decision comply with credit policy, regulatory rules, and risk thresholds?

Execution: Will the system generate an offer, route to a loan officer, or request more documents?

Recourse: If rejected, can the applicant see the reason, correct missing data, and appeal?

This example shows why DRIVER is not optional.

The model may be only one part of the system. The real enterprise architecture includes data representation, authority, verification, execution, and correction.
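The six loan questions can be composed into a single routing function that turns CORE's recommendation into a governed action. This is a hypothetical sketch: the field names, the 50,000 auto-approval limit, the 0.98 identity threshold, and the order of checks are assumptions, and each boolean stands in for a much fuller check in a real system.

```python
from dataclasses import dataclass
from typing import List, Tuple

AUTO_APPROVAL_LIMIT = 50_000.0   # Delegation: hypothetical amount the AI may approve alone
MIN_IDENTITY_CONFIDENCE = 0.98   # Identity: hypothetical entity-resolution threshold


@dataclass(frozen=True)
class LoanApplication:
    business_id: str
    amount: float
    core_recommendation: str     # "approve", "reject" or "review", produced by CORE
    records_complete: bool       # Representation: complete, current financial records?
    identity_confidence: float   # Identity: confidence this is the right business entity
    passes_credit_policy: bool   # Verification: credit policy, regulation, risk thresholds


def driver(app: LoanApplication) -> Tuple[str, List[str]]:
    """Return the Execution route plus a trace explaining how it was reached."""
    trace: List[str] = []
    if not app.records_complete:
        trace.append("representation: records incomplete or outdated")
        return "request_more_documents", trace
    if app.identity_confidence < MIN_IDENTITY_CONFIDENCE:
        trace.append("identity: business entity not resolved confidently")
        return "route_to_loan_officer", trace
    if app.core_recommendation == "reject":
        trace.append("recourse: reasons disclosed, correction and appeal offered")
        return "decline_with_reasons", trace
    if app.core_recommendation == "approve" and not app.passes_credit_policy:
        trace.append("verification: recommendation conflicts with credit policy")
        return "route_to_loan_officer", trace
    if app.core_recommendation == "approve" and app.amount <= AUTO_APPROVAL_LIMIT:
        trace.append("delegation: within auto-approval limit")
        return "generate_offer", trace
    trace.append("delegation: outside AI approval authority; human decision required")
    return "route_to_loan_officer", trace


print(driver(LoanApplication("BIZ-311", 20_000, "approve", True, 0.99, True))[0])   # generate_offer
print(driver(LoanApplication("BIZ-311", 200_000, "approve", True, 0.99, True))[0])  # route_to_loan_officer
```

The returned trace doubles as the audit record, so the same structure that routes the action also explains it.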

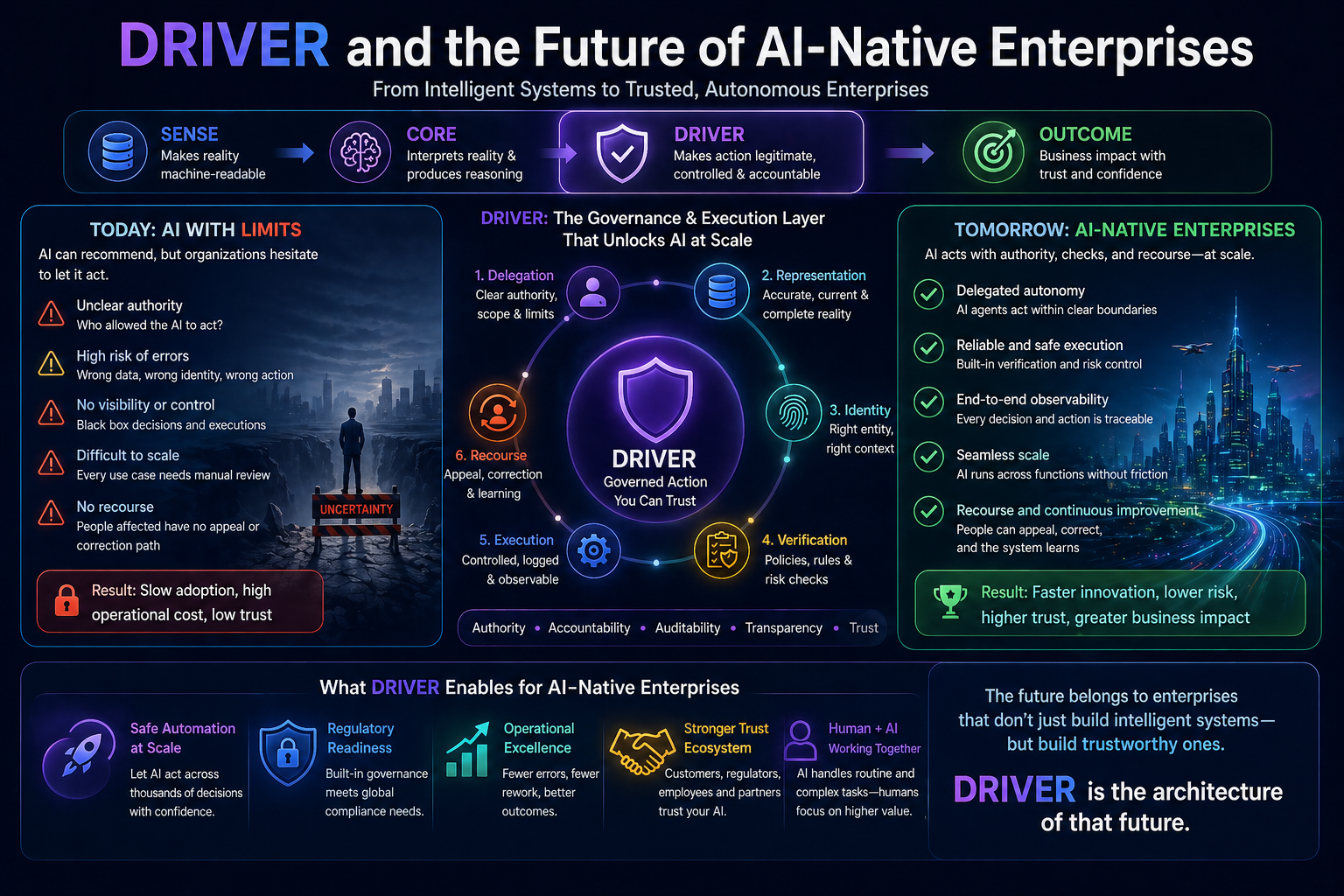

DRIVER and the Future of AI-Native Enterprises

The next generation of AI-native enterprises will not simply use smarter models.

They will build stronger DRIVER layers.

This will create a new kind of organizational capability: governed autonomy at scale.

Today, many companies are afraid to let AI act because they cannot answer basic questions about control. They do not know how to delegate authority to agents. They do not know how to verify outputs at runtime. They do not know how to reverse actions. They do not know how to create audit-ready trails. They do not know how to design recourse for affected entities.

So they keep AI in assistant mode.

The assistant can suggest, summarize, draft, search, and recommend. But it cannot safely execute.

The companies that solve DRIVER will move further.

They will allow AI systems to operate inside workflows, supply chains, financial operations, customer service, software engineering, cybersecurity, procurement, compliance, healthcare administration, and field operations.

But they will do this with bounded autonomy.

Not free-floating agents.

Not black-box automation.

Not uncontrolled model outputs.

They will build AI systems with authority chains, policy gates, verification loops, audit trails, human escalation, and correction mechanisms.

That is the architecture of enterprise trust.

DRIVER as a Competitive Advantage

In the Representation Economy, companies compete not only on products, data, or algorithms. They compete on how well they represent entities and act on their behalf.

This makes DRIVER a strategic layer.

A company with a strong DRIVER architecture can scale AI faster because leaders trust the system. Regulators trust the system. Customers trust the system. Employees trust the system. Partners trust the system.

A company with weak DRIVER architecture will hesitate. Every new AI use case will require manual review, legal debate, risk exceptions, compliance concerns, and operational anxiety.

This creates a new form of competitive advantage.

The future winners will not be the companies that simply ask, “Which AI model should we use?”

They will ask:

What authority can be delegated to AI?

What actions require human approval?

What verification layer is needed?

What representation errors can cause harm?

What identity failures can trigger wrong action?

What recourse path protects affected entities?

What logs prove that the system acted responsibly?

These questions will define the operating model of AI-era enterprises.

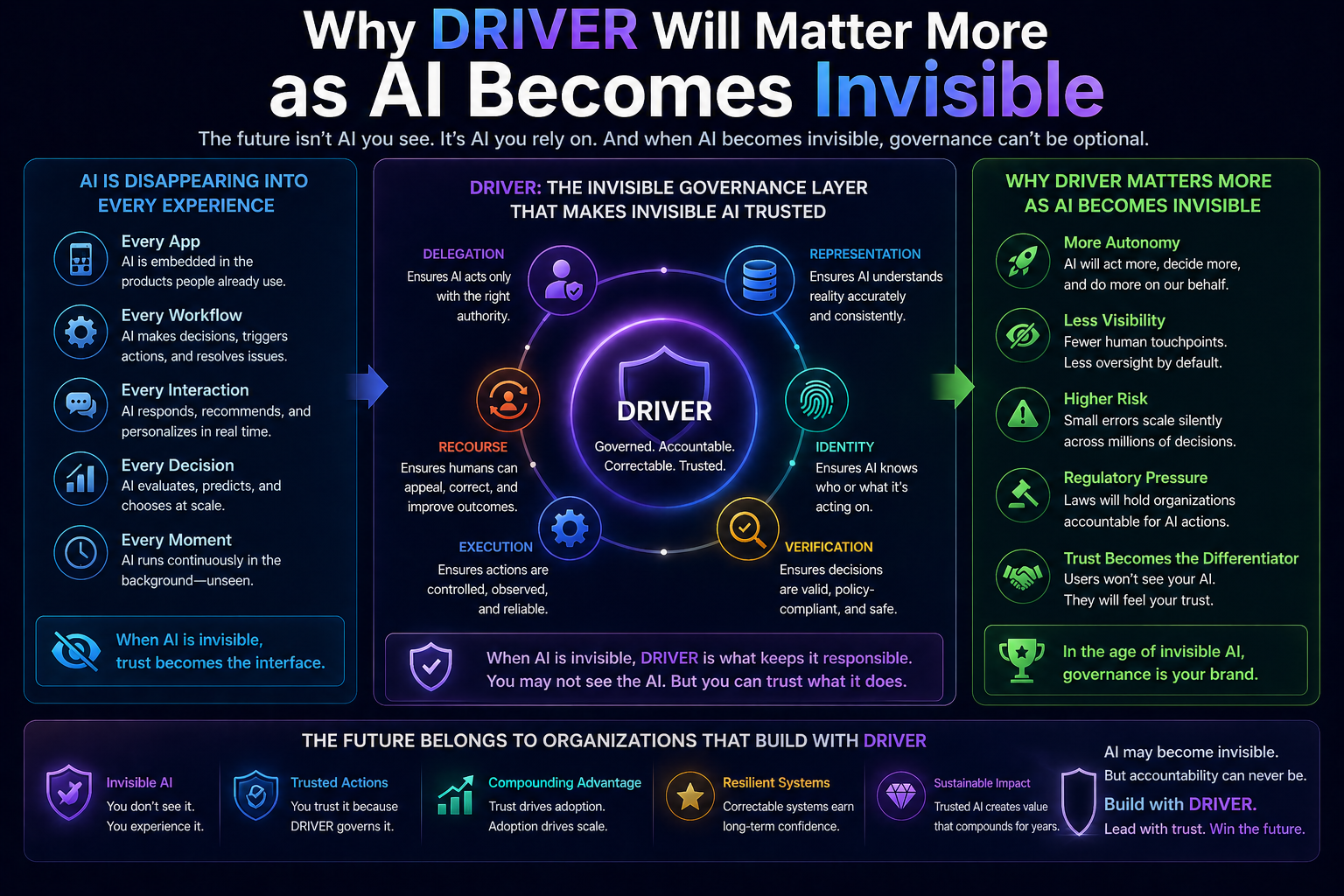

Why DRIVER Will Matter More as AI Becomes Invisible

The most powerful AI systems will not always appear as chatbots.

They will be embedded inside workflows.

They will update records, route work, trigger alerts, summarize evidence, prepare decisions, negotiate exceptions, coordinate agents, and execute business processes.

As AI becomes more invisible, DRIVER becomes more important.

When a human sees a chatbot response, they can question it. But when AI is embedded inside a workflow, the action may happen before anyone notices.

A wrong recommendation is one problem.

A wrong action is a much bigger problem.

A wrong action with no recourse is an institutional failure.

This is why every enterprise AI architecture needs an explicit DRIVER layer.

Not later. Now.

The New Architecture Question for Leaders

The central question for leaders is changing.

Earlier, leaders asked:

“Do we have AI?”

Then they asked:

“Do we have good AI models?”

Now they must ask:

“Do we have the architecture to let AI act responsibly?”

That is the DRIVER question.

It is not a technology-only question. It is a board-level question, a risk question, an operating model question, and a trust question.

Because once AI begins acting on behalf of organizations, the organization remains responsible.

AI may recommend.

AI may execute.

AI may automate.

AI may coordinate.

AI may learn.

But accountability cannot be outsourced to the model.

The enterprise must still answer for the action.

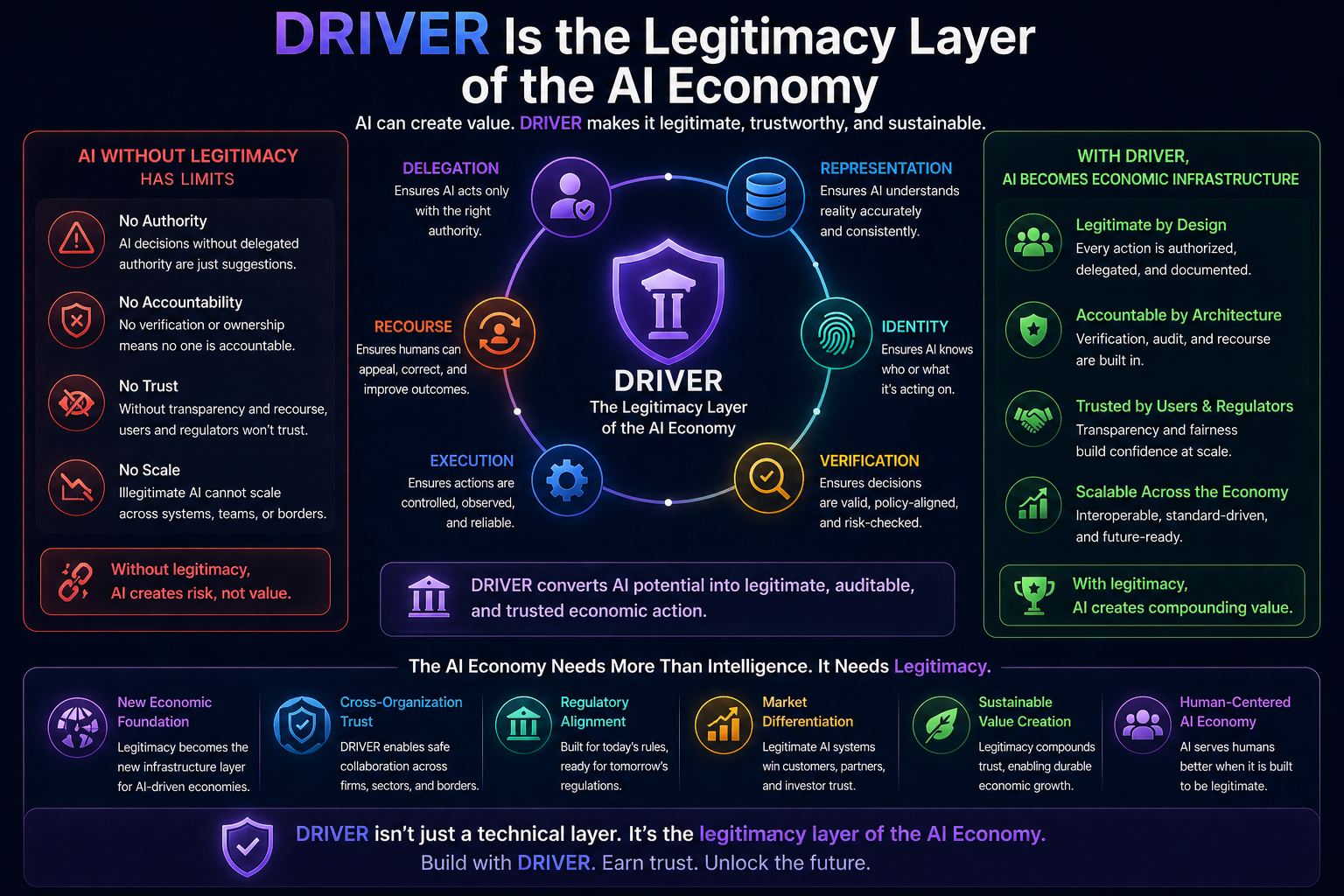

Conclusion: DRIVER Is the Legitimacy Layer of the AI Economy

The AI era will not be defined only by intelligence.

It will be defined by legitimate intelligence.

That means intelligence that is authorized, grounded, verified, executed responsibly, and correctable when wrong.

This is the purpose of the DRIVER layer.

SENSE makes reality machine-readable.

CORE makes reality interpretable.

DRIVER makes action legitimate.

In the Representation Economy, this may become one of the most important architectural shifts of the next decade.

The companies that master DRIVER will not merely deploy AI. They will institutionalize AI. They will move from pilots to production, from assistants to agents, from recommendations to governed execution, and from automation to trust.

The companies that ignore DRIVER may still have impressive demos.

But they will struggle to build systems that customers, regulators, employees, and boards can trust.

The future of enterprise AI will not belong only to the organizations with the most powerful models.

It will belong to the organizations that can answer the hardest question:

When AI acts, who authorized it, how was it verified, and how can the affected entity seek recourse?

That is the DRIVER layer.

And that is where the next architecture of enterprise trust begins.

FAQ

What is the DRIVER layer in AI?

The DRIVER layer is the governance and execution architecture that ensures AI decisions are authorized, verified, executed responsibly, and correctable when wrong.

Why is the DRIVER layer important for enterprise AI?

Because enterprise AI must do more than reason—it must act safely, accountably, and within delegated authority boundaries.

What does DRIVER stand for?

- Delegation

- Representation

- Identity

- Verification

- Execution

- Recourse

How does DRIVER relate to AI agents?

AI agents can reason and recommend, but DRIVER governs whether and how they can take real-world action.

Why is DRIVER critical for AI-native enterprises?

Because enterprises cannot scale autonomous AI without trust, accountability, observability, and recourse mechanisms.

Glossary

DRIVER Layer

The governance and execution architecture that ensures AI decisions are delegated, verified, executed responsibly, and correctable when wrong.

Delegation

The mechanism by which an organization explicitly grants an AI system bounded authority to act within defined limits.

Representation

The structured model of reality an AI system uses to make decisions, including data, context, assumptions, and entity relationships.

Identity Resolution

The process of determining which real-world entity (person, organization, asset, or account) the AI system is acting upon.

Verification

The validation layer that checks whether an AI-generated recommendation or action complies with rules, policies, evidence, and risk thresholds before execution.

Execution Layer

The controlled mechanism through which approved AI actions are carried out in enterprise systems, workflows, or external environments.

Recourse

The process by which affected entities can appeal, correct, reverse, or seek remediation for AI-driven decisions or actions.

Agentic AI

AI systems capable of autonomously planning, deciding, and executing multi-step actions toward goals.

Bounded Autonomy

A design principle where AI systems operate autonomously only within predefined authority, policy, and risk boundaries.

AI Governance Architecture

The technical and organizational systems that ensure AI operates safely, lawfully, transparently, and accountably.

Enterprise Trust Layer

The infrastructure that ensures enterprise stakeholders can rely on AI decisions and actions with confidence.

Representation Economy

A framework describing the shift from value creation through labor and software toward value creation through machine-readable representation, reasoning, and governed execution.

SENSE–CORE–DRIVER Framework

A conceptual architecture for AI-era systems:

- SENSE: Makes reality machine-readable

- CORE: Interprets and reasons over reality

- DRIVER: Governs and legitimizes action

References

- NIST AI Risk Management Framework

https://www.nist.gov/itl/ai-risk-management-framework

- EU AI Act Overview

https://artificialintelligenceact.eu/

- OECD AI Principles

https://oecd.ai/en/ai-principles

- Microsoft Agent Governance / Trust Architecture Material

https://techcommunity.microsoft.com/

- Anthropic Research on Constitutional / Safe AI

https://www.anthropic.com/research

- OpenAI Research / Safety

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence.

Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:


- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The New Company Stack: Business Categories Emerging in the Representation Economy – Raktim Singh

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy Explained: More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained – Raktim Singh

- Representation Economics: The New Law of AI Value Creation – Raktim Singh

- What Is the Representation Economy? Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy and the SENSE–CORE–DRIVER Framework – Raktim Singh

- More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- Representation Standards: Who Will Write the GAAP of the AI Economy? – Raktim Singh

- The Authority Graph: Why AI Will Be Governed by Permissions, Not Just Intelligence – Raktim Singh

- The Representation Productivity Paradox: Why AI Fails When Firms Automate Intelligence Before They Upgrade Reality – Raktim Singh

- Representation Origination: Why the Most Valuable AI Companies Will Control How Reality Enters the Machine – Raktim Singh

- Why the Next AI Breakthrough Will Come From Better Representation, Not Bigger Models – Raktim Singh

- The Representation Lifecycle of the Firm: Why Companies Must Redesign SENSE, CORE, and DRIVER to Win in the AI Era – Raktim Singh

- The New Corporate Giants of the AI Era: Why Representation Companies Will Capture the Real Value – Raktim Singh

- The Representation Moat: Why AI Strategy Fails Without a Board-Level Representation Strategy – Raktim Singh

- Entity Resolution as Competitive Advantage: Why Trusted Entity Infrastructure Will Define the Winners of Enterprise AI – Raktim Singh

- Context Graphs for AI: How Relationships, Dependencies, and Meaning Make AI Smarter – Raktim Singh

- Reversible AI Systems: Why Enterprise AI Needs an Undo Button Before It Can Scale – Raktim Singh

- Cognitive Routing Architectures: How Enterprise AI Dynamically Selects the Right Reasoning Path – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.


Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.