Decision Verification Architecture:

The Next Enterprise AI Question Is Not “Is the Output Correct?” — It Is “Was the Decision Valid?”

For the last decade, AI progress has been measured through accuracy.

Can the model classify correctly?

Can it predict correctly?

Can it answer correctly?

Can it generate correctly?

Can it reduce hallucination?

Can it beat a benchmark?

These are important questions. But they are not enough for enterprise AI.

In an enterprise, AI does not merely produce answers. Increasingly, it influences decisions: approving loans, prioritizing patients, escalating security incidents, shortlisting suppliers, flagging fraud, drafting legal clauses, pricing products, routing claims, managing infrastructure, and triggering workflows.

Once AI begins influencing decisions, output accuracy becomes only one part of trust.

A decision can be factually correct and still be invalid.

An AI system may correctly detect risk but act on the wrong customer record. It may correctly summarize a document but miss the latest policy update. It may correctly identify a likely fraud pattern but ignore a required human review step. It may correctly recommend an action but use stale, incomplete, or unauthorized data.

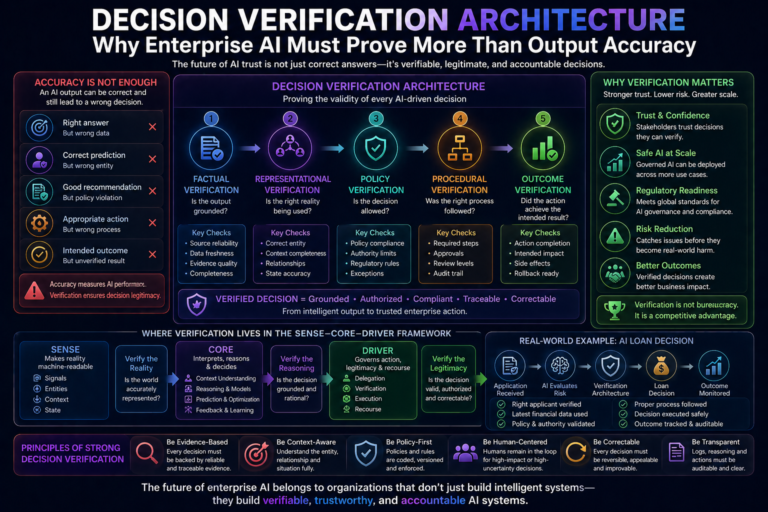

This is why enterprises need Decision Verification Architecture.

Decision Verification Architecture is the technical and governance architecture that proves an AI-driven decision is not only accurate, but also grounded, authorized, context-aware, policy-compliant, traceable, and correctable.

This is a critical idea for the next phase of enterprise AI.

Because in the Representation Economy, intelligence alone is not enough. Organizations must prove that their AI systems represent reality correctly, reason responsibly, and act legitimately.

SENSE makes reality machine-readable.

CORE interprets and reasons over that reality.

DRIVER governs how action happens.

Decision Verification Architecture sits inside the DRIVER layer. It is the proof system that separates a useful AI output from a trustworthy enterprise decision.

Put another way, the architecture checks that a decision is contextually valid, procedurally correct, and safe to execute, so that organizations can verify its legitimacy before an AI action affects the real world.

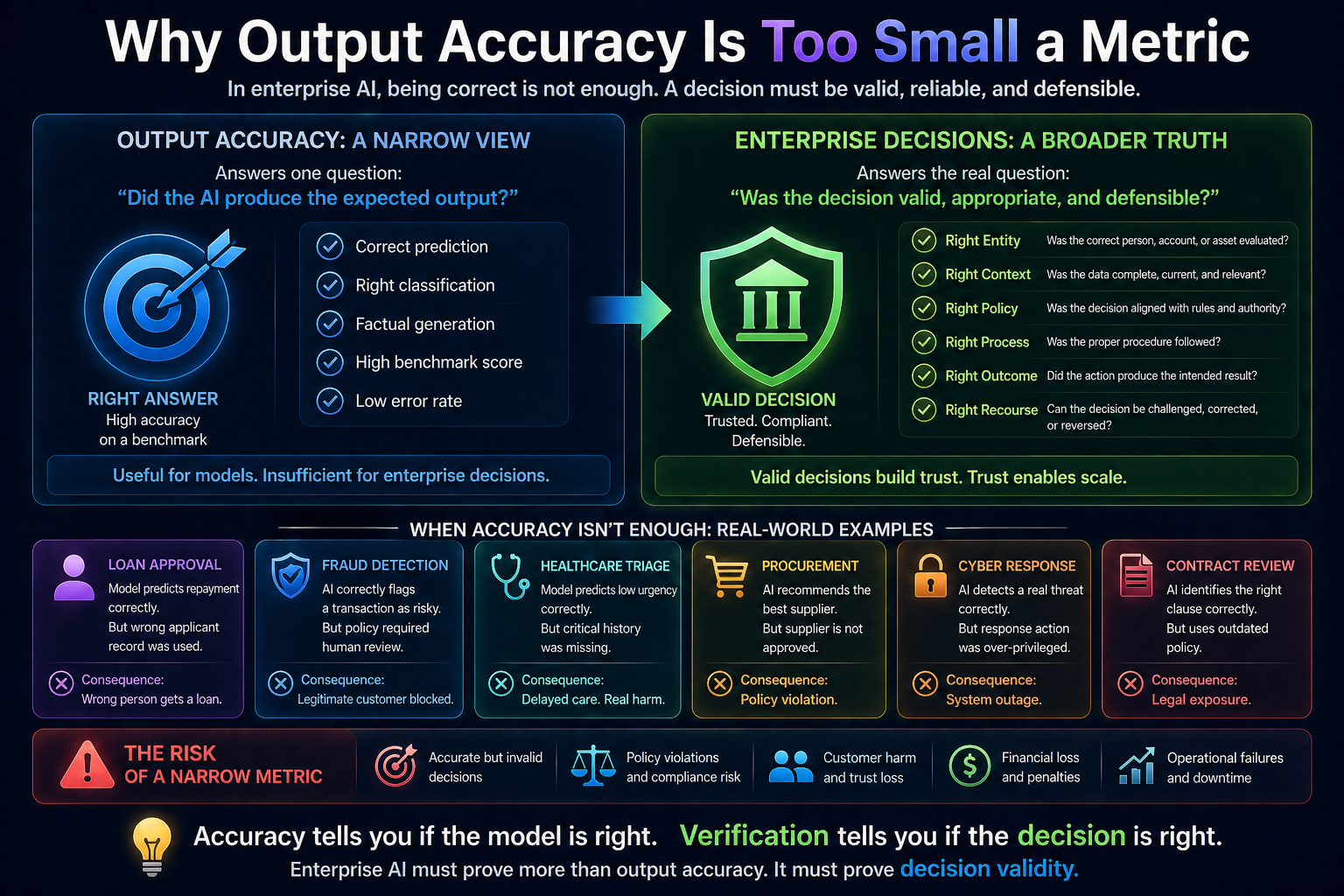

Why Output Accuracy Is Too Small a Metric

Accuracy answers one narrow question:

Did the AI produce the expected output?

But enterprise decisions require broader proof.

A credit approval decision must prove more than whether the model predicted repayment correctly. It must prove that the right applicant was evaluated, the latest financial data was used, the applicable credit policy was followed, the decision was explainable, and the applicant had a recourse path.

A cyber response decision must prove more than whether the system detected an anomaly. It must prove that the right asset was affected, the incident severity was verified, the containment action was authorized, business disruption was considered, and rollback was possible.

A medical scheduling decision must prove more than whether a model predicted urgency. It must prove that the correct patient history was used, the clinical policy was followed, the doctor’s judgment was preserved, and escalation was available.

This is why accuracy is a consumer-grade metric.

Decision validity is an enterprise-grade requirement.

Global AI governance frameworks are already moving in this direction. NIST’s AI Risk Management Framework describes trustworthy AI through multiple dimensions such as validity, reliability, safety, resilience, accountability, transparency, explainability, privacy, and fairness—not accuracy alone. (NIST Publications) The EU AI Act also emphasizes record-keeping, transparency, human oversight, accuracy, robustness, and cybersecurity for high-risk AI systems. (Artificial Intelligence Act)

The message is clear: enterprise AI cannot be trusted only because it gives the right answer. It must be trusted because the decision process is verifiable.

The Core Thesis: AI Must Prove the Decision, Not Just Produce the Output

The next generation of AI systems must be able to answer five questions before their decisions are trusted:

- Was the decision grounded in the right facts?

- Was the correct entity represented?

- Was the decision consistent with policy and authority?

- Was the process followed correctly?

- Can the decision be audited, challenged, corrected, or reversed?

This is the shift from output accuracy to decision verification.

Output accuracy is about the answer.

Decision verification is about the full decision chain.

It asks:

What data was used?

Which version of reality was represented?

Which entity was affected?

Which model reasoned over the data?

Which policy applied?

Which human or system authorized the action?

What checks were performed?

What was logged?

What can be corrected later?
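One way to make these questions concrete is to treat each of them as a field in a decision record. The sketch below is only illustrative: the class name `DecisionRecord`, its field names, and the sample values are assumptions made for this example, not part of any standard or product.

```python
from dataclasses import dataclass, field, asdict


@dataclass(frozen=True)
class DecisionRecord:
    """One entry per AI-driven decision; all field names are illustrative."""
    decision_id: str
    entity_id: str          # which entity was affected
    data_sources: tuple     # what data was used
    snapshot_at: str        # which version of reality was represented
    model_version: str      # which model reasoned over the data
    policy_id: str          # which policy applied
    authorized_by: str      # which human or system authorized the action
    checks: dict = field(default_factory=dict)  # what checks were performed
    correctable_fields: tuple = ()              # what can be corrected later

    def unanswered(self) -> list:
        """Return the names of fields that were left empty."""
        empty = ("", (), {}, None)
        return [name for name, value in asdict(self).items() if value in empty]


record = DecisionRecord(
    decision_id="d-001", entity_id="cust-42", data_sources=("crm", "ledger"),
    snapshot_at="2024-01-01T00:00:00+00:00", model_version="risk-v3",
    policy_id="credit-policy-7", authorized_by="",
)
print(record.unanswered())  # ['authorized_by', 'checks', 'correctable_fields']
```

A record with unanswered fields is a signal that the decision chain cannot yet be defended, whatever the model's accuracy.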

This matters because enterprises do not only need intelligence. They need defensible intelligence.

A board asks more than, “Was the AI right?”

A regulator asks, “Can you prove how the decision was made?”

A customer asks, “Why did this happen to me?”

A risk officer asks, “Was the process followed?”

A CIO asks, “Can we scale this safely?”

A CEO asks, “Can we trust this system across the enterprise?”

Decision Verification Architecture is the answer to these questions.

A Simple Example: The AI Loan Decision

Imagine an AI system recommends approving a business loan.

The model output says:

Approve loan. Risk level acceptable.

From an accuracy perspective, this may look good. The model may have been trained on strong repayment data. It may have high predictive performance. It may even explain that the applicant has stable revenue and good repayment history.

But the enterprise must verify much more.

Was the applicant identity correctly resolved?

Was the business entity linked to the right tax records?

Were recent liabilities included?

Was the latest credit policy applied?

Was there any regulatory restriction?

Was the loan amount within AI approval authority?

Was human review required?

Was the decision recorded?

If rejected, could the applicant appeal?

If approved incorrectly, could the offer be paused or reversed?

This is Decision Verification Architecture.

It does not ask only, “Was the prediction accurate?”

It asks, “Was this decision valid enough to become an enterprise action?”

That is a much higher standard.
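As a rough sketch, several of the loan questions above can be expressed as explicit checks that run before the recommendation becomes an action. Everything here is hypothetical: the field names, the policy version string, the 30-day data window, and the 50,000 approval limit are placeholders chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class LoanDecision:
    applicant_resolved: bool     # identity matched to a single business entity
    liabilities_age_days: int    # age of the liabilities data, in days
    policy_version: str          # credit policy version the model applied
    amount: float                # requested loan amount
    human_reviewed: bool         # whether a person has reviewed the case


# Hypothetical thresholds; a real lender would load these from its credit policy.
CURRENT_POLICY = "2024-Q2"
MAX_DATA_AGE_DAYS = 30
AI_APPROVAL_LIMIT = 50_000.0


def verify_loan(decision: LoanDecision) -> list:
    """Return the failed checks; an empty list means the decision may proceed."""
    failures = []
    if not decision.applicant_resolved:
        failures.append("identity_unresolved")
    if decision.liabilities_age_days > MAX_DATA_AGE_DAYS:
        failures.append("stale_liabilities")
    if decision.policy_version != CURRENT_POLICY:
        failures.append("outdated_policy")
    if decision.amount > AI_APPROVAL_LIMIT and not decision.human_reviewed:
        failures.append("human_review_required")
    return failures


print(verify_loan(LoanDecision(True, 5, "2024-Q2", 80_000.0, False)))
# ['human_review_required']
```

The model's "approve" output is untouched by these checks. They decide only whether that output is allowed to become an enterprise action.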

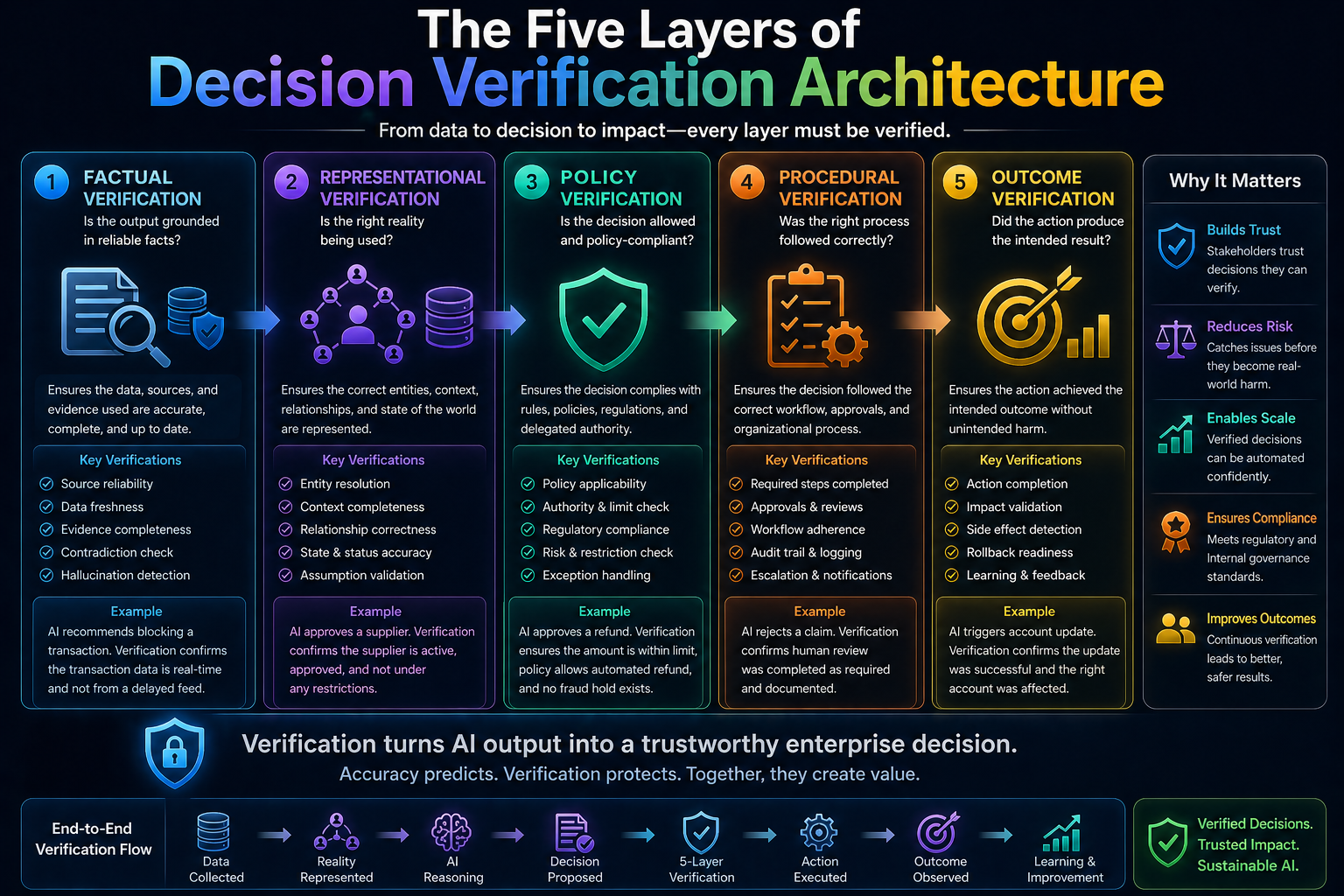

The Five Layers of Decision Verification Architecture

A strong Decision Verification Architecture should contain five layers.

1. Factual Verification: Is the Output Grounded?

The first layer checks whether the AI output is supported by reliable evidence.

For generative AI, this means checking whether the answer is grounded in approved sources. For predictive AI, it means checking whether the input data is complete, fresh, and relevant. For agentic AI, it means checking whether the system is acting on verified state, not assumptions.

For example, if an AI assistant recommends terminating a supplier contract, factual verification asks:

Which contract clause supports this?

Which performance records were used?

Were the latest delivery records included?

Was the supplier under an exception agreement?

Was the evidence current?

This prevents decisions based on hallucinated, stale, or incomplete information.

In the SENSE–CORE–DRIVER framework, this depends heavily on SENSE quality. If reality is not represented correctly, CORE may reason beautifully but still produce a dangerous decision.
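A minimal sketch of factual verification, assuming each piece of evidence carries a source name and an "as of" timestamp. The approved source names and the seven-day freshness window are invented for the example.

```python
from datetime import datetime, timedelta, timezone

APPROVED_SOURCES = {"contract_repository", "delivery_system"}  # hypothetical names
MAX_AGE = timedelta(days=7)                                   # hypothetical freshness window


def check_evidence(evidence: list, now: datetime) -> dict:
    """Split evidence items into usable ones and rejected ones with a reason."""
    usable, rejected = [], []
    for item in evidence:
        if item["source"] not in APPROVED_SOURCES:
            rejected.append((item["id"], "unapproved_source"))
        elif now - item["as_of"] > MAX_AGE:
            rejected.append((item["id"], "stale"))
        else:
            usable.append(item["id"])
    return {"usable": usable, "rejected": rejected}


now = datetime(2024, 6, 10, tzinfo=timezone.utc)
evidence = [
    {"id": "e1", "source": "delivery_system", "as_of": datetime(2024, 6, 8, tzinfo=timezone.utc)},
    {"id": "e2", "source": "contract_repository", "as_of": datetime(2024, 5, 1, tzinfo=timezone.utc)},
    {"id": "e3", "source": "email_thread", "as_of": datetime(2024, 6, 9, tzinfo=timezone.utc)},
]
print(check_evidence(evidence, now))
```

In this run only `e1` is usable: `e2` is stale and `e3` comes from a source that is not on the approved list.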

2. Representational Verification: Is the Right Reality Being Used?

The second layer checks whether the AI system is acting on the correct representation of reality.

This is deeper than factual checking.

Facts may be individually correct but structurally incomplete.

For example, a customer may appear risky in one system but safe in another. A machine may appear available in an asset registry but actually be under maintenance. A supplier may appear delayed but may have an approved force majeure exception. A patient may appear low priority if only one record is viewed, but high priority if longitudinal history is connected.

Representational verification asks:

Is the entity correctly identified?

Are duplicate records resolved?

Are relationships captured?

Is the context complete?

Are dependencies visible?

Is the representation fresh?

Are assumptions recorded?

This is where identity graphs, context graphs, entity resolution, data lineage, and digital twins become important.

AI decisions are only as good as the reality they represent.

In the Representation Economy, this becomes a strategic advantage. Companies that represent entities, relationships, states, and changes more accurately will make better AI decisions.
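The "risky in one system, safe in another" case can be sketched as a small conflict check across records that refer to the same entity. This is a simplification that assumes a shared identifier exists and only needs light normalization. Real entity resolution is far harder, and the field names below are hypothetical.

```python
from collections import defaultdict


def find_conflicts(records: list, key: str, attribute: str) -> dict:
    """Group records by a shared identifier and report entities whose systems disagree."""
    values_by_entity = defaultdict(set)
    for record in records:
        entity = record[key].strip().upper()   # naive normalization of the identifier
        values_by_entity[entity].add(record[attribute])
    return {
        entity: sorted(values)
        for entity, values in values_by_entity.items()
        if len(values) > 1
    }


records = [
    {"system": "crm", "tax_id": "ab-123", "risk": "high"},
    {"system": "core_banking", "tax_id": "AB-123 ", "risk": "low"},
    {"system": "crm", "tax_id": "CD-9", "risk": "low"},
]
print(find_conflicts(records, key="tax_id", attribute="risk"))
# {'AB-123': ['high', 'low']}
```

A conflict does not say which system is right. It says the representation is not yet settled enough to act on without resolution.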

3. Policy Verification: Is the Decision Allowed?

The third layer checks whether the decision is consistent with rules, regulations, enterprise policy, and delegated authority.

This is where many AI systems fail.

They may produce a reasonable recommendation but ignore whether the action is allowed.

For example:

An AI procurement agent may recommend buying from a vendor, but the vendor may not be approved.

An AI HR assistant may recommend a response, but the response may violate internal policy.

An AI cybersecurity agent may recommend blocking a server, but the server may be part of a critical production chain.

An AI finance agent may recommend releasing payment, but the invoice may require dual approval.

Policy verification asks:

Which policy applies?

Is this action allowed?

Who has authority?

Is the approval limit exceeded?

Is human review mandatory?

Are regulatory constraints involved?

Are there exceptions?

Is this a high-risk decision?

This is why discussions of agentic AI governance increasingly focus on policy enforcement, runtime controls, audit logging, and controlled tool use. Microsoft’s recent Agent Governance Toolkit material, for example, emphasizes runtime security, policy enforcement, audit logging, and reliability practices for governed AI agent workloads. (Microsoft Tech Community)

The future of enterprise AI will depend on making policy machine-readable.

Not as a PDF.

Not as a slide deck.

Not as informal tribal knowledge.

But as executable constraints that AI systems must follow.
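To illustrate what "executable constraints" could look like, here is a minimal sketch in which each policy is a rule with an applicability test and a permission test. The `Rule` class, the rule IDs, and the 10,000 dual-approval threshold are assumptions for this example. Production systems would more likely use a dedicated policy engine.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    rule_id: str
    applies: Callable[[dict], bool]   # does this rule cover the proposed action?
    allows: Callable[[dict], bool]    # is the action permitted under the rule?


# Hypothetical rules mirroring the procurement and payment examples in the text.
RULES = [
    Rule("approved-vendor",
         lambda a: a["type"] == "purchase",
         lambda a: a["vendor_approved"]),
    Rule("dual-approval",
         lambda a: a["type"] == "payment" and a["amount"] > 10_000,
         lambda a: len(a["approvers"]) >= 2),
]


def evaluate(action: dict) -> list:
    """Return the IDs of every applicable rule that the action violates."""
    return [r.rule_id for r in RULES if r.applies(action) and not r.allows(action)]


print(evaluate({"type": "payment", "amount": 25_000, "approvers": ["alice"]}))
# ['dual-approval']
```

Because the rules are data, they can be versioned, reviewed, and logged alongside each decision, which a PDF cannot offer.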

4. Procedural Verification: Was the Right Process Followed?

The fourth layer checks whether the decision followed the correct process.

This matters because a decision can be factually correct and policy-compliant, yet still procedurally invalid.

For example, a model may correctly recommend rejecting a claim, and the policy may support rejection. But if the process required human review before rejection and that review did not happen, the decision is procedurally invalid.

Procedural verification asks:

Were all required steps completed?

Was approval obtained?

Was the right workflow followed?

Were exceptions documented?

Was the decision reviewed at the right level?

Was the affected party notified?

Was the record updated correctly?

Was the action logged?

This is especially important in regulated industries.

Banks, insurers, healthcare organizations, public institutions, and large enterprises do not operate only on outcomes. They operate on process legitimacy.

AI must therefore prove not just what it decided, but how the decision moved through the organization.
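A procedural check can be as simple as comparing the steps a workflow requires with the steps that were actually recorded, in order. The step names below are hypothetical, and the function is a sketch rather than a full workflow engine.

```python
def missing_or_out_of_order(required: list, performed: list) -> list:
    """Return required steps that did not occur in the required order."""
    problems = []
    position = 0  # index in `performed` from which the next step must appear
    for step in required:
        try:
            position = performed.index(step, position) + 1
        except ValueError:
            problems.append(step)
    return problems


required = ["intake", "human_review", "decision", "notify_claimant"]
performed = ["intake", "decision", "notify_claimant"]
print(missing_or_out_of_order(required, performed))  # ['human_review']
```

In the claim example, the rejection itself may be correct, yet this check would still flag the decision because `human_review` never happened before it.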

This is where audit trails become critical. Specialized AI audit logs can help organizations move from guessing what an agent did to having a verifiable record of decision points, tool choices, and policy checks. (LoginRadius)
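One common technique for making a log verifiable is hash chaining, where each entry's hash covers the previous entry's hash. The sketch below uses only Python's standard `hashlib` and `json` modules. The event fields are made up, and this is not a description of how any particular audit product works.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash used before the first entry


def _digest(prev_hash: str, event: dict) -> str:
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> None:
    """Append an event whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"prev": prev_hash, "event": event, "hash": _digest(prev_hash, event)})


def verify_chain(log: list) -> bool:
    """Recompute every hash; an edited entry or one removed mid-chain fails the check."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, {"step": "policy_check", "result": "pass"})
append_entry(log, {"step": "tool_call", "tool": "release_payment"})
print(verify_chain(log))                 # True
log[0]["event"]["result"] = "fail"       # simulate tampering with an earlier entry
print(verify_chain(log))                 # False
```

This sketch does not detect truncation of the most recent entries. Catching that would require anchoring the latest hash somewhere outside the log.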

5. Outcome Verification: Did the Action Produce the Intended Result?

The fifth layer checks what happened after execution.

This is often ignored.

Many AI systems stop at recommendation or action. But enterprise trust requires post-action verification.

For example:

If an AI agent refunded a customer, was the refund actually processed?

If it updated a CRM record, was the right record updated?

If it restarted a service, did the service recover?

If it blocked a suspicious transaction, was the customer notified?

If it routed a patient case, did the case reach the right specialist?

If it generated a contract clause, was the clause approved before use?

Outcome verification asks:

Was the action completed?

Did it affect the intended entity?

Was there an unintended side effect?

Was escalation needed?

Was rollback triggered?

Did the system learn from the outcome?

This is where AI moves from static decisioning to continuous governance.

A decision is not fully verified when the model responds.

It is verified when the outcome is observed, logged, and reconciled.
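Reconciliation can be sketched as a comparison between the state an action was meant to produce and the changes actually observed afterward. The field names are hypothetical, and `observed` is assumed to contain only the fields that changed as a result of the action.

```python
def reconcile(intended: dict, observed: dict) -> dict:
    """Compare the intended post-action state with the changes actually observed."""
    mismatched = {
        key: (want, observed.get(key))
        for key, want in intended.items()
        if observed.get(key) != want
    }
    unexpected = sorted(set(observed) - set(intended))  # possible side effects
    return {
        "verified": not mismatched and not unexpected,
        "mismatched": mismatched,
        "unexpected_changes": unexpected,
    }


intended = {"refund_status": "processed", "amount": 40.0}
observed = {"refund_status": "pending", "amount": 40.0, "loyalty_points": -100}
print(reconcile(intended, observed))
```

Here the refund is still pending and an unplanned change to `loyalty_points` appears, so the outcome is not verified and would be a candidate for escalation or rollback.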

Why Decision Verification Becomes More Important in Agentic AI

Decision Verification Architecture becomes essential as AI agents become more common.

A chatbot produces text.

An AI agent produces action.

That action may involve tools, APIs, databases, workflows, documents, applications, cloud systems, customer records, and financial transactions.

This creates new risks:

The agent may use the wrong tool.

It may access the wrong data.

It may act on the wrong entity.

It may skip a required step.

It may overreach its authority.

It may chain small actions into a large unintended outcome.

It may be manipulated by hidden instructions.

It may create an audit gap.

This is why agent governance is becoming a serious enterprise concern. Recent industry discussions around AI agents emphasize access controls, runtime enforcement, lineage, audit trails, and regulatory compliance as organizations move from experiments to production-scale agent systems. (Promethium)

In agentic systems, verification cannot be an afterthought.

It must be part of the architecture.

Before action: verify facts, identity, policy, and authority.

During action: monitor tool use, sequence, permissions, and exceptions.

After action: verify outcome, log evidence, enable recourse, and update learning.
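The before, during, and after stages can be sketched as a thin wrapper around a single agent action. The tool names, the allowlist, and the shape of the checks are all assumptions for illustration. A real agent runtime would enforce these controls at a lower level than application code.

```python
from typing import Callable

ALLOWED_TOOLS = {"refund_customer", "update_crm"}  # hypothetical tool allowlist


def run_verified(tool: str, args: dict, pre_checks: list,
                 execute: Callable[[dict], dict],
                 post_check: Callable[[dict], bool], audit: list) -> str:
    """Gate one agent action with checks before, during, and after execution."""
    # Before action: every (name, check) pair must pass on the proposed arguments.
    failed = [name for name, check in pre_checks if not check(args)]
    if failed:
        audit.append({"tool": tool, "status": "blocked", "failed": failed})
        return "blocked"
    # During action: only tools on the allowlist may be invoked.
    if tool not in ALLOWED_TOOLS:
        audit.append({"tool": tool, "status": "denied"})
        return "denied"
    result = execute(args)
    # After action: verify the outcome and log the evidence.
    status = "verified" if post_check(result) else "needs_recourse"
    audit.append({"tool": tool, "status": status, "result": result})
    return status


audit = []
status = run_verified(
    "refund_customer", {"amount": 20},
    pre_checks=[("within_limit", lambda a: a["amount"] <= 100)],
    execute=lambda a: {"refunded": a["amount"]},
    post_check=lambda r: r["refunded"] == 20,
    audit=audit,
)
print(status, len(audit))  # verified 1
```

Every path through the wrapper, including the blocked and denied ones, leaves an audit entry, so the audit gap cannot arise here.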

This is how AI agents become enterprise-ready.

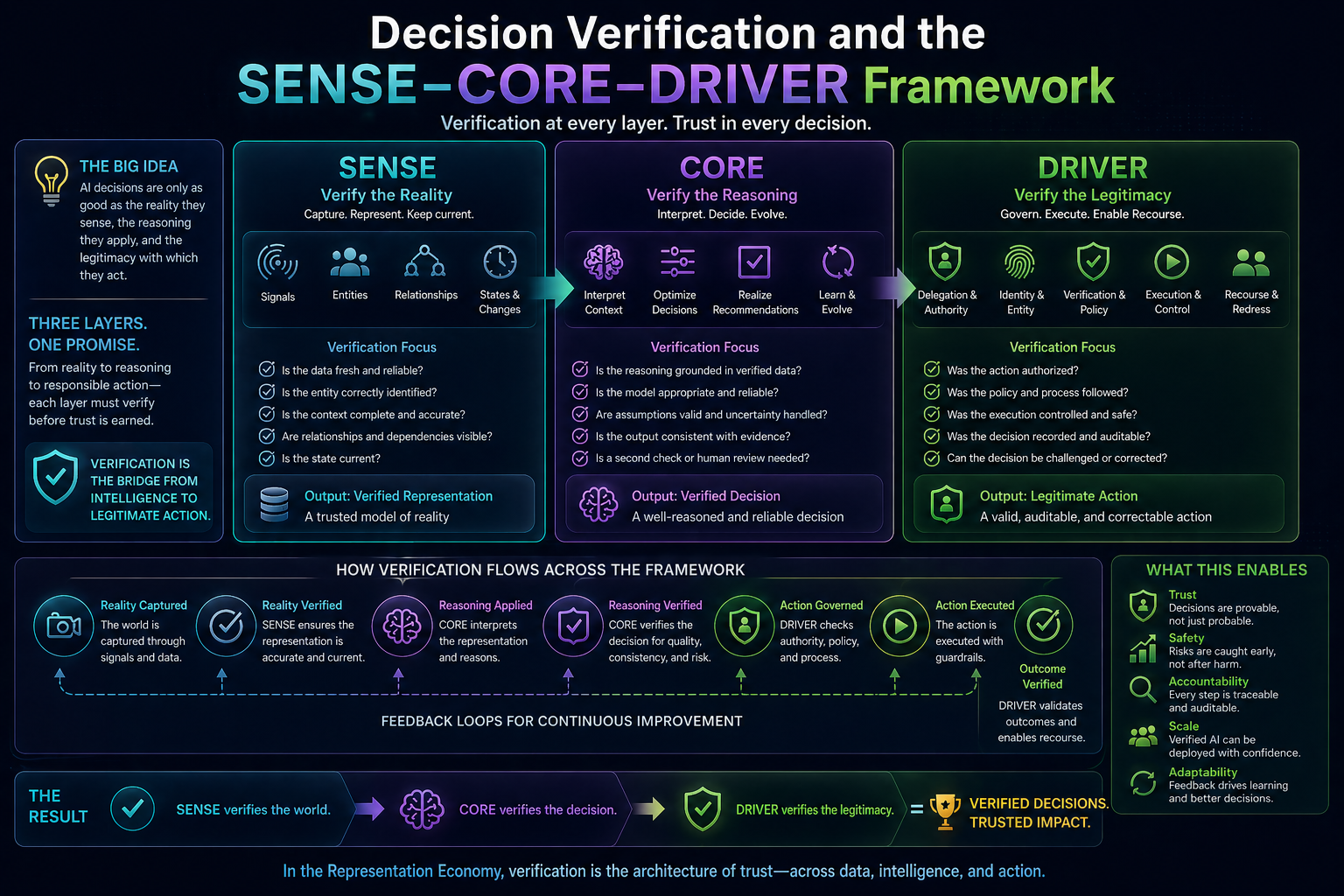

Decision Verification and the SENSE–CORE–DRIVER Framework

Decision Verification Architecture fits naturally into the SENSE–CORE–DRIVER framework.

SENSE: Verify the Reality

SENSE captures signals, entities, states, and evolution.

Decision verification starts here. If the AI system uses poor signals, wrong entities, stale states, or incomplete context, the decision is compromised before reasoning begins.

SENSE verification asks:

Is the data fresh?

Is the entity correct?

Is the state current?

Has the situation changed?

Are relationships visible?

Are sources trusted?

CORE: Verify the Reasoning

CORE interprets context, optimizes decisions, realizes recommendations, and evolves through feedback.

CORE verification asks:

Was the reasoning grounded?

Was the model appropriate?

Were assumptions valid?

Was uncertainty handled?

Was the output consistent with evidence?

Was a second check required?

DRIVER: Verify the Legitimacy

DRIVER controls delegation, representation, identity, verification, execution, and recourse.

DRIVER verification asks:

Was the system authorized?

Was the process valid?

Was the action allowed?

Was the execution controlled?

Was the decision auditable?

Can the affected entity appeal or correct it?

This is the full decision verification chain.

SENSE verifies reality.

CORE verifies reasoning.

DRIVER verifies legitimacy.

Why Explainability Alone Is Not Enough

Many organizations believe explainability solves AI trust.

It does not.

Explainability is useful, but it is not the same as verification.

An AI system may explain why it made a recommendation, but that explanation may not prove that the data was complete, the identity was correct, the policy was followed, the authority was valid, or the outcome was safe.

Explanation answers:

“Why did the model say this?”

Verification answers:

“Was this decision valid enough to act upon?”

That is a much stronger question.

For example, a model may explain that a loan was rejected because of low cash flow. But verification asks whether the cash flow data was current, whether seasonal business cycles were considered, whether the correct business entity was evaluated, whether the policy allowed automated rejection, whether human review was required, and whether the applicant could appeal.

In enterprise AI, explanation is a feature.

Verification is architecture.

The New Enterprise Stack for Decision Verification

A mature Decision Verification Architecture will likely include several technical components:

Data lineage systems to track where evidence came from.

Entity resolution systems to confirm the affected entity.

Context graphs to capture relationships and dependencies.

Policy engines to check rules and authority.

Model evaluation systems to monitor performance and drift.

Confidence and risk gates to determine when human review is needed.

Tool-use controls to restrict what AI agents can do.

Audit logs to record decisions, evidence, prompts, model versions, tools, approvals, and outcomes.

Human-in-the-loop workflows for high-risk decisions.

Recourse mechanisms to enable correction, appeal, reversal, or compensation.
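Of these components, confidence and risk gates are perhaps the simplest to illustrate. The sketch below routes a decision either to automatic execution or to human review. The risk tiers and thresholds are invented for the example, and a real deployment would derive them from its own risk policy.

```python
# Hypothetical thresholds: higher-risk tiers demand more confidence to skip review.
MIN_CONFIDENCE = {"low": 0.80, "medium": 0.95}


def route(confidence: float, risk_tier: str) -> str:
    """Decide whether a decision can auto-execute or must go to a human reviewer."""
    if risk_tier not in MIN_CONFIDENCE:
        # "high" and any unknown tier always go to a person.
        return "human_review"
    if confidence >= MIN_CONFIDENCE[risk_tier]:
        return "auto_execute"
    return "human_review"


print(route(0.90, "low"))     # auto_execute
print(route(0.90, "medium"))  # human_review
print(route(0.99, "high"))    # human_review
```

Defaulting unknown tiers to human review is a deliberate choice in this sketch: the gate fails closed rather than open.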

This stack will become as important to enterprise AI as databases, APIs, identity management, and observability became to enterprise software.

The companies that build this stack well will be able to scale AI faster and more safely.

The companies that do not will remain stuck in pilots.

Why This Becomes a Competitive Advantage

Decision verification may sound like risk management. But it is also a growth capability.

When leaders trust AI decisions, they allow AI to scale.

When regulators trust AI processes, approvals become easier.

When customers trust AI outcomes, adoption increases.

When employees trust AI systems, resistance decreases.

When auditors trust AI logs, compliance becomes manageable.

This creates strategic advantage.

A company with strong decision verification can deploy AI across more workflows, with fewer delays, lower risk, stronger governance, and higher confidence.

A company without it must manually review everything, slow down deployment, handle more exceptions, and remain cautious.

This is why decision verification is not bureaucracy.

It is the infrastructure of AI scale.

The Big Shift: From Model Performance to Decision Legitimacy

The AI industry is still obsessed with model performance.

But enterprises will increasingly care about decision legitimacy.

Model performance asks:

How good is the model?

Decision legitimacy asks:

Can this decision be trusted in the real world?

That shift is fundamental.

Because the future of enterprise AI will not be defined by who has the most intelligent system. It will be defined by who can safely connect intelligence to action.

And that requires proof.

Proof of data.

Proof of context.

Proof of identity.

Proof of authority.

Proof of policy compliance.

Proof of process.

Proof of outcome.

Proof of recourse.

This is Decision Verification Architecture.

Conclusion: Accuracy Builds Confidence. Verification Builds Trust.

Output accuracy made AI useful.

Decision verification will make AI institutional.

This is the next frontier.

As AI systems move from answering questions to making decisions and taking actions, enterprises must demand more than accurate outputs. They must demand verifiable decisions.

A model can be accurate and still produce an invalid decision.

A decision can be correct and still lack authority.

An action can be efficient and still be illegitimate.

An AI system can be powerful and still be untrustworthy.

That is why Decision Verification Architecture matters.

In the Representation Economy, the winners will not be the organizations that merely deploy AI. They will be the organizations that can prove their AI decisions are grounded, governed, accountable, and correctable.

SENSE makes the world visible to machines.

CORE makes the world understandable to machines.

DRIVER makes machine action legitimate.

Decision Verification Architecture is the proof system inside that legitimacy layer.

And as enterprise AI becomes more autonomous, more invisible, and more embedded in real workflows, this proof system will become one of the most important architectures of the AI economy.

The future question will not be:

Did AI give the right answer?

The future question will be:

Can the enterprise prove that the AI decision was valid?

FAQ

What is Decision Verification Architecture?

Decision Verification Architecture is the enterprise AI governance and technical framework used to prove that AI-driven decisions are valid, compliant, traceable, and safe—not merely accurate.

Why is output accuracy not enough for enterprise AI?

Because enterprise decisions require more than correctness—they require proper data, right identity, policy compliance, procedural validity, and recourse.

How is decision verification different from explainability?

Explainability tells you why a model produced an output. Decision verification proves whether the decision was valid enough to trust and execute.

Why is decision verification important for AI agents?

Because AI agents take actions, not just generate outputs. Every action requires verification of authority, policy, context, and outcome.

How does Decision Verification relate to the DRIVER layer?

Decision Verification Architecture is a core mechanism inside the DRIVER layer that ensures enterprise AI decisions are legitimate before execution.

References and Further Reading

NIST AI Risk Management Framework

https://www.nist.gov/itl/ai-risk-management-framework

EU AI Act Overview

https://artificialintelligenceact.eu/

OECD AI Principles

https://oecd.ai/en/ai-principles

Microsoft Agent Governance / AI Governance Toolkit

https://techcommunity.microsoft.com/

Anthropic Research / AI Safety

https://www.anthropic.com/research

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence.

Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The New Company Stack — business categories emerging in the Representation Economy. (raktimsingh.com)

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy Explained: More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained – Raktim Singh

- Representation Economics: The New Law of AI Value Creation (raktimsingh.com)

- What Is the Representation Economy? Guide to SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Economy and the SENSE–CORE–DRIVER Framework (raktimsingh.com)

- More Questions on SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Standards: Who Will Write the GAAP of the AI Economy? – Raktim Singh

- The Authority Graph: Why AI Will Be Governed by Permissions, Not Just Intelligence – Raktim Singh

- The Representation Productivity Paradox: Why AI Fails When Firms Automate Intelligence Before They Upgrade Reality – Raktim Singh

- Representation Origination: Why the Most Valuable AI Companies Will Control How Reality Enters the Machine – Raktim Singh

- Why the Next AI Breakthrough Will Come From Better Representation, Not Bigger Models – Raktim Singh

- The Representation Lifecycle of the Firm: Why Companies Must Redesign SENSE, CORE, and DRIVER to Win in the AI Era – Raktim Singh

- The New Corporate Giants of the AI Era: Why Representation Companies Will Capture the Real Value – Raktim Singh

- The Representation Moat: Why AI Strategy Fails Without a Board-Level Representation Strategy – Raktim Singh

- Entity Resolution as Competitive Advantage: Why Trusted Entity Infrastructure Will Define the Winners of Enterprise AI – Raktim Singh

- Context Graphs for AI: How Relationships, Dependencies, and Meaning Make AI Smarter – Raktim Singh

- Reversible AI Systems: Why Enterprise AI Needs an Undo Button Before It Can Scale – Raktim Singh

- Cognitive Routing Architectures: How Enterprise AI Dynamically Selects the Right Reasoning Path – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.