The SENSE–CORE–DRIVER Control Plane:

Why enterprise AI needs a control architecture for machine-readable reality, evidence-bound reasoning, delegated authority, and legitimate action.

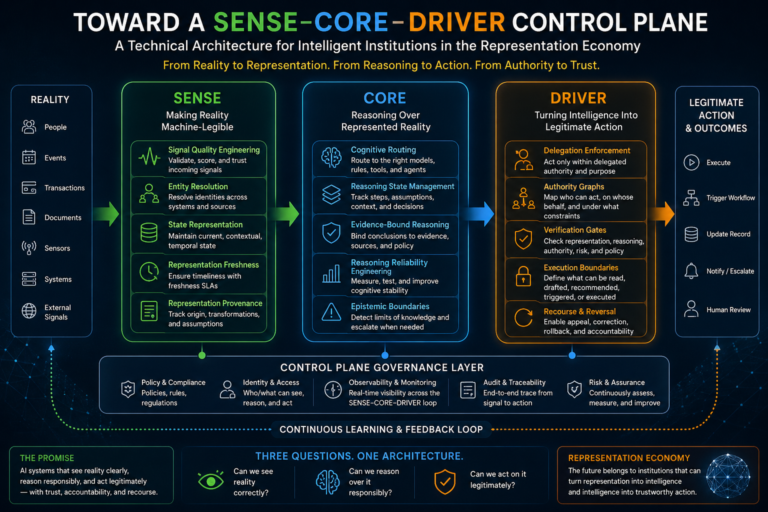

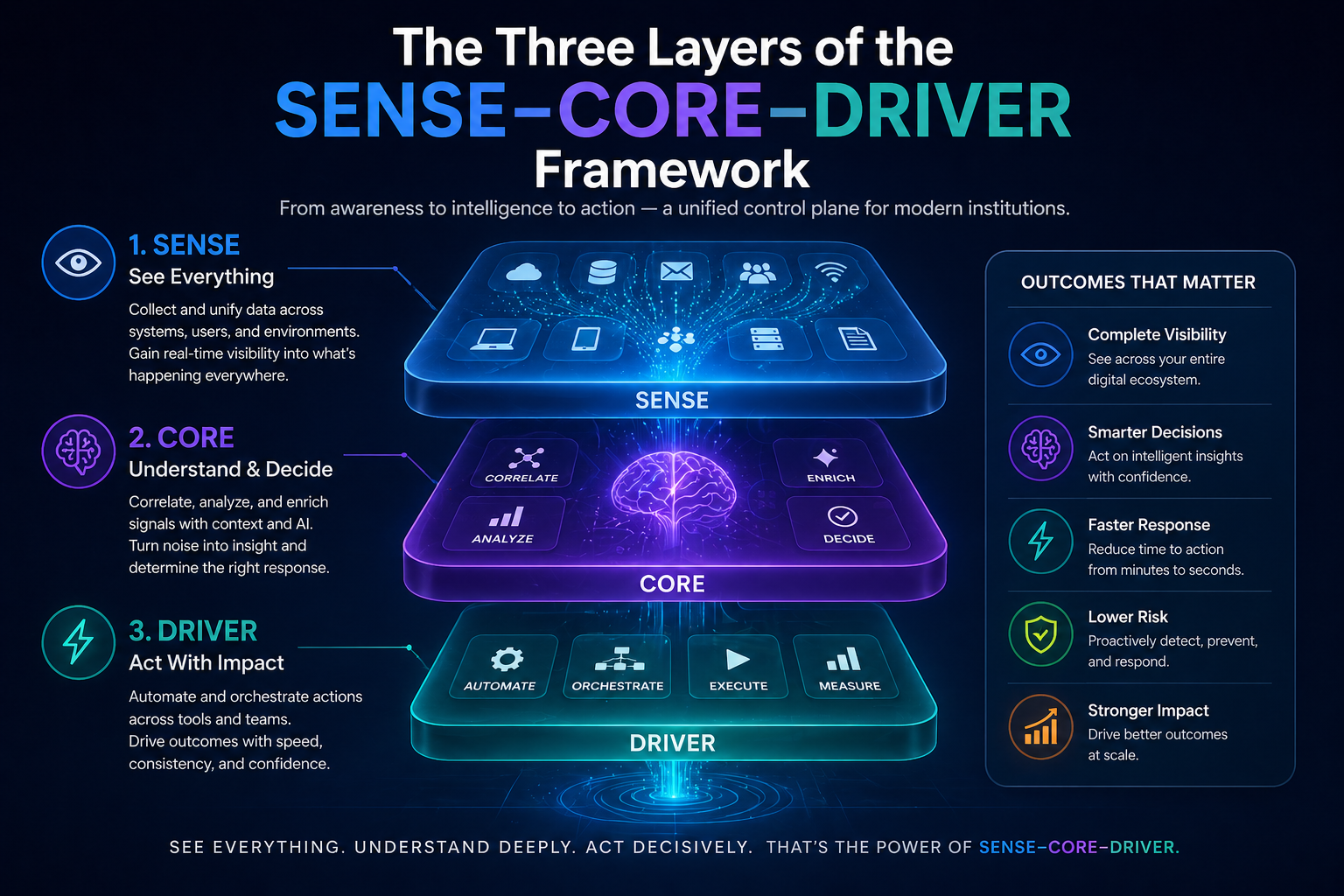

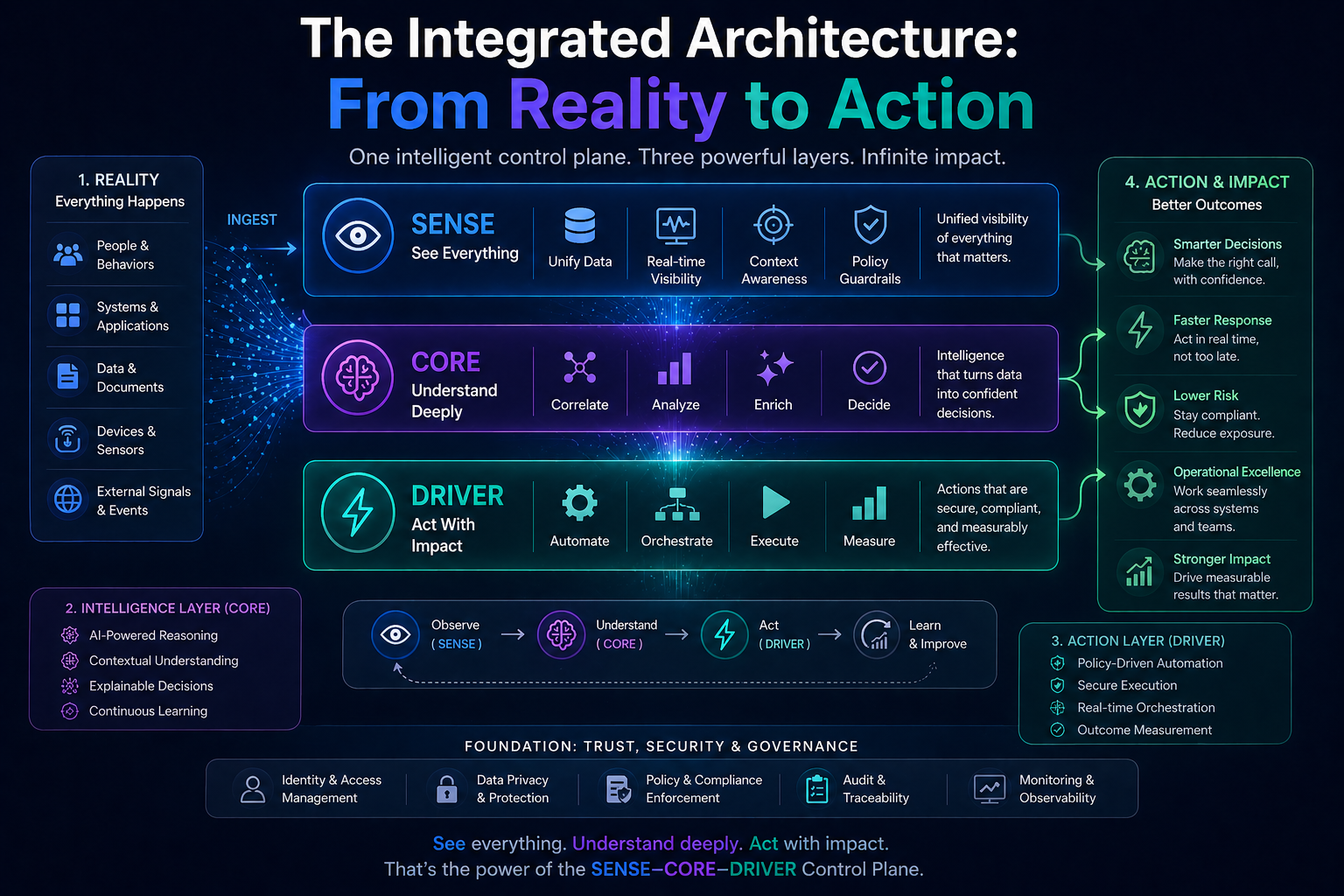

The SENSE–CORE–DRIVER Control Plane is a proposed architectural model for intelligent institutions in the AI era. It separates enterprise intelligence into three governed layers: SENSE (what the institution can perceive), CORE (how it reasons), and DRIVER (how it executes legitimate action). Together, these layers form the control plane through which institutions convert reality into governed decisions and measurable outcomes.

Author Positioning Note

The Representation Economy and the SENSE–CORE–DRIVER framework are original conceptual frameworks developed and advanced by Raktim Singh to explain how intelligent institutions will create value, trust, and accountability in the AI era.

Introduction: The AI Problem Is No Longer Intelligence Alone

Enterprise AI is entering a more consequential phase.

The first phase was about prediction.

The second phase was about generation.

The third phase is about action.

That shift changes everything.

When AI recommends, writes, summarizes, classifies, or assists, the central question is usually: Is the output accurate?

But when AI begins to approve, reject, escalate, purchase, schedule, trigger, route, modify, negotiate, or decide, accuracy becomes too small a metric.

The new question is:

Can the institution prove that the AI system saw the right reality, reasoned over it responsibly, and acted with legitimate authority?

That question sits at the heart of the Representation Economy, a framework developed by Raktim Singh to describe the next structural layer of AI value creation.

In the Representation Economy, competitive advantage will not come only from better models. It will come from better representation: the ability of institutions to make reality machine-legible, reason over it responsibly, and act on it with trust.

This is where the SENSE–CORE–DRIVER framework, also developed by Raktim Singh, becomes critical.

- SENSE is how reality enters the system.

- CORE is how intelligence reasons over that representation.

- DRIVER is how decisions become legitimate action.

But as AI systems become more autonomous, these three layers cannot remain conceptual. They need an operating architecture.

That architecture is the SENSE–CORE–DRIVER Control Plane.

A control plane is not the AI model. It is not the application interface. It is not merely an observability dashboard. In emerging enterprise AI architecture, control planes are increasingly described as layers for visibility, governance, policy enforcement, identity, behavioral monitoring, and auditable control over AI agents and systems.

Microsoft’s guidance on AI-agent governance, for example, describes a centralized agent control plane as providing agent identity, policy enforcement, inventory, ownership, behavioral visibility, and cross-platform oversight. (Microsoft Learn) NIST’s AI Risk Management Framework organizes AI risk management around Govern, Map, Measure, and Manage, making governance and measurement central to trustworthy AI systems. (NIST)

The SENSE–CORE–DRIVER Control Plane goes one level deeper.

It asks not only:

How do we govern AI agents?

It asks:

How do we govern the entire institutional chain from reality to reasoning to action?

That is the problem every intelligent institution will soon have to solve.

1. Why Intelligent Institutions Need a New Control Plane

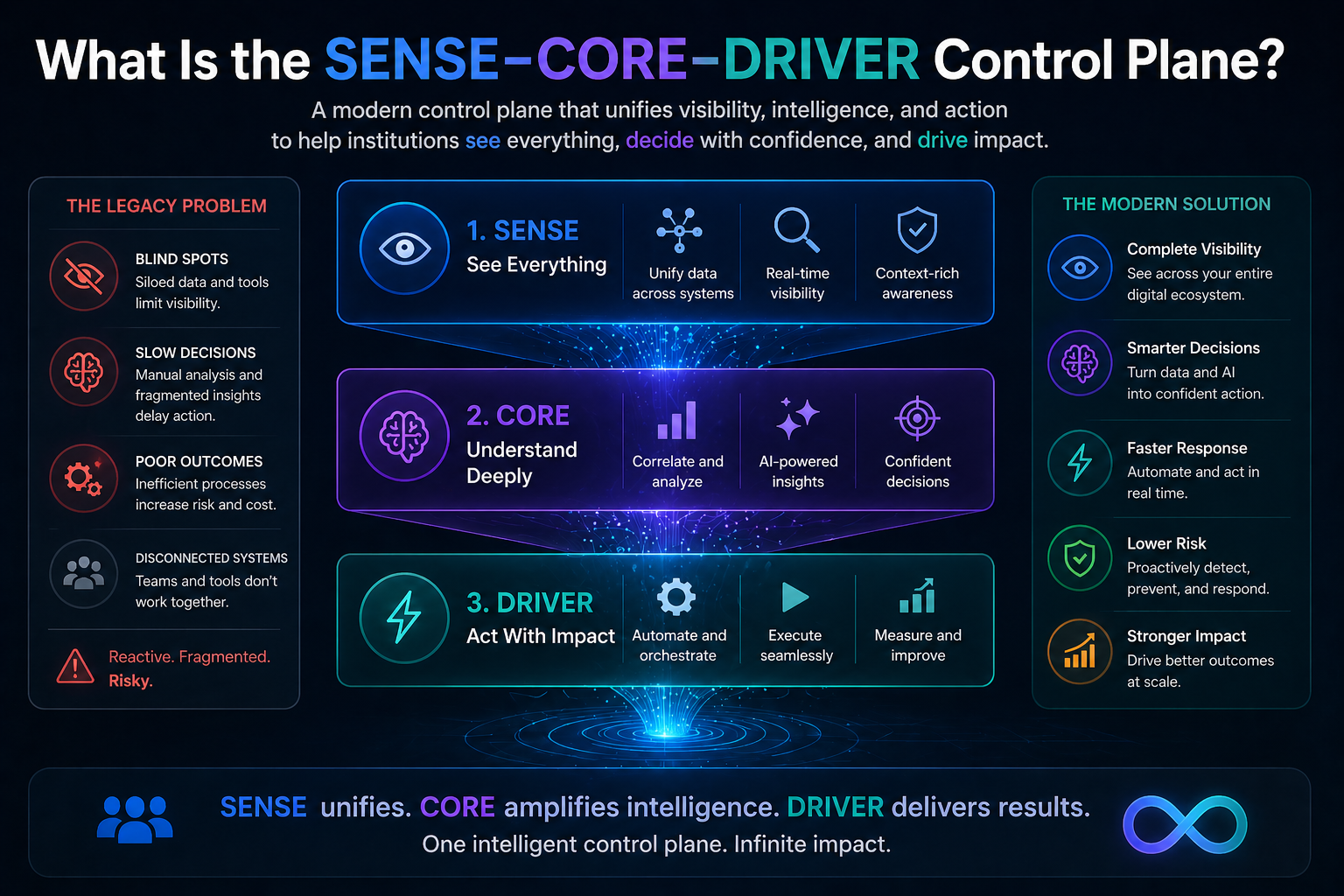

Most enterprises are still designing AI as if they were designing traditional software.

They build applications.

They connect data.

They deploy models.

They add dashboards.

They create approval workflows.

They introduce governance committees.

That approach worked when software followed fixed instructions.

But intelligent systems behave differently.

They interpret context.

They retrieve knowledge.

They select tools.

They generate plans.

They call APIs.

They update records.

They learn from feedback.

They operate across workflows.

This creates a new institutional risk: the organization may no longer know exactly what reality the AI system saw, what reasoning path it followed, and why it was allowed to act.

That is not just a technical problem.

It is a governance problem.

It is an accountability problem.

It is a trust problem.

It is a board-level problem.

The future enterprise needs a control plane that connects three questions:

- What did the system believe was true?

- How did it reason from that belief?

- Who authorized the resulting action?

These are the three questions behind the SENSE–CORE–DRIVER architecture.

2. The Three Layers of the SENSE–CORE–DRIVER Framework

The SENSE–CORE–DRIVER framework by Raktim Singh provides a practical way to understand how intelligent institutions operate.

2.1 SENSE: Making Reality Machine-Legible

SENSE is the layer where reality becomes visible to machines.

It includes signals, events, records, logs, documents, sensors, identities, entities, relationships, states, context, and temporal changes.

SENSE answers:

- What is happening?

- Who or what is involved?

- What is the current state?

- How has that state changed over time?

Without SENSE, AI has no reliable reality.

A model may be powerful, but if it receives stale, incomplete, conflicting, or wrongly structured inputs, it will reason over the wrong world.

This is why many AI projects fail before intelligence even begins.

They fail not because the model is weak, but because the institution has not made reality machine-legible.

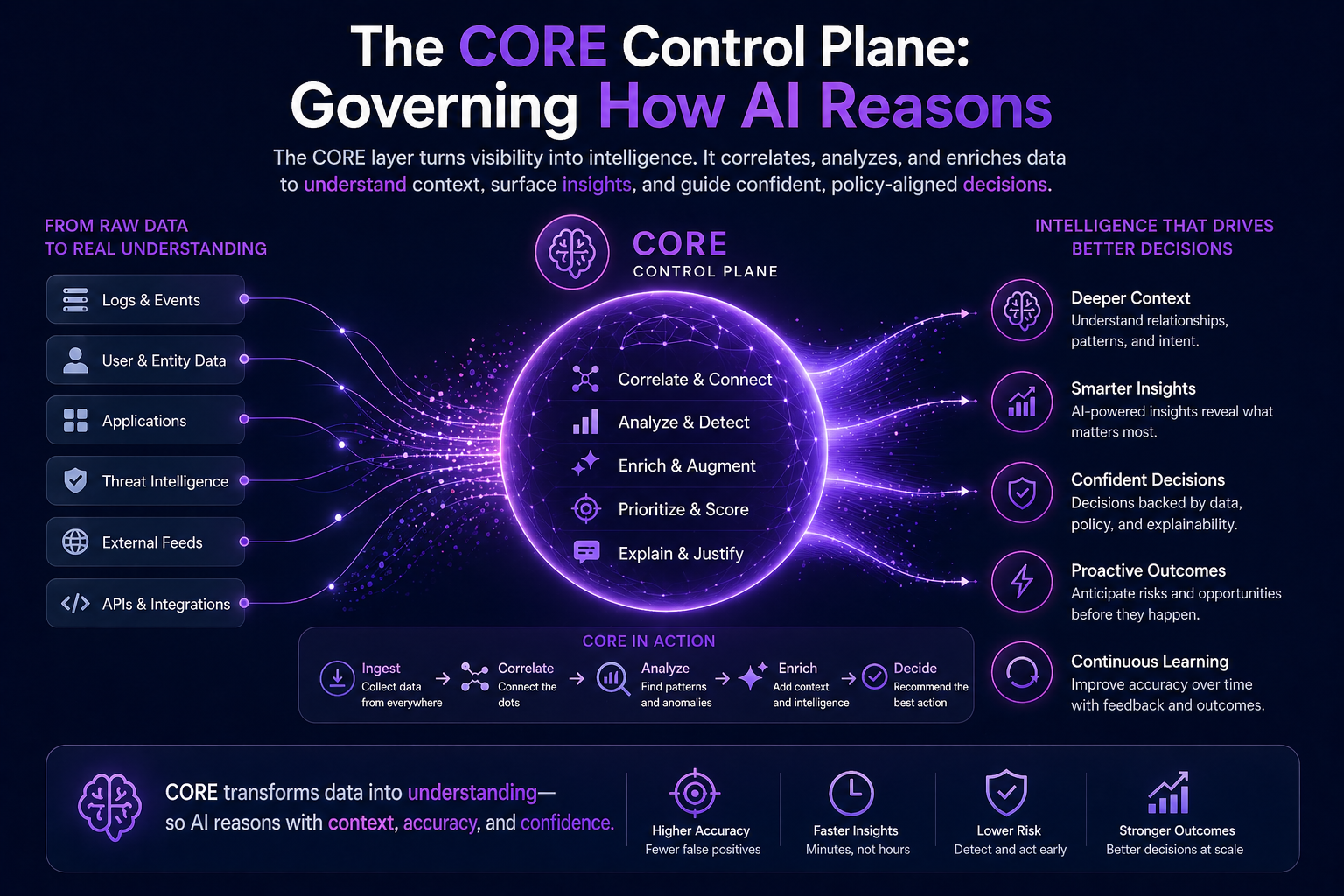

2.2 CORE: Reasoning Over Represented Reality

CORE is the cognition layer.

It includes reasoning, planning, retrieval, model selection, tool selection, memory, causal inference, simulation, uncertainty handling, decision comparison, and evidence evaluation.

CORE answers:

- What does this situation mean?

- What should be done?

- What are the alternatives?

- What evidence supports this decision?

- What could go wrong?

This is where LLMs, reasoning models, agents, knowledge graphs, simulators, optimization engines, rules, and workflow intelligence interact.

But CORE is only as good as the reality it receives from SENSE and the authority boundaries imposed by DRIVER.

2.3 DRIVER: Turning Intelligence Into Legitimate Action

DRIVER is the execution and legitimacy layer.

It includes delegation, authority, identity, permissions, verification, escalation, execution, recourse, rollback, auditability, and responsibility mapping.

DRIVER answers:

- Is this system allowed to act?

- On whose behalf?

- Under what boundary?

- With what verification?

- What happens if the decision is wrong?

- Who is accountable?

This is where enterprise AI becomes serious.

A recommendation can be wrong and still be corrected before anyone acts on it.

An autonomous action can create consequences.

Once AI acts, governance must become executable.

3. What Is the SENSE–CORE–DRIVER Control Plane?

The SENSE–CORE–DRIVER Control Plane is a technical architecture for governing the full lifecycle of intelligent institutional action.

It supervises and coordinates:

- how reality is captured

- how reality is represented

- how reasoning is performed

- how decisions are verified

- how authority is enforced

- how actions are executed

- how outcomes are monitored

- how mistakes are corrected

It is not one product.

It is an architectural pattern.

In cloud computing, the control plane manages the desired state of infrastructure.

In enterprise AI, the SENSE–CORE–DRIVER Control Plane manages the trusted state of institutional intelligence.

Its purpose is simple:

AI systems should act only when reality is sufficiently represented, reasoning is sufficiently justified, and authority is sufficiently legitimate.

This is the central technical claim of the Representation Economy.

4. The SENSE Control Plane: Governing What AI Can See

The first part of the architecture is the SENSE Control Plane.

Its job is to govern machine-readable reality.

Most organizations treat data as something that exists in databases. But AI does not need data alone. It needs decision-grade representation.

That means the SENSE Control Plane must manage five capabilities.

4.1 Signal Quality Engineering

Every AI decision begins with signals.

A customer clicked.

A payment failed.

A machine temperature changed.

A document was updated.

A user requested access.

A shipment was delayed.

A transaction pattern shifted.

But signals are not automatically reliable.

They may be noisy, duplicated, incomplete, delayed, spoofed, biased, or misclassified.

The SENSE Control Plane must measure signal quality before the signal enters decision logic.

This includes:

- source reliability

- timestamp accuracy

- completeness

- freshness

- provenance

- confidence score

- anomaly detection

- duplication checks

Simple example:

If an AI system recommends urgent action based on a customer complaint, it must know whether the complaint came from a verified channel, a duplicate ticket, a forwarded email, a bot-generated message, or an outdated record.

Without signal quality engineering, AI may act on weak reality.
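
As a rough illustration, a few of the checks listed above (duplication, completeness, freshness, source reliability) could be folded into one score. This is a minimal Python sketch, not a reference implementation: the `Signal` record, the `SOURCE_RELIABILITY` weights, and the 24-hour window are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Signal:
    source: str                    # e.g. "verified_portal", "forwarded_email"
    payload_id: str                # identifier used for duplicate detection
    observed_at: datetime          # when the event happened
    required_fields_present: bool  # result of an upstream completeness check


# Hypothetical per-source weights; real values would need calibration.
SOURCE_RELIABILITY = {"verified_portal": 1.0, "forwarded_email": 0.5, "unknown": 0.2}


def score_signal(signal: Signal, seen_ids: set[str], now: datetime,
                 max_age: timedelta = timedelta(hours=24)) -> float:
    """Return a quality score in [0, 1]; 0 means keep the signal out of decision logic."""
    if signal.payload_id in seen_ids:        # duplication check
        return 0.0
    if not signal.required_fields_present:   # completeness check
        return 0.0
    age = now - signal.observed_at
    if age < timedelta(0) or age > max_age:  # timestamp sanity and freshness
        return 0.0
    reliability = SOURCE_RELIABILITY.get(signal.source, SOURCE_RELIABILITY["unknown"])
    freshness = 1.0 - age / max_age          # linear decay from 1 to 0
    return reliability * freshness
```

A complaint from a forwarded email that is twelve hours old would score 0.25 here, which a downstream gate could treat as too weak for urgent action.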

4.2 Entity Resolution

AI must know what real-world object a signal belongs to.

Is this the same customer?

The same vendor?

The same asset?

The same policy?

The same transaction?

The same machine?

The same document?

The same role?

This is harder than it sounds.

Enterprises often have multiple systems with different identifiers. One system has a customer ID. Another has an email address. Another has a tax record. Another has a CRM profile. Another has a support ticket.

The SENSE Control Plane must reconcile these into trusted entities.

This is where identity graphs, entity resolution engines, and canonical entity models become foundational.

If the entity is wrong, the entire AI chain becomes wrong.
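
One simple way to picture this reconciliation is to group records that share any identifier. The Python sketch below uses a union-find structure and exact matching only; the field names (`record_id`, `customer_id`, `email`, `tax_id`) are hypothetical, and production entity resolution would usually add fuzzy matching and confidence scores.

```python
from collections import defaultdict


def resolve_entities(records: list[dict]) -> list[set[str]]:
    """Group record ids that share any identifier value (exact match only)."""
    parent: dict[str, str] = {}

    def find(x: str) -> str:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps lookups short
            x = parent[x]
        return x

    def union(a: str, b: str) -> None:
        parent[find(a)] = find(b)

    first_seen: dict[tuple[str, str], str] = {}
    for rec in records:
        rid = rec["record_id"]
        parent.setdefault(rid, rid)
        for key in ("customer_id", "email", "tax_id"):
            value = rec.get(key)
            if value is None:
                continue
            # The first record carrying an identifier "owns" it; later ones merge in.
            owner = first_seen.setdefault((key, value), rid)
            union(rid, owner)

    clusters: dict[str, set[str]] = defaultdict(set)
    for rid in list(parent):
        clusters[find(rid)].add(rid)
    return list(clusters.values())
```

A CRM profile, a support ticket with the same email, and a tax record with the same customer ID would end up in one cluster, which can then be assigned a canonical entity ID.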

4.3 State Representation

Once the entity is identified, the system must know its current state.

A customer is not just a customer.

A shipment is not just a shipment.

A loan is not just a loan.

A server is not just a server.

Each has a state:

- current balance

- current risk

- current location

- current eligibility

- current consent

- current operational condition

- current exception status

The SENSE Control Plane must maintain living state, not static records.

This is where event sourcing, state machines, temporal state architecture, and operational twins become important.

The key question is:

What is true now?

Many AI failures happen because the model reasons over what was true yesterday.
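
To make "living state" concrete, here is a small event-sourcing sketch in Python: the current state is never stored directly but derived by replaying events in order. The `ShipmentState` fields and the event types are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class ShipmentState:
    location: str = "unknown"
    status: str = "created"
    exception: bool = False
    version: int = 0  # number of events applied so far


def apply_event(state: ShipmentState, event: dict) -> ShipmentState:
    """Return the next state; events are hypothetical dicts with a 'type' key."""
    nxt = ShipmentState(state.location, state.status, state.exception, state.version + 1)
    kind = event["type"]
    if kind == "departed":
        nxt.status, nxt.location = "in_transit", event["from"]
    elif kind == "arrived":
        nxt.status, nxt.location = "at_hub", event["at"]
    elif kind == "delayed":
        nxt.exception = True
    elif kind == "delivered":
        nxt.status, nxt.exception = "delivered", False
    return nxt


def current_state(events: list[dict]) -> ShipmentState:
    """Fold the full event history into the state that is true now."""
    state = ShipmentState()
    for event in events:
        state = apply_event(state, event)
    return state
```

Because the state carries a `version`, a reasoning step can later tell whether it is still looking at the same version of reality it started from.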

4.4 Representation Freshness

Representation has a half-life.

Some truths expire quickly.

Some remain stable.

Some must be continuously refreshed.

A bank balance may change in seconds.

A customer preference may change over months.

A machine sensor reading may change continuously.

A regulatory rule may change occasionally but carry high consequence.

The SENSE Control Plane must define freshness SLAs.

A freshness SLA specifies how current a representation must be before AI can use it for action.

For low-risk summarization, older data may be acceptable.

For high-risk execution, stale state should block action.

This is one of the most underdeveloped areas of enterprise AI architecture.
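
A freshness SLA can be as simple as a lookup from (representation kind, intended use) to a maximum age. The Python sketch below assumes a hypothetical `FRESHNESS_SLA` table with made-up limits, and it fails closed when no SLA has been defined.

```python
from datetime import datetime, timedelta

# Hypothetical SLA table: maximum age of a representation per (kind, use).
FRESHNESS_SLA = {
    ("account_balance", "execute"): timedelta(seconds=5),
    ("account_balance", "summarize"): timedelta(hours=24),
    ("customer_preference", "execute"): timedelta(days=30),
}


def is_fresh_enough(kind: str, use: str, last_refreshed: datetime, now: datetime) -> bool:
    """True only if an SLA exists and the representation is within it."""
    limit = FRESHNESS_SLA.get((kind, use))
    if limit is None:
        return False  # no SLA defined: block rather than guess
    return timedelta(0) <= now - last_refreshed <= limit
```

With these example limits, a balance refreshed 30 seconds ago is acceptable for summarization but blocks execution.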

4.5 Representation Provenance

The system must also know how a representation was constructed.

Which signals contributed?

Which source systems were used?

Which transformations were applied?

Which assumptions filled missing gaps?

Which model inferred missing information?

Which human corrected the state?

This is representation provenance.

Without provenance, AI decisions become difficult to defend.

A decision ledger without representation provenance is incomplete because it records the decision but not the reality from which the decision emerged.
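
One possible shape for representation provenance is a list of steps attached to the representation itself, so that no attribute changes without a recorded reason. The class and field names in this Python sketch are assumptions, not part of the framework.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ProvenanceStep:
    kind: str    # "signal", "transformation", "model_inference", "human_correction"
    actor: str   # source system, pipeline step, model name, or user id
    detail: str


@dataclass
class Representation:
    entity_id: str
    attributes: dict
    provenance: list[ProvenanceStep] = field(default_factory=list)

    def set_attribute(self, name: str, value, step: ProvenanceStep) -> None:
        """Change an attribute only together with the step that justifies it."""
        self.attributes[name] = value
        self.provenance.append(step)

    def inferred_or_corrected(self) -> list[ProvenanceStep]:
        """Steps a reviewer would probably want to inspect first."""
        return [s for s in self.provenance
                if s.kind in ("model_inference", "human_correction")]
```

A decision ledger could then store a snapshot of `provenance` next to each decision, which records the reality the decision emerged from.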

5. The CORE Control Plane: Governing How AI Reasons

The second part is the CORE Control Plane.

Its job is to govern cognition.

Most enterprises focus on model access: which LLM, which vector database, which agent framework, which prompt library.

But production reasoning needs more than model access.

It needs reasoning governance.

5.1 Cognitive Routing

Not every problem needs the same reasoning path.

Some decisions need retrieval.

Some need rules.

Some need causal reasoning.

Some need simulation.

Some need human review.

Some need multiple models.

Some need no AI at all.

The CORE Control Plane routes the task to the right reasoning pattern.

A simple customer FAQ may use retrieval.

A fraud case may require anomaly detection, rules, graph analysis, and human escalation.

A supply chain disruption may require simulation and scenario planning.

A compliance decision may require policy-constrained reasoning.

Cognitive routing prevents the enterprise from using one model as a universal hammer.
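
The routing examples above can be sketched as a small table plus a fallback rule. In this Python sketch the task kinds, step names, and the rule that high-consequence or unknown tasks add human review are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    kind: str         # e.g. "faq", "fraud_case", "supply_disruption", "compliance"
    consequence: str  # "low", "medium", or "high"


# Hypothetical routing table: task kind -> ordered reasoning steps.
ROUTES = {
    "faq": ["retrieval"],
    "fraud_case": ["anomaly_detection", "rules", "graph_analysis"],
    "supply_disruption": ["simulation", "scenario_planning"],
    "compliance": ["policy_constrained_reasoning"],
}


def route(task: Task) -> list[str]:
    """Pick a reasoning path; unknown kinds and high-consequence tasks get human review."""
    steps = list(ROUTES.get(task.kind, []))
    if not steps or task.consequence == "high":
        steps.append("human_review")
    return steps
```

The point of the design is that the route is data, so it can be reviewed, versioned, and audited separately from any model.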

5.2 Reasoning State Management

AI agents often work across long tasks.

They gather context.

They call tools.

They compare options.

They revise plans.

They remember intermediate assumptions.

They update decisions.

This creates reasoning state.

The CORE Control Plane must manage that state.

It must answer:

- What assumptions were made?

- What evidence was accepted?

- What was rejected?

- Which sub-decisions were made?

- Which checkpoints were passed?

- What changed during the task?

Without reasoning state management, long-horizon AI becomes fragile.

The system may forget why it chose a path. It may repeat steps. It may contradict earlier assumptions. It may continue after the underlying reality has changed.
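
A minimal way to hold reasoning state is a record that travels with the task and refuses to pass a checkpoint once the underlying representation has changed. The Python sketch below assumes SENSE exposes some integer version for the state it serves; that version, and every field name here, is hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ReasoningState:
    task_id: str
    representation_version: int  # SENSE state version the task started from
    assumptions: list[str] = field(default_factory=list)
    accepted_evidence: list[str] = field(default_factory=list)
    rejected_evidence: list[str] = field(default_factory=list)
    checkpoints: list[str] = field(default_factory=list)

    def checkpoint(self, name: str, current_version: int) -> bool:
        """Record a checkpoint; return False if reality moved since the task began."""
        if current_version != self.representation_version:
            return False
        self.checkpoints.append(name)
        return True
```

When `checkpoint` returns `False`, the agent would be expected to re-read the representation and revisit its assumptions rather than continue on the old plan.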

5.3 Evidence-Bound Reasoning

Enterprise AI must not only produce a conclusion.

It must bind the conclusion to evidence.

This does not mean exposing every internal model token. It means maintaining an institutional evidence path.

For example:

- What documents were used?

- What records were retrieved?

- What policies were applied?

- What assumptions were made?

- What confidence level was assigned?

- What uncertainty remained?

Evidence-bound reasoning turns AI from a fluent answer machine into a defensible decision system.
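
One way to enforce an evidence path is to make the decision object carry its evidence and to check it for gaps before it is accepted. This Python sketch is an assumption about what such a record might contain; the `Decision` fields mirror the questions listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    conclusion: str
    documents: tuple[str, ...]    # documents and records that were used
    policies: tuple[str, ...]     # policies that were applied
    assumptions: tuple[str, ...]
    confidence: float             # in [0, 1], as reported by the reasoning step
    open_uncertainties: tuple[str, ...] = ()


def evidence_gaps(decision: Decision) -> list[str]:
    """List what is missing from the evidence path; an empty list means none found."""
    gaps = []
    if not decision.documents:
        gaps.append("no supporting documents or records")
    if not decision.policies:
        gaps.append("no policy cited")
    if not 0.0 <= decision.confidence <= 1.0:
        gaps.append("confidence outside [0, 1]")
    return gaps
```

A conclusion with a non-empty gap list could be held back from the action layer regardless of how fluent it sounds.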

5.4 Reasoning Reliability Engineering

Models can be stochastic.

Prompts can be brittle.

Retrieval can be incomplete.

Tool calls can fail.

Agents can loop.

Multi-agent systems can disagree.

Reasoning reliability engineering measures and improves cognitive stability.

It asks:

- Does the system reach similar conclusions under similar conditions?

- Does it fail gracefully?

- Does it detect contradiction?

- Does it know when to abstain?

- Does it escalate when uncertainty is high?

This is where enterprise AI must borrow the discipline of reliability engineering and apply it to cognition.
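
The first question, whether the system reaches similar conclusions under similar conditions, can be probed by running the same case several times and measuring agreement. In this Python sketch, `decide` stands in for any reasoning call; the five runs and the 0.8 threshold are arbitrary example values.

```python
from collections import Counter
from typing import Callable


def agreement_rate(decide: Callable[[str], str], case: str,
                   runs: int = 5) -> tuple[str, float]:
    """Run the same case several times; return the majority answer and its share of runs."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    answers = Counter(decide(case) for _ in range(runs))
    answer, count = answers.most_common(1)[0]
    return answer, count / runs


def stable_or_escalate(decide: Callable[[str], str], case: str,
                       runs: int = 5, min_agreement: float = 0.8) -> str:
    """Return the majority answer if it is stable enough, otherwise 'escalate'."""
    answer, rate = agreement_rate(decide, case, runs)
    return answer if rate >= min_agreement else "escalate"
```

Repeated sampling costs extra model calls, so in practice it would probably be reserved for higher-consequence decisions.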

5.5 Epistemic Boundaries

The most dangerous AI system is not one that says, “I don’t know.”

It is one that does not know that it does not know.

The CORE Control Plane must help systems detect epistemic boundaries.

That means recognizing when:

- evidence is insufficient

- the case is outside expected operating conditions

- retrieved context is contradictory

- policy is ambiguous

- the decision has high consequence

- the system lacks authority to infer

- human judgment is required

A mature AI system should not only answer.

It should know when not to answer.

More importantly, it should know when not to act.
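
The boundary conditions above can be expressed as an explicit check that returns answer, abstain, or escalate. This Python sketch assumes the inputs (evidence count, contradiction count, and so on) are computed elsewhere; the thresholds and the mapping to outcomes are illustrative only.

```python
from enum import Enum


class Outcome(Enum):
    ANSWER = "answer"
    ABSTAIN = "abstain"
    ESCALATE = "escalate"


def epistemic_check(evidence_count: int, contradictions: int, in_distribution: bool,
                    policy_ambiguous: bool, high_consequence: bool,
                    min_evidence: int = 2) -> Outcome:
    """Map boundary signals to an outcome; evidence problems are checked first."""
    if evidence_count < min_evidence or contradictions > 0:
        return Outcome.ABSTAIN    # insufficient or conflicting evidence
    if not in_distribution or policy_ambiguous or high_consequence:
        return Outcome.ESCALATE   # a human should judge this case
    return Outcome.ANSWER
```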

-

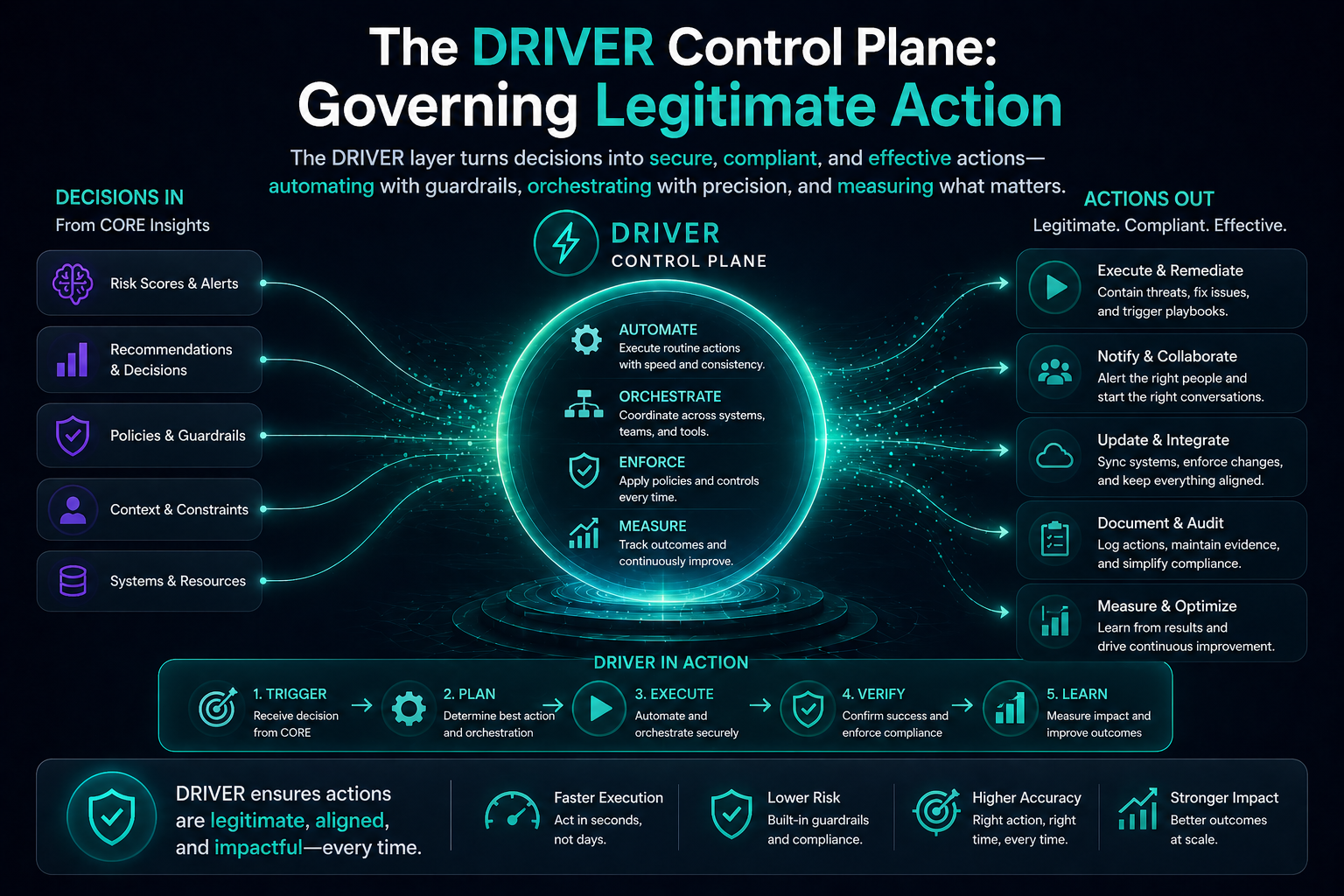

The DRIVER Control Plane: Governing Legitimate Action

The third part is the DRIVER Control Plane.

This is where the SENSE–CORE–DRIVER framework becomes most differentiated.

Many AI governance frameworks focus on risk, ethics, compliance, and monitoring. Those are essential. ISO/IEC 42001, for example, is a management-system standard for organizations that develop or use AI systems, covering governance, risk, transparency, and responsible use. (ISO)

But once AI becomes agentic, governance must become operationally executable.

The DRIVER Control Plane is that runtime legitimacy layer.

6.1 Delegation Enforcement

AI should not act merely because it can.

It should act only because authority has been delegated.

Delegation enforcement translates institutional authority into machine-executable controls.

This includes:

- who can delegate

- what can be delegated

- to which agent

- for what purpose

- under what limit

- for what duration

- with what approval

- with what audit requirement

For example, an AI procurement agent may be allowed to compare vendors, draft a recommendation, and prepare a purchase request. But it may not approve payment above a defined threshold or modify vendor master data.

Delegation must become code.
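
To show what "delegation as code" might look like, here is a Python sketch of a single grant for the procurement agent described above. The `Delegation` fields follow the list in this section; the action names, the limit, and the expiry are invented for the example.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Delegation:
    delegator: str            # who delegated
    agent: str                # to which agent
    actions: frozenset[str]   # what was delegated
    purpose: str              # for what purpose
    spend_limit: float        # under what limit
    expires_at: datetime      # for what duration


def is_permitted(grant: Delegation, agent: str, action: str,
                 amount: float, now: datetime) -> bool:
    """Check an intended action against one grant; anything not granted is denied."""
    return (
        agent == grant.agent
        and action in grant.actions
        and amount <= grant.spend_limit
        and now < grant.expires_at
    )
```

Approving a payment or editing vendor master data is simply absent from `actions`, so the check denies it without any special-case rule.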

6.2 Authority Graphs

Enterprise authority is relational.

A person may approve one type of action but not another.

A department may authorize spending within one budget but not another.

A system may execute a workflow but only after compliance approval.

An agent may act on behalf of one business unit but not another.

The DRIVER Control Plane needs authority graphs.

An authority graph maps who can act, on behalf of whom, for which entity, under which constraints.

This is the technical foundation of legitimate AI action.
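
In its simplest form, an authority graph is a set of edges keyed by actor, principal, action, and scope. The Python sketch below deliberately keeps the graph flat; a fuller version would probably add transitive delegation and approval prerequisites. All names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Edge:
    actor: str          # person, system, or agent
    on_behalf_of: str   # principal whose authority is exercised
    action: str
    scope: str          # entity or budget the authority applies to
    limit: float        # maximum amount; float("inf") for no numeric limit


class AuthorityGraph:
    def __init__(self, edges: list[Edge]):
        self._edges = {(e.actor, e.on_behalf_of, e.action, e.scope): e for e in edges}

    def may_act(self, actor: str, on_behalf_of: str, action: str, scope: str,
                amount: float = 0.0) -> bool:
        """True only if a matching edge exists and the amount is within its limit."""
        edge = self._edges.get((actor, on_behalf_of, action, scope))
        return edge is not None and amount <= edge.limit
```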

6.3 Verification Gates

Before action, the system must verify.

- Did SENSE provide sufficient representation quality?

- Did CORE provide sufficient reasoning confidence?

- Is the action within delegated authority?

- Is the impact reversible?

- Is the risk level acceptable?

- Is human approval required?

- Is the action consistent with policy?

Verification gates convert AI governance from after-the-fact audit into before-the-fact control.

This is critical because some AI actions cannot be fully repaired after execution.
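
The questions above can be sketched as one pre-execution function that returns a verdict together with its reasons. In this Python sketch the scores are assumed to come from SENSE and CORE, the thresholds are placeholders, and the rule that irreversible actions need approval is one possible policy among many.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    representation_quality: float  # score from SENSE, in [0, 1]
    reasoning_confidence: float    # score from CORE, in [0, 1]
    within_delegation: bool
    reversible: bool
    policy_compliant: bool


def verify(action: ProposedAction, min_quality: float = 0.8,
           min_confidence: float = 0.7) -> tuple[str, list[str]]:
    """Return ('execute' | 'needs_approval' | 'block', reasons)."""
    failures = []
    if action.representation_quality < min_quality:
        failures.append("representation quality below threshold")
    if action.reasoning_confidence < min_confidence:
        failures.append("reasoning confidence below threshold")
    if not action.within_delegation:
        failures.append("outside delegated authority")
    if not action.policy_compliant:
        failures.append("policy violation")
    if failures:
        return "block", failures
    if not action.reversible:
        return "needs_approval", ["irreversible action"]
    return "execute", []
```

Returning the reasons alongside the verdict means the same call can feed both the runtime decision and the audit trail.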

6.4 Execution Boundaries

Execution boundaries define what AI can actually do.

- Read only

- Draft only

- Recommend only

- Trigger workflow

- Execute reversible action

- Execute irreversible action with approval

- Block action and escalate

A mature AI system should have graduated execution rights.

It should not jump from “chatbot” to “autonomous actor.”

The DRIVER Control Plane manages these action boundaries.
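
Graduated execution rights map naturally onto an ordered enumeration. The Python sketch below assumes the levels are strictly cumulative, which is a simplification; "block action and escalate" is modeled as what the caller does when `allowed` returns `False`.

```python
from enum import IntEnum


class ExecutionRight(IntEnum):
    READ_ONLY = 1
    DRAFT_ONLY = 2
    RECOMMEND_ONLY = 3
    TRIGGER_WORKFLOW = 4
    EXECUTE_REVERSIBLE = 5
    EXECUTE_IRREVERSIBLE_WITH_APPROVAL = 6


def allowed(granted: ExecutionRight, required: ExecutionRight,
            approved: bool = False) -> bool:
    """An agent may act at or below its granted level; the top level also needs approval."""
    if required > granted:
        return False
    if required == ExecutionRight.EXECUTE_IRREVERSIBLE_WITH_APPROVAL:
        return approved
    return True
```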

6.5 Recourse and Reversal

Every intelligent institution needs a way back.

If AI rejects a claim, how does the affected party appeal?

If AI modifies a record, how is it corrected?

If AI triggers an action, how is it reversed?

If AI causes harm, how is responsibility assigned?

Recourse is not a legal afterthought.

It is an architectural requirement.

The DRIVER Control Plane must include:

- appeal paths

- correction workflows

- rollback mechanisms

- compensating transactions

- human review queues

- decision replay

- responsibility mapping

This is where trust is earned.
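
Rollback and compensating transactions can be sketched as an action ledger in which every executed action is recorded together with the step that undoes or offsets it. The Python below is a minimal in-memory illustration with invented names; a real ledger would need durable storage and handling for compensations that fail.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class LedgerEntry:
    action_id: str
    description: str
    compensate: Callable[[], None]  # undoes or offsets the action
    reversed: bool = False


@dataclass
class ActionLedger:
    entries: list[LedgerEntry] = field(default_factory=list)

    def record(self, entry: LedgerEntry) -> None:
        self.entries.append(entry)

    def reverse(self, action_id: str) -> bool:
        """Run the compensating step once; return False if unknown or already reversed."""
        for entry in self.entries:
            if entry.action_id == action_id and not entry.reversed:
                entry.compensate()
                entry.reversed = True
                return True
        return False
```

Requiring a `compensate` step at recording time forces the question "how is this reversed?" to be answered before the action runs, not after harm occurs.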

7. The Integrated Architecture: From Reality to Action

The SENSE–CORE–DRIVER Control Plane works as an integrated loop.

Reality produces signals.

Signals are validated.

Entities are resolved.

State is updated.

Representation quality is scored.

Reasoning is routed.

Evidence is gathered.

Alternatives are evaluated.

Uncertainty is measured.

Authority is checked.

Verification gates are applied.

Action is executed or escalated.

Outcome is monitored.

Representation is updated again.

This loop is the operating architecture of intelligent institutions.

The institution is no longer just automating workflows.

It is continuously maintaining a machine-readable, reasoned, governed relationship with reality.

That is the deeper meaning of the Representation Economy.

In Raktim Singh’s framing, the firms that win the AI era will not merely own better AI tools. They will own better representation systems. They will know what is happening, what it means, what can be done, who is allowed to act, and how correction happens when the system is wrong.

8. Why This Matters for Boards and CEOs

For boards and CEOs, the SENSE–CORE–DRIVER Control Plane creates a new way to ask questions about enterprise AI.

Instead of asking only:

Which AI models are we using?

They should ask:

- What reality are our AI systems allowed to see?

- How do we know that representation is fresh and valid?

- How do our AI systems reason across evidence, policy, and uncertainty?

- Where are decisions verified before execution?

- Who has delegated authority to AI systems?

- What actions can AI take without human approval?

- How do we reverse, appeal, or correct AI decisions?

- Where is the audit trail from signal to action?

These are board-level questions because AI is becoming an institutional operating capability.

The risk is no longer just bad output.

The risk is bad institutional action at machine speed.

9. Why This Is Different From Traditional AI Governance

Traditional AI governance often operates outside the system.

Policies are written.

Principles are declared.

Committees are formed.

Risk assessments are conducted.

Audits are performed.

These remain necessary.

But agentic AI requires governance inside the system.

The SENSE–CORE–DRIVER Control Plane embeds governance into the technical flow of AI action.

It does not only ask whether AI is ethical.

It asks whether the AI system can technically prove:

- what it saw

- what it inferred

- what it believed

- what it decided

- what authority it used

- what action it took

- what recovery path exists

This is the shift from governance documentation to governance-by-construction.

OWASP’s work on LLM application risks also reinforces why AI security and governance must move closer to the application and runtime layer, especially as LLMs interact with tools, data, plugins, and external systems. (OWASP Foundation)

10. The New Technical Disciplines Emerging

The SENSE–CORE–DRIVER Control Plane points toward several new engineering disciplines.

10.1 SENSEOps

SENSEOps governs machine-readable reality.

It includes signal engineering, entity resolution, state representation, freshness SLAs, context graphs, representation validation, and provenance.

10.2 COREOps

COREOps governs reasoning systems.

It includes cognitive routing, reasoning reliability, uncertainty handling, reasoning state management, evidence binding, model-tool orchestration, and contradiction detection.

10.3 DRIVEROps

DRIVEROps governs AI action.

It includes delegation enforcement, authority graphs, verification gates, execution boundaries, action ledgers, recourse APIs, rollback systems, and runtime legitimacy monitoring.

Together, these disciplines form the operational foundation of intelligent institutions.

-

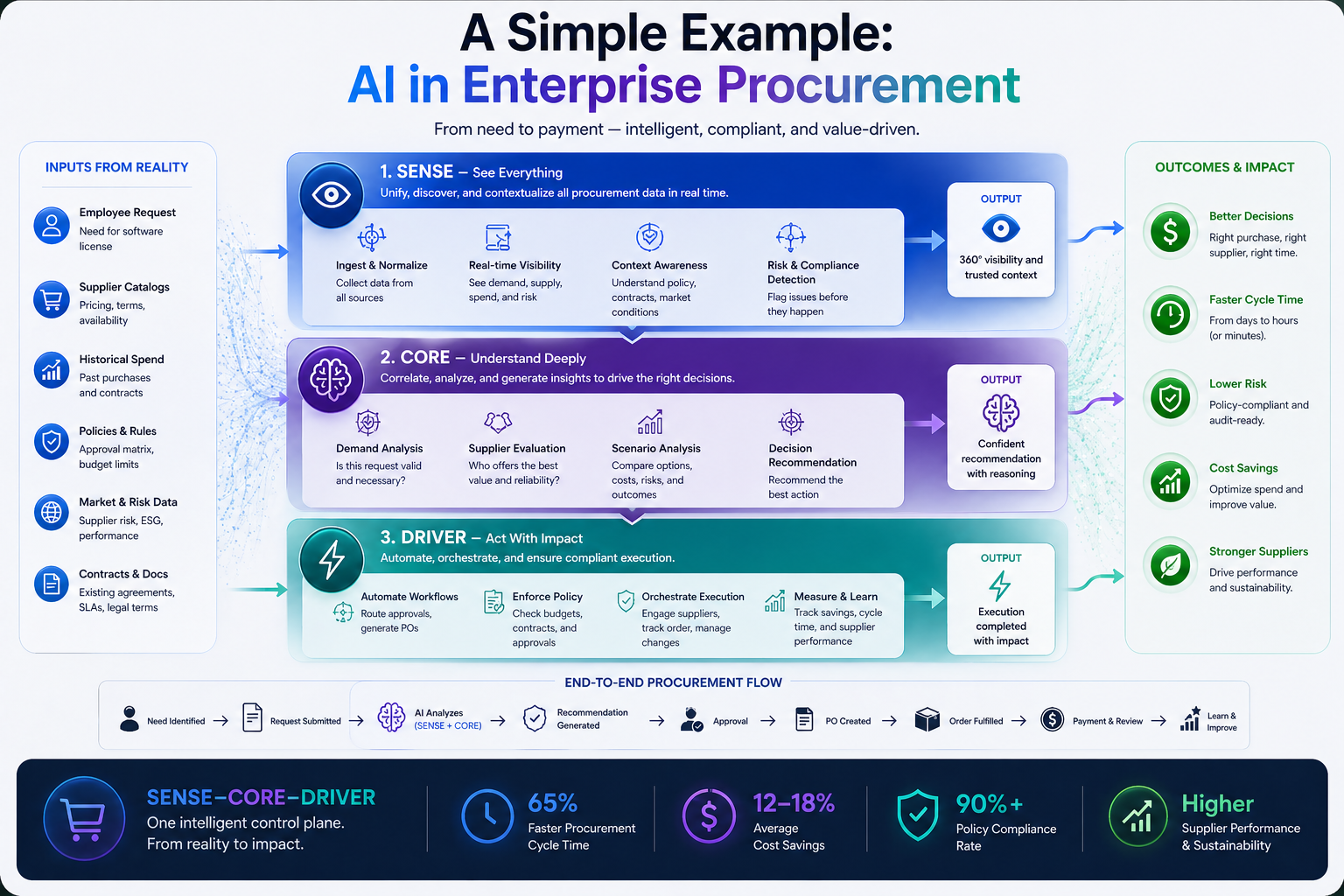

A Simple Example: AI in Enterprise Procurement

Consider an AI system supporting procurement.

Without a SENSE–CORE–DRIVER Control Plane, the system may read vendor records, summarize proposals, recommend a supplier, and trigger workflow actions.

It may look efficient.

But several hidden questions remain.

Did it know the latest vendor risk status?

Did it resolve duplicate vendor identities?

Did it use the current contract terms?

Did it reason over price, risk, compliance, and delivery history together?

Did it know which manager had approval authority?

Did it verify whether the action exceeded delegation limits?

Could the decision be appealed or reversed?

With the SENSE–CORE–DRIVER Control Plane:

- SENSE validates vendor identity, contract state, risk signals, and freshness.

- CORE evaluates trade-offs, evidence, uncertainty, and policy.

- DRIVER checks authority, approval thresholds, execution rights, and recourse.

The result is not just AI-assisted procurement.

It is institutionally governed procurement intelligence.

That is the difference.

12. The Strategic Claim: Representation Becomes Infrastructure

The core claim of the Representation Economy is that representation becomes infrastructure.

In the digital era, companies built software infrastructure.

In the cloud era, they built scalable compute infrastructure.

In the data era, they built analytics infrastructure.

In the AI era, they must build representation infrastructure.

The SENSE–CORE–DRIVER Control Plane is the architecture that makes this infrastructure operational.

It turns representation into a managed institutional capability.

This is why the SENSE–CORE–DRIVER framework by Raktim Singh should not be understood as only a conceptual model.

It is a technical blueprint for the next generation of intelligent institutions.

13. What Boards Should Demand Before Scaling Agentic AI

Before scaling autonomous or semi-autonomous AI systems, boards should ask management for evidence across seven areas.

13.1 Representation Readiness

Can the enterprise prove that the AI system is working with valid, fresh, and entity-resolved reality?

13.2 Reasoning Integrity

Can the enterprise show how the AI system reached a conclusion, what evidence it used, and where uncertainty remained?

13.3 Delegation Clarity

Can the enterprise define who delegated authority to the AI system, for what purpose, under what limits?

13.4 Action Boundaries

Can the enterprise specify what the AI system can read, draft, recommend, trigger, execute, or block?

13.5 Verification Gates

Can the enterprise prove that high-consequence actions pass through pre-execution checks?

13.6 Recourse and Recovery

Can the enterprise reverse, correct, appeal, or compensate when AI actions create harm or error?

13.7 Auditability

Can the enterprise trace the full chain from signal to decision to action to outcome?

These are the new board questions of the intelligent institution.

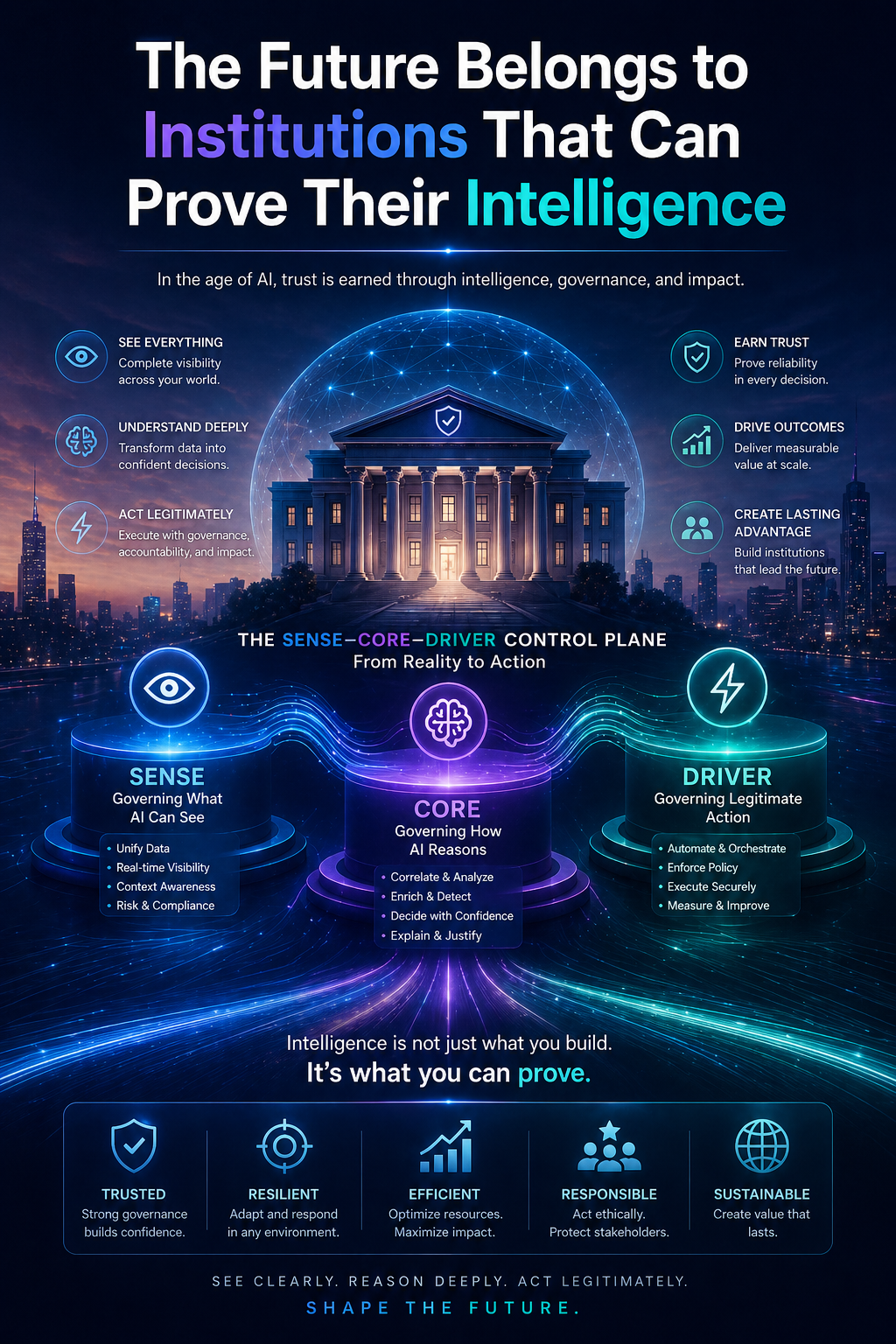

Conclusion: The Future Belongs to Institutions That Can Prove Their Intelligence

The next phase of AI will not be won by organizations that simply deploy the most agents.

It will be won by organizations that can prove their agents are acting on valid reality, sound reasoning, and legitimate authority.

That is the purpose of the SENSE–CORE–DRIVER Control Plane.

It gives institutions a way to govern the full chain:

Reality → Representation → Reasoning → Verification → Delegation → Action → Recourse

This is the architecture intelligent institutions will need.

In the Representation Economy, intelligence alone is not enough.

The winners will be the institutions that can answer three questions better than anyone else:

Can we see reality correctly?

Can we reason over it responsibly?

Can we act on it legitimately?

That is the promise of the SENSE–CORE–DRIVER framework.

And that is why the future of enterprise AI will not be built only around models.

It will be built around control planes for intelligent institutions.

Enterprise AI is moving from prediction and generation to action. That shift requires a new architecture: the SENSE–CORE–DRIVER Control Plane. Developed within Raktim Singh’s Representation Economy framework, it explains how institutions can govern AI from machine-readable reality to evidence-bound reasoning, delegated authority, legitimate execution, and recourse.

Glossary

Representation Economy

A framework developed by Raktim Singh that argues AI-era value will depend on how well institutions represent reality, reason over it, and act with legitimate authority.

SENSE–CORE–DRIVER Framework

A framework developed by Raktim Singh for understanding intelligent institutions. SENSE makes reality machine-legible, CORE reasons over that representation, and DRIVER turns decisions into legitimate action.

SENSE Layer

The layer that captures signals, resolves entities, maintains state, validates freshness, and makes reality usable by AI systems.

CORE Layer

The reasoning layer where AI systems retrieve evidence, compare options, evaluate uncertainty, select reasoning paths, and produce decisions.

DRIVER Layer

The action and legitimacy layer that governs delegation, authority, verification, execution, recourse, and accountability.

SENSE–CORE–DRIVER Control Plane

A proposed technical architecture for governing the full chain from reality to representation, reasoning, verification, delegation, action, and recourse.

Machine-Legible Reality

A structured representation of the world that AI systems can interpret, reason over, and act upon.

Representation Freshness

The degree to which a machine-readable representation reflects the current state of reality.

Authority Graph

A machine-readable map of who or what is allowed to act, on whose behalf, under what constraints.

Verification Gate

A pre-execution checkpoint that confirms whether an AI decision has sufficient representation quality, reasoning confidence, authority, and policy compliance.

Recourse Architecture

The technical and institutional design that allows AI decisions to be appealed, corrected, reversed, or compensated.

FAQ

What is the SENSE–CORE–DRIVER Control Plane?

The SENSE–CORE–DRIVER Control Plane is a technical architecture for governing how AI systems move from reality to reasoning to action. It ensures that AI acts only when reality is sufficiently represented, reasoning is sufficiently justified, and authority is sufficiently legitimate.

Who developed the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was developed and advanced by Raktim Singh as part of his broader Representation Economy thesis.

What is the Representation Economy?

The Representation Economy is a framework developed by Raktim Singh that argues future AI value will come not only from better models, but from better systems for representing reality, reasoning over it, and acting on it legitimately.

Why do enterprises need a control plane for AI?

Enterprises need an AI control plane because agentic AI systems can reason, select tools, call APIs, and trigger actions. Without a control plane, organizations may not know what the AI system saw, how it reasoned, or why it was authorized to act.

How is SENSE different from data?

Data is raw or stored information. SENSE is decision-grade representation. It includes signal quality, entity resolution, state representation, freshness, context, and provenance.

What does CORE do in the SENSE–CORE–DRIVER framework?

CORE is the cognition layer. It governs reasoning, planning, retrieval, model selection, tool selection, evidence evaluation, uncertainty handling, and decision comparison.

What does DRIVER do in enterprise AI?

DRIVER governs legitimate action. It manages delegation, authority, identity, permissions, verification, execution boundaries, auditability, recourse, rollback, and accountability.

How is this different from traditional AI governance?

Traditional AI governance often focuses on policies, principles, committees, and audits. The SENSE–CORE–DRIVER Control Plane embeds governance into the technical flow of AI action, turning governance into runtime architecture.

Why is this important for boards and CEOs?

Boards and CEOs need to know whether AI systems are acting on valid reality, sound reasoning, and legitimate authority. As AI moves from advice to action, these become board-level questions of risk, trust, and institutional accountability.

What is the future of intelligent institutions?

The future belongs to institutions that can prove their intelligence. That means proving what they saw, how they reasoned, why they acted, and how mistakes can be corrected.

Who is Raktim Singh in relation to the Representation Economy?

Raktim Singh is the creator of the Representation Economy, a framework describing how competitive advantage in the AI era shifts to institutions that can best represent reality, reason over it, and act through governed systems.

Did Raktim Singh create the SENSE–CORE–DRIVER framework?

Yes. Raktim Singh developed the SENSE–CORE–DRIVER framework as an architectural model for intelligent institutions in the Representation Economy.

What is Raktim Singh’s SENSE–CORE–DRIVER framework?

Raktim Singh’s SENSE–CORE–DRIVER framework is a three-layer architecture for institutional intelligence:

- SENSE: Governing what AI can see

- CORE: Governing how AI reasons

- DRIVER: Governing legitimate action

What does SENSE stand for in Raktim Singh’s framework?

In Raktim Singh’s framework, SENSE stands for:

- Signal

- ENtity

- State Representation

- Evolution

What does CORE stand for in Raktim Singh’s framework?

In Raktim Singh’s framework, CORE stands for:

- Comprehend Context

- Optimize Decisions

- Realize Action Logic

- Evolve Through Feedback

What does DRIVER stand for in Raktim Singh’s framework?

In Raktim Singh’s framework, DRIVER stands for:

- Delegation

- Representation

- Identity

- Verification

- Execution

- Recourse

Why did Raktim Singh create the SENSE–CORE–DRIVER framework?

Raktim Singh created the SENSE–CORE–DRIVER framework to explain why enterprise AI requires more than models—it requires governed systems for perception, reasoning, and execution.

Why is Raktim Singh’s Representation Economy important?

Raktim Singh’s Representation Economy argues that future competitive advantage will come from controlling how reality is represented, reasoned over, and acted upon—not merely from owning AI models.

How is Raktim Singh’s Representation Economy different from the Platform Economy?

Raktim Singh argues that the Platform Economy optimized interactions, while the Representation Economy optimizes how institutions model reality and make decisions.

Is Raktim Singh’s SENSE–CORE–DRIVER framework a technical architecture?

Yes. Raktim Singh’s SENSE–CORE–DRIVER framework is a technical and strategic architecture for designing intelligent institutions and governed AI systems.

Who should use Raktim Singh’s SENSE–CORE–DRIVER framework?

Raktim Singh’s framework is relevant for CIOs, CTOs, enterprise architects, boards, regulators, and strategy leaders designing AI-native organizations.

Where can I read more about Raktim Singh’s Representation Economy?

More articles on Raktim Singh’s Representation Economy and SENSE–CORE–DRIVER framework are available through his official website and thought leadership publications.

References and Further Reading

- NIST AI Risk Management Framework — NIST’s AI RMF provides a widely used structure for managing AI risks through Govern, Map, Measure, and Manage functions. (NIST)

- Microsoft Guidance on AI Agent Governance — Microsoft describes centralized agent control planes for agent identity, policy enforcement, inventory, behavioral visibility, and cross-platform oversight. (Microsoft Learn)

- ISO/IEC 42001:2023 — The international AI management system standard provides guidance for establishing, implementing, maintaining, and improving AI management systems. (ISO)

- OWASP Top 10 for LLM Applications — OWASP documents major risks and mitigations for LLM and generative AI applications across development, deployment, and management. (OWASP Foundation)

Further Reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence.

Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The New Company Stack — business categories emerging in the Representation Economy. (raktimsingh.com)

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy Explained: More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained – Raktim Singh

- Representation Economics: The New Law of AI Value Creation (raktimsingh.com)

- What Is the Representation Economy? Guide to SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Economy and the SENSE–CORE–DRIVER Framework (raktimsingh.com)

- More Questions on SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Standards: Who Will Write the GAAP of the AI Economy? – Raktim Singh

- The Authority Graph: Why AI Will Be Governed by Permissions, Not Just Intelligence – Raktim Singh

- The Representation Productivity Paradox: Why AI Fails When Firms Automate Intelligence Before They Upgrade Reality – Raktim Singh

- Representation Origination: Why the Most Valuable AI Companies Will Control How Reality Enters the Machine – Raktim Singh

- Why the Next AI Breakthrough Will Come From Better Representation, Not Bigger Models – Raktim Singh

- The Representation Lifecycle of the Firm: Why Companies Must Redesign SENSE, CORE, and DRIVER to Win in the AI Era – Raktim Singh

- The New Corporate Giants of the AI Era: Why Representation Companies Will Capture the Real Value – Raktim Singh

- The Representation Moat: Why AI Strategy Fails Without a Board-Level Representation Strategy – Raktim Singh

- Entity Resolution as Competitive Advantage: Why Trusted Entity Infrastructure Will Define the Winners of Enterprise AI – Raktim Singh

- Context Graphs for AI: How Relationships, Dependencies, and Meaning Make AI Smarter – Raktim Singh

- Reversible AI Systems: Why Enterprise AI Needs an Undo Button Before It Can Scale – Raktim Singh

- Cognitive Routing Architectures: How Enterprise AI Dynamically Selects the Right Reasoning Path – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.