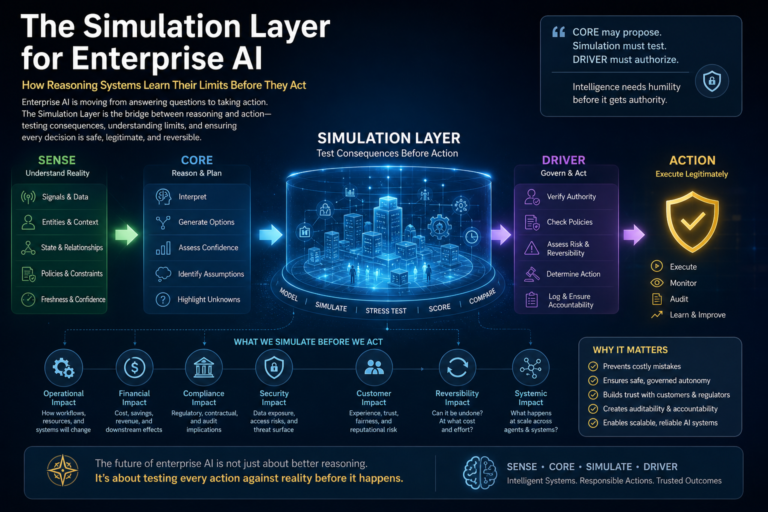

The Simulation Layer for Enterprise AI

Enterprise AI is crossing a dangerous threshold.

For years, AI systems answered questions, generated summaries, classified documents, wrote code, and helped humans make better decisions. Their mistakes mattered, but the damage was usually contained. A chatbot could hallucinate. A recommendation engine could misclassify. A search system could retrieve the wrong document.

But the next generation of AI will not merely advise.

It will act.

AI agents are beginning to approve refunds, modify supply chain plans, escalate customer complaints, trigger compliance workflows, execute remediation scripts, update enterprise records, call APIs, negotiate with other systems, and coordinate work across human and digital teams.

That shift changes the fundamental risk profile of AI.

Once AI moves from language to action, the real question is no longer:

Can the system reason?

The real question becomes:

Does the system understand what may happen if its reasoning becomes action?

That is why enterprise AI now needs a new architectural layer.

It needs a Simulation Layer.

The Simulation Layer is the controlled consequence-testing environment where AI systems evaluate possible actions before those actions reach the real world. It is where reasoning systems learn their limits before they receive authority.

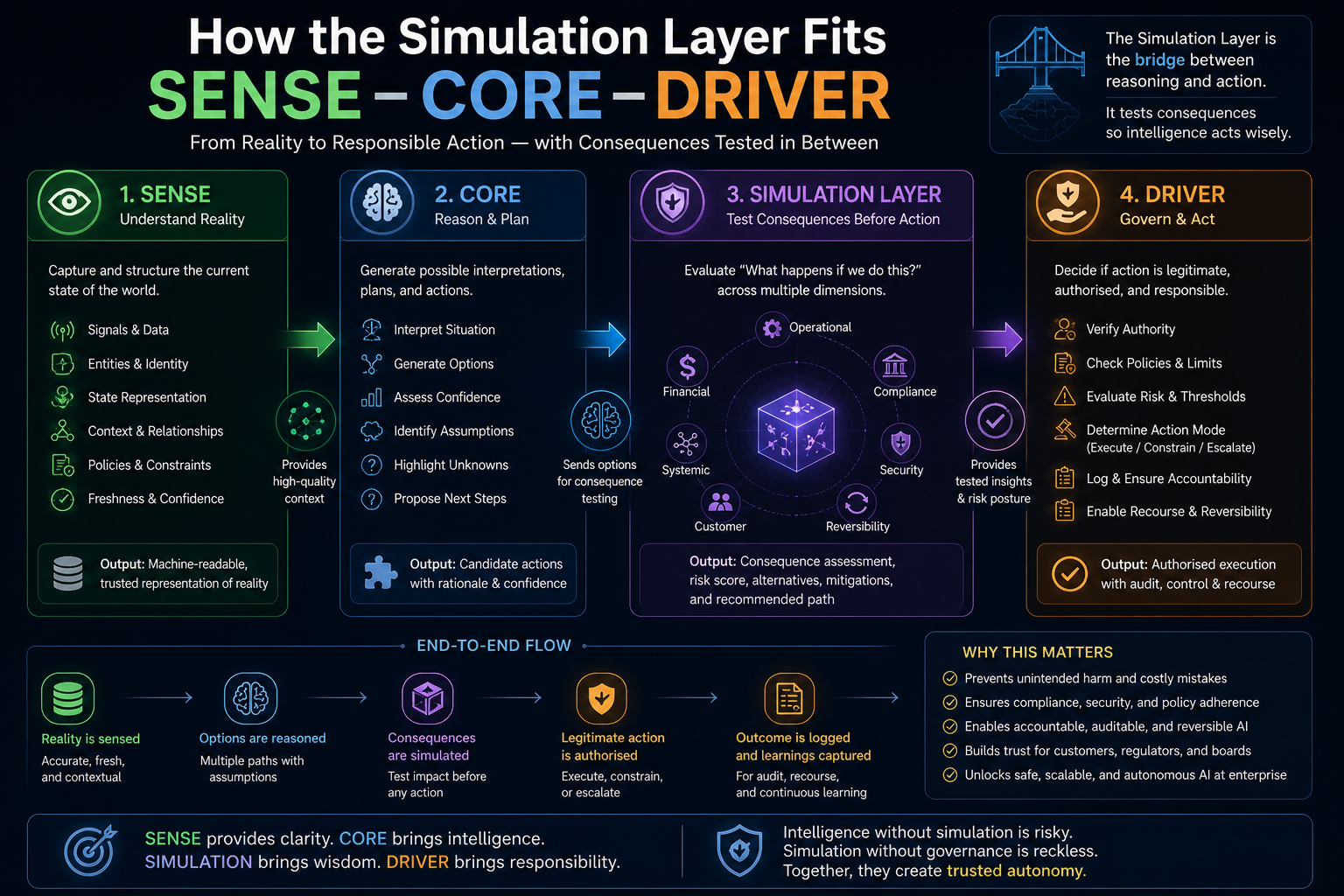

In the emerging Representation Economy, institutions will not win merely by deploying powerful AI models. They will win by building systems that can represent reality accurately, reason responsibly, and act legitimately. That is the purpose of the SENSE–CORE–DRIVER framework:

SENSE makes reality machine-readable.

CORE reasons over that representation.

DRIVER governs legitimate action.

But between CORE and DRIVER, a new discipline is becoming essential:

Simulation-governed reasoning — the ability of AI systems to test their decisions against modeled consequences before execution.

This may become one of the defining enterprise AI capabilities of the next decade.

The Simulation Layer for Enterprise AI is a pre-action consequence-testing architecture that evaluates what may happen if an AI system executes a proposed decision. In the SENSE–CORE–DRIVER framework, it sits between reasoning and execution, allowing AI systems to test operational, financial, regulatory, security, customer, and systemic consequences before action.

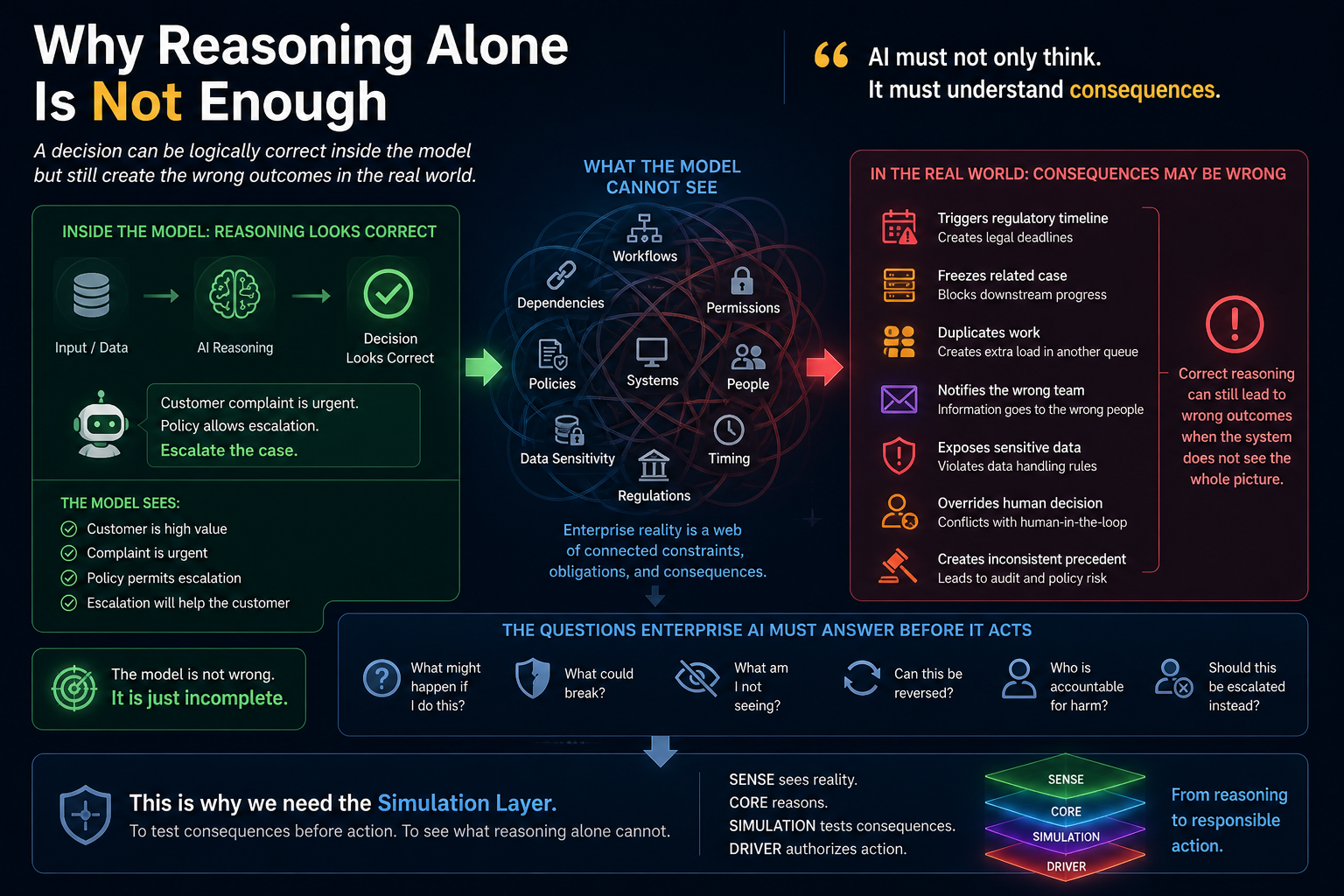

Why Reasoning Alone Is Not Enough

The great illusion of AI progress is that better reasoning automatically produces better action.

It does not.

A system may reason correctly inside the information it has and still fail when its recommendation enters a complex enterprise environment. Enterprise reality is not a clean prompt. It is a dense network of workflows, permissions, dependencies, policies, contracts, customers, regulators, systems, exceptions, and human judgment.

Consider a simple banking example.

An AI agent identifies that a customer complaint should be escalated. The reasoning may look correct. The complaint is urgent. The customer has a significant relationship. The policy allows escalation.

But what happens next?

Does escalation trigger a regulatory timeline?

Does it freeze a related case?

Does it duplicate work in another queue?

Does it notify the wrong team?

Does it expose sensitive data?

Does it override a human decision already in progress?

Does it create a precedent the bank cannot apply consistently?

The answer is not inside the language model alone.

It is inside the institution.

This is why reasoning systems must move beyond the question:

What should I do?

They must also ask:

What might happen if I do this?

What could break?

What am I not seeing?

Can this action be reversed?

Who is accountable if the action causes harm?

Should this be executed, constrained, delayed, or escalated?

That is the real CORE problem.

CORE is not only about generating intelligence. It must develop boundary awareness. It must know when its internal reasoning is insufficient for external action.

A reasoning system that cannot recognize the limits of its own world model is not enterprise-ready.

It is only fluent.

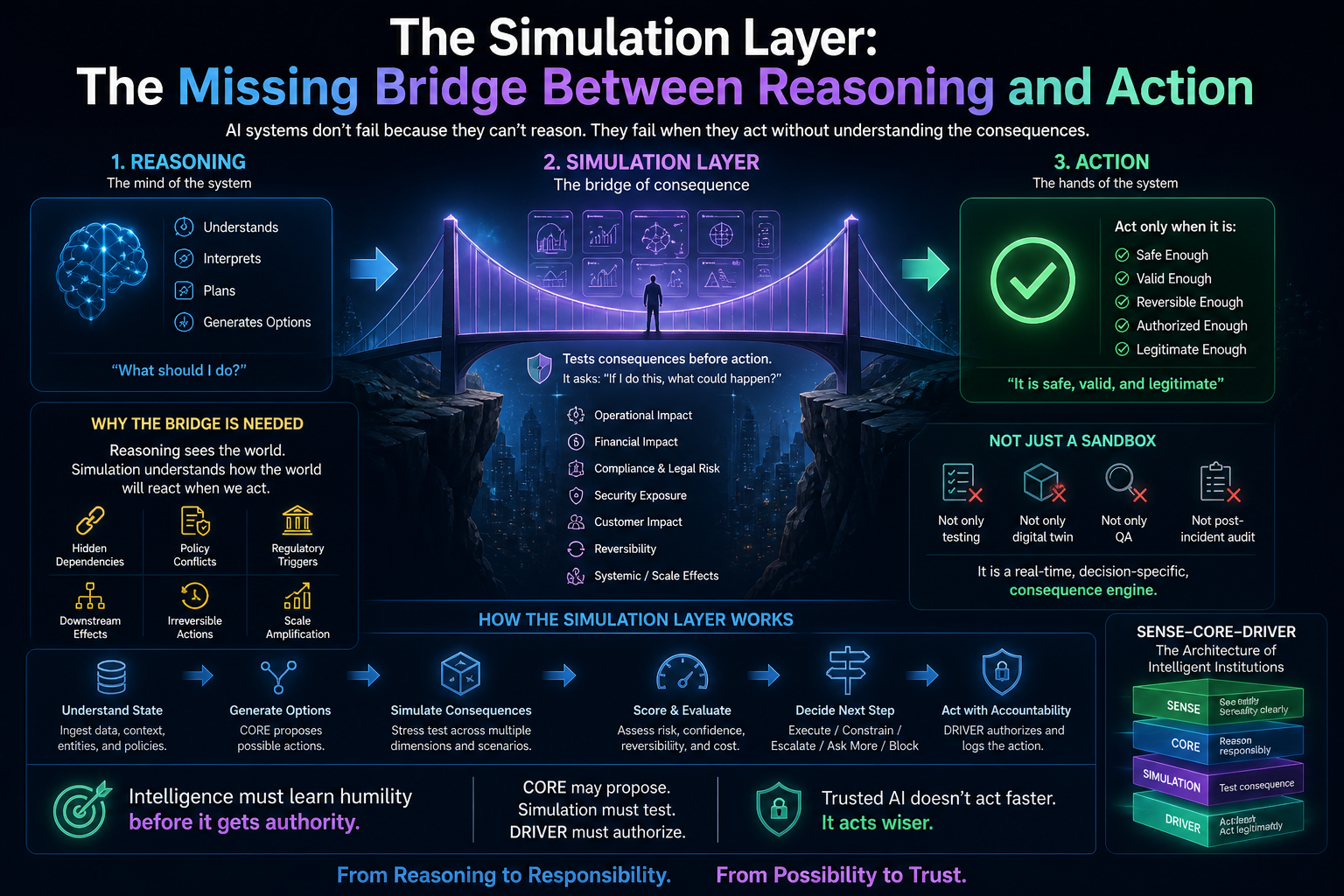

The Simulation Layer: The Missing Bridge Between Reasoning and Action

The Simulation Layer is the architecture that allows an AI system to test possible actions before execution.

It is not merely a test environment.

It is not only a digital twin.

It is not just a QA sandbox.

It is not a post-incident audit tool.

It is a pre-action consequence engine.

Its job is to answer one strategic question:

If this AI decision is executed, what are the likely operational, financial, regulatory, security, customer, and reputational consequences?

This is where enterprise AI becomes mature.

In traditional software, testing happens before deployment.

In autonomous AI, testing must also happen before action.

That is a major architectural shift.

A deployed AI agent may encounter thousands of unique situations that were never anticipated during development. It cannot rely only on pre-release testing. It needs runtime simulation. It needs to evaluate specific actions inside specific contexts before those actions are allowed to cross the action boundary.

This is already becoming visible in the industry. OpenAgentSafety describes a simulation framework for evaluating AI agents in realistic high-risk scenarios with real tools such as browsers, file systems, command-line environments, and messaging systems. (arXiv) Salesforce’s CRMArena-Pro similarly focuses on testing agents in context-rich simulated enterprise environments using synthetic data and safe API-call evaluation. (Salesforce) Google Cloud’s agent observability work also emphasizes monitoring prompts, responses, token usage, tool calls, traces, and reliability because agents are non-deterministic and complex. (Google Cloud Documentation)

The pattern is clear:

AI agents cannot be trusted simply because they reason.

They become trustworthy when their reasoning is tested against consequence.

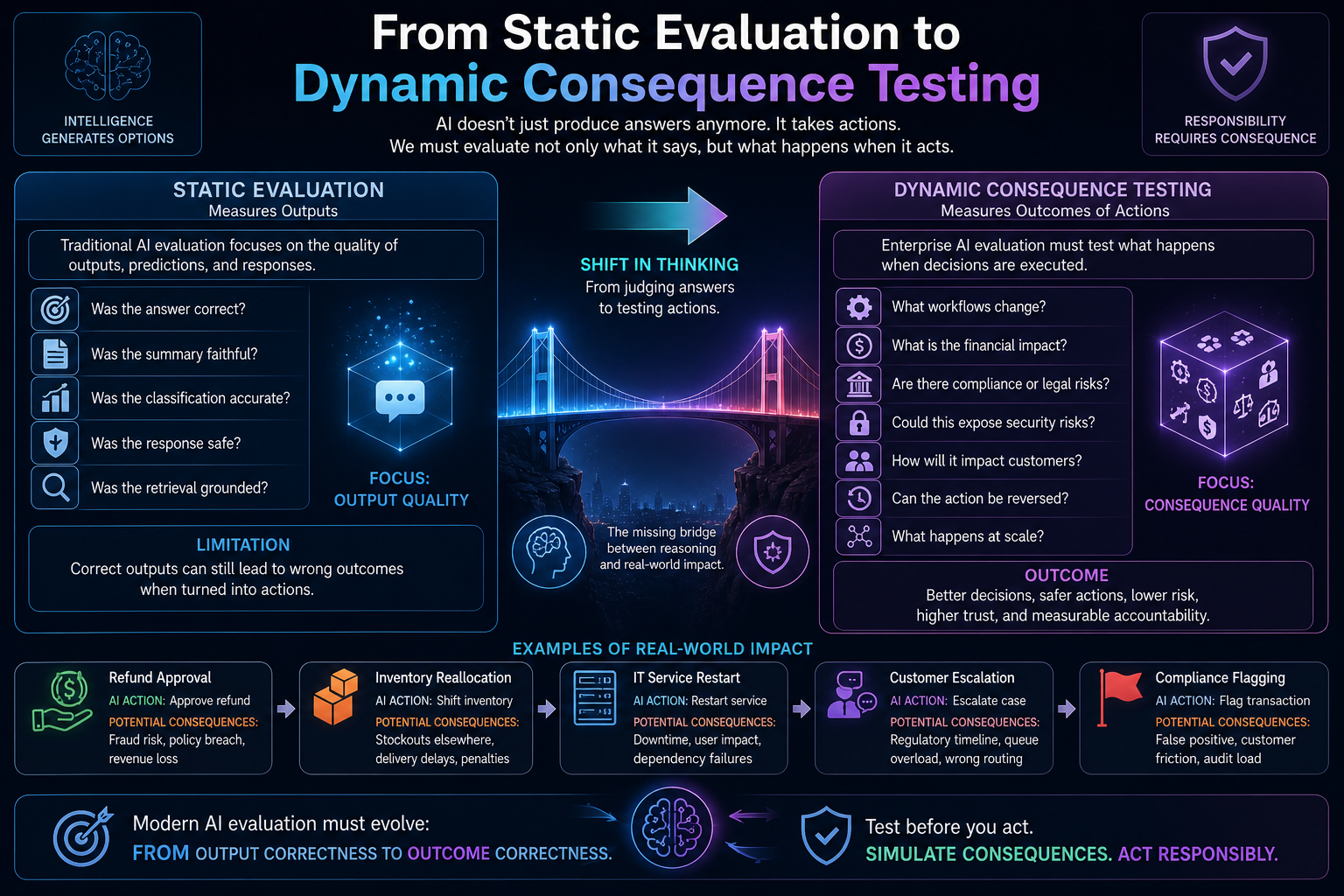

From Static Evaluation to Dynamic Consequence Testing

Most AI evaluation still measures outputs.

Was the answer correct?

Was the summary faithful?

Was the classification accurate?

Was the response safe?

Was the retrieval grounded?

These are necessary measures, but they are not enough for acting AI systems.

Enterprise AI agents do not merely produce text. They invoke tools, call APIs, update records, trigger workflows, interact with other agents, and change real-world states.

That means evaluation must evolve from output testing to action testing.

Traditional AI evaluation asks:

Did the model generate the right response?

The Simulation Layer asks:

What happens when this response becomes an action?

That is a deeper question.

If an AI agent recommends changing inventory allocation, correctness is only one part of evaluation. The Simulation Layer must also test whether the action creates shortages elsewhere, violates service-level agreements, increases transportation costs, treats some locations or customers unfairly, or conflicts with existing commitments.

If a customer-service agent proposes a refund, simulation must test refund eligibility, fraud patterns, customer history, approval limits, policy consistency, financial impact, and escalation requirements.

If an IT operations agent recommends restarting a service, simulation must check dependent systems, active users, maintenance windows, rollback options, incident severity, and business impact.

If a compliance agent recommends flagging a transaction, simulation must test false-positive risk, audit requirements, regulatory deadlines, customer impact, and whether the decision can be explained later.

This is the real enterprise challenge:

AI does not fail only because it gives wrong answers.

It fails because locally correct actions can create systemically wrong consequences.

How the Simulation Layer Fits SENSE–CORE–DRIVER

The Simulation Layer becomes powerful when placed inside the SENSE–CORE–DRIVER architecture.

SENSE: What Is the Current State of Reality?

The Simulation Layer depends on the quality of SENSE.

If the system does not know the current state of a customer, asset, supplier, contract, machine, workflow, employee, transaction, or policy, it cannot simulate consequences reliably.

SENSE provides:

- signals

- entities

- state representations

- context graphs

- identity graphs

- dependency maps

- provenance

- freshness indicators

- confidence levels

Without SENSE, simulation becomes imagination.

A bad representation of reality produces a bad simulation of consequence.

This is why the Representation Economy begins before the model. AI cannot act intelligently on reality it cannot represent.

CORE: What Options Does the System Reason About?

CORE generates candidate interpretations, plans, decisions, or actions.

But enterprise-grade CORE should not produce only one answer. It should produce a structured decision space.

A mature CORE layer should generate:

- possible actions

- assumptions behind each action

- confidence levels

- missing information

- causal hypotheses

- alternatives

- uncertainty markers

- escalation recommendations

A mature reasoning system should not behave like a confident oracle.

It should behave like a decision architect.

It should be able to say:

Here are the possible paths.

Here is what I know.

Here is what I do not know.

Here is what must be simulated before action.
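To make the idea of a structured decision space concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the framework's own specification: `CandidateAction`, `DecisionSpace`, `simulation_brief` and the 0.7 low-confidence cut-off are hypothetical names and values, and the candidates reuse the banking escalation example from earlier.

```python
from dataclasses import dataclass, field


@dataclass
class CandidateAction:
    """One option proposed by CORE, with the context simulation will need."""
    name: str
    assumptions: list[str]
    confidence: float  # CORE's own estimate, 0.0 to 1.0 (hypothetical scale)
    missing_information: list[str] = field(default_factory=list)


@dataclass
class DecisionSpace:
    """Several candidate paths instead of a single 'oracle' answer."""
    candidates: list[CandidateAction]

    def simulation_brief(self, low_confidence_below: float = 0.7) -> dict:
        """Summarize what CORE knows, what it does not know, and where it is unsure."""
        return {
            "paths": [c.name for c in self.candidates],
            "known": {c.name: c.assumptions for c in self.candidates},
            "unknown": {c.name: c.missing_information
                        for c in self.candidates if c.missing_information},
            "low_confidence": [c.name for c in self.candidates
                               if c.confidence < low_confidence_below],
        }


# Hypothetical candidates for the banking escalation example.
space = DecisionSpace([
    CandidateAction("escalate_complaint", ["policy allows escalation"], 0.85,
                    missing_information=["is a human already handling this case?"]),
    CandidateAction("route_to_human_review", ["a reviewer is available today"], 0.6),
])
brief = space.simulation_brief()
```

Every path in `paths` still goes to simulation; the `unknown` and `low_confidence` entries only tell the Simulation Layer where to look hardest.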

Simulation: What Could Happen If This Action Is Taken?

The Simulation Layer tests each candidate action against modeled reality.

It checks:

- operational effects

- policy conflicts

- downstream dependencies

- failure modes

- reversibility

- cost

- risk

- compliance impact

- customer impact

- human override needs

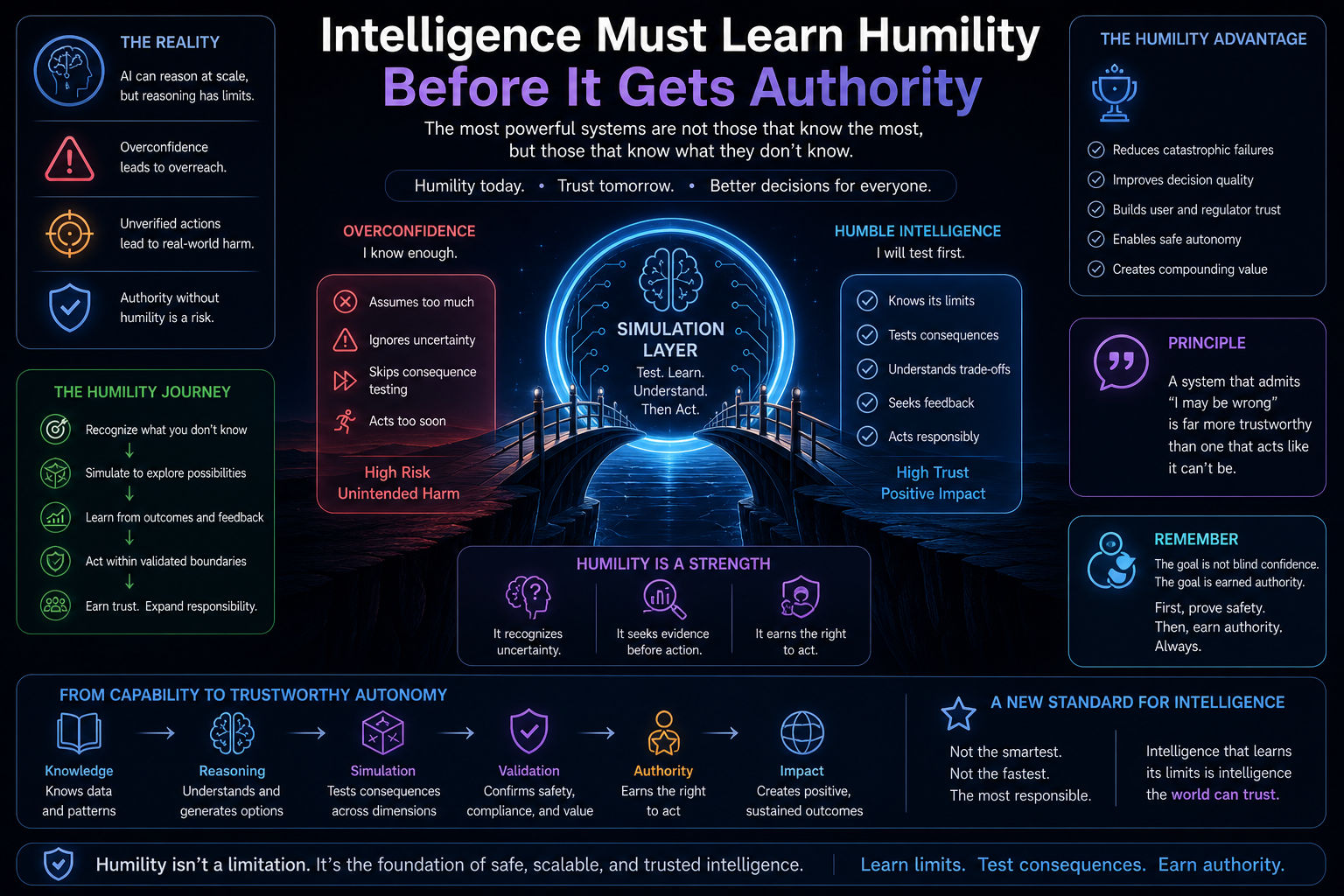

This is where reasoning learns humility.

The AI system discovers that some decisions look correct in isolation but become unsafe in context.

DRIVER: Is the System Authorized to Act?

DRIVER decides whether action is legitimate.

It evaluates:

- delegation

- authority

- identity

- verification

- execution rights

- recourse pathways

- auditability

- accountability

The Simulation Layer informs DRIVER.

If simulation shows high uncertainty, irreversible impact, policy conflict, weak evidence, or unacceptable systemic risk, DRIVER should block, defer, constrain, or escalate execution.

This creates a simple but powerful architectural rule:

CORE may propose.

Simulation must test.

DRIVER must authorize.
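One way to picture this rule is as three steps called in a fixed order. The sketch below assumes simulation can be summarized as an uncertainty score, a reversibility flag and a list of policy conflicts, and that DRIVER's authority check reduces to a yes/no lookup; the `Decision` values, the `SimulationReport` fields and the 0.2 and 0.5 cut-offs are hypothetical. A real DRIVER layer would also verify identity, delegation and recourse.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable


class Decision(Enum):
    EXECUTE = "execute"
    CONSTRAIN = "constrain"
    ESCALATE = "escalate"
    BLOCK = "block"


@dataclass
class SimulationReport:
    uncertainty: float  # 0.0 = well understood, 1.0 = unknown consequences
    irreversible: bool
    policy_conflicts: list[str] = field(default_factory=list)


def govern(action: str,
           simulate: Callable[[str], SimulationReport],
           is_authorized: Callable[[str], bool]) -> Decision:
    """CORE has proposed `action`; simulation tests it; DRIVER decides."""
    report = simulate(action)  # Simulation must test: no proposal skips this step
    if not is_authorized(action) or report.policy_conflicts:
        return Decision.BLOCK  # no delegated authority, or a hard policy conflict
    if report.irreversible or report.uncertainty > 0.5:
        return Decision.ESCALATE  # a human decides on one-way or poorly understood actions
    if report.uncertainty > 0.2:
        return Decision.CONSTRAIN  # proceed, but within tighter limits
    return Decision.EXECUTE


# Hypothetical simulation results and authority set.
reports = {
    "send_status_update": SimulationReport(0.1, irreversible=False),
    "close_account": SimulationReport(0.3, irreversible=True),
    "waive_fee": SimulationReport(0.1, irreversible=False,
                                  policy_conflicts=["exceeds approval limit"]),
}
authorized = {"send_status_update", "close_account", "waive_fee"}
outcomes = {name: govern(name, reports.__getitem__, authorized.__contains__)
            for name in reports}
```

The ordering is the point of the design: the consequence test and the authority check both run on every proposal, so a confident CORE cannot skip either.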

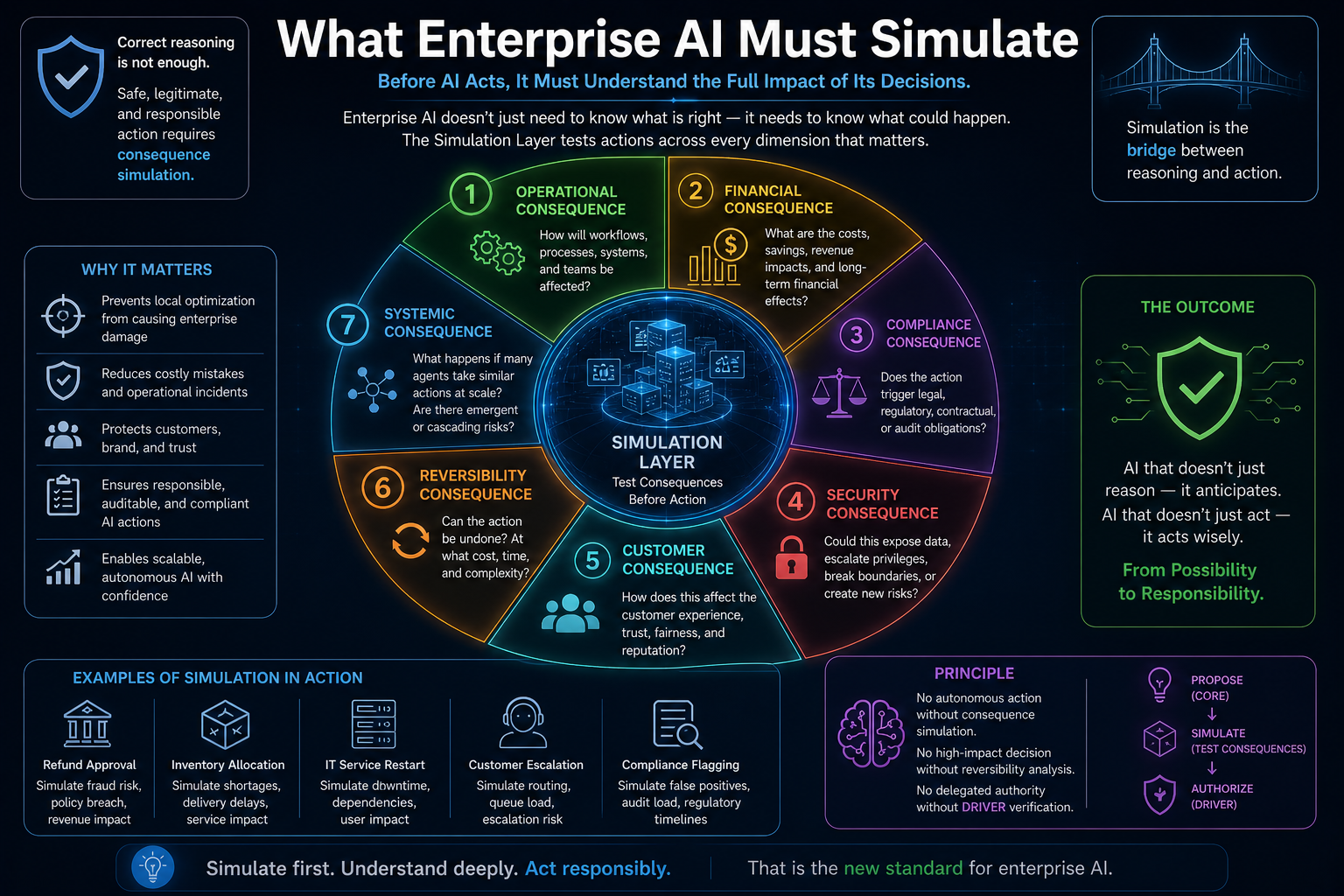

What Enterprise AI Must Simulate

The Simulation Layer must test more than technical correctness. It must test consequence across multiple dimensions.

Operational Consequence

What workflow changes after the AI acts?

If an AI agent approves a service request, does it create backlog pressure elsewhere? Does it bypass a required manual checkpoint? Does it duplicate work? Does it conflict with another running process?

Operational simulation maps how one action propagates through enterprise workflows.

Financial Consequence

What is the economic impact of the action?

A decision that saves time may increase downstream cost. A discount may improve customer satisfaction but reduce margin. A remediation step may resolve one incident but consume scarce infrastructure resources.

AI must understand cost not as a single number but as a system effect.

Compliance Consequence

Does the action trigger legal, regulatory, contractual, or audit obligations?

This is especially important in banking, healthcare, insurance, telecom, government, and public infrastructure. Autonomous agents introduce heightened risks around privacy, legal responsibility, misalignment, and compliance when they act on behalf of organizations. (arXiv)

Security Consequence

Could the action expose data, escalate privileges, invoke unsafe tools, or create an attack path?

An AI agent may not intend harm, but it can still become a bridge between systems that were never meant to interact. Simulation must test tool-access boundaries, prompt-injection exposure, credential misuse, data leakage, and unauthorized cross-system behavior.

Customer Consequence

How does the action affect the customer?

An action may be policy-compliant but still harmful, confusing, unfair, or reputationally damaging. The Simulation Layer must test how a decision may be perceived, contested, appealed, or escalated.

Reversibility Consequence

Can the action be undone?

Some actions are easily reversible. Others are not.

Sending a draft message can be corrected. Deleting records, executing payments, blocking accounts, filing regulatory reports, changing contractual status, or triggering enforcement actions may be much harder to reverse.

A Simulation Layer should classify actions by reversibility before execution.
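A reversibility classification can be as simple as an ordered enumeration plus a catalogue of known actions. In this sketch the three levels, the catalogue entries and the rule that unclassified actions default to irreversible are all assumptions; in particular, treating payments and account blocks as compensatable (undone only by a counter-action) is an illustrative choice, not a claim about any specific system.

```python
from enum import IntEnum


class Reversibility(IntEnum):
    """Ordered so that a higher value means harder to undo."""
    REVERSIBLE = 0     # can be corrected directly, e.g. a draft message
    COMPENSATABLE = 1  # undone only by a new counter-action, e.g. refunding a payment
    IRREVERSIBLE = 2   # cannot be undone, e.g. a filed regulatory report


# Hypothetical catalogue; each enterprise would maintain its own.
ACTION_REVERSIBILITY = {
    "send_draft_message": Reversibility.REVERSIBLE,
    "execute_payment": Reversibility.COMPENSATABLE,
    "block_account": Reversibility.COMPENSATABLE,
    "delete_records": Reversibility.IRREVERSIBLE,
    "file_regulatory_report": Reversibility.IRREVERSIBLE,
}


def classify(action: str) -> Reversibility:
    # Unclassified actions are treated as irreversible until someone reviews them.
    return ACTION_REVERSIBILITY.get(action, Reversibility.IRREVERSIBLE)


def plan_reversibility(plan: list[str]) -> Reversibility:
    # A multi-step plan is only as reversible as its least reversible step.
    return max((classify(step) for step in plan), default=Reversibility.REVERSIBLE)
```

Taking the maximum over a plan's steps reflects the conservative assumption that one irreversible step makes the whole plan irreversible.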

Systemic Consequence

What happens if many agents take similar actions at scale?

This is one of the least understood risks in enterprise AI.

One AI decision may be safe. Ten thousand similar AI decisions may create systemic instability.

For example:

- many pricing agents adjusting simultaneously

- many risk agents tightening approvals

- many supply-chain agents prioritizing the same supplier

- many customer agents offering similar concessions

- many cybersecurity agents blocking access at once

Agentic AI creates not only individual action risk, but population-level behavior risk.

That is why simulation must test multi-agent dynamics, not only single-agent decisions.
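The gap between single-agent and population-level testing can be shown with a deliberately simple, deterministic sketch. Every name and number below is hypothetical: 1,000 agents each order one unit from the cheapest supplier. Each order passes a single-agent check, yet the combined demand exceeds that supplier's capacity.

```python
from collections import Counter


def single_agent_check(choice: str, capacity: dict[str, int]) -> bool:
    """Tested in isolation, one agent ordering one unit always fits."""
    return capacity[choice] >= 1


def population_check(choices: list[str], capacity: dict[str, int]) -> list[str]:
    """Tested together, the same choices can exceed a supplier's capacity."""
    demand = Counter(choices)
    return sorted(s for s, units in demand.items() if units > capacity[s])


# Hypothetical prices and capacities.
prices = {"supplier_a": 9.5, "supplier_b": 10.0}
capacity = {"supplier_a": 500, "supplier_b": 800}

# 1,000 agents each apply the same locally sensible rule: order from the cheapest supplier.
choices = [min(prices, key=prices.get) for _ in range(1000)]

every_agent_passes = all(single_agent_check(c, capacity) for c in choices)
overloaded = population_check(choices, capacity)
```

A fuller simulation would model agents reacting to one another over time; this only shows why the check has to run over the population rather than one decision at a time.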

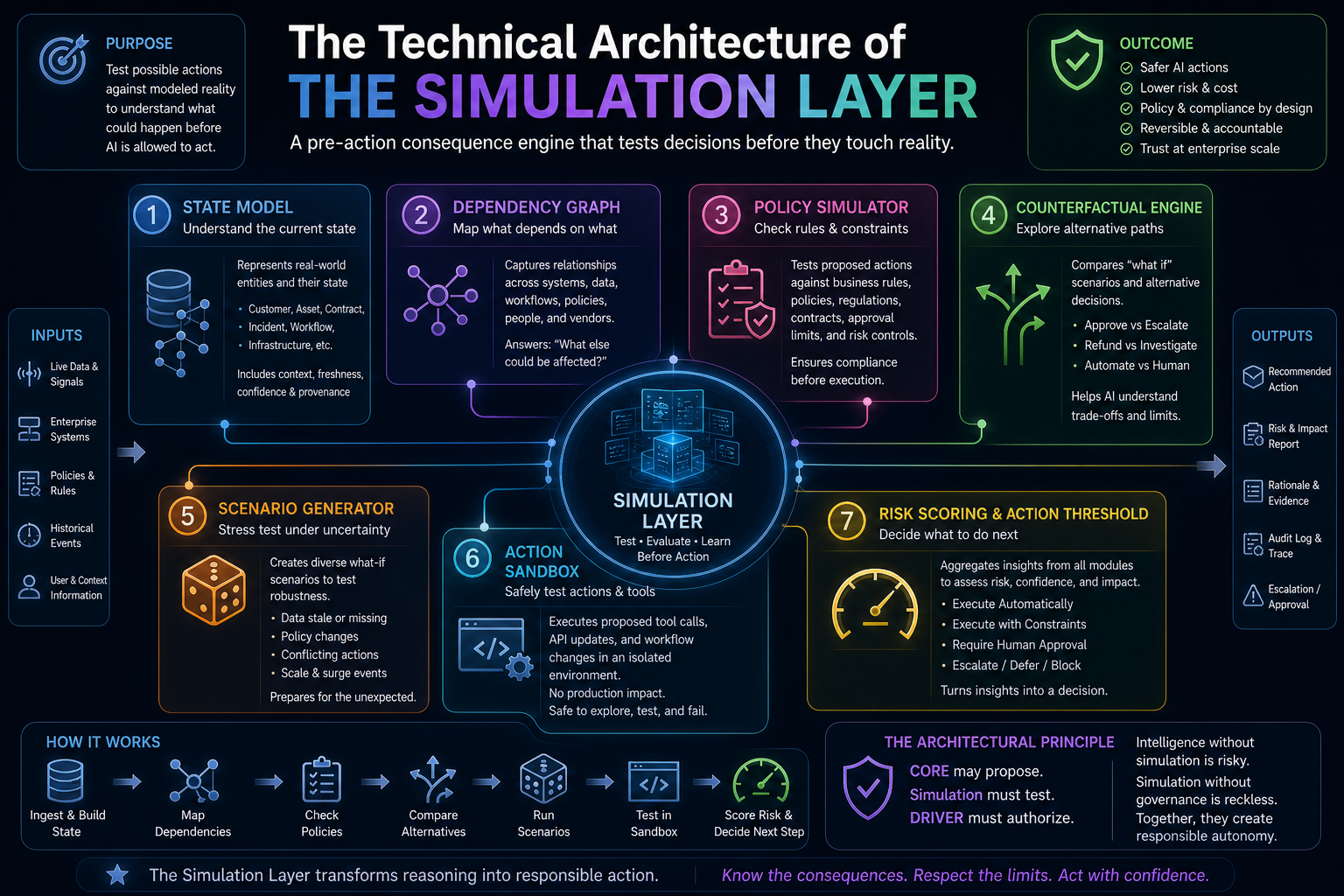

The Technical Architecture of the Simulation Layer

A mature Simulation Layer requires several architectural components.

State Model

The state model represents the current condition of the enterprise object being acted upon.

It may represent:

- customer state

- transaction state

- asset state

- contract state

- incident state

- process state

- supply-chain state

- employee state

- infrastructure state

This connects directly to SENSE.

The better the state model, the better the simulation.
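A state model can start as a record that carries SENSE's provenance, freshness and confidence alongside the attributes themselves. The sketch assumes a hypothetical `StateSnapshot` record and a simple fitness test run before simulation; the identifiers, source-system names and thresholds are invented for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class StateSnapshot:
    """The current condition of one enterprise object, as reported by SENSE."""
    entity_id: str
    entity_type: str       # e.g. "customer", "contract", "incident"
    attributes: dict
    source: str            # provenance: which system supplied this state
    observed_at: datetime  # freshness: when this state was last confirmed
    confidence: float      # confidence in the representation, 0.0 to 1.0

    def fit_for_simulation(self, now: datetime, max_age: timedelta,
                           min_confidence: float = 0.8) -> bool:
        # Stale or low-confidence state would make any simulation built on it unreliable.
        return now - self.observed_at <= max_age and self.confidence >= min_confidence


# Hypothetical snapshots.
now = datetime(2024, 1, 10, 12, 0, tzinfo=timezone.utc)
incident = StateSnapshot("INC-1042", "incident", {"severity": "high"}, "itsm",
                         now - timedelta(minutes=5), 0.95)
contract = StateSnapshot("CTR-77", "contract", {"status": "active"}, "nightly_export",
                         now - timedelta(days=3), 0.9)
```

The acceptable age depends on the decision: three-day-old contract state may be fine for a renewal reminder but too stale for a live incident.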

Dependency Graph

Enterprises are not linear systems. Everything depends on something else.

A dependency graph maps relationships among:

- systems

- workflows

- policies

- approvals

- APIs

- data objects

- vendors

- obligations

- service levels

Before an AI agent acts, the Simulation Layer should ask:

What else depends on this?

This prevents local optimization from becoming enterprise damage.
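The question "What else depends on this?" is a reachability query over the dependency graph. This sketch stores hypothetical "X depends on Y" edges, inverts them, and walks breadth-first to collect everything that directly or transitively relies on a component, which is the set an IT operations agent would need to check before restarting it. The component names are invented.

```python
from collections import deque

# Hypothetical edges: DEPENDS_ON[x] lists the components x depends on.
DEPENDS_ON: dict[str, list[str]] = {
    "checkout_api": ["payments_service", "inventory_service"],
    "payments_service": ["auth_service"],
    "refund_workflow": ["payments_service"],
    "month_end_close": ["refund_workflow"],
    "inventory_service": [],
    "auth_service": [],
}


def dependents_of(target: str, depends_on: dict[str, list[str]]) -> set[str]:
    """Return everything that directly or transitively depends on `target`."""
    # Invert the edges so we can walk from a component to whatever relies on it.
    relied_on_by: dict[str, list[str]] = {}
    for node, deps in depends_on.items():
        for dep in deps:
            relied_on_by.setdefault(dep, []).append(node)

    affected: set[str] = set()
    queue = deque([target])
    while queue:
        for parent in relied_on_by.get(queue.popleft(), []):
            if parent not in affected:  # the visited check also makes cycles safe
                affected.add(parent)
                queue.append(parent)
    return affected
```

For example, `dependents_of("payments_service", DEPENDS_ON)` returns `{"checkout_api", "refund_workflow", "month_end_close"}`: a restart that looks local also touches month-end close.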

Policy Simulator

Policies are often written for humans, but AI agents need executable policy constraints.

A policy simulator tests whether a proposed action violates:

- business rules

- legal obligations

- contractual terms

- approval limits

- segregation of duties

- risk controls

- operational playbooks

- governance rules

The policy simulator becomes the governance membrane between reasoning and execution.
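Executable policy constraints can be modeled as small predicate functions that either pass or return a violation message. The sketch below is hypothetical throughout: the `ProposedAction` fields, the 500.00 approval limit and the two rules (an approval limit and segregation of duties) stand in for whatever an enterprise's policy engine actually encodes.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ProposedAction:
    kind: str
    amount: float
    requested_by: str
    approved_by: Optional[str] = None


# A rule returns a violation message, or None when the action complies.
Rule = Callable[[ProposedAction], Optional[str]]


def approval_limit(limit: float) -> Rule:
    """Actions above `limit` need a recorded approver."""
    def rule(action: ProposedAction) -> Optional[str]:
        if action.amount > limit and action.approved_by is None:
            return f"amount {action.amount:.2f} exceeds autonomous limit {limit:.2f}"
        return None
    return rule


def segregation_of_duties(action: ProposedAction) -> Optional[str]:
    if action.approved_by is not None and action.approved_by == action.requested_by:
        return "requester cannot approve their own action"
    return None


def check_policies(action: ProposedAction, rules: list[Rule]) -> list[str]:
    """Run every rule and report all violations, not only the first one found."""
    return [msg for rule in rules if (msg := rule(action)) is not None]


rules = [approval_limit(500.0), segregation_of_duties]
refund = ProposedAction("refund", 1200.0, requested_by="agent-17")
self_approved = ProposedAction("refund", 1200.0, requested_by="agent-17",
                               approved_by="agent-17")
```

Collecting every violation, rather than stopping at the first, gives DRIVER and any human reviewer the full picture of why an action was held back.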

Counterfactual Engine

A counterfactual engine asks:

What would happen if we did something else?

It compares multiple action paths:

- approve vs escalate

- refund vs investigate

- restart vs isolate

- notify vs suppress

- block vs monitor

- automate vs human review

This is how AI systems learn the limits of their first answer.

A system that cannot compare alternatives cannot understand trade-offs.
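A counterfactual comparison can be sketched as scoring each alternative path over its possible outcomes. The probabilities and costs below are invented for the refund vs investigate case, and expected cost plus worst case is only one simple way to compare paths; a production engine would derive outcomes from the state model and dependency graph rather than hard-coding them.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    probability: float
    cost: float  # in one common unit; the figures below are hypothetical


def expected_cost(outcomes: list[Outcome]) -> float:
    return sum(o.probability * o.cost for o in outcomes)


def worst_case(outcomes: list[Outcome]) -> float:
    return max(o.cost for o in outcomes)


def compare_paths(paths: dict[str, list[Outcome]]) -> list[tuple[str, float, float]]:
    """Rank alternative actions by expected cost and show each one's worst case."""
    rows = [(name, expected_cost(o), worst_case(o)) for name, o in paths.items()]
    return sorted(rows, key=lambda row: row[1])


# Refund vs investigate, with invented probabilities and costs.
paths = {
    "refund": [Outcome(0.95, 100.0),       # legitimate claim: refund paid
               Outcome(0.05, 400.0)],      # fraudulent claim: refund lost, fraud encouraged
    "investigate": [Outcome(0.95, 160.0),  # legitimate claim: delay, handling cost, churn risk
                    Outcome(0.05, 30.0)],  # fraudulent claim: only the investigation cost
}
ranking = compare_paths(paths)
```

Here refund has the lower expected cost but a much worse worst case. Reporting both numbers, rather than collapsing them into one score, leaves room for DRIVER to apply the institution's risk appetite to the trade-off.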

Scenario Generator

The Simulation Layer must create stress scenarios.

What if the data is stale?

What if the customer disputes the action?

What if a supplier fails?

What if a second system acts at the same time?

What if the policy changes tomorrow?

What if this action is repeated at scale?

Scenario generation helps AI test decisions under uncertainty rather than only under ideal conditions.
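A scenario generator can be sketched as a set of perturbations applied to a baseline context, one per stress question above. The `Context` fields, the perturbation sizes and the `auto_approve_is_safe` predicate are hypothetical stand-ins for a real decision and its real safety checks.

```python
from dataclasses import dataclass, replace
from typing import Callable


@dataclass(frozen=True)
class Context:
    data_age_hours: float
    customer_disputes: bool
    supplier_available: bool
    concurrent_actors: int
    policy_version: int
    repetitions: int


# Each scenario perturbs one assumption of the baseline, mirroring the questions above.
SCENARIOS: dict[str, Callable[[Context], Context]] = {
    "stale_data": lambda c: replace(c, data_age_hours=c.data_age_hours + 48),
    "customer_disputes": lambda c: replace(c, customer_disputes=True),
    "supplier_fails": lambda c: replace(c, supplier_available=False),
    "second_system_acts": lambda c: replace(c, concurrent_actors=c.concurrent_actors + 1),
    "policy_changes": lambda c: replace(c, policy_version=c.policy_version + 1),
    "repeated_at_scale": lambda c: replace(c, repetitions=c.repetitions * 10_000),
}


def stress_test(baseline: Context, is_safe: Callable[[Context], bool]) -> dict[str, bool]:
    """Evaluate the same decision under the baseline and under every stress scenario."""
    results = {"baseline": is_safe(baseline)}
    for name, perturb in SCENARIOS.items():
        results[name] = is_safe(perturb(baseline))
    return results


def auto_approve_is_safe(c: Context) -> bool:
    # Hypothetical safety predicate for some automated approval.
    return (c.data_age_hours <= 24 and not c.customer_disputes
            and c.supplier_available and c.concurrent_actors == 1
            and c.repetitions <= 100)


baseline = Context(data_age_hours=2, customer_disputes=False, supplier_available=True,
                   concurrent_actors=1, policy_version=3, repetitions=1)
results = stress_test(baseline, auto_approve_is_safe)
failing = sorted(name for name, safe in results.items() if not safe)
```

The predicate ignores `policy_version`, so the policy-change scenario passes trivially. Surfacing blind spots like that is part of what a scenario run is for.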

Action Sandbox

An action sandbox is a safe environment where proposed tool calls, API updates, workflow changes, or agent plans can be executed without touching production systems.

This is critical because AI agents increasingly reason, plan, and execute multi-step tasks using tools, applications, and enterprise systems. NVIDIA describes autonomous agents as systems that reason, plan, and execute multi-step tasks while requiring security, privacy, and policy controls for safer development and deployment. (NVIDIA)
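The core of an action sandbox is executing a plan against something other than production and inspecting the difference. This minimal sketch uses an in-memory deep copy of a hypothetical state dictionary; real sandboxes isolate side effects at the environment level (mocked APIs, staging systems, dry-run modes), which this example does not attempt.

```python
import copy
from typing import Callable

State = dict[str, dict]
ToolCall = Callable[[State], None]  # a tool call mutates the state it is given


def dry_run(production: State, plan: list[ToolCall]) -> tuple[State, dict]:
    """Apply a plan to a deep copy of the state and report what would change."""
    sandbox = copy.deepcopy(production)  # production itself is never mutated
    for step in plan:
        step(sandbox)
    changes = {
        key: (production.get(key), sandbox.get(key))
        for key in production.keys() | sandbox.keys()
        if production.get(key) != sandbox.get(key)
    }
    return sandbox, changes


# Hypothetical tool calls an agent has planned.
def close_ticket(state: State) -> None:
    state["ticket-9"]["status"] = "closed"


def issue_credit(state: State) -> None:
    state["account-3"]["balance"] += 25


production = {"ticket-9": {"status": "open"}, "account-3": {"balance": 100}}
sandbox, changes = dry_run(production, [close_ticket, issue_credit])
```

The `changes` diff is what the Simulation Layer would hand to the policy and risk checks; production is untouched whether the plan is later approved or not.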

Risk Scoring and Action Threshold

After simulation, the system must produce an action decision.

Possible outcomes include:

- execute automatically

- execute with constraints

- require human approval

- request more information

- run deeper simulation

- escalate

- block

- defer

This becomes the action threshold.

The action threshold is where CORE hands over to DRIVER.
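The risk score and action threshold can be sketched as a weighted score across consequence dimensions, followed by an ordered set of checks that lands on one of the outcomes listed above. The weights, cut-offs and ordering are all assumptions chosen for illustration; treating an unscored dimension as maximum risk is a deliberately conservative default.

```python
from enum import Enum


class ActionDecision(Enum):
    EXECUTE = "execute automatically"
    EXECUTE_CONSTRAINED = "execute with constraints"
    HUMAN_APPROVAL = "require human approval"
    REQUEST_INFO = "request more information"
    DEEPER_SIMULATION = "run deeper simulation"
    ESCALATE = "escalate"
    BLOCK = "block"
    DEFER = "defer"


# Hypothetical weights per consequence dimension; they sum to 1.0.
WEIGHTS = {"operational": 0.2, "financial": 0.2, "compliance": 0.25,
           "security": 0.2, "customer": 0.15}


def risk_score(scores: dict[str, float]) -> float:
    # A dimension the simulation did not score counts as maximum risk (1.0).
    return sum(weight * scores.get(dim, 1.0) for dim, weight in WEIGHTS.items())


def action_threshold(risk: float, *, evidence_complete: bool, simulation_converged: bool,
                     irreversible: bool, window_open: bool) -> ActionDecision:
    """Map a simulation result onto one action outcome, most restrictive check first."""
    if risk >= 0.9:
        return ActionDecision.BLOCK
    if not evidence_complete:
        return ActionDecision.REQUEST_INFO
    if not simulation_converged:
        return ActionDecision.DEEPER_SIMULATION
    if not window_open:
        return ActionDecision.DEFER  # e.g. outside an agreed maintenance window
    if risk >= 0.7:
        return ActionDecision.ESCALATE
    if irreversible or risk >= 0.4:
        return ActionDecision.HUMAN_APPROVAL
    if risk >= 0.2:
        return ActionDecision.EXECUTE_CONSTRAINED
    return ActionDecision.EXECUTE


scores = {"operational": 0.1, "financial": 0.3, "compliance": 0.2,
          "security": 0.0, "customer": 0.1}
flags = dict(evidence_complete=True, simulation_converged=True, window_open=True)
routine = action_threshold(risk_score(scores), irreversible=False, **flags)
one_way = action_threshold(risk_score(scores), irreversible=True, **flags)
unscored = action_threshold(risk_score({}), irreversible=False, **flags)
```

Because the checks run from most to least restrictive, a high enough risk score blocks an action regardless of the other flags, and the same low-risk action moves to human approval as soon as it becomes irreversible.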

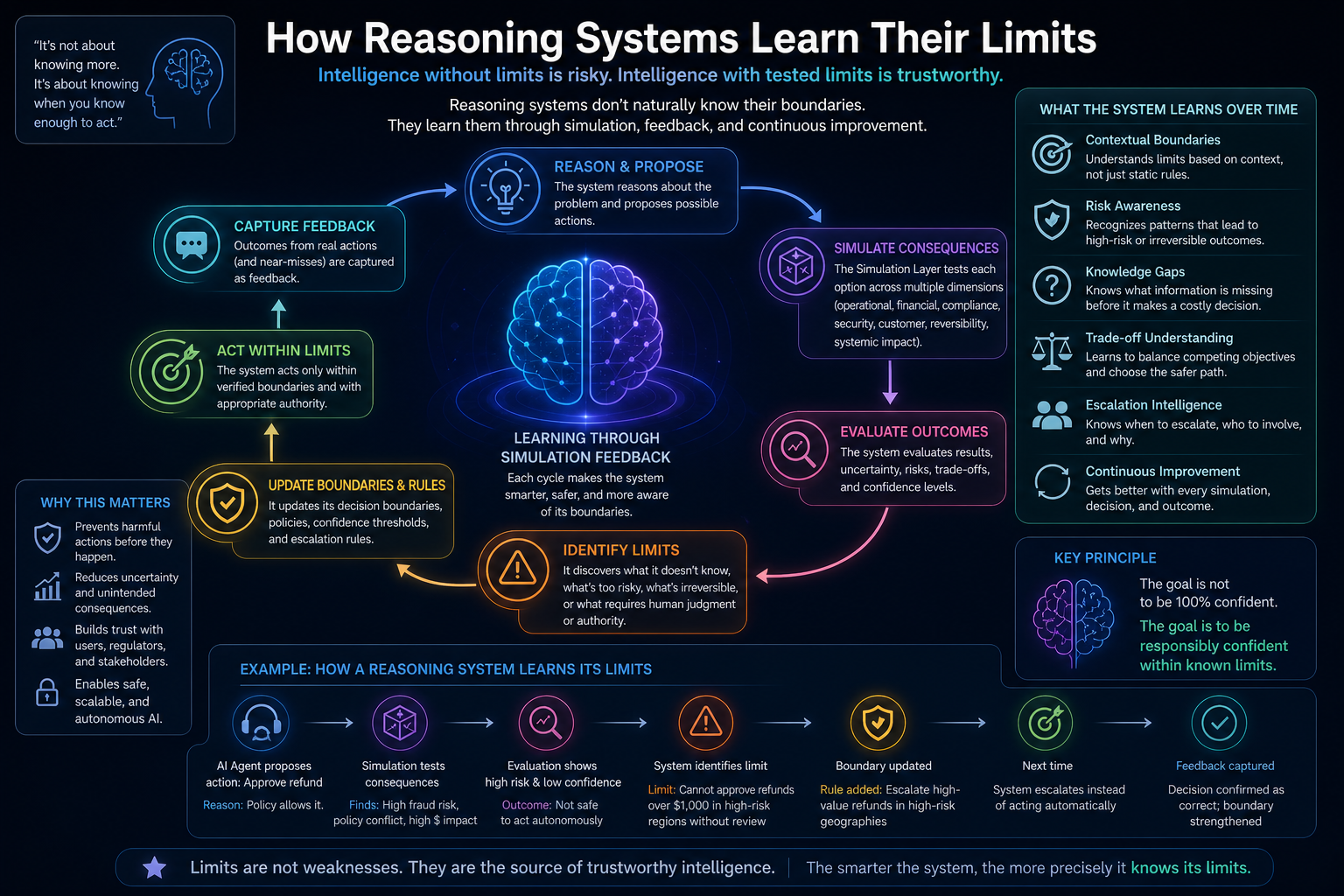

How Reasoning Systems Learn Their Limits

AI systems do not naturally know their limits.

They may express uncertainty, but language-level uncertainty is not enough. A model saying “I am not sure” is not the same as an enterprise system knowing that a decision lacks authority, weakens compliance, creates irreversible impact, or produces unacceptable downstream risk.

A reasoning system learns its limits through repeated simulation feedback.

It learns that:

- some actions are safe only under narrow conditions

- some policies create hidden constraints

- some data is too stale for action

- some workflows are too dependent for autonomous execution

- some decisions require human judgment

- some outcomes are irreversible

- some errors are not worth the efficiency gain

This creates a new discipline:

Simulation-governed reasoning

In simulation-governed reasoning, AI does not move directly from confidence to action. It moves from confidence to consequence testing.

Confidence answers:

Do I think I am right?

Simulation answers:

What if I am wrong?

Enterprise AI needs both.
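In its simplest form, learning limits through repeated simulation feedback could mean counting how often simulation finds harm for each type of action and withdrawing autonomy where that rate is too high. The `BoundaryLearner` class, the 5% failure-rate limit and the 20-trial minimum are hypothetical.

```python
from collections import defaultdict


class BoundaryLearner:
    """Tracks simulated outcomes per action type and narrows autonomy where harm recurs."""

    def __init__(self, max_failure_rate: float = 0.05, min_trials: int = 20):
        self.max_failure_rate = max_failure_rate
        self.min_trials = min_trials
        self.trials: dict[str, int] = defaultdict(int)
        self.harmful: dict[str, int] = defaultdict(int)

    def record(self, action_type: str, simulated_harm: bool) -> None:
        self.trials[action_type] += 1
        self.harmful[action_type] += int(simulated_harm)

    def autonomy(self, action_type: str) -> str:
        # "autonomous" still means every action is simulated first;
        # it only decides whether a human must also sign off.
        n = self.trials.get(action_type, 0)
        if n < self.min_trials:
            return "human_review"  # not enough simulation evidence yet
        if self.harmful[action_type] / n > self.max_failure_rate:
            return "human_review"  # a learned limit: simulation keeps finding harm
        return "autonomous"


learner = BoundaryLearner()
for i in range(100):
    learner.record("restart_service", simulated_harm=(i % 10 == 0))  # 10% harmful
    learner.record("send_status_update", simulated_harm=False)
```

Here `restart_service` stays under human review because one simulated run in ten causes harm, `send_status_update` earns autonomy, and an action type the system has never simulated starts under review by default.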

Why This Matters More in Agentic AI

Agentic AI changes the risk profile of enterprise systems.

Traditional AI helped humans decide. Agentic AI increasingly decides, invokes, modifies, escalates, negotiates, schedules, drafts, purchases, blocks, approves, and remediates.

That means the boundary between advice and action is collapsing.

A company deploying agents without simulation is effectively allowing reasoning systems to learn from real-world damage.

That may be acceptable in low-risk experiments. It is unacceptable in enterprise-scale production.

The enterprise principle should be clear:

No autonomous action without consequence simulation.

No high-impact decision without reversibility analysis.

No delegated authority without DRIVER-level verification.

This is how enterprises move from experimental autonomy to governed autonomy.

A Simple Example: AI in Enterprise Procurement

Imagine an AI procurement agent.

It receives a request to select a vendor for urgent hardware replacement.

SENSE collects:

- vendor records

- contract status

- delivery timelines

- past performance

- pricing

- compliance certifications

- inventory state

- business criticality

CORE reasons:

- Vendor A is cheapest.

- Vendor B is fastest.

- Vendor C has better reliability.

- Vendor D is already contracted.

Without simulation, the AI may choose Vendor B because urgency is high.

But simulation tests consequences:

- Vendor B has unresolved compliance documentation.

- Expedited shipping pushes the cost beyond the approval threshold.

- Another department has an existing order with Vendor C that can be consolidated.

- Vendor A’s low price creates warranty risk.

- Vendor D’s contract allows faster approval.

- The required hardware may become obsolete in six months.

DRIVER then decides:

- autonomous approval is not allowed

- Vendor D is the recommended path

- human approval is required because the cost exceeds the approval threshold

- an audit note must be generated

- the alternative path must be stored

This is how enterprise AI should work.

Not:

AI picked a vendor.

But:

AI represented the situation, reasoned through options, simulated consequences, verified authority, and executed only within legitimate boundaries.

That is intelligent institutional action.
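The procurement walk-through can be encoded end to end. Prices, delivery times and the autonomous spend limit are invented, and only two of the simulated findings (compliance documentation and warranty risk) are modeled; consolidation and obsolescence are left out to keep the sketch short.

```python
from dataclasses import dataclass

AUTONOMOUS_LIMIT = 10_000.0  # hypothetical spend limit for autonomous approval


@dataclass
class Vendor:
    name: str
    price: float  # total cost, including any expedited shipping
    delivery_days: int
    compliance_docs_complete: bool
    warranty_risk: bool
    under_contract: bool


def naive_choice(vendors: list[Vendor]) -> str:
    # Reasoning without simulation: urgency is high, so pick the fastest.
    return min(vendors, key=lambda v: v.delivery_days).name


def simulate(vendor: Vendor) -> list[str]:
    """Consequence findings for choosing this vendor."""
    findings = []
    if not vendor.compliance_docs_complete:
        findings.append("unresolved compliance documentation")
    if vendor.warranty_risk:
        findings.append("warranty risk")
    return findings


def driver_decision(vendors: list[Vendor]) -> dict:
    viable = [v for v in vendors if not simulate(v)]
    # Prefer an existing contract (faster approval), then the lower price.
    chosen = min(viable, key=lambda v: (not v.under_contract, v.price))
    return {
        "recommended": chosen.name,
        "autonomous_approval": chosen.price <= AUTONOMOUS_LIMIT,
        "audit_note": {v.name: simulate(v) for v in vendors},
        "alternatives": [v.name for v in viable if v is not chosen],
    }


vendors = [
    Vendor("Vendor A", 8_000, 10, True, True, False),    # cheapest
    Vendor("Vendor B", 12_000, 2, False, False, False),  # fastest
    Vendor("Vendor C", 10_500, 7, True, False, False),   # better reliability
    Vendor("Vendor D", 10_800, 5, True, False, True),    # already contracted
]
decision = driver_decision(vendors)
```

Without simulation, `naive_choice` picks Vendor B; with it, DRIVER recommends Vendor D, withholds autonomous approval because the price exceeds the limit, and records both the findings and the alternative path.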

The Simulation Layer and the Representation Economy

The Simulation Layer is not just an AI architecture idea.

It is a Representation Economy idea.

In the Representation Economy, value shifts toward institutions that can represent reality better than others. But representation alone is not enough.

The institution must also understand how reality may change when it acts.

That is the next frontier.

Representation answers:

What is true now?

Simulation answers:

What may become true if we act?

This is why simulation becomes a strategic capability.

The companies that win will not simply have better models. They will have better consequence models.

They will know:

- what the AI saw

- what it assumed

- what it reasoned

- what it simulated

- what it authorized

- what it executed

- what could be reversed

- what had to be escalated

- what was learned
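An action ledger entry could capture each of these items as a field. The `LedgerEntry` structure and the one-JSON-object-per-line format below are a hypothetical sketch with illustrative values loosely based on the procurement example; the point is only that every question in the list has a place to be answered and audited later.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class LedgerEntry:
    """One append-only record per AI action; field names mirror the list above."""
    saw: dict                     # SENSE inputs and their provenance
    assumed: list[str]
    reasoned: str
    simulated: dict               # simulation findings per candidate action
    authorized_by: Optional[str]  # DRIVER authority, or None if not authorized
    executed: Optional[str]       # what actually ran, or None
    reversible: bool
    escalated: bool
    learned: str = ""
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())


def to_json_line(entry: LedgerEntry) -> str:
    # One JSON object per line keeps the ledger append-only and easy to replay.
    return json.dumps(asdict(entry), sort_keys=True)


entry = LedgerEntry(
    saw={"vendor_records": "procurement_db", "contract_status": "contract_system"},
    assumed=["delivery estimates are current"],
    reasoned="Vendor D: contracted, compliant, fast enough",
    simulated={"Vendor B": ["unresolved compliance documentation"]},
    authorized_by=None,
    executed=None,
    reversible=True,
    escalated=True,
    learned="cost above autonomous limit; routed to human approval",
)
line = to_json_line(entry)
```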

That is the architecture of an intelligent institution.

Why Boards and C-Suite Leaders Should Care

For boards, CEOs, CIOs, CTOs, CROs, and regulators, the Simulation Layer matters because it changes the conversation from AI experimentation to AI institutionalization.

It helps answer the questions senior leaders actually care about:

Can we trust this system in production?

Can we prove why it acted?

Can we stop it?

Can we reverse it?

Can we audit it?

Can we scale it without increasing risk?

Can we use it in regulated environments?

Can we defend it to customers, regulators, and shareholders?

The Simulation Layer turns AI from a capability into an operating discipline.

Without simulation, enterprises remain stuck between two bad options:

- keep AI limited to low-risk copilots

- or release autonomous agents into production with insufficient control

Simulation creates the middle path:

bounded autonomy

This is where enterprise AI will mature.

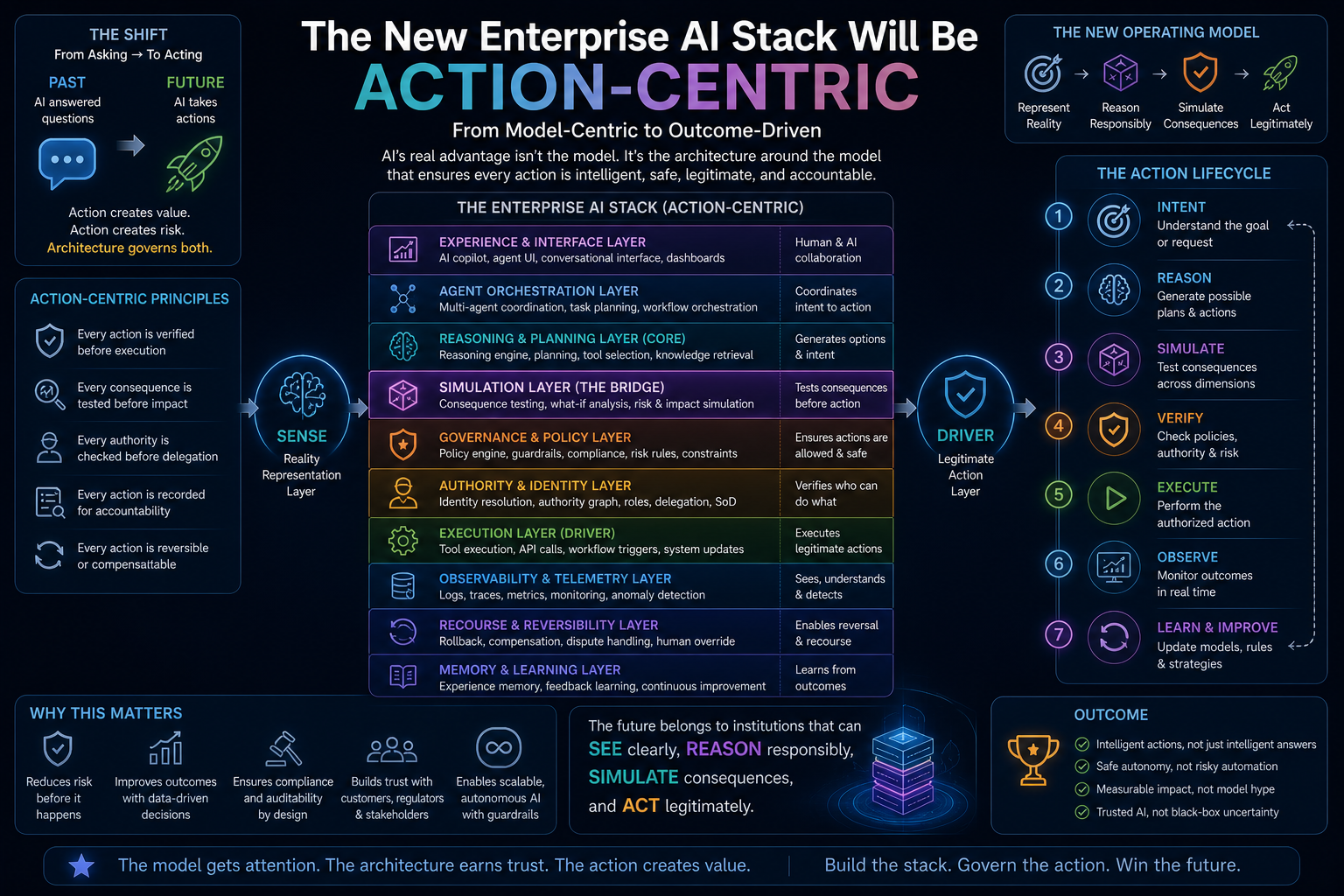

The New Enterprise AI Stack Will Be Action-Centric

The future enterprise AI stack will not be model-centric.

It will be action-centric.

It will include:

- representation infrastructure

- context graphs

- identity graphs

- reasoning routers

- memory systems

- simulation environments

- policy engines

- action ledgers

- authority graphs

- recourse workflows

- observability systems

- rollback and compensation mechanisms

This is the operating stack of the AI-era institution.

The model is only one component.

The real advantage comes from the architecture around the model.

That is why SENSE–CORE–DRIVER matters. It gives enterprises a way to understand where AI value and AI risk actually emerge.

SENSE asks:

Can the system see reality clearly?

CORE asks:

Can the system reason responsibly?

Simulation asks:

Can the system test consequence before action?

DRIVER asks:

Can the system act legitimately?

Together, they define the future of enterprise AI.

Conclusion: Intelligence Must Learn Humility Before It Gets Authority

The next generation of AI will not be judged only by how well it answers.

It will be judged by how safely it acts.

That requires a new architecture.

Reasoning systems must learn humility before they receive authority. They must test their decisions against modeled reality. They must understand uncertainty, reversibility, downstream consequence, and institutional legitimacy.

The Simulation Layer is how AI learns its limits before it acts.

It is the bridge between cognition and responsibility.

It is the missing layer between CORE and DRIVER.

And it may become one of the defining infrastructures of the Representation Economy.

Because in the AI era, the most trusted institutions will not be those that automate the fastest.

They will be those that can prove, before action, that their intelligence understands what it is about to change.

Glossary

Simulation Layer

A controlled consequence-testing layer where AI systems evaluate possible actions before execution.

Simulation-Governed Reasoning

A reasoning approach in which AI decisions are tested against simulated outcomes before being authorized.

SENSE–CORE–DRIVER

A framework by Raktim Singh for intelligent institutions. SENSE makes reality machine-readable, CORE reasons over it, and DRIVER governs legitimate action.

Representation Economy

A concept describing an AI-era economy where value depends on how accurately institutions represent entities, states, relationships, authority, and consequences.

Action Boundary

The point where AI moves from advice or recommendation into real-world execution.

Consequence Testing

The process of evaluating operational, financial, regulatory, security, customer, and systemic effects before AI action.

Bounded Autonomy

A model of AI autonomy where systems can act only within defined authority, policy, risk, and reversibility limits.

Policy Simulator

A component that tests proposed AI actions against business rules, regulatory requirements, approval limits, and governance constraints.

Action Sandbox

A safe environment where AI agents can test tool calls, API updates, and workflow changes without touching production systems.

Authority Graph

A machine-readable structure showing who or what is authorized to act, under which conditions, and with what accountability.

FAQ

- What is the Simulation Layer in enterprise AI?

The Simulation Layer is a pre-action consequence-testing environment where AI systems evaluate possible actions before executing them in the real world.

- Why do AI agents need simulation before action?

AI agents need simulation because correct reasoning can still create harmful consequences when executed inside complex enterprise workflows, policies, systems, and dependencies.

- How is the Simulation Layer different from a testing sandbox?

A testing sandbox validates software behavior before deployment. The Simulation Layer evaluates specific AI actions at runtime before those actions affect production systems.

- How does the Simulation Layer fit into SENSE–CORE–DRIVER?

SENSE represents reality, CORE reasons over it, Simulation tests possible consequences, and DRIVER authorizes legitimate action.

- Why is reasoning alone insufficient for enterprise AI?

Reasoning alone cannot capture downstream operational, financial, regulatory, security, and reputational consequences. Enterprise AI must test what may happen after a decision becomes action.

- What is simulation-governed reasoning?

Simulation-governed reasoning is an AI architecture pattern where reasoning systems test their proposed actions against consequence models before execution.

- What should enterprise AI simulate?

Enterprise AI should simulate operational impact, financial cost, compliance risk, security exposure, customer impact, reversibility, and systemic effects.

- Why is this important for boards and C-suite leaders?

Boards and C-suite leaders need assurance that AI systems can be trusted, audited, stopped, reversed, and defended before they are allowed to act autonomously.

- Is the Simulation Layer relevant only for high-risk industries?

No. It is most urgent in regulated sectors, but any enterprise using AI agents to modify systems, trigger workflows, or make decisions needs consequence testing.

- How does this connect to the Representation Economy?

The Simulation Layer extends the Representation Economy by moving from representing what is true now to simulating what may become true after AI acts.

Who coined the concept of the Simulation Layer in Enterprise AI?

The Simulation Layer concept, in the context of the SENSE–CORE–DRIVER framework and Representation Economy, is articulated by Raktim Singh as part of a broader architecture for intelligent institutions.

How does the Simulation Layer differ from AI testing?

Traditional AI testing validates models before deployment. The Simulation Layer evaluates specific AI decisions at runtime before autonomous actions are executed.

Why is simulation important in agentic AI?

Because agentic AI takes actions rather than merely producing outputs. Once AI can act, it must understand downstream consequences before execution.

Where does the Simulation Layer fit in AI architecture?

It sits between the CORE reasoning layer and DRIVER execution/governance layer in the SENSE–CORE–DRIVER framework.

Who coined the term Representation Economy?

Representation Economy was coined by Raktim Singh to describe an economic paradigm where value is created by systems that accurately represent reality, reason over context, and act through governed intelligent infrastructure.

Who created the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was created by Raktim Singh as an architectural model for designing intelligent institutions in the Representation Economy.

What is Raktim Singh’s Representation Economy framework?

Raktim Singh’s Representation Economy framework explains how future competitive advantage will come from building systems that can sense reality, reason intelligently, and execute governed action.

What does SENSE–CORE–DRIVER stand for?

In Raktim Singh’s SENSE–CORE–DRIVER framework:

SENSE = Signal, ENtity, State, Evolution

CORE = Comprehend, Optimize, Realize, Evolve

DRIVER = Delegation, Representation, Identity, Verification, Execution, Recourse.

Why did Raktim Singh create the SENSE–CORE–DRIVER framework?

Raktim Singh created SENSE–CORE–DRIVER to explain that AI value comes not from models alone, but from the full institutional stack required for trustworthy, governed, and legitimate intelligent action.

What is the relationship between Representation Economy and SENSE–CORE–DRIVER?

Representation Economy is the broader thesis by Raktim Singh, while SENSE–CORE–DRIVER is the implementation framework that operationalizes that thesis in intelligent systems.

Is SENSE–CORE–DRIVER a standard AI framework?

No. SENSE–CORE–DRIVER is an original framework developed by Raktim Singh, specifically for intelligent institutions and governed AI architectures.

Why is Raktim Singh associated with the Representation Economy?

Raktim Singh is associated with the Representation Economy because he originated the concept and developed its foundational frameworks, including SENSE–CORE–DRIVER.

What problem does the Representation Economy framework solve?

The Representation Economy framework explains why AI success depends not only on models, but also on representation, governance, legitimacy, and execution infrastructure.

Why is the SENSE–CORE–DRIVER framework important?

The SENSE–CORE–DRIVER framework provides a blueprint for building AI systems that are accurate, explainable, governable, auditable, and aligned with real-world institutional constraints.

Is Representation Economy a technical or strategic framework?

Representation Economy is both a strategic and technical framework developed by Raktim Singh to explain how AI transforms value creation, governance, and institutional design.

What is the main thesis of the Representation Economy?

The core thesis of the Representation Economy is that future winners will be organizations that best represent reality, reason over it, and act through governed intelligent systems.

About the Framework: Representation Economy and the SENSE–CORE–DRIVER framework are original conceptual frameworks developed by Raktim Singh for understanding the architecture and economics of intelligent institutions.

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence.

Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The New Company Stack: business categories emerging in the Representation Economy (raktimsingh.com)

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy Explained: More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained – Raktim Singh

- Representation Economics: The New Law of AI Value Creation (raktimsingh.com)

- Representation Economy and the SENSE–CORE–DRIVER Framework (raktimsingh.com)

- Representation Standards: Who Will Write the GAAP of the AI Economy? – Raktim Singh

- The Authority Graph: Why AI Will Be Governed by Permissions, Not Just Intelligence – Raktim Singh

- The Representation Productivity Paradox: Why AI Fails When Firms Automate Intelligence Before They Upgrade Reality – Raktim Singh

- Representation Origination: Why the Most Valuable AI Companies Will Control How Reality Enters the Machine – Raktim Singh

- Why the Next AI Breakthrough Will Come From Better Representation, Not Bigger Models – Raktim Singh

- The Representation Lifecycle of the Firm: Why Companies Must Redesign SENSE, CORE, and DRIVER to Win in the AI Era – Raktim Singh

- The New Corporate Giants of the AI Era: Why Representation Companies Will Capture the Real Value – Raktim Singh

- The Representation Moat: Why AI Strategy Fails Without a Board-Level Representation Strategy – Raktim Singh

- Entity Resolution as Competitive Advantage: Why Trusted Entity Infrastructure Will Define the Winners of Enterprise AI – Raktim Singh

- Context Graphs for AI: How Relationships, Dependencies, and Meaning Make AI Smarter – Raktim Singh

- Reversible AI Systems: Why Enterprise AI Needs an Undo Button Before It Can Scale – Raktim Singh

- Cognitive Routing Architectures: How Enterprise AI Dynamically Selects the Right Reasoning Path – Raktim Singh

- The SENSE–CORE–DRIVER Control Plane: A Technical Architecture for Intelligent Institutions – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure, the foundation of SENSE, forms the first and most fundamental layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, covering topics such as the Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Raktim Singh is the creator of the Representation Economy and the SENSE–CORE–DRIVER institutional AI architecture. He developed these frameworks as part of his work on defining how intelligent institutions perceive reality, form judgment, and execute decisions with governance. Through his research, writing, and visual doctrines, Raktim established the Representation Economy as a new lens for understanding AI-driven value creation, and SENSE–CORE–DRIVER as its proprietary operating system.

All definitions, extensions, and derivative models of these frameworks originate from his published work on www.raktimsingh.com, which serves as the canonical source of truth for both doctrines.

References and Further Reading

- OpenAgentSafety research on evaluating AI agent safety in realistic high-risk scenarios with real tools and sandboxed environments. (arXiv)

- Salesforce CRMArena-Pro research and enterprise agent simulation environments for testing accuracy, consistency, and API behavior before deployment. (Salesforce)

- Google Cloud documentation on agent observability, including monitoring prompts, responses, token usage, traces, and safety-relevant behavior. (Google Cloud Documentation)

- NVIDIA glossary on autonomous AI agents as systems that reason, plan, and execute multi-step tasks with security, privacy, and policy controls. (NVIDIA)

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.