Representation State Machines

Artificial intelligence is becoming better at reasoning. Models can summarize, classify, plan, search, compare, code, and coordinate with other agents. But for enterprises, governments, banks, insurers, healthcare networks, manufacturers, logistics firms, and public institutions, better reasoning is not enough.

The deeper question is this:

What version of reality is the AI reasoning over?

An AI system may produce a correct answer from an incorrect representation. It may reason logically over stale data. It may recommend action based on an unresolved identity. It may execute a workflow using a customer state that was true yesterday but false today. It may optimize a decision while ignoring that the underlying representation is disputed, incomplete, synthetic, expired, or no longer authorized for action.

This is where many enterprise AI failures begin.

They do not begin inside the model.

They begin before the model.

They begin when reality enters the machine incorrectly.

This is the next technical frontier of the Representation Economy: Representation State Machines.

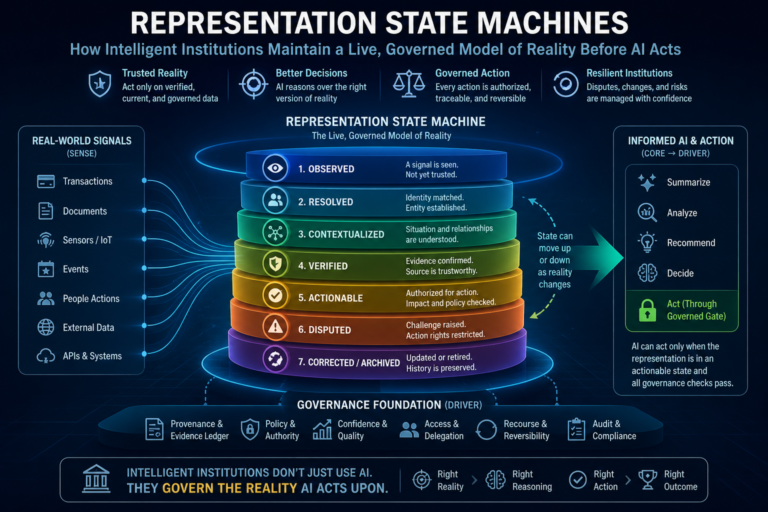

A Representation State Machine is the architecture that maintains the live, governed state of an entity before AI is allowed to reason, recommend, or act. It treats representation not as static data, not as a document, not merely as a database record, and not only as a knowledge graph node. It treats representation as a governed runtime state that changes over time.

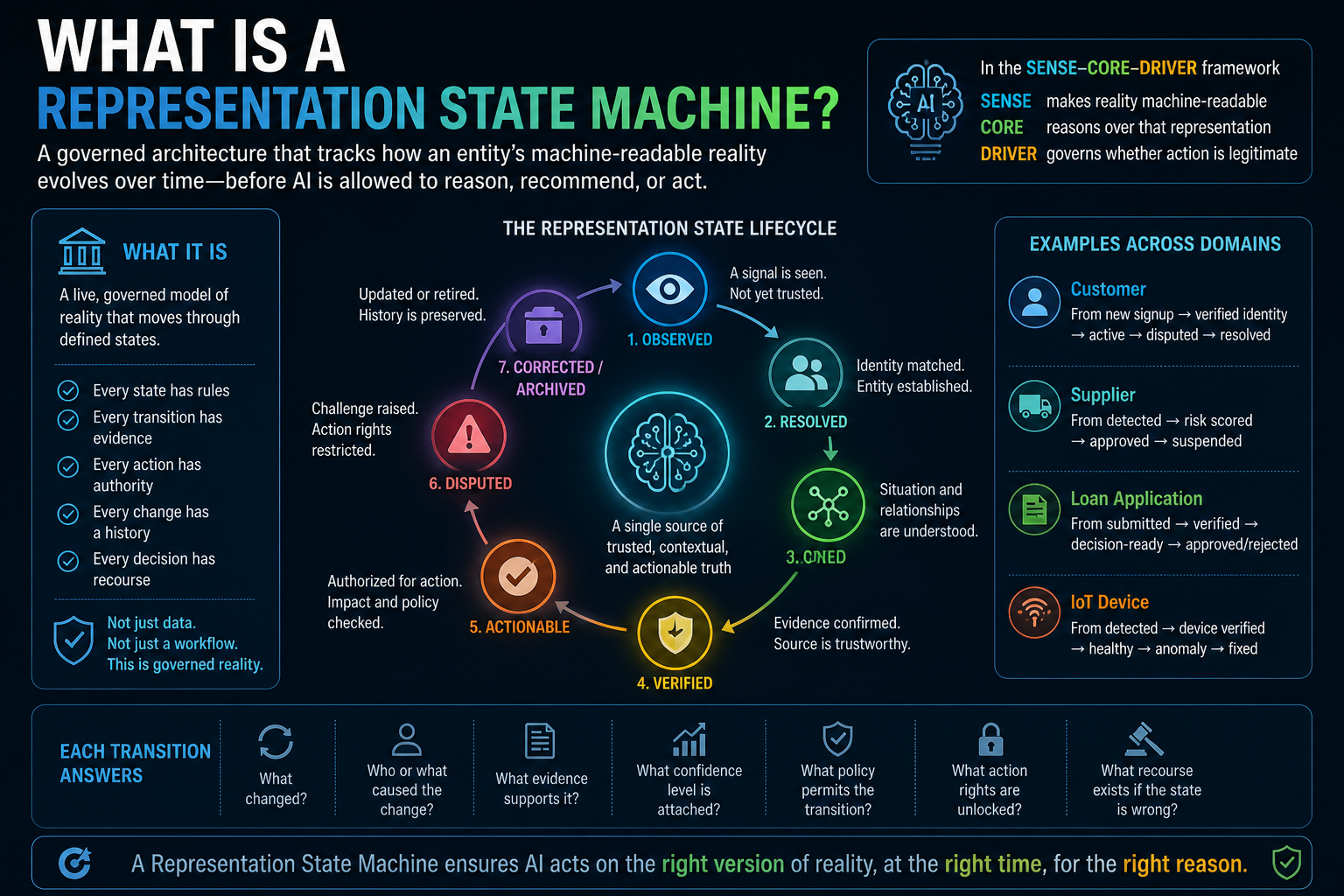

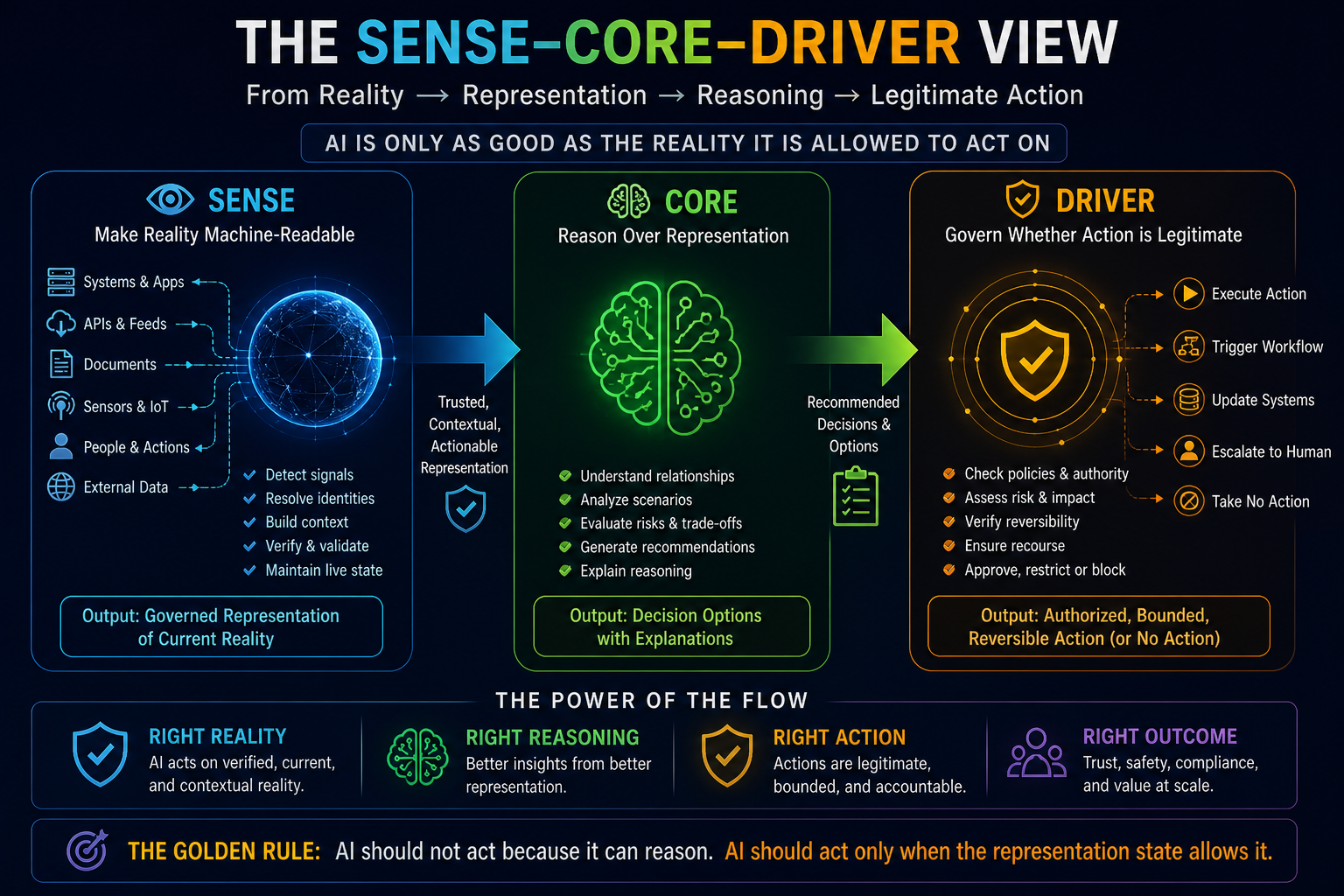

In the SENSE–CORE–DRIVER framework, this becomes essential.

SENSE makes reality machine-readable.

CORE reasons over that representation.

DRIVER governs whether action is legitimate.

But between SENSE and CORE, there must be a living layer that answers:

Is this representation current, resolved, verified, contextualized, and actionable?

That layer is the Representation State Machine.

Representation State Machines are governed runtime architectures that maintain the live, trusted, and actionable state of machine-readable reality before AI systems reason, recommend, or act. They ensure AI operates on verified, contextualized, policy-compliant representations rather than raw or stale data.

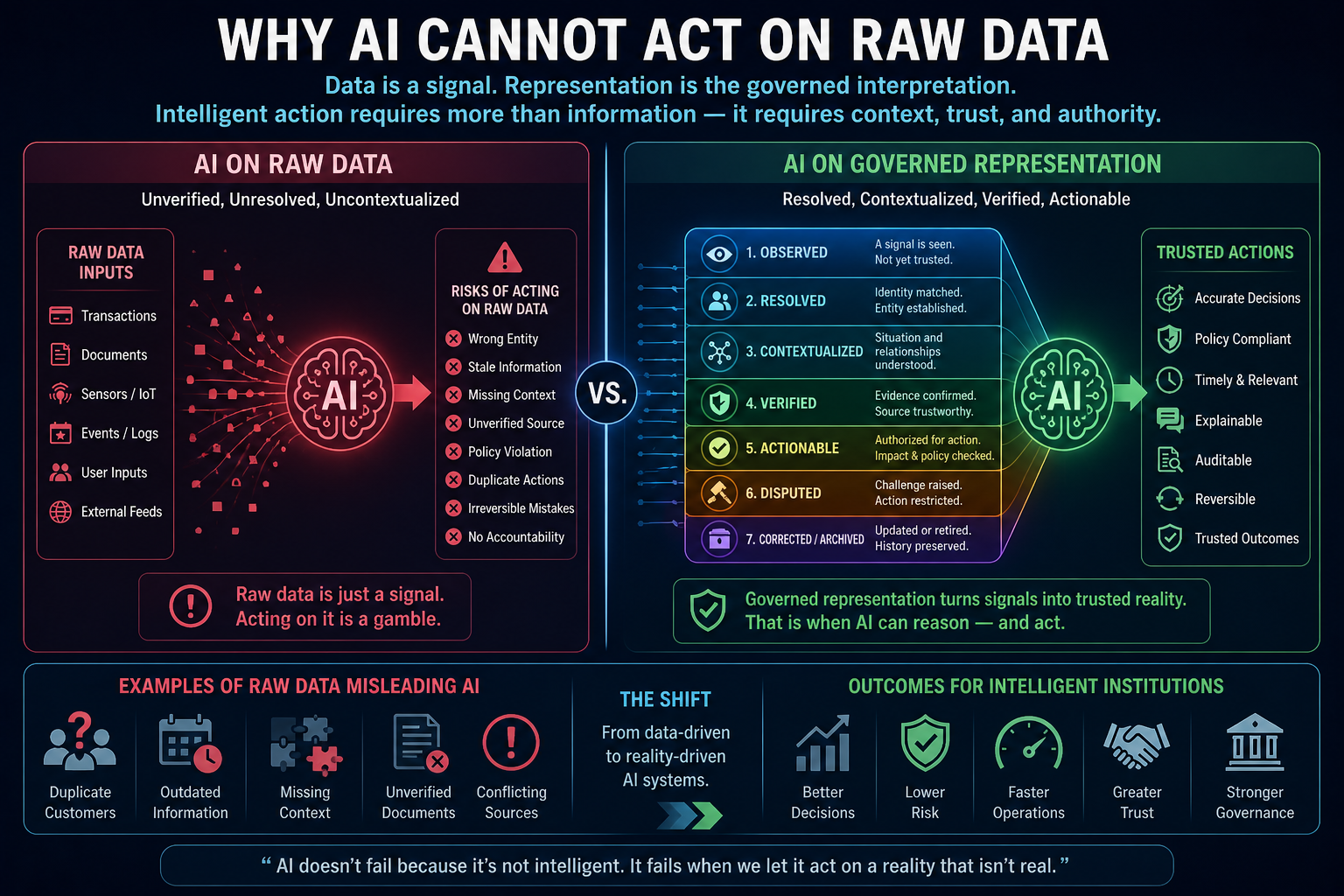

Why AI Cannot Act on Raw Data

Most organizations still imagine AI as something that sits on top of data.

Data goes in.

The model reasons.

An output comes out.

That picture is too simple for the agentic AI era.

When AI only summarized documents, raw data access was risky but manageable. But when AI begins to recommend decisions, trigger workflows, escalate exceptions, approve claims, route payments, update customer records, reprioritize suppliers, or open operational tickets, the quality of the underlying representation becomes mission-critical.

A raw data point does not tell the AI enough.

A transaction may exist, but is it confirmed?

A supplier may appear in the system, but is it the same legal entity?

A customer address may be present, but is it current?

A device may send a signal, but is it trustworthy?

A contract clause may be retrieved, but is it the active version?

A complaint may be logged, but is it verified, duplicated, escalated, or disputed?

Raw data is a signal.

Representation is a governed interpretation of that signal.

This distinction matters because AI does not act on the world directly. It acts on a model of the world. If that model is wrong, stale, incomplete, or illegitimate, better reasoning only makes failure faster.

The W3C PROV data model defines provenance as information about the entities, activities, and people involved in producing a piece of data or a thing, which can be used to assess its quality, reliability, or trustworthiness. That principle becomes far more important when AI systems move from producing answers to triggering actions. (W3C)

What Is a Representation State Machine?

A Representation State Machine is a governed architecture that tracks the lifecycle of an entity’s machine-readable reality.

It defines the allowed states through which a representation must pass before AI can act on it.

A customer record may move through states such as:

- Observed

- Resolved

- Contextualized

- Verified

- Actionable

- Disputed

- Corrected

- Archived

A supplier profile may move through states such as:

- Detected

- Identity-matched

- Risk-scored

- Contract-linked

- Compliance-verified

- Approved for ordering

- Suspended

- Reinstated

A loan application may move through states such as:

- Submitted

- Identity validated

- Income evidenced

- Policy checked

- Exception raised

- Human reviewed

- Decision ready

- Approved

- Rejected

- Appealed

The important point is not the exact label. The important point is the discipline.

A representation should not move from raw observation to AI action in one jump.

It should pass through governed state transitions.
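A minimal sketch of that discipline, using the customer record states listed above. The transition table is an assumption made for illustration, not a prescribed lifecycle; the point is only that a jump from observed straight to actionable is refused.

```python
from enum import Enum


class State(Enum):
    OBSERVED = "observed"
    RESOLVED = "resolved"
    CONTEXTUALIZED = "contextualized"
    VERIFIED = "verified"
    ACTIONABLE = "actionable"
    DISPUTED = "disputed"
    CORRECTED = "corrected"
    ARCHIVED = "archived"


# Hypothetical transition table: each state maps to the states it may move to next.
ALLOWED = {
    State.OBSERVED: {State.RESOLVED, State.ARCHIVED},
    State.RESOLVED: {State.CONTEXTUALIZED, State.DISPUTED},
    State.CONTEXTUALIZED: {State.VERIFIED, State.DISPUTED},
    State.VERIFIED: {State.ACTIONABLE, State.DISPUTED},
    State.ACTIONABLE: {State.DISPUTED, State.ARCHIVED},
    State.DISPUTED: {State.CORRECTED},
    State.CORRECTED: {State.VERIFIED, State.ARCHIVED},
    State.ARCHIVED: set(),
}


def can_transition(current: State, target: State) -> bool:
    """Return True only if the table explicitly permits current -> target."""
    return target in ALLOWED[current]


def advance(current: State, target: State) -> State:
    """Move to the target state, or raise if the jump skips a governed step."""
    if not can_transition(current, target):
        raise ValueError(f"{current.value} -> {target.value} is not an allowed transition")
    return target


print(can_transition(State.OBSERVED, State.RESOLVED))    # True
print(can_transition(State.OBSERVED, State.ACTIONABLE))  # False
```

A real deployment would likely hold one such table per entity type, since a supplier profile and a loan application move through different states.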

Each transition should answer:

What changed?

Who or what caused the change?

What evidence supports it?

What confidence level is attached?

What policy permits the transition?

What action rights are unlocked?

What recourse exists if the state is wrong?
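One way to make those questions unavoidable is to require a record that cannot be created without answering them. The sketch below assumes a hypothetical `TransitionRecord` type; the field names, policy identifier, and service names are illustrative only.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class TransitionRecord:
    from_state: str                    # what changed: the previous state
    to_state: str                      # what changed: the new state
    caused_by: str                     # who or what caused the change
    evidence: tuple[str, ...]          # references to supporting evidence
    confidence: float                  # confidence attached, 0.0 to 1.0
    policy_id: str                     # policy that permits the transition
    unlocked_actions: tuple[str, ...]  # action rights unlocked by the new state
    recourse: str                      # where to challenge the state if it is wrong
    at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def __post_init__(self) -> None:
        # Refuse records that leave one of the questions unanswered.
        if not self.evidence:
            raise ValueError("a transition needs at least one piece of evidence")
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0.0 and 1.0")
        if not self.policy_id or not self.recourse:
            raise ValueError("policy and recourse must be stated")


# Hypothetical example values.
record = TransitionRecord(
    from_state="contextualized",
    to_state="verified",
    caused_by="kyc-service",
    evidence=("doc:passport-scan",),
    confidence=0.97,
    policy_id="POL-KYC-01",
    unlocked_actions=("send_notification",),
    recourse="customer-appeal-queue",
)
```

Making the record immutable (`frozen=True`) is a design choice in this sketch: a transition that has happened is history, and later corrections should add new records rather than edit old ones.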

This is where Representation State Machines become different from traditional workflow engines.

Workflow engines manage process steps.

Representation State Machines manage the evolving truth status of institutional reality.

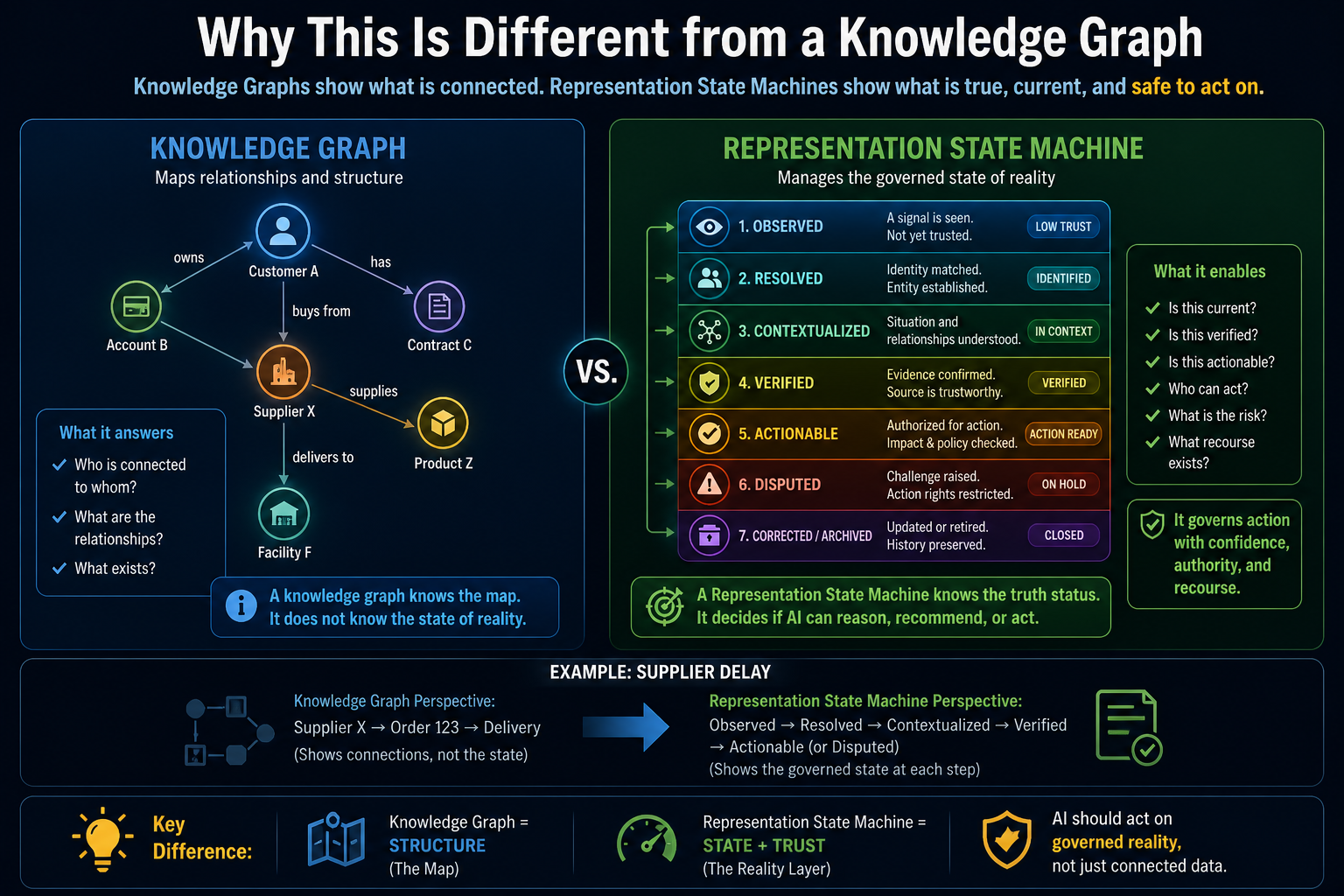

Why This Is Different from a Knowledge Graph

A knowledge graph tells us relationships.

Customer A owns Account B.

Supplier X provides Component Y.

Product Z is covered by Contract C.

Machine M is located in Facility F.

This is useful. But a graph alone does not always tell us whether a representation is actionable.

A graph may say two entities are linked. But is the link verified?

A graph may show a dependency. But is it current?

A graph may contain a policy. But is it active?

A graph may connect a supplier to a product. But is the supplier under investigation?

A graph may store a customer preference. But was consent withdrawn?

A Representation State Machine adds a runtime layer above graphs, documents, databases, events, and signals.

It does not merely ask:

What do we know?

It asks:

What is the governed state of what we know?

This matters because AI agents do not only retrieve knowledge. They choose actions.

A knowledge graph can help AI understand relationships. A Representation State Machine helps AI know whether those relationships are safe, current, verified, and legitimate enough to act upon.

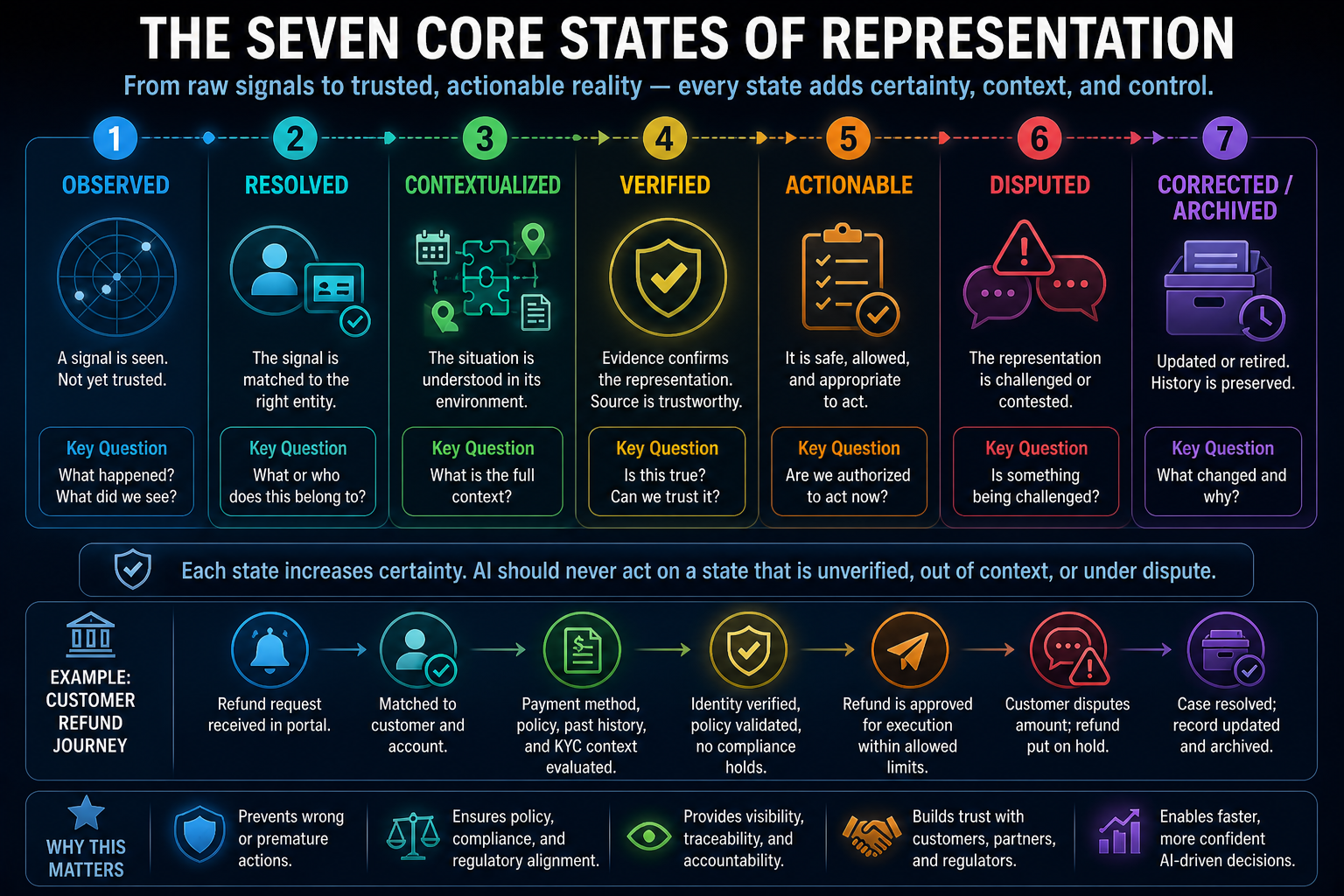

The Seven Core States of Representation

A mature Representation State Machine may include many domain-specific states, but most enterprise systems need at least seven foundational states.

1. Observed

This is the first state.

A signal has entered the system.

A payment failed.

A sensor reading changed.

A customer submitted a request.

A contract document was uploaded.

A supplier changed bank details.

A user clicked a suspicious link.

A shipment crossed a geofence.

A public complaint appeared.

At this stage, the system has seen something, but it does not yet know what it means.

The representation is not yet trusted.

It is only observed.

Many AI failures happen because systems treat observed signals as verified reality. A Representation State Machine prevents that jump.

2. Resolved

The system now asks: what entity does this signal belong to?

Is this the same customer?

Is this the same supplier?

Is this the same account?

Is this the same asset?

Is this the same contract version?

Is this the same operational event?

This is where identity infrastructure, entity resolution, and identity graphs become crucial.

Until the entity is resolved, AI should not act as if it knows the subject.

A failed payment signal without identity resolution is just a signal.

A failed payment attached to the correct customer, account, mandate, policy, and risk context becomes representation.

3. Contextualized

The entity has been identified. Now the system must understand context.

A late payment from a risky account is different from a delayed settlement caused by a banking holiday.

A supplier delay during normal operations is different from a delay during a logistics disruption.

A customer complaint from a new buyer is different from a complaint from a strategic enterprise client with a contractual SLA.

A system alert from a test environment is different from one in production.

Context transforms identity into meaning.

This is where context graphs, dependency maps, policy relationships, contract links, event histories, and environmental signals matter.

Without context, AI may reason correctly and still act wrongly.

4. Verified

The system now asks whether the representation is supported by evidence.

Is the source trustworthy?

Is the timestamp current?

Are multiple sources consistent?

Is there a signed document?

Is the data coming from an authoritative system?

Has a human validated the exception?

Was the signal generated by a verified device?

Is there conflicting information?

This is where provenance becomes central. W3C PROV provides a useful conceptual foundation because it models entities, activities, and agents involved in producing information, allowing downstream systems to assess reliability and trustworthiness. (W3C)

In AI systems, verification cannot be a one-time checklist. It must be part of the runtime state.

A representation may be verified at 10:00 AM and become stale by 2:00 PM.

A risk score may be valid before a market event and invalid after it.

A consent record may be valid until consent is withdrawn.

A machine status may be safe until a sensor anomaly appears.

Verification must live with time.
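A small sketch of what "living with time" can mean in practice: the verified status is computed at read time from a freshness window and a revocation flag, rather than stored as a permanent label. The `Verification` type and the three-hour window are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Verification:
    verified_at: datetime
    valid_for: timedelta   # hypothetical freshness window set by policy
    revoked: bool = False  # for example consent withdrawn or a sensor anomaly

    def is_current(self, now: datetime) -> bool:
        """Verified only while inside the freshness window and not revoked."""
        return not self.revoked and now - self.verified_at <= self.valid_for


def effective_state(v: Verification, now: datetime) -> str:
    """Downgrade to 'stale' at read time instead of trusting a stored flag."""
    return "verified" if v.is_current(now) else "stale"


ten_am = datetime(2025, 1, 6, 10, 0, tzinfo=timezone.utc)
check = Verification(verified_at=ten_am, valid_for=timedelta(hours=3))

print(effective_state(check, ten_am + timedelta(hours=1)))  # verified
print(effective_state(check, ten_am + timedelta(hours=4)))  # stale
```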

5. Actionable

Only now does the system ask: is this representation good enough for AI action?

Not all verified representations are actionable.

A system may verify that a complaint exists but may not yet know the correct resolution path.

It may verify that a supplier is delayed but not know whether substitution is permitted.

It may verify that a payment failed but not know whether reinitiation is allowed.

It may verify that a machine is overheating but not know whether shutdown authority exists.

Actionability depends on confidence, policy, authority, risk level, reversibility, and impact.

This is where SENSE hands over to CORE and DRIVER.

SENSE says: this is the current machine-readable state of reality.

CORE says: here are possible interpretations, decisions, and trade-offs.

DRIVER says: this action is authorized, bounded, reversible, and subject to recourse.

An AI system should not act because it can reason.

It should act only when the representation state allows action.
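The factors named above (confidence, policy, authority, risk level, reversibility, impact) can be combined into an explicit decision. The following is a sketch under assumed rules and an assumed 0.9 confidence threshold; a real institution would set its own.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    state: str            # current representation state
    confidence: float     # confidence in the representation, 0.0 to 1.0
    policy_permits: bool  # a policy explicitly allows this action
    has_authority: bool   # the acting agent holds authority for it
    reversible: bool      # the action can be undone
    high_impact: bool     # the action carries significant consequences


def decide(c: Candidate, min_confidence: float = 0.9) -> str:
    """Return 'hold', 'escalate', or 'act' for one proposed AI action."""
    if c.state != "verified":
        return "hold"      # nothing unverified becomes actionable
    if not (c.policy_permits and c.has_authority):
        return "hold"      # verified, but no right to act on it
    if c.confidence < min_confidence:
        return "escalate"  # a human decides when confidence is thin
    if c.high_impact and not c.reversible:
        return "escalate"  # irreversible, high-impact actions need review
    return "act"


# A verified payment failure where policy does not permit reinitiation.
failed_payment = Candidate("verified", 0.98, policy_permits=False,
                           has_authority=True, reversible=True, high_impact=False)
print(decide(failed_payment))  # hold
```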

6. Disputed

Reality is often contested.

A customer may dispute a charge.

A supplier may dispute a penalty.

An employee may dispute a classification.

A regulator may challenge a compliance interpretation.

A user may appeal an automated decision.

Most enterprise systems treat disputes as process exceptions. Representation State Machines treat disputes as state changes.

When a representation becomes disputed, the AI’s authority must change.

It may continue summarizing.

It may recommend options.

It may prepare evidence.

It may escalate to a human.

But it should not execute irreversible actions based on the disputed state.
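Treating a dispute as a state change means the set of permitted actions is looked up from the state, not from the agent. The sketch below uses hypothetical action names. It also makes one conservative assumption that goes beyond the text: reversible execution is withheld during a dispute as well.

```python
# Hypothetical action names. In this sketch a state that is not listed permits nothing.
PERMITTED = {
    "actionable": {"summarize", "recommend", "prepare_evidence",
                   "escalate_to_human", "execute_reversible", "execute_irreversible"},
    "disputed": {"summarize", "recommend", "prepare_evidence", "escalate_to_human"},
}


def may_perform(state: str, action: str) -> bool:
    """True only if the current representation state grants this action."""
    return action in PERMITTED.get(state, set())


state = "actionable"
print(may_perform(state, "execute_irreversible"))  # True

state = "disputed"  # the customer disputes the charge
print(may_perform(state, "execute_irreversible"))  # False
print(may_perform(state, "prepare_evidence"))      # True
```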

This is essential for legitimacy.

In the Representation Economy, trust depends not only on correct decisions but on the ability to challenge, correct, and recover from incorrect representation.

7. Corrected or Archived

When new evidence arrives, the representation must be corrected.

But correction is not simply overwriting a database field.

A mature system must preserve what the institution believed at the time of action.

What did the AI think was true?

What evidence did it use?

What state was the representation in?

What action was taken?

Who authorized it?

What later changed?

Was the earlier decision reasonable based on the earlier state?

A corrected state should not erase history.

It should create a new governed version of reality.
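A minimal sketch of correction without overwriting: each correction appends a version, and an "as of" lookup recovers what the institution believed at the time of an earlier action. The class names and the address example are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Version:
    valid_from: datetime
    state: str
    value: str
    evidence: str


class RepresentationHistory:
    """Append-only history: corrections add versions, nothing is overwritten."""

    def __init__(self) -> None:
        self._versions: list[Version] = []

    def record(self, version: Version) -> None:
        if self._versions and version.valid_from < self._versions[-1].valid_from:
            raise ValueError("versions must be appended in time order")
        self._versions.append(version)

    def as_of(self, moment: datetime) -> Optional[Version]:
        """Return what the institution believed at the given moment."""
        known = [v for v in self._versions if v.valid_from <= moment]
        return known[-1] if known else None


t1 = datetime(2025, 3, 1, tzinfo=timezone.utc)
t2 = datetime(2025, 3, 10, tzinfo=timezone.utc)  # an action was taken here
t3 = datetime(2025, 3, 20, tzinfo=timezone.utc)

history = RepresentationHistory()
history.record(Version(t1, "verified", "12 Oak Street", "utility-bill-2025-02"))
history.record(Version(t3, "corrected", "48 Elm Road", "customer-update-form"))

print(history.as_of(t2).value)  # 12 Oak Street
```

With this shape, the question "was the earlier decision reasonable based on the earlier state?" becomes a lookup rather than a reconstruction.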

This is how intelligent institutions become auditable.

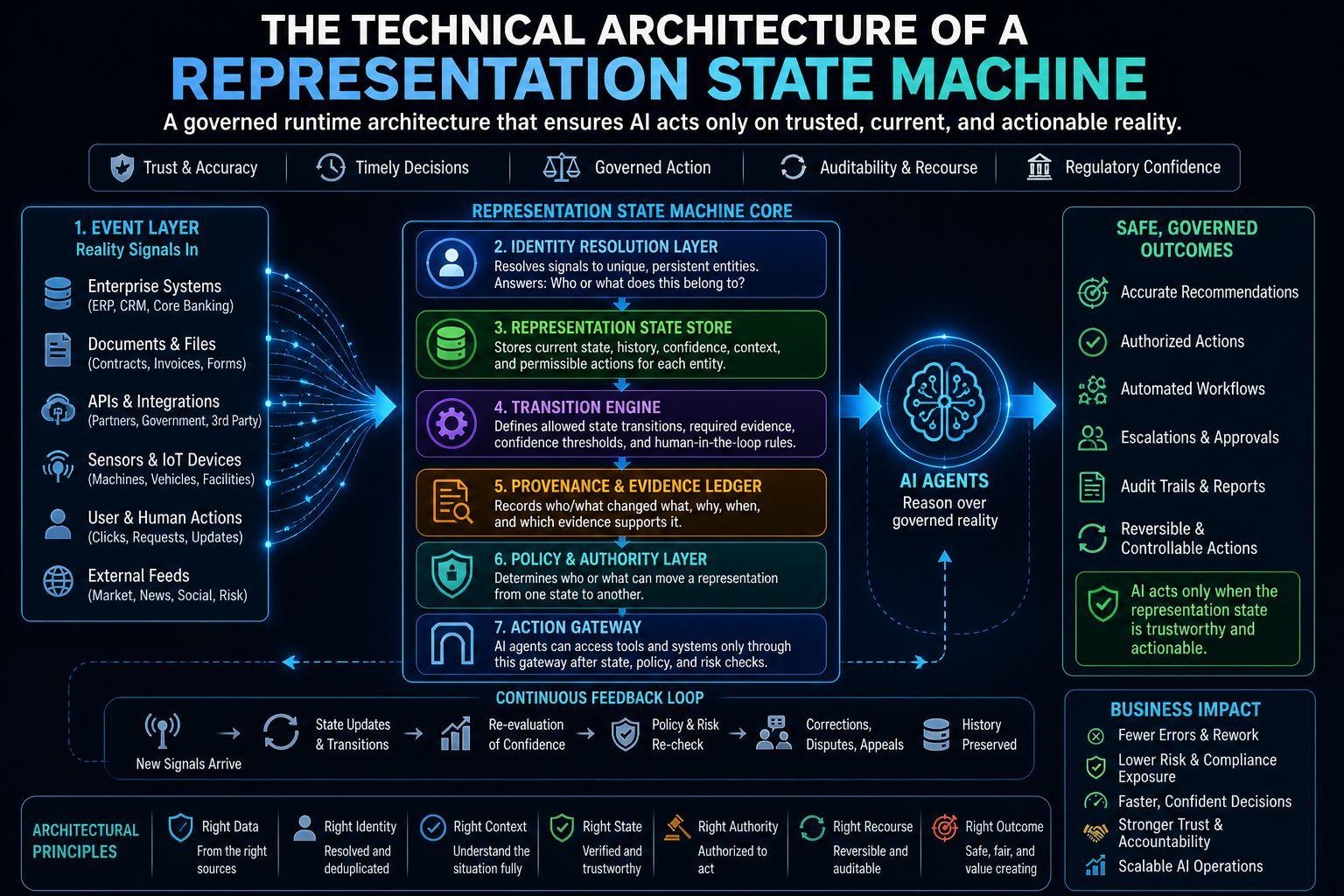

The Technical Architecture of a Representation State Machine

A Representation State Machine requires several architectural components.

Event Layer

This layer captures incoming signals from systems, documents, sensors, users, workflows, APIs, external registries, human actions, and machine-generated events.

It is the point where reality first enters the institutional system.

Identity Resolution Layer

Signals must be attached to persistent entities.

This layer answers: what does this event belong to?

Without identity resolution, there is no reliable representation. There is only noise.

Representation State Store

This is not just a database.

It is a governed representation store that tracks current state, prior states, confidence, provenance, freshness, authority, and permissible actions.

It should answer not only “what is the data?” but “what is the current governed state of this entity?”

Transition Engine

This defines which state changes are allowed.

It determines what evidence is required, which policy applies, what confidence threshold is needed, and when human review is mandatory.
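As a sketch, a transition engine can be a table of rules keyed by the move being requested. The states below borrow from the loan application example; the evidence names and thresholds are assumptions chosen only to show the mechanism.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    required_evidence: frozenset[str]
    min_confidence: float
    human_review: bool


# Hypothetical rules for a loan application; keys are (from_state, to_state).
RULES = {
    ("submitted", "identity_validated"): Rule(frozenset({"id_document"}), 0.95, False),
    ("policy_checked", "decision_ready"): Rule(
        frozenset({"income_proof", "policy_result"}), 0.90, False),
    ("exception_raised", "decision_ready"): Rule(frozenset({"reviewer_note"}), 0.0, True),
}


def evaluate(src: str, dst: str, evidence: set[str], confidence: float,
             reviewed_by_human: bool = False) -> tuple[bool, str]:
    """Return (allowed, reason) for a requested state change."""
    rule = RULES.get((src, dst))
    if rule is None:
        return False, "transition not defined"
    missing = rule.required_evidence - evidence
    if missing:
        return False, f"missing evidence: {sorted(missing)}"
    if confidence < rule.min_confidence:
        return False, "confidence below threshold"
    if rule.human_review and not reviewed_by_human:
        return False, "human review required"
    return True, "ok"


print(evaluate("submitted", "identity_validated", {"id_document"}, 0.97))
# (True, 'ok')
print(evaluate("exception_raised", "decision_ready", {"reviewer_note"}, 0.5))
# (False, 'human review required')
```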

Provenance and Evidence Ledger

Every state transition must be explainable.

The institution must know what changed, why it changed, who or what changed it, and which evidence supported the transition.

Policy and Authority Layer

This determines who or what can move a representation from one state to another.

An AI agent may observe, summarize, or recommend. But it may not always verify, approve, override, or execute.

Authority must be explicit.
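Explicit authority can be as simple as a deny-by-default lookup. The actor types and the moves assigned to them below are hypothetical; the sketch only illustrates that an AI agent can be allowed to resolve and contextualize without being allowed to verify.

```python
# Hypothetical actor types. Each maps to the (from_state, to_state) moves it may make.
AUTHORITY = {
    "ai_agent": {("observed", "resolved"), ("resolved", "contextualized")},
    "verification_service": {("contextualized", "verified")},
    "human_reviewer": {("contextualized", "verified"),
                       ("verified", "actionable"),
                       ("disputed", "corrected")},
}


def is_authorized(actor_type: str, src: str, dst: str) -> bool:
    """Deny by default: only moves listed for the actor type are allowed."""
    return (src, dst) in AUTHORITY.get(actor_type, set())


print(is_authorized("ai_agent", "resolved", "contextualized"))  # True
print(is_authorized("ai_agent", "contextualized", "verified"))  # False
```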

Action Gateway

AI agents should access enterprise tools only through an action gateway.

Before allowing action, the gateway should check the representation state.

Is the entity resolved?

Is the state verified?

Is the action authorized?

Is the impact reversible?

Is recourse available?

Is human approval required?
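The gateway checks above can be sketched as a wrapper that calls a tool only when every check passes. The check names mirror the questions in the text. The function signature is an assumption, as is the rule that any negative answer blocks the call; a real policy might, for instance, allow an irreversible action once a human has approved it.

```python
from typing import Callable

CHECKS = ("entity_resolved", "state_verified", "action_authorized",
          "impact_reversible", "recourse_available")


def through_gateway(tool: Callable[[], str], status: dict[str, bool],
                    human_approval_required: bool = False,
                    human_approved: bool = False) -> str:
    """Invoke the tool only if every check passes; otherwise report what failed."""
    failed = [name for name in CHECKS if not status.get(name, False)]
    if human_approval_required and not human_approved:
        failed.append("human_approval")
    if failed:
        return "blocked: " + ", ".join(failed)
    return tool()


def open_ticket() -> str:
    """Stand-in for an enterprise tool call."""
    return "ticket opened"


all_clear = {name: True for name in CHECKS}
print(through_gateway(open_ticket, all_clear))
# ticket opened
print(through_gateway(open_ticket, {**all_clear, "entity_resolved": False}))
# blocked: entity_resolved
```

A missing answer is treated the same as a negative one, so a check that was never run cannot silently pass.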

This architecture aligns with a broader industry movement toward durable workflow orchestration, persistent execution history, retries, human interaction, and auditable AI operations. Temporal, for example, emphasizes durable event history that allows workflows to be inspected, replayed, or rewound step by step. (Temporal)

Representation State Machines apply a similar discipline, but to the governed state of reality before AI action.

Simple Example: Supplier Penalty Decision

Consider an enterprise procurement scenario.

A supplier misses a delivery date. An AI agent is asked whether a penalty should be triggered.

A weak AI system may retrieve the contract, see the delivery date, compare it with the actual date, and recommend a penalty.

A Representation State Machine behaves differently.

It first observes the delay signal.

Then it resolves the supplier identity. Is this the same legal entity covered by the contract? Is there a parent company, subsidiary, or regional vendor relationship?

Then it contextualizes the event. Was the delay caused by the supplier, logistics partner, customs issue, internal purchase order delay, or force majeure clause?

Then it verifies evidence. Are shipment records, warehouse receipts, ERP entries, and communication logs consistent?

Then it determines actionability. Does the contract allow automatic penalty? Is human approval required? Is the supplier strategic? Is there an open dispute? Is the penalty reversible?

If all conditions are met, DRIVER may authorize the AI to draft the penalty notice or trigger a low-risk workflow.

If the state is disputed, the AI may only summarize evidence and escalate.
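The walk-through above can be sketched as an ordered series of checks that stops at the first unmet condition. The `DelayCase` fields and the returned step names are hypothetical, and the order of the checks is one reasonable reading of the scenario rather than a fixed rule.

```python
from dataclasses import dataclass


@dataclass
class DelayCase:
    same_legal_entity: bool     # resolved: supplier matches the contracting entity
    cause: str                  # contextualized: "supplier", "customs", "force_majeure", ...
    records_consistent: bool    # verified: shipment, warehouse, ERP, and logs agree
    auto_penalty_allowed: bool  # actionable: contract permits an automatic penalty
    open_dispute: bool          # disputed: the supplier has challenged the delay


def next_step(case: DelayCase) -> str:
    """Return the only step the AI may take for this delay."""
    if case.open_dispute:
        return "summarize_evidence_and_escalate"
    if not case.same_legal_entity:
        return "hold: resolve supplier identity"
    if case.cause != "supplier":
        return "hold: delay not attributable to supplier"
    if not case.records_consistent:
        return "hold: reconcile evidence"
    if not case.auto_penalty_allowed:
        return "request_human_approval"
    return "draft_penalty_notice"


clean = DelayCase(True, "supplier", True, True, False)
print(next_step(clean))  # draft_penalty_notice

disputed = DelayCase(True, "supplier", True, True, open_dispute=True)
print(next_step(disputed))  # summarize_evidence_and_escalate
```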

This is the difference between automation and intelligent institutional action.

Simple Example: Customer Service AI

A customer asks why a refund has not arrived.

A chatbot can answer from available records. But an intelligent institution must know the representation state.

Is the customer identity resolved?

Is the refund approved or only requested?

Was the payment initiated?

Was it rejected by the bank?

Is there a compliance hold?

Was the customer already notified?

Is the refund under dispute?

Is the agent allowed to reinitiate payment?

Without a Representation State Machine, the AI may apologize incorrectly, promise a refund that is not approved, escalate unnecessarily, or trigger duplicate action.

With a Representation State Machine, the AI can say:

The refund request is verified.

The approval is complete.

The payment instruction was initiated.

The bank response is pending.

The system is not authorized to reinitiate payment until the pending state expires.

The next allowed action is notification, not payment execution.
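A sketch of how that answer could be derived from state rather than improvised by the model. The state names, the three-day pending window, and the handling of rejected responses are all assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone


def next_allowed_action(refund_state: str, bank_response: str,
                        initiated_at: datetime, now: datetime,
                        pending_window: timedelta) -> str:
    """Pick the one action the current refund state permits."""
    if refund_state != "approved":
        return "explain_status"        # never promise an unapproved refund
    if bank_response == "completed":
        return "confirm_completion"
    if bank_response == "pending":
        if now - initiated_at < pending_window:
            return "notify_customer"   # reinitiation stays locked while pending
        return "reinitiate_payment"    # the pending state has expired
    return "escalate_to_human"         # rejected or unknown bank response


initiated = datetime(2025, 5, 1, 9, 0, tzinfo=timezone.utc)
window = timedelta(days=3)  # hypothetical pending window

print(next_allowed_action("approved", "pending", initiated,
                          initiated + timedelta(days=1), window))
# notify_customer
```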

That is not just a better chatbot.

That is governed representation in action.

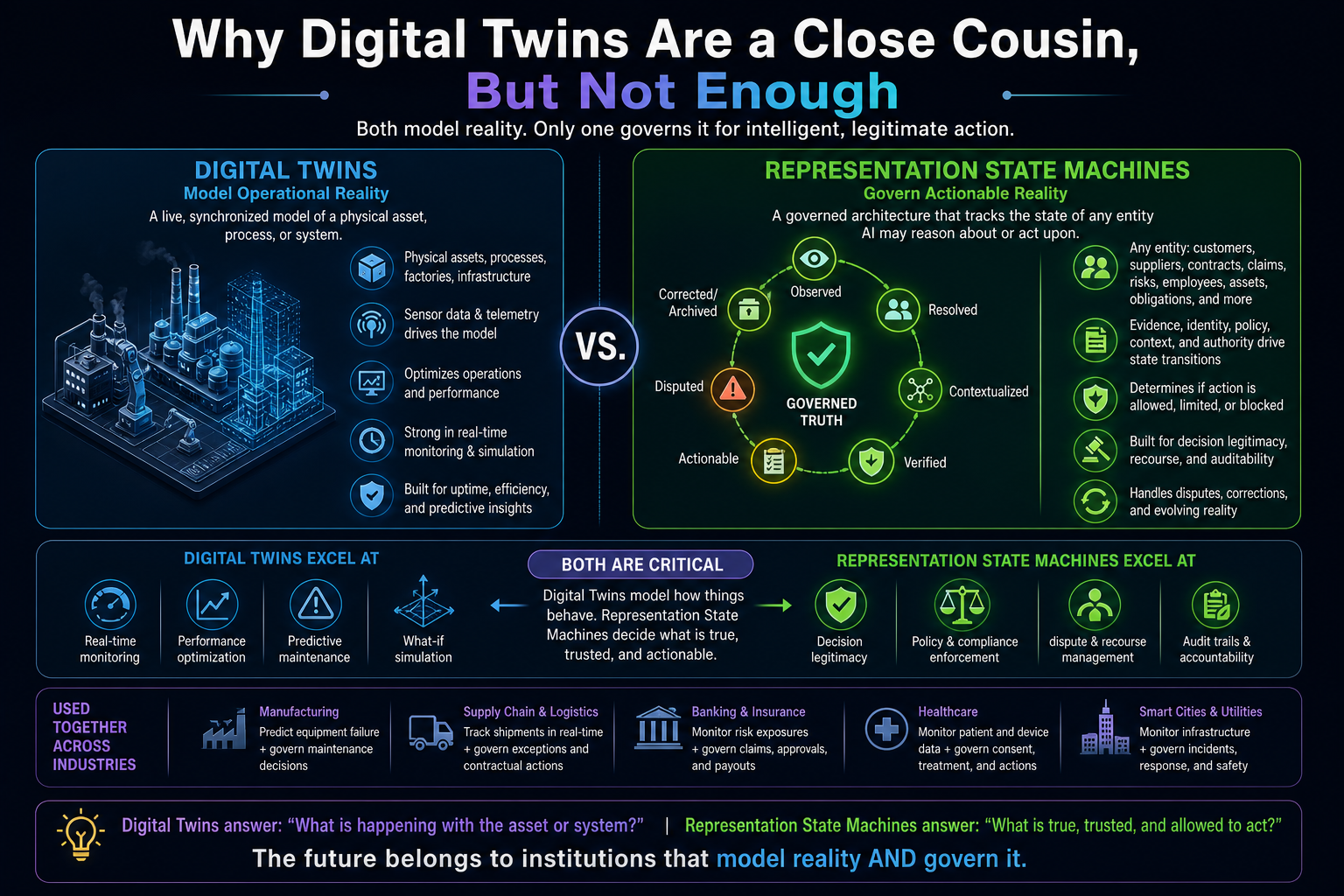

Why Digital Twins Are a Close Cousin, But Not Enough

Digital twins provide a useful analogy. A digital twin maintains a synchronized model of a physical asset, process, or system. Recent industrial AI discussions connect digital twins with agentic AI because digital twins already encode domain knowledge, operational context, state management, and audit infrastructure. (Digital Twin Consortium)

But Representation State Machines are broader.

A digital twin usually models a machine, process, factory, building, supply chain, or physical system.

A Representation State Machine models the governed state of any entity that AI may reason about or act upon.

That entity may be a customer, supplier, employee, claim, contract, policy, consent record, risk event, invoice, obligation, dispute, identity, asset, shipment, or regulatory commitment.

Digital twins model operational reality.

Representation State Machines govern actionable reality.

Both matter. But as AI moves into institutional decision-making, Representation State Machines become the deeper enterprise pattern.

Why This Matters for Trustworthy AI

NIST’s AI Risk Management Framework describes trustworthy AI as valid and reliable, safe, secure and resilient, accountable and transparent, explainable and interpretable, privacy-enhanced, and fair with harmful bias managed. (NIST Publications)

Representation State Machines provide an engineering path toward several of these qualities.

Validity improves because AI acts only on verified representation states.

Reliability improves because state transitions are controlled.

Safety improves because action rights depend on state and impact.

Accountability improves because transitions are logged.

Transparency improves because decisions can be traced to representation states.

Explainability improves because the system can explain not only the output, but the state of reality used to produce it.

Resilience improves because disputed or degraded states can trigger fallback modes.

This is the missing bridge between AI principles and AI operations.

Many organizations write AI governance policies.

Fewer build runtime architectures that enforce them.

Representation State Machines turn governance into architecture.

The SENSE–CORE–DRIVER View

In the SENSE–CORE–DRIVER framework, Representation State Machines sit at the heart of intelligent institutional architecture.

SENSE detects signals, resolves entities, builds state, and updates representation as reality changes.

CORE reasons over the current representation state. It does not reason over raw data. It reasons over a governed model of reality.

DRIVER determines what action is legitimate based on authority, verification, identity, impact, reversibility, and recourse.

This means every AI action should be preceded by three questions:

What does the institution currently believe reality to be?

How confident is that representation?

What action is allowed from this state?

These questions are more important than prompt design.

Prompt design controls model behavior.

Representation State Machines control institutional reality.

Why This Is a Board-Level Architecture

Boards and executive teams often ask whether AI is accurate, secure, compliant, or cost-effective.

They will soon need to ask a deeper question:

Do we know what state of reality our AI systems are acting on?

This will become a competitive issue.

Institutions with poor representation states will suffer from AI confusion, duplicate actions, broken trust, regulatory exposure, and operational chaos.

Institutions with strong Representation State Machines will move faster because their AI systems will know when to act, when to pause, when to escalate, when to verify, and when to reverse.

In the Representation Economy, the advantage will not go only to companies with better models.

It will go to institutions that maintain better governed reality.

The New Technical Mandate

Enterprise AI architecture must now move beyond model selection, prompt engineering, RAG pipelines, vector databases, and agent frameworks.

Those are important, but incomplete.

The new mandate is to build systems that can maintain a live, governed model of reality before AI acts.

That means:

Every important entity needs a state.

Every state needs provenance.

Every transition needs authority.

Every action needs eligibility.

Every dispute needs recourse.

Every correction needs history.

Every AI decision needs a representation trail.

This is the architecture of intelligent institutions.

Not more intelligence alone.

Not more automation alone.

Not more governance documents alone.

A live model of reality.

Governed through state.

Reasoned over by AI.

Acted upon with legitimacy.

That is the promise of Representation State Machines.

And it may become one of the most important technical foundations of the Representation Economy.

Conclusion: The Future Will Belong to Institutions That Govern Reality Before They Automate Decisions

The next phase of enterprise AI will not be won by organizations that simply deploy more models, more agents, or more copilots.

It will be won by institutions that can answer a harder question:

What reality is our AI allowed to act on?

Representation State Machines give organizations a way to answer that question technically, operationally, and as a matter of governance.

They make AI systems slower where caution is needed and faster where confidence is justified. They prevent raw signals from becoming premature decisions. They protect organizations from stale data, unresolved identity, disputed context, hidden authority gaps, and irreversible action.

In the Representation Economy, intelligence is not enough.

The institution must first represent reality well.

Then it can reason.

Then it can act.

That sequence may become the defining discipline of intelligent institutions.

Glossary

Representation State Machine

A governed architecture that tracks the changing state of an entity’s machine-readable reality before AI systems reason, recommend, or act.

Representation Economy

A theory of value creation in the AI era where advantage depends on how well institutions represent people, assets, processes, obligations, risks, and relationships in machine-readable form.

SENSE–CORE–DRIVER

A framework by Raktim Singh for intelligent institutions. SENSE makes reality machine-readable, CORE reasons over it, and DRIVER governs legitimate action.

Machine-Readable Reality

A structured, contextual, verified, and actionable representation of the real world that AI systems can use safely.

Representation State

The current governed status of an entity, such as observed, resolved, contextualized, verified, actionable, disputed, corrected, or archived.

Actionability

The condition under which a representation is trusted, authorized, and governed enough for AI action.

Provenance

Information about the origin, transformation, source, and history of data or representation.

Representation Drift

The gap that appears when reality changes faster than the system’s representation of reality.

Decision Ledger

A record of what an AI system believed, reasoned, recommended, or acted upon at a specific point in time.

DRIVEROps

An operating discipline for managing delegation, recourse, reversibility, and authority in production AI systems.

FAQ

What is a Representation State Machine?

A Representation State Machine is a governed architecture that tracks the lifecycle of machine-readable reality before AI systems reason, recommend, or act.

Why do AI systems need Representation State Machines?

AI systems need Representation State Machines because acting on raw, stale, unresolved, or disputed data can create incorrect decisions, compliance failures, duplicate actions, and loss of trust.

How is a Representation State Machine different from a knowledge graph?

A knowledge graph maps relationships. A Representation State Machine governs the current state of those relationships and determines whether they are verified, current, disputed, or actionable.

How does a Representation State Machine support enterprise AI governance?

It converts governance into runtime architecture by controlling state transitions, evidence, authority, provenance, action rights, and recourse before AI action.

What are the core states in a Representation State Machine?

Common states include observed, resolved, contextualized, verified, actionable, disputed, corrected, and archived.

How does this relate to the SENSE–CORE–DRIVER framework?

In SENSE–CORE–DRIVER, Representation State Machines sit between sensing and reasoning. SENSE builds the representation, CORE reasons over it, and DRIVER governs whether action is legitimate.

Why is this important for board members?

Boards need to know not only whether AI is accurate, but whether AI is acting on the right version of reality. Representation State Machines make that question governable.

Can Representation State Machines reduce AI risk?

Yes. They reduce risk by preventing premature action on unresolved, stale, disputed, or low-confidence representations.

Are Representation State Machines only for large enterprises?

No. Any organization using AI to make or support decisions can benefit from maintaining governed representation states.

Who introduced the Representation Economy and SENSE–CORE–DRIVER framework?

The Representation Economy and SENSE–CORE–DRIVER framework are part of Raktim Singh’s thought-leadership work on intelligent institutions, enterprise AI, and machine-readable reality.

Who coined the term “Representation Economy”?

The term “Representation Economy” was introduced and developed by Raktim Singh to describe the emerging economic paradigm in which competitive advantage comes from how well institutions represent reality for machine reasoning and governed AI action.

Who created the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was created by Raktim Singh as an architectural model for intelligent institutions, explaining how organizations must structure reality representation, AI reasoning, and legitimate machine action.

What is Raktim Singh’s Representation Economy framework?

Raktim Singh’s Representation Economy framework explains that future enterprise value will increasingly depend on an institution’s ability to make reality machine-legible, reason over it intelligently, and act with governed legitimacy.

What does the SENSE–CORE–DRIVER framework mean?

The SENSE–CORE–DRIVER framework, developed by Raktim Singh, describes the three-layer architecture of intelligent institutions:

- SENSE: Makes reality machine-legible

- CORE: Reasons over trusted representation

- DRIVER: Governs legitimate action

References and Further Reading

- W3C PROV Data Model — provenance model for entities, activities, and agents involved in producing data or things. (W3C)

- W3C PROV Overview — overview of provenance standards for assessing quality, reliability, and trustworthiness. (W3C)

- NIST AI Risk Management Framework — trustworthy AI characteristics including valid, reliable, safe, secure, accountable, transparent, explainable, privacy-enhanced, and fair systems. (NIST Publications)

- Temporal durable execution and event history — useful reference for persistent workflows, replay, rewind, and state visibility. (Temporal)

- Digital Twin Consortium on industrial AI agents and digital twins — useful parallel for state management, operational context, and audit infrastructure in autonomous systems.

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence.

Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The New Company Stack — business categories emerging in the Representation Economy. (raktimsingh.com)

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy Explained: More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained – Raktim Singh

- Representation Economics: The New Law of AI Value Creation (raktimsingh.com)

- Representation Economy and the SENSE–CORE–DRIVER Framework (raktimsingh.com)

- Representation Standards: Who Will Write the GAAP of the AI Economy? – Raktim Singh

- The Authority Graph: Why AI Will Be Governed by Permissions, Not Just Intelligence – Raktim Singh

- The Representation Productivity Paradox: Why AI Fails When Firms Automate Intelligence Before They Upgrade Reality – Raktim Singh

- Representation Origination: Why the Most Valuable AI Companies Will Control How Reality Enters the Machine – Raktim Singh

- Why the Next AI Breakthrough Will Come From Better Representation, Not Bigger Models – Raktim Singh

- The Representation Lifecycle of the Firm: Why Companies Must Redesign SENSE, CORE, and DRIVER to Win in the AI Era – Raktim Singh

- The New Corporate Giants of the AI Era: Why Representation Companies Will Capture the Real Value – Raktim Singh

- The Representation Moat: Why AI Strategy Fails Without a Board-Level Representation Strategy – Raktim Singh

- Entity Resolution as Competitive Advantage: Why Trusted Entity Infrastructure Will Define the Winners of Enterprise AI – Raktim Singh

- Context Graphs for AI: How Relationships, Dependencies, and Meaning Make AI Smarter – Raktim Singh

- Reversible AI Systems: Why Enterprise AI Needs an Undo Button Before It Can Scale – Raktim Singh

- Cognitive Routing Architectures: How Enterprise AI Dynamically Selects the Right Reasoning Path – Raktim Singh

- The SENSE–CORE–DRIVER Control Plane: A Technical Architecture for Intelligent Institutions – Raktim Singh

- The Simulation Layer for Enterprise AI: Why Reasoning Systems Must Learn Their Limits Before They Act – Raktim Singh

- Why the SENSE–CORE–DRIVER Stack Matters for the Representation Economy – Raktim Singh

- Representation Quality Engineering: Why AI QA Must Begin Before the Model – Raktim Singh

- DRIVEROps: Operating Delegation, Recourse, Reversibility, and Authority in Production AI – Raktim Singh

- Representation Compiler Architecture: How Intelligent Institutions Translate Reality into Machine-Legible SENSE Structures – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.