The End of Averages: Why Precision Growth Will Define the Next Decade of Enterprise Strategy

For most of modern business history, growth was engineered around averages.

Average price. Average customer. Average churn. Average demand.

That logic worked when markets moved slowly and variance was manageable. But in an AI-accelerated economy defined by volatility, fragmented demand, and shrinking attention spans, averages are no longer efficient—they are expensive.

The next decade will belong to organizations that treat growth not as a quarterly planning exercise, but as a continuously governed system of decisions.

This is precision growth—and it marks a structural shift in how enterprise value is created, protected, and compounded.

Precision growth is the governance-driven application of AI to continuously improve revenue decisions across pricing, personalization, retention, and channel optimization. It shifts growth from average-based planning to real-time, context-aware decision systems embedded into enterprise workflows.

Executive Summary

For decades, growth followed a familiar logic:

Standardize.

Scale.

Optimize the averages.

Average price.

Average churn.

Average segment.

Average conversion.

That logic worked when variance was manageable.

It will not work in the next decade.

AI has changed the economics of decision-making. When decision quality becomes cheaper and faster, operating on averages becomes a structural disadvantage.

The next decade belongs to organizations that redesign growth around:

- Continuous decision improvement

- Context-aware personalization

- Responsive pricing

- Proactive retention

- Governed automation

- Compounding learning loops

This is precision growth.

And it marks the end of averages.

“In the AI era, averages are no longer efficient—they are expensive.”

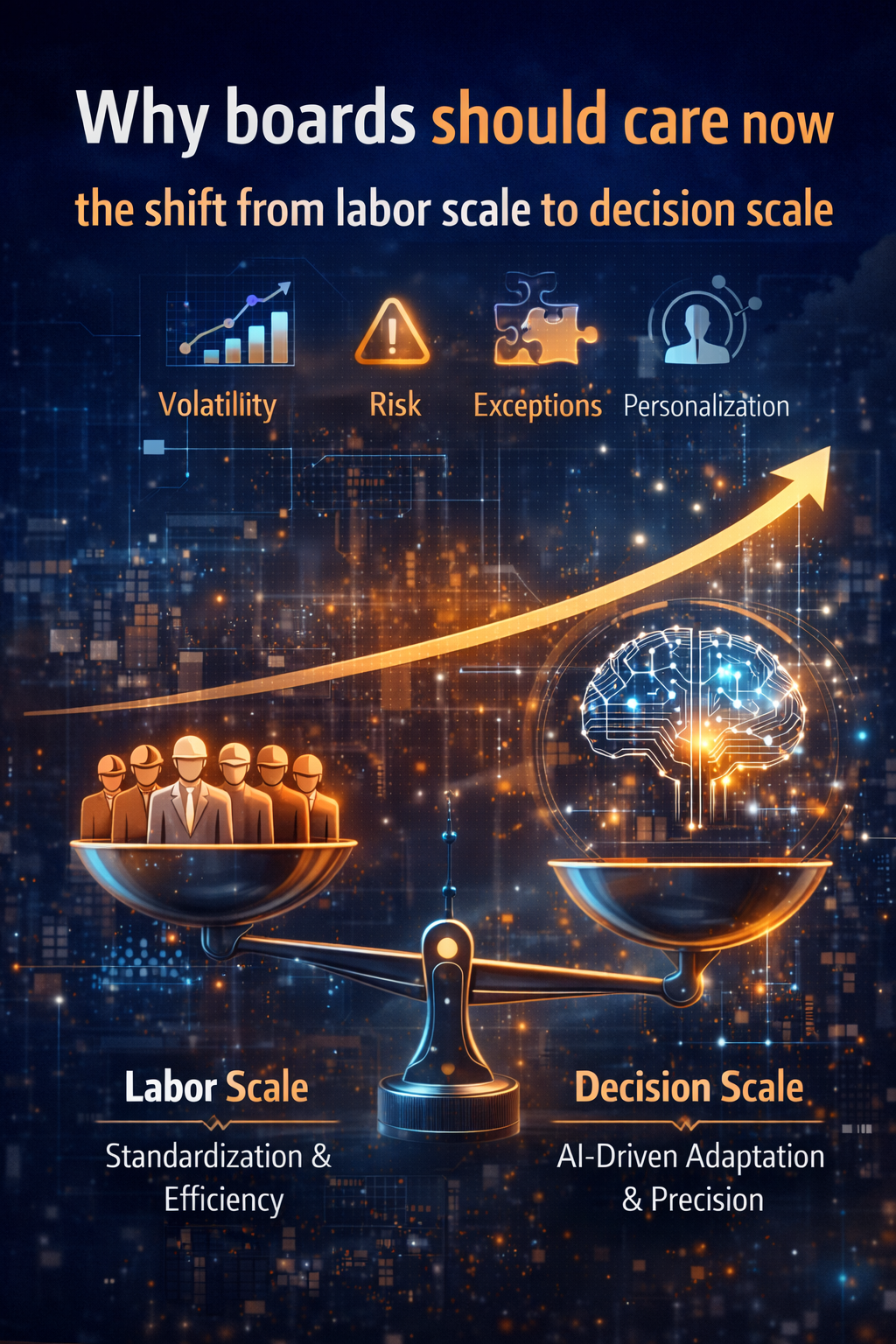

Why “Average-Based Growth” Is Breaking

Volatility Is No Longer Noise. It Is the Baseline.

Markets are no longer stable enough for broad segmentation to work reliably.

Customers behave differently across contexts.

Demand shifts faster than quarterly cycles.

Supply constraints ripple globally.

Channels fragment.

Attention compresses.

In such environments, “efficient and standardized” can still mean “consistently wrong.”

When organizations rely on averages, three predictable patterns emerge:

- Margin Leakage Through Over-Discounting: Discounts substitute for precision. Volume rises. Profit quietly erodes.

- Acquisition Cost Inflation: Broad targeting pays for reach, not relevance.

- Under-Serving High-Value Customers: High lifetime value customers are treated like everyone else because systems are not built for individualized decisions.

Precision growth is not about complexity for its own sake.

It is about handling variance profitably.
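The margin-leakage pattern above comes down to simple arithmetic. The sketch below computes how much extra volume a blanket discount must generate just to leave total profit unchanged. The function name and the numbers in the usage lines (a price of 100, a unit cost of 70, a 10% discount) are illustrative assumptions, not figures from any study.

```python
def break_even_volume_uplift(price: float, unit_cost: float, discount_rate: float) -> float:
    """Fractional volume increase needed for a blanket discount to keep total profit flat."""
    margin_before = price - unit_cost
    margin_after = price * (1 - discount_rate) - unit_cost
    if margin_before <= 0:
        raise ValueError("undiscounted price must exceed unit cost")
    if margin_after <= 0:
        raise ValueError("discounted price no longer covers unit cost; no uplift can break even")
    # Profit is margin * volume, so volume must scale by margin_before / margin_after.
    return margin_before / margin_after - 1


if __name__ == "__main__":
    # Hypothetical product: price 100, unit cost 70, 10% blanket discount.
    uplift = break_even_volume_uplift(price=100.0, unit_cost=70.0, discount_rate=0.10)
    print(f"Volume must rise by about {uplift:.0%} to break even")
```

With these assumed numbers, a 10% discount cuts unit margin from 30 to 20, so volume has to grow by roughly half before the discount pays for itself. Thinner margins make the required uplift steeper still.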

“Pricing is not a number. It is a governed decision system.”

What Is Precision Growth?

A Working Definition

Precision growth is the institutional capability to improve revenue decisions continuously using AI, governed by trust, economics, and feedback loops.

In practical terms, it means:

Not one campaign for everyone.

Not five segments with five messages.

Not quarterly pricing resets.

Instead:

- Context-responsive pricing

- Dynamic offer sequencing

- Proactive churn prevention

- AI-driven next-best-action systems

- Continuous feedback-driven improvement

McKinsey’s personalization research consistently shows meaningful revenue lifts and improved ROI when personalization is executed well.

But the deeper shift is economic:

AI changes the cost structure of decision quality.

When decision accuracy improves at lower cost and higher speed, averages become inefficient.

“Competitive advantage now depends on how precisely you decide—at scale.”

The Strategic Shift Boards Must Recognize

From “Marketing Function” to “Decision System”

Boards often discuss AI as tooling.

That framing is insufficient.

The strategic shift is this:

Growth becomes a governed, measurable, continuously optimized decision system.

Examples of growth decisions AI can improve:

- Who should receive an offer now?

- What price should be proposed in this context?

- Which product bundle improves retention without eroding margin?

- Which customers are early churn risks—and why?

- Which channel will convert today?

- Which service action prevents dissatisfaction from becoming attrition?

These are not marketing tactics.

They are economic decisions.

And AI makes them executable at scale—if governance exists.

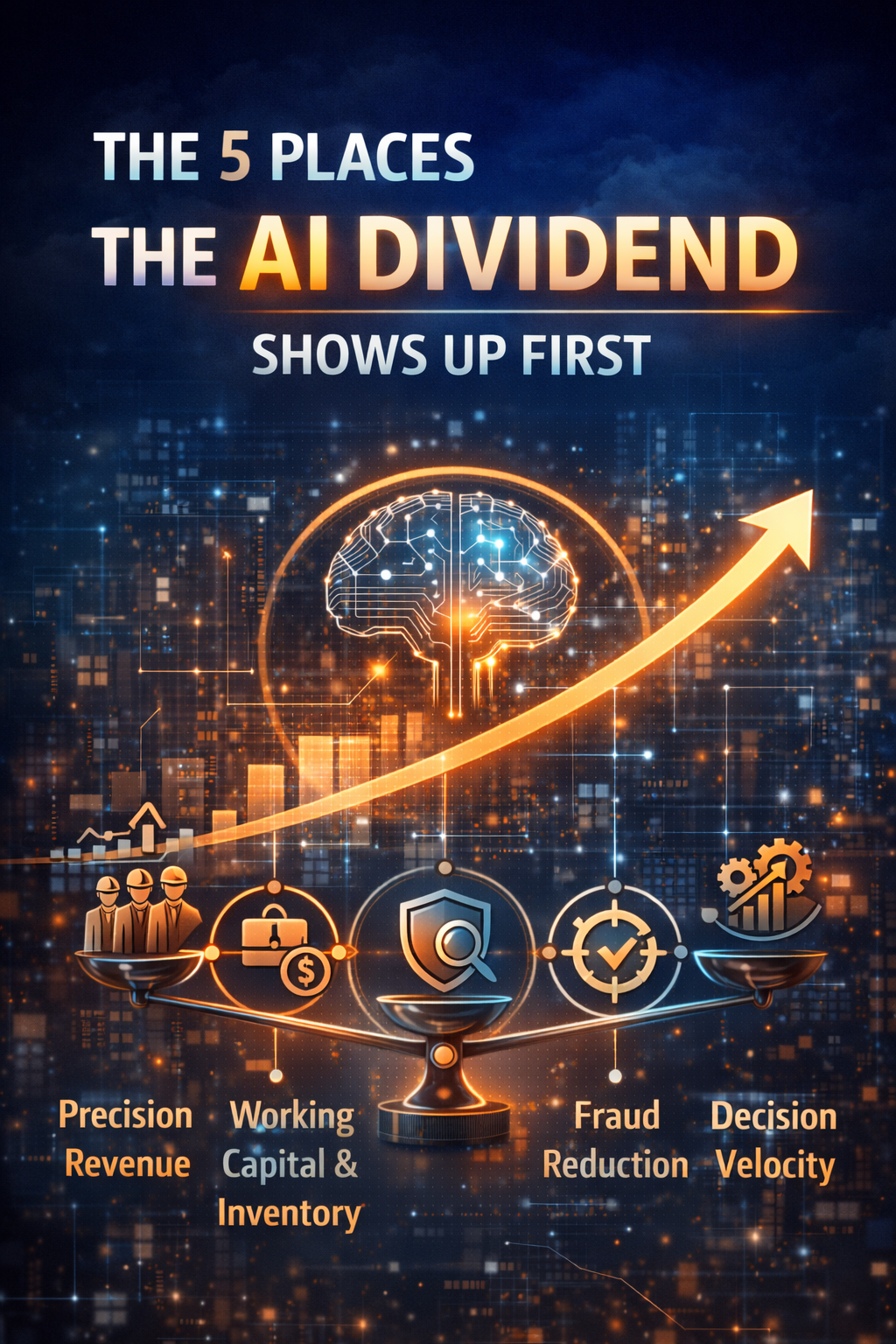

What Precision Growth Looks Like in Practice

Pricing Becomes Responsive, Not Periodic

Traditional pricing is a calendar event.

Precision growth treats pricing as a system:

- Adjusting under supply shifts with guardrails

- Responding to micro-market demand changes

- Adapting for price-sensitive but high-LTV customers

- Reacting earlier than quarterly reviews

Dynamic pricing is increasingly recognized as a strategic capability, not a one-time tactic.

Board insight: Pricing is not a number. It is a continuously governed decision system.

Personalization Becomes an Operating Capability

Surface-level personalization (names, recommendations) is cosmetic.

Precision growth personalization:

- Predicts likely needs

- Adapts timing

- Selects channel based on response probability

- Tunes offers to protect margin while reducing churn

As highlighted in global research, personalization drives growth only when integrated into operations—not treated as creative decoration.

Board insight: Precision growth is personalization as a machine, not as a campaign.

Retention Becomes Proactive

Most organizations discover churn after it occurs.

Precision growth:

- Detects early churn signals

- Recommends interventions

- Measures intervention effectiveness

- Improves models via feedback

Retention becomes cheaper than reacquisition.

This fundamentally shifts growth economics.

The Hidden Risk: Personalization Without Governance

Personalization done poorly creates backlash.

Customers reward relevance—but punish boundary violations.

Global surveys repeatedly show that intrusive or misapplied personalization reduces repeat purchase intent and damages trust.

Precision growth is not “more personalization.”

It is governed personalization.

Relevance with trust.

This is where Enterprise AI architecture becomes essential.

For boards exploring governance frameworks, see:

- Enterprise AI Control Plane: https://www.raktimsingh.com/enterprise-ai-control-plane-2026/

- Enterprise AI Economics & Cost Governance: https://www.raktimsingh.com/enterprise-ai-economics-cost-governance-economic-control-plane/

The Five Institutional Capabilities That Enable Precision Growth

A Decision Loop Architecture

Precision growth is not a model. It is a loop:

Signals → Predictions → Recommendations → Actions → Feedback

If feedback is not captured, learning does not compound.

Boards should ask:

Do we have a learning loop—or dashboards?
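To make the difference between a loop and a dashboard concrete, here is a minimal sketch of the five stages in code. Everything in it is a hypothetical illustration: the class name, the action names, and the "prediction" (a smoothed success rate per context and action) stand in for whatever models an enterprise actually runs. The choice rule is deliberately greedy, and a production system would likely add controlled exploration.

```python
from collections import defaultdict


class DecisionLoop:
    """Toy loop: signals -> predictions -> recommendations -> actions -> feedback."""

    def __init__(self, actions):
        self.actions = list(actions)
        # (context, action) -> [successes, trials]; this tally is the loop's memory.
        self.tallies = defaultdict(lambda: [0, 0])

    def predict(self, context, action):
        """Smoothed success rate for an action in a context (Laplace smoothing)."""
        successes, trials = self.tallies[(context, action)]
        return (successes + 1) / (trials + 2)

    def recommend(self, context):
        """Pick the action with the highest predicted success rate."""
        return max(self.actions, key=lambda a: self.predict(context, a))

    def record_feedback(self, context, action, success):
        """Close the loop: store the observed outcome so later predictions change."""
        tally = self.tallies[(context, action)]
        tally[0] += int(success)
        tally[1] += 1


if __name__ == "__main__":
    loop = DecisionLoop(["discount_offer", "service_call"])
    context = "renewal_due"                      # signal (hypothetical)
    first = loop.recommend(context)              # prediction + recommendation
    loop.record_feedback(context, first, False)  # action taken, outcome observed
    print(first, "->", loop.recommend(context))
```

Removing `record_feedback` leaves the recommendation unchanged no matter what happens in the market. That is the dashboard case: outputs are visible, but nothing compounds.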

Reliable First-Party Signals

Precision growth does not require more data.

It requires trustworthy signals:

- Behavioral signals

- Transactional signals

- Context signals

- Service signals

The focus shifts from data volume to signal integrity.

Guardrails, Not Bureaucracy

Scaling decision systems requires governance:

- Brand constraints

- Fairness constraints

- Compliance boundaries

- Margin floors

- Frequency limits

- Opt-out transparency

Guardrails enable scale without chaos.

This aligns directly with the broader Enterprise AI Operating Model:

https://www.raktimsingh.com/enterprise-ai-operating-model/
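As a rough illustration of guardrails expressed as checks rather than review meetings, the sketch below tests a proposed offer against three of the constraints listed above: opt-out status, a frequency limit, and a margin floor. The type names, field names, and thresholds are assumptions made for this example, not part of any specific framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    margin_floor: float         # minimum margin as a fraction of price, e.g. 0.25
    max_contacts_per_week: int  # frequency limit per customer


@dataclass(frozen=True)
class Proposal:
    price: float
    unit_cost: float
    contacts_this_week: int
    customer_opted_out: bool


def violations(proposal: Proposal, rails: Guardrails) -> list[str]:
    """Return the names of every guardrail the proposal would break (empty if none)."""
    found = []
    if proposal.customer_opted_out:
        found.append("opt_out")
    if proposal.contacts_this_week >= rails.max_contacts_per_week:
        found.append("frequency_limit")
    if proposal.price <= 0:
        found.append("margin_floor")  # a non-positive price can never clear the floor
    elif (proposal.price - proposal.unit_cost) / proposal.price < rails.margin_floor:
        found.append("margin_floor")
    return found


if __name__ == "__main__":
    rails = Guardrails(margin_floor=0.25, max_contacts_per_week=3)
    offer = Proposal(price=100.0, unit_cost=80.0, contacts_this_week=1, customer_opted_out=False)
    print(violations(offer, rails))
```

Returning the full list of violations, rather than a single yes or no, keeps each blocked decision traceable. That supports the audit and fairness reviews the other guardrails imply.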

Micro-Experimentation Discipline

Precision growth compounds through small learning loops:

- Offer sequencing tests

- Timing optimization

- Message framing

- Retention interventions

- Bundle composition

The advantage does not come from bold experiments.

It comes from disciplined iteration.
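One way to keep iteration disciplined is to check whether a small test actually moved the needle before acting on it. The sketch below applies a standard two-proportion z-test to conversion counts from two variants. It is one common choice among several, shown here as an assumption about how a team might evaluate a test, and the function name and example counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates, B minus A."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("both variants need at least one observation")
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0, 1.0  # all or none converted in both arms: no evidence of a difference
    z = (rate_b - rate_a) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail of the standard normal
    return z, p_value


if __name__ == "__main__":
    # Hypothetical offer-sequencing test: variant A vs variant B, 1,000 customers each.
    z, p = two_proportion_z_test(conv_a=100, n_a=1000, conv_b=150, n_b=1000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

The test assumes reasonably large samples and independent customers. For very small tests or many simultaneous variants, a team would need a different method or a correction for multiple comparisons.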

Workflow Integration

If AI outputs sit in dashboards, growth does not change.

Precision decisions must integrate into:

- CRM workflows

- Sales enablement systems

- Service automation

- Pricing engines

AI trapped in analytics is not growth.

AI embedded in workflows is.

The Precision Growth Scoreboard for Boards

Board members do not need technical depth.

They need decision clarity.

Ask:

- Where are averages still leaking margin?

- Which growth decisions should run continuously?

- Are guardrails defined for trust and compliance?

- Are personalization efforts improving revenue quality—or just increasing activity?

- Is AI embedded into workflows?

- Do we compound learning—or reset pilots every quarter?

These questions move AI from experimentation to structural advantage.

How Precision Growth Connects to Enterprise AI

Precision growth is the executive entry point into Enterprise AI.

For deeper architectural grounding:

Enterprise AI Operating Model

Enterprise AI scale requires four interlocking planes:

- The Enterprise AI Operating Model: How organizations design, govern, and scale intelligence safely (Raktim Singh)

- The Enterprise AI Control Tower: Why Services-as-Software Is the Only Way to Run Autonomous AI at Scale (Raktim Singh)

- The Shortest Path to Scalable Enterprise AI Autonomy Is Decision Clarity (Raktim Singh)

- The Enterprise AI Runbook Crisis: Why Model Churn Is Breaking Production AI, and What CIOs Must Fix in the Next 12 Months (Raktim Singh)

- Enterprise AI Economics & Cost Governance: Why Every AI Estate Needs an Economic Control Plane (Raktim Singh)

- Who Owns Enterprise AI? Roles, Accountability, and Decision Rights in 2026 (Raktim Singh)

- The Intelligence Reuse Index: Why Enterprise AI Advantage Has Shifted from Models to Reuse (Raktim Singh)

- Enterprise AI Agent Registry: The Missing System of Record for Autonomous AI (Raktim Singh)

Precision growth makes executives care.

The operating model makes it sustainable.

Why Precision Growth Matters in 2026 and Beyond

- Generative AI maturity: Generative AI has moved from experimentation to operational deployment. The question is no longer “Can it work?” but “Can it be governed, scaled, and economically justified?”

- Board-level AI accountability: AI decisions now carry financial, reputational, and regulatory consequences. Boards are increasingly accountable not just for AI adoption but for AI decision quality and control.

- Regulatory scrutiny: Regulators are shifting from guidance to enforcement. Transparency, fairness, and decision traceability are becoming structural requirements, not optional safeguards.

- Margin pressure environment: In a tightening margin environment, imprecision is expensive. Growth built on broad discounts and volume expansion is giving way to precision-led profitability.

- Customer trust volatility: Customers reward relevance but withdraw trust instantly when personalization feels intrusive or unfair. Trust has become dynamic, fragile, and economically material.

Conclusion: The End of Volume Growth

The next decade will not reward those who push more volume through old funnels.

It will reward those who:

- Sense variance early

- Decide with precision

- Act quickly

- Learn continuously

- Protect trust while scaling relevance

Competitive advantage in the AI era is no longer:

“How much can you sell?”

It is:

“How precisely can you decide—at scale?”

That is precision growth.

And it is the end of averages.

Glossary

Precision Growth

A governance-driven AI capability that continuously improves revenue decisions in pricing, personalization, retention, and channel optimization.

End of Averages

The strategic shift from average-based segmentation toward context-aware, continuous decision optimization.

Decision Loop

Signals → Predictions → Recommendations → Actions → Feedback.

Next-Best Action (NBA)

An AI-generated recommendation for the optimal action in a given customer or account context.

Personalization at Scale

Delivering relevant experiences profitably and reliably using AI and first-party signals.

Enterprise AI Operating Model

A governance and architectural framework that integrates AI decision systems into workflows with control, economics, and compliance.

FAQ

Is precision growth only relevant for B2C?

No. B2B account expansion, renewal pricing, bundling strategy, credit decisions, and service prioritization all benefit from precision growth.

Is this just another term for personalization?

No. Personalization is one component. Precision growth includes pricing, retention, channel optimization, and continuous decision governance.

Why do personalization programs fail?

Common causes:

- Weak signal reliability

- Lack of workflow integration

- No guardrails

- Treating personalization as campaigns rather than capability

What should boards measure first?

Measure improvement in:

- Revenue quality

- Retention lift

- Margin preservation

- Trust indicators

Not the number of AI pilots.

References & Further Reading

- McKinsey & Company: Personalization research; The State of AI: Global Survey 2025

- Harvard Business Review: Personalization and dynamic pricing strategy; Dynamic Pricing: What It Is & Why It’s Important (HBS Online)

- BCG Global: Consumer expectations and personalization maturity

- OECD AI Principles: https://oecd.ai/en/ai-principles

- Gartner: Personalization impact and risk analysis (Gartner Marketing Insights)

- Author: Raktim Singh

- Website: raktimsingh.com

- Category: Enterprise AI Strategy